Advanced Augmentation for Small Datasets: Albumentations vs Torchvision

JUL 10, 2025

In machine learning and computer vision, data augmentation is a key technique for increasing the diversity of a training set without collecting new data. It matters most for small datasets, where limited data leads to overfitting and poor generalization. Two popular libraries for implementing data augmentation are Albumentations and Torchvision. This post looks at their features, compares their functionality, and discusses how well each suits small datasets.

Understanding Data Augmentation

Data augmentation involves applying a variety of transformations to the existing dataset to create new, modified versions of the data. This can include operations such as rotation, flipping, scaling, cropping, noise addition, and color adjustments. The goal is to simulate variations that might occur in real-world scenarios, thereby improving the robustness of the machine learning model.
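To make the idea concrete, here is a toy sketch in plain Python that treats a grayscale image as a list of pixel rows. The helper names (horizontal_flip, random_crop, add_noise, augment) are made up for illustration and are not part of either library; real pipelines would use Albumentations or Torchvision instead.

```python
import random


def horizontal_flip(image):
    """Mirror each row left to right."""
    return [row[::-1] for row in image]


def random_crop(image, crop_h, crop_w, rng):
    """Cut a crop_h x crop_w window at a random position."""
    top = rng.randint(0, len(image) - crop_h)
    left = rng.randint(0, len(image[0]) - crop_w)
    return [row[left:left + crop_w] for row in image[top:top + crop_h]]


def add_noise(image, max_delta, rng):
    """Shift each pixel by a random amount, clamped to the 0-255 range."""
    return [
        [min(255, max(0, px + rng.randint(-max_delta, max_delta))) for px in row]
        for row in image
    ]


def augment(image, rng):
    """Produce one randomly modified copy of the input image."""
    out = image
    if rng.random() < 0.5:
        out = horizontal_flip(out)
    out = random_crop(out, crop_h=3, crop_w=3, rng=rng)
    return add_noise(out, max_delta=10, rng=rng)


# A 4x4 toy "image" with pixel intensities 0, 10, ..., 150.
image = [[(r * 4 + c) * 10 for c in range(4)] for r in range(4)]
rng = random.Random(0)

# Five different training variants derived from a single source image.
variants = [augment(image, rng) for _ in range(5)]
```

Each call to augment draws fresh random parameters, so one source image yields many slightly different training examples while the original stays untouched.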

Overview of Albumentations and Torchvision

Albumentations is a Python library designed specifically for fast and flexible image augmentation. It was developed to address the limitations of existing libraries by providing a wide range of transformations that can be applied efficiently. Albumentations is particularly known for its speed and ease of use, making it a popular choice for applications involving deep learning models.

Torchvision, on the other hand, is a package that extends the capabilities of PyTorch in the field of computer vision. It includes popular datasets, model architectures, and transformations for image processing. While it may not offer as broad a range of transformations as Albumentations, it integrates seamlessly with PyTorch, which is a significant advantage for users of the PyTorch ecosystem.

Comparing Transformations

When it comes to transformations, Albumentations offers a comprehensive suite of options that are both flexible and customizable. Beyond plain images, it can transform associated targets such as segmentation masks, bounding boxes, and keypoints consistently with the image, and it lets users combine multiple transformations into a single pipeline. This makes it easy to apply complex augmentations with minimal code. Albumentations groups its transformations into spatial-level transformations, which change geometry, and pixel-level transformations, which change only pixel values, and it includes more advanced techniques such as cutout-style dropout (CoarseDropout) and grid distortion (GridDistortion).

Torchvision also provides a solid set of transformations, with a focus on essential and commonly used operations such as resizing, cropping, flipping, and normalizing images. It is not limited to these: it also ships color jitter, random affine warps, random erasing, and automated policies such as AutoAugment, RandAugment, and TrivialAugmentWide. Even so, its catalog is smaller than that of Albumentations, which might limit its appeal for users looking for more diverse augmentation strategies.

Performance and Speed

Performance is a crucial consideration when choosing an augmentation library, especially for large datasets or real-time applications. Albumentations is renowned for its speed, which comes from optimized implementations built on NumPy and OpenCV. Its transformations run on the CPU over NumPy arrays, so they parallelize well across data-loader workers, which suits high-throughput scenarios.

Torchvision, being tightly integrated with PyTorch, fits directly into existing data-loading code, which keeps pipelines simple to write and maintain. Its transforms also accept tensors, so they can run on a GPU. For CPU pipelines built from complex transformations, however, users might find Torchvision slower than Albumentations. The gap depends on image size, the mix of transforms, and library versions, so it is worth measuring on your own data.
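Speed claims like these are easy to check on your own pipeline. The helper below is a minimal timing sketch using only the standard library; time_transform and dummy_transform are hypothetical names, and the dummy workload stands in for a real pipeline so the snippet runs without either library installed.

```python
import statistics
import time


def time_transform(apply_fn, sample, n_iters=200, warmup=10):
    """Return the median wall-clock time per call, in milliseconds."""
    for _ in range(warmup):  # let caches and lazy initialization settle
        apply_fn(sample)
    timings = []
    for _ in range(n_iters):
        start = time.perf_counter()
        apply_fn(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(timings)


def dummy_transform(rows):
    """Stand-in workload: mirror every row of a 2D list."""
    return [row[::-1] for row in rows]


sample = [[0] * 256 for _ in range(256)]
median_ms = time_transform(dummy_transform, sample)
print(f"median: {median_ms:.4f} ms per image")
```

To compare the two libraries, pass each pipeline as apply_fn with the input type it expects: an Albumentations pipeline can be wrapped as lambda img: pipeline(image=img)["image"] and given a NumPy array, while a Torchvision pipeline can be passed directly and given a PIL image or tensor. The median is used because it is less sensitive to occasional slow iterations than the mean.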

Ease of Use and Flexibility

Ease of use is another important factor, especially for researchers and practitioners who want to implement augmentations quickly. Albumentations has a user-friendly API that allows for easy composition of augmentation pipelines. Its syntax is intuitive and requires minimal effort to learn and implement, making it accessible for beginners and experts alike.

Torchvision, while straightforward, may require more effort to set up complex pipelines due to its less extensive range of transformations. Users familiar with the PyTorch ecosystem may find it more convenient to use Torchvision, but those seeking a greater variety of augmentations might gravitate towards Albumentations.

Suitability for Small Datasets

For small datasets, the choice between Albumentations and Torchvision can hinge on the specific needs of the project. Albumentations, with its extensive transformation capabilities and speed, is well-suited for creating diverse and complex data augmentations that can help alleviate overfitting. Its ability to chain many transformations, each applied at random with its own probability, produces a different variant of every image each time it is loaded, which is advantageous when the number of source images is small.
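With either library, the usual pattern for a small dataset is to augment on the fly, so every epoch sees new variants of the same few images, and to leave validation data unmodified. The class below is a library-agnostic sketch of that pattern; AugmentedDataset and its repeats parameter are hypothetical, and the toy rows stand in for images. Because it implements __len__ and __getitem__, an object like this should also be usable as a map-style dataset with a PyTorch DataLoader.

```python
import random


class AugmentedDataset:
    """Map-style dataset that augments a sample each time it is read."""

    def __init__(self, samples, labels, transform=None, repeats=1):
        assert len(samples) == len(labels)
        self.samples = samples
        self.labels = labels
        self.transform = transform
        # Hypothetical knob: how many passes over the data count as one epoch.
        self.repeats = repeats

    def __len__(self):
        return len(self.samples) * self.repeats

    def __getitem__(self, index):
        if not 0 <= index < len(self):
            raise IndexError(index)
        base = index % len(self.samples)  # map back to a real sample
        sample = self.samples[base]
        if self.transform is not None:
            sample = self.transform(sample)  # fresh randomness on every read
        return sample, self.labels[base]


rng = random.Random(0)


def random_flip(row):
    """Toy augmentation: reverse the row half of the time."""
    return row[::-1] if rng.random() < 0.5 else row


train_set = AugmentedDataset(
    [[1, 2, 3], [4, 5, 6]], [0, 1], transform=random_flip, repeats=4
)
val_set = AugmentedDataset([[7, 8, 9]], [1])  # no transform for evaluation
```

Here two source samples yield eight training reads per epoch, each independently augmented. For an Albumentations pipeline, the transform argument would need a small wrapper that calls pipeline(image=sample)["image"].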

Torchvision, while more limited in transformation variety, offers the advantage of seamless integration with PyTorch models and training pipelines. It can be a practical choice for users who prioritize simplicity and integration within the PyTorch framework.

Conclusion

Both Albumentations and Torchvision have their own strengths and limitations. Albumentations shines with its extensive transformation capabilities and speed, making it a strong fit for applications that require advanced augmentations. Torchvision, with its integration into the PyTorch ecosystem, provides a straightforward and convenient option for users whose needs are covered by the common augmentations. Ultimately, the choice between the two will depend on the specific requirements of the project, including the complexity of augmentations needed and the framework used for model development.

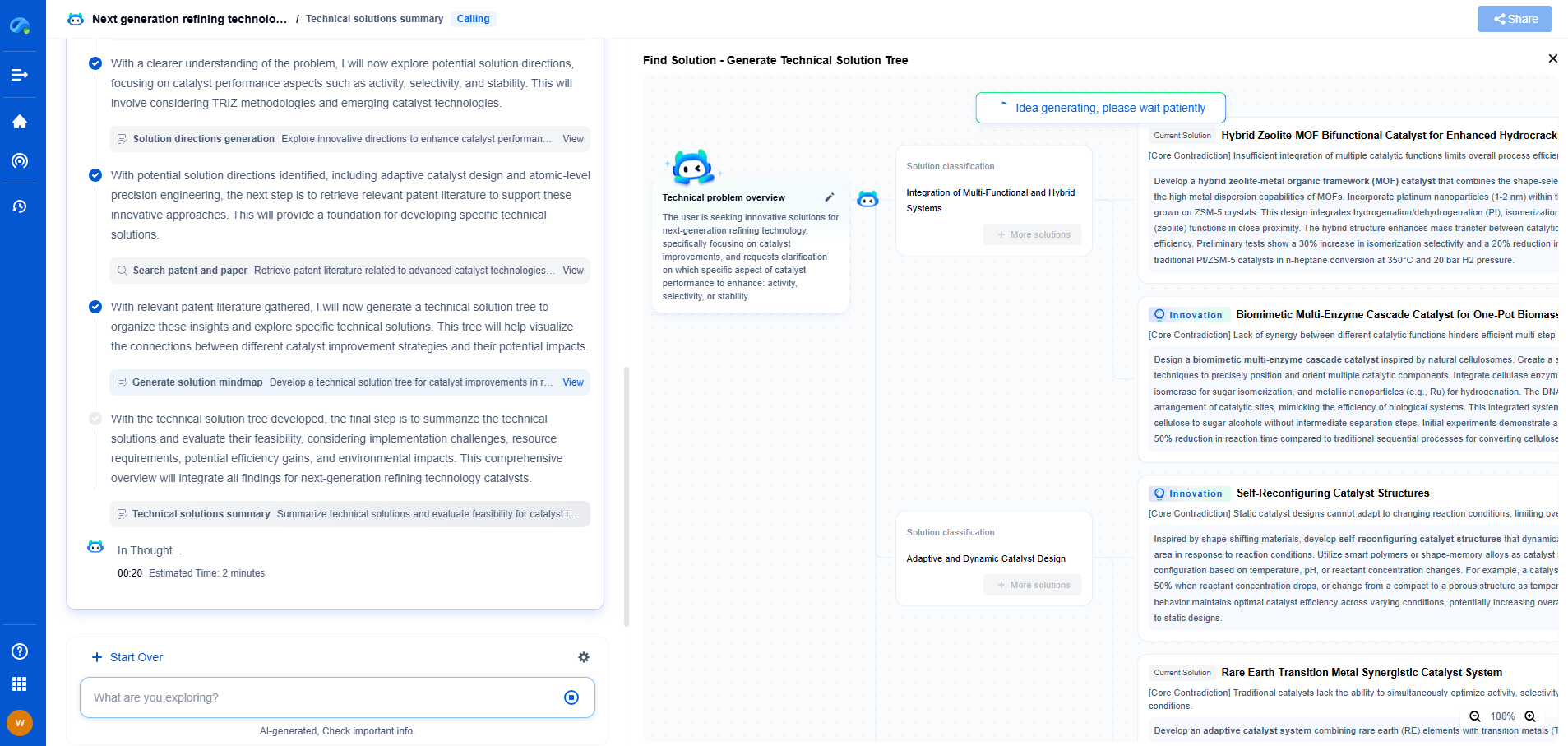

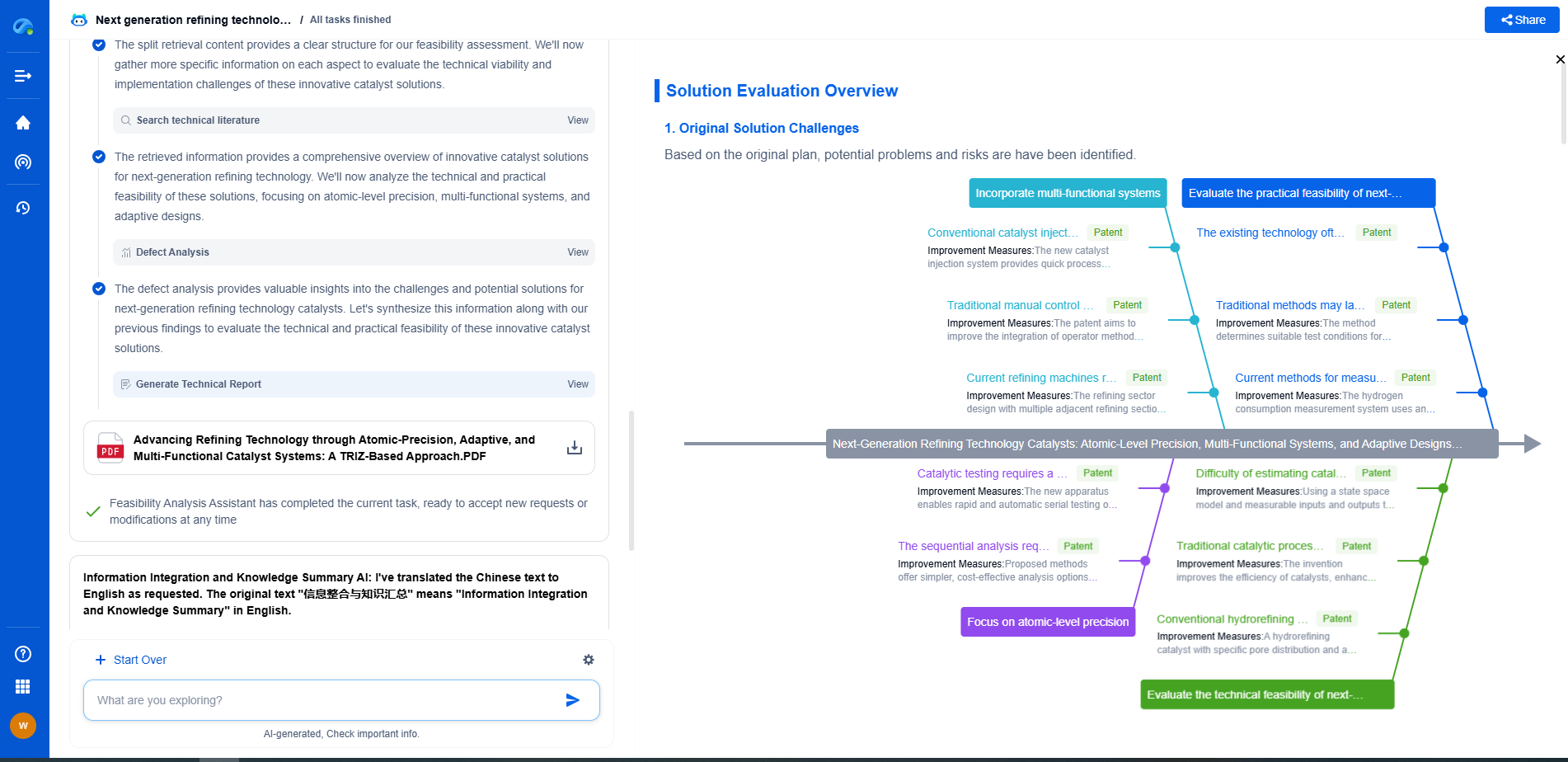
