AR vs. VR: Computer Vision Requirements for Immersive Technologies

JUL 10, 2025

Introduction to AR and VR

As immersive technologies continue to blur the line between the digital and physical worlds, Augmented Reality (AR) and Virtual Reality (VR) have emerged as pivotal innovations. Both are increasingly being adopted across sectors ranging from gaming and entertainment to education and healthcare. At the heart of these technologies lies computer vision, the component that enables devices to interpret and understand the visual world. This article examines the computer vision requirements specific to AR and VR and explores how they contribute to immersive experiences.

Understanding Computer Vision in AR

Augmented Reality overlays digital content onto the real world, enhancing the user's perception of their environment. For AR to function seamlessly, it requires sophisticated computer vision algorithms capable of real-time analysis and interaction with the physical world.

**Object Recognition and Tracking**

One of the primary requirements for AR is precise object recognition and tracking. Computer vision systems must identify and track objects in real-time to accurately overlay digital information. This involves understanding the geometry and movement of objects, ensuring that digital augmentations maintain their position relative to the real world. Advanced machine learning models are often employed to recognize various objects and predict their motion patterns, enabling dynamic interaction.
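
The idea of tracking an object and predicting its motion between detections can be sketched with a simple alpha-beta filter. This is a minimal illustration, not how any particular AR framework does it: the `TrackedObject` class, the gains `alpha` and `beta`, and the assumption that a detector supplies 2D pixel positions each frame are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    """Constant-velocity track of an object's 2D image position (pixels)."""
    x: float
    y: float
    vx: float = 0.0  # pixels per second
    vy: float = 0.0  # pixels per second

    def predict(self, dt: float) -> tuple[float, float]:
        """Extrapolate where the object should be after dt seconds."""
        return self.x + self.vx * dt, self.y + self.vy * dt

    def update(self, mx: float, my: float, dt: float,
               alpha: float = 0.85, beta: float = 0.5) -> None:
        """Blend a new detection (mx, my) into the track (alpha-beta filter)."""
        px, py = self.predict(dt)
        rx, ry = mx - px, my - py  # residual: detection minus prediction
        # Correct the position toward the detection...
        self.x, self.y = px + alpha * rx, py + alpha * ry
        # ...and adjust the velocity estimate from the same residual.
        self.vx += beta * rx / dt
        self.vy += beta * ry / dt
```

Calling `update` once per frame with the detector's output keeps the velocity estimate current, and `predict` can then place the overlay for the next frame before a fresh detection arrives. Production systems typically use richer motion models and learned detectors; the filter here only shows the predict-then-correct loop.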

**Environment Mapping and Spatial Understanding**

To provide a coherent AR experience, devices must comprehend the spatial layout of the environment. This is achieved through techniques like simultaneous localization and mapping (SLAM), which builds a 3D map of the surroundings while simultaneously estimating the device's position and orientation within that map. By understanding spatial relationships, AR applications can anchor digital objects to specific locations, allowing for interactions that appear natural and intuitive to the user.
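
To make anchoring concrete, the sketch below shows how a point fixed in world coordinates could be redrawn each frame from the camera pose that a SLAM system reports. It assumes a pinhole camera with +z pointing forward, a pose given as a camera-to-world rotation matrix plus a camera position, and made-up intrinsics (`fx`, `fy`, `cx`, `cy`); none of these names come from a specific SDK.

```python
import math


def yaw_rotation(theta):
    """Camera-to-world rotation for a camera turned theta radians about the y axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, 0.0, s],
            [0.0, 1.0, 0.0],
            [-s, 0.0, c]]


def world_to_camera(point_w, cam_rot, cam_pos):
    """Compute p_c = R^T (p_w - t), with R camera-to-world and t the camera position."""
    d = [point_w[i] - cam_pos[i] for i in range(3)]
    # Multiplying by the transpose: column c of R dotted with d.
    return [sum(cam_rot[r][c] * d[r] for r in range(3)) for c in range(3)]


def project(point_c, fx, fy, cx, cy):
    """Pinhole projection to pixel coordinates; None if the point is behind the camera."""
    x, y, z = point_c
    if z <= 0.0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical anchor 2 m in front of the starting camera position.
anchor_world = [0.0, 0.0, 2.0]
intrinsics = dict(fx=500.0, fy=500.0, cx=320.0, cy=240.0)

# The camera has moved 0.5 m along +x; the anchor's pixel position shifts accordingly.
pose_rot, pose_pos = yaw_rotation(0.0), [0.5, 0.0, 0.0]
pixel = project(world_to_camera(anchor_world, pose_rot, pose_pos), **intrinsics)
```

Because the anchor is stored in world coordinates, only the pose changes from frame to frame; the virtual object appears to stay put as the device moves.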

**Lighting and Occlusion Handling**

For AR overlays to blend realistically with the real world, computer vision systems must account for lighting conditions and occlusion. This involves estimating the lighting environment, adjusting digital content to match ambient light, and managing occlusion, where virtual objects are partially or fully obscured by real-world objects. Handling both enhances the realism and immersion of the AR experience, making digital content appear as if it is part of the physical world.
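
At the level of a single pixel, occlusion and light matching can be sketched as a depth comparison followed by a brightness adjustment. The function below is illustrative only: it assumes the device provides a per-pixel depth estimate of the real scene in meters and a scalar ambient intensity, and the name `composite_pixel` and its parameters are hypothetical.

```python
def composite_pixel(camera_rgb, real_depth_m, virtual_rgb, virtual_depth_m,
                    ambient_intensity):
    """Choose what to show at one pixel.

    camera_rgb        -- color from the camera image, as (r, g, b) in 0..255
    real_depth_m      -- estimated depth of the real surface, or None if unknown
    virtual_rgb       -- unlit color of the virtual object at this pixel
    virtual_depth_m   -- depth of the virtual object, or None if it does not cover the pixel
    ambient_intensity -- estimated scene brightness, where 1.0 leaves the color unchanged
    """
    if virtual_depth_m is None:
        return camera_rgb  # no virtual content here
    if real_depth_m is not None and real_depth_m <= virtual_depth_m:
        return camera_rgb  # a real object is in front: the virtual one is occluded
    # Virtual content is visible: scale it to match the estimated ambient light.
    return tuple(min(255, round(c * ambient_intensity)) for c in virtual_rgb)
```

For example, a virtual object 2 m away is hidden at pixels where the real scene is 1 m away and drawn, dimmed to the room's brightness, where the real scene is 3 m away. Real pipelines run this test on the GPU and use richer lighting estimates than a single scalar.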

The Role of Computer Vision in VR

Virtual Reality, on the other hand, immerses users in a completely digital environment, disconnecting them from the real world. While VR does not interact with the physical surroundings in the same way AR does, computer vision remains a crucial element in delivering a convincing virtual experience.

**Head and Motion Tracking**

In VR, users expect responsive environments that react to their movements. Computer vision systems enable head and motion tracking, capturing the user's position and orientation to render the virtual world accordingly. On standalone headsets this is commonly done with inside-out tracking, in which cameras on the headset observe the surroundings and their measurements are fused with inertial sensor data. Accurate tracking is essential for providing a sense of presence, where users feel truly immersed within the virtual environment. High precision and low latency in tracking are crucial to prevent disorientation and motion sickness.
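
A small calculation shows why latency matters. If the head turns at a given angular rate and the image reaches the display some milliseconds after the pose was measured, the scene is drawn at a stale angle. The sketch below computes that error and a simple constant-rate prediction to offset it; the function names and the example figures (200 degrees per second, 20 ms) are illustrative assumptions, not measurements from any headset.

```python
def uncorrected_error_deg(yaw_rate_deg_s, latency_s):
    """Angular error if the frame is rendered with a pose that is latency_s old."""
    return abs(yaw_rate_deg_s) * latency_s


def predicted_yaw_deg(yaw_deg, yaw_rate_deg_s, latency_s):
    """Extrapolate yaw to display time, assuming a constant turn rate."""
    return (yaw_deg + yaw_rate_deg_s * latency_s) % 360.0


# A head turn of 200 deg/s with 20 ms of latency leaves the image about 4 degrees behind.
error = uncorrected_error_deg(200.0, 0.020)
# Rendering with the predicted yaw instead of the measured one offsets that lag.
render_yaw = predicted_yaw_deg(358.0, 200.0, 0.020)
```

Constant-rate extrapolation is the simplest possible predictor; it degrades when the head accelerates, which is one reason short latency still matters even when prediction is used.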

**Gesture Recognition and Interaction**

VR environments benefit greatly from intuitive user interfaces that allow for natural interaction. Computer vision facilitates gesture recognition, enabling users to manipulate virtual objects through hand movements and gestures. This expands the possibilities for interaction and enhances user engagement, making the experience more interactive and immersive.
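
As one example of gesture recognition, a pinch can be detected from hand keypoints by comparing the gap between thumb tip and index fingertip with the overall hand size. This sketch assumes an upstream hand-tracking model that outputs 3D landmarks; the landmark names (`wrist`, `middle_mcp`, `thumb_tip`, `index_tip`) and the threshold of 0.25 are hypothetical choices for illustration.

```python
import math


def is_pinching(landmarks, threshold=0.25):
    """Return True when thumb and index fingertips are close relative to hand size.

    landmarks maps a keypoint name to an (x, y, z) position in any consistent unit.
    Normalizing by hand size keeps the test independent of distance from the camera.
    """
    hand_size = math.dist(landmarks["wrist"], landmarks["middle_mcp"])
    if hand_size == 0.0:
        return False  # degenerate input: cannot normalize
    gap = math.dist(landmarks["thumb_tip"], landmarks["index_tip"])
    return gap / hand_size < threshold


# Hypothetical landmark positions in meters.
open_hand = {
    "wrist": (0.0, -0.10, 0.0),
    "middle_mcp": (0.0, 0.0, 0.0),
    "thumb_tip": (-0.04, 0.02, 0.0),
    "index_tip": (0.04, 0.02, 0.0),
}
pinch = dict(open_hand, thumb_tip=(0.03, 0.02, 0.0))
```

Here `is_pinching(open_hand)` is false and `is_pinching(pinch)` is true. A practical recognizer would usually add hysteresis or temporal smoothing so that the gesture does not flicker when the ratio hovers near the threshold.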

**Environmental Realism and Object Rendering**

Although VR does not rely on the real-world environment, creating believable virtual spaces is paramount. Rendering itself belongs to computer graphics, but computer vision contributes to its realism by capturing physical properties such as texture, lighting, and shadows from real scenes so that they can be simulated convincingly. Graphics techniques like ray tracing, increasingly performed in real time, are then employed to enhance the visual fidelity of virtual objects, ensuring that the digital world feels authentic.
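
The simplest building block behind simulated lighting is diffuse (Lambertian) shading, where a surface's brightness depends on the angle between its normal and the direction to the light. The sketch below is a generic textbook formulation, not a description of any specific engine; the function names and the `albedo` color representation (values from 0 to 1) are assumptions.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def lambert_diffuse(normal, to_light, albedo, light_intensity=1.0):
    """Diffuse color = albedo * intensity * max(0, n . l)."""
    n = normalize(normal)
    l = normalize(to_light)
    cos_theta = max(0.0, sum(a * b for a, b in zip(n, l)))
    return tuple(a * light_intensity * cos_theta for a in albedo)


# A surface facing the light receives full intensity; one facing away receives none.
lit = lambert_diffuse((0.0, 0.0, 1.0), (0.0, 0.0, 2.0), (0.8, 0.4, 0.2))
unlit = lambert_diffuse((0.0, 0.0, 1.0), (0.0, 0.0, -1.0), (0.8, 0.4, 0.2))
```

Ray tracing extends this idea by following light paths through the scene to add shadows and reflections, at a much higher computational cost per pixel.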

Conclusion: The Future of Immersive Technologies

As AR and VR technologies continue to evolve, computer vision will play an increasingly vital role in shaping the future of immersive experiences. The ongoing advancements in machine learning, artificial intelligence, and sensor technologies promise to enhance the capabilities of computer vision, enabling even more seamless and realistic interactions in AR and VR. By understanding the unique requirements of each, developers can continue to push the boundaries of what is possible, creating compelling immersive experiences that captivate and inspire users worldwide.

Image processing technologies—from semantic segmentation to photorealistic rendering—are driving the next generation of intelligent systems. For IP analysts and innovation scouts, identifying novel ideas before they go mainstream is essential.

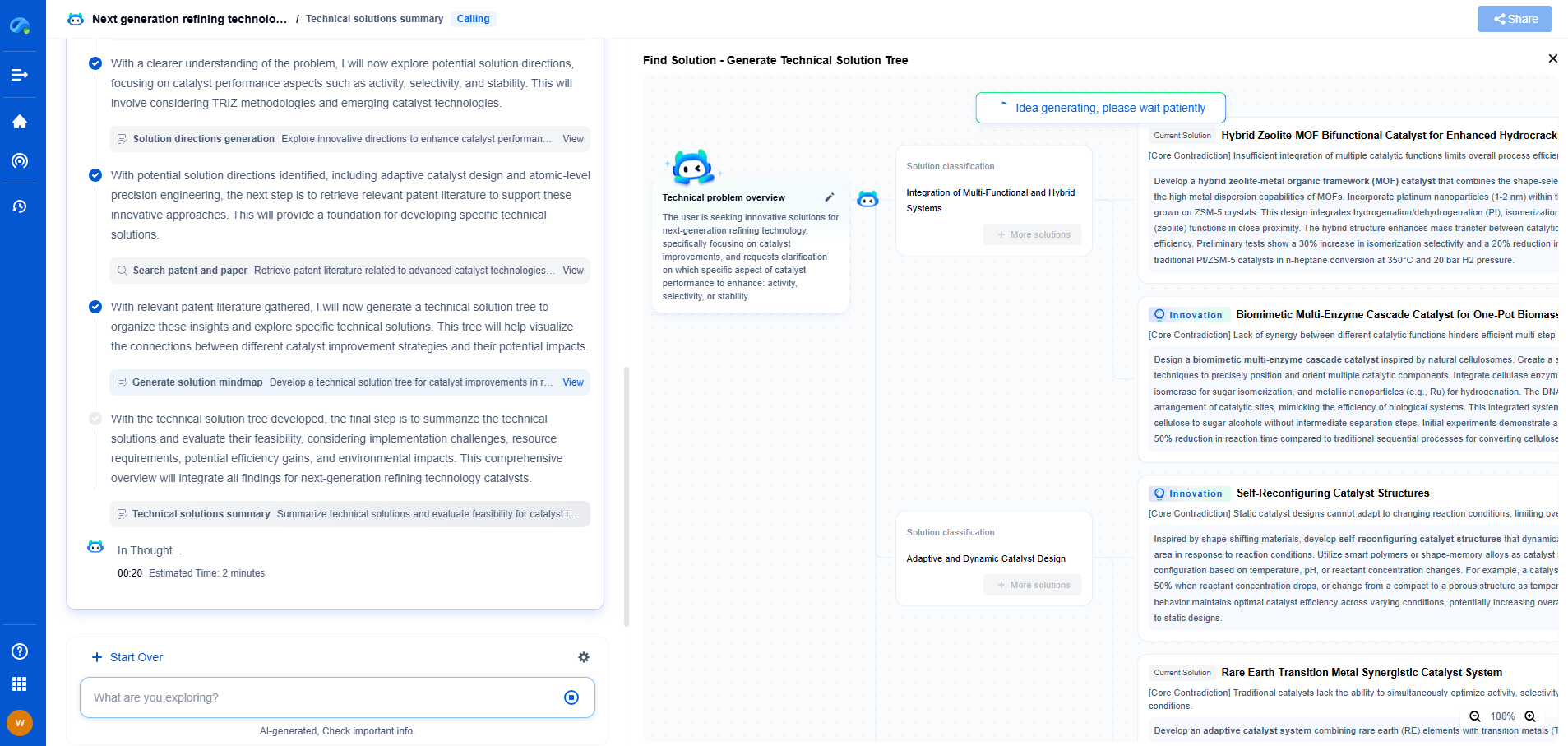

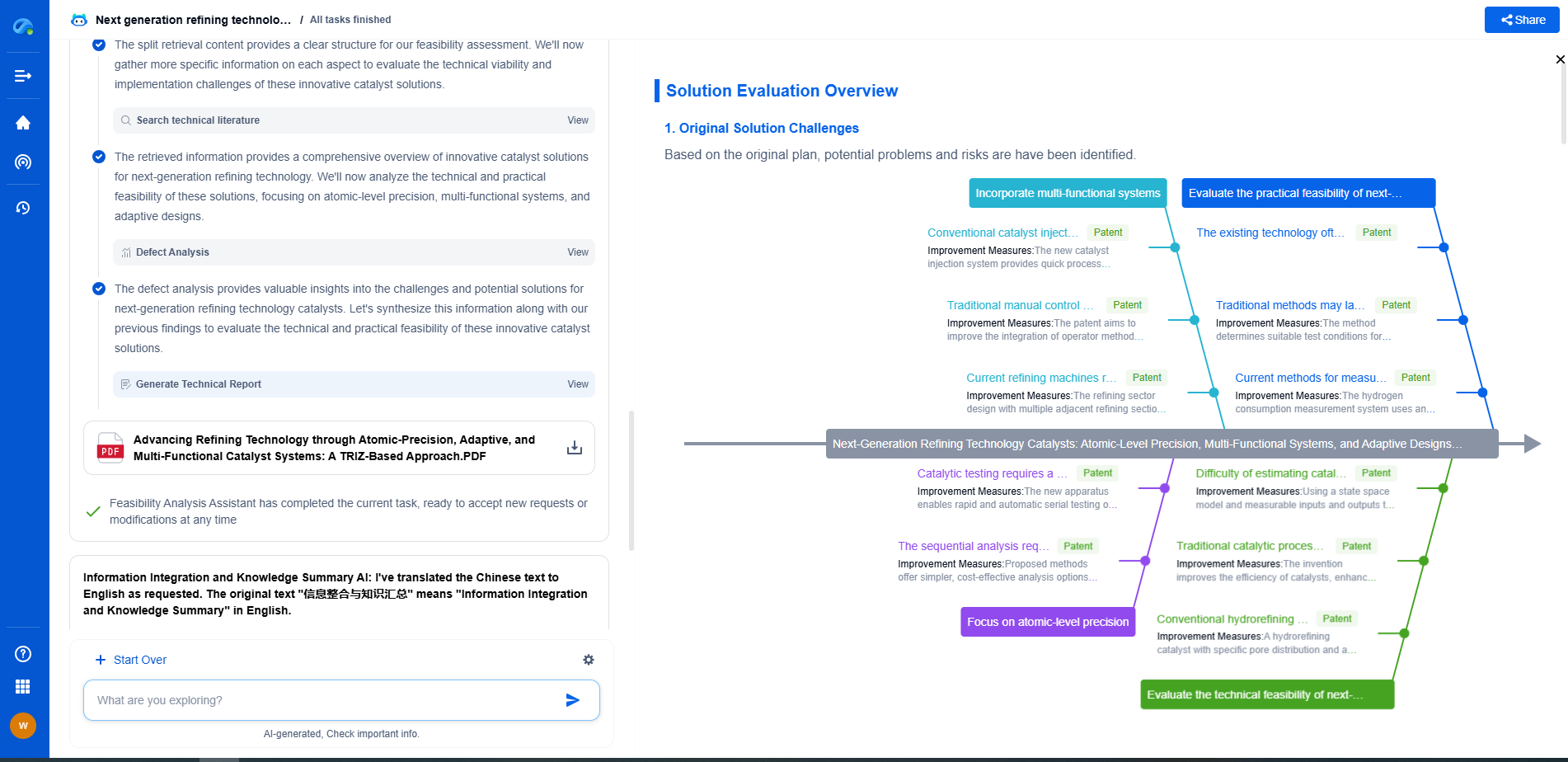

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

🎯 Try Patsnap Eureka now to explore the next wave of breakthroughs in image processing, before anyone else does.