Avoiding Black-Box Behavior in AI-Enhanced Control Agents

JUL 2, 2025

One of the most pressing challenges in artificial intelligence is avoiding so-called "black-box" behavior, particularly in AI-enhanced control agents. These agents are central to many applications, from autonomous vehicles to industrial automation systems. A "black-box" system is one whose internal workings are not transparent to the user, which makes it difficult to understand how decisions are made. This opacity can erode trust and create safety risks, especially in critical applications.

The Importance of Transparency

Transparency in AI systems is crucial for several reasons. First, it fosters trust among users, developers, and stakeholders. When users understand how an AI reaches its decisions, they are more likely to trust its recommendations or actions. Second, transparency is essential for debugging and improving AI systems. If developers can see how an AI agent processes inputs and produces outputs, they can more easily identify and fix errors. Lastly, transparency is critical for compliance with regulatory requirements, which are becoming increasingly stringent.

Strategies for Enhancing Transparency

1. **Explainable AI (XAI) Techniques**: One of the most promising approaches to avoiding black-box behavior is the use of explainable AI techniques. These methods aim to make AI systems more interpretable by providing clear, understandable explanations of how decisions are made. Inherently interpretable models such as decision trees and rule-based systems expose their logic directly, while model distillation approximates a complex model with a simpler one that is easier to inspect.

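2. **Model Simplification**: Simplifying models is another effective way to enhance transparency. While complex models like deep neural networks are powerful, they are often difficult to interpret. Using simpler models, or pairing a complex model with an interpretable one, can strike a balance between performance and transparency. Pruning, which removes parameters that contribute little to the output, can help achieve this. Ensemble methods are a less obvious fit: combining many models usually makes behavior harder to trace, so they support transparency mainly when the individual members are themselves interpretable.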

3. **Visualization Tools**: Visualization tools help users and developers understand the behavior of AI systems. By visualizing data flows, decision pathways, and the impact of different variables, they can gain insight into how the system operates. Heat maps, saliency maps, and partial dependence plots are commonly used to present model behavior in a comprehensible form.

4. **User-Friendly Interfaces**: Designing user-friendly interfaces that allow users to interact with AI systems can also enhance transparency. Interactive interfaces that let users query the system, inspect decision-making processes, and receive feedback enable a deeper understanding of how the AI functions. This interaction can demystify the AI's operations and lead to greater user acceptance.
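One idea from the list above, showing the impact of different variables on a decision, can be sketched without any plotting library. The example below is a minimal illustration, not a definitive implementation: `opaque_controller` is a hypothetical stand-in for a learned control policy, and the input names (`setpoint`, `speed`, `slope`) are assumptions made for the example. It estimates how strongly each input influences the controller's output at one operating point using central finite differences, which is the same quantity a saliency map or sensitivity plot would display.

```python
from typing import Callable, Dict

State = Dict[str, float]


def opaque_controller(state: State) -> float:
    """Hypothetical stand-in for a learned policy; returns a throttle command."""
    error = state["setpoint"] - state["speed"]
    return 0.8 * error - 0.1 * state["slope"] ** 2


def sensitivity(
    controller: Callable[[State], float], state: State, eps: float = 1e-4
) -> Dict[str, float]:
    """Estimate d(output)/d(input) for each input by central finite differences."""
    scores: Dict[str, float] = {}
    for name in state:
        up = dict(state)
        up[name] += eps
        down = dict(state)
        down[name] -= eps
        scores[name] = (controller(up) - controller(down)) / (2 * eps)
    return scores


# One operating point (illustrative values only).
state = {"setpoint": 30.0, "speed": 25.0, "slope": 2.0}
scores = sensitivity(opaque_controller, state)

# List inputs from most to least influential at this operating point.
for name, score in sorted(scores.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>8}: {score:+.3f}")
```

The scores are local: they describe the controller only near the chosen state, so a dashboard or interactive interface would typically recompute them as the operating point changes.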

Balancing Performance and Transparency

There is often a trade-off between performance and transparency. Highly accurate models, such as deep neural networks, can be less transparent because of their complexity. However, this does not mean that transparency should be sacrificed for performance. By employing the strategies described above, it is often possible to build AI systems that are both effective and transparent.
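The trade-off can be made measurable. The sketch below, under stated assumptions, distills a complex policy into a one-line rule and reports its fidelity, meaning the fraction of decisions on which the simple rule agrees with the original. `opaque_policy`, its coefficients, and the `temp`/`load` inputs are all hypothetical; a real project would substitute its own model and sampled operating data.

```python
import random
from typing import List, Tuple

Sample = Tuple[float, float]  # (temp, load)


def opaque_policy(temp: float, load: float) -> int:
    """Hypothetical black-box policy: 1 = turn cooling on, 0 = leave it off."""
    return 1 if (0.9 * temp + 0.3 * load + 0.002 * temp * load) > 80.0 else 0


def fit_threshold_rule(samples: List[Sample], labels: List[int]) -> Tuple[float, float]:
    """Fit the surrogate 'cooling on if temp > t' by trying each observed temp as t.

    Returns the best threshold and its fidelity to the black-box labels.
    """
    best_t, best_fidelity = 0.0, -1.0
    for t in sorted({temp for temp, _ in samples}):
        agree = sum(
            (1 if temp > t else 0) == label
            for (temp, _), label in zip(samples, labels)
        )
        fidelity = agree / len(samples)
        if fidelity > best_fidelity:
            best_t, best_fidelity = t, fidelity
    return best_t, best_fidelity


# Sample operating points and record what the black box decides for each.
rng = random.Random(0)
samples = [(rng.uniform(40, 100), rng.uniform(0, 50)) for _ in range(500)]
labels = [opaque_policy(temp, load) for temp, load in samples]

threshold, fidelity = fit_threshold_rule(samples, labels)
print(f"surrogate: cooling on if temp > {threshold:.1f} (fidelity {fidelity:.1%})")
```

Fidelity here is measured on the same samples used for fitting; a held-out set would give a more honest estimate. A fidelity below 100% quantifies what the simpler explanation leaves out, which helps decide whether the surrogate is good enough to show to operators or whether a richer interpretable model is needed.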

The Role of Regulation

Regulation plays a crucial role in ensuring that AI systems remain transparent and accountable. Regulatory bodies are increasingly mandating that AI systems, especially those used in sensitive or high-stakes areas, comply with certain transparency standards. Adhering to these regulations not only helps avoid legal issues but also promotes ethical AI development. It's vital for developers and companies to stay informed about regulatory changes and incorporate compliance into their design processes.

Conclusion

Avoiding black-box behavior in AI-enhanced control agents is not just a technical challenge but a moral and practical imperative. As AI continues to permeate various aspects of our lives, ensuring transparency in these systems will be key to gaining and maintaining trust. By leveraging explainable AI techniques, simplifying models, using visualization tools, designing interactive interfaces, and adhering to regulations, we can create AI systems that are both powerful and transparent. This balance will ultimately lead to safer, more reliable AI applications that benefit society as a whole.

Ready to Reinvent How You Work on Control Systems?

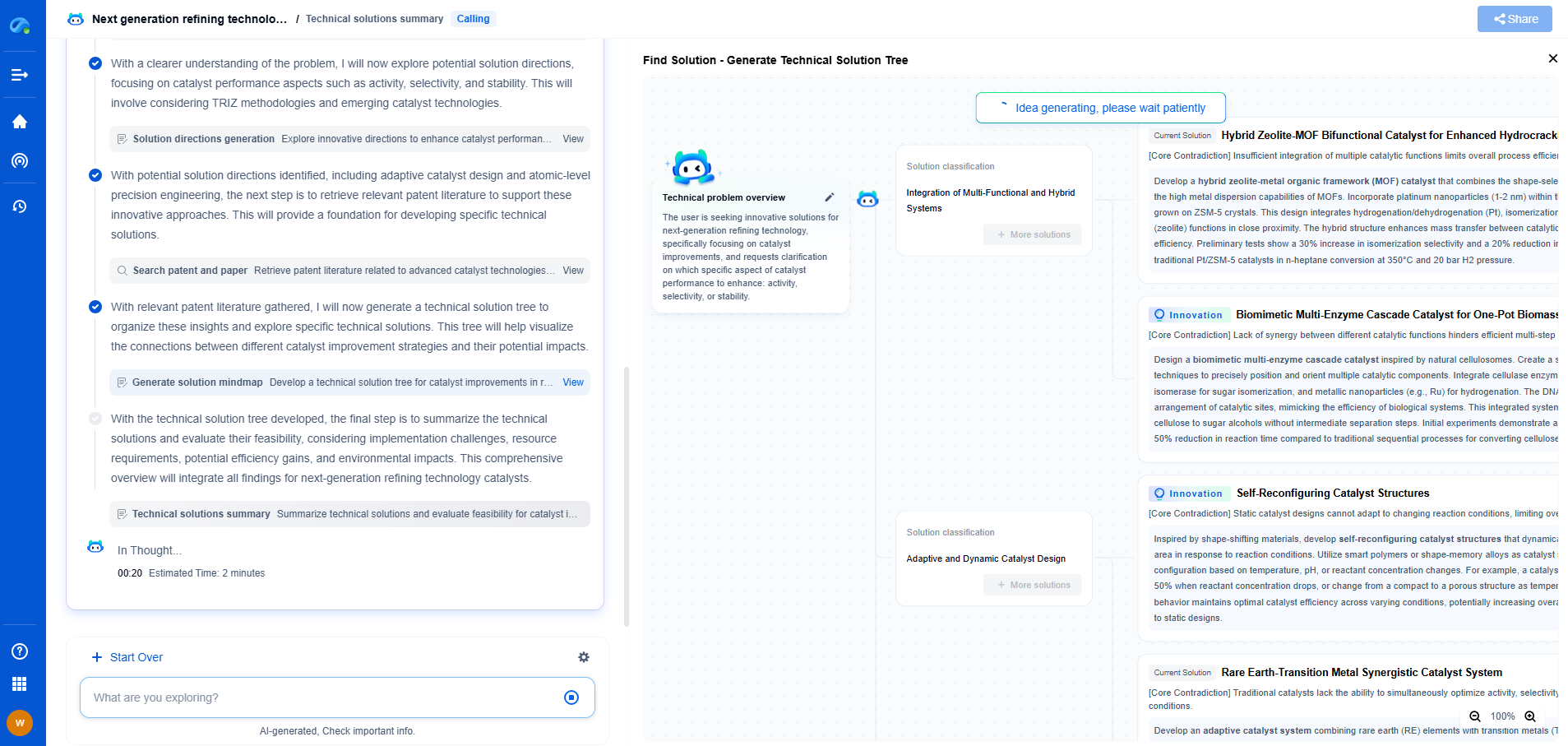

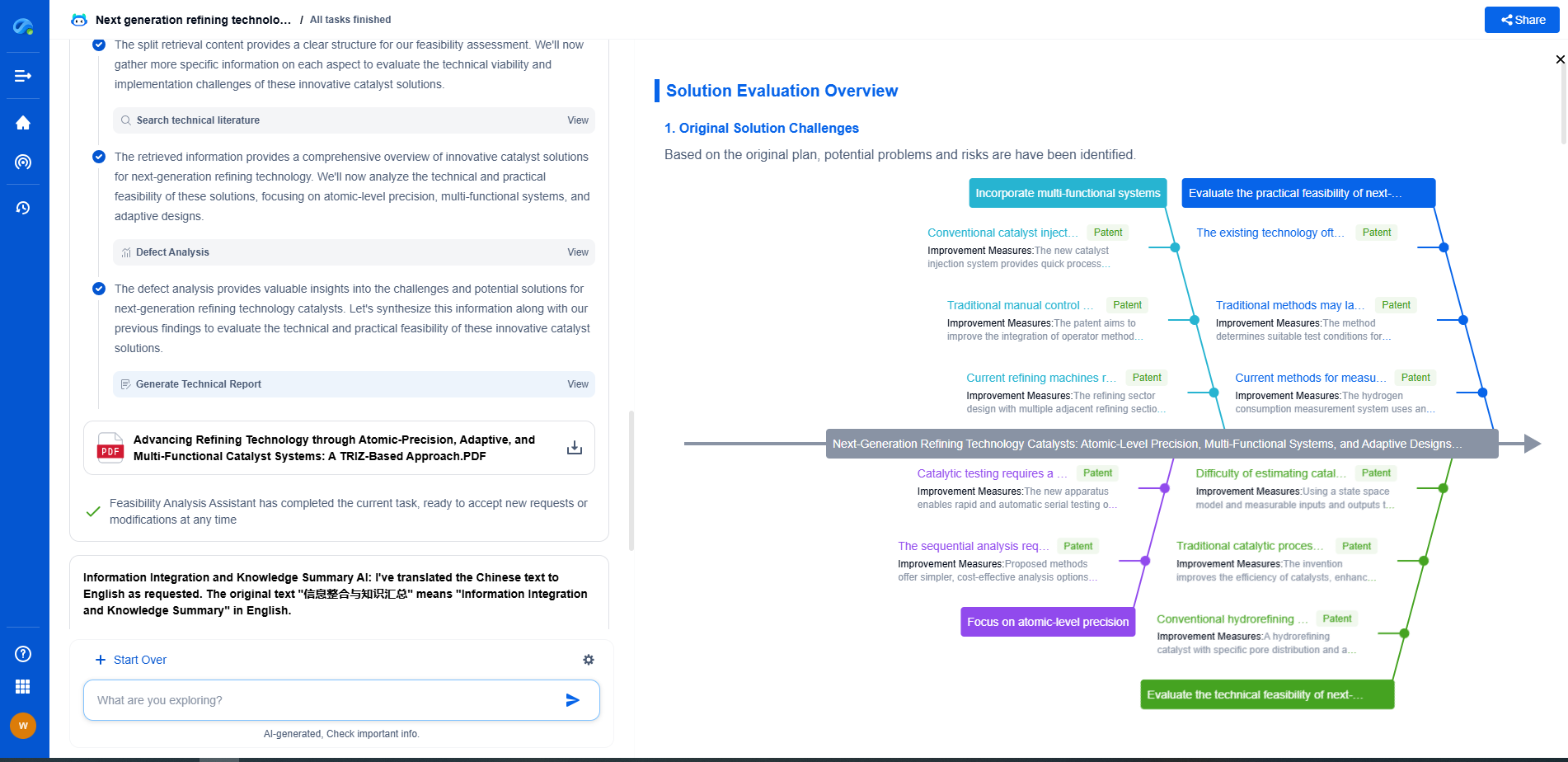

Designing, analyzing, and optimizing control systems involves complex decision-making, from selecting the right sensor configurations to ensuring robust fault tolerance and interoperability. If you’re spending countless hours digging through documentation, standards, patents, or simulation results — it's time for a smarter way to work.

Patsnap Eureka is your intelligent AI Agent, purpose-built for R&D and IP professionals in high-tech industries. Whether you're developing next-gen motion controllers, debugging signal integrity issues, or navigating complex regulatory and patent landscapes in industrial automation, Eureka helps you cut through technical noise and surface the insights that matter—faster.

👉 Experience Patsnap Eureka today — Power up your Control Systems innovation with AI intelligence built for engineers and IP minds.