Class Imbalance in mAP: Strategies for Rare Object Detection

JUL 10, 2025

Object detection is a core computer vision task with applications ranging from autonomous driving to medical imaging. A prevalent challenge is class imbalance, especially in rare object detection: when some object classes have far fewer instances than others, both training and performance metrics such as mean Average Precision (mAP) are affected. This blog explores strategies for addressing class imbalance in mAP, with a focus on detecting rare objects effectively.

Understanding Class Imbalance in Object Detection

Class imbalance arises when some classes, often those representing rare objects, have far fewer annotated instances than others. The imbalance biases training: the learning signal is dominated by common classes, so the model becomes well tuned to them and less effective at detecting rare objects. In the context of mAP, rare classes typically yield few true positives and therefore low per-class scores, which lowers the overall result. Addressing class imbalance is thus crucial for fair evaluation and for improved detection of all objects, irrespective of their frequency.

The Impact on Mean Average Precision (mAP)

Mean Average Precision is a widely used metric for evaluating object detection models. For each class, Average Precision (AP) summarizes the precision-recall curve obtained by ranking detections by confidence; mAP is the unweighted mean of AP over all classes. Because every class counts equally, a rare class with poor AP pulls mAP down as much as a frequent class would, and its AP is also noisier because it is estimated from only a few ground-truth instances. At the same time, a single mAP figure can hide the problem: a model may score well on the many frequent classes and fail almost entirely on a few rare ones while still reporting a respectable average. Reporting per-class AP alongside mAP, and adopting strategies that specifically target rare objects, gives a more complete evaluation.
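To make the averaging concrete, here is a minimal sketch of per-class AP and mAP in plain Python. It assumes each detection has already been matched against the ground truth at some IoU threshold and marked as a true or false positive; the function names, the class names, and the toy numbers are illustrative, and the all-point interpolation used here is only one of several conventions that benchmarks apply.

```python
def average_precision(scored_hits, num_gt):
    """AP for one class.

    scored_hits: list of (confidence, is_true_positive), one per detection.
    num_gt: number of ground-truth boxes of this class.
    """
    if num_gt == 0:
        return None  # class absent from the evaluation set, so it is skipped
    ranked = sorted(scored_hits, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for _, hit in ranked:
        if hit:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_gt)
        precisions.append(tp / (tp + fp))
    # Precision envelope: make precision non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Area under the enveloped precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class):
    """per_class: dict mapping class name -> (scored_hits, num_gt)."""
    aps = {c: average_precision(hits, n) for c, (hits, n) in per_class.items()}
    valid = [v for v in aps.values() if v is not None]
    mean_ap = sum(valid) / len(valid) if valid else None
    return mean_ap, aps


# Toy example: a frequent class detected perfectly, a rare class detected poorly.
per_class = {
    "car": ([(0.9, True), (0.8, True)], 2),
    "scooter": ([(0.9, False), (0.5, True)], 2),
}
map_score, per_class_ap = mean_average_precision(per_class)
print(per_class_ap, map_score)
```

In this toy case the rare class has an AP of 0.25 against 1.0 for the frequent class, and the mAP of 0.625 on its own does not reveal which class is responsible.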

Strategies for Addressing Class Imbalance

1. Data Augmentation

Data augmentation artificially increases the size and variety of a dataset. Transformations such as rotation, scaling, and flipping generate additional samples of rare objects; applied preferentially to images that contain rare classes, they help rebalance the dataset. For detection, each geometric transformation must also be applied to the bounding-box annotations so that labels stay aligned with the image. Augmentation helps prevent overfitting to the few available rare examples and exposes the model to a wider variety of contexts, improving its ability to detect rare objects.
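As a small illustration of keeping annotations aligned, the sketch below flips an image horizontally together with its boxes. It assumes the image is a list of pixel rows and that boxes are `(x_min, y_min, x_max, y_max)` tuples measured in pixel edges from the top-left corner; the function name `hflip` and the toy data are hypothetical.

```python
def hflip(image, boxes):
    """Flip an image left-to-right and mirror its bounding boxes.

    image: list of rows, each row a list of pixel values.
    boxes: list of (x_min, y_min, x_max, y_max) in pixel-edge coordinates.
    """
    width = len(image[0])
    flipped_image = [row[::-1] for row in image]
    # Mirroring swaps the roles of x_min and x_max; y is unchanged.
    flipped_boxes = [
        (width - x_max, y_min, width - x_min, y_max)
        for (x_min, y_min, x_max, y_max) in boxes
    ]
    return flipped_image, flipped_boxes


# A 2x4 toy image with one object in the left-most column.
image = [[1, 0, 0, 0],
         [1, 0, 0, 0]]
boxes = [(0, 0, 1, 2)]
new_image, new_boxes = hflip(image, boxes)
print(new_image, new_boxes)
```

After the flip, the object and its box both sit in the right-most column, so the annotation still describes the same pixels.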

2. Resampling Techniques

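Resampling involves either oversampling the minority classes or undersampling the majority classes. In object detection, oversampling is usually done at the image level, by drawing images that contain rare objects more often during training. Synthetic oversampling methods such as SMOTE (Synthetic Minority Over-sampling Technique) create new samples by interpolating between minority-class feature vectors; they were designed for tabular features and do not transfer directly to images with bounding-box annotations. Undersampling, on the other hand, reduces the number of instances of frequent classes to bring them closer to the rare ones, at the cost of discarding potentially valuable data.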
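One way to oversample at the image level is to give each image a repeat factor based on the rarest class it contains. The sketch below follows the repeat-factor idea used with long-tailed detection datasets, where a class that appears in a fraction f of images gets a factor max(1, sqrt(t / f)). The threshold value, function name, and toy labels are assumptions chosen for illustration.

```python
import math
from collections import Counter


def repeat_factors(image_labels, threshold):
    """Per-image repeat factors that favor images containing rare classes.

    image_labels: list of class-name lists, one list per image.
    threshold: classes present in fewer than this fraction of images
               are oversampled (hypothetical value, tune per dataset).
    """
    num_images = len(image_labels)
    # Count how many images contain each class at least once.
    image_count = Counter(c for labels in image_labels for c in set(labels))
    class_factor = {
        c: max(1.0, math.sqrt(threshold / (n / num_images)))
        for c, n in image_count.items()
    }
    # An image is repeated according to its rarest class.
    return [
        max(class_factor[c] for c in labels) if labels else 1.0
        for labels in image_labels
    ]


image_labels = [["car"], ["car"], ["car"], ["car", "scooter"]]
factors = repeat_factors(image_labels, threshold=0.5)
print(factors)
```

A fractional factor such as 1.41 can be realized by repeating the image once and adding a second copy with probability 0.41 in each epoch.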

3. Cost-sensitive Learning

Cost-sensitive learning builds class imbalance into the training objective by assigning a different cost to errors on each class. Penalizing mistakes on rare objects more heavily than mistakes on frequent ones pushes the model to improve on the rarer classes. The costs need careful calibration: weights that are too large can trade missed rare objects for extra false positives on those same classes.
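A simple form of cost-sensitive learning is a class-weighted cross-entropy. The sketch below assumes inverse-frequency weights of the form N / (K * n_c), a common heuristic rather than the only choice, and shows the classification term only; the helper names and instance counts are made up for illustration.

```python
import math


def balanced_class_weights(instance_counts):
    """Inverse-frequency weights: total / (num_classes * count)."""
    total = sum(instance_counts.values())
    num_classes = len(instance_counts)
    return {c: total / (num_classes * n) for c, n in instance_counts.items()}


def weighted_cross_entropy(probs, target, class_weights):
    """Cross-entropy for one example, scaled by the weight of its true class.

    probs: dict mapping class name -> predicted probability.
    target: name of the true class.
    """
    return -class_weights[target] * math.log(probs[target])


weights = balanced_class_weights({"car": 900, "scooter": 100})

# The same level of confidence in the true class costs more for the rare class.
loss_car = weighted_cross_entropy({"car": 0.6, "scooter": 0.4}, "car", weights)
loss_scooter = weighted_cross_entropy({"car": 0.4, "scooter": 0.6}, "scooter", weights)
print(weights, loss_car, loss_scooter)
```

With these counts the rare class receives a weight of 5.0 against roughly 0.56 for the frequent class, so an equally confident mistake is penalized about nine times more.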

4. Advanced Loss Functions

Custom loss functions can tackle class imbalance by changing how much each example contributes to the training signal. Focal loss, for instance, scales the cross-entropy loss by a factor that shrinks as the model's confidence in the correct class grows, so easy, well-classified examples contribute little and hard examples dominate. It was introduced to handle the extreme foreground-background imbalance in dense detectors, and because rare objects tend to be hard examples, it often helps them as well. This encourages the model to pay more attention to challenging cases, which can improve detection across all classes.
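For a single binary prediction, focal loss can be written as FL(p_t) = -alpha_t * (1 - p_t)^gamma * log(p_t), where p_t is the probability assigned to the true label. The sketch below is a plain-Python version of that formula; the defaults gamma = 2 and alpha = 0.25 are commonly cited starting points and should be treated as assumptions to tune, not fixed values.

```python
import math


def focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Binary focal loss for one prediction.

    p: predicted probability of the positive class, strictly between 0 and 1.
    y: true label, 1 (object) or 0 (background).
    """
    p_t = p if y == 1 else 1.0 - p            # probability of the true label
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


easy = focal_loss(0.9, 1)   # confident and correct: heavily down-weighted
hard = focal_loss(0.1, 1)   # confident and wrong: keeps most of its loss
print(easy, hard)
```

With gamma = 2, an example predicted at p_t = 0.9 keeps only 1% of its cross-entropy loss, while one at p_t = 0.1 keeps 81%; setting gamma = 0 recovers alpha-weighted cross-entropy.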

5. Ensemble Methods

Ensemble methods combine the predictions of multiple models to improve robustness and accuracy. By training several models with different subsets of the data or using different architectures, the ensemble can capture a wider range of features and patterns. This diversity helps in mitigating the biases introduced by class imbalance, leading to better detection performance for rare objects.
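A very reduced sketch of score-level ensembling is shown below. It assumes the detections from each model have already been matched to the same candidate box, which in practice requires a box-matching or fusion step that is not shown, and it simply takes a weighted average of the per-class scores. The function name, the model weights, and the scores are hypothetical.

```python
def ensemble_scores(model_scores, model_weights=None):
    """Weighted average of per-class scores for one matched candidate box.

    model_scores: list of dicts, one per model, mapping class name -> score.
    model_weights: optional list of non-negative weights, one per model.
    """
    if model_weights is None:
        model_weights = [1.0] * len(model_scores)
    total = sum(model_weights)
    classes = set().union(*model_scores)
    # A class missing from one model's output is treated as score 0.
    return {
        c: sum(w * s.get(c, 0.0) for w, s in zip(model_weights, model_scores)) / total
        for c in classes
    }


# Model A was trained on the raw data, model B on a rebalanced subset.
model_a = {"car": 0.7, "scooter": 0.3}
model_b = {"car": 0.4, "scooter": 0.6}
fused = ensemble_scores([model_a, model_b])
print(fused)
```

Giving a larger weight to the model trained on rebalanced data shifts the fused scores toward the rare class, which is one way to control how much the ensemble compensates for imbalance.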

Conclusion

Addressing class imbalance in mAP is essential for developing reliable and fair object detection models. By employing strategies such as data augmentation, resampling, cost-sensitive learning, advanced loss functions, and ensemble methods, researchers can enhance the detection capabilities of models, particularly for rare objects. As the field of computer vision continues to evolve, ongoing exploration and innovation in handling class imbalance will remain crucial for advancing the performance and applicability of object detection systems across various domains.

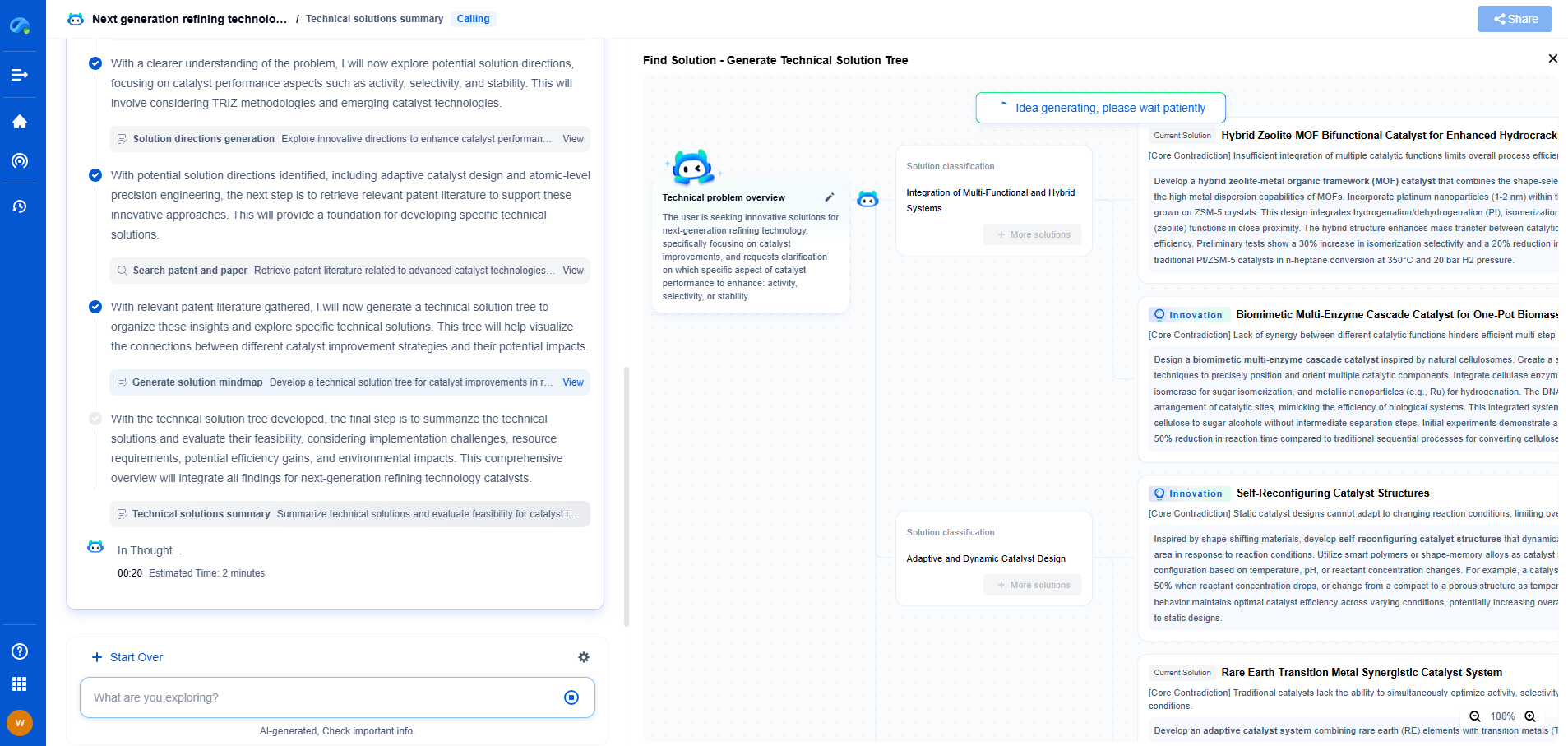

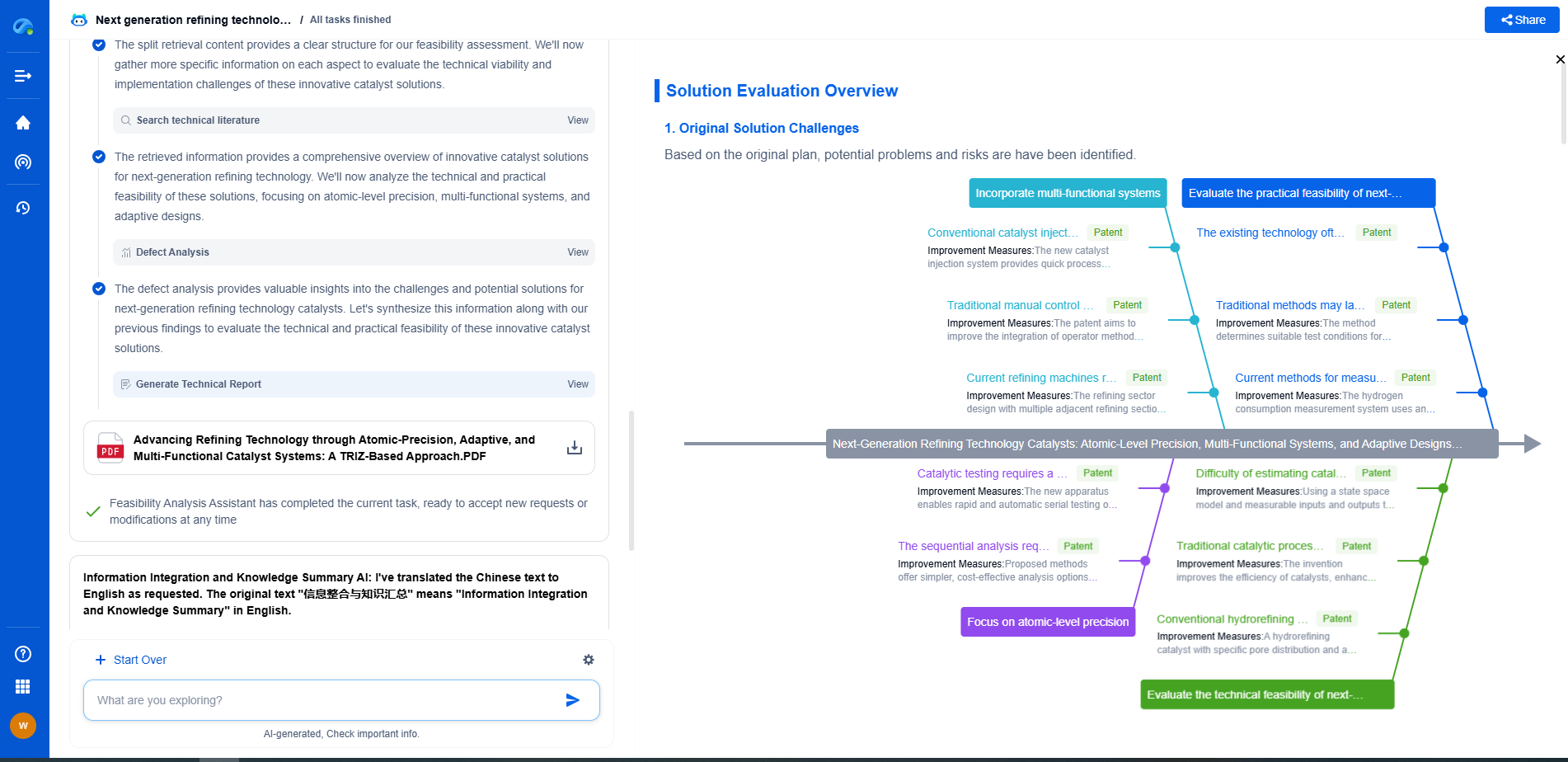
