Common Pitfalls in RF Performance Benchmarking – and How to Avoid Them

JUL 7, 2025

In the fast-evolving world of wireless communication, radio frequency (RF) performance benchmarking is crucial for evaluating the effectiveness of various network solutions. However, this process is fraught with potential pitfalls that can lead to misleading results, wasted resources, and misguided strategic decisions. This article explores some common pitfalls in RF performance benchmarking and offers practical guidance on how to avoid them, ensuring accurate and reliable outcomes.

Insufficient Planning and Objective Definition

One of the most fundamental mistakes in RF performance benchmarking is embarking on the process without a clear plan or set of objectives. Often, teams dive into testing without fully understanding what they are trying to measure or achieve. This lack of direction can lead to collecting irrelevant data and drawing incorrect conclusions.

To avoid this, it is crucial to define your benchmarking objectives clearly from the outset. Ask yourself what specific aspects of RF performance are most important for your network's success. Are you primarily concerned with throughput, latency, signal strength, or coverage? Establishing these goals early will help you focus your efforts and resources effectively.
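One way to make objectives concrete is to write them down as explicit pass/fail targets before any testing starts. The sketch below is only an illustration: the `Objective` class, the metric names, and every threshold value are hypothetical placeholders rather than recommended targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Objective:
    """A single benchmarking goal (hypothetical structure for illustration)."""
    metric: str
    target: float
    higher_is_better: bool

    def is_met(self, measured: float) -> bool:
        # Throughput-style metrics must reach the target; latency-style must stay under it.
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


# Illustrative targets only; real values depend on your network and use case.
OBJECTIVES = [
    Objective("throughput_mbps", 50.0, higher_is_better=True),
    Objective("latency_ms", 30.0, higher_is_better=False),
    Objective("rsrp_dbm", -100.0, higher_is_better=True),
]


def evaluate(measurements: dict) -> dict:
    """Return a pass/fail flag per objective for one set of measured values."""
    return {o.metric: o.is_met(measurements[o.metric]) for o in OBJECTIVES}
```

Writing targets in this form forces the team to agree on which metrics matter and what counts as success before data collection begins.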

Neglecting Real-World Conditions

Another common pitfall is conducting tests in controlled environments that do not accurately reflect real-world conditions. While laboratory tests can provide valuable insights, they often fail to account for variables like interference, topographical challenges, and environmental factors that affect RF performance in the field.

To mitigate this issue, incorporate field tests into your benchmarking strategy. Capture real-world behavior as closely as possible by testing in a variety of locations and conditions. Use data from these field tests to validate and refine your lab results, so that they remain applicable and reliable in practical settings.
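A simple way to validate lab results against the field is to compare paired measurements taken under nominally matching test cases. The following is a minimal sketch, assuming you have matched lab and field signal-strength readings in dBm; the function name and the sample values are hypothetical.

```python
from statistics import mean


def lab_field_offset(lab_dbm, field_dbm):
    """Return (mean offset, worst-case absolute offset) in dB between paired readings.

    A negative mean offset means the field readings are weaker than the lab ones.
    """
    if not lab_dbm or len(lab_dbm) != len(field_dbm):
        raise ValueError("need two non-empty lists of equal length")
    diffs = [field - lab for lab, field in zip(lab_dbm, field_dbm)]
    return mean(diffs), max(abs(d) for d in diffs)


# Hypothetical paired readings for three matched test cases.
lab = [-80.0, -90.0, -100.0]
field = [-84.0, -95.0, -103.0]
offset, worst = lab_field_offset(lab, field)
print(f"mean offset: {offset:.1f} dB, worst case: {worst:.1f} dB")
```

A consistently large offset suggests the lab setup is missing a real-world factor such as interference or terrain, and that lab figures should be adjusted or treated with caution.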

Inadequate Sample Size and Data Collection

A small sample size or insufficient data collection can significantly skew your benchmarking results. Testing too few devices, locations, or time periods can lead to inaccurate conclusions that do not represent the true performance of your network.

To avoid this pitfall, collect enough data to support statistically meaningful conclusions. Define a sampling strategy that covers different devices, geographic areas, and times of day, and decide in advance how many measurements each combination needs. A larger and more diverse data set narrows the uncertainty in your results and makes your conclusions more reliable.
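As a rough guide to how much data is enough, the standard sample-size formula for estimating a mean, n = (z·σ / E)², can be applied to a metric such as signal strength. The sketch below assumes independent, roughly normally distributed samples. RF measurements are often correlated in time and space, so treat the result as a lower bound. The function name and the example numbers are illustrative assumptions.

```python
import math
from statistics import NormalDist


def required_sample_size(std_dev, margin_of_error, confidence=0.95):
    """Samples needed so the mean estimate falls within +/- margin_of_error.

    Assumes independent samples; std_dev and margin_of_error share the same unit.
    """
    if std_dev <= 0 or margin_of_error <= 0:
        raise ValueError("std_dev and margin_of_error must be positive")
    # Two-sided z-score for the requested confidence level.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return math.ceil((z * std_dev / margin_of_error) ** 2)


# Example: readings with an assumed 6 dB standard deviation, targeting +/- 1 dB.
print(required_sample_size(std_dev=6.0, margin_of_error=1.0))
```

Halving the margin of error roughly quadruples the number of samples required, which is worth knowing before committing to a drive-test budget.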

Overlooking Test Equipment Calibration

Calibration errors in testing equipment can introduce significant inaccuracies into your benchmarking results. Over time, equipment can drift out of specification, leading to erroneous data that can misinform network decisions.

Regularly calibrate your testing equipment according to the manufacturer's specifications to prevent this. Document calibration procedures and maintain a calibration schedule so that every instrument stays within specification. This diligence helps maintain the integrity of your benchmarking efforts.
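A calibration schedule can be as simple as a list of instruments with their last calibration date and interval, checked before each test campaign. The sketch below is only an illustration: the instrument names, dates, and 365-day intervals are hypothetical, and actual intervals should come from the manufacturer's documentation.

```python
from datetime import date, timedelta

# Hypothetical inventory: (instrument name, last calibration date, interval in days).
INSTRUMENTS = [
    ("spectrum_analyzer", date(2024, 9, 1), 365),
    ("signal_generator", date(2025, 3, 15), 365),
]


def overdue_instruments(instruments, today):
    """Return the names of instruments whose calibration interval has elapsed."""
    return [
        name
        for name, last_calibrated, interval_days in instruments
        if today > last_calibrated + timedelta(days=interval_days)
    ]


# Check the inventory against a chosen reference date before testing.
print(overdue_instruments(INSTRUMENTS, today=date(2025, 10, 1)))
```

Running a check like this at the start of a campaign makes it harder for an out-of-date instrument to slip into the measurement chain unnoticed.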

Failure to Account for Network Traffic Patterns

Network traffic patterns can vary significantly throughout the day and week, affecting RF performance. Benchmarking only at unrepresentative times produces results that do not reflect typical network conditions and can lead to misguided decisions.

To counteract this, conduct benchmarking at various times, including peak and off-peak periods, to capture a full picture of network performance. Analyze traffic patterns to understand how they correlate with RF metrics and adjust your benchmarking strategy accordingly.
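To see how much time of day matters, measurements can be grouped into peak and off-peak buckets and summarized separately. This is a minimal sketch: the 17:00 to 21:59 peak window, the function name, and the sample throughput values are assumptions for illustration, and the real peak window should come from your own traffic analysis.

```python
from collections import defaultdict
from statistics import mean

# Assumed peak window (hours 17 through 21); adjust to your network's traffic profile.
PEAK_HOURS = range(17, 22)


def summarize_by_period(samples):
    """Average throughput per period from (hour_of_day, throughput_mbps) pairs."""
    buckets = defaultdict(list)
    for hour, throughput_mbps in samples:
        period = "peak" if hour in PEAK_HOURS else "off_peak"
        buckets[period].append(throughput_mbps)
    return {period: mean(values) for period, values in buckets.items()}


# Hypothetical measurements taken at different hours of the day.
samples = [(9, 80.0), (10, 90.0), (18, 40.0), (20, 50.0)]
print(summarize_by_period(samples))
```

Reporting the two averages side by side, rather than one blended figure, shows whether a benchmark result holds up under load.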

Ignoring the Impact of New Technologies

With the rapid advancement of wireless technologies, new protocols and standards are continually being introduced. Failing to account for them can leave you with outdated benchmarking methods and results that do not reflect current capabilities.

Stay informed about the latest trends and technologies in RF performance. Incorporate new tools, techniques, and standards into your benchmarking process to ensure your evaluations remain relevant and forward-looking. Regularly update your benchmarking criteria to reflect these advancements.

Conclusion

RF performance benchmarking is a vital process for understanding and improving wireless network performance, but the pitfalls described above can undermine the reliability and usefulness of its results. You can avoid them by taking a structured approach: define clear objectives, incorporate real-world conditions, collect enough data, keep equipment calibrated, account for network traffic patterns, and stay abreast of technological advancements. Doing so will enable you to produce accurate, meaningful insights that drive effective decision-making and enhance network performance.

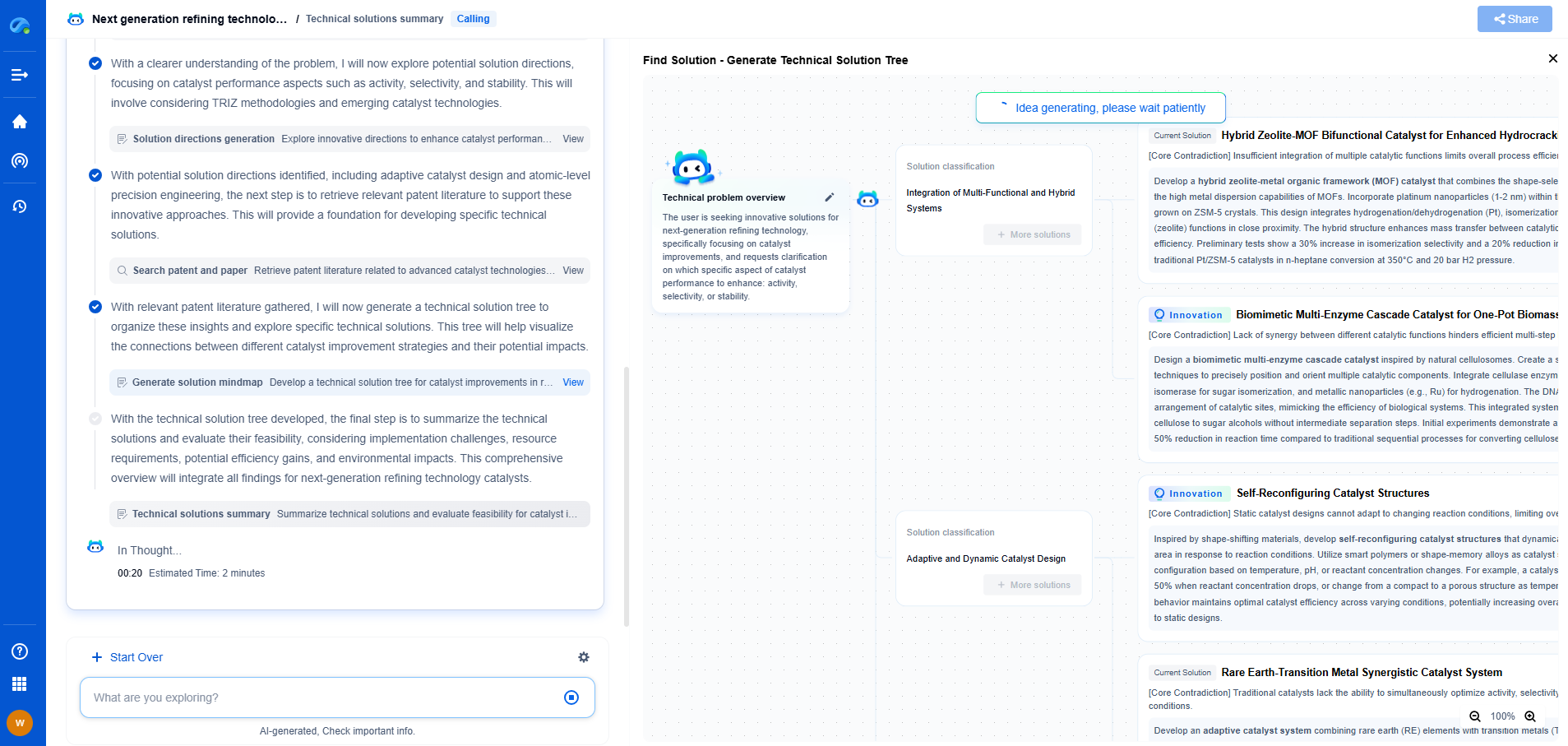

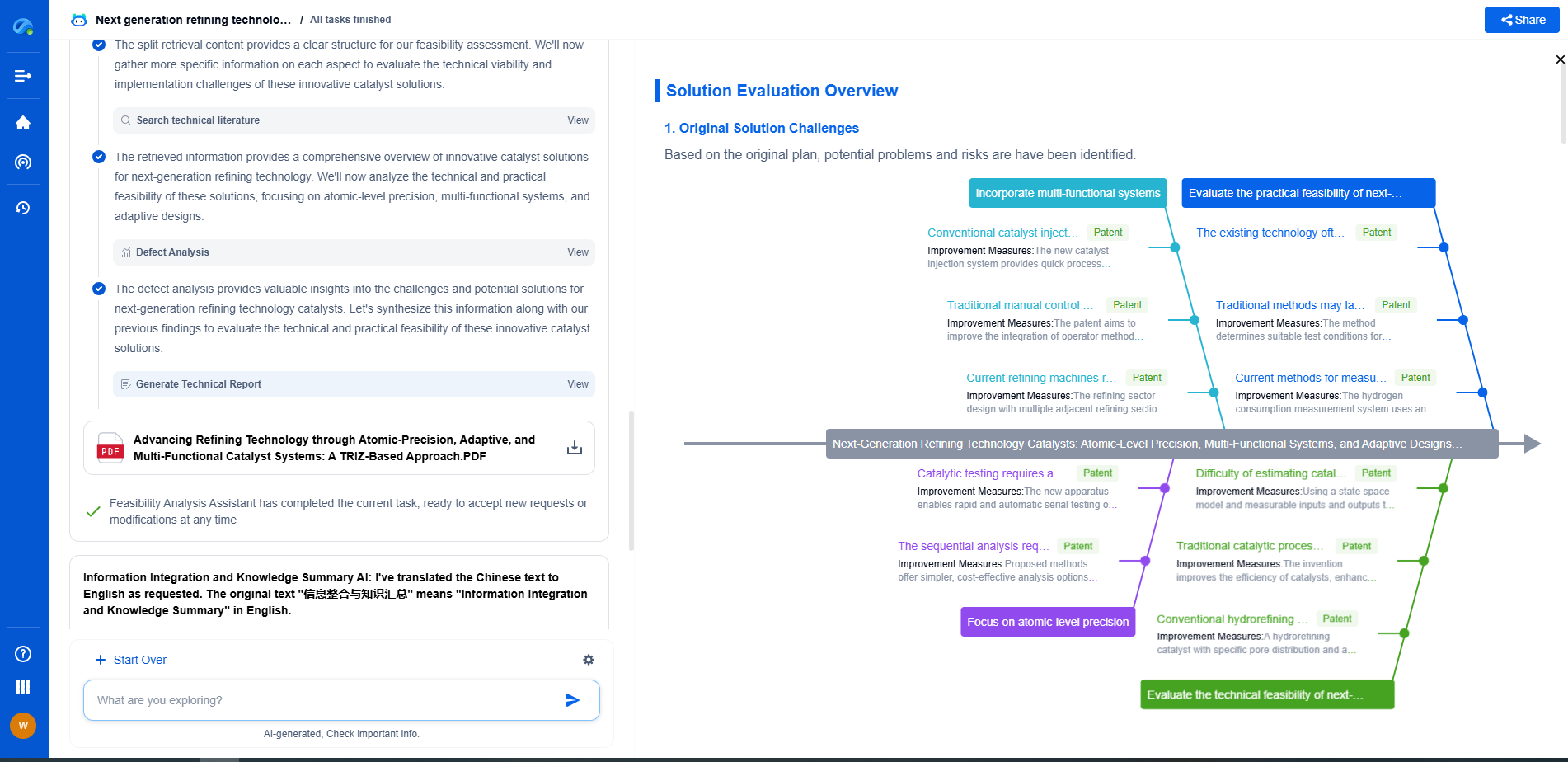
