Debugging TensorRT Engine Failures: Common Pitfalls and Fixes

July 10, 2025

TensorRT, developed by NVIDIA, is a high-performance deep learning inference optimizer and runtime library designed to accelerate trained models in production environments. It can significantly reduce latency and increase throughput, which makes it a common tool for deploying AI solutions at scale. Achieving those gains, however, often means navigating pitfalls that arise while building an engine from a trained model and while executing it.

Common Pitfalls Behind TensorRT Engine Failures

1. Model Conversion Issues

One of the most frequent issues arises when converting a model from its original framework (such as TensorFlow or PyTorch) into a TensorRT engine, which is commonly done by exporting the model to ONNX and building the engine with TensorRT's ONNX parser. If the model contains operations that TensorRT does not support, or supports only under certain constraints (for example, particular data types or attribute values), parsing or engine building fails. Check that the model uses only layers and operations that TensorRT fully supports before attempting the conversion.
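A quick pre-conversion check can flag likely problems early. The sketch below assumes you already have the list of op types in the exported graph (with the `onnx` package, `[n.op_type for n in onnx.load(path).graph.node]` produces one). `SUPPORTED_OPS` is a small hypothetical subset standing in for the real support list for your TensorRT version, and the op names in `graph_ops`, including `CustomScatter`, are illustrative.

```python
from collections import Counter

# HYPOTHETICAL subset of supported op types, for illustration only.
# Replace with the real operator list for your TensorRT version.
SUPPORTED_OPS = {"Conv", "Relu", "Add", "MatMul", "Gemm", "Reshape", "Softmax", "MaxPool"}


def find_unsupported_ops(op_types, supported=SUPPORTED_OPS):
    """Count how often each op type outside `supported` appears in the graph."""
    return Counter(op for op in op_types if op not in supported)


# Op types as they might be read from an exported graph (illustrative names).
graph_ops = ["Conv", "Relu", "Conv", "Add", "CustomScatter", "Softmax", "CustomScatter"]

for op, count in sorted(find_unsupported_ops(graph_ops).items()):
    print(f"unsupported op {op}: {count} occurrence(s)")
```

Anything this reports is a candidate for replacement with supported operations or for a custom plugin.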

2. Precision Mismatches

TensorRT can run layers in FP32, FP16, or INT8 precision to improve performance, but not every model tolerates reduced precision. FP16 has a much narrower range than FP32 (its largest finite value is 65504), so large intermediate activations can overflow, and INT8 needs scale factors that map real values onto 8-bit integers. When those ranges are wrong, accuracy degrades even though the engine builds and runs. When using INT8, calibrate the model with a representative dataset and compare the reduced-precision engine's accuracy against the FP32 baseline to confirm it still meets your requirements.
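The following plain-Python sketch (not TensorRT code) illustrates two ways reduced precision goes wrong: values that overflow FP16's range, whose largest finite value is 65504, and symmetric INT8 quantization with a scale derived from a badly chosen range. The helper names and sample numbers are hypothetical; the point is that a range of 100.0 for activations that never exceed 2.5 produces far larger errors than a range that matches the data.

```python
FP16_MAX = 65504.0  # largest finite IEEE 754 half-precision value


def fp16_overflow_count(values):
    """Number of values whose magnitude cannot be represented in FP16."""
    return sum(1 for v in values if abs(v) > FP16_MAX)


def int8_scale(range_max):
    """Symmetric scale that maps [-range_max, range_max] onto [-127, 127]."""
    return range_max / 127.0


def quantize_dequantize(x, scale):
    """Round to the nearest INT8 level (clamped to [-127, 127]) and map back."""
    q = max(-127, min(127, round(x / scale)))
    return q * scale


def max_abs_error(values, scale):
    return max(abs(v - quantize_dequantize(v, scale)) for v in values)


activations = [0.05, -0.8, 1.2, 2.5]  # hypothetical sample activations
good = int8_scale(max(abs(v) for v in activations))  # range matches the data
bad = int8_scale(100.0)                               # range far too wide

print("FP16 overflows:", fp16_overflow_count([1.0, 70000.0, -3.5]))
print("max error, matched range:", max_abs_error(activations, good))
print("max error, oversized range:", max_abs_error(activations, bad))
```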

3. Inadequate Resource Allocation

Another common issue is inadequate resource allocation. TensorRT engines run on NVIDIA GPUs and need enough GPU memory for weights, intermediate activations, and scratch workspace, plus enough compute to meet latency targets. Insufficient memory can make engine building or execution fail outright, while insufficient compute shows up as poor performance. Make sure the deployment environment has the hardware capacity, especially GPU memory, that the engine requires.
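As a rough first check, you can estimate the memory needed just to hold weights and activations from their shapes. This is a simplified sketch: the shapes are hypothetical, and the `headroom` factor is an assumed safety margin, because TensorRT's real footprint also includes workspace and other runtime allocations that this does not model.

```python
from math import prod

BYTES_PER_ELEMENT = {"fp32": 4, "fp16": 2, "int8": 1}


def tensor_bytes(shape, dtype):
    """Bytes needed to store one dense tensor of the given shape and type."""
    return prod(shape) * BYTES_PER_ELEMENT[dtype]


def estimate_bytes(shapes, dtype):
    return sum(tensor_bytes(s, dtype) for s in shapes)


def fits_in_budget(shapes, dtype, budget_bytes, headroom=1.2):
    """ASSUMPTION: `headroom` is an arbitrary safety margin, not a TensorRT figure."""
    return estimate_bytes(shapes, dtype) * headroom <= budget_bytes


# Hypothetical: one conv weight tensor plus one large activation tensor.
shapes = [(64, 3, 7, 7), (32, 64, 112, 112)]
need = estimate_bytes(shapes, "fp16")
print(f"~{need / 2**20:.1f} MiB before workspace and runtime overhead")
print("fits in 1 GiB:", fits_in_budget(shapes, "fp16", 2**30))
```

If the estimate alone is close to the available memory, reducing batch size or precision is usually necessary before any tuning.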

4. Incorrect Tensor Dimensions

TensorRT requires precise input and output dimensions to build and execute an engine. If the shape of the data fed to the engine does not match what the engine expects, inference fails with runtime errors. For inputs with dynamic dimensions, the engine accepts only shapes within the minimum and maximum of the optimization profile it was built with, and the actual input shape must be set before inference. Make sure the specified input dimensions are consistent with the model's architecture and with the data being fed into the model.
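The check below sketches how to validate an input shape before running inference. It assumes the engine reports dynamic dimensions as -1 (as TensorRT does) and that you know the optimization profile's minimum and maximum shapes, which in the Python API are set with `IOptimizationProfile.set_shape`. The engine and profile shapes here are hypothetical.

```python
def check_input_shape(shape, engine_shape, min_shape, max_shape):
    """Return a list of problems; an empty list means the shape is acceptable.

    engine_shape uses -1 for dynamic dimensions. Static dimensions must match
    exactly; dynamic ones must lie within the profile's [min, max] range.
    """
    if len(shape) != len(engine_shape):
        return [f"rank mismatch: got {len(shape)}, engine expects {len(engine_shape)}"]
    problems = []
    for i, (d, e, lo, hi) in enumerate(zip(shape, engine_shape, min_shape, max_shape)):
        if e != -1 and d != e:
            problems.append(f"dim {i}: got {d}, engine expects {e}")
        elif e == -1 and not lo <= d <= hi:
            problems.append(f"dim {i}: got {d}, profile allows [{lo}, {hi}]")
    return problems


# Hypothetical engine: dynamic batch, fixed 3x224x224 NCHW image.
ENGINE = (-1, 3, 224, 224)
PROFILE_MIN, PROFILE_MAX = (1, 3, 224, 224), (32, 3, 224, 224)

print(check_input_shape((8, 3, 224, 224), ENGINE, PROFILE_MIN, PROFILE_MAX))
print(check_input_shape((64, 3, 224, 224), ENGINE, PROFILE_MIN, PROFILE_MAX))
print(check_input_shape((8, 224, 224, 3), ENGINE, PROFILE_MIN, PROFILE_MAX))  # NHWC data
```

The last call shows how a layout mix-up (NHWC data fed to an NCHW engine) surfaces as mismatched static dimensions rather than a subtle accuracy problem.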

5. Unsupported Layer and Plugin Challenges

Some models contain layers that TensorRT does not support natively. In these cases, you can implement a custom plugin to handle such layers. Developing and integrating plugins, however, requires a thorough understanding of both the layer's semantics and TensorRT's execution environment, since a plugin must, among other things, report its output shapes to the builder and implement the layer's computation on the GPU. Careful implementation and testing are needed to ensure plugins work seamlessly with the rest of the model.
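One concrete part of plugin work is shape inference: a plugin must tell the builder what output shape it produces for a given input shape. The plain-Python sketch below shows the kind of rule involved for a hypothetical layer, called `BlockFold` here, that folds each `block x block` spatial patch of an NCHW tensor into the channel dimension. In a real plugin this logic would live in the plugin's shape-inference method (for example, `getOutputDimensions` in `IPluginV2DynamicExt`).

```python
def block_fold_output_shape(input_shape, block):
    """Output shape of a HYPOTHETICAL 'BlockFold' layer on an NCHW input.

    Each block x block spatial patch moves into the channel dimension, so
    C grows by block**2 while H and W shrink by block. The batch dimension
    passes through unchanged, so a dynamic batch (-1) stays dynamic.
    """
    n, c, h, w = input_shape
    if block < 1 or h % block or w % block:
        raise ValueError(f"H={h} and W={w} must be divisible by block={block}")
    return (n, c * block * block, h // block, w // block)


print(block_fold_output_shape((-1, 3, 8, 8), 2))
```

Rejecting shapes the kernel cannot handle at this stage turns a potential out-of-bounds GPU access into a clear build-time error.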

Fixes and Best Practices

1. Thorough Model Analysis

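Before converting a model to TensorRT, analyze it thoroughly to confirm compatibility. NVIDIA's TensorRT support matrix and the operator support list for TensorRT's ONNX parser help identify which layers and operations are supported, and NVIDIA's Polygraphy toolkit can inspect and sanitize ONNX models. Additionally, consider simplifying the model, for example by folding constant subexpressions and removing unnecessary operations, since extra complexity can complicate the conversion process.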
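Constant folding is one common way to simplify a graph before conversion: any node whose inputs are all known constants can be evaluated once, ahead of time, and removed from the graph. The toy pass below sketches the idea on a hypothetical in-memory graph that only understands `Add` and `Mul`; real tools operate on ONNX graphs and handle many more operators.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str                  # name of the node's output value
    op: str                    # operator type, e.g. "Add"
    inputs: list = field(default_factory=list)


# Only these two ops are evaluated in this toy pass.
EVALUATORS = {"Add": lambda a, b: a + b, "Mul": lambda a, b: a * b}


def fold_constants(nodes, constants):
    """Evaluate nodes whose inputs are all constants; keep the rest.

    `nodes` must be topologically sorted. Returns the remaining nodes and a
    dict of every known constant value, including newly folded ones.
    """
    values = dict(constants)
    remaining = []
    for node in nodes:
        if node.op in EVALUATORS and all(i in values for i in node.inputs):
            values[node.name] = EVALUATORS[node.op](*(values[i] for i in node.inputs))
        else:
            remaining.append(node)
    return remaining, values


# y = x + (w * s), where w and s are constants and x is a runtime input.
graph = [Node("ws", "Mul", ["w", "s"]), Node("y", "Add", ["x", "ws"])]
remaining, values = fold_constants(graph, {"w": 2.0, "s": 3.0})
print([n.name for n in remaining], values["ws"])
```

The `Mul` node disappears and its result becomes a constant input to `Add`, leaving one fewer operation for the converter to handle.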

2. Precision Calibration

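For models using INT8 precision, calibrate with a representative dataset so that TensorRT can choose scale factors that preserve accuracy. Calibration does not retrain the model; it runs sample inputs through the network and measures the distribution of each activation tensor. TensorRT provides calibrator interfaces (for example, entropy and min-max calibrators) that help streamline this process.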
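Calibration essentially chooses a range for each tensor from sample data, and the choice matters. The sketch below compares taking the absolute maximum with taking a high percentile of the observed magnitudes, on synthetic batches containing a single outlier. It is not TensorRT's algorithm (the built-in calibrators use their own methods, such as entropy minimization); it only shows why one outlier can inflate the range, and therefore the INT8 step size, when the maximum is used.

```python
import math


def nearest_rank_percentile(values, p):
    """Nearest-rank percentile for 0 < p <= 100."""
    ordered = sorted(values)
    k = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[k - 1]


def calibration_range(batches, method="max", p=99.9):
    """Pick a symmetric range from the magnitudes seen across all batches."""
    magnitudes = [abs(v) for batch in batches for v in batch]
    if method == "max":
        return max(magnitudes)
    return nearest_rank_percentile(magnitudes, p)


# Synthetic "representative" data in [-1, 1) plus a single outlier.
batches = [[(i % 200 - 100) / 100 for i in range(500)] for _ in range(4)]
batches.append([50.0])

for method in ("max", "percentile"):
    r = calibration_range(batches, method)
    print(f"{method}: range={r}, INT8 step={r / 127:.5f}")
```

With the maximum, the step size grows fifty-fold, so most ordinary activations collapse onto a handful of INT8 levels; this is why the calibration data should reflect real inputs rather than a few extreme cases.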

3. Optimize GPU Utilization

Ensure that the computing environment is suited to TensorRT execution. This includes selecting a GPU with enough memory and compute power for the model's requirements. Monitor GPU usage (for example, with nvidia-smi) and adjust resource allocation, such as batch size or the builder's workspace memory limit, as needed to maintain performance.
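One lightweight way to monitor GPU memory from a deployment script is to query `nvidia-smi` in CSV mode and parse the result. The sketch below uses the `--query-gpu` and `--format=csv,noheader,nounits` options, with which memory values are reported in MiB. The parsing is kept separate from the subprocess call so it can be tried without a GPU, and the sample output is made up.

```python
import subprocess

# nvidia-smi options: one CSV line per GPU, memory values in MiB.
QUERY = ["nvidia-smi", "--query-gpu=index,memory.used,memory.total",
         "--format=csv,noheader,nounits"]


def parse_gpu_memory(csv_text):
    """Turn 'index, used, total' lines into dicts with free memory in MiB."""
    gpus = []
    for line in csv_text.strip().splitlines():
        index, used, total = (int(part.strip()) for part in line.split(","))
        gpus.append({"index": index, "used_mib": used, "total_mib": total,
                     "free_mib": total - used})
    return gpus


def query_gpu_memory():
    """Run nvidia-smi (requires an NVIDIA driver) and parse its output."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_memory(result.stdout)


# Made-up sample output for two GPUs, so the parser can be tried anywhere.
sample = "0, 1024, 16384\n1, 15000, 16384\n"
for gpu in parse_gpu_memory(sample):
    print(gpu)
```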

4. Dimension Verification

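Before deploying a model, verify that all tensor dimensions are defined correctly and consistently across the model architecture and the TensorRT engine, including dynamic dimensions, the optimization profile ranges, and the memory layout of the input data (for example, NCHW versus NHWC). This step prevents many runtime errors caused by dimension mismatches.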
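A related check is to confirm that each allocated buffer matches its binding's resolved shape and data type before launching inference; a buffer sized for the wrong dtype or for a stale shape is a common source of corrupted outputs or memory errors. The binding names, shapes, and byte counts below are hypothetical, and the dtype table covers only a few types.

```python
from math import prod

ITEMSIZE = {"float32": 4, "float16": 2, "int32": 4, "int8": 1}


def expected_nbytes(shape, dtype):
    """Bytes required for a binding once all dynamic dims are resolved."""
    if any(d < 0 for d in shape):
        raise ValueError(f"shape {shape} still has dynamic dims; set the input shape first")
    return prod(shape) * ITEMSIZE[dtype]


def verify_buffers(bindings, buffers):
    """Compare allocated sizes (bytes) against each binding's shape and dtype."""
    problems = []
    for name, (shape, dtype) in bindings.items():
        need = expected_nbytes(shape, dtype)
        have = buffers.get(name)
        if have is None:
            problems.append(f"{name}: no buffer allocated")
        elif have != need:
            problems.append(f"{name}: buffer has {have} bytes, expected {need}")
    return problems


# Hypothetical engine I/O: FP16 input, FP32 output, batch of 8.
bindings = {"input": ((8, 3, 224, 224), "float16"), "logits": ((8, 1000), "float32")}
# The input buffer was mistakenly sized for float32.
buffers = {"input": 8 * 3 * 224 * 224 * 4, "logits": 8 * 1000 * 4}
print(verify_buffers(bindings, buffers))
```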

5. Effective Use of Plugins

If your model includes unsupported layers, invest time in developing robust custom plugins. Use NVIDIA's plugin examples and documentation to guide their creation and integration. Test thoroughly, for example by comparing each plugin's outputs with a reference implementation of the original layer, to ensure these custom components work as intended without introducing new issues.
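A simple way to test a plugin is to compare its outputs against a trusted reference implementation over a range of inputs, within explicit tolerances. In the sketch below, the exact GELU stands in for the reference and the common tanh approximation of GELU stands in for a hypothetical plugin's output. The tolerance values are assumptions you would tune to the precision the plugin actually runs in.

```python
import math


def gelu_reference(x):
    """Exact GELU, x * Phi(x), via the error function."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu_candidate(x):
    """Stand-in for a plugin's output: the tanh approximation of GELU."""
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))


def allclose(actual, expected, rtol=1e-3, atol=1e-3):
    """Elementwise |a - e| <= atol + rtol * |e|, in the style of numpy.allclose."""
    return len(actual) == len(expected) and all(
        abs(a - e) <= atol + rtol * abs(e) for a, e in zip(actual, expected))


def max_abs_error(actual, expected):
    return max(abs(a - e) for a, e in zip(actual, expected))


inputs = [i / 10 for i in range(-60, 61)]  # sweep [-6, 6], including 0
expected = [gelu_reference(x) for x in inputs]
actual = [gelu_candidate(x) for x in inputs]
print("max abs error:", max_abs_error(actual, expected))
print("within tolerance:", allclose(actual, expected))
```

Reporting the maximum error alongside the pass/fail result makes it easier to see how much margin a plugin has when you later tighten tolerances or switch precisions.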

Conclusion

Debugging TensorRT engine failures requires a structured approach that involves understanding common pitfalls and employing effective fixes. By addressing model conversion issues, precision mismatches, resource allocation, tensor dimension correctness, and unsupported layers, developers can enhance the reliability and performance of their TensorRT deployments. With careful planning and execution, TensorRT can significantly accelerate deep learning inference, making it a valuable asset for AI applications in production environments.

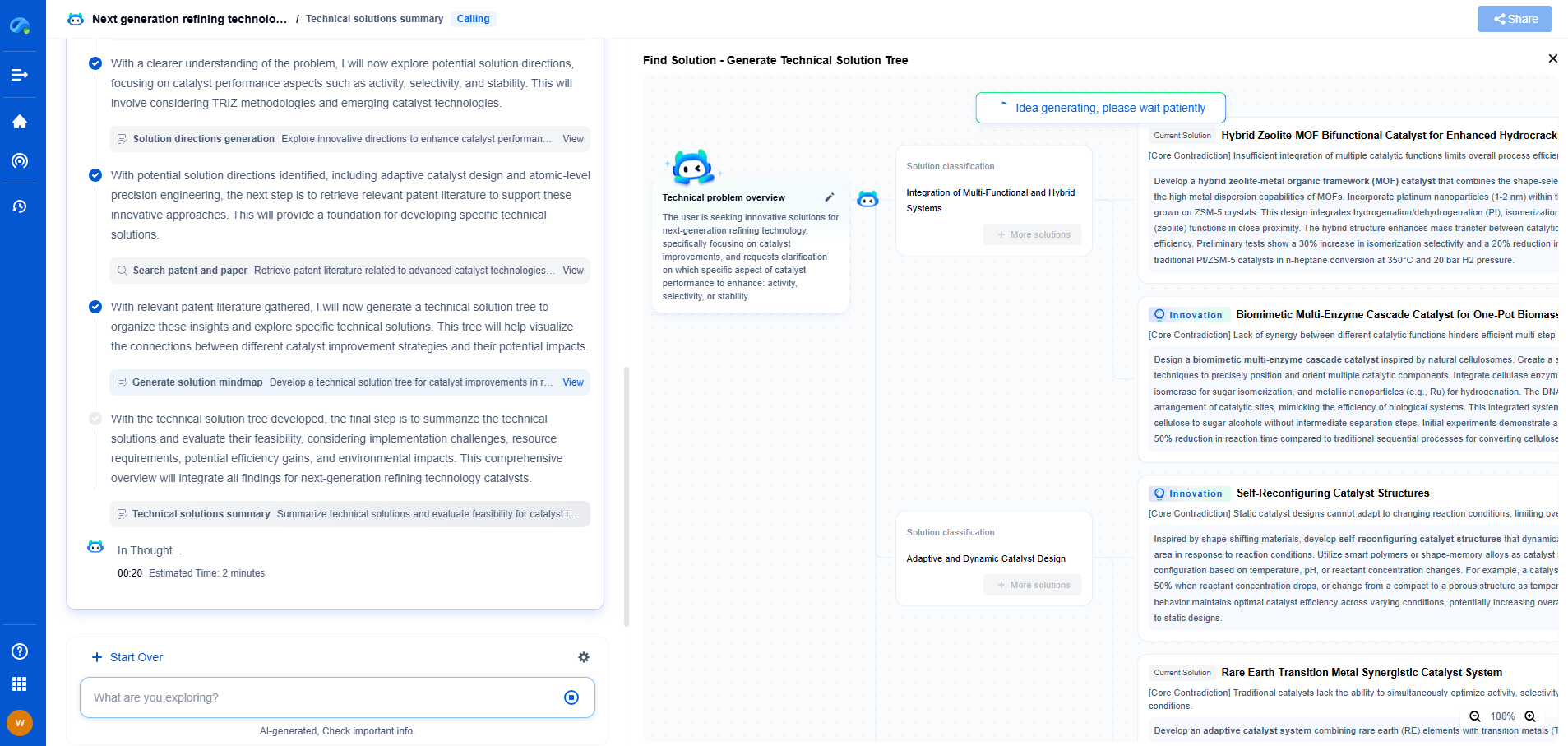

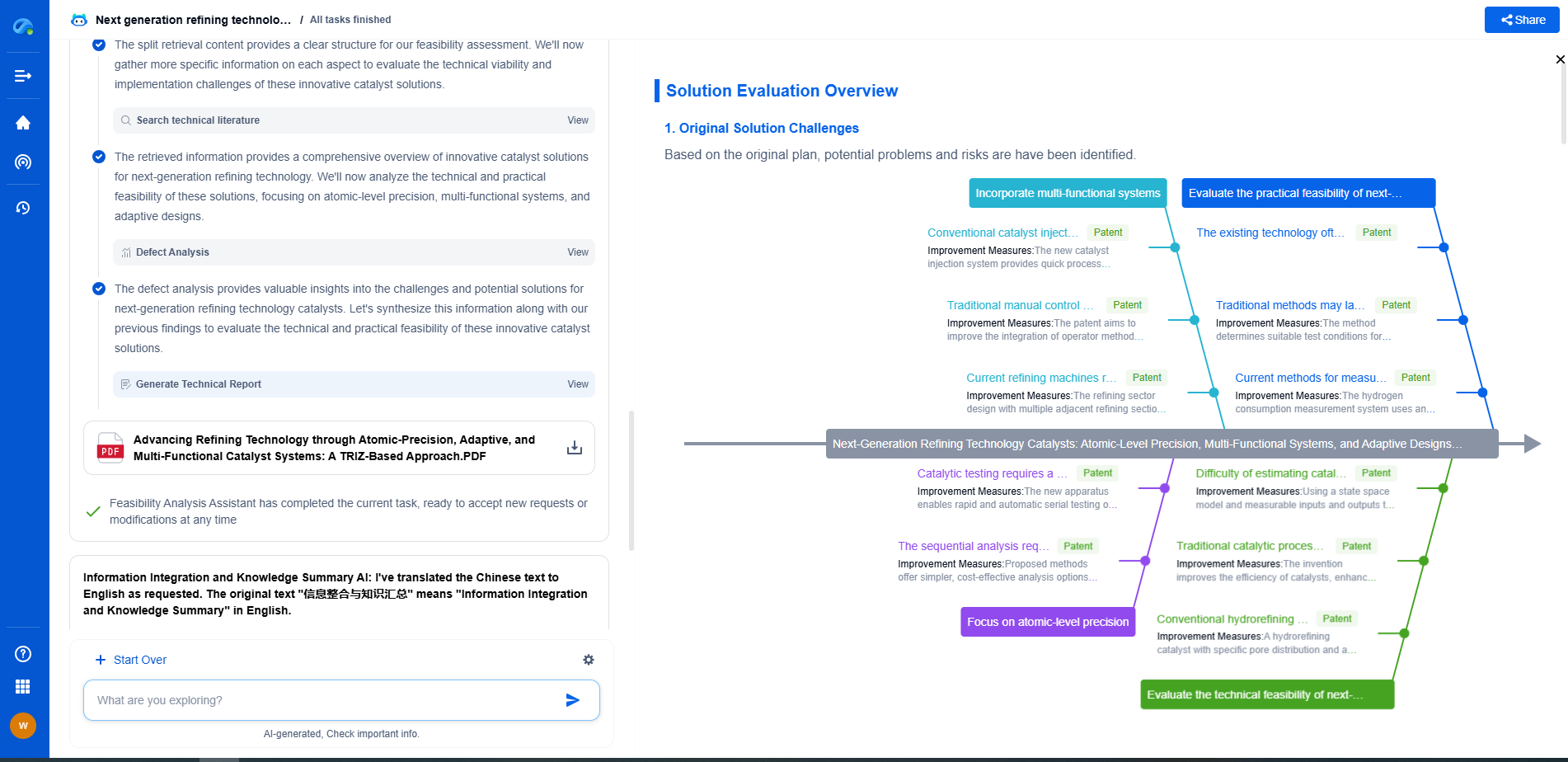
