Deploying ViT-Based Models on Edge Devices: What You Need to Know

JUL 10, 2025

Vision Transformers (ViTs) have emerged as a powerful architecture for computer vision tasks, offering strong accuracy and flexibility. They adapt the transformer architecture, originally developed for natural language processing, to images by splitting each image into patches and processing the patches as a sequence of tokens. However, deploying ViT-based models on edge devices presents distinct challenges and opportunities. Edge devices have limited compute, memory, and power, so integrating a model as demanding as a ViT requires careful planning.

Understanding the Challenges of Deploying ViTs on Edge Devices

1. Computational Complexity and Resource Constraints:

ViTs generally require significant computational power. Self-attention compares every image patch with every other patch, so its cost grows quadratically with the number of patches, and standard ViT variants carry tens to hundreds of millions of parameters. Edge devices, such as mobile phones, IoT devices, and embedded systems, typically lack the processing power and memory capacity found in cloud-based systems. This disparity makes model optimization necessary before deployment.
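To make the scale concrete, the sketch below counts parameters and tokens for a plain ViT classifier. The default configuration (224x224 input, 16x16 patches, 12 layers, hidden size 768, MLP size 3072, 1000 classes) is an assumption based on the commonly published ViT-Base/16 setup, not a value from a specific deployment, and the function name is illustrative.

```python
def vit_footprint(image_size=224, patch_size=16, channels=3, dim=768,
                  depth=12, heads=12, mlp_dim=3072, num_classes=1000):
    """Rough parameter and attention-size estimate for a plain ViT classifier."""
    num_patches = (image_size // patch_size) ** 2
    tokens = num_patches + 1  # +1 for the class token

    patch_embed = patch_size * patch_size * channels * dim + dim
    cls_and_pos = dim + tokens * dim            # class token + position embeddings
    attention = 4 * (dim * dim + dim)           # Q, K, V and output projections
    mlp = dim * mlp_dim + mlp_dim + mlp_dim * dim + dim
    layer_norms = 2 * 2 * dim                   # two LayerNorms per block
    per_layer = attention + mlp + layer_norms
    final_norm = 2 * dim
    head = dim * num_classes + num_classes

    params = patch_embed + cls_and_pos + depth * per_layer + final_norm + head
    return {
        "tokens": tokens,
        "params": params,
        "fp32_megabytes": params * 4 / 1e6,     # 4 bytes per FP32 weight
        "attention_scores_per_layer": heads * tokens * tokens,
    }

stats = vit_footprint()
print(stats)
```

Under these assumptions the weights alone occupy several hundred megabytes in FP32. Calling `vit_footprint(image_size=448)` shows the quadratic effect: doubling the input resolution quadruples the patch count and raises the attention score count per layer by roughly a factor of 16.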

2. Power Consumption:

Running ViT models on edge devices can lead to high power consumption, which is a critical concern, especially for battery-powered devices. Efficient energy management is crucial to maintaining device usability and longevity.
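As a rough way to reason about battery impact, energy per inference is average power multiplied by inference time. The sketch below applies that arithmetic; the function name and all input numbers are hypothetical placeholders for illustration, not measurements from any device.

```python
def inferences_per_charge(avg_power_watts, latency_seconds, battery_watt_hours):
    """Estimate energy per inference (joules) and inferences per full battery."""
    energy_per_inference = avg_power_watts * latency_seconds  # J = W * s
    battery_joules = battery_watt_hours * 3600.0              # 1 Wh = 3600 J
    return energy_per_inference, battery_joules / energy_per_inference

# Hypothetical numbers for illustration only, not measurements.
energy_j, count = inferences_per_charge(
    avg_power_watts=2.0, latency_seconds=0.05, battery_watt_hours=10.0
)
print(f"{energy_j:.3f} J per inference, about {count:,.0f} inferences per charge")
```

This estimate ignores idle power, the display, radios, and thermal throttling, so it is an upper bound on what a real device would achieve.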

3. Latency Requirements:

For real-time applications, such as augmented reality or autonomous navigation, low latency is essential. The inherent complexity of ViT models could introduce delays that impact performance in time-sensitive scenarios.
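Latency is best judged from measurements on the target device, looking at tail values as well as the median. The following is a minimal timing harness using only the Python standard library; `fake_inference` is a stand-in workload, and the warm-up and iteration counts are arbitrary assumptions.

```python
import statistics
import time

def measure_latency(run_inference, warmup=5, iterations=50):
    """Time a zero-argument callable and return latency statistics in milliseconds."""
    for _ in range(warmup):  # warm-up runs absorb one-time costs (caches, lazy init)
        run_inference()
    samples = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_inference()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    p95_index = min(len(samples) - 1, int(0.95 * len(samples)))
    return {
        "p50_ms": statistics.median(samples),
        "p95_ms": samples[p95_index],
        "max_ms": samples[-1],
    }

# Stand-in workload; replace with a call into the real model runtime.
def fake_inference():
    sum(i * i for i in range(10_000))

print(measure_latency(fake_inference))
```

For a real-time application, the 95th-percentile value is usually the number to compare against the frame or control-loop budget, since occasional slow inferences are what users notice.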

Strategies for Optimizing ViTs for Edge Deployment

1. Model Pruning:

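Pruning reduces the number of parameters in a model by removing the least important ones, either individual weights (unstructured pruning) or whole units such as attention heads or MLP channels (structured pruning). It can significantly decrease model size and computational requirements, often with little loss of accuracy when the pruned model is fine-tuned afterward. Unstructured sparsity shrinks the stored model but usually speeds up inference only on runtimes or hardware that exploit sparse weights, so structured pruning is often the more practical choice on edge devices.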
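One simple pruning criterion is weight magnitude: the weights closest to zero are assumed to matter least. The sketch below shows unstructured magnitude pruning on a small matrix in plain Python. It illustrates the idea only; a real ViT would be pruned with a framework's pruning utilities and then fine-tuned, and the function name and example values are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest absolute value.

    weights: list of rows (lists of floats); sparsity: fraction in [0, 1).
    Returns a new matrix; the input is left unchanged. Ties at the threshold
    are all pruned, so slightly more than the requested fraction may be zeroed.
    """
    flat = sorted(abs(w) for row in weights for w in row)
    num_to_prune = int(sparsity * len(flat))
    if num_to_prune == 0:
        return [row[:] for row in weights]
    threshold = flat[num_to_prune - 1]
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in weights]

layer = [[0.8, -0.05, 0.3],
         [0.01, -0.6, 0.2]]
pruned = magnitude_prune(layer, sparsity=0.5)
print(pruned)
```

Half of the entries are now zero, but the matrix keeps its shape, which is why unstructured pruning alone does not reduce the work done by a dense matrix multiply.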

2. Quantization:

Quantization reduces the numerical precision of the model's weights, and often its activations, typically from 32-bit floating-point numbers to 8-bit integers. This cuts the memory needed for weights by roughly a factor of four and speeds up inference on devices with hardware support for integer operations.
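The core arithmetic can be sketched in a few lines. The example below uses symmetric per-tensor quantization, which is one common scheme among several (per-channel and asymmetric variants also exist); the function names and sample weights are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map integer codes back to approximate float values."""
    return [v * scale for v in q]

weights = [0.42, -1.27, 0.003, 0.9, -0.55]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored as one byte plus a single shared scale, and the rounding error per weight is at most half the scale. Small weights such as `0.003` collapse to zero, which is one reason quantized models are normally validated against a held-out set before deployment.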

3. Knowledge Distillation:

This technique involves training a smaller, more efficient 'student' model to replicate the behavior of a larger 'teacher' model. By leveraging the knowledge embedded in the larger ViT model, the student model can achieve competitive performance while being more suitable for edge deployment.
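A common way to express "replicate the behavior" is a soft-target loss: the student is penalized for diverging from the teacher's temperature-softened output distribution. The sketch below computes that term for a single example in plain Python. The temperature value and the logits are hypothetical, and in practice this term is usually combined with an ordinary cross-entropy loss on the true labels.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=4.0):
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        p * (math.log(p) - math.log(q))
        for p, q in zip(p_teacher, p_student)
        if p > 0
    )
    return kl * temperature ** 2

teacher = [2.0, 1.0, 0.1]
print(distillation_loss([2.0, 1.0, 0.1], teacher))  # student matches teacher
print(distillation_loss([0.1, 1.0, 2.0], teacher))  # student disagrees
```

The loss is zero when the student reproduces the teacher's logits and grows as the two distributions diverge. A higher temperature spreads probability over more classes, exposing more of the teacher's relative preferences to the student.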

4. Efficient Model Architectures:

Some architectures are designed specifically for efficiency. MobileViT, for example, combines transformer blocks with the lightweight convolutional blocks used in mobile networks. Exploring such architectures can provide a balance between performance and resource usage.

Implementing ViT-Based Models on Edge Devices

1. Hardware Considerations:

Choosing the right hardware is fundamental to successful deployment. Devices with dedicated AI accelerators or GPUs provide extra compute for inference, while CPU-only devices typically require more aggressive model compression.

2. Software Frameworks:

Use frameworks such as TensorFlow Lite (renamed LiteRT in 2024), ONNX Runtime, or PyTorch Mobile (succeeded by ExecuTorch), which are designed to support model deployment on edge devices. These tools offer model optimization features, such as quantization, and provide runtime environments tailored to low-power hardware.

3. Edge AI Platforms:

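Platforms such as NVIDIA Jetson or Google's Coral can simplify the deployment process. They bundle hardware accelerators with SDKs and optimized inference libraries, which can speed up development and integration of ViT models on edge devices. Before committing to a platform, confirm that its toolchain supports the operators and numeric precision your model uses.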

Best Practices for Deployment

1. Continuous Monitoring and Updating:

Once deployed, continuously monitor the model's performance and update it as necessary. Monitoring reveals when changing environments or data distributions degrade accuracy, and timely updates keep the model effective over time.

2. Balancing Accuracy and Efficiency:

While optimizing for resource constraints, it's crucial to balance model accuracy against efficiency. Application requirements should guide optimization decisions, so that accuracy and latency targets are met without excessive resource consumption.

3. Security Considerations:

Deploying AI models on edge devices introduces potential security vulnerabilities. Implementing secure model deployment practices, such as encryption and secure boot mechanisms, can protect against unauthorized access and tampering.
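Encryption and secure boot depend on the platform, but one small, portable complement is an integrity check on the model file before loading it. The sketch below is an assumption about how such a check might look, not a substitute for the mechanisms above: it compares the file's SHA-256 digest to an expected value, which must itself come from a trusted source such as a signed manifest. The function names are hypothetical.

```python
import hashlib
import hmac

def sha256_of_file(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large models need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def model_is_untampered(path, expected_sha256_hex):
    """Return True only if the file's digest matches the trusted expected digest."""
    actual = sha256_of_file(path)
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(actual, expected_sha256_hex.lower())
```

A device would call `model_is_untampered(model_path, expected_digest)` at startup and refuse to load the model when it returns `False`. This detects accidental corruption and naive tampering; it does not protect against an attacker who can also replace the expected digest.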

Conclusion

Deploying ViT-based models on edge devices requires a meticulous approach to overcome the challenges of computational complexity, power consumption, and latency. By employing strategies such as model pruning, quantization, and leveraging efficient model architectures, it's possible to harness the power of Vision Transformers even in resource-constrained environments. With careful planning and implementation, ViTs can be successfully integrated into edge applications, paving the way for advanced AI functionalities across diverse domains.

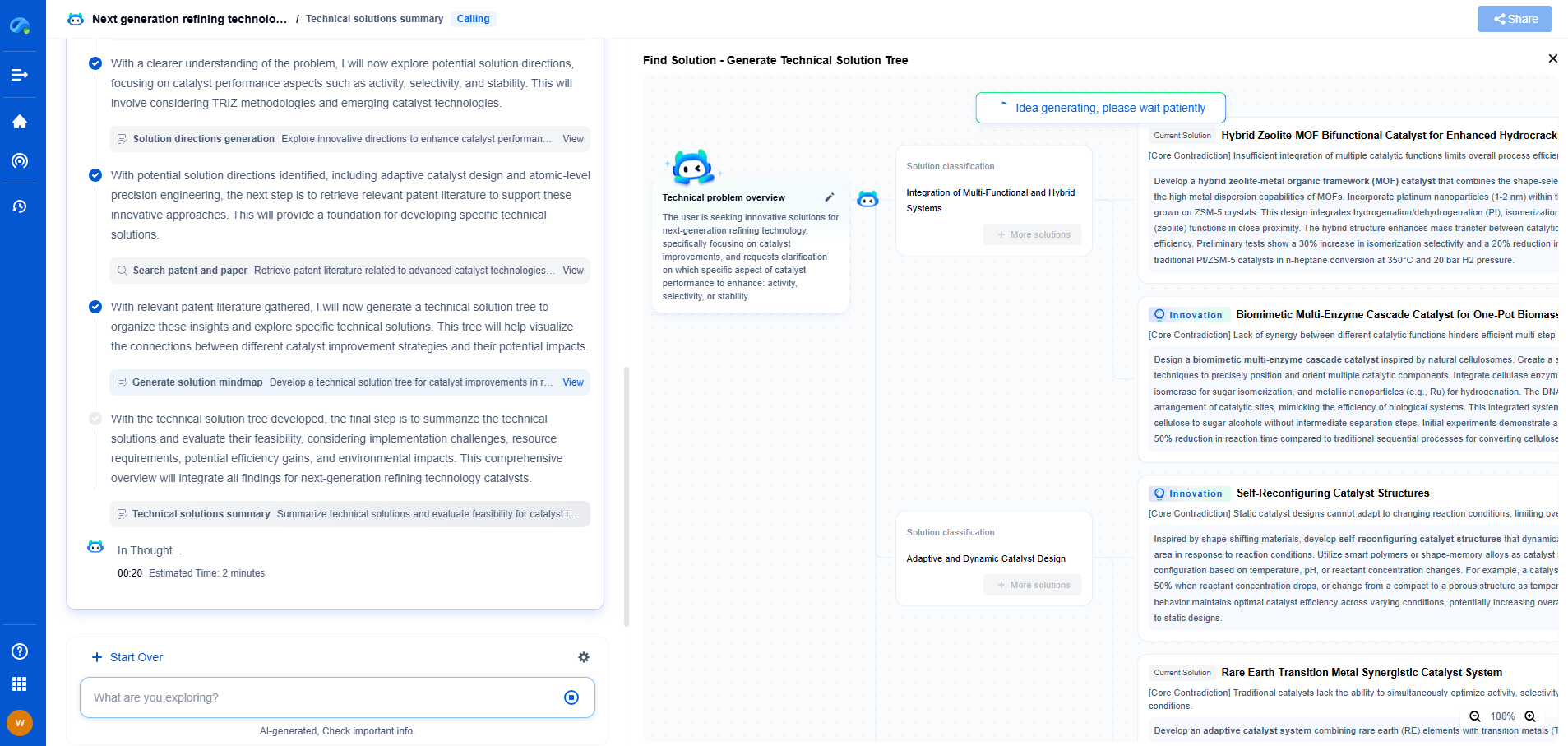

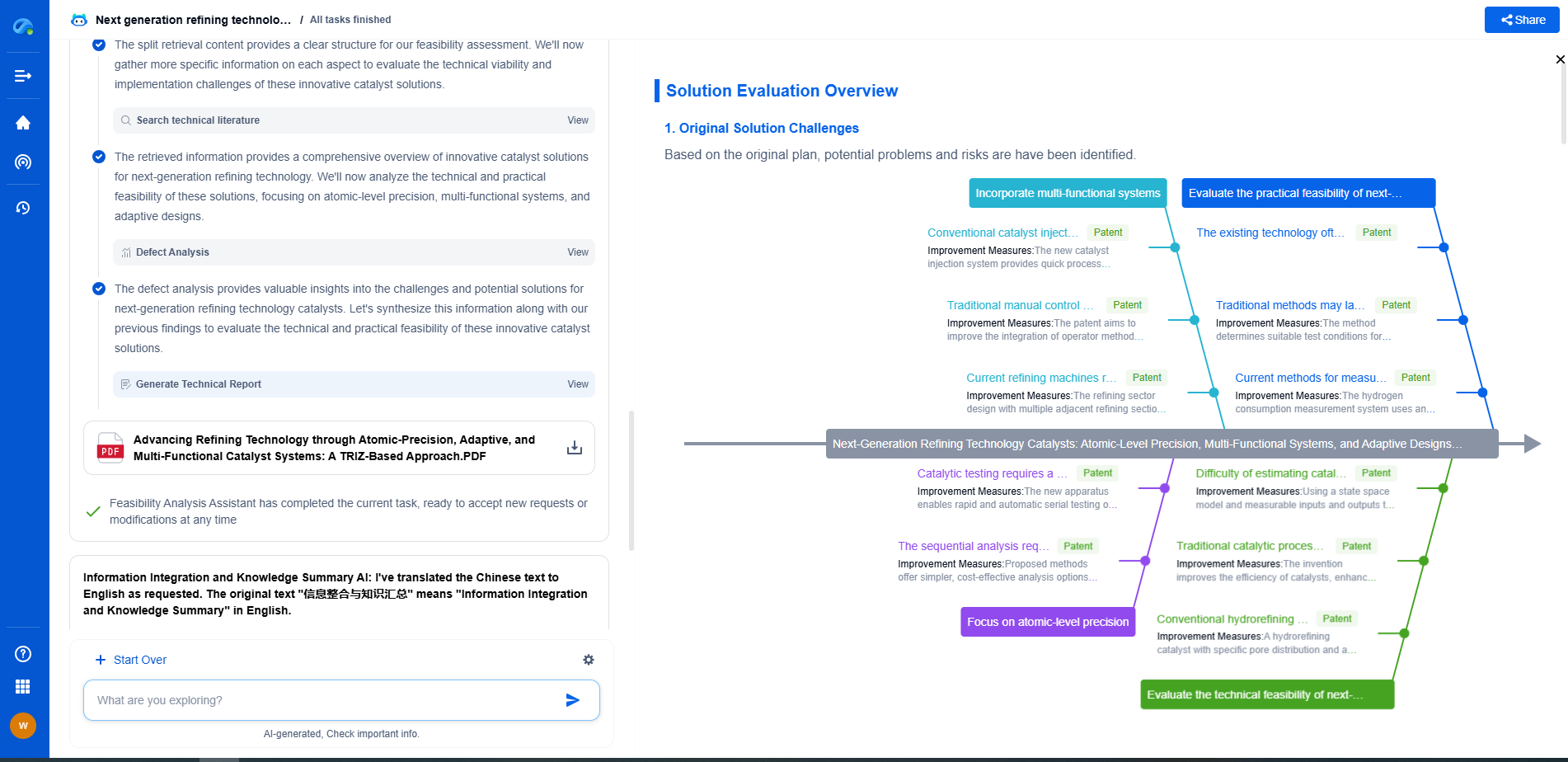
