DICOM Metadata Extraction at Scale: Performance Optimization

JUL 10, 2025

DICOM (Digital Imaging and Communications in Medicine) is the standard format for storing and transmitting medical images. A DICOM file encapsulates not only the image data but also a wealth of metadata crucial for clinical and operational purposes. As healthcare organizations rely increasingly on large-scale imaging operations, efficient extraction and processing of that metadata has become pivotal. This post covers strategies for optimizing performance when extracting DICOM metadata at scale.

Understanding the Importance of Metadata

Before diving into performance optimization, it's essential to comprehend the significance of DICOM metadata. Metadata in DICOM files includes patient demographics, study descriptions, modality information, imaging parameters, and more. This metadata is vital for various applications, including patient management, clinical workflows, research studies, and quality assurance. Efficient extraction of this data ensures timely access to critical information, ultimately enhancing healthcare delivery.

Challenges of Scaling DICOM Metadata Extraction

Scaling the extraction of DICOM metadata presents several challenges. First and foremost is the sheer volume of data. Hospitals and imaging centers generate thousands of DICOM files daily, demanding swift and scalable extraction processes. Additionally, DICOM files can vary in size and complexity, with nested sequences and private tags posing further complications. Network latency, storage bottlenecks, and processing overhead also contribute to the complexity of scaling metadata extraction.
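
The two structural complications mentioned above, nested sequences and private tags, can be made concrete with a small sketch. It assumes a parsed file is modeled as a plain dict keyed by (group, element) tuples, with a sequence represented as a list of nested dicts. That model and the function names are illustrative and not any library's API. The odd-group test relies on the DICOM convention that private elements use odd group numbers.

```python
from typing import Any, Dict, Iterator, Tuple

Tag = Tuple[int, int]        # (group, element)
Dataset = Dict[Tag, Any]     # hypothetical in-memory model of a parsed file


def is_private(tag: Tag) -> bool:
    """Private data elements use odd group numbers in DICOM."""
    return tag[0] % 2 == 1


def walk(ds: Dataset, path: Tuple[Tag, ...] = ()) -> Iterator[Tuple[Tuple[Tag, ...], Any]]:
    """Yield (tag path, value) for every leaf element, descending into sequences."""
    for tag, value in ds.items():
        here = path + (tag,)
        if isinstance(value, list) and all(isinstance(v, dict) for v in value):
            # A sequence: each item is itself a dataset, so recurse into it.
            for item in value:
                yield from walk(item, here)
        else:
            yield here, value


# Made-up example data, for illustration only.
example: Dataset = {
    (0x0010, 0x0010): "DOE^JANE",                        # Patient's Name
    (0x0008, 0x1115): [{(0x0020, 0x000E): "1.2.3"}],     # a sequence with one item
    (0x0009, 0x0010): "VENDOR",                          # odd group: private
}

leaves = list(walk(example))
# Drop any leaf whose path passes through a private tag.
public = [(p, v) for p, v in leaves if not any(is_private(t) for t in p)]
```

Flattening to tag paths keeps the nesting information while giving downstream steps a uniform, flat stream of elements to filter or store.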

Strategies for Performance Optimization

To address these challenges, several performance optimization strategies can be employed:

1. Parallel Processing:

Utilizing parallel processing frameworks can significantly boost metadata extraction performance. By distributing the workload across multiple processors or nodes, extraction tasks can be executed concurrently, reducing overall processing time. Frameworks like Apache Spark or Dask can be leveraged to handle large datasets efficiently.
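
On a single machine, the same idea can be sketched with Python's standard library before reaching for a cluster framework. The example below is a minimal sketch, not a production design: `extract_parallel` is a hypothetical helper, and `has_dicm_magic` is a stand-in extractor that only checks for the "DICM" marker that follows the 128-byte preamble of a DICOM Part 10 file. A real pipeline would pass in a full header parser instead.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Any, Callable, Dict, Iterable


def has_dicm_magic(path: Path) -> bool:
    """Stand-in extractor: check the 'DICM' marker after the 128-byte preamble."""
    with open(path, "rb") as f:
        f.seek(128)
        return f.read(4) == b"DICM"


def extract_parallel(
    paths: Iterable[Path],
    extract: Callable[[Path], Any],
    max_workers: int = 8,
) -> Dict[Path, Any]:
    """Apply `extract` to every path concurrently.

    A failure on one file is captured as the exception object in the result
    instead of aborting the whole batch.
    """
    def safe(path: Path) -> Any:
        try:
            return extract(path)
        except Exception as exc:  # record and keep going
            return exc

    path_list = list(paths)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so zip pairs each path with its result.
        return dict(zip(path_list, pool.map(safe, path_list)))
```

Threads suit this sketch because header reads are mostly I/O-bound. If parsing turns out to be CPU-bound, a process pool or one of the distributed frameworks mentioned above is likely a better fit.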

2. Incremental Extraction:

Implementing an incremental extraction approach allows for processing only the newly added or modified DICOM files. This reduces the need to repeatedly parse unchanged files, thereby saving valuable computational resources and time.
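
One simple way to detect new or modified files is to keep a manifest of file fingerprints between runs. The sketch below assumes that file size plus modification time is a good enough change signal for the archive in question. A content hash would be stricter but requires reading every file. The names `fingerprint` and `select_changed` are hypothetical.

```python
import os
from typing import Dict, Iterable, Tuple

Fingerprint = Tuple[int, int]  # (size in bytes, mtime in nanoseconds)


def fingerprint(path: str) -> Fingerprint:
    """Cheap change signal taken from file metadata only."""
    st = os.stat(path)
    return (st.st_size, st.st_mtime_ns)


def select_changed(
    paths: Iterable[str], manifest: Dict[str, Fingerprint]
) -> Dict[str, Fingerprint]:
    """Return {path: fingerprint} for files that are new or differ from the manifest.

    The manifest is not modified here, so the caller can commit the new
    fingerprints only after extraction has actually succeeded.
    """
    changed: Dict[str, Fingerprint] = {}
    for path in paths:
        fp = fingerprint(path)
        if manifest.get(path) != fp:
            changed[path] = fp
    return changed
```

A run would then process the keys of the returned dict and call `manifest.update(changed)` once the results are safely stored, persisting the manifest (for example as JSON or in a database) for the next run.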

3. Efficient Data Structures:

Optimizing the data structures used to store and process metadata can lead to significant improvements in performance. Choosing the right data structures, such as hash tables for quick lookups or trees for hierarchical data representation, can enhance efficiency.
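
As an illustration of both structures, the sketch below builds a hash-table index for direct lookups and a nested mapping that mirrors DICOM's study, series, and instance hierarchy. It assumes each extracted record is a plain dict keyed by standard attribute keywords. The record layout, the sample values, and the function name are illustrative.

```python
from collections import defaultdict
from typing import Dict, Iterable, List

Record = Dict[str, str]


def build_hierarchy(records: Iterable[Record]) -> Dict[str, Dict[str, List[str]]]:
    """Group instance UIDs as study -> series -> [instances]."""
    tree: Dict[str, Dict[str, List[str]]] = defaultdict(lambda: defaultdict(list))
    for rec in records:
        tree[rec["StudyInstanceUID"]][rec["SeriesInstanceUID"]].append(
            rec["SOPInstanceUID"]
        )
    return tree


# Made-up records, for illustration only.
records = [
    {"StudyInstanceUID": "S1", "SeriesInstanceUID": "S1.1", "SOPInstanceUID": "I1"},
    {"StudyInstanceUID": "S1", "SeriesInstanceUID": "S1.1", "SOPInstanceUID": "I2"},
    {"StudyInstanceUID": "S1", "SeriesInstanceUID": "S1.2", "SOPInstanceUID": "I3"},
    {"StudyInstanceUID": "S2", "SeriesInstanceUID": "S2.1", "SOPInstanceUID": "I4"},
]

# Hash table: average constant-time lookup of a record by instance UID.
by_instance = {rec["SOPInstanceUID"]: rec for rec in records}

# Tree: answers "which series and instances belong to this study?" directly.
hierarchy = build_hierarchy(records)
```

The flat index answers point queries, while the nested mapping avoids scanning every record when a whole study or series is requested.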

4. Streamlined Pipeline Design:

Designing a streamlined extraction pipeline that minimizes data movement and leverages in-memory processing can improve performance. By reducing the number of I/O operations and intermediate data storage, the pipeline becomes more efficient and faster.
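
One way to avoid intermediate storage is to chain lazy stages so that each record flows from reader to sink without being collected into a list along the way. The sketch below uses Python generators. The stage names are hypothetical, and `extract` is whatever header parser the pipeline actually uses.

```python
from typing import Any, Callable, Dict, Iterable, Iterator, Sequence

Record = Dict[str, Any]


def read_headers(
    paths: Iterable[str], extract: Callable[[str], Record]
) -> Iterator[Record]:
    """Stage 1: parse one file at a time, on demand."""
    for path in paths:
        yield extract(path)


def keep_modality(records: Iterable[Record], modality: str) -> Iterator[Record]:
    """Stage 2: filter early so later stages see fewer records."""
    for rec in records:
        if rec.get("Modality") == modality:
            yield rec


def project(records: Iterable[Record], fields: Sequence[str]) -> Iterator[Record]:
    """Stage 3: keep only the fields the consumer needs."""
    for rec in records:
        yield {name: rec.get(name) for name in fields}
```

A pipeline is then composed as `project(keep_modality(read_headers(paths, extract), "CT"), ["PatientID"])`. Nothing is read until the consumer iterates, and only one record is in flight per stage at any time.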

5. Hardware Acceleration:

Employing hardware acceleration techniques, such as utilizing GPUs for parallel processing or FPGAs for specialized tasks, can further enhance performance. These technologies offer significant speed advantages for specific computational tasks, typically compute-heavy steps such as image decompression rather than header parsing, which tends to be I/O-bound.

6. Caching and Indexing:

Implementing caching mechanisms and indexing strategies can expedite repeated access to metadata. By storing frequently accessed data in memory or on faster storage media, and by indexing the fields that queries filter on, retrieval times are reduced.
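
A minimal sketch of both ideas, using only the standard library: extracted metadata goes into a SQLite table with an index on the study UID, and an in-memory LRU cache sits in front of the lookup. The table layout, column names, and helper functions are assumptions made for illustration.

```python
import sqlite3
from functools import lru_cache
from typing import List


def open_index(db_path: str = ":memory:") -> sqlite3.Connection:
    """Create the metadata table and a secondary index on study_uid."""
    conn = sqlite3.connect(db_path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS instances ("
        "sop_uid TEXT PRIMARY KEY, study_uid TEXT NOT NULL, "
        "modality TEXT, path TEXT NOT NULL)"
    )
    conn.execute("CREATE INDEX IF NOT EXISTS idx_study ON instances (study_uid)")
    return conn


def add_instance(conn: sqlite3.Connection, sop_uid: str, study_uid: str,
                 modality: str, path: str) -> None:
    conn.execute(
        "INSERT OR REPLACE INTO instances VALUES (?, ?, ?, ?)",
        (sop_uid, study_uid, modality, path),
    )


def paths_for_study(conn: sqlite3.Connection, study_uid: str) -> List[str]:
    """Indexed lookup: avoids scanning the whole table."""
    rows = conn.execute(
        "SELECT path FROM instances WHERE study_uid = ? ORDER BY path",
        (study_uid,),
    )
    return [row[0] for row in rows]


def make_cached_lookup(conn: sqlite3.Connection, maxsize: int = 1024):
    """Wrap the lookup in an in-memory LRU cache for repeated queries."""
    @lru_cache(maxsize=maxsize)
    def lookup(study_uid: str) -> tuple:
        return tuple(paths_for_study(conn, study_uid))
    return lookup
```

The cache has to be invalidated when the index changes. In this sketch that means calling `lookup.cache_clear()` after new instances are added, otherwise repeated queries may return stale results.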

Monitoring and Maintenance

Optimizing performance is not a one-time task but an ongoing process. Regular monitoring of the extraction system is essential to identify bottlenecks and inefficiencies. Implementing comprehensive logging and analytics tools can help in diagnosing issues and ensuring the system operates at peak performance. Additionally, periodic maintenance, including software updates and hardware checks, is crucial to sustaining optimal performance.
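
Bottlenecks are easier to find when each pipeline stage reports how long it took. The sketch below is one lightweight way to do that with the standard library. `StageTimer` and the logger name are hypothetical, and a real deployment would more likely export these numbers to whatever metrics system is already in place.

```python
import logging
import time
from collections import defaultdict
from contextlib import contextmanager
from typing import Dict, Iterator, Optional

logger = logging.getLogger("dicom_extract")  # hypothetical logger name


class StageTimer:
    """Accumulate wall-clock time and call counts per named stage."""

    def __init__(self) -> None:
        self.totals: Dict[str, float] = defaultdict(float)
        self.counts: Dict[str, int] = defaultdict(int)

    @contextmanager
    def measure(self, stage: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        finally:
            # Recorded even if the stage raised, so failures are still timed.
            elapsed = time.perf_counter() - start
            self.totals[stage] += elapsed
            self.counts[stage] += 1
            logger.debug("stage=%s seconds=%.6f", stage, elapsed)

    def slowest(self) -> Optional[str]:
        """Name of the stage with the largest accumulated time, if any."""
        if not self.totals:
            return None
        return max(self.totals, key=lambda name: self.totals[name])
```

Wrapping each stage in `with timer.measure("read"):` or `with timer.measure("parse"):` and checking `timer.slowest()` after a batch gives a first indication of where optimization effort should go.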

Conclusion

DICOM metadata extraction at scale is a complex yet vital process in today's healthcare landscape. By employing a combination of parallel processing, incremental extraction, efficient data structures, streamlined pipeline design, hardware acceleration, and caching strategies, healthcare organizations can significantly enhance their metadata extraction performance. As technology continues to evolve, staying abreast of the latest advancements and continuously optimizing processes will ensure that healthcare providers can effectively manage their ever-growing imaging data landscape.

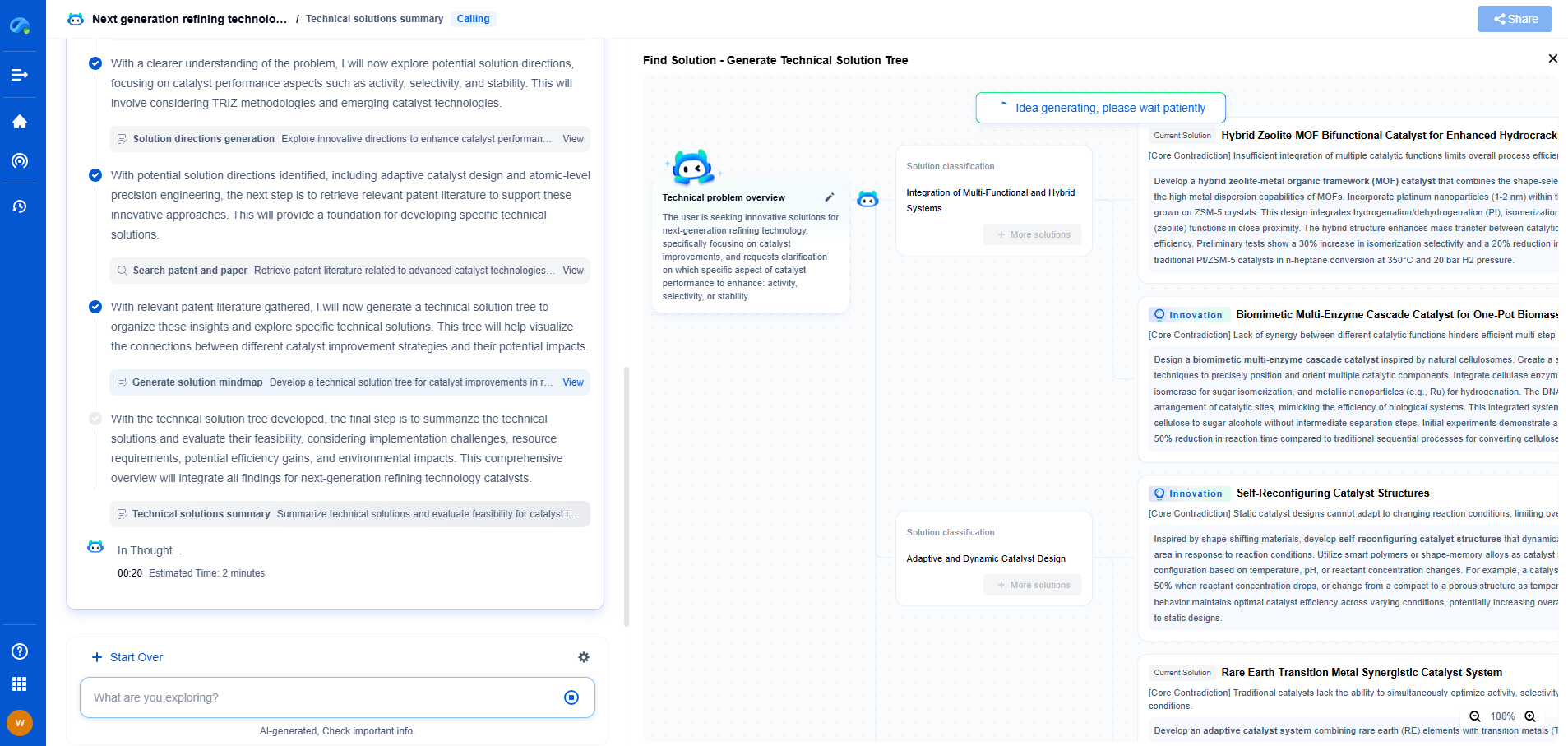

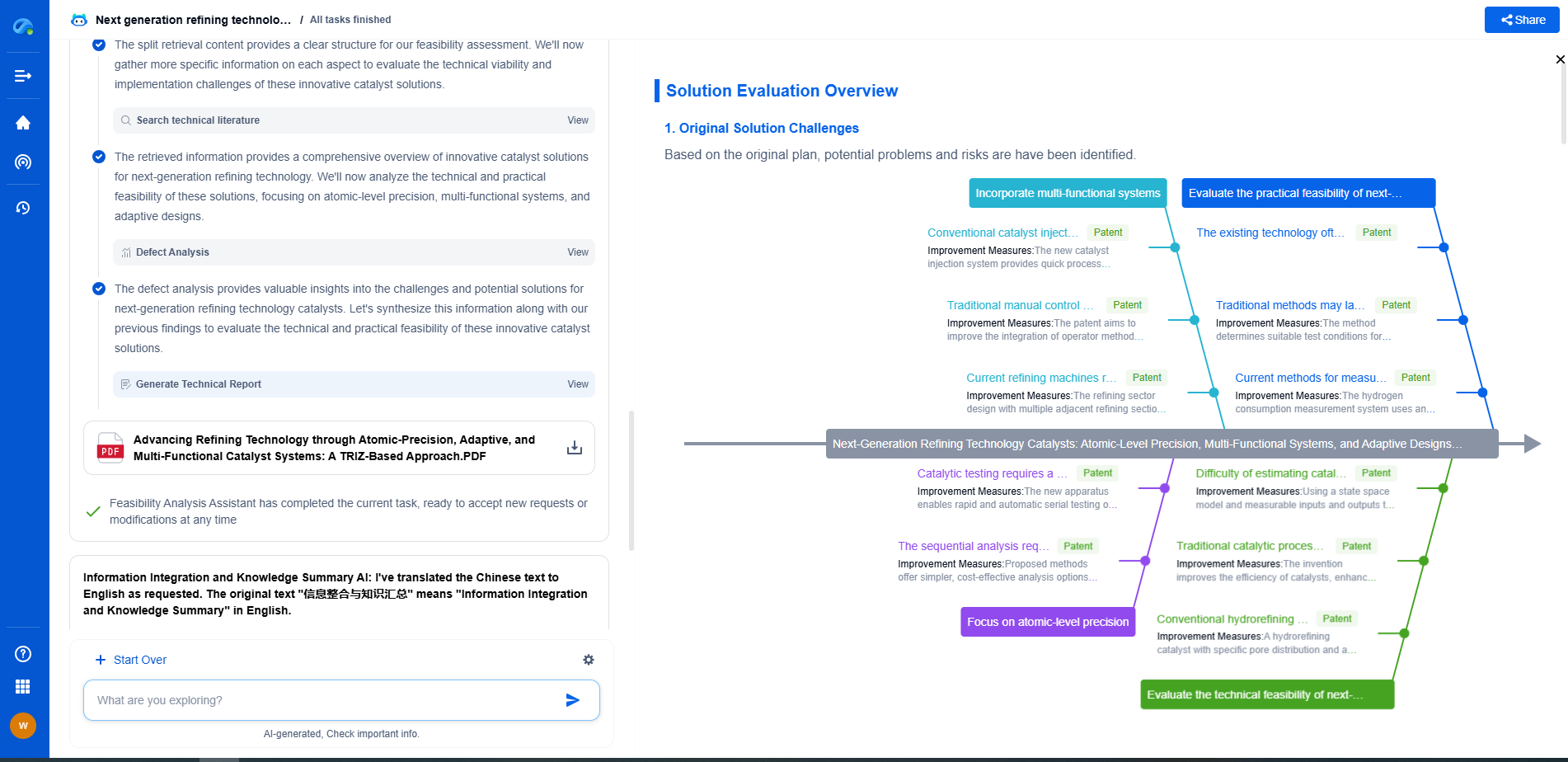
