Diffusion Models for Image Generation: Will They Replace GANs?

JUL 10, 2025

In recent years, the field of image generation has been dominated by Generative Adversarial Networks (GANs). However, a new contender has emerged: diffusion models. Both are sophisticated neural network architectures capable of generating high-quality images, but they differ fundamentally in their approach and execution. Understanding these differences is key to predicting whether diffusion models might eventually replace GANs in certain applications or even altogether.

How GANs Work

GANs, introduced by Ian Goodfellow and his colleagues in 2014, consist of two neural networks: a generator and a discriminator. The generator creates images from random noise, while the discriminator tries to tell them apart from real ones. Each network is trained against the other, and in the ideal case the process continues until the discriminator can no longer reliably distinguish generated images from real ones. GANs have been remarkably successful, used in applications ranging from art creation to data augmentation. However, they are notoriously difficult to train, often suffering from issues like mode collapse and instability.
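The adversarial objective can be sketched in plain Python. This is a minimal illustration rather than a training loop: it assumes the discriminator outputs a probability that an image is real, and it uses the non-saturating generator loss, a common variant of the original formulation. The function names and the sample probabilities are illustrative assumptions.

```python
import math

EPS = 1e-12  # keeps log() away from zero


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: push scores on real images toward 1, on fakes toward 0."""
    real_term = sum(-math.log(p + EPS) for p in d_real) / len(d_real)
    fake_term = sum(-math.log(1.0 - p + EPS) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating loss: the generator is rewarded when fakes are scored as real."""
    return sum(-math.log(p + EPS) for p in d_fake) / len(d_fake)


# A discriminator that cannot tell real from fake scores everything at 0.5.
confused = discriminator_loss([0.5, 0.5], [0.5, 0.5])

# Hypothetical discriminator scores on two batches of generated images.
easy_to_spot = generator_loss([0.01, 0.02])  # fakes are obvious: high generator loss
fooled = generator_loss([0.90, 0.95])        # fakes pass as real: low generator loss
```

The two losses pull in opposite directions on the same discriminator scores, which is one intuition for why GAN training can oscillate instead of settling.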

The Rise of Diffusion Models

Diffusion models, on the other hand, represent a newer approach to generative modeling. During training, noise is gradually added to real images and the model learns to undo that corruption. At generation time, the model starts from pure noise and transforms it into an image through a series of iterative denoising steps. The name comes from an analogy with physical diffusion, in which particles spread from regions of high concentration to regions of low concentration; generation runs a comparable process in reverse. The process is commonly formulated as a discrete Markov chain or, in continuous time, as a stochastic differential equation. This gradual procedure can offer better stability and diversity in image generation compared to GANs.
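To make the idea concrete, the sketch below shows the forward (noising) direction that a diffusion model learns to reverse. It assumes a DDPM-style formulation with a linear noise schedule, where a noisy sample at step t is sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * noise. The schedule values, function names, and the four-value "image" are illustrative assumptions, and the learned denoising network is deliberately left out.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, increasing linearly (assumed schedule)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for s <= t: the signal fraction left at step t."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


def add_noise(x0, t, alpha_bars, rng):
    """Jump straight to step t: scale the clean signal down and mix in Gaussian noise."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    return [signal_scale * v + noise_scale * rng.gauss(0.0, 1.0) for v in x0]


rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule(1000))

pixels = [0.8, -0.3, 0.1, 0.5]  # toy stand-in for an image
slightly_noisy = add_noise(pixels, 10, alpha_bars, rng)
almost_pure_noise = add_noise(pixels, 999, alpha_bars, rng)
```

By the last step almost none of the original signal remains. Generation starts from that state, pure noise, and works backward one step at a time.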

Advantages of Diffusion Models

One of the primary advantages of diffusion models over GANs is their training stability. The training of GANs can be quite sensitive, requiring careful tuning of hyperparameters and training schedules so that neither the generator nor the discriminator overpowers the other. Diffusion models, in contrast, train a single network on a fixed denoising objective with no adversary to balance, which reduces the likelihood of training collapsing.

Additionally, diffusion models excel in producing a rich diversity of outputs. Where GANs might struggle with mode collapse—where the generator outputs only a limited variety of images—diffusion models naturally sample from a broader range of possibilities, thus capturing a wider distribution of the data.

Performance and Quality

When considering image quality, diffusion models have shown impressive results, rivaling and in some cases surpassing GANs. This is especially evident in detailed textures and complex scenes, where the iterative refinement process of diffusion models can capture intricate details better. However, GANs still hold their ground when it comes to speed. A GAN produces an image in a single forward pass of the generator, whereas the iterative nature of diffusion models often makes them slower: generating a single image can require hundreds or even thousands of passes through the model.
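A back-of-the-envelope estimate shows why the number of passes matters. The sketch below assumes, purely for illustration, that one forward pass costs 20 ms for either kind of model; real costs depend on the architecture, resolution, and hardware.

```python
def sampling_latency_ms(forward_pass_ms, num_passes):
    """Time to generate one image when every network pass costs the same."""
    return forward_pass_ms * num_passes


FORWARD_PASS_MS = 20.0  # hypothetical per-pass cost, not a measurement

gan_ms = sampling_latency_ms(FORWARD_PASS_MS, 1)           # one generator pass
diffusion_ms = sampling_latency_ms(FORWARD_PASS_MS, 1000)  # one pass per denoising step
slowdown = diffusion_ms / gan_ms
```

Under these assumptions the gap scales linearly with the number of denoising steps, so cutting the step count is the most direct way to narrow it.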

Applications and Use Cases

The choice between using GANs or diffusion models largely depends on the specific requirements of a project. For applications needing rapid image generation, such as real-time video processing, GANs might still be the preferred choice. However, for tasks where image quality and diversity are paramount, diffusion models can offer a compelling alternative. Fields such as medical imaging, where precision and detail are crucial, could particularly benefit from the strengths of diffusion models.

Future Prospects

While diffusion models are gaining traction, it is unlikely that they will completely replace GANs in the near future. Instead, we might see a convergence of techniques, where the strengths of both models are combined to create hybrid systems. Researchers are already exploring ways to integrate the robustness of diffusion models with the speed of GANs, potentially leading to the next generation of image generation technologies.

Conclusion

Diffusion models present a promising avenue for image generation, bringing forth stability, diversity, and quality. While they are unlikely to fully eclipse GANs immediately, they offer a valuable alternative for applications where their advantages can be fully leveraged. As the field progresses, the interplay between GANs and diffusion models will likely continue to evolve, shaping the future of image generation in exciting ways.

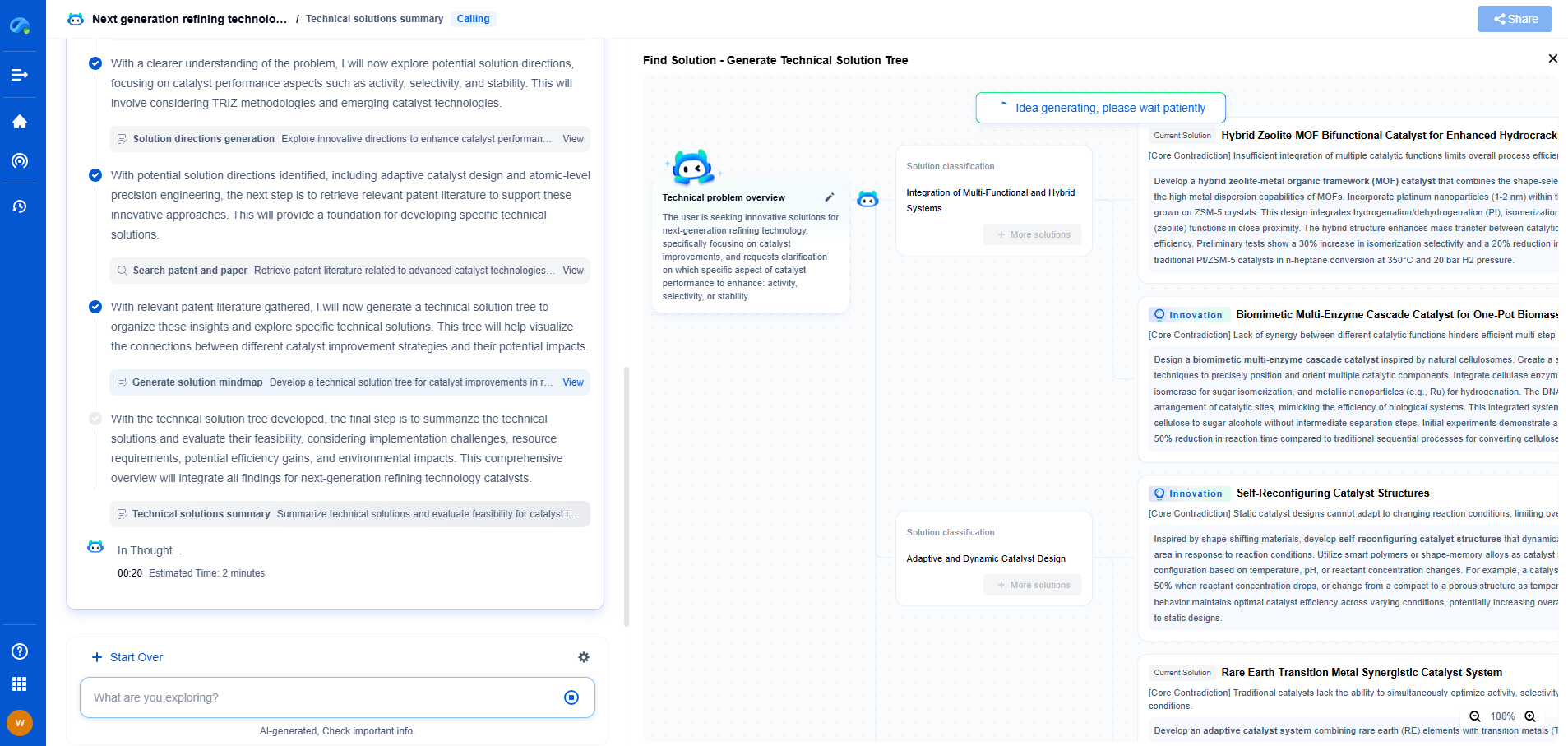

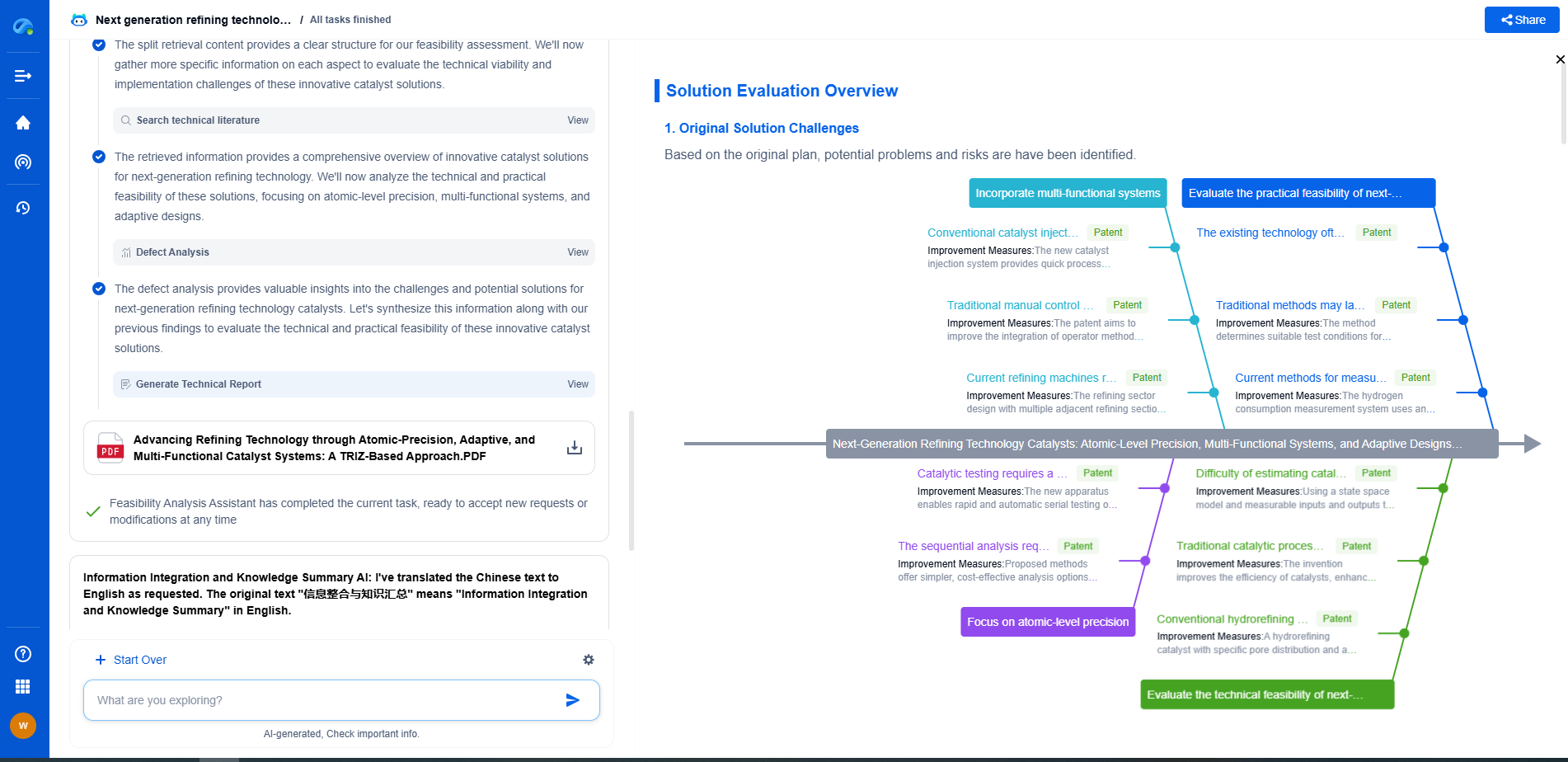
