Error propagation patterns in Hamming-encoded data streams

JUL 14, 2025

Hamming codes are a family of linear error-correcting codes widely used in computer memory systems and telecommunications to detect and correct errors in stored or transmitted data. Introduced by Richard Hamming in 1950, these codes add redundant bits to data so that any single-bit error can be detected and corrected. The elegance of Hamming codes lies in their simplicity and efficiency: they use the minimum number of additional bits needed for single-error correction, making them well suited to data streams where bandwidth and storage are at a premium.

Structure and Functionality of Hamming Codes

At the core of Hamming codes is the concept of parity bits. For a given set of data bits, parity bits are strategically placed to ensure that any single-bit error can be detected and corrected. The number of parity bits required depends on the number of data bits: it is the smallest value of r that satisfies the inequality:

2^r ≥ m + r + 1

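where m is the number of data bits and r is the number of parity bits. For example, m = 4 requires r = 3 (since 2^3 = 8 ≥ 4 + 3 + 1), which gives the 7-bit Hamming(7,4) codeword. The parity bits are placed at positions that are powers of two (1, 2, 4, 8, etc.), counting from 1, in the encoded codeword. Each parity bit covers the positions whose binary index has the corresponding bit set (the parity bit at position 2, for instance, covers positions 2, 3, 6, and 7), forming a parity-check relation that can be used to verify the integrity of the data.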
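The inequality and the placement rule above can be checked with a few lines of Python. This is a minimal sketch, not a reference implementation: the function names `parity_bits_needed` and `coverage` are made up for illustration, and positions are assumed to be 1-indexed as in the text.

```python
def parity_bits_needed(m: int) -> int:
    """Return the smallest r such that 2**r >= m + r + 1."""
    r = 0
    while 2 ** r < m + r + 1:
        r += 1
    return r


def coverage(n: int) -> dict:
    """Map each parity position (a power of two) to the 1-indexed
    positions it checks in a codeword of length n."""
    cover = {}
    p = 1
    while p <= n:
        # Parity bit p checks every position whose index has bit p set.
        cover[p] = [pos for pos in range(1, n + 1) if pos & p]
        p <<= 1
    return cover


if __name__ == "__main__":
    for m in (4, 8, 11, 26):
        print(m, "data bits need", parity_bits_needed(m), "parity bits")
    print(coverage(7))
```

Because every position index is a distinct nonzero binary number, each position is covered by a distinct combination of parity bits, which is what makes single-bit errors locatable.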

Error Detection and Correction Process

When a data stream encoded with Hamming codes is transmitted, the receiver recomputes the parity-check relations to detect any errors. If a single-bit error occurs, some of the checks fail, and the pattern of failed checks (the syndrome), read as a binary number, gives the position of the erroneous bit. The receiver can then correct the error by flipping the indicated bit back to its correct state. This process is highly efficient, as it allows for error detection and correction without the need for retransmission, thus conserving bandwidth and reducing latency.
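The detection and correction steps described above can be sketched as follows. The sketch assumes even parity, 1-indexed positions, and data bits filled into the non-power-of-two positions in order. The helper names (`encode`, `syndrome`, `correct`, `extract_data`) are illustrative and do not come from any particular library. The syndrome is computed as the XOR of the positions of all set bits, which is equivalent to evaluating each parity check separately.

```python
def encode(data: list) -> list:
    """Hamming-encode a list of 0/1 data bits using even parity."""
    m = len(data)
    r = 0
    while 2 ** r < m + r + 1:
        r += 1
    n = m + r
    code = [0] * (n + 1)          # index 0 unused: positions are 1-indexed
    bits = iter(data)
    for pos in range(1, n + 1):
        if pos & (pos - 1):       # not a power of two, so a data position
            code[pos] = next(bits)
    for k in range(r):
        p = 1 << k
        parity = 0
        for pos in range(1, n + 1):
            if pos & p:
                parity ^= code[pos]
        code[p] = parity          # makes the check over p's positions even
    return code[1:]


def syndrome(code: list) -> int:
    """XOR of the positions of all set bits; 0 means every check passes."""
    s = 0
    for pos, bit in enumerate(code, start=1):
        if bit:
            s ^= pos
    return s


def correct(code: list):
    """Flip the bit the syndrome points to; return (codeword, syndrome)."""
    s = syndrome(code)
    fixed = list(code)
    if 1 <= s <= len(fixed):
        fixed[s - 1] ^= 1
    return fixed, s


def extract_data(code: list) -> list:
    """Drop the parity positions and return the data bits."""
    return [bit for pos, bit in enumerate(code, start=1) if pos & (pos - 1)]


if __name__ == "__main__":
    sent = encode([1, 0, 1, 1])
    received = list(sent)
    received[4] ^= 1              # corrupt position 5 in transit
    fixed, s = correct(received)
    print("syndrome:", s, "recovered:", extract_data(fixed))
```

In the usage example, flipping position 5 yields a syndrome of 5, so the receiver repairs the codeword locally without asking for the data again.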

Propagation Patterns of Errors

Although Hamming codes are highly effective at correcting single-bit errors, understanding how errors propagate in data streams is crucial for maintaining data integrity. Error propagation refers to the spread of errors through a data stream caused by undetected or improperly corrected errors. With Hamming codes, it is primarily a concern when two or more errors fall within the same codeword, because they disrupt the parity-check relations and lead to incorrect corrections.

One common pattern of error propagation is the cascading effect, where an error that is not properly corrected causes further bits to be misinterpreted. If two bits in the same codeword are flipped, the parity checks produce a nonzero syndrome that points to a third bit position, one that was not in error. The decoder then flips that bit, turning a two-bit error into a three-bit error. The result is a different valid codeword, so the corrupted data passes every parity check and is handed on as if it were correct. This highlights the limitation of Hamming codes, which are designed only to handle single-bit errors reliably.
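The two-bit failure mode can be demonstrated exhaustively on a single 7-bit codeword. The sketch below assumes the Hamming(7,4) layout with even parity and 1-indexed positions. `SENT` is one valid codeword chosen for illustration, and `naive_correct` and `double_error_outcomes` are hypothetical helper names. It flips every possible pair of bits, lets a single-error decoder "correct" the result, and records how far the decoded word ends up from what was sent.

```python
from itertools import combinations

# A valid Hamming(7,4) codeword (even parity, positions 1..7).
SENT = [0, 1, 1, 0, 0, 1, 1]


def syndrome(code: list) -> int:
    """XOR of the positions of all set bits; 0 means every check passes."""
    s = 0
    for pos, bit in enumerate(code, start=1):
        if bit:
            s ^= pos
    return s


def naive_correct(code: list) -> list:
    """Single-error decoder: always trusts the syndrome."""
    s = syndrome(code)
    fixed = list(code)
    if 1 <= s <= len(fixed):
        fixed[s - 1] ^= 1
    return fixed


def distance(a: list, b: list) -> int:
    """Hamming distance: number of positions where a and b differ."""
    return sum(x != y for x, y in zip(a, b))


def double_error_outcomes(sent: list) -> list:
    """For each pair of flipped positions (i, j), return
    (i, j, syndrome, distance between sent and decoded words)."""
    outcomes = []
    for i, j in combinations(range(1, len(sent) + 1), 2):
        received = list(sent)
        received[i - 1] ^= 1
        received[j - 1] ^= 1
        s = syndrome(received)
        decoded = naive_correct(received)
        outcomes.append((i, j, s, distance(sent, decoded)))
    return outcomes


if __name__ == "__main__":
    for i, j, s, d in double_error_outcomes(SENT):
        print(f"flipped {i},{j} -> syndrome {s}, decoded word differs in {d} bits")
```

For every pair the syndrome equals the XOR of the two flipped positions, which is never zero and never one of the flipped positions themselves, so the decoder always damages a third bit.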

Strategies to Mitigate Error Propagation

To address these limitations, several strategies can be employed. One approach is to use error-correcting codes that can handle multiple-bit errors, such as Reed-Solomon or Bose-Chaudhuri-Hocquenghem (BCH) codes, alongside Hamming codes. These codes provide more robust error correction, but at the cost of increased computational complexity and data overhead.

Another strategy is to employ error detection techniques such as checksums or cyclic redundancy checks (CRC) in conjunction with Hamming codes. These techniques can quickly identify when multiple errors have occurred, prompting a request for data retransmission rather than attempting to correct errors that exceed the capability of Hamming codes.
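One way to combine a CRC with Hamming codes is sketched below. The framing is an assumption made for illustration, not a standard: a CRC-32 of the payload (computed with `zlib.crc32` from the Python standard library) is appended in big-endian order, and each 4-bit nibble of the framed message is then encoded as a Hamming(7,4) block. The function names are hypothetical. The receiver corrects each block, recomputes the CRC, and returns `None` to signal that a retransmission should be requested.

```python
import zlib
from typing import Optional


def encode_nibble(nibble: int) -> list:
    """Encode 4 bits as a Hamming(7,4) block (even parity, positions 1..7)."""
    code = [0] * 8                               # index 0 unused
    code[3], code[5], code[6], code[7] = [(nibble >> i) & 1 for i in (3, 2, 1, 0)]
    code[1] = code[3] ^ code[5] ^ code[7]
    code[2] = code[3] ^ code[6] ^ code[7]
    code[4] = code[5] ^ code[6] ^ code[7]
    return code[1:]


def decode_block(block: list) -> int:
    """Apply single-error correction and return the 4 data bits as an int."""
    s = 0
    for pos, bit in enumerate(block, start=1):
        if bit:
            s ^= pos
    fixed = list(block)
    if s:                                        # s is always 1..7 here
        fixed[s - 1] ^= 1
    return (fixed[2] << 3) | (fixed[4] << 2) | (fixed[5] << 1) | fixed[6]


def send(payload: bytes) -> list:
    """Append a CRC-32, then Hamming-encode every nibble."""
    framed = payload + zlib.crc32(payload).to_bytes(4, "big")
    blocks = []
    for byte in framed:
        blocks.append(encode_nibble(byte >> 4))
        blocks.append(encode_nibble(byte & 0x0F))
    return blocks


def receive(blocks: list) -> Optional[bytes]:
    """Return the payload, or None if the CRC shows a miscorrection."""
    nibbles = [decode_block(b) for b in blocks]
    framed = bytes((hi << 4) | lo for hi, lo in zip(nibbles[0::2], nibbles[1::2]))
    payload, received_crc = framed[:-4], int.from_bytes(framed[-4:], "big")
    if zlib.crc32(payload) != received_crc:
        return None                              # caller requests retransmission
    return payload


if __name__ == "__main__":
    blocks = send(b"hamming")
    blocks[0][0] ^= 1                            # two errors in one block:
    blocks[0][1] ^= 1                            # beyond Hamming's capability
    print(receive(blocks))
```

In this arrangement the Hamming layer quietly repairs isolated single-bit errors, while the CRC acts as an end-to-end check that catches the cases where a block was miscorrected.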

Conclusion

Hamming codes remain a cornerstone in the field of error correction due to their simplicity and efficiency in handling single-bit errors. However, understanding the patterns of error propagation in Hamming-encoded data streams is essential for minimizing the risk of data corruption. By recognizing the limitations of Hamming codes and employing complementary techniques, data integrity can be significantly enhanced, ensuring reliable communication even in environments prone to errors. As technology continues to advance, the principles underlying Hamming codes are likely to remain relevant, underscoring the importance of robust error correction in an increasingly data-driven world.

From 5G NR to SDN and quantum-safe encryption, the digital communication landscape is evolving faster than ever. For R&D teams and IP professionals, tracking protocol shifts, understanding standards like 3GPP and IEEE 802, and monitoring the global patent race are now mission-critical.

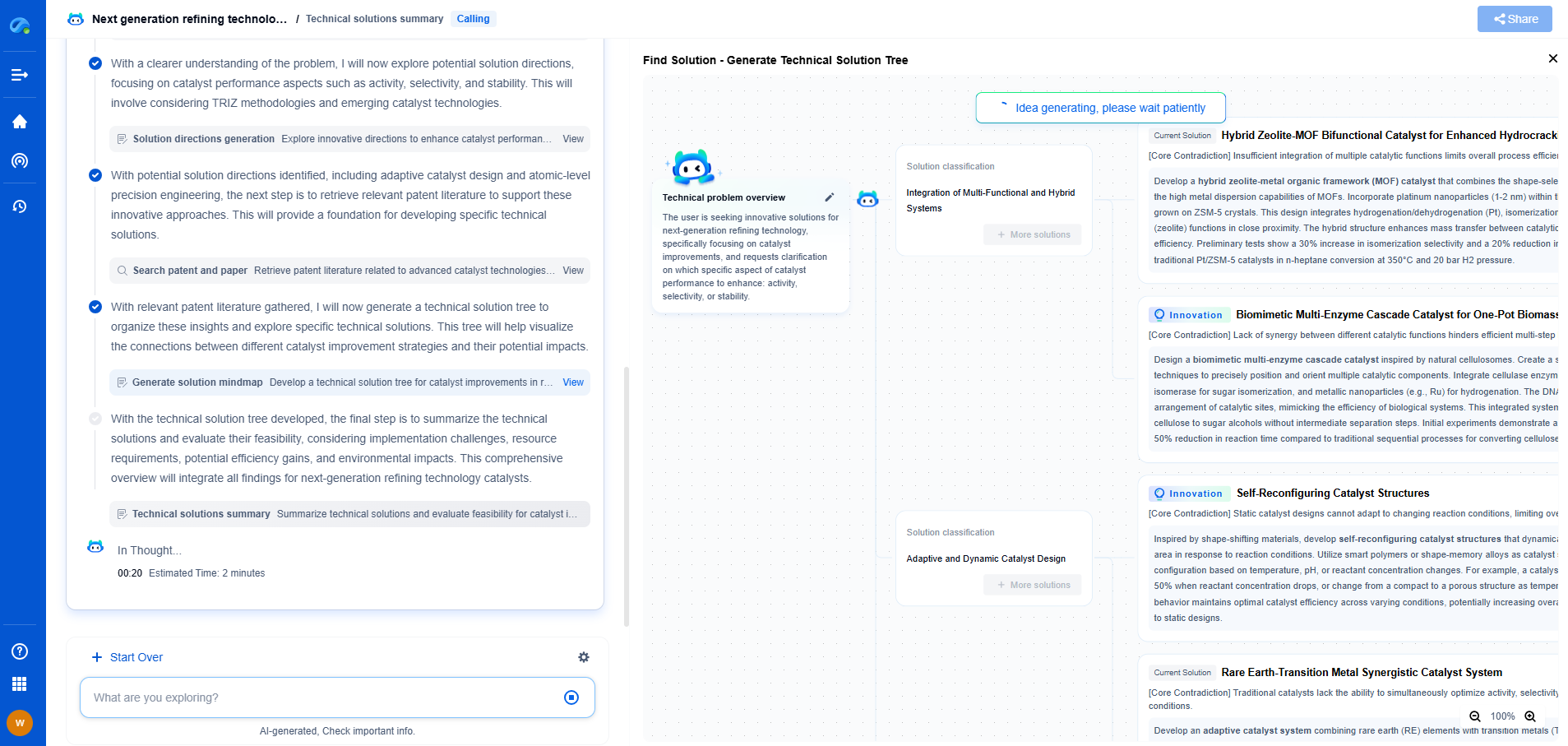

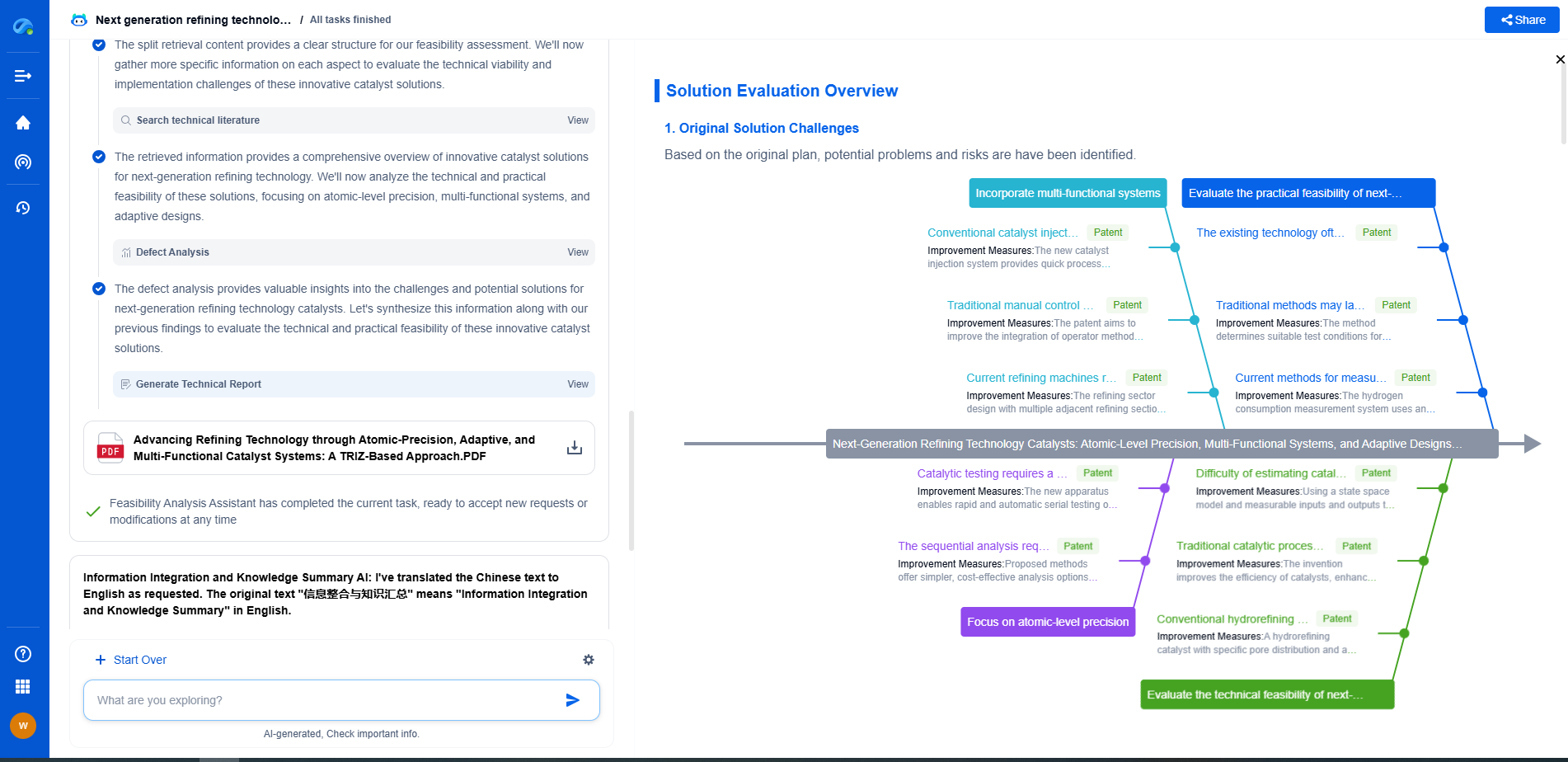

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

📡 Experience Patsnap Eureka today and unlock next-gen insights into digital communication infrastructure, before your competitors do.