Explainability Issues in AI-Driven Network Optimization

JUL 7, 2025

Artificial intelligence (AI) and machine learning (ML) have become instrumental in network optimization, helping operators improve efficiency, manage traffic, and reduce operational costs. However, these AI-driven solutions often present significant explainability issues, which can hinder their adoption and optimal use. This post explores the challenges of explainability in AI-driven network optimization, why they matter, and potential strategies for addressing them.

The Role of AI in Network Optimization

AI and ML algorithms are used in network optimization to analyze vast amounts of data, predict traffic patterns, and automate decision-making processes. These systems can dynamically adjust network configurations, manage bandwidth allocation, and even predict and prevent network failures. The benefits of such capabilities are clear: improved network performance, reduced downtime, and more efficient resource utilization.

The Explainability Challenge

Despite these advantages, the use of AI in network optimization introduces significant challenges related to explainability. Explainability refers to the ability to understand and interpret the decision-making process of an AI system. Many AI models, especially deep learning models, operate as "black boxes," meaning their internal workings are not easily interpretable by humans. This lack of transparency can lead to several issues:

1. Trust and Accountability: Network operators may hesitate to rely on AI-driven solutions if they cannot understand or trust the decisions being made. In the event of network failures or performance issues, it becomes challenging to determine accountability without a clear understanding of the AI's decision processes.

2. Compliance and Regulation: In many industries, compliance with regulations requires transparency in decision-making. AI systems that lack explainability can pose significant compliance risks, as stakeholders may not be able to demonstrate how decisions align with regulatory standards.

3. Debugging and Improvement: When AI models make incorrect decisions, understanding why those decisions were made is crucial for debugging and improving the system. Without explainability, it becomes difficult to refine the AI model to enhance its performance.

Strategies for Enhancing Explainability

Several strategies can be employed to enhance the explainability of AI-driven network optimization systems:

1. Model Simplification: Using simpler models, such as linear models or shallow decision trees, can improve interpretability. Simpler models may not always match the performance of complex ones, so choosing them means trading some accuracy for explainability.

2. Explainable AI (XAI) Techniques: XAI aims either to create models that are inherently interpretable, such as decision trees, or to provide tools for interpreting complex models after the fact. Techniques such as feature importance analysis and surrogate models can offer insights into the decision-making process of AI systems.

3. Human-in-the-Loop Systems: Integrating human expertise into AI systems can enhance explainability by allowing domain experts to guide, review, and interpret AI decisions. This collaboration lets AI models benefit from human intuition and expertise.

4. Transparency in Data and Processes: Clear documentation and visualization of the data used, as well as the processes and algorithms employed, can improve understanding and trust in AI-driven solutions.
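
To make the feature importance idea from the list above more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: `black_box_policy` is a hypothetical stand-in for a trained model, the three link features and their ranges are invented, and the data is synthetic. It uses a label-free variant of permutation importance, which shuffles one input at a time and measures how much the model's output changes, rather than the more common version that measures the change in prediction error.

```python
import random


def black_box_policy(link):
    """Hypothetical opaque model: scores how urgently a link needs more bandwidth.

    Stands in for a trained model whose internals the operator cannot inspect.
    """
    utilization, latency_ms, packet_loss = link
    # Illustrative formula only; note that packet_loss is silently ignored.
    return 10.0 * utilization ** 2 + 0.05 * latency_ms + 0.0 * packet_loss


def permutation_importance(model, rows, n_features, seed=0):
    """Mean absolute change in model output when each feature is shuffled."""
    rng = random.Random(seed)
    baseline = [model(r) for r in rows]
    scores = []
    for j in range(n_features):
        # Shuffle column j across rows, leaving the other features untouched.
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        delta = sum(abs(model(s) - b) for s, b in zip(shuffled, baseline))
        scores.append(delta / len(rows))
    return scores


# Synthetic link measurements: (utilization 0-1, latency in ms, packet loss ratio).
data_rng = random.Random(42)
rows = [
    (data_rng.random(), data_rng.uniform(1.0, 100.0), data_rng.uniform(0.0, 0.05))
    for _ in range(200)
]

feature_names = ["utilization", "latency_ms", "packet_loss"]
scores = permutation_importance(black_box_policy, rows, n_features=3)

for name, score in zip(feature_names, scores):
    print(f"{name}: {score:.3f}")
```

In this toy setup the score for `packet_loss` comes out as zero, which is the kind of finding an operator would want to see: the model never reacts to packet loss, whatever the documentation claims. On a real model the same procedure would be run against held-out traffic data, and correlated features would need extra care because shuffling one of them produces unrealistic inputs.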

The Future of Explainable AI in Network Optimization

As AI continues to evolve, the demand for explainable AI solutions will only grow. Researchers and developers are actively working on creating models that not only perform well but are also interpretable. The future of AI-driven network optimization will likely see a shift toward more transparent systems, where explainability is a key design consideration from the outset.

Conclusion

Explainability is a critical issue in AI-driven network optimization, affecting trust, accountability, compliance, and system improvement. By adopting strategies such as model simplification, XAI techniques, human-in-the-loop systems, and transparency, we can address these challenges and unlock the full potential of AI in network optimization. As the field progresses, continued focus on explainability will ensure that AI technologies are used effectively and responsibly, paving the way for smarter and more efficient networks.

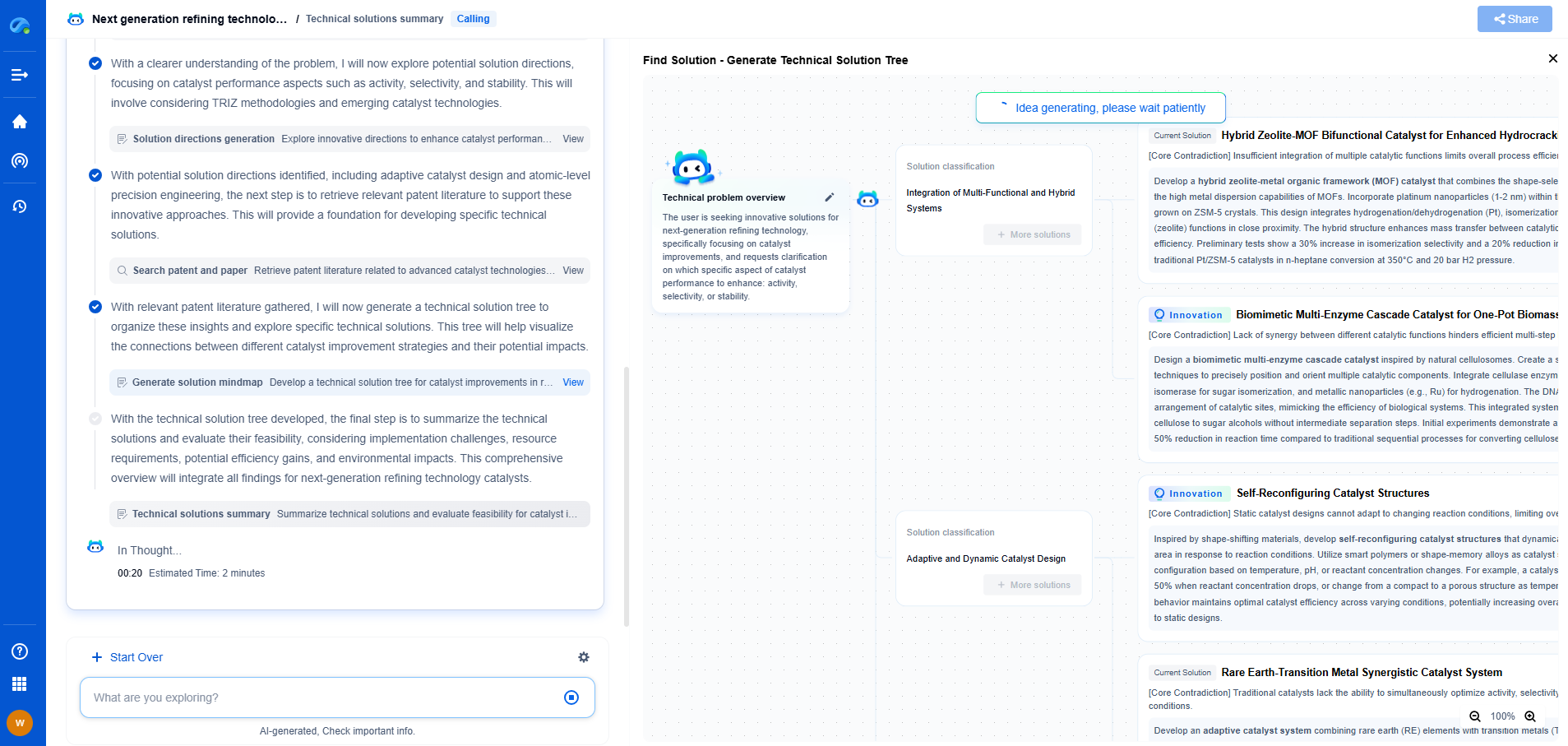

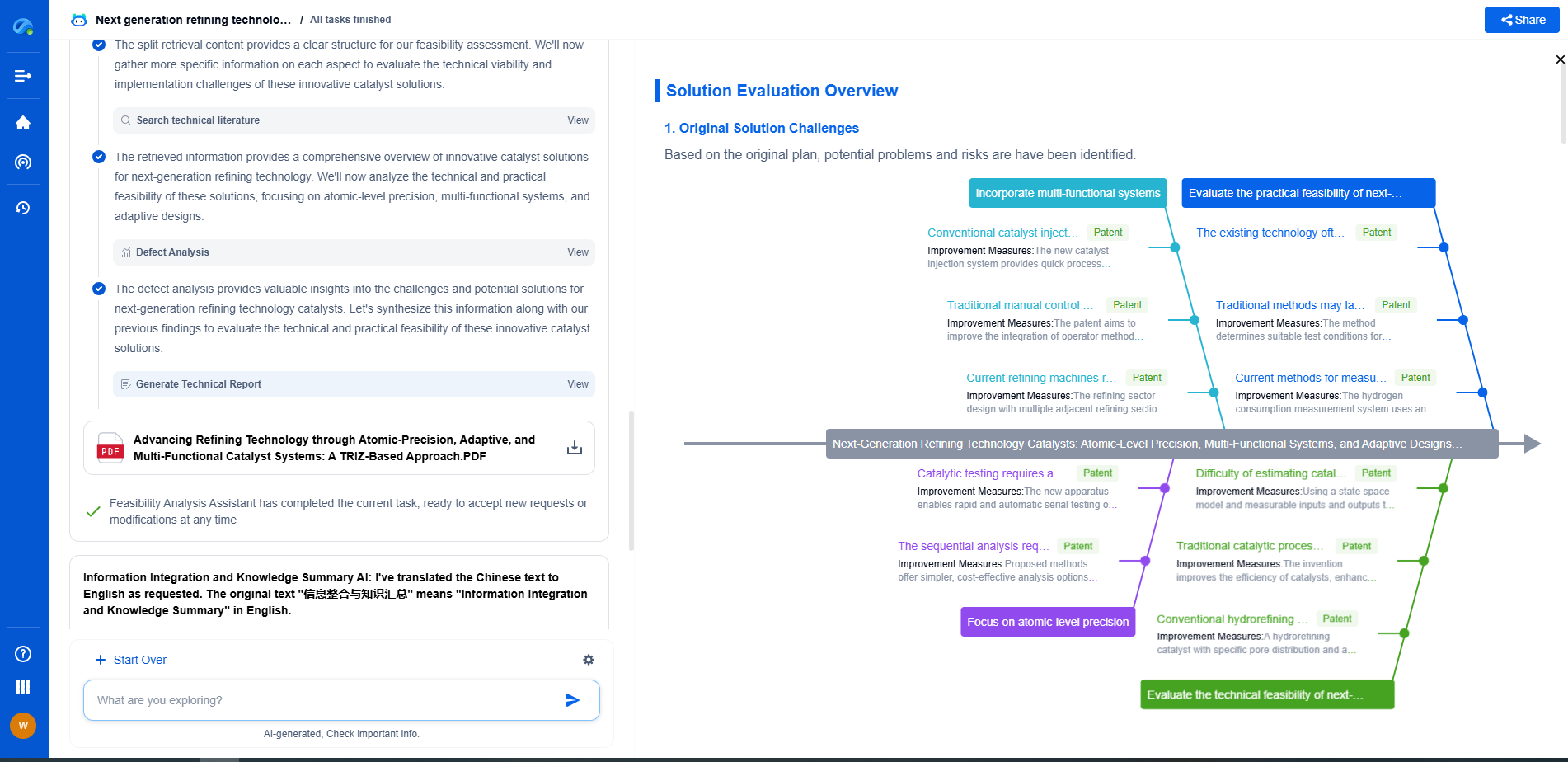
