Hands-On with Vision Transformers (ViT) Using Hugging Face Transformers

JUL 10, 2025

Vision Transformers (ViT) are a major development in computer vision. Unlike traditional Convolutional Neural Networks (CNNs), which process visual information through stacked convolutional layers with local receptive fields, Vision Transformers use the self-attention mechanism that reshaped natural language processing. Self-attention lets every part of an image interact with every other part within a single layer, so ViTs can model long-range dependencies directly, which makes them well suited to image classification. In this blog, we will explore the ViT architecture and learn how to implement it for practical applications with the Hugging Face Transformers library.

Understanding the Vision Transformer Architecture

The core idea behind the Vision Transformer is to treat an image as a sequence of patches, similar to how words form a sequence in a sentence. Each image is divided into fixed-size patches, which are then flattened and linearly embedded. These embeddings are supplemented with positional encodings to retain the spatial information of the patches. The sequence of patches is then fed into a Transformer Encoder, which consists of multiple layers of multi-head self-attention and feed-forward networks.
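To make the patch idea concrete, the sketch below works out how long the patch sequence is and how many values each flattened patch holds. The function name `patch_sequence_shape` is made up for this illustration, and the 224x224 image with 16x16 patches is just a commonly used configuration, not something this guide depends on.

```python
def patch_sequence_shape(image_size: int, patch_size: int, channels: int = 3):
    """Return (num_patches, flattened_patch_dim) for a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    num_patches = patches_per_side ** 2                # length of the patch sequence
    patch_dim = channels * patch_size * patch_size     # values in one flattened patch
    return num_patches, patch_dim


num_patches, patch_dim = patch_sequence_shape(image_size=224, patch_size=16)
print(num_patches, patch_dim)  # 196 768
```

Each of the 196 flattened patches is then linearly projected to the model's hidden size and combined with a positional embedding. ViT classification models also prepend one learnable [CLS] token, so the encoder would see 197 tokens in this configuration.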

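One of the primary advantages of Vision Transformers is their ability to capture global contextual information: every patch can attend to every other patch from the first layer onward, whereas a CNN builds up a wide receptive field only gradually. With large-scale pre-training, this lets ViTs match or outperform comparable CNNs. On small datasets trained from scratch, however, they often lag behind, because they lack the built-in locality and translation-equivariance biases of convolutions.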
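The global view comes from self-attention, where each patch computes a weight for every other patch. The following is a minimal single-head sketch in PyTorch, not the multi-head implementation used inside the library. The function name `self_attention`, the random projection matrices, and the sizes (196 patches, 64 dimensions) are assumptions chosen only for illustration.

```python
import math
import torch


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x has shape (num_patches, dim); returns the attended output and the
    (num_patches, num_patches) attention weight matrix.
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / math.sqrt(k.shape[-1])   # similarity of every patch pair
    weights = torch.softmax(scores, dim=-1)     # each row sums to 1
    return weights @ v, weights


torch.manual_seed(0)
num_patches, dim = 196, 64                      # illustrative sizes
x = torch.randn(num_patches, dim)               # stand-in for patch embeddings
w_q, w_k, w_v = (torch.randn(dim, dim) / math.sqrt(dim) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)
```

The weight matrix has one row per patch and one column per patch, which is why a single layer can already relate distant regions of the image.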

Setting Up the Environment

Before we start working with Vision Transformers, it is essential to set up our environment. The Hugging Face Transformers library provides a user-friendly interface to implement ViTs. First, ensure that you have the necessary libraries installed. You can do this by running:

pip install transformers

pip install torch

pip install torchvision

Loading and Preprocessing Data

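For this hands-on guide, we will use a standard dataset like CIFAR-10 to demonstrate how Vision Transformers work. The CIFAR-10 dataset consists of 60,000 32x32 color images in 10 different classes, split into 50,000 training images and 10,000 test images.

To load and preprocess the data, we can use the dataset and transform utilities in torchvision together with PyTorch’s DataLoader. Normalizing the images puts the pixel values on a consistent scale, which helps the model converge faster. Because most pre-trained ViT checkpoints expect larger inputs (commonly 224x224), the 32x32 CIFAR-10 images usually also need to be resized to the resolution the checkpoint was trained on.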

Implementing Vision Transformer with Hugging Face

The Hugging Face Transformers library provides a straightforward interface to implement Vision Transformers. For classification we will use the ViTForImageClassification class, which wraps the base ViTModel encoder with a linear classification head (ViTModel on its own returns hidden states, not class predictions). Here is a step-by-step guide on how to implement ViT:

1. Load the Pre-trained Model: Hugging Face offers pre-trained ViT models which can be easily loaded. This enables transfer learning, where you fine-tune a model that has already been trained on a large dataset.

2. Define the Model Architecture: Configure ViTForImageClassification to suit your dataset. This mainly involves specifying the number of classes (the num_labels setting), which determines the size of the classification head.

3. Train the Model: Use a suitable optimizer and loss function to train the model. Common choices are the Adam or AdamW optimizer and cross-entropy loss for classification tasks.

4. Evaluate the Model: After training, evaluate the model’s performance on the test dataset to ensure it generalizes well.
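The four steps above can be sketched as follows, using `ViTForImageClassification`, the Transformers class that adds a classification head to the ViT encoder. This is a minimal sketch under stated assumptions, not a tuned training recipe: the helper names, the learning rate, and the tiny randomly initialized configuration are illustrative, and the smoke test runs on random tensors so that nothing is downloaded. For real fine-tuning you would pass a Hub checkpoint name (for example `google/vit-base-patch16-224-in21k`, assumed here as one option) and feed it CIFAR-10 batches resized to the checkpoint's input resolution.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset
from transformers import ViTConfig, ViTForImageClassification


def build_model(num_labels=10, checkpoint=None):
    """Steps 1-2: load pre-trained weights with a new head, or a tiny random model."""
    if checkpoint is not None:
        # Downloads the weights from the Hugging Face Hub.
        return ViTForImageClassification.from_pretrained(checkpoint, num_labels=num_labels)
    # Tiny configuration (assumption) so the sketch runs quickly on a CPU.
    config = ViTConfig(image_size=32, patch_size=8, hidden_size=32,
                       num_hidden_layers=2, num_attention_heads=2,
                       intermediate_size=64, num_labels=num_labels)
    return ViTForImageClassification(config)


def train_one_epoch(model, loader, optimizer, device="cpu"):
    """Step 3: one pass over the data; returns the mean batch loss."""
    model.to(device).train()
    total_loss = 0.0
    for pixel_values, labels in loader:
        optimizer.zero_grad()
        # With integer class labels the model computes cross-entropy loss itself.
        outputs = model(pixel_values=pixel_values.to(device), labels=labels.to(device))
        outputs.loss.backward()
        optimizer.step()
        total_loss += outputs.loss.item()
    return total_loss / len(loader)


@torch.no_grad()
def evaluate(model, loader, device="cpu"):
    """Step 4: top-1 accuracy on a held-out loader."""
    model.to(device).eval()
    correct = total = 0
    for pixel_values, labels in loader:
        logits = model(pixel_values=pixel_values.to(device)).logits
        correct += (logits.argmax(dim=-1).cpu() == labels).sum().item()
        total += labels.numel()
    return correct / total


# Smoke test on random data; replace with real CIFAR-10 loaders in practice.
torch.manual_seed(0)
images = torch.randn(16, 3, 32, 32)
labels = torch.randint(0, 10, (16,))
loader = DataLoader(TensorDataset(images, labels), batch_size=8)

model = build_model(num_labels=10)
optimizer = torch.optim.AdamW(model.parameters(), lr=5e-5)
loss = train_one_epoch(model, loader, optimizer)
accuracy = evaluate(model, loader)
print(f"loss={loss:.3f} accuracy={accuracy:.2f}")
```

On random data the accuracy is meaningless; the point is only that the model, loss, optimizer, and evaluation loop fit together. Keeping the evaluation on a separate test loader is what tells you whether the model generalizes.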

Fine-Tuning for Specific Tasks

One of the significant advantages of using Vision Transformers is their flexibility in fine-tuning for specific tasks. Whether you are working on object detection, semantic segmentation, or other computer vision tasks, a pre-trained ViT can serve as the backbone, with a task-specific head added on top. Fine-tuning adjusts the pre-trained weights to the nuances of your specific dataset. Compared with training from scratch, this usually yields better accuracy and shorter training time, particularly when labeled data is limited.
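One common fine-tuning variant, shown here as an illustration and not as the only approach, is to freeze the pre-trained encoder and train just the new classification head. The helper name `freeze_backbone` is hypothetical, and the tiny random configuration stands in for a real pre-trained checkpoint so the sketch runs without a download.

```python
from transformers import ViTConfig, ViTForImageClassification


def freeze_backbone(model):
    """Freeze the ViT encoder; return the parameters that remain trainable."""
    for param in model.vit.parameters():
        param.requires_grad = False
    return [p for p in model.parameters() if p.requires_grad]


# Tiny stand-in model (assumption); use from_pretrained(...) for real fine-tuning.
config = ViTConfig(image_size=32, patch_size=8, hidden_size=32,
                   num_hidden_layers=2, num_attention_heads=2,
                   intermediate_size=64, num_labels=10)
model = ViTForImageClassification(config)

trainable = freeze_backbone(model)
print(f"{len(trainable)} trainable tensors:",
      sum(p.numel() for p in trainable), "parameters")
```

Passing only `trainable` to the optimizer makes each step cheaper and can reduce overfitting on small datasets. Unfreezing the whole model with a small learning rate is the usual alternative when more data is available.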

Tips and Tricks for Optimal Performance

While working with Vision Transformers, there are several strategies you can employ to enhance model performance:

1. Data Augmentation: Applying techniques such as random cropping, rotation, and flipping can help in generating more diverse training data, leading to better model generalization.

2. Learning Rate Scheduling: Adjusting the learning rate during training can significantly impact the convergence speed and final accuracy of the model. Techniques like cosine annealing or step decay are popular choices.

3. Batch Size: Experimenting with different batch sizes can lead to improved performance. Larger batch sizes can benefit from faster computation due to parallel processing, but they may also require more memory.

Conclusion

Vision Transformers represent a paradigm shift in the field of computer vision, offering a novel approach that capitalizes on the strengths of self-attention mechanisms. By leveraging the Hugging Face Transformers library, implementing and experimenting with ViTs has become more accessible to researchers and practitioners. As you delve deeper into the capabilities of Vision Transformers, you will discover their potential to revolutionize various applications within computer vision. Whether you’re a seasoned expert or a curious newcomer, ViTs offer exciting opportunities to explore and innovate.

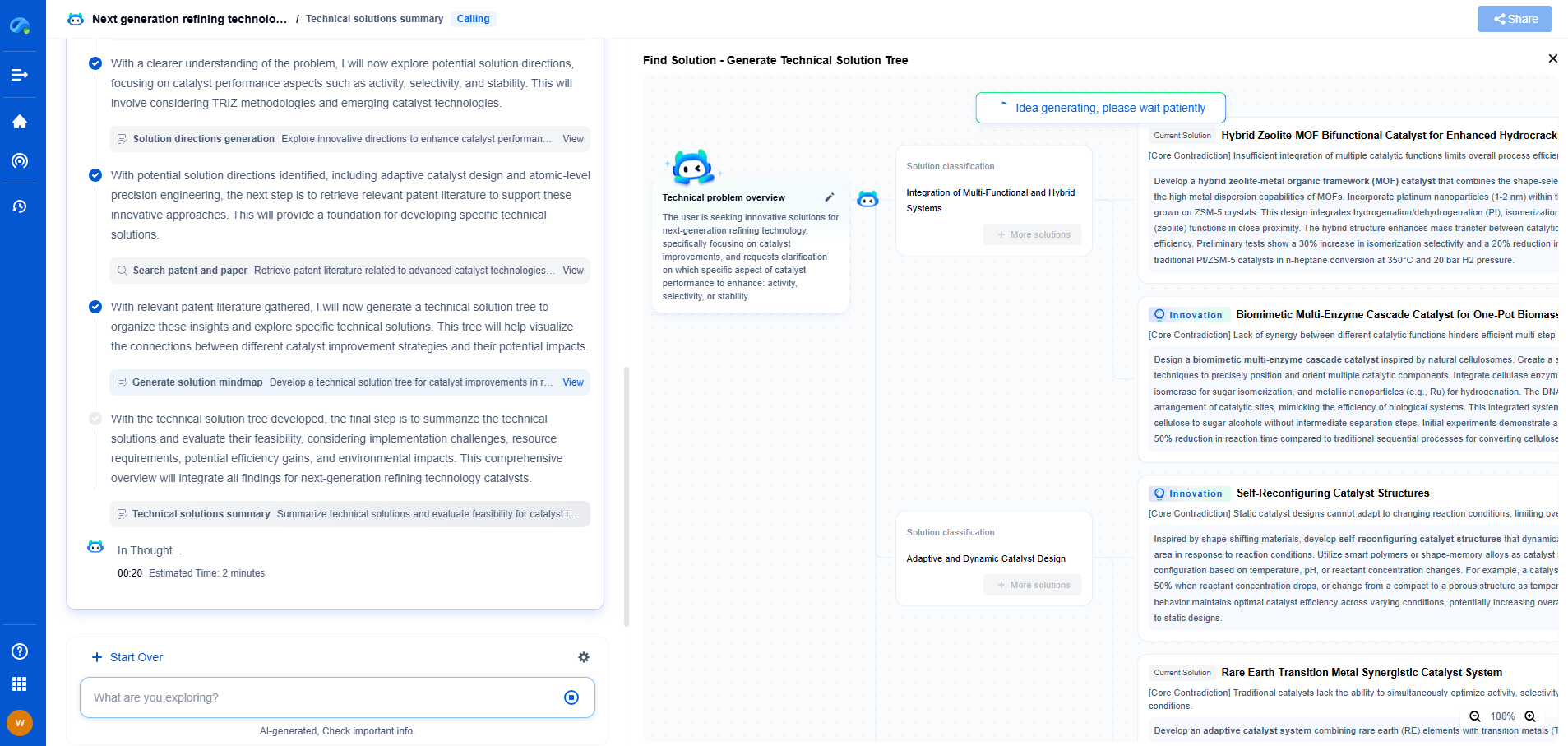

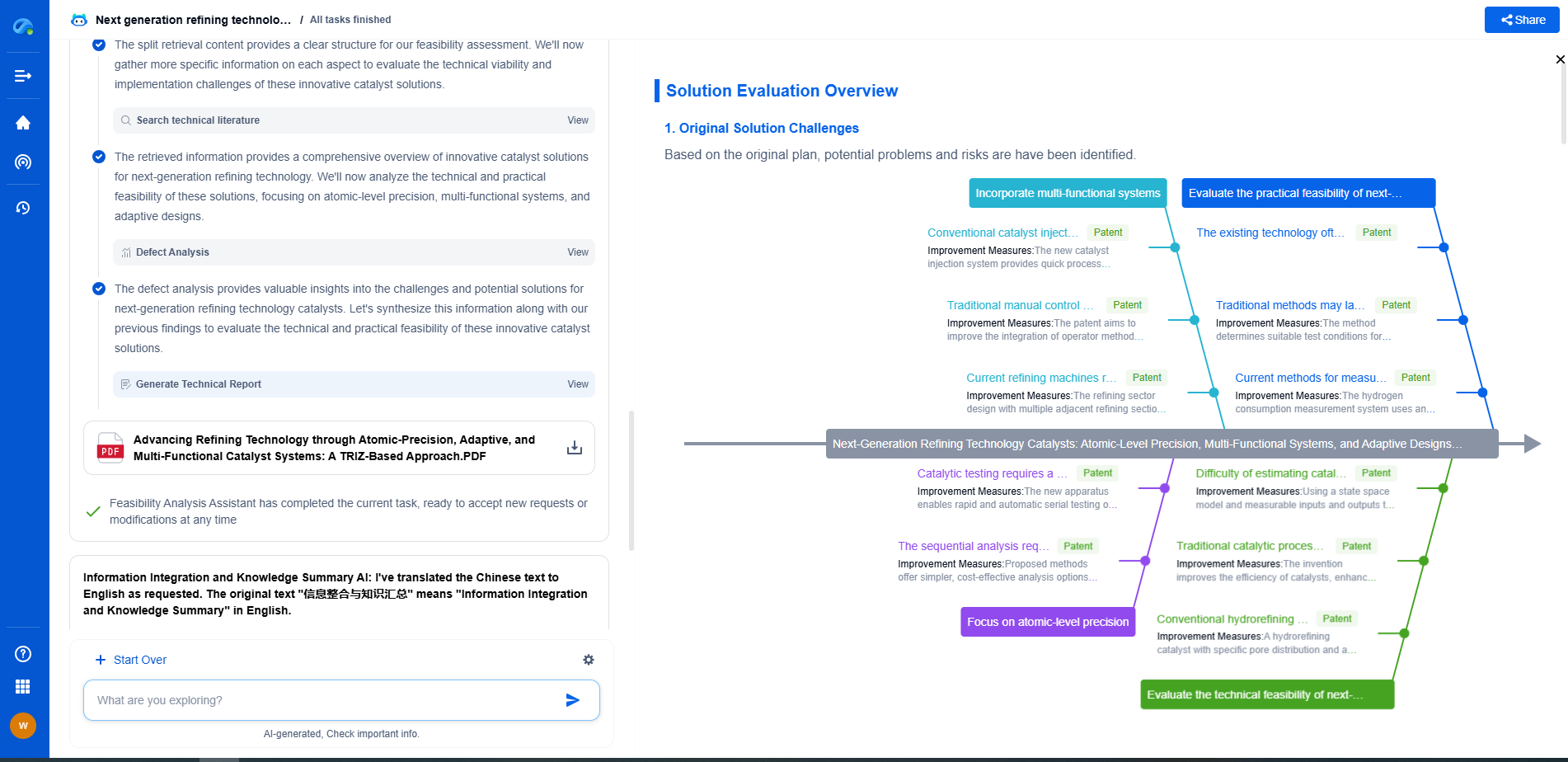
