Hardware Accelerators (GPUs/TPUs) for Power System AI Models

JUN 26, 2025

As power systems grow in scale and complexity, operators increasingly rely on artificial intelligence (AI) models to optimize grid operations, forecast energy demand, and maintain stability in real time. Training and running these models can be computationally expensive. Hardware accelerators such as Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) address this cost and have changed how AI models are developed and deployed, including in the power sector.

**The Role of AI in Power Systems**

Before delving into hardware accelerators, it is important to understand how AI is used in power systems. AI models are applied in domains such as load forecasting, fault detection, energy trading, and grid optimization. These tasks often require processing large volumes of measurement data and performing dense numerical computations. General-purpose CPUs (Central Processing Units) can run these workloads, but with relatively few cores optimized for low-latency sequential work, they are often slow at the highly parallel arithmetic involved.

**Why Hardware Accelerators?**

Hardware accelerators like GPUs and TPUs are designed for parallel processing, which makes them efficient at the matrix operations at the core of most AI models. A CPU has a small number of cores optimized for sequential work, whereas a GPU has thousands of simpler cores that apply the same operation to many data elements at once. Because each element of a matrix product can be computed independently of the others, deep learning workloads map well onto this design and typically run much faster on it.
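To make that independence concrete, here is a minimal Python sketch of a dense layer's forward pass, the kind of matrix operation mentioned above. It is not taken from any particular framework: the names `dot`, `dense_sequential`, and `dense_parallel`, and the weight and feature values, are hypothetical. Python threads do not actually speed up this arithmetic; the thread pool is only there to show that each output can be computed without waiting for the others, which is the property accelerators exploit in hardware.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, x):
    """Weighted sum of one row of weights with the input vector."""
    return sum(w * v for w, v in zip(row, x))


def dense_sequential(weights, x):
    """Compute each output one after another, as a single core would."""
    return [dot(row, x) for row in weights]


def dense_parallel(weights, x, workers=4):
    """Compute each output as an independent task.

    No task reads another task's result, so the work can be
    spread across as many workers as there are output rows.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: dot(row, x), weights))


# Hypothetical 2x3 weight matrix and a 3-element feature vector
# (for example, scaled load, temperature, and hour-of-day features).
weights = [[0.5, -1.0, 2.0],
           [1.5, 0.0, -0.5]]
features = [2.0, 1.0, 0.5]

sequential_out = dense_sequential(weights, features)
parallel_out = dense_parallel(weights, features)
print(sequential_out, parallel_out)
```

Both functions return the same values regardless of the order in which the rows are processed. On a GPU or TPU, the equivalent computation is dispatched across many hardware units at once rather than through a thread pool.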

**GPUs: The Powerhouse of Parallel Processing**

GPUs were originally designed for rendering graphics but have proven well suited to general parallel computation, which makes them a common choice for training deep learning models. In power systems, GPUs can accelerate the training of AI models used for predictive maintenance, anomaly detection, and optimization tasks. Shorter training runs allow faster model iteration, which helps when developing and validating robust applications. GPUs can also speed up inference, which benefits real-time grid monitoring where rapid decision-making is essential.
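How much faster iteration becomes depends on how much of the training pipeline actually runs on the accelerator. One rough way to reason about this is Amdahl's law, which bounds the overall speedup when only part of a job is accelerated. The sketch below is a back-of-the-envelope estimate; the function name `amdahl_speedup` and the example fractions and speedups are illustrative assumptions, not measurements.

```python
def amdahl_speedup(parallel_fraction: float, accelerator_speedup: float) -> float:
    """Overall speedup when only part of a job is accelerated (Amdahl's law).

    parallel_fraction: share of the original runtime that the accelerator
        can take over (between 0 and 1).
    accelerator_speedup: how many times faster that share runs on the
        accelerator than on the CPU.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if accelerator_speedup <= 0:
        raise ValueError("accelerator_speedup must be positive")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / accelerator_speedup)


# Assumed scenario: 90% of a training run is matrix math that moves to the
# GPU, while data loading and preprocessing stay on the CPU.
for gpu_speedup in (5, 20, 100):
    overall = amdahl_speedup(0.9, gpu_speedup)
    print(f"GPU part {gpu_speedup}x faster -> whole run {overall:.1f}x faster")
```

Under these assumptions, the whole run can never be more than 10 times faster, no matter how fast the GPU is, because the remaining 10% still executes at CPU speed. In practice this means data loading and preprocessing deserve attention alongside the choice of accelerator.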

**TPUs: Tailored for AI Workloads**

Tensor Processing Units (TPUs) are application-specific accelerators developed by Google, first announced in 2016, and designed specifically for AI workloads. They are optimized for tensor operations, which are the backbone of many machine learning models, and offer high performance and efficiency for training and deploying AI models at scale. In the context of power systems, TPUs can be used to run the models behind complex tasks such as voltage regulation, frequency control, and distributed energy resource management. For workloads that map well onto their architecture, they can deliver high throughput and low latency, which makes them an attractive option for power system applications.

**Comparative Analysis: GPUs vs. TPUs**

Both GPUs and TPUs have their strengths and ideal use cases. GPUs are versatile and widely used thanks to their adaptability and extensive software support, including mature tooling in frameworks such as PyTorch and TensorFlow. They are a good fit for research and development environments where flexibility is key. TPUs, on the other hand, are purpose-built for AI and are available mainly through Google Cloud; they can excel in production environments requiring efficiency and speed, such as large-scale deployment of AI models in smart grids. The choice between GPUs and TPUs depends on the specific requirements of the power system application, including computational needs, deployment scale, and budget constraints.

**Challenges and Considerations**

While hardware accelerators offer significant advantages, integrating them into power system AI workflows is not without challenges. The upfront cost of the hardware, its power consumption, and the need for specialized technical expertise can all pose barriers. Additionally, the rapid pace of technology evolution means that organizations must be agile in adopting new hardware solutions to stay competitive. There is also a need for robust software frameworks and libraries that interface cleanly with these accelerators so that their capacity is actually used.
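The power consumption concern can be sized with simple arithmetic: energy in kilowatt-hours is average power in watts times hours, divided by 1000. The sketch below applies that formula; the function names and every number in the example (board power, run length, device count, electricity price) are hypothetical placeholders to be replaced with measured values.

```python
def training_energy_kwh(avg_power_watts: float, hours: float, num_devices: int = 1) -> float:
    """Energy drawn by the accelerators alone, in kilowatt-hours."""
    return avg_power_watts * hours * num_devices / 1000.0


def training_energy_cost(avg_power_watts: float, hours: float,
                         price_per_kwh: float, num_devices: int = 1) -> float:
    """Electricity cost of the run, in the currency of price_per_kwh."""
    return training_energy_kwh(avg_power_watts, hours, num_devices) * price_per_kwh


# Hypothetical run: 4 accelerators averaging 300 W each for 24 hours,
# with electricity priced at 0.15 per kWh.
energy = training_energy_kwh(300.0, 24.0, num_devices=4)
cost = training_energy_cost(300.0, 24.0, price_per_kwh=0.15, num_devices=4)
print(f"Energy: {energy:.1f} kWh, cost: {cost:.2f}")
```

This estimate covers only the accelerators themselves. Host CPUs, memory, networking, and cooling add to the total, so it is best read as a lower bound.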

**Future Prospects**

The future of AI in power systems looks promising with the continued advancements in hardware accelerators. As the energy sector transitions toward more distributed and renewable sources, the demand for real-time data processing and decision-making will grow. GPUs and TPUs will play a critical role in enabling this transformation, providing the necessary computational power to handle complex AI models efficiently.

In conclusion, hardware accelerators like GPUs and TPUs are indispensable tools for enhancing the performance of AI models in power systems. Their ability to accelerate computation, reduce processing time, and support large-scale deployment makes them essential in the ongoing evolution of the energy sector. As AI continues to shape the future of power systems, leveraging these advanced hardware solutions will be key to unlocking their full potential.

Stay Ahead in Power Systems Innovation

From intelligent microgrids and energy storage integration to dynamic load balancing and DC-DC converter optimization, the power supply systems domain is rapidly evolving to meet the demands of electrification, decarbonization, and energy resilience.

In such a high-stakes environment, how can your R&D and patent strategy keep up?

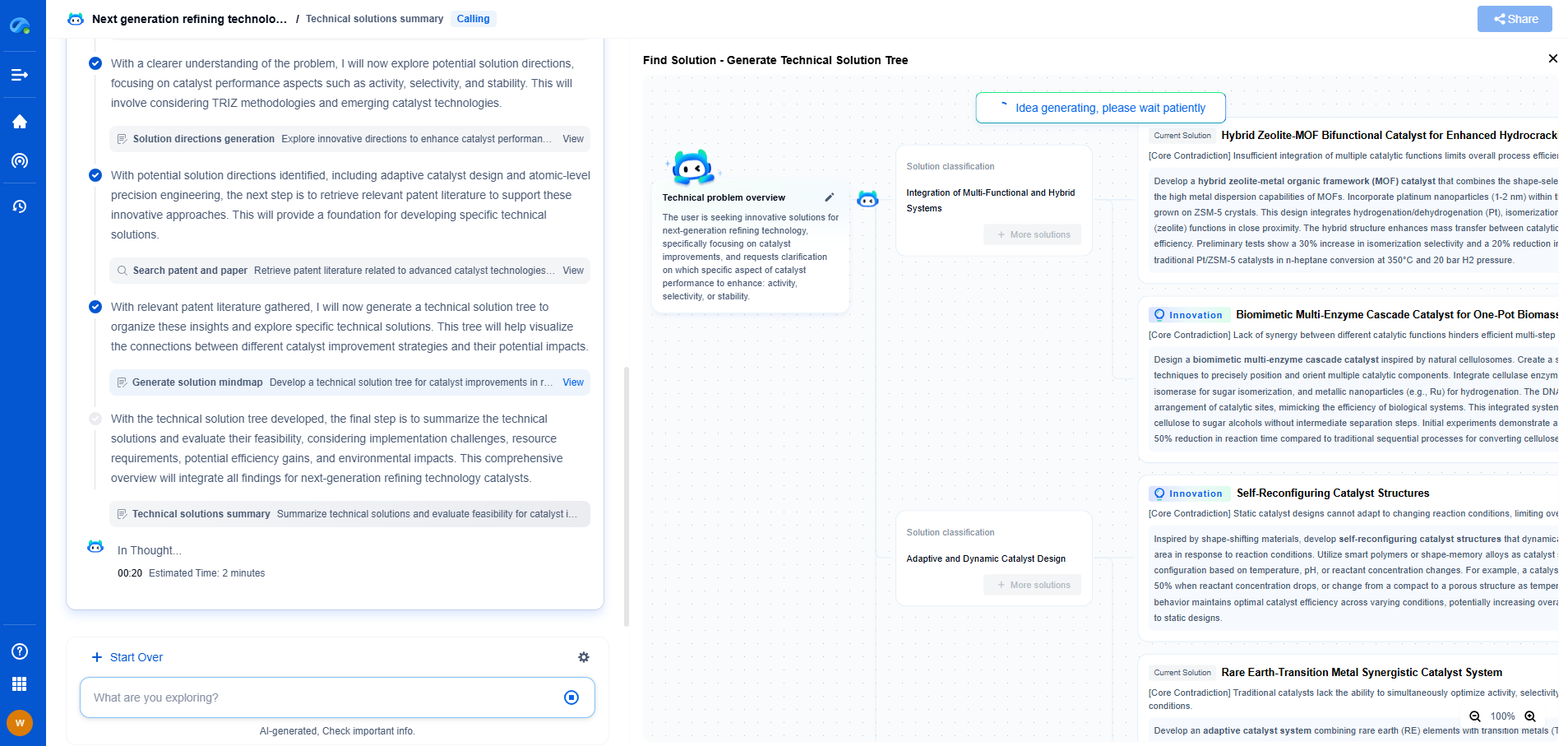

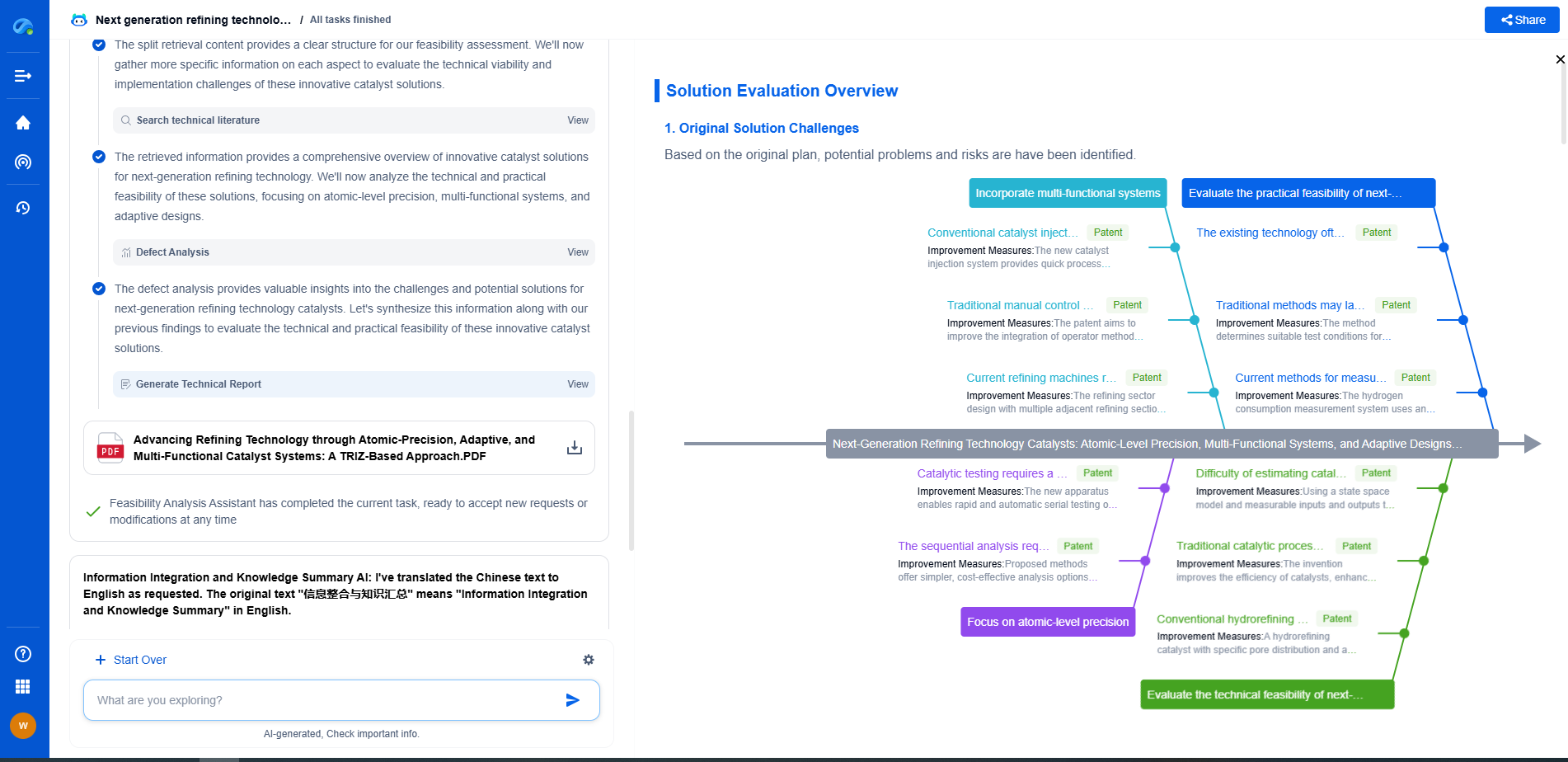

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

👉 Experience how Patsnap Eureka can supercharge your workflow in power systems R&D and IP analysis. Request a live demo or start your trial today.