How Multimodal Sensor Fusion Enhances Application Contexts in Vision Systems

JUL 10, 2025

Multimodal sensor fusion is the process of combining data from different types of sensors to build a more complete understanding of the observed environment than any single sensor can provide. In computer vision, this approach improves the accuracy and robustness of vision systems and extends their use to complex real-world scenarios where one modality alone falls short.

The Fundamentals of Multimodal Sensor Fusion

To appreciate the impact of multimodal sensor fusion on vision systems, it is essential to understand its foundational principles. At its core, sensor fusion leverages diverse modalities such as cameras, LiDAR, radar, and infrared sensors, each offering unique advantages. Cameras, for instance, provide high-resolution color information, while LiDAR is adept at capturing precise depth data. By fusing data from these different sources, vision systems can overcome the limitations of individual sensors, achieving richer and more reliable interpretations of the environment.
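As a rough illustration of how two sensors can compensate for each other, the sketch below fuses a camera-derived depth estimate with a LiDAR depth estimate using inverse-variance weighting. This is one common textbook technique, not a method named by the article, and it assumes the two measurements are independent with known variances. The function name `fuse_estimates` and all numeric values are hypothetical.

```python
def fuse_estimates(measurements):
    """Fuse independent (value, variance) estimates by inverse-variance weighting."""
    if not measurements:
        raise ValueError("need at least one measurement")
    # A less noisy sensor (smaller variance) gets a proportionally larger weight.
    weights = [1.0 / variance for _, variance in measurements]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, measurements)) / total_weight
    # The fused variance is never larger than the smallest input variance.
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical readings for the same object: (depth in metres, variance in m^2).
camera_depth = (10.4, 0.25)  # assumed: stereo depth, noisier at this range
lidar_depth = (10.0, 0.01)   # assumed: LiDAR range, much tighter

fused_depth, fused_variance = fuse_estimates([camera_depth, lidar_depth])
print(f"fused depth: {fused_depth:.3f} m, variance: {fused_variance:.4f} m^2")
```

The fused depth lands close to the LiDAR value because LiDAR is assumed to be far more precise here, while the camera reading still contributes a small correction.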

Enhancing Perception in Autonomous Vehicles

One of the most prominent applications of multimodal sensor fusion is in autonomous vehicles, which rely on accurate perception to navigate safely and efficiently. By integrating data from cameras, LiDAR, radar, and ultrasonic sensors, autonomous systems can detect and classify objects across varied weather and lighting conditions. The fused view gives the vehicle a more accurate understanding of its surroundings, which improves safety, and it supplies better inputs for predicting the behavior of other road users, which supports smoother navigation.
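A typical first step when combining LiDAR with a camera is to project 3D LiDAR points into the image so that depth can be attached to pixels. The sketch below shows that projection for a single point under a simple pinhole model. It assumes no lens distortion, a known rotation and translation from the LiDAR frame to the camera frame, and a camera frame whose z axis points forward. The function name `project_lidar_point` and the calibration values are hypothetical.

```python
def project_lidar_point(point_lidar, rotation, translation, fx, fy, cx, cy):
    """Project one 3D LiDAR point into pixel coordinates of a pinhole camera."""
    # Rigid transform into the camera frame: p_cam = R * p_lidar + t.
    x_cam, y_cam, z_cam = (
        sum(rotation[row][col] * point_lidar[col] for col in range(3)) + translation[row]
        for row in range(3)
    )
    # Points at or behind the image plane cannot appear in the image.
    if z_cam <= 0:
        return None
    # Perspective division followed by the intrinsic scaling and offset.
    u = fx * x_cam / z_cam + cx
    v = fy * y_cam / z_cam + cy
    return (u, v)


# Hypothetical calibration: sensors perfectly aligned, 1280x720 image.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
no_offset = [0.0, 0.0, 0.0]

pixel = project_lidar_point(
    (1.0, 0.5, 10.0), identity, no_offset, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0
)
print(pixel)
```

In a real system the rotation, translation, and intrinsics would come from a calibration procedure, and the returned pixel would still need a bounds check against the image size.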

Augmenting Capabilities in Robotics

In robotics, multimodal sensor fusion significantly augments the capabilities of robotic vision systems. Robots deployed in industrial settings, for example, can benefit from combining visual data with tactile and auditory information to perform complex tasks with precision. This integration allows robots to adapt to dynamic environments, improving their ability to interact with objects and humans. Moreover, it empowers robots to learn from diverse sensory inputs, enabling more intelligent and autonomous decision-making.

Improving Healthcare and Medical Imaging

The healthcare sector has also witnessed remarkable advancements through the use of multimodal sensor fusion. In medical imaging, combining data from different imaging modalities such as MRI, CT, and PET scans offers a more holistic view of the human body. This comprehensive imaging capability aids in more accurate diagnosis and treatment planning. Furthermore, wearable health monitoring devices equipped with multiple sensors can track vital signs and environmental factors, providing a continuous and integrated view of a patient's health status.

Boosting Performance in Surveillance Systems

Surveillance systems have also benefited from multimodal sensor fusion, particularly in security and monitoring applications. By fusing data from video cameras, thermal sensors, and acoustic sensors, these systems can detect anomalies and identify potential threats with greater accuracy. This is especially valuable when lighting is poor or when the objects or individuals of interest are not visible to the naked eye. The resulting situational awareness supports more effective security measures and quicker response times.
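One simple way to combine per-sensor detections is score-level (late) fusion. The sketch below uses a noisy-OR rule, which treats each sensor's detection probability as independent evidence. That independence assumption is a simplification, and the article does not prescribe this rule. The function name `noisy_or` and the scores are hypothetical.

```python
def noisy_or(probabilities):
    """Combine independent detection probabilities with a noisy-OR rule."""
    miss_probability = 1.0
    for p in probabilities:
        if not 0.0 <= p <= 1.0:
            raise ValueError("each probability must lie in [0, 1]")
        # Probability that every sensor so far has missed the event.
        miss_probability *= 1.0 - p
    return 1.0 - miss_probability


# Hypothetical night-time scene: the video detector is unsure,
# while the thermal and acoustic detectors are more confident.
scores = {"video": 0.2, "thermal": 0.7, "acoustic": 0.5}
fused_score = noisy_or(scores.values())
print(f"fused detection score: {fused_score:.2f}")
```

The fused score exceeds every individual score, which reflects how weak evidence from several sensors can add up to a confident detection. Correlated sensors would make this estimate overconfident.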

Challenges and Future Directions

Despite its benefits, multimodal sensor fusion is not without challenges. Sensors run at different rates and sit at different positions, so their data must be synchronized in time and calibrated into a common coordinate frame before it can be combined, and processing several data streams at once raises computational demands. Additionally, developing algorithms that effectively merge disparate data types while maintaining real-time performance remains a significant hurdle.
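To make the synchronization problem concrete, the sketch below pairs each camera frame with the nearest LiDAR sweep by timestamp and drops frames with no sweep close enough. It assumes both streams share a common clock and that the LiDAR timestamps are sorted. The function name `match_nearest`, the frame rates, and the tolerance are hypothetical.

```python
from bisect import bisect_left


def match_nearest(camera_stamps, lidar_stamps, max_gap):
    """Pair each camera timestamp with the nearest LiDAR timestamp within max_gap seconds."""
    pairs = []
    for stamp in camera_stamps:
        # Index of the first LiDAR timestamp that is >= the camera timestamp.
        index = bisect_left(lidar_stamps, stamp)
        # The nearest neighbour is either just before or at that index.
        candidates = lidar_stamps[max(index - 1, 0): index + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda other: abs(other - stamp))
        if abs(nearest - stamp) <= max_gap:
            pairs.append((stamp, nearest))
    return pairs


# Hypothetical streams in seconds: a ~30 Hz camera and a 10 Hz LiDAR.
camera_stamps = [0.000, 0.033, 0.066, 0.100]
lidar_stamps = [0.0, 0.1]

matched = match_nearest(camera_stamps, lidar_stamps, max_gap=0.01)
print(matched)
```

Only the frames that coincide with a LiDAR sweep are kept here. A system that cannot afford to discard frames would need to interpolate or motion-compensate the slower stream instead.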

Looking ahead, ongoing research and technological advancements are expected to address these challenges. The future of multimodal sensor fusion lies in the development of more sophisticated algorithms and the integration of machine learning techniques to improve data interpretation. As these systems continue to evolve, they will unlock new possibilities across industries, pushing the boundaries of what vision systems can achieve.

Conclusion

Multimodal sensor fusion is broadening both the functionality and the application scope of vision systems. By combining the strengths of different sensors, these systems gain enhanced perception, improved decision-making, and greater adaptability in complex environments. As the technology advances, sensor fusion is likely to play a central role in vision systems across diverse domains, from autonomous vehicles and robotics to healthcare and surveillance.

Image processing technologies—from semantic segmentation to photorealistic rendering—are driving the next generation of intelligent systems. For IP analysts and innovation scouts, identifying novel ideas before they go mainstream is essential.

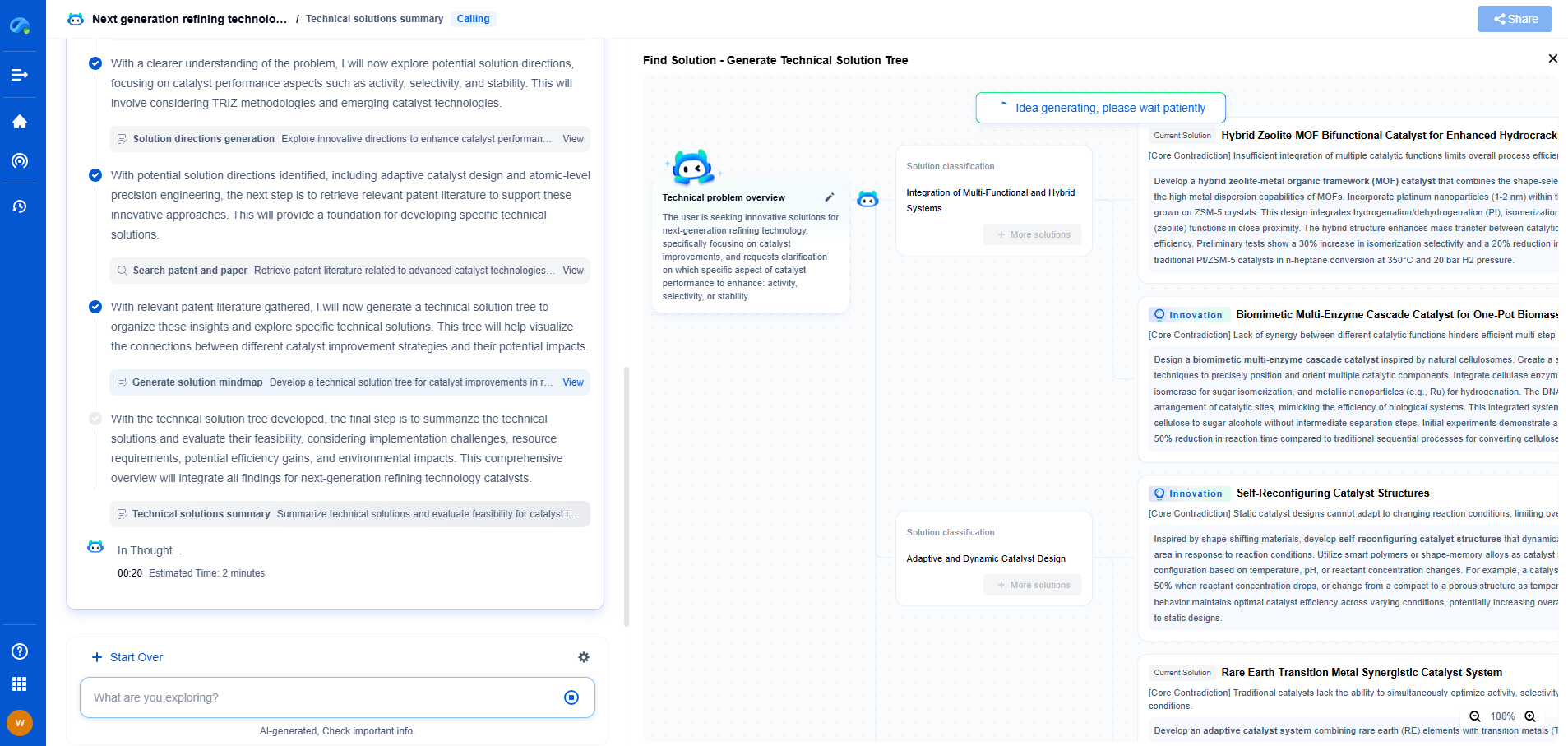

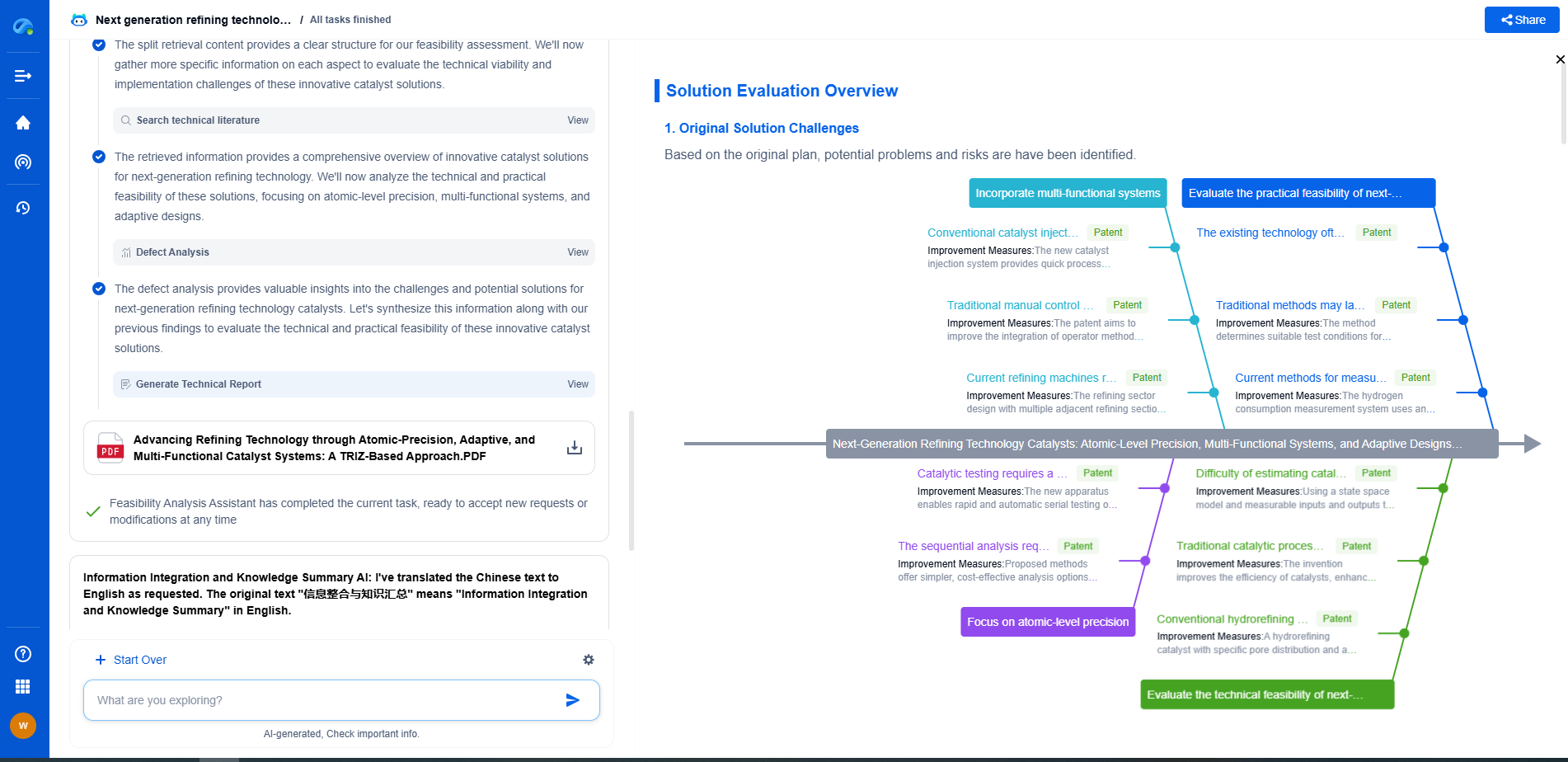

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

🎯 Try Patsnap Eureka now to explore the next wave of breakthroughs in image processing, before anyone else does.