IBR Methods: Light Fields vs. Multiplane Images vs. Depth-Based Synthesis

JUL 10, 2025

Image-Based Rendering (IBR) encompasses a collection of computer graphics techniques that synthesize novel views of a scene from a set of captured images. Rather than building detailed geometric models and simulating materials and lighting, IBR reuses existing imagery to create new views. Three widely used families of IBR methods (Light Fields, Multiplane Images, and Depth-Based Synthesis) each offer distinct benefits and challenges, and understanding their trade-offs is essential for building efficient, realistic rendering systems.

Light Fields: High Fidelity Through Rich Data

A light field describes the light traveling in every direction through every point in a scene. In practice, it is captured as a dense array of images taken from many closely spaced viewpoints, so that new views within the captured region can be rendered by resampling rays instead of reconstructing geometry. The primary advantage of Light Fields is very high rendering quality, including view-dependent effects such as reflections and refractions. This follows from sampling the 4D light field, i.e., radiance as a function of both ray position and ray direction (in free space the 5D plenoptic function reduces to 4D because radiance is constant along a ray), which records both the intensity and the directionality of light.
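
To make the ray-resampling idea concrete, here is a minimal Python sketch of rendering from a two-plane light field. It assumes a toy grayscale light field stored as nested lists `lf[u][v][s][t]`, where `(u, v)` indexes cameras on a regular grid and every image has been rectified so that pixel `(s, t)` in each camera looks at the same point on a shared focal plane. The function names are hypothetical. A virtual camera between captured positions is rendered by bilinearly blending the four nearest cameras; practical renderers also interpolate in `(s, t)` and apply depth correction, which this sketch omits.

```python
from typing import List

# Toy light field: lf[u][v][s][t]. (u, v) = camera position on the camera plane,
# (s, t) = pixel on the shared (rectified) focal plane. Grayscale floats.
LightField = List[List[List[List[float]]]]


def sample_ray(lf: LightField, u: float, v: float, s: int, t: int) -> float:
    """Radiance along ray (u, v, s, t), bilinearly interpolated across the
    four nearest captured cameras. (s, t) is an integer pixel index."""
    nu, nv = len(lf), len(lf[0])
    # Clamp to the captured camera grid: rays outside it were never sampled.
    u = min(max(u, 0.0), nu - 1.0)
    v = min(max(v, 0.0), nv - 1.0)
    u0, v0 = int(u), int(v)
    u1, v1 = min(u0 + 1, nu - 1), min(v0 + 1, nv - 1)
    du, dv = u - u0, v - v0
    return ((1 - du) * (1 - dv) * lf[u0][v0][s][t]
            + du * (1 - dv) * lf[u1][v0][s][t]
            + (1 - du) * dv * lf[u0][v1][s][t]
            + du * dv * lf[u1][v1][s][t])


def render_view(lf: LightField, u: float, v: float) -> List[List[float]]:
    """Render a virtual camera at (u, v) by sampling one ray per pixel."""
    ns, nt = len(lf[0][0]), len(lf[0][0][0])
    return [[sample_ray(lf, u, v, s, t) for t in range(nt)] for s in range(ns)]


# 2 x 2 camera grid, each camera holding a single 1 x 1 image.
lf = [[[[0.0]], [[1.0]]],
      [[[2.0]], [[3.0]]]]
print(render_view(lf, 0.5, 0.5))  # [[1.5]]
```

A virtual camera at the center of the grid returns the average of the four captured samples; moving it toward a corner weights that camera more heavily.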

However, the richness of the data also creates significant challenges. Storage requirements are substantial, and capturing a full Light Field is logistically complex, requiring specialized equipment such as camera arrays or plenoptic cameras along with careful calibration. Real-time rendering can also be computationally intensive and demand high-end hardware. Despite these challenges, Light Fields are often chosen for applications where image quality is paramount, such as virtual reality and cinematic visual effects.
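
A quick back-of-the-envelope calculation shows why storage is a concern. The configuration below (a 17 x 17 camera grid capturing uncompressed 8-bit RGB frames at 1920 x 1080) is a hypothetical example chosen for illustration, not a specific capture rig, and `light_field_bytes` is a hypothetical helper.

```python
def light_field_bytes(cams_u: int, cams_v: int, width: int, height: int,
                      channels: int = 3, bytes_per_channel: int = 1,
                      frames: int = 1) -> int:
    """Uncompressed size in bytes of a camera-grid light field."""
    return cams_u * cams_v * width * height * channels * bytes_per_channel * frames


# Hypothetical rig: 17 x 17 cameras, 1080p, 8-bit RGB.
single_frame = light_field_bytes(17, 17, 1920, 1080)
one_second = light_field_bytes(17, 17, 1920, 1080, frames=30)
print(f"{single_frame:,} bytes per frame")         # 1,797,811,200 bytes per frame
print(f"{one_second:,} bytes per second at 30 fps")  # 53,934,336,000 bytes per second at 30 fps
```

That is roughly 1.8 GB per frame and about 54 GB per second of video before compression, 289 times the size of a single 1080p image, which is why practical light field systems lean heavily on compression.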

Multiplane Images: A Balance of Quality and Efficiency

Multiplane Images (MPIs) are another IBR approach, one that strives to balance rendering quality with computational efficiency. An MPI represents a scene as a stack of fronto-parallel RGBA layers placed at fixed depths relative to a reference camera. To render a new view, each layer is warped into the target camera and the layers are alpha-composited from back to front, which produces a convincing 3D effect with far less data and computation than a dense Light Field.
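
The back-to-front blending step can be sketched with the standard "over" operator. This minimal Python version assumes the RGBA layers have already been warped into the target view (the per-plane homography warp is omitted), that colors are straight rather than premultiplied, and that layers are ordered from farthest to nearest; `composite_mpi` is a hypothetical name.

```python
from typing import List, Tuple

RGBA = Tuple[float, float, float, float]  # straight color + alpha, all in [0, 1]
RGB = Tuple[float, float, float]
Plane = List[List[RGBA]]                   # one H x W layer


def composite_mpi(planes: List[Plane]) -> List[List[RGB]]:
    """Alpha-composite MPI layers ordered back (farthest) to front (nearest).
    All layers are assumed to be already warped to the target view."""
    h, w = len(planes[0]), len(planes[0][0])
    out = [[(0.0, 0.0, 0.0) for _ in range(w)] for _ in range(h)]
    for plane in planes:  # back to front
        for y in range(h):
            for x in range(w):
                r, g, b, a = plane[y][x]
                pr, pg, pb = out[y][x]
                # "over": new layer covers what is behind it by its alpha.
                out[y][x] = (r * a + pr * (1 - a),
                             g * a + pg * (1 - a),
                             b * a + pb * (1 - a))
    return out


back = [[(1.0, 0.0, 0.0, 1.0)]]   # opaque red, far plane
front = [[(0.0, 0.0, 1.0, 0.5)]]  # half-transparent blue, near plane
print(composite_mpi([back, front]))  # [[(0.5, 0.0, 0.5)]]
```

Because each layer only needs a 2D warp and a blend, this loop maps directly onto GPU texture sampling and fixed-function blending, which is where much of the MPI efficiency comes from.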

This technique is particularly well-suited to scenes whose depth range is limited or can be approximated with a small number of planes, and to viewpoints that stay reasonably close to the reference camera. Layered, multiplane representations appear in applications such as animated films and games, where resource constraints force a compromise between visual fidelity and performance. The main limitation is that a fixed set of planes may struggle with scenes that have complex geometry or require precise depth, because surfaces lying between planes must be approximated by the nearest layers.
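
How well a small number of planes approximates a scene depends on where they are placed. A common choice in the MPI literature is to space planes uniformly in inverse depth (disparity), which puts more planes near the camera where parallax is largest. The sketch below, with hypothetical function names and arbitrary depth units, computes such a spacing and shows the depth quantization a surface suffers when it is snapped to its nearest plane.

```python
from typing import List


def plane_depths(near: float, far: float, n: int) -> List[float]:
    """n plane depths spaced uniformly in disparity (1 / depth), near to far."""
    inv_near, inv_far = 1.0 / near, 1.0 / far
    return [1.0 / (inv_near + (inv_far - inv_near) * i / (n - 1)) for i in range(n)]


def nearest_plane(depths: List[float], z: float) -> float:
    """Plane a surface at depth z would be represented by (closest in disparity)."""
    return min(depths, key=lambda d: abs(1.0 / d - 1.0 / z))


depths = plane_depths(1.0, 100.0, 4)
print([round(d, 2) for d in depths])           # [1.0, 1.49, 2.94, 100.0]
print(round(nearest_plane(depths, 2.0), 2))    # 2.94
```

With only four planes between 1 and 100 units, a surface at depth 2.0 is represented by the plane at roughly 2.94; adding planes shrinks this error at the cost of memory and compositing work.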

Depth-Based Synthesis: Flexibility and Adaptability

Depth-Based Synthesis, often called depth-image-based rendering (DIBR), combines 2D images with depth maps or 3D models to generate new views. Each source pixel is lifted into 3D using its depth and then reprojected into the desired viewpoint, which gives the approach a high degree of flexibility across diverse scenes. With learning-based components, such as depth estimation networks and inpainting of newly revealed regions, Depth-Based Synthesis can produce high-quality images even from sparse inputs.
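
The core warping step can be illustrated in the simplest setting: a target camera that is a pure horizontal translation of the source camera, with identical intrinsics. Under that assumption a pixel at depth `Z` shifts by a disparity of `f * B / Z` pixels, where `f` is the focal length in pixels and `B` the baseline. The Python sketch below (hypothetical names, grayscale image as nested lists) forward-warps each pixel, resolves collisions with a z-buffer so nearer surfaces win, and leaves `None` where no source pixel lands. General camera motion would instead require full 3D unprojection and reprojection.

```python
from typing import List, Optional


def forward_warp(image: List[List[float]], depth: List[List[float]],
                 f: float, baseline: float) -> List[List[Optional[float]]]:
    """Warp a view to a camera shifted right by `baseline` (rectified setup).
    Nearer surfaces overwrite farther ones; unfilled pixels stay None (holes)."""
    h, w = len(image), len(image[0])
    out: List[List[Optional[float]]] = [[None] * w for _ in range(h)]
    zbuf = [[float("inf")] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            z = depth[y][x]
            # Camera moves right, so the point moves left by its disparity.
            xt = round(x - f * baseline / z)
            if 0 <= xt < w and z < zbuf[y][xt]:
                zbuf[y][xt] = z
                out[y][xt] = image[y][x]
    return out


image = [[10, 20, 30, 40]]
depth = [[10.0, 10.0, 1.0, 10.0]]  # pixel with value 30 is much nearer
print(forward_warp(image, depth, f=1.0, baseline=1.0))  # [[10, 30, None, 40]]
```

Here the near pixel (value 30) moves over and occludes its farther neighbor, and the position it vacated becomes a hole: the occlusion and disocclusion behavior that depth-based methods must handle.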

The flexibility of this approach makes it particularly appealing for applications where scene geometry can vary significantly or where user interaction is a priority, such as augmented reality and interactive media. However, output quality depends heavily on the accuracy of the depth data. Inaccurate depth maps move pixels to the wrong positions, causing visual artifacts such as distortions or incorrect occlusions, and even with perfect depth, regions hidden in the source view leave holes that must be filled.
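
Because disparity is inversely proportional to depth, the same relative depth error costs more pixels for nearby surfaces. The small worked example below assumes a rectified horizontal camera shift, where disparity is `f * B / Z`, with a hypothetical focal length of 1000 px and a 0.1 m baseline; `warp_error_px` is a hypothetical helper.

```python
def warp_error_px(f_px: float, baseline: float, z_true: float, z_est: float) -> float:
    """Pixel misplacement caused by using z_est instead of z_true,
    assuming disparity = f_px * baseline / z (rectified horizontal shift)."""
    return abs(f_px * baseline / z_true - f_px * baseline / z_est)


# Same 10% depth overestimate, near vs. far surface (hypothetical values).
print(f"{warp_error_px(1000, 0.1, 2.0, 2.2):.2f} px")    # 4.55 px
print(f"{warp_error_px(1000, 0.1, 20.0, 22.0):.2f} px")  # 0.45 px
```

A 10% error on a surface 2 m away misplaces it by several pixels, while the same relative error at 20 m stays below one pixel, so depth quality matters most for foreground objects.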

Comparative Analysis and Applications

When comparing these methods, each has a niche where it excels. Light Fields offer the highest fidelity, particularly for view-dependent effects within the captured region, at the cost of heavy data and computational requirements. Multiplane Images provide a practical middle ground, offering good visual quality with moderate resource usage. Depth-Based Synthesis stands out for its flexibility and its ability to adapt to scenes of differing complexity.

In practical applications, the choice of method depends largely on the specific needs of the project. For instance, in virtual reality, where immersion and realism are key, Light Fields might be preferred despite their resource demands. In contrast, for video games or mobile applications, where performance budgets are tight, Multiplane Images or Depth-Based Synthesis might be more appropriate choices.

Conclusion

The landscape of Image-Based Rendering is vast and continually evolving. Each method—whether it be Light Fields, Multiplane Images, or Depth-Based Synthesis—offers unique advantages, and the right choice depends on the specific demands and constraints of the application at hand. As technology advances, these techniques will likely see further refinements, offering even more powerful tools for creating rich, immersive visual experiences. Understanding these methodologies not only aids in selecting the right approach for a given task but also in pushing the boundaries of what is possible in the realm of digital imagery.

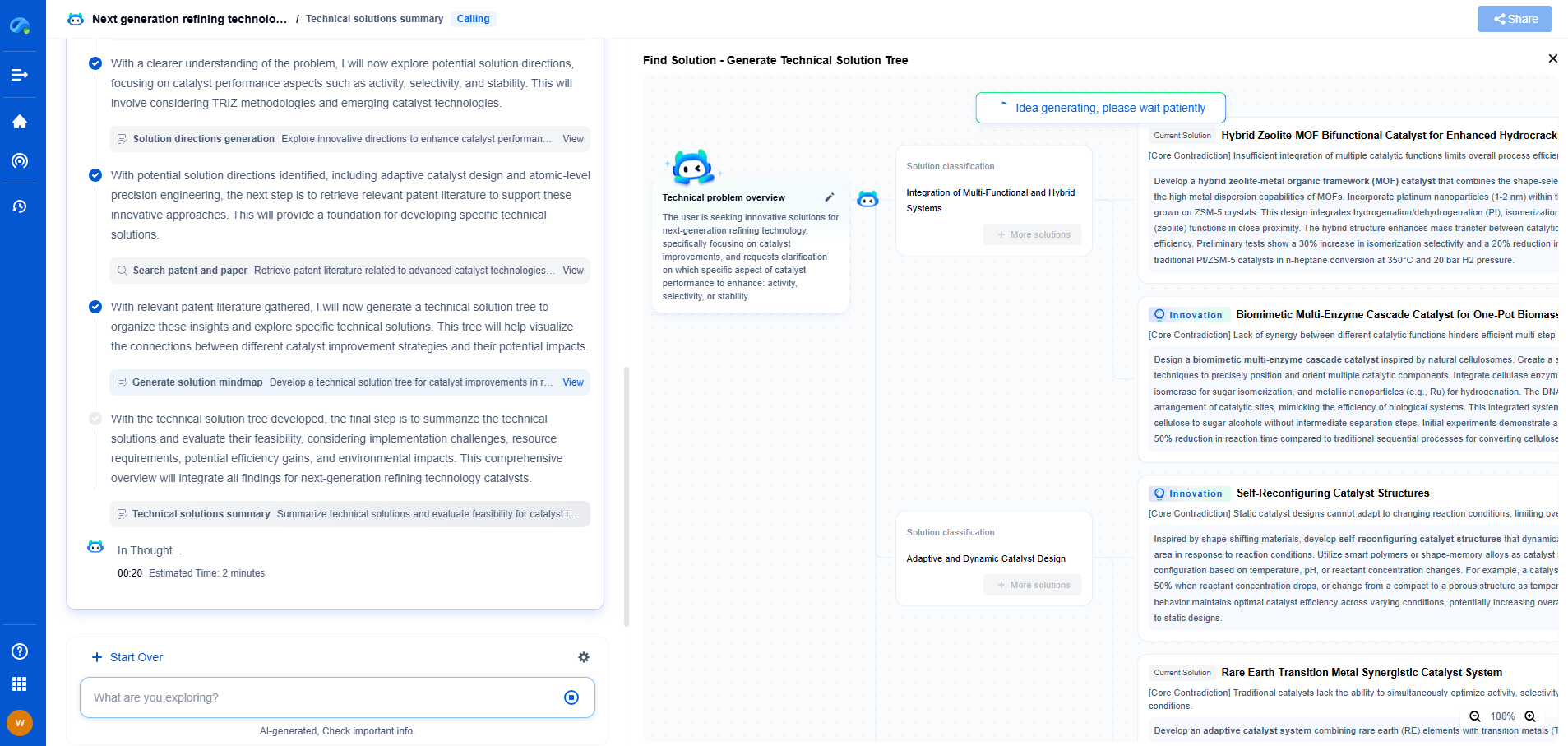

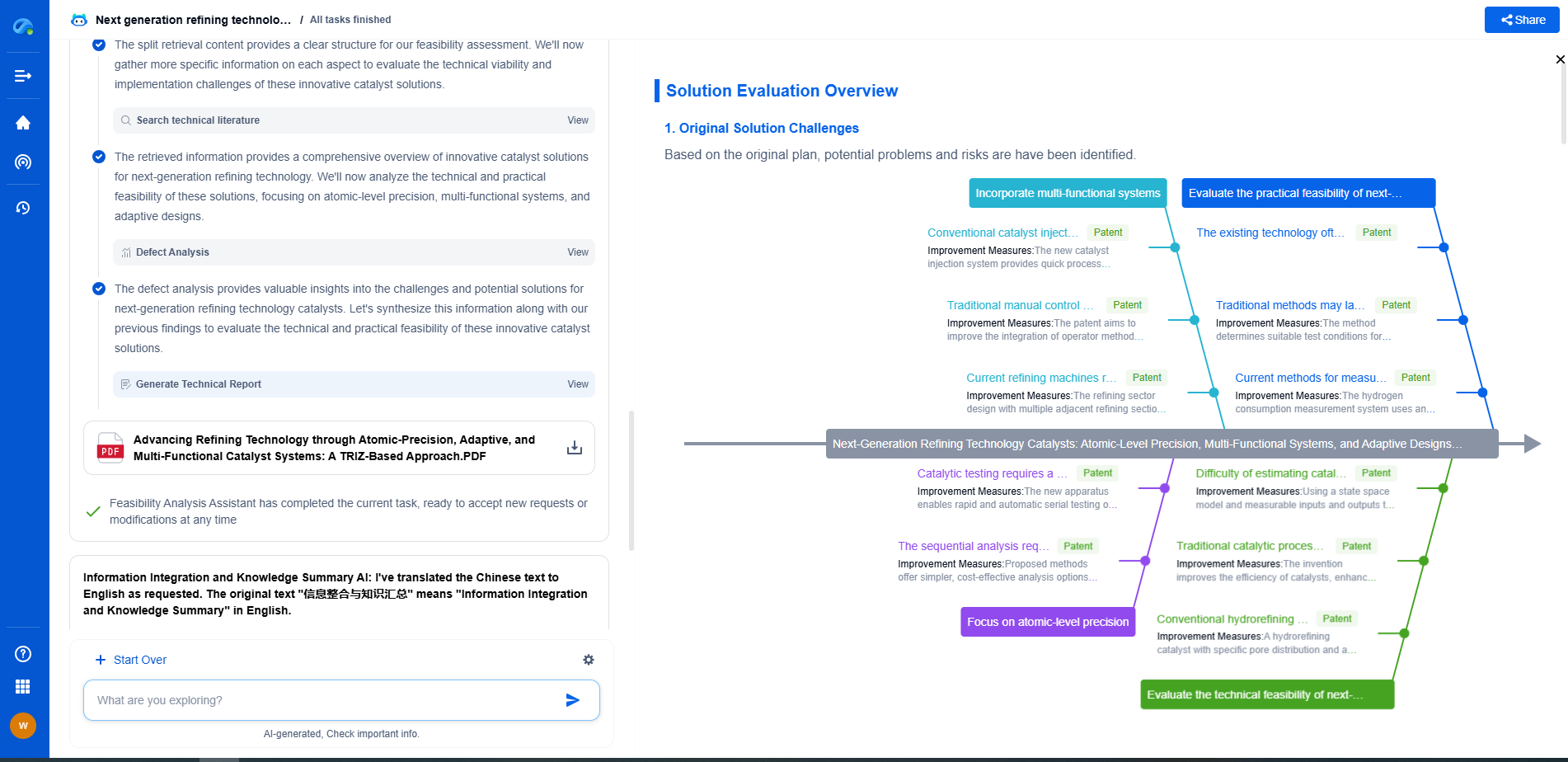