Neuromorphic Vision Sensors: Event-Based Imaging Beyond Frames

JUL 8, 2025

Imaging technology is shifting toward faster, more efficient ways of capturing and processing visual data. Traditional frame-based sensors, which capture full images at fixed intervals, have long dominated the field. However, they have inherent limitations in dynamic, high-speed scenes: motion that happens between frames is missed or blurred, and pixels that have not changed are read out again in every frame. Neuromorphic vision sensors take a different approach, event-based imaging, which changes how visual information is captured and processed.

Understanding Event-Based Imaging

Unlike conventional cameras, which capture full frames at regular intervals, event-based sensors record only the changes in a scene. This loosely resembles how the human eye works, responding to motion and alterations in the environment rather than to a constant stream of redundant information. Each pixel in a neuromorphic sensor operates independently: when the brightness it observes changes by more than a set threshold, it emits an event that typically carries the pixel location, a timestamp, and the direction of the change (brighter or darker). The result is a sparse stream of events rather than a sequence of images.
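To make the per-pixel change detection described above more concrete, here is a minimal Python sketch. It is a simulation under stated assumptions, not how any particular sensor is implemented: real event pixels fire asynchronously in hardware, whereas this example compares two ordinary frames. The function name `generate_events`, the threshold value, and the `(x, y, t, polarity)` tuple layout are illustrative choices.

```python
import numpy as np

def generate_events(prev_frame, next_frame, timestamp, threshold=0.2, eps=1e-3):
    """Return (x, y, t, polarity) tuples for pixels whose log-brightness
    changed by at least `threshold` between two frames (illustrative only)."""
    # Compare log intensities; eps avoids log(0) for fully dark pixels.
    delta = np.log(next_frame + eps) - np.log(prev_frame + eps)
    ys, xs = np.nonzero(np.abs(delta) >= threshold)
    # Polarity is +1 for a brightness increase and -1 for a decrease.
    return [(int(x), int(y), timestamp, 1 if delta[y, x] > 0 else -1)
            for y, x in zip(ys, xs)]

# A 4x4 scene in which one pixel brightens and another darkens.
prev_frame = np.full((4, 4), 0.5)
next_frame = prev_frame.copy()
next_frame[1, 2] = 1.0   # brighter: positive event at x=2, y=1
next_frame[3, 0] = 0.1   # darker: negative event at x=0, y=3

events = generate_events(prev_frame, next_frame, timestamp=0.001)
print(events)
```

Only two of the sixteen pixels produce output here; the fourteen unchanged pixels contribute nothing to the event stream.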

The Advantages of Event-Based Sensors

The most compelling advantage of event-based sensors is their combination of high temporal resolution and low latency. Because only changes are recorded, there are no full frames to read out and process, and the volume of data drops sharply whenever most of the scene is static. This shortens processing time, which makes these sensors well suited to applications that require quick reactions, such as robotics, autonomous vehicles, and security systems.
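The data reduction can be illustrated with a rough back-of-the-envelope simulation. The sketch below assumes a synthetic 64x64 scene in which a small bright square moves one pixel per step across a dark background, and it treats every changed pixel as exactly one event, which idealizes real sensor behavior. The scene dimensions and helper name `scene` are hypothetical.

```python
import numpy as np

HEIGHT, WIDTH, SQUARE, STEPS = 64, 64, 8, 10

def scene(step):
    """Dark background with a bright square shifted `step` pixels to the right."""
    frame = np.zeros((HEIGHT, WIDTH), dtype=np.uint8)
    frame[20:20 + SQUARE, step:step + SQUARE] = 255
    return frame

frame_values = 0   # pixel values a frame-based pipeline would read
event_count = 0    # changed pixels, i.e. events an idealized sensor would emit
prev = scene(0)
for step in range(1, STEPS + 1):
    cur = scene(step)
    frame_values += cur.size
    event_count += int(np.count_nonzero(cur != prev))
    prev = cur

print(frame_values, event_count)
```

In this toy scene only the leading and trailing edges of the square change at each step, so the event count is a small fraction of the pixel values read by the frame-based approach. Note that a single event usually carries more information than a single pixel value (coordinates, timestamp, and polarity), so the saving in bytes is smaller than the saving in element count, and a scene with heavy motion or texture narrows the gap further.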

Moreover, event-based sensors can be highly energy-efficient. Frame-based sensors read out and process every pixel in every frame, which consumes significant power at high resolutions and frame rates. Neuromorphic sensors, on the other hand, produce data only where the scene changes, which reduces energy consumption, extends battery life in portable devices, and lowers operating costs in larger systems.

Applications and Implications

The applications of event-based imaging are varied. In robotics, these sensors let machines react to motion in their surroundings with very little delay, allowing for smoother and more adaptive interactions with the world. Autonomous vehicles benefit from the same rapid response, which supports safer navigation through complex, fast-changing environments.

Event-based imaging is also gaining attention in augmented and virtual reality, where low-latency processing is crucial for creating immersive experiences. In surveillance and security, the ability to detect changes as soon as they occur can shorten threat detection and response times.

Challenges and Future Directions

Despite their promise, neuromorphic vision sensors face challenges that must be addressed before they can reach their potential. One significant hurdle is integrating event-based data with existing systems and algorithms, which are predominantly designed for frame-based inputs. An event stream is sparse and asynchronous, so it cannot be passed directly to software that expects a dense image. Developing new algorithms, and adapting existing ones to this data format, is crucial for widespread adoption.
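One common way to bridge the gap with frame-based algorithms is to accumulate events over a short time window into an image-like array. The sketch below shows the idea in Python, assuming events are `(x, y, t, polarity)` tuples; the function name `events_to_frame` and the tuple layout are illustrative assumptions rather than a standard API.

```python
import numpy as np

def events_to_frame(events, height, width):
    """Sum event polarities per pixel into a 2D array (illustrative only)."""
    frame = np.zeros((height, width), dtype=np.int32)
    for x, y, _t, polarity in events:
        # Rows are indexed by y and columns by x, as in a conventional image.
        frame[y, x] += polarity
    return frame

# Two positive events at the same pixel and one negative event elsewhere.
events = [(2, 1, 0.001, 1), (2, 1, 0.002, 1), (0, 3, 0.002, -1)]
frame = events_to_frame(events, height=4, width=4)
print(frame)
```

The resulting array can be handed to conventional image-processing code, but the conversion discards the fine timing of individual events within the window, which is part of why algorithms designed for event data remain an active area of work.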

Furthermore, while these sensors excel in dynamic environments, their performance in static or low-light conditions needs improvement. A stationary sensor viewing an unchanging scene generates few or no events, and noise tends to become more prominent in low light. Continued research and development are essential to make them adaptable and robust across diverse scenarios.

Looking forward, the outlook for neuromorphic vision sensors is promising. As the technology advances and the demand for efficient, real-time processing grows, event-based imaging is poised to play an important role in many applications, paving the way for smarter and more responsive machines.

Conclusion

Neuromorphic vision sensors and event-based imaging represent a significant shift in how we capture and process visual data. By borrowing the eye's strategy of responding to change rather than recording everything, these sensors allow machines to perceive fast-moving scenes with less data, lower latency, and lower power consumption. As the remaining challenges are overcome and the technology matures, it should open up new possibilities for interaction between humans and machines.

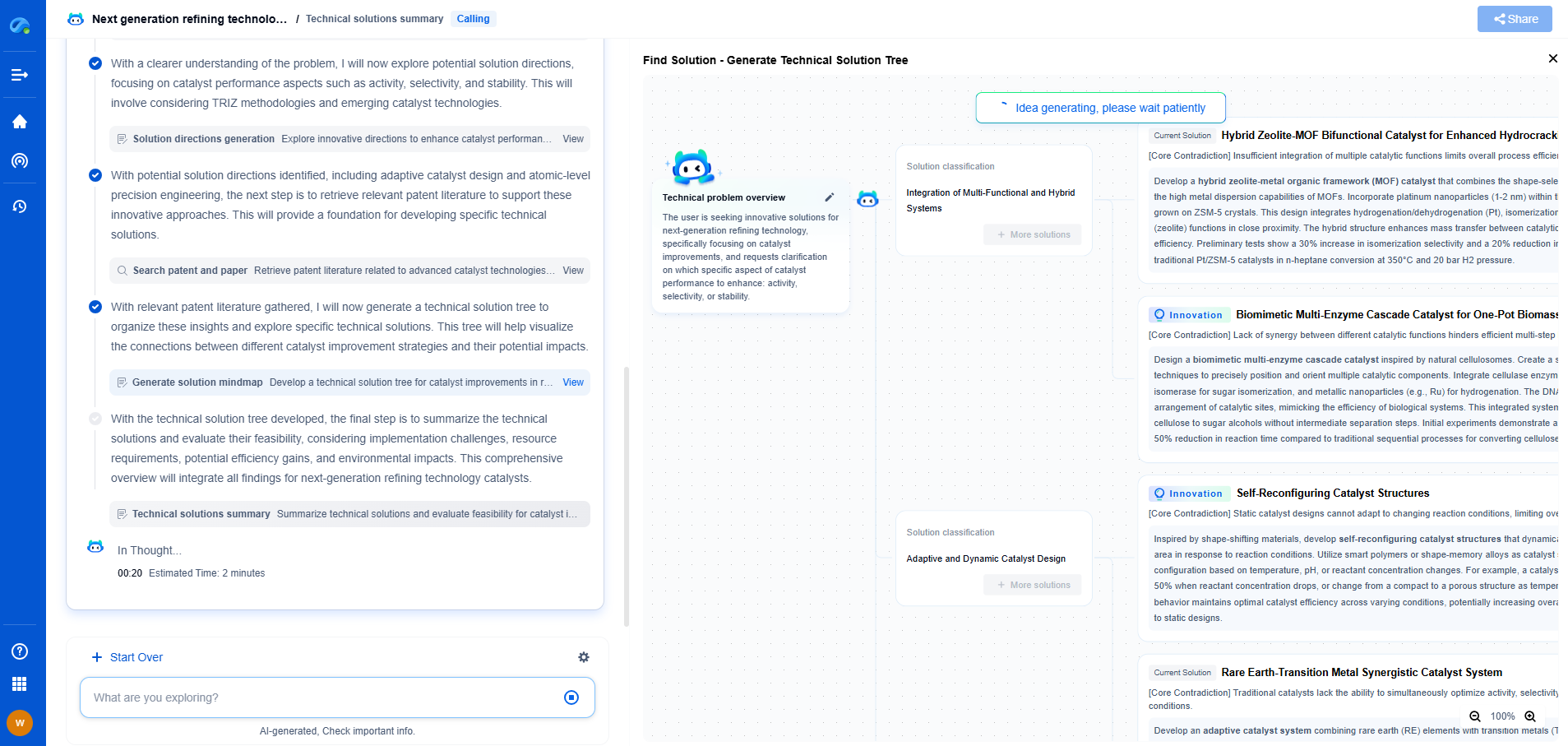

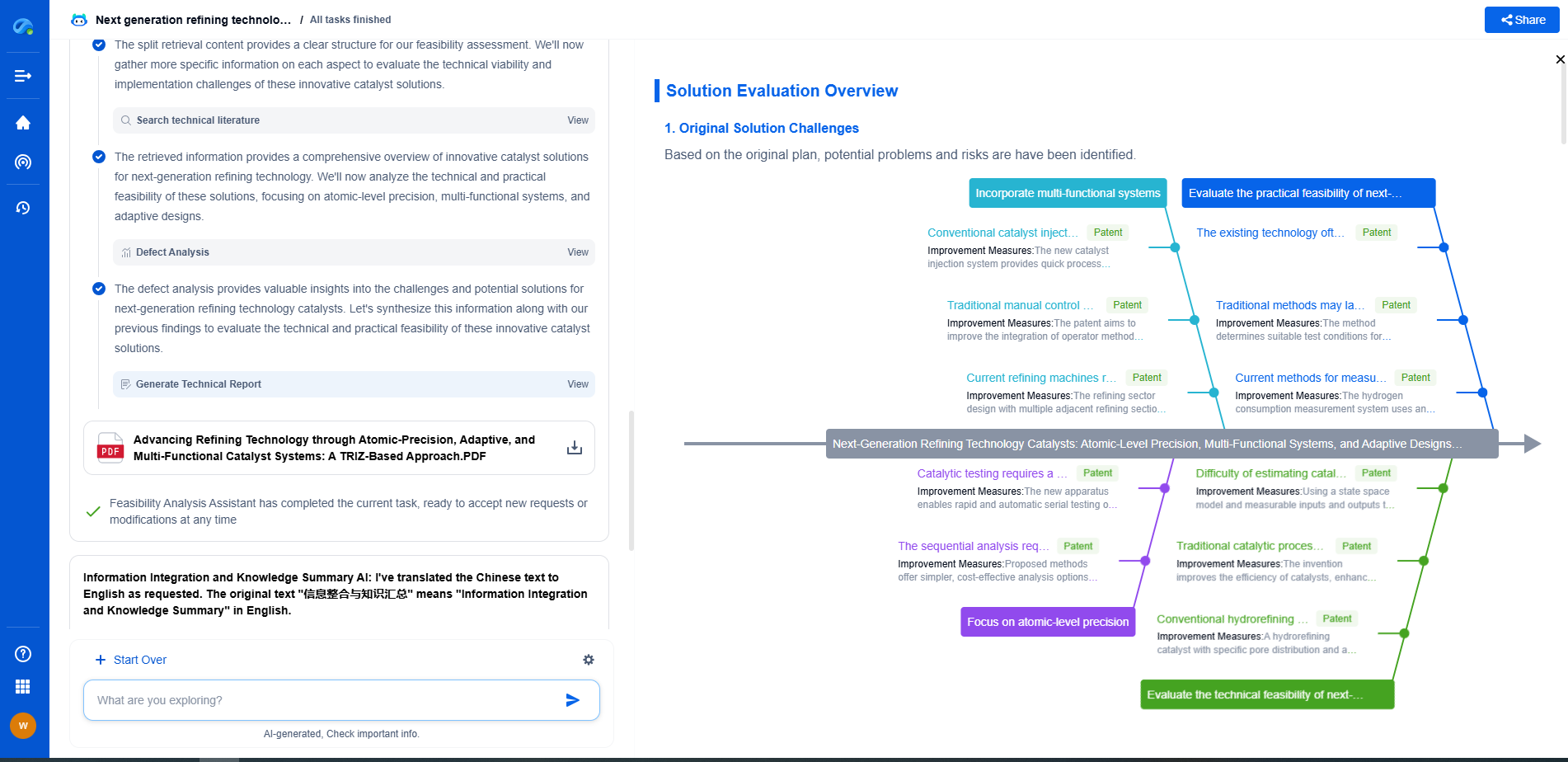
