Peak Detection False Positives: Tuning Algorithms for Noisy Signals

JUL 17, 2025

Peak detection is a critical task in numerous fields, from signal processing and data analysis to bioinformatics and financial forecasting. It involves identifying local maxima—peaks—in a dataset, which often signify important events or transitions. However, the presence of noise in the data can lead to false positives, where random fluctuations are mistaken for genuine peaks. This poses a significant challenge, as false positives can lead to incorrect conclusions and decisions.

Sources of Noise and Their Impacts

Noise in data can arise from various sources, such as measurement errors, environmental factors, or intrinsic variability in the system being studied. This noise introduces randomness into the dataset, complicating the task of distinguishing between true signal peaks and spurious noise-induced peaks.

For example, in biomedical signal processing, noise can result from electrical interference or patient movement, while in financial data, market volatility can create deceptive transient spikes. Understanding the characteristics and sources of noise is a critical step in developing strategies to mitigate its impact on peak detection.

Algorithm Selection: Choosing the Right Tool

Selecting the appropriate algorithm for peak detection is paramount. Many algorithms are available, each with its own strengths and weaknesses, and their suitability depends on the characteristics of the data. Common approaches include moving-average smoothing followed by a local-maximum search, wavelet-transform methods, and machine-learning-based detectors.

The moving average is simple and effective for smoothing data, but a window wide enough to suppress noise can also blur closely spaced or overlapping peaks into a single one. Wavelet transforms localize features in both time and scale, which makes them suitable for non-stationary signals. Machine learning approaches can adapt to complex patterns in the data but require substantial labeled training data and computational resources.
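As a rough illustration of the moving-average approach, the sketch below smooths a short, made-up signal and then looks for local maxima. The function names (`moving_average`, `local_maxima`), the sample values, and the window size are assumptions chosen for the example, not a recommended configuration.

```python
def moving_average(signal, window):
    """Centered moving average; the window shrinks near the edges."""
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed


def local_maxima(signal):
    """Indices of samples strictly greater than both immediate neighbours."""
    return [
        i
        for i in range(1, len(signal) - 1)
        if signal[i - 1] < signal[i] > signal[i + 1]
    ]


# Hypothetical data: one broad peak centred on index 5, with alternating
# jitter added so that the raw trace has several spurious local maxima.
noisy = [0.0, 1.6, 1.4, 3.6, 3.4, 5.0, 3.4, 3.6, 1.4, 1.6, 0.0]

raw_peaks = local_maxima(noisy)                               # [1, 3, 5, 7, 9]
smooth_peaks = local_maxima(moving_average(noisy, window=5))  # [5]

print("raw:", raw_peaks)
print("smoothed:", smooth_peaks)
```

On this toy trace, smoothing removes the four jitter-induced maxima and keeps the real one. The same averaging would also merge two genuine peaks that sit closer together than the window, which is the overlapping-peak weakness mentioned above.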

Tuning Algorithms for Better Performance

Once an algorithm is chosen, tuning its parameters is crucial for maximizing performance and minimizing false positives. For instance, the sensitivity of a peak detection algorithm can often be adjusted by changing threshold levels or window sizes. A lower threshold may detect more peaks but increase false positives, whereas a higher threshold might miss subtle but genuine peaks.
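The threshold trade-off can be seen on a small example. The sketch below assumes a hypothetical trace with two genuine peaks (one tall, one subtle) and two noise bumps, and applies a simple height threshold. `peaks_above` is an illustrative helper, not a library function.

```python
def peaks_above(signal, threshold):
    """Local maxima whose height is at least `threshold`."""
    return [
        i
        for i in range(1, len(signal) - 1)
        if signal[i - 1] < signal[i] > signal[i + 1] and signal[i] >= threshold
    ]


# Hypothetical trace: genuine peaks at index 3 (tall) and index 9 (subtle),
# noise bumps at indices 1 and 6.
signal = [0.0, 0.4, 0.1, 5.0, 0.2, 0.1, 0.5, 0.0, 0.3, 1.5, 0.2, 0.0]

results = {t: peaks_above(signal, t) for t in (0.3, 1.0, 2.0)}
for threshold, peaks in results.items():
    print(threshold, peaks)
# 0.3 -> [1, 3, 6, 9]  both genuine peaks, plus two false positives
# 1.0 -> [3, 9]        both genuine peaks, no false positives
# 2.0 -> [3]           the subtle genuine peak at index 9 is missed
```

Height is only one possible criterion. Library routines such as SciPy's `scipy.signal.find_peaks` also accept constraints like `prominence` and `distance`, which are often more robust than raw height on a drifting baseline.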

Cross-validation techniques can be employed to evaluate different parameter settings and select the one that best balances sensitivity and specificity. It is also beneficial to incorporate domain knowledge to guide parameter selection, allowing for better alignment with the specific characteristics of the signal being analyzed.
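One way to make this concrete is leave-one-out cross-validation over a handful of annotated signals: pick the threshold that scores best on all but one signal, then score it on the signal that was held out. The sketch below is a minimal version under stated assumptions: the three signals, their labels, the candidate thresholds, and the use of F1 as the selection score are all invented for illustration.

```python
def detect(signal, threshold):
    """Set of local-maximum indices with height >= threshold."""
    return {
        i
        for i in range(1, len(signal) - 1)
        if signal[i - 1] < signal[i] > signal[i + 1] and signal[i] >= threshold
    }


def f1_score(detected, truth):
    """Harmonic mean of precision and recall on exact index matches."""
    tp = len(detected & truth)
    if tp == 0:
        return 0.0
    precision = tp / len(detected)
    recall = tp / len(truth)
    return 2 * precision * recall / (precision + recall)


def best_threshold(examples, candidates):
    """Candidate with the highest mean F1 over (signal, truth) pairs."""
    def mean_f1(t):
        return sum(f1_score(detect(s, t), y) for s, y in examples) / len(examples)
    return max(candidates, key=mean_f1)


def leave_one_out(examples, candidates):
    """For each fold, tune on the other examples and score the held-out one."""
    scores = []
    for k, (signal, truth) in enumerate(examples):
        training = examples[:k] + examples[k + 1:]
        t = best_threshold(training, candidates)
        scores.append((t, f1_score(detect(signal, t), truth)))
    return scores


# Hypothetical annotated signals: (samples, indices of genuine peaks).
examples = [
    ([0.0, 0.4, 0.1, 3.0, 0.2, 0.5, 0.0], {3}),
    ([0.0, 2.5, 0.3, 0.6, 0.1, 2.8, 0.0], {1, 5}),
    ([0.2, 0.7, 0.1, 0.3, 2.2, 0.4, 0.0], {4}),
]
candidates = (0.3, 1.0, 2.6)

folds = leave_one_out(examples, candidates)
for chosen, held_out_f1 in folds:
    print(f"threshold={chosen}  held-out F1={held_out_f1:.2f}")
```

Scoring on the held-out signal rather than on the tuning signals is what guards against choosing a threshold that only fits the noise in one recording. With real data the folds would rarely agree this cleanly, and the spread of held-out scores is itself useful information.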

Data Preprocessing: A Crucial Step

Effective data preprocessing can significantly enhance peak detection performance by reducing noise levels before analysis. Techniques such as filtering, normalization, and detrending can be applied to enhance the signal-to-noise ratio. Filters, like the Gaussian or median filter, can smooth out noise while preserving crucial peak information.

Normalization rescales data to a common range so that a single threshold means the same thing across recordings, which reduces false positives caused by amplitude differences rather than real events. Detrending removes slow baseline drift that can mask real peaks or lift noise above the threshold. Preprocessing should be carefully tailored to the specific dataset to avoid over-smoothing, which can flatten or erase genuine peaks.
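The following sketch shows two of these preprocessing steps in plain Python: a median filter for impulsive noise and a least-squares linear detrend for baseline drift. The helper names, window size, and sample data are assumptions made for the example. Real pipelines would more likely use a numerical library.

```python
from statistics import median


def median_filter(signal, window):
    """Replace each sample with the median of its neighbourhood."""
    half = window // 2
    return [
        median(signal[max(0, i - half): i + half + 1])
        for i in range(len(signal))
    ]


def detrend_linear(signal):
    """Subtract the least-squares straight line fitted to the samples."""
    n = len(signal)
    mean_x = (n - 1) / 2
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(signal))
    slope = sxy / sxx
    return [y - (mean_y + slope * (x - mean_x)) for x, y in enumerate(signal)]


# Hypothetical trace: a one-sample spike at index 2 and a broad peak at index 6.
spiky = [0, 0, 8, 0, 1, 2, 3, 2, 1, 0, 0]
filtered = median_filter(spiky, window=3)
print(filtered)  # [0, 0, 0, 1, 1, 2, 2, 2, 1, 0, 0]

# Hypothetical trace: a rising baseline with a bump at index 3. In the raw
# data the bump (6.0) is no taller than the end of the ramp (6.0).
drifting = [0.0, 1.0, 2.0, 6.0, 4.0, 5.0, 6.0]
flat = detrend_linear(drifting)
print(flat.index(max(flat)))  # 3
```

The filtered output also illustrates the over-smoothing risk: the spike is removed, but the broad peak is clipped from 3 to a plateau of 2. A wider window would flatten it further, so the window should stay well below the width of the narrowest peak of interest.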

Validation and Testing: Ensuring Robustness

After tuning and preprocessing, it is vital to rigorously validate the performance of the peak detection algorithm. This involves comparing detected peaks against a ground truth or expert-annotated dataset to assess accuracy, precision, and recall.
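A comparison against annotations might look like the sketch below. It assumes that a detection counts as correct when it falls within a fixed number of samples of an annotated peak, and that each annotation can be matched at most once. The tolerance, the greedy matching rule, and the index lists are illustrative choices rather than a standard.

```python
def match_peaks(detected, truth, tolerance):
    """Greedily pair each annotated peak with the nearest unused detection
    within `tolerance` samples. Returns (true_pos, false_pos, false_neg)."""
    unused = sorted(detected)
    true_pos = 0
    for t in sorted(truth):
        candidates = [d for d in unused if abs(d - t) <= tolerance]
        if candidates:
            nearest = min(candidates, key=lambda d: abs(d - t))
            unused.remove(nearest)  # each detection may be used only once
            true_pos += 1
    false_pos = len(unused)
    false_neg = len(truth) - true_pos
    return true_pos, false_pos, false_neg


def precision_recall(true_pos, false_pos, false_neg):
    """Precision and recall, defined as 0.0 when the denominator is zero."""
    found = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / found if found else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


# Hypothetical annotation and detector output (sample indices).
truth = [10, 50, 90]
detected = [11, 30, 49, 70]

counts = match_peaks(detected, truth, tolerance=2)
precision, recall = precision_recall(*counts)
print(counts)  # (2, 2, 1)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here two of the four detections match an annotation, so precision is 0.5, and two of the three annotated peaks are found, so recall is about 0.67. Precision and recall tend to be more informative than plain accuracy for this task, because nearly every sample is a non-peak and true negatives would dominate the score.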

Testing should be conducted on independent datasets to ensure that the algorithm generalizes well across different conditions. Robust validation not only helps in identifying potential issues but also provides insights into areas for further refinement and optimization.

Conclusion: Achieving Reliable Peak Detection

Peak detection in noisy signals is a challenging yet essential task across various domains. By understanding the nature of noise, selecting and tuning appropriate algorithms, and implementing effective preprocessing strategies, one can significantly reduce false positives and improve detection reliability.

Continuous validation and refinement are key to developing robust solutions that can adapt to diverse datasets and evolving noise patterns. Ultimately, achieving reliable peak detection not only enhances data analysis but also empowers informed decision-making in complex, noisy environments.

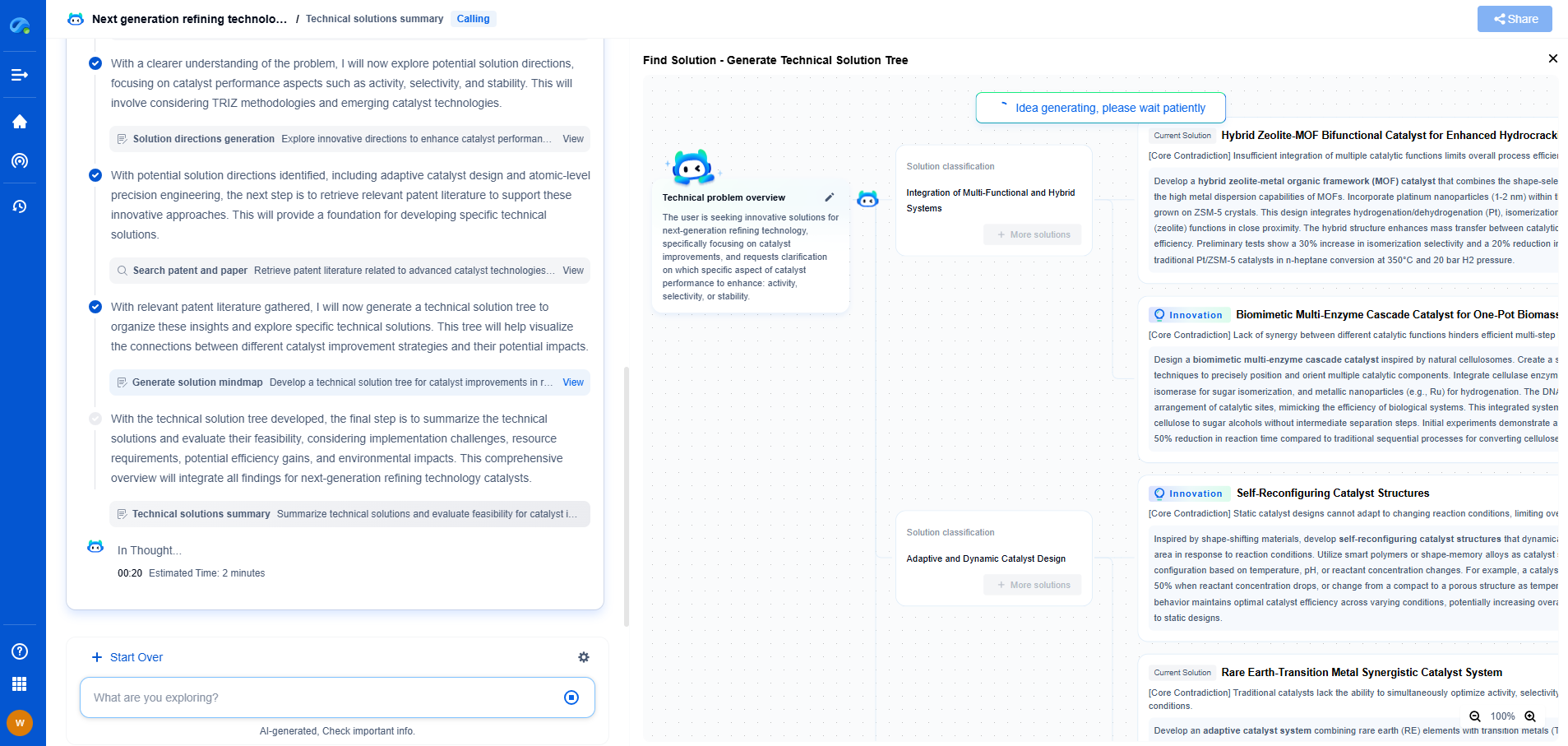

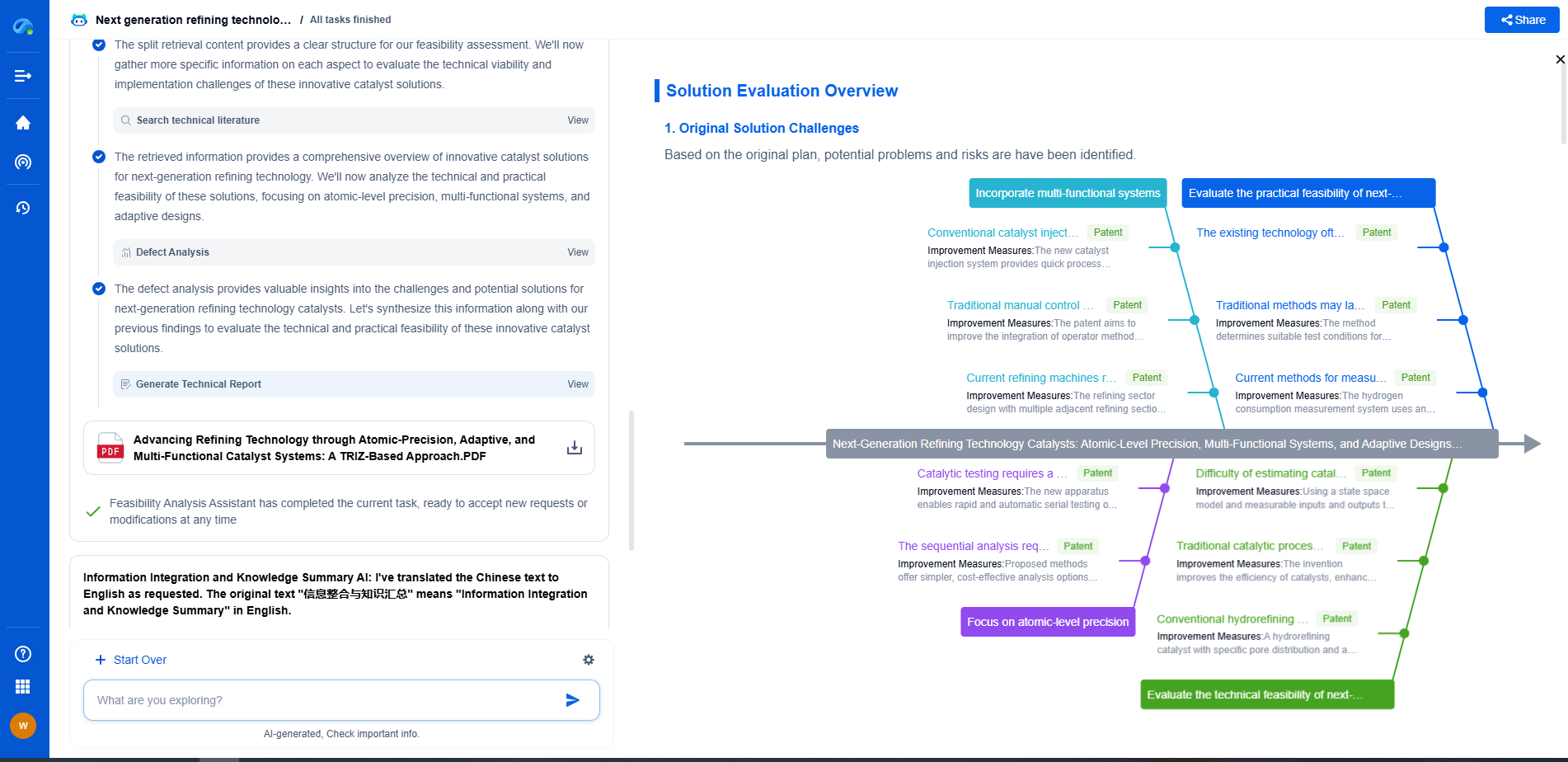
