Real-Time FFT and Cepstral Features for Edge-Based Sound Classification

JUL 16, 2025

Understanding Sound Classification at the Edge

Sound classification is the task of assigning an audio signal to one of a set of predefined categories. In edge computing, this analysis runs directly on the device that captures the audio rather than on a centralized server, which cuts latency and avoids streaming raw audio over the network. The approach therefore requires efficient algorithms that can operate within the constraints typical of edge devices, such as limited processing power, memory, and often energy.

Fast Fourier Transform (FFT) in Sound Processing

The Fast Fourier Transform (FFT) is an efficient algorithm for computing the Discrete Fourier Transform (DFT), which converts a sampled time-domain signal into its frequency-domain representation. This transformation is crucial for sound analysis because it reveals the individual frequency components that make up an audio signal. In edge-based sound classification, the FFT is the foundational step for extracting meaningful features from raw audio.
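
As a minimal sketch of this first step, the Python below windows one frame of audio, takes its FFT, and maps the strongest bin back to hertz using f = k * f_s / N. The 16 kHz sample rate, 256-sample frame, Hann window, and the helper names `fft` and `magnitude_spectrum` are illustrative assumptions. The radix-2 FFT is written in plain Python only to keep the example dependency-free; a real device would call an optimized FFT library instead.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        # Twiddle factor e^(-2*pi*i*k/n) rotates the odd-index half.
        t = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def magnitude_spectrum(frame):
    """Hann-window a real frame and return |X[k]| for k = 0..N/2."""
    n = len(frame)
    # Periodic Hann window reduces spectral leakage caused by the frame edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / n) for i in range(n)]
    spectrum = fft([s * w for s, w in zip(frame, window)])
    # For real input the upper half mirrors the lower half, so keep N/2 + 1 bins.
    return [abs(c) for c in spectrum[: n // 2 + 1]]


SAMPLE_RATE = 16_000  # Hz (assumed)
N = 256               # samples per frame (assumed): 16 ms at 16 kHz
# Hypothetical test input: a pure 1 kHz tone.
frame = [math.sin(2 * math.pi * 1000 * i / SAMPLE_RATE) for i in range(N)]

mags = magnitude_spectrum(frame)
peak_bin = max(range(len(mags)), key=mags.__getitem__)
peak_hz = peak_bin * SAMPLE_RATE / N  # bin k sits at k * fs / N hertz
print(peak_bin, peak_hz)  # 16 1000.0
```

With a 62.5 Hz bin spacing (16000 / 256), the 1 kHz tone lands exactly in bin 16; real sounds spread energy across many bins, and that spread is what later feature stages summarize.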

One of the key advantages of the FFT in sound processing is its speed. Computing an N-point DFT directly takes on the order of N² operations, whereas the FFT needs only O(N log N), which makes real-time analysis feasible even on resource-constrained edge devices. Once audio is in the frequency domain, the spectral patterns that distinguish one sound class from another become much easier to identify.
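
To make the complexity difference concrete, the sketch below computes the same 1024-point transform twice: once directly from the definition X[k] = sum over n of x[n] * exp(-2*pi*i*k*n/N), and once with a recursive radix-2 Cooley-Tukey FFT. Both functions are hypothetical helpers rather than a library API, and each counts its complex multiplications using the usual textbook measure of twiddle-factor products.

```python
import cmath
import math


def naive_dft(x):
    """Direct O(N^2) DFT; returns (spectrum, number of complex multiplications)."""
    n = len(x)
    out, mults = [], 0
    for k in range(n):
        acc = 0j
        for t in range(n):
            # Reduce k*t modulo n first so the exponent stays small and accurate.
            acc += x[t] * cmath.exp(-2j * math.pi * ((k * t) % n) / n)
            mults += 1
        out.append(acc)
    return out, mults


def fft(x):
    """Radix-2 Cooley-Tukey FFT; returns (spectrum, twiddle multiplications)."""
    n = len(x)
    if n == 1:
        return [complex(x[0])], 0
    even, m_even = fft(x[0::2])
    odd, m_odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(-2j * math.pi * k / n) * odd[k]  # one twiddle multiply
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    # Each level of recursion adds n/2 multiplies: total (N/2) * log2(N).
    return out, m_even + m_odd + n // 2


# Hypothetical test signal: two sinusoids, 1024 samples.
signal = [math.sin(0.3 * i) + 0.5 * math.cos(1.7 * i) for i in range(1024)]
slow, slow_mults = naive_dft(signal)
fast, fast_mults = fft(signal)

max_err = max(abs(a - b) for a, b in zip(slow, fast))
print(slow_mults, fast_mults, max_err)
```

With N = 1024 the direct DFT needs N² = 1,048,576 multiplications while the FFT needs (N/2) * log2 N = 5,120, roughly 200 times fewer, and the two spectra agree to within rounding error.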

Cepstral Features for Enhanced Sound Classification

Cepstral features, particularly Mel-Frequency Cepstral Coefficients (MFCCs), are widely used in sound classification and speech recognition. They summarize the shape of a signal's short-term power spectrum on a frequency scale warped to approximate human pitch perception. MFCCs are computed by passing the power spectrum through a bank of triangular filters spaced on the mel scale, taking the logarithm of each filter's energy, and applying a discrete cosine transform. Keeping only the first dozen or so coefficients retains the spectral envelope while discarding finer, less informative detail.
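
The three MFCC steps can be sketched in plain Python as follows. The sketch starts from a power spectrum of N/2 + 1 bins (for example, the squared magnitudes of one FFT frame) and assumes the HTK-style mel formula mel = 2595 * log10(1 + f/700), 26 triangular filters, 13 coefficients, and an unnormalized DCT-II. These are common choices rather than values from this article, production libraries differ in filter and DCT normalization, and the function names are hypothetical.

```python
import math


def hz_to_mel(f):
    # HTK-style mel scale: roughly linear below ~1 kHz, logarithmic above.
    return 2595.0 * math.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_filterbank(n_filters, n_fft, sample_rate):
    """Triangular filters evenly spaced in mel, evaluated at bins 0..n_fft/2."""
    top_mel = hz_to_mel(sample_rate / 2)
    # n_filters + 2 edge points: filter j uses edges j, j+1, j+2 (left, center, right).
    edges = [mel_to_hz(top_mel * i / (n_filters + 1)) for i in range(n_filters + 2)]
    bin_hz = [k * sample_rate / n_fft for k in range(n_fft // 2 + 1)]
    bank = []
    for j in range(n_filters):
        lo, mid, hi = edges[j], edges[j + 1], edges[j + 2]
        bank.append([max(0.0, min((f - lo) / (mid - lo), (hi - f) / (hi - mid)))
                     for f in bin_hz])
    return bank


def mfcc(power_spectrum, sample_rate, n_fft, n_filters=26, n_coeffs=13):
    """Power spectrum -> mel filterbank energies -> log -> DCT-II."""
    bank = mel_filterbank(n_filters, n_fft, sample_rate)
    energies = [sum(w * p for w, p in zip(filt, power_spectrum)) for filt in bank]
    # Floor avoids log(0) for silent frames.
    log_e = [math.log(max(e, 1e-10)) for e in energies]
    m = len(log_e)
    # Unnormalized DCT-II decorrelates the log energies; keep the first n_coeffs.
    return [sum(log_e[i] * math.cos(math.pi * k * (i + 0.5) / m) for i in range(m))
            for k in range(n_coeffs)]


spec = [1.0 + k for k in range(257)]   # any positive 257-bin power spectrum (toy)
louder = [10.0 * p for p in spec]      # the same "sound" at 10x the power
a = mfcc(spec, 16_000, 512)
b = mfcc(louder, 16_000, 512)
print(b[0] - a[0], max(abs(x - y) for x, y in zip(a[1:], b[1:])))
```

The example also shows one reason the log-then-DCT design is useful: scaling the input power by a constant adds the same constant to every log energy, and the DCT folds that entirely into c0, leaving the higher coefficients unchanged.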

Incorporating cepstral features into sound classification algorithms improves the ability to distinguish sounds with subtle spectral differences. Because MFCCs are compact, edge devices can often reach good accuracy with small models, making them suitable for applications ranging from wildlife monitoring to industrial noise detection.

Implementing Real-Time Sound Classification

Implementing real-time sound classification on edge devices involves several steps. First, the captured audio is pre-processed to reduce noise and standardize amplitude levels, then split into short overlapping frames (typically 20 to 40 ms), each multiplied by a window function. Next, the FFT converts each frame into its frequency components. From this spectral data, MFCCs or other cepstral features are extracted to form the basis for classification.
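
A minimal sketch of the pre-processing and framing steps follows. It assumes 16 kHz audio, 25 ms frames (400 samples) with a 10 ms hop (160 samples), and zero-padding each frame to 512 samples so a radix-2 FFT applies. The `preprocess` function only removes the DC offset and peak-normalizes, so it stands in for amplitude standardization and does not perform noise reduction; all names are illustrative.

```python
import math


def preprocess(signal):
    """Remove the DC offset and peak-normalize to [-1, 1] (one simple choice)."""
    mean = sum(signal) / len(signal)
    centered = [s - mean for s in signal]
    peak = max(abs(s) for s in centered) or 1.0  # guard against all-zero input
    return [s / peak for s in centered]


def frame_signal(signal, frame_len, hop):
    """Split into overlapping frames, dropping any trailing partial frame."""
    n_frames = max(0, 1 + (len(signal) - frame_len) // hop)
    return [signal[i * hop: i * hop + frame_len] for i in range(n_frames)]


def pad_to_pow2(frame):
    """Zero-pad a frame to the next power of two so a radix-2 FFT can be used."""
    n = 1
    while n < len(frame):
        n *= 2
    return frame + [0.0] * (n - len(frame))


SAMPLE_RATE = 16_000
FRAME_LEN = 400  # 25 ms at 16 kHz (assumed, a common choice)
HOP = 160        # 10 ms hop, so consecutive frames overlap by 15 ms

# Hypothetical input: one second of a 440 Hz tone with a DC offset of 0.2.
raw = [0.2 + 0.3 * math.sin(2 * math.pi * 440 * t / SAMPLE_RATE) for t in range(SAMPLE_RATE)]
clean = preprocess(raw)
frames = [pad_to_pow2(f) for f in frame_signal(clean, FRAME_LEN, HOP)]
print(len(frames), len(frames[0]))  # 98 512
```

One second of audio yields 1 + floor((16000 - 400) / 160) = 98 frames; each frame would then be windowed and passed to the FFT.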

Machine learning algorithms, such as neural networks or support vector machines, then classify the sound based on the extracted features. These models are trained, usually offline, on labeled datasets to learn the patterns associated with each sound category. Once trained, they can be deployed on edge devices to classify sounds in real time, provided the model is small enough to fit the device's compute and memory budget.
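
The train-offline, infer-on-device split can be illustrated with a deliberately simple stand-in for an SVM or neural network: a nearest-centroid classifier. Training averages the feature vectors of each class; the device then stores only one centroid per class and picks the closest one at inference time. The feature vectors and class names below are toy values invented for illustration, not measured MFCCs, and the function names are hypothetical.

```python
import math


def train_centroids(features, labels):
    """'Training' (done offline): average the feature vectors of each class."""
    sums, counts = {}, {}
    for vec, label in zip(features, labels):
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, v in enumerate(vec):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def classify(vec, centroids):
    """On-device inference: return the class whose centroid is closest (Euclidean)."""
    return min(centroids, key=lambda label: math.dist(vec, centroids[label]))


# Toy 3-dimensional "feature vectors" (illustrative only).
train_x = [[1.0, 0.2, -0.1], [1.2, 0.1, 0.0], [-0.8, 0.9, 0.3], [-1.0, 1.1, 0.2]]
train_y = ["dog_bark", "dog_bark", "siren", "siren"]

model = train_centroids(train_x, train_y)
print(classify([0.9, 0.3, -0.2], model))  # dog_bark
```

The deployed model here is just a small table of centroids, which is why inference costs only a few distance computations per frame; a trained SVM or compact neural network follows the same deployment pattern with more capacity.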

Challenges and Considerations

While the integration of FFT and cepstral features offers numerous advantages for edge-based sound classification, several challenges must be addressed. One major consideration is the need to balance computational efficiency with classification accuracy. Edge devices have limited resources, and overly complex models may not be practical for real-time processing.
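
One way to reason about this balance is a back-of-the-envelope operation count for the feature front end. The sketch below assumes 16 kHz audio, a 10 ms hop, a 512-point FFT, 26 mel filters, and 13 cepstral coefficients; the function name and cost model are illustrative, and the counts ignore windowing, magnitude and log computations, and memory traffic.

```python
import math


def feature_cost_per_second(sample_rate, hop, n_fft, n_filters, n_coeffs):
    """Rough multiply counts per second for an FFT -> mel -> DCT front end."""
    frames_per_sec = sample_rate / hop
    fft_mults = (n_fft // 2) * int(math.log2(n_fft))  # radix-2 twiddle products
    mel_macs = n_filters * (n_fft // 2 + 1)           # dense filterbank matrix
    dct_macs = n_coeffs * n_filters                   # keep n_coeffs DCT outputs
    return {
        "frames_per_sec": frames_per_sec,
        "fft_complex_mults": fft_mults * frames_per_sec,
        "mel_real_macs": mel_macs * frames_per_sec,
        "dct_real_macs": dct_macs * frames_per_sec,
    }


cost = feature_cost_per_second(16_000, 160, 512, 26, 13)
for name, value in cost.items():
    print(f"{name}: {value:,.0f}")
```

With these settings the device processes 100 frames per second, costing 230,400 complex multiplications for the FFTs, 668,200 multiply-accumulates for a dense mel filterbank, and 33,800 for the DCT. Storing the filterbank sparsely shrinks the middle figure considerably, since each bin falls under at most two overlapping triangles. Treat these as order-of-magnitude guidance when sizing the classifier that runs on top.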

Furthermore, real-world sound environments are often noisy and variable, necessitating robust algorithms capable of handling diverse conditions. Techniques such as noise reduction, feature normalization, and model optimization are essential to ensure reliable performance across different scenarios.
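
Feature normalization is often done with cepstral mean and variance normalization (CMVN), sketched below as one possible choice. Mean subtraction is useful because a fixed microphone or channel response multiplies the spectrum, which becomes an additive offset after the log and DCT, so removing the per-coefficient mean largely cancels it. This batch version normalizes over a fixed set of frames; a streaming device would typically maintain running statistics instead. The function name and the toy feature values are illustrative.

```python
import math


def cmvn(frames, eps=1e-8):
    """Cepstral mean and variance normalization over a block of feature frames.

    frames: list of equal-length feature vectors (e.g. one MFCC vector per frame).
    Each coefficient is shifted to zero mean and scaled to unit variance;
    eps guards against division by zero for constant coefficients.
    """
    n, dim = len(frames), len(frames[0])
    means = [sum(f[d] for f in frames) / n for d in range(dim)]
    stds = [math.sqrt(sum((f[d] - means[d]) ** 2 for f in frames) / n)
            for d in range(dim)]
    return [[(f[d] - means[d]) / (stds[d] + eps) for d in range(dim)] for f in frames]


# Toy feature frames (illustrative values, not real MFCCs).
feats = [[12.0, -3.0, 0.5], [14.0, -1.0, 0.7], [10.0, -2.0, 0.3], [16.0, -6.0, 0.9]]
norm = cmvn(feats)
for row in norm:
    print([round(v, 3) for v in row])
```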

The Future of Edge-Based Sound Classification

The future of sound classification at the edge is promising, with advancements in hardware and algorithm design driving improvements in performance and efficiency. As edge devices become more powerful and cost-effective, the deployment of sophisticated sound classification systems will become increasingly feasible across various domains.

In conclusion, real-time FFT and cepstral features play a vital role in enabling effective sound classification on edge devices. By transforming audio signals into the frequency and cepstral domains, these techniques provide the tools needed for accurate and efficient analysis. With continued innovation and research, edge-based sound classification is well placed to become a cornerstone of intelligent systems, unlocking new possibilities for real-world applications.

In the world of vibration damping, structural health monitoring, and acoustic noise suppression, staying ahead requires more than intuition—it demands constant awareness of material innovations, sensor architectures, and IP trends across mechanical, automotive, aerospace, and building acoustics.

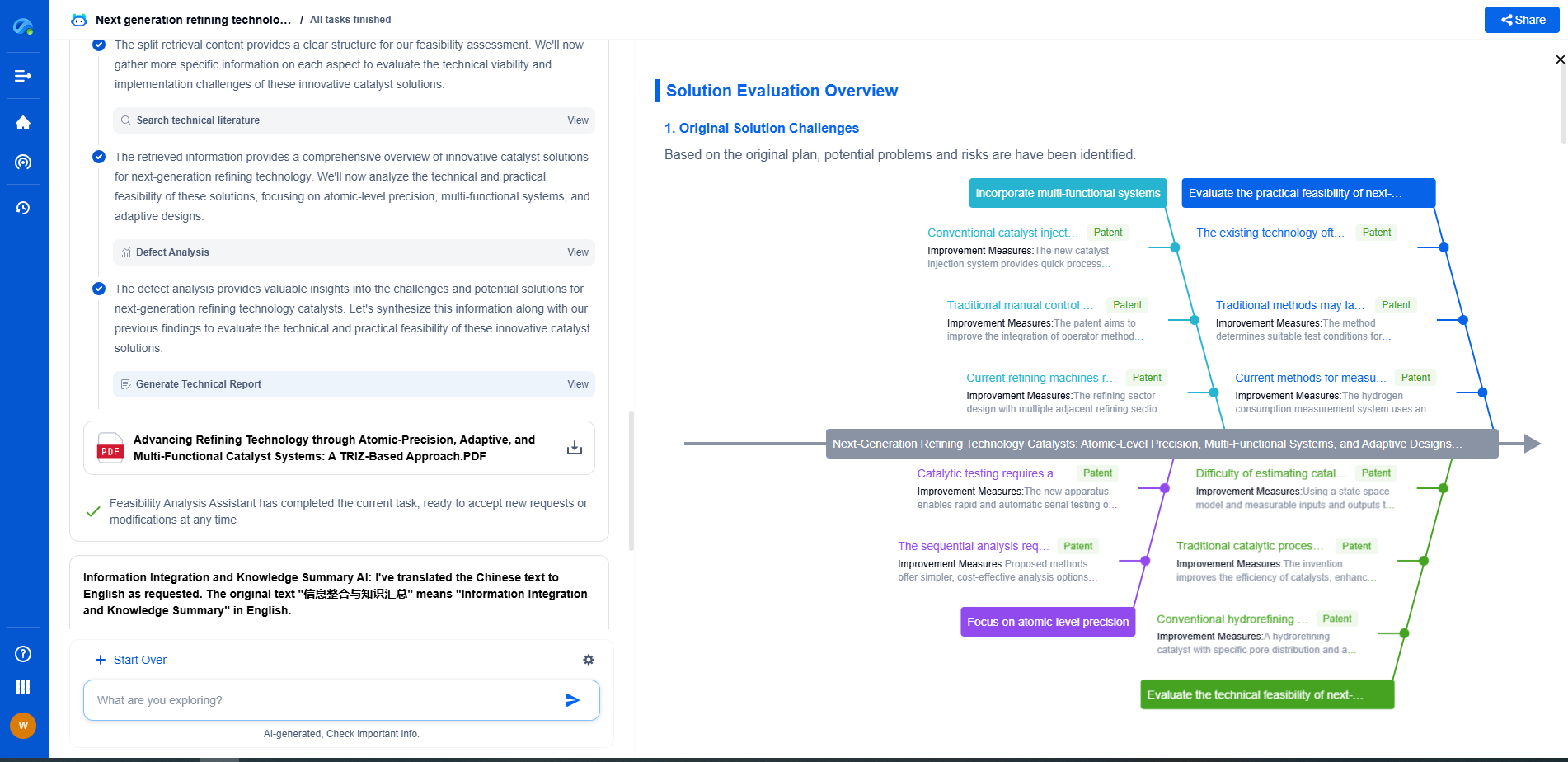

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

⚙️ Bring Eureka into your vibration intelligence workflow—and reduce guesswork in your R&D pipeline. Start your free experience today.