Real-Time Filtering Latency: Minimizing Delay in Critical Systems

JUL 17, 2025

In critical systems, where decisions must be made in a fraction of a second, the latency of real-time filtering can be a matter of life and death. From autonomous vehicles navigating busy streets to financial trading platforms executing complex transactions, the speed at which data is processed can significantly affect outcomes. This post looks at where real-time filtering latency comes from and explores strategies to minimize delay in critical systems.

Understanding Latency in Real-Time Systems

Latency is the time delay between an input arriving at a system and the corresponding output being produced. In real-time systems, this delay can have serious consequences. For instance, in aerospace applications, a delay in sensor data processing can lead to incorrect flight control decisions. Similarly, in healthcare, the timeliness of data processing can impact patient outcomes. Understanding the sources of latency is the first step in devising strategies to minimize it.
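
To make the definition concrete, here is a minimal sketch of how per-sample latency might be measured in software. The filter (a simple moving average), the window size, and the helper names `moving_average_filter` and `measure_latency_ns` are assumptions chosen for illustration, not part of any particular system. The sketch reports the median, 99th percentile, and worst observed latency, since in real-time work the tail usually matters more than the average.

```python
import time
from collections import deque
from statistics import median


def moving_average_filter(window: int):
    """Return a stateful per-sample filter that averages the last `window` inputs."""
    buf = deque(maxlen=window)

    def step(x: float) -> float:
        buf.append(x)
        return sum(buf) / len(buf)

    return step


def measure_latency_ns(step, samples):
    """Time each call, from handing the sample in to the output being ready."""
    latencies = []
    for x in samples:
        t0 = time.perf_counter_ns()
        step(x)
        latencies.append(time.perf_counter_ns() - t0)
    return latencies


# Synthetic input; a real system would read from a sensor or a message queue.
samples = [float(i % 10) for i in range(10_000)]
latencies = measure_latency_ns(moving_average_filter(window=32), samples)

latencies.sort()
p50 = median(latencies)
p99 = latencies[int(0.99 * len(latencies))]
worst = latencies[-1]
print(f"median={p50} ns  p99={p99} ns  worst={worst} ns")
```

The numbers depend entirely on the machine and on whatever else is running, so they are best read as a relative comparison between runs rather than as absolute figures.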

Sources of Latency

1. **Data Acquisition Delays**: The time taken to collect data from sensors before processing begins can contribute to overall latency. The speed and efficiency of data acquisition systems are pivotal in real-time environments.

2. **Processing Delays**: Once data is acquired, it must be processed quickly. This step can introduce significant latency if algorithms are not optimized for performance. Complex computations or inefficient code can hinder real-time processing capabilities.

3. **Network Delays**: If data needs to be transmitted across networks, latency can be introduced. Network conditions, such as bandwidth limitations and congestion, play a crucial role in determining the speed of data transmission.

4. **System Overheads**: Operating system and application layer overheads can add to latency. Scheduling delays and context switching in multitasking environments can exacerbate latency issues.
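
One way to reason about the four sources above is as a latency budget: add up the worst-case delay of each stage and compare the total against the deadline. The sketch below assumes hypothetical stage names and made-up microsecond values; real figures would have to come from measurement on the target system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    worst_case_us: float  # worst-case delay in microseconds (illustrative value)


def check_budget(stages, deadline_us):
    """Return (total delay, remaining slack, name of the slowest stage)."""
    total = sum(s.worst_case_us for s in stages)
    dominant = max(stages, key=lambda s: s.worst_case_us)
    return total, deadline_us - total, dominant.name


# Hypothetical numbers, one entry per latency source listed above.
stages = [
    Stage("acquisition", 120.0),
    Stage("processing", 300.0),
    Stage("network", 450.0),
    Stage("system_overhead", 80.0),
]

total, slack, dominant = check_budget(stages, deadline_us=1000.0)
print(f"total={total} us  slack={slack} us  largest contributor={dominant}")
```

With these example values the pipeline fits a 1000 µs deadline with 50 µs to spare, and the network stage is the largest contributor, so it would be the first place to look for savings.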

Techniques to Minimize Filtering Latency

1. **Optimizing Algorithms**: The choice of algorithm has a profound impact on the processing speed. Algorithms should be efficient, with reduced computational complexity, to ensure they meet real-time constraints. Employing parallel processing and exploiting hardware acceleration can further enhance performance.

2. **Improving Data Acquisition**: Implementing faster data collection methods, such as using high-speed sensors and interconnects, can reduce the time taken for data acquisition. Additionally, preferring edge computing over cloud-based solutions where feasible can minimize delays caused by data transmission.

3. **Enhancing Network Performance**: To minimize network-induced latency, utilizing high-speed communication protocols and optimizing data packet sizes can be beneficial. Quality of Service (QoS) techniques can also be deployed to prioritize critical data packets, ensuring timely delivery.

4. **Reducing System Overheads**: Streamlining operating system tasks and using a real-time operating system (RTOS) can lower system overheads. An RTOS is designed to offer predictable task scheduling, which reduces worst-case latency and jitter.
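
As a small illustration of the first technique, reducing computational complexity, the sketch below implements a moving-average filter that updates in constant time per sample by keeping a running sum, instead of re-summing the whole window on every input. The class name `StreamingMovingAverage` and the helper `group_delay_seconds` are hypothetical names used only for this example. The helper reflects a separate point: a length-N moving average is a linear-phase FIR filter and delays the signal by (N - 1) / 2 samples no matter how fast the code runs, so window length is itself a latency decision.

```python
from collections import deque


class StreamingMovingAverage:
    """Moving average with O(1) work per sample using a running sum."""

    def __init__(self, window: int):
        if window < 1:
            raise ValueError("window must be at least 1")
        self.window = window
        self.buf = deque()
        self.total = 0.0

    def step(self, x: float) -> float:
        self.buf.append(x)
        self.total += x
        if len(self.buf) > self.window:
            # Drop the oldest sample from the running sum instead of re-summing.
            self.total -= self.buf.popleft()
        return self.total / len(self.buf)


def group_delay_seconds(window: int, sample_rate_hz: float) -> float:
    """Group delay of a length-`window` moving average: (N - 1) / 2 samples."""
    return (window - 1) / 2 / sample_rate_hz


f = StreamingMovingAverage(window=4)
outputs = [f.step(x) for x in [1.0, 2.0, 3.0, 4.0, 5.0]]
print(outputs)  # [1.0, 1.5, 2.0, 2.5, 3.5]

# A 33-tap window at an assumed 1 kHz sample rate delays the signal by 16 ms.
print(group_delay_seconds(33, 1000.0))
```

One caveat with this design: a floating-point running sum can accumulate rounding error over very long runs, so a production filter might periodically recompute the sum from the buffer or use integer samples.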

Case Studies in Real-Time Filtering Optimization

Examining real-world examples can provide valuable insights into effective strategies for reducing latency. For instance, in the automotive industry, real-time filtering is crucial for autonomous vehicle systems. By leveraging advanced algorithms and robust network infrastructures, companies have achieved significant improvements in processing times.

In the financial sector, where milliseconds can result in substantial monetary gains or losses, firms invest in specialized technology to process data in real time. One example is high-frequency trading platforms, which use servers colocated with the exchange to minimize network delays.

Conclusion

Reducing real-time filtering latency is an ongoing challenge that requires a comprehensive approach involving algorithmic optimization, enhanced data acquisition, improved network performance, and reduced system overheads. By understanding the multifaceted nature of latency and implementing targeted strategies, organizations can enhance the efficiency and reliability of critical systems. The continuous evolution of technology promises further advancements in reducing latency, paving the way for more responsive and effective real-time systems.

Whether you’re developing multifunctional DAQ platforms, programmable calibration benches, or integrated sensor measurement suites, the ability to track emerging patents, understand competitor strategies, and uncover untapped technology spaces is critical.

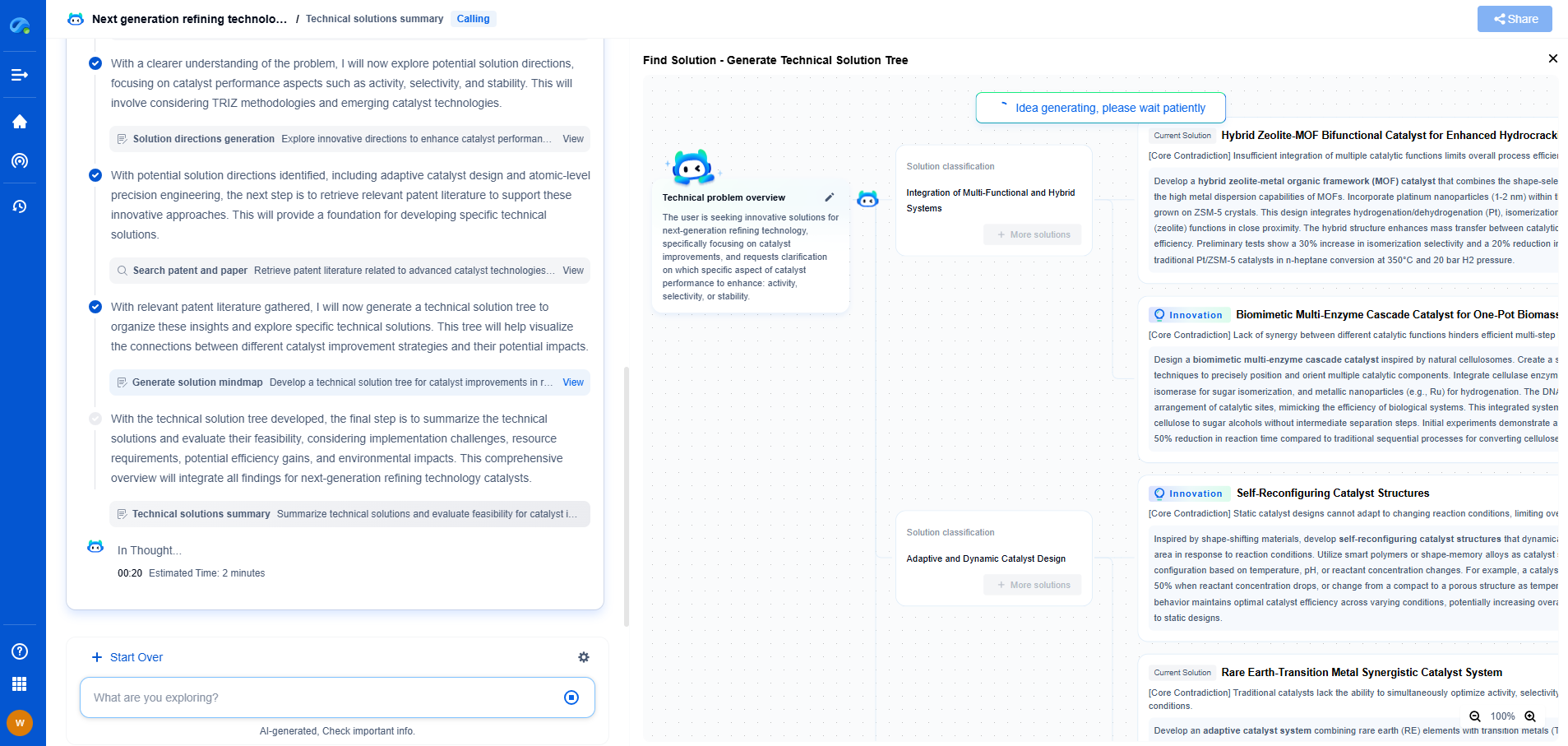

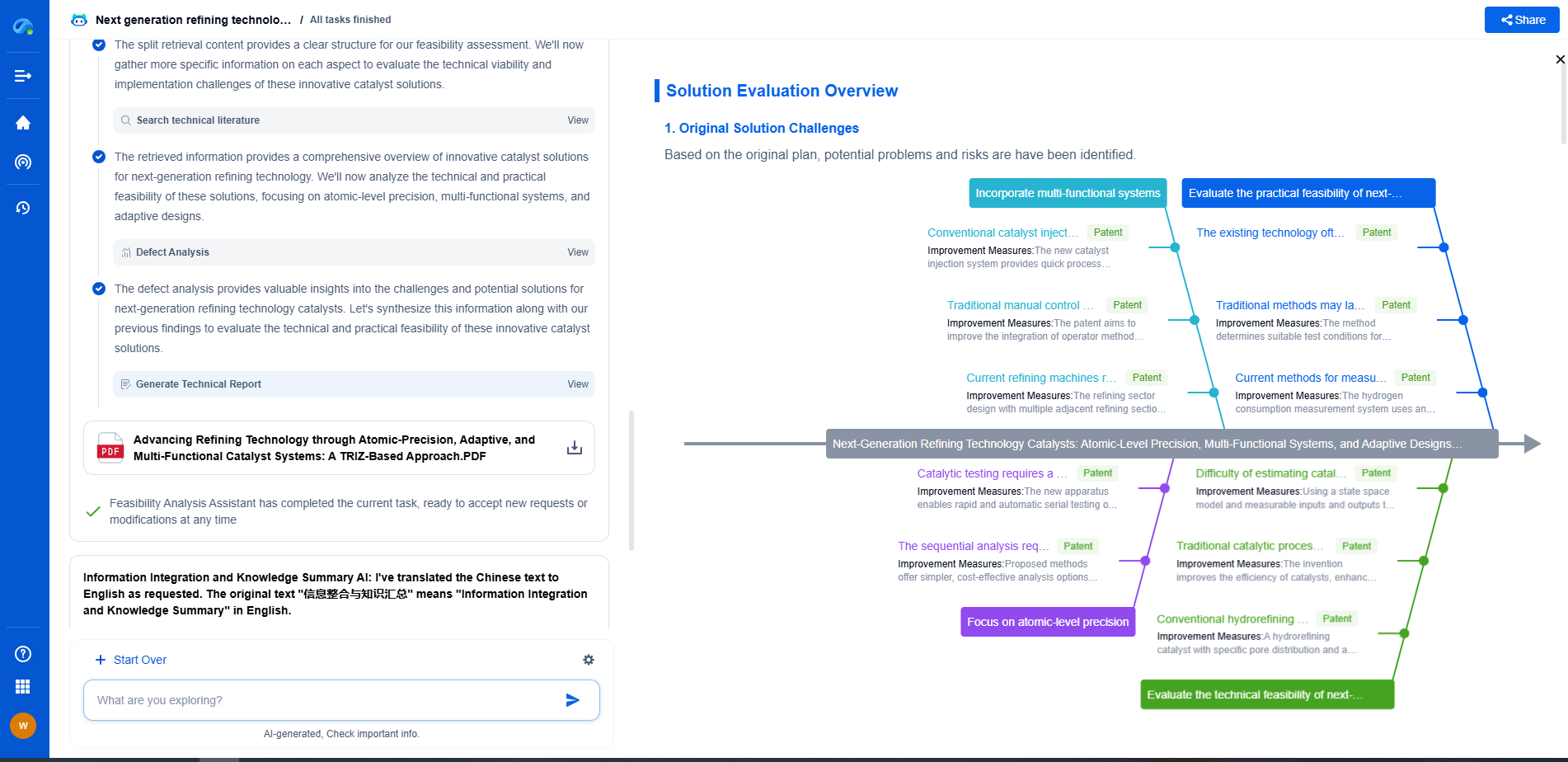

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

🧪 Let Eureka be your digital research assistant—streamlining your technical search across disciplines and giving you the clarity to lead confidently. Experience it today.