Reducing Latency in MEC (Multi-access Edge Computing) Deployments

JUL 7, 2025

Multi-access Edge Computing (MEC) places computation and data storage close to the devices at the network's edge, bridging the gap between those devices and the centralized cloud. Shortening that distance reduces latency, improves bandwidth efficiency, and gives users a better experience in applications ranging from autonomous vehicles to virtual reality. Latency does not disappear at the edge, however, and it remains one of the main challenges in MEC. This article covers strategies for reducing latency in MEC deployments.

Understanding Latency in MEC

Latency, the delay between a request being issued and its result being delivered, is a critical factor in MEC deployments. In edge computing, latency arises from several sources, including data processing, network communication, and computational resource allocation. Knowing how much each source contributes is the starting point for any latency reduction strategy.
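
As a rough illustration of the sources listed above, the following Python sketch splits one request's latency into three components and reports which one dominates. The class name, field names, and sample values are hypothetical and chosen only for illustration; a real deployment would fill them in from measurements.

```python
from dataclasses import dataclass, asdict


@dataclass
class LatencyBudget:
    """Latency components of one request, in milliseconds (hypothetical names)."""
    network_ms: float      # network communication between device and edge node
    processing_ms: float   # data processing on the edge node
    allocation_ms: float   # waiting for computational resources to be allocated

    def total_ms(self) -> float:
        """End-to-end latency as the sum of all components."""
        return self.network_ms + self.processing_ms + self.allocation_ms

    def dominant(self) -> str:
        """Name of the largest component, i.e. the first candidate for optimization."""
        parts = asdict(self)
        return max(parts, key=parts.get)


if __name__ == "__main__":
    # Sample values are made up for the example, not measured.
    budget = LatencyBudget(network_ms=4.0, processing_ms=7.5, allocation_ms=1.5)
    print(budget.total_ms(), budget.dominant())
```

Breaking latency down this way shows which of the strategies in the following sections is likely to pay off first.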

Optimizing Network Infrastructure

A robust and well-optimized network infrastructure forms the backbone of any MEC deployment. By enhancing network connectivity, organizations can significantly reduce latency. Key strategies include:

1. Deploying Edge Nodes Strategically: Positioning edge nodes near user populations shortens the distance data must travel, which directly lowers propagation delay.

2. Utilizing Advanced Routing Protocols: Routing protocols and policies that prioritize MEC traffic help data take the most efficient available path, reducing delays.

3. Employing Network Slicing: By leveraging network slicing, distinct virtual networks can be created for different applications, ensuring that critical services receive the necessary bandwidth and low-latency paths.
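
To make the first strategy concrete, here is a minimal Python sketch that picks the edge node geographically closest to a user and estimates a lower bound on the one-way propagation delay. It assumes light travels through optical fiber at roughly 200,000 km/s and uses great-circle distance. Real fiber routes are longer than the great-circle path, and queuing and processing delays are not included. The node names and coordinates are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0   # mean Earth radius
FIBER_KM_PER_MS = 200.0    # assumption: ~200,000 km/s signal speed in fiber


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearest_node(user, nodes):
    """Return (node_name, one-way propagation delay lower bound in ms).

    user  -- (lat, lon) of the user in degrees
    nodes -- dict mapping node name to (lat, lon) in degrees
    """
    name = min(nodes, key=lambda n: haversine_km(*user, *nodes[n]))
    distance_km = haversine_km(*user, *nodes[name])
    return name, distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical node locations.
    edge_nodes = {"edge-north": (52.5, 13.4), "edge-south": (48.1, 11.6)}
    print(nearest_node((50.1, 8.7), edge_nodes))
```

At about 5 microseconds per kilometer, distance only dominates once the nearest node is hundreds of kilometers away, which is why placement is usually considered together with routing and slicing.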

Leveraging Caching and Data Locality

Caching plays a crucial role in reducing latency by storing frequently accessed data closer to the end-users. This approach minimizes the need for data retrieval from distant servers, thus cutting down on latency. Key techniques include:

1. Implementing Content Delivery Networks (CDNs): CDNs store copies of data at multiple edge locations, reducing the distance data must travel to reach users.

2. Emphasizing Data Localization: By processing and storing data locally at the edge, MEC can significantly reduce the latency associated with data transfer and processing.
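
The caching idea can be sketched as a small in-memory cache that an edge node might keep in front of a distant origin server. This is an illustrative sketch rather than a production design: it assumes a least-recently-used eviction policy and a fixed time-to-live, and the class name `EdgeCache` and the `fetch_from_origin` callback are hypothetical.

```python
import time
from collections import OrderedDict


class EdgeCache:
    """Tiny LRU cache with a per-entry time-to-live (hypothetical example)."""

    def __init__(self, capacity, ttl_s, clock=time.monotonic):
        self.capacity = capacity
        self.ttl_s = ttl_s
        self.clock = clock            # injectable clock, which makes testing easier
        self._items = OrderedDict()   # key -> (expires_at, value), oldest first

    def get(self, key, fetch_from_origin):
        """Return the cached value, calling fetch_from_origin(key) on a miss or expiry."""
        now = self.clock()
        entry = self._items.get(key)
        if entry is not None and entry[0] > now:
            self._items.move_to_end(key)      # mark as most recently used
            return entry[1]
        value = fetch_from_origin(key)        # slow path: go to the distant server
        self._items[key] = (now + self.ttl_s, value)
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)   # evict the least recently used entry
        return value
```

Only the slow path pays the round trip to the origin. Every hit is served from memory at the edge, which is where the latency saving comes from.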

Enhancing Computational Efficiency

The efficiency with which edge devices process data can have a substantial impact on overall latency. Improving computational efficiency involves:

1. Utilizing Advanced Processing Technologies: Hardware accelerators such as GPUs and FPGAs (Field-Programmable Gate Arrays) can speed up data processing tasks, reducing computation times.

2. Implementing Load Balancing: Distributing workloads evenly across multiple edge nodes can prevent any single node from becoming a bottleneck, ensuring quicker data processing.

3. Optimizing Software Algorithms: Streamlining software algorithms can reduce the processing time required for data analysis and response generation.
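
Load balancing across edge nodes can be illustrated with a least-loaded policy: each new request goes to the node that currently has the fewest requests in flight. This is one of several possible policies and is shown here only as a sketch. The class name and node names are hypothetical, and the example is single-threaded, so it does no locking.

```python
class LeastLoadedBalancer:
    """Assigns each request to the node with the fewest in-flight requests."""

    def __init__(self, nodes):
        # in_flight[node] counts requests currently being processed on that node
        self.in_flight = {node: 0 for node in nodes}

    def acquire(self):
        """Pick the least-loaded node (ties go to the earliest-listed node)."""
        node = min(self.in_flight, key=self.in_flight.get)
        self.in_flight[node] += 1
        return node

    def release(self, node):
        """Call when a request finishes so the node's load count drops again."""
        if self.in_flight[node] > 0:
            self.in_flight[node] -= 1


if __name__ == "__main__":
    balancer = LeastLoadedBalancer(["edge-1", "edge-2", "edge-3"])
    first = balancer.acquire()
    second = balancer.acquire()
    balancer.release(first)       # first request completes
    print(first, second, balancer.acquire())
```

Tracking in-flight requests rather than rotating through nodes in order lets the balancer react when one node is slowed down by a heavy task.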

Ensuring Real-Time Data Analysis

For many MEC applications, particularly those requiring real-time data analysis, latency is a critical concern. Organizations can employ several strategies to ensure faster data processing and response generation:

1. Implementing Edge AI: By deploying artificial intelligence models at the edge, data can be analyzed and insights generated in real time, minimizing the need for data to travel back to centralized servers.

2. Utilizing Predictive Analytics: Predictive analytics can anticipate user needs and pre-emptively prepare data or services, reducing perceived latency.

3. Emphasizing Asynchronous Processing: Allowing certain non-critical processes to occur asynchronously can free up resources for time-sensitive tasks, ensuring faster response times.
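
The asynchronous processing point can be sketched with Python's `asyncio`: the handler returns the time-sensitive result immediately and leaves a non-critical task (here, recording a metric) to finish in the background. The function names, the `METRICS` list, and the 50 ms delay are hypothetical stand-ins for real work.

```python
import asyncio

METRICS = []  # stand-in for a metrics or logging backend


async def record_metrics(payload):
    """Non-critical work: simulated as a slow write to a metrics store."""
    await asyncio.sleep(0.05)
    METRICS.append(payload)


async def handle_request(payload, background):
    """Return the time-sensitive result without waiting for the metrics write."""
    result = payload * 2  # stand-in for the latency-critical computation
    task = asyncio.create_task(record_metrics(payload))
    background.add(task)                        # keep a reference so the task is not lost
    task.add_done_callback(background.discard)  # drop the reference once it finishes
    return result


async def main():
    background = set()
    result = await handle_request(21, background)
    recorded_at_response = len(METRICS)   # metrics write has not completed yet
    await asyncio.gather(*background)     # drain background work before shutdown
    return result, recorded_at_response, list(METRICS)


if __name__ == "__main__":
    print(asyncio.run(main()))
```

The response is available before the metrics write completes, so the slow, non-critical step no longer sits on the request's critical path.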

Conclusion

Reducing latency in MEC deployments is essential for realizing the full potential of edge computing. By optimizing network infrastructure, leveraging caching and data locality, enhancing computational efficiency, and ensuring real-time data analysis, businesses can significantly improve the performance and user experience of their edge applications. As MEC technology continues to evolve, so too will the strategies for minimizing latency.

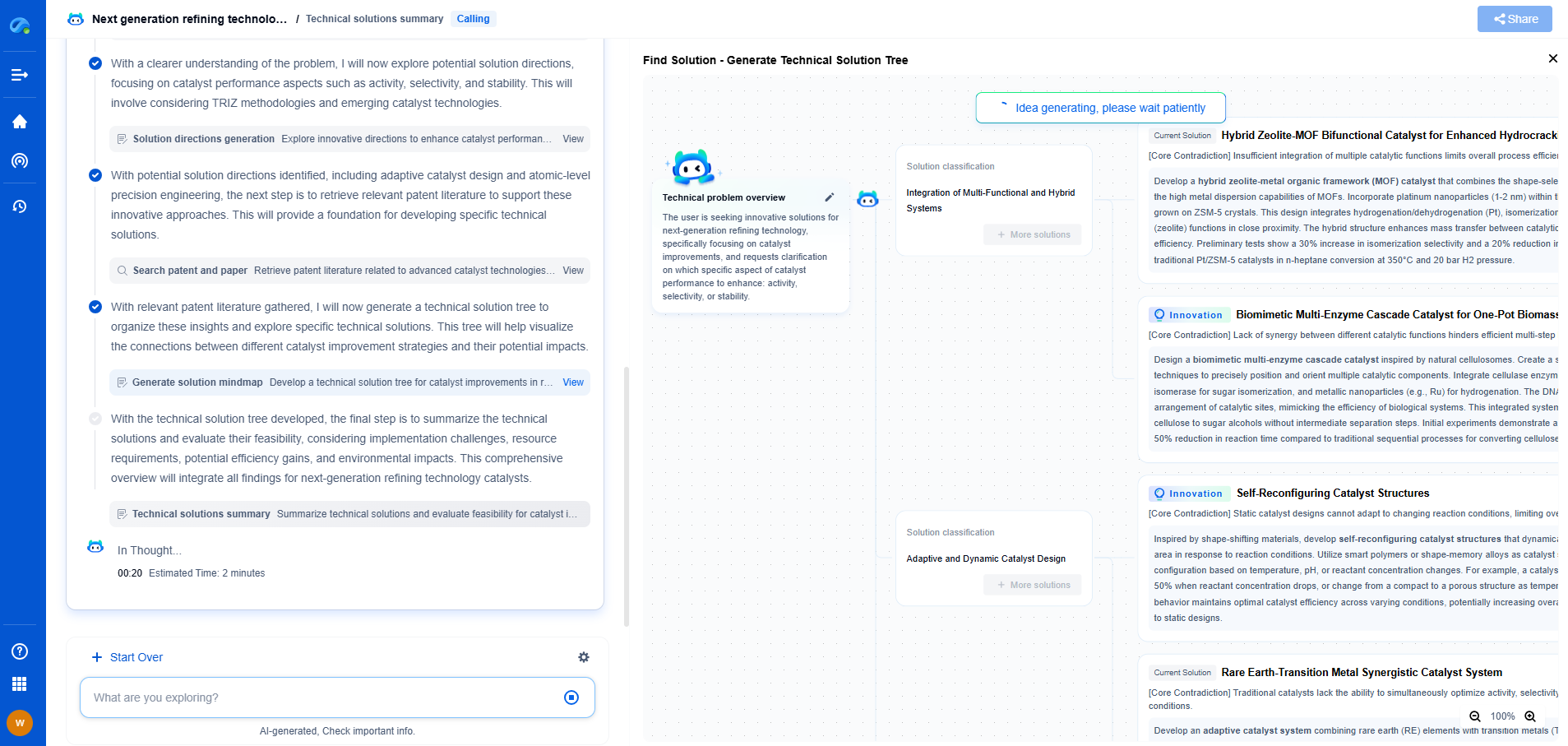

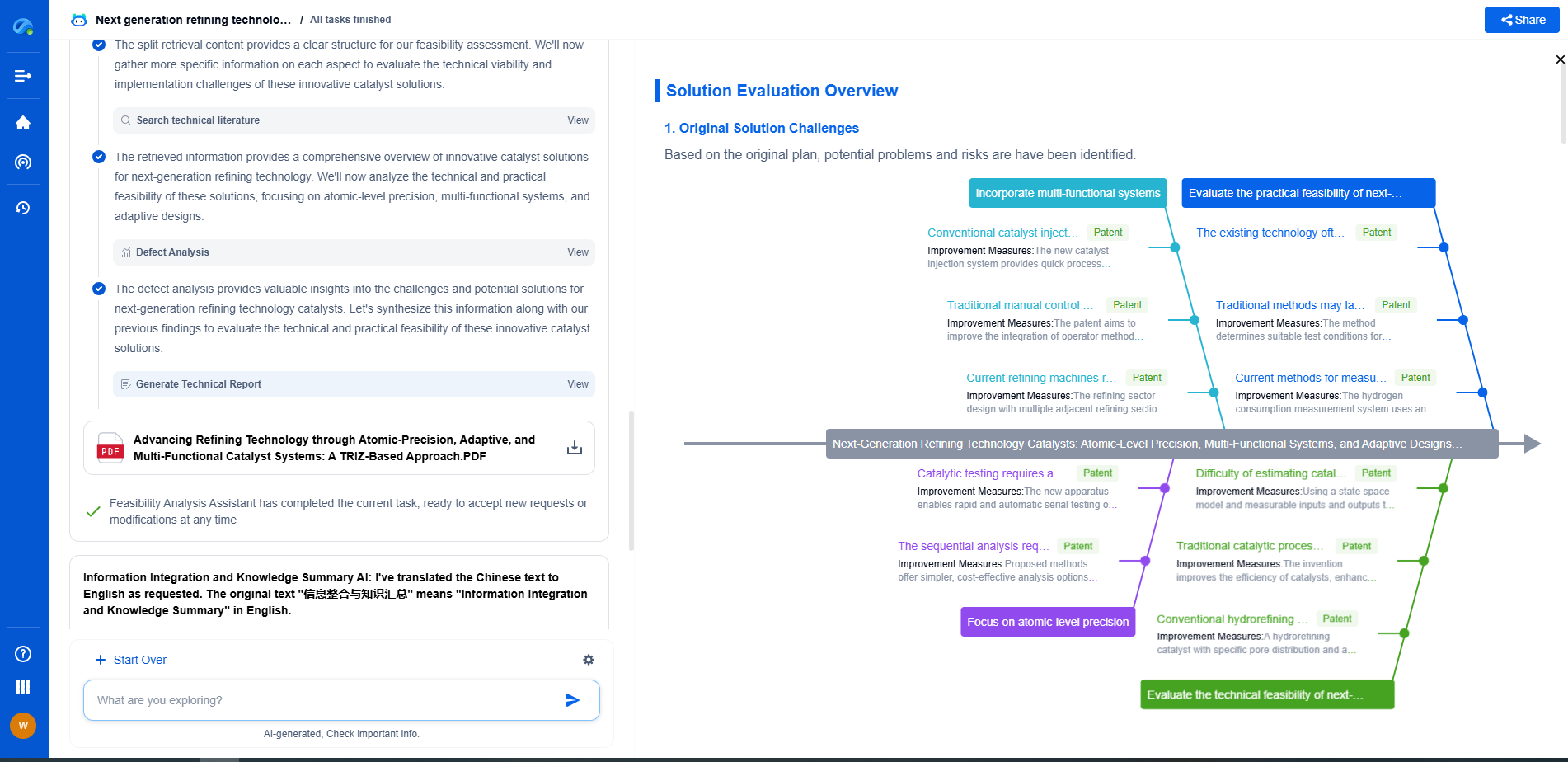
