Reinforcement Learning vs Model Predictive Control: A Technical Comparison

JUL 2, 2025

In control systems and decision-making, Reinforcement Learning (RL) and Model Predictive Control (MPC) are two of the most widely used approaches. Both offer robust frameworks for handling complex systems in dynamic environments, yet they work in fundamentally different ways. This post walks through the technical aspects of RL and MPC and compares their strengths, weaknesses, and ideal use cases.

Understanding Reinforcement Learning

Reinforcement Learning is a subset of machine learning where an agent learns to make decisions by interacting with an environment. The agent seeks to maximize cumulative rewards by taking actions based on its observations. Central to RL is the concept of trial and error, where the agent improves its strategy or policy over time.

Key Components of RL:

- **Agent**: The decision-maker or learner.

- **Environment**: Everything the agent interacts with.

- **Actions**: All possible moves the agent can make.

- **Rewards**: Feedback from the environment based on the actions taken.

- **Policy**: A strategy employed by the agent to decide actions based on states.

- **Value Function**: An estimate of the expected cumulative future reward from a given state (or state-action pair), which helps the agent judge how good its current situation is.
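To make these components concrete, here is a minimal sketch using tabular Q-learning, one common RL algorithm chosen here purely for illustration. The five-state corridor environment, the `step` and `train` functions, and the hyperparameter values are all hypothetical and are not taken from any particular library.

```python
import random

# Hypothetical environment: a corridor with states 0..4.
# The agent starts in state 0 and receives reward +1 on reaching state 4.
N_STATES = 5
ACTIONS = (-1, +1)  # move left or move right

def step(state, action):
    """Environment dynamics: returns (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def train(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    # Action-value table: q[state][action_index]
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy policy: explore at random sometimes (and on ties),
            # otherwise pick the action with the higher estimated value.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning update: move the estimate toward reward + discounted best next value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q

q_table = train()
# Greedy policy for the non-terminal states, read off the learned value table.
greedy_policy = [ACTIONS[0 if row[0] > row[1] else 1] for row in q_table[:-1]]
```

The agent is the update loop, the environment is `step`, the policy is the epsilon-greedy rule, and the value function is the table `q`. Note that the agent never inspects the corridor's dynamics directly; it only observes the transitions and rewards that `step` returns.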

RL's advantage lies in its ability to handle problems where a model of the environment is unknown or too complex to design accurately. Applications range from game playing, such as AlphaGo, to robotics and autonomous driving.

Understanding Model Predictive Control

Model Predictive Control is an advanced control strategy that uses a model of the system to predict the future behavior of its variables over a finite time horizon. At each time step, MPC solves an optimization problem over that horizon, subject to constraints and objectives, applies only the first control action of the resulting plan, and then repeats the process from the newly measured state (the receding-horizon principle).

Key Components of MPC:

- **Model**: A mathematical representation of the system dynamics.

- **Prediction Horizon**: The future time span over which predictions are made.

- **Optimization**: The process of finding the sequence of control actions that minimizes a cost function (for example, tracking error plus control effort) over the prediction horizon.

- **Constraints**: Limitations or bounds on the system's states and controls.

MPC is widely used in industry because it handles multi-variable control problems with constraints, which makes it well suited to chemical process control and to automotive and aerospace applications.
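The pieces above (model, prediction horizon, optimization, constraints) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production controller: the scalar model `x_{k+1} = a*x_k + b*u_k`, the quadratic cost weights, the input bound `u_max`, and the function names are all hypothetical, and the optimization is solved only approximately with projected gradient descent rather than a dedicated QP solver.

```python
def rollout(x0, controls, a, b):
    """Model: predict states x_1..x_N using x_{k+1} = a*x_k + b*u_k."""
    states, x = [], x0
    for u in controls:
        x = a * x + b * u
        states.append(x)
    return states

def solve_horizon(x0, a=1.1, b=0.5, horizon=5, q=1.0, r=0.1,
                  u_max=1.0, step=0.05, iters=300):
    """Approximately minimise sum(q*x_{k+1}^2 + r*u_k^2) subject to |u_k| <= u_max."""
    u = [0.0] * horizon
    for _ in range(iters):
        xs = rollout(x0, u, a, b)
        # Backward pass: lam accumulates d(cost)/d(x_{k+1}) from the end of the horizon.
        grad, lam = [0.0] * horizon, 0.0
        for k in reversed(range(horizon)):
            lam = 2.0 * q * xs[k] + a * lam
            grad[k] = 2.0 * r * u[k] + b * lam
        # Gradient step, then projection onto the box constraint on the inputs.
        u = [min(max(uk - step * gk, -u_max), u_max) for uk, gk in zip(u, grad)]
    return u

def run_mpc(x0=3.0, steps=30, a=1.1, b=0.5, u_max=1.0):
    x, applied, traj = x0, [], [x0]
    for _ in range(steps):
        plan = solve_horizon(x, a=a, b=b, u_max=u_max)
        u0 = plan[0]        # receding horizon: apply only the first planned move
        x = a * x + b * u0  # the simulated plant equals the model here (no mismatch)
        applied.append(u0)
        traj.append(x)
    return traj, applied

trajectory, inputs = run_mpc()
```

In this sketch the open-loop system is unstable (`a > 1`), yet the controller drives the state toward zero while every applied input stays inside the bound. The constraint is enforced inside the optimization itself rather than added afterward, which is the property that makes MPC attractive for systems with hard limits.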

Comparison of Reinforcement Learning and Model Predictive Control

1. **Model Dependency**:

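- RL is often model-free, meaning it does not require a pre-defined mathematical model of the environment and instead learns from sampled interactions. (Model-based RL variants exist, but they typically learn the model from data rather than requiring it up front.) This makes RL flexible and applicable to a wide range of complex and uncertain environments.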

- MPC is model-based, relying heavily on an accurate model to predict future outcomes. This can be both an advantage and a limitation, as the quality of control depends on the model's precision.

2. **Adaptability**:

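- RL is well suited to dynamic and stochastic environments, since it learns from the feedback it receives. It keeps adapting after deployment only if learning continues online; a policy that is trained once and then frozen does not adapt further.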

- MPC, while adaptive in recalculating optimal actions at each timestep, can struggle in environments where the system dynamics change rapidly and unpredictably unless frequent model updates are feasible.

3. **Computational Resources**:

- RL, particularly deep reinforcement learning, can be computationally expensive due to the need for extensive simulation and training, especially in high-dimensional spaces.

- MPC requires solving an optimization problem in real-time at each control step, which can become computationally intensive for large-scale or fast-sampling systems.

4. **Long-Term Planning**:

- RL is designed to maximize long-term cumulative reward, usually discounted so that near-term rewards count more, making it suitable for tasks where long-term planning and foresight are crucial.

- MPC focuses on a finite prediction horizon and may require careful tuning of the horizon length to balance long-term performance with computational feasibility.
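The difference in planning horizon is easiest to see by writing the two objectives side by side. The notation below is a standard textbook form and is an assumption of this sketch, not something defined earlier in the post: $\gamma$ is a discount factor, $r_t$ the reward, $\ell$ a stage cost, $V_f$ an optional terminal cost, $f$ the system model, $N$ the prediction horizon, and $\mathcal{X}$, $\mathcal{U}$ the admissible state and input sets.

```latex
% RL: expected discounted return over an (in principle) infinite horizon
\max_{\pi} \; \mathbb{E}_{\pi}\!\left[ \sum_{k=0}^{\infty} \gamma^{k} \, r_{t+k+1} \right],
\qquad 0 \le \gamma < 1

% MPC: finite-horizon cost, re-solved at every time step from the current state x_0
\min_{u_0, \dots, u_{N-1}} \; \sum_{k=0}^{N-1} \ell(x_k, u_k) + V_f(x_N)
\quad \text{s.t.} \quad x_{k+1} = f(x_k, u_k), \;\; x_k \in \mathcal{X}, \;\; u_k \in \mathcal{U}
```

A longer $N$ lets MPC see further ahead at the price of a larger optimization problem, while the terminal cost $V_f$ is one common way to approximate what happens beyond the horizon.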

5. **Handling Constraints**:

- MPC naturally incorporates constraints into its optimization framework, making it effective for systems with strict operational limits.

- RL can handle constraints, but this often requires additional techniques such as penalty functions or constrained policy optimization, which may complicate the learning process.
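As a rough illustration of the penalty-function approach mentioned above, the reward can be reduced in proportion to how far a constraint is violated. The function name, the symmetric state limit, and the penalty weight below are hypothetical choices for this sketch.

```python
def penalized_reward(task_reward, state, limit, weight=10.0):
    """Subtract a penalty proportional to how far |state| exceeds the limit."""
    violation = max(0.0, abs(state) - limit)
    return task_reward - weight * violation

# Inside the limit the reward is unchanged; outside it the penalty applies.
inside = penalized_reward(1.0, state=0.5, limit=1.0)
outside = penalized_reward(1.0, state=1.5, limit=1.0)
```

A penalty of this kind is a soft constraint: it discourages violations but does not rule them out, and the weight usually needs tuning. This contrasts with MPC, where the bounds enter the optimization problem directly.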

Applications and Use Cases

Reinforcement Learning has found significant success in applications where the environment is highly dynamic and uncertain, such as autonomous vehicles, robotic manipulation, and game AI. Model Predictive Control, on the other hand, is preferred where system dynamics are well understood and constraints are a critical factor, such as process control, energy management, and HVAC systems.

Conclusion

Reinforcement Learning and Model Predictive Control each offer unique advantages for control and decision-making applications. The choice between them depends on the specific requirements of the task at hand, including model availability, environmental dynamics, computational resources, and the need for explicit constraint handling. By understanding the nuances of both approaches, practitioners can make informed decisions to harness the full potential of these powerful methodologies.

Ready to Reinvent How You Work on Control Systems?

Designing, analyzing, and optimizing control systems involves complex decision-making, from selecting the right sensor configurations to ensuring robust fault tolerance and interoperability. If you’re spending countless hours digging through documentation, standards, patents, or simulation results — it's time for a smarter way to work.

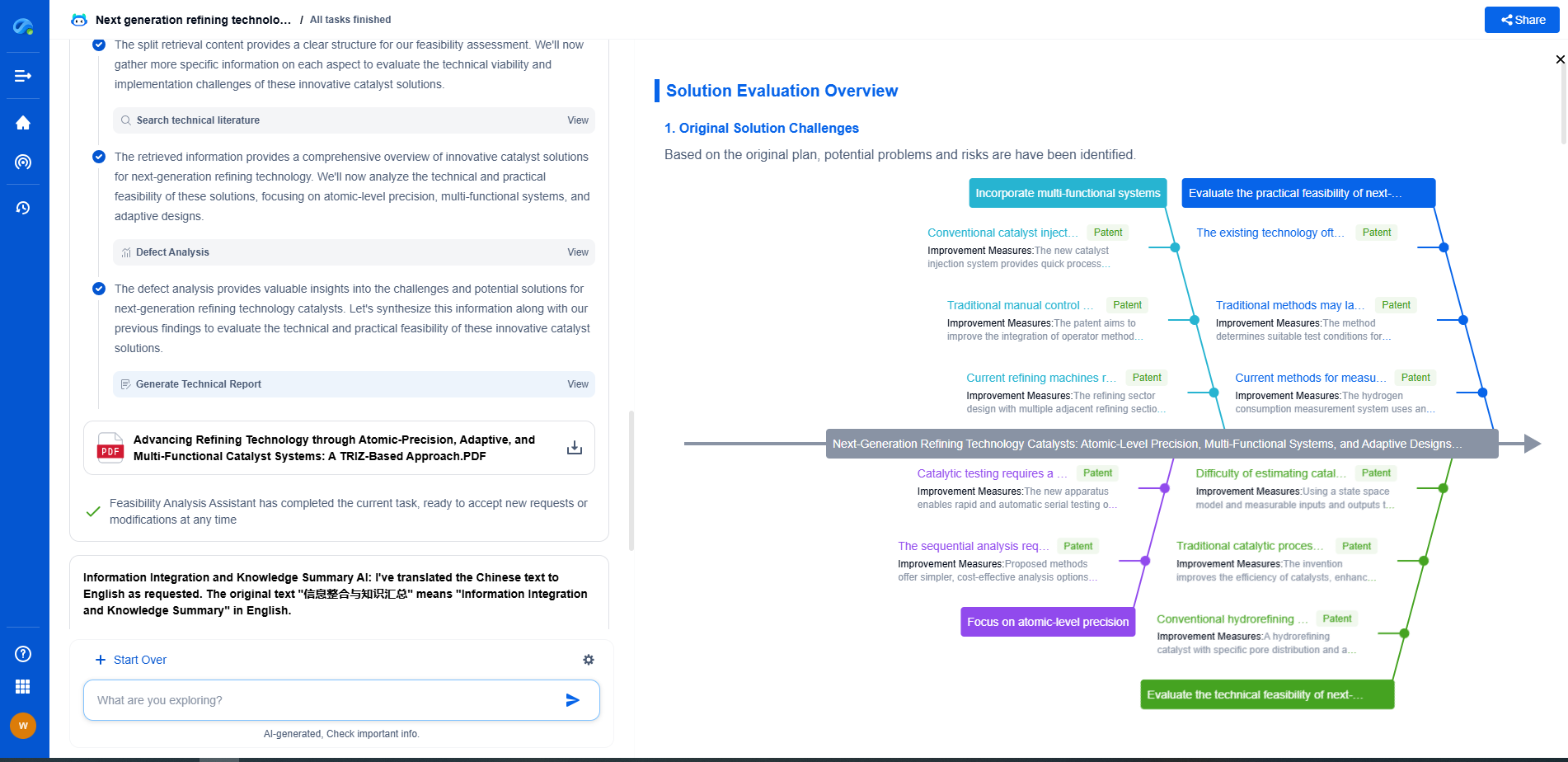

Patsnap Eureka is your intelligent AI Agent, purpose-built for R&D and IP professionals in high-tech industries. Whether you're developing next-gen motion controllers, debugging signal integrity issues, or navigating complex regulatory and patent landscapes in industrial automation, Eureka helps you cut through technical noise and surface the insights that matter—faster.

👉 Experience Patsnap Eureka today — Power up your Control Systems innovation with AI intelligence built for engineers and IP minds.