SDR Latency Optimization: Reducing Host-Device Overhead

JUL 14, 2025

Software-defined radio (SDR) moves much of a radio's signal processing into software, which gives it a flexibility and scalability that hardware-based systems cannot match. One of the primary challenges in SDR systems is managing latency, particularly the overhead between the host and the device. Keeping this latency low is crucial for applications that require real-time data processing and communication. This article covers methods for reducing host-device overhead in SDR systems so that data is transferred and processed on time.

Understanding SDR Latency

Before diving into optimization strategies, it's essential to understand what contributes to latency in SDR systems. Latency can arise from several sources:

1. Data Transfer Latency: The time taken to move data between the host computer and the SDR hardware. It depends on the bus used (e.g., USB, PCIe): its bandwidth, its per-transfer latency, and the size of each transfer.

2. Processing Latency: Once data is received, it must be processed by the host. The speed and efficiency of the host's CPU and memory can impact processing time.

3. Communication Protocol Overhead: The protocols used for communication between the host and device add delay of their own, for example through handshakes and acknowledgments.

Identifying these sources is the first step in effective latency management.
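As a rough illustration of how these sources add up, the sketch below sums a latency budget for a single receive path. Every number and name in it is an assumption chosen for illustration, not a measurement of any real device.

```python
# Hypothetical latency budget for one receive path (all values are illustrative).
SAMPLE_RATE_HZ = 1_000_000   # assumed sample rate: 1 MS/s
BUFFER_SAMPLES = 4096        # assumed number of samples per transfer buffer


def buffer_fill_time_s(num_samples: int, sample_rate_hz: float) -> float:
    """Time needed to collect one full buffer before it can be transferred."""
    return num_samples / sample_rate_hz


budget_s = {
    "data transfer: buffer fill": buffer_fill_time_s(BUFFER_SAMPLES, SAMPLE_RATE_HZ),
    "protocol overhead: bus and driver": 250e-6,   # assumed value
    "processing on the host": 500e-6,              # assumed value
}

total_s = sum(budget_s.values())
for name, seconds in budget_s.items():
    print(f"{name:36s} {seconds * 1e6:8.1f} us")
print(f"{'total':36s} {total_s * 1e6:8.1f} us")
```

With these assumed figures, the time spent filling the buffer dominates the budget, which suggests measuring each contribution before deciding where to optimize.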

Optimizing Data Transfer

1. Selecting the Right Interface

The interface used for communication between the host and SDR device plays a significant role in data transfer speeds. USB connections, especially older versions such as USB 2.0, can introduce substantial latency, both because of their lower bandwidth and because every USB transfer is scheduled by the host controller. Opting for faster interfaces like PCIe or Thunderbolt can significantly reduce data transfer times.
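A quick way to rule an interface in or out is to compare the stream's required throughput with what the link can carry. The sketch below assumes 16-bit I/Q samples and a made-up derating factor (`USABLE_FRACTION`) for encoding and protocol overhead; only the nominal signalling rates are standard figures. It checks throughput headroom only and says nothing about per-transfer latency.

```python
BYTES_PER_IQ_SAMPLE = 4   # assumed sample format: 16-bit I + 16-bit Q

# Nominal signalling rates in bit/s; usable throughput is lower in practice.
NOMINAL_BITS_PER_S = {
    "USB 2.0": 480e6,
    "USB 3.0": 5e9,
}
USABLE_FRACTION = 0.6     # hypothetical derating, not a measured value


def required_bytes_per_s(sample_rate_hz: float, channels: int = 1) -> float:
    """Throughput needed to stream I/Q samples continuously."""
    return sample_rate_hz * channels * BYTES_PER_IQ_SAMPLE


def has_headroom(interface: str, sample_rate_hz: float, channels: int = 1) -> bool:
    """True if the assumed usable throughput covers the stream."""
    usable_bytes_per_s = NOMINAL_BITS_PER_S[interface] / 8 * USABLE_FRACTION
    return required_bytes_per_s(sample_rate_hz, channels) <= usable_bytes_per_s


for name in NOMINAL_BITS_PER_S:
    print(name, "can carry 20 MS/s:", has_headroom(name, 20e6))
```

A link running close to its limit tends to queue data, so a comfortable margin matters for latency as well as for throughput.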

2. Efficient Buffer Management

Proper buffer management is crucial for minimizing latency. Buffers store data temporarily during transfer, and their size is a trade-off: larger buffers reduce per-transfer overhead but make each sample wait longer before it is processed, while smaller buffers lower latency at the cost of more frequent transfers and a higher risk of overruns. Techniques like double buffering, where one buffer is filled while the other is being processed, help maintain a steady data flow.
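The double-buffering pattern described above can be sketched with two preallocated buffers that circulate between a producer thread and a consumer thread. `simulated_device_read` is a hypothetical stand-in for a real driver call, and the buffer size and block count are arbitrary.

```python
import queue
import threading

BUFFER_BYTES = 1024   # assumed buffer size
NUM_BLOCKS = 8        # number of blocks the simulated device produces


def simulated_device_read(buf: bytearray, block_index: int) -> None:
    """Hypothetical stand-in for a driver call that fills buf with samples."""
    buf[:] = bytes([block_index % 256]) * len(buf)


def producer(free_q: queue.Queue, filled_q: queue.Queue) -> None:
    for i in range(NUM_BLOCKS):
        buf = free_q.get()          # waits until one of the two buffers is free
        simulated_device_read(buf, i)
        filled_q.put(buf)
    filled_q.put(None)              # sentinel: no more data


def consumer(free_q: queue.Queue, filled_q: queue.Queue, results: list) -> None:
    while True:
        buf = filled_q.get()
        if buf is None:
            break
        results.append(sum(buf))    # placeholder for real signal processing
        free_q.put(buf)             # hand the buffer back for refilling


def run_double_buffered() -> list:
    free_q, filled_q = queue.Queue(), queue.Queue()
    for _ in range(2):              # exactly two buffers, reused throughout
        free_q.put(bytearray(BUFFER_BYTES))
    results = []
    threads = [
        threading.Thread(target=producer, args=(free_q, filled_q)),
        threading.Thread(target=consumer, args=(free_q, filled_q, results)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


results = run_double_buffered()
print(results)
```

Because only two buffers exist, the producer stalls whenever the consumer falls behind, which bounds memory use and makes a slow processing stage visible early.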

3. Minimizing Interruptions

Interruptions during data transfer can exacerbate latency issues, typically when other processes compete with the SDR application for the CPU or the bus. Ensuring that the system prioritizes SDR data transfers and minimizes interruptions from other processes leads to smoother operation. Running a real-time operating system (RTOS), or configuring the host to schedule SDR-related tasks ahead of other work, can be beneficial.
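On a Linux host, one way to prioritize an SDR process is to request a real-time scheduling policy. The sketch below is a minimal example that assumes Linux and sufficient privileges. The priority value of 50 is arbitrary, and the function falls back gracefully when the request is refused or the platform lacks the call.

```python
import os


def try_set_realtime_priority(priority: int = 50) -> bool:
    """Request SCHED_FIFO for the calling process; return True on success."""
    if not hasattr(os, "sched_setscheduler"):
        return False   # call not available on this platform
    try:
        os.sched_setscheduler(0, os.SCHED_FIFO, os.sched_param(priority))
        return True
    except OSError:
        return False   # usually means missing privileges (root or CAP_SYS_NICE)


if try_set_realtime_priority():
    print("running under SCHED_FIFO")
else:
    print("real-time scheduling not granted; continuing with the default policy")
```

A real-time thread that never blocks can starve the rest of the system, so this is usually applied only to the threads that service the device.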

Enhancing Processing Efficiency

1. Optimizing Signal Processing Algorithms

The algorithms used for signal processing on the host directly affect latency, so optimizing them for speed is crucial. Techniques such as parallelizing algorithms, using optimized libraries, and offloading work to hardware accelerators (e.g., GPUs) can shorten processing times.
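As a small example of algorithmic optimization, the sketch below compares two implementations of a moving-average filter: one recomputes every window sum from scratch, the other updates a running sum. It then reports each runtime as a fraction of the block's real-time duration. The sample rate, block size, and tap count are assumptions, and a moving average stands in for whatever processing the application actually performs.

```python
import time

SAMPLE_RATE_HZ = 48_000   # assumed sample rate
TAPS = 64                 # assumed filter length


def moving_average_naive(x, taps):
    """Recompute each window sum from scratch: O(len(x) * taps)."""
    return [sum(x[i:i + taps]) / taps for i in range(len(x) - taps + 1)]


def moving_average_running(x, taps):
    """Update the window sum incrementally: O(len(x))."""
    if len(x) < taps:
        return []
    window_sum = sum(x[:taps])
    out = [window_sum / taps]
    for i in range(taps, len(x)):
        window_sum += x[i] - x[i - taps]   # add the new sample, drop the oldest
        out.append(window_sum / taps)
    return out


def best_time_s(fn, *args, repeats=5):
    """Smallest wall-clock time over several runs."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        best = min(best, time.perf_counter() - start)
    return best


block = [float(i % 17) for i in range(4096)]
block_duration_s = len(block) / SAMPLE_RATE_HZ

for fn in (moving_average_naive, moving_average_running):
    elapsed = best_time_s(fn, block, TAPS)
    print(f"{fn.__name__}: {elapsed * 1e3:.3f} ms "
          f"({elapsed / block_duration_s:.1%} of the block duration)")
```

Processing keeps up with the stream only if each block is handled in less time than it takes to arrive, so the fraction printed here must stay below 100%, with margin for the rest of the pipeline.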

2. Balancing Load Distribution

Distributing the workload between the host CPU and any available co-processors (like GPUs) can lead to more efficient processing. Balancing this load effectively ensures that no single component becomes a bottleneck, thus reducing latency.
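One simple balancing rule is to split the work in proportion to each processor's throughput, so that both finish at about the same time. The sketch below uses hypothetical throughput figures and ignores the cost of copying data to the co-processor, which a real design would need to add to the co-processor's side.

```python
def split_blocks(total_blocks: int, cpu_blocks_per_s: float, gpu_blocks_per_s: float):
    """Split blocks in proportion to throughput so both sides finish together."""
    cpu_share = cpu_blocks_per_s / (cpu_blocks_per_s + gpu_blocks_per_s)
    cpu_blocks = round(total_blocks * cpu_share)
    return cpu_blocks, total_blocks - cpu_blocks


def finish_time_s(blocks: int, blocks_per_s: float) -> float:
    """Time for one processor to work through its share."""
    return blocks / blocks_per_s


# Hypothetical throughputs: the co-processor is assumed three times faster.
CPU_RATE, GPU_RATE = 2000.0, 6000.0
cpu_n, gpu_n = split_blocks(100, CPU_RATE, GPU_RATE)
print(f"CPU: {cpu_n} blocks, {finish_time_s(cpu_n, CPU_RATE) * 1e3:.1f} ms")
print(f"GPU: {gpu_n} blocks, {finish_time_s(gpu_n, GPU_RATE) * 1e3:.1f} ms")
```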

Reducing Protocol Overhead

1. Streamlining Communication Protocols

The protocols used for communication between the host and SDR device should be as streamlined as possible. Reducing unnecessary handshakes and acknowledgments can lower the time taken for data exchange.
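The effect of per-exchange handshakes can be estimated with simple arithmetic: a fixed cost is paid once per transfer, so fewer and larger transfers spend less total time on overhead. The overhead and wire-time figures below are assumptions for illustration only.

```python
def total_transfer_time_s(total_samples: int, samples_per_transfer: int,
                          overhead_per_transfer_s: float,
                          wire_time_per_sample_s: float) -> float:
    """Fixed handshake cost per transfer plus the time the samples occupy the link."""
    transfers = -(-total_samples // samples_per_transfer)   # ceiling division
    return transfers * overhead_per_transfer_s + total_samples * wire_time_per_sample_s


TOTAL_SAMPLES = 65_536
OVERHEAD_S = 100e-6      # assumed handshake/acknowledgment cost per transfer
WIRE_TIME_S = 10e-9      # assumed link time per sample

for size in (256, 4096):
    t = total_transfer_time_s(TOTAL_SAMPLES, size, OVERHEAD_S, WIRE_TIME_S)
    print(f"{size:5d} samples per transfer: {t * 1e3:.3f} ms in total")
```

Larger transfers amortize the handshake cost but also make each sample wait longer for its buffer to fill, so the transfer size has to be chosen against the application's latency target.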

2. Custom Protocols for Critical Applications

In scenarios where latency requirements are stringent, developing custom communication protocols tailored to specific application needs can be advantageous. These protocols can be designed to minimize overhead and optimize data throughput.
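A custom protocol can keep overhead small by using a compact fixed-size binary header. The frame layout below is entirely hypothetical (field names, sizes, and the magic value are invented for this sketch) and is meant only to show the idea of a 12-byte header carrying a sequence number and a payload length.

```python
import struct

# Hypothetical little-endian header, 12 bytes with no padding:
# magic (uint16), flags (uint8), channel (uint8), sequence (uint32), payload length (uint32)
HEADER = struct.Struct("<HBBII")
MAGIC = 0x5D52   # arbitrary marker value chosen for this sketch


def pack_frame(seq: int, channel: int, payload: bytes, flags: int = 0) -> bytes:
    """Prefix the payload with the fixed-size header."""
    return HEADER.pack(MAGIC, flags, channel, seq, len(payload)) + payload


def unpack_frame(frame: bytes):
    """Parse one frame and return (seq, channel, flags, payload)."""
    magic, flags, channel, seq, length = HEADER.unpack_from(frame)
    if magic != MAGIC:
        raise ValueError("unexpected magic value")
    payload = frame[HEADER.size:HEADER.size + length]
    if len(payload) != length:
        raise ValueError("truncated frame")
    return seq, channel, flags, payload


frame = pack_frame(seq=7, channel=1, payload=b"\x01\x02\x03\x04")
print(len(frame), unpack_frame(frame))
```

The sequence number lets the receiver detect dropped frames without a per-frame acknowledgment, which is one way such a design avoids round trips.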

Conclusion

Reducing host-device overhead in SDR systems is a multifaceted challenge that requires attention to data transfer, processing efficiency, and communication protocols. Selecting the right interface, managing buffers carefully, optimizing processing on the host, and streamlining communication can together reduce latency significantly. These optimizations are crucial for SDR applications where real-time processing and minimal delays are non-negotiable. As SDR technology continues to evolve, ongoing research and development in latency reduction will remain important to realizing the full potential of these systems.

From 5G NR to SDN and quantum-safe encryption, the digital communication landscape is evolving faster than ever. For R&D teams and IP professionals, tracking protocol shifts, understanding standards like 3GPP and IEEE 802, and monitoring the global patent race are now mission-critical.

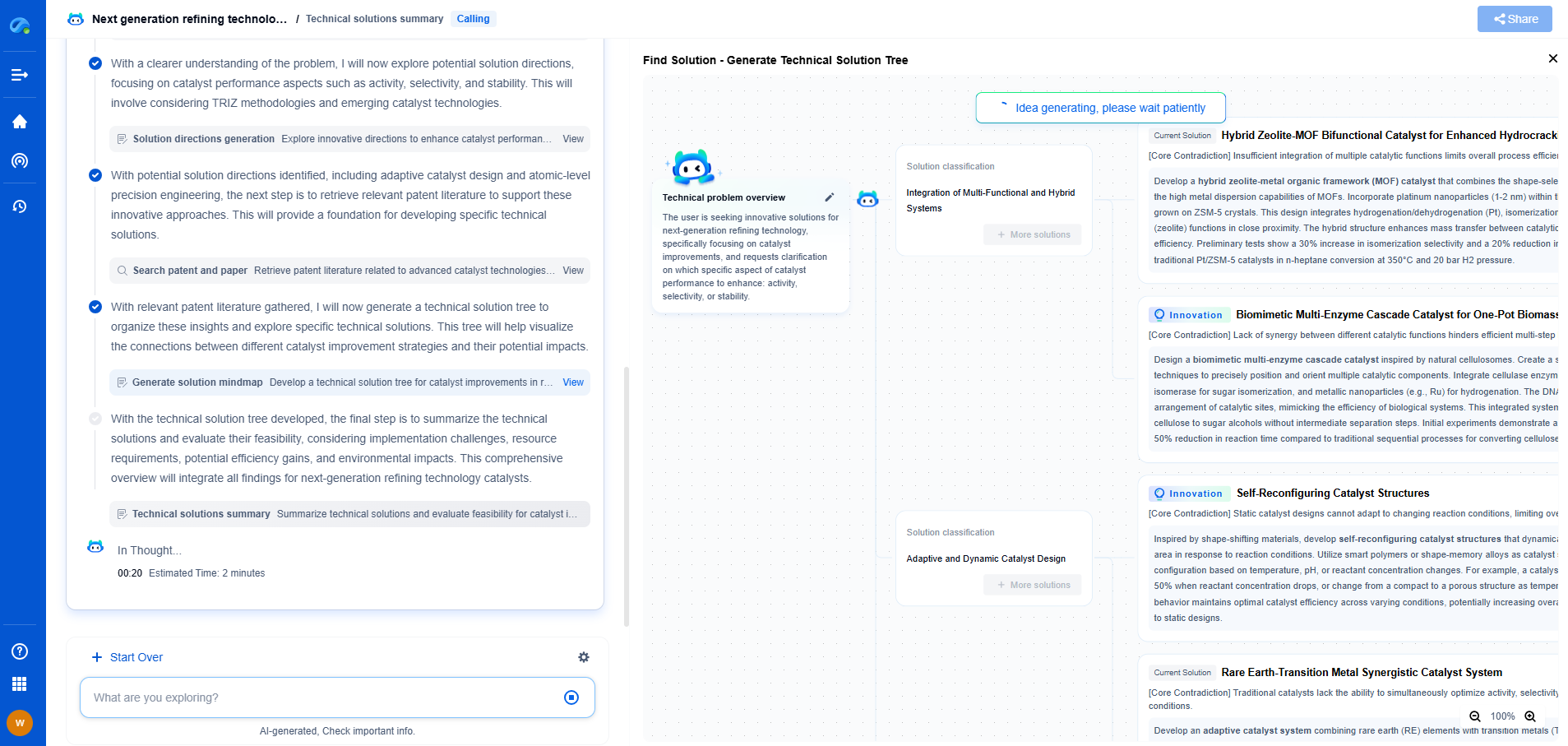

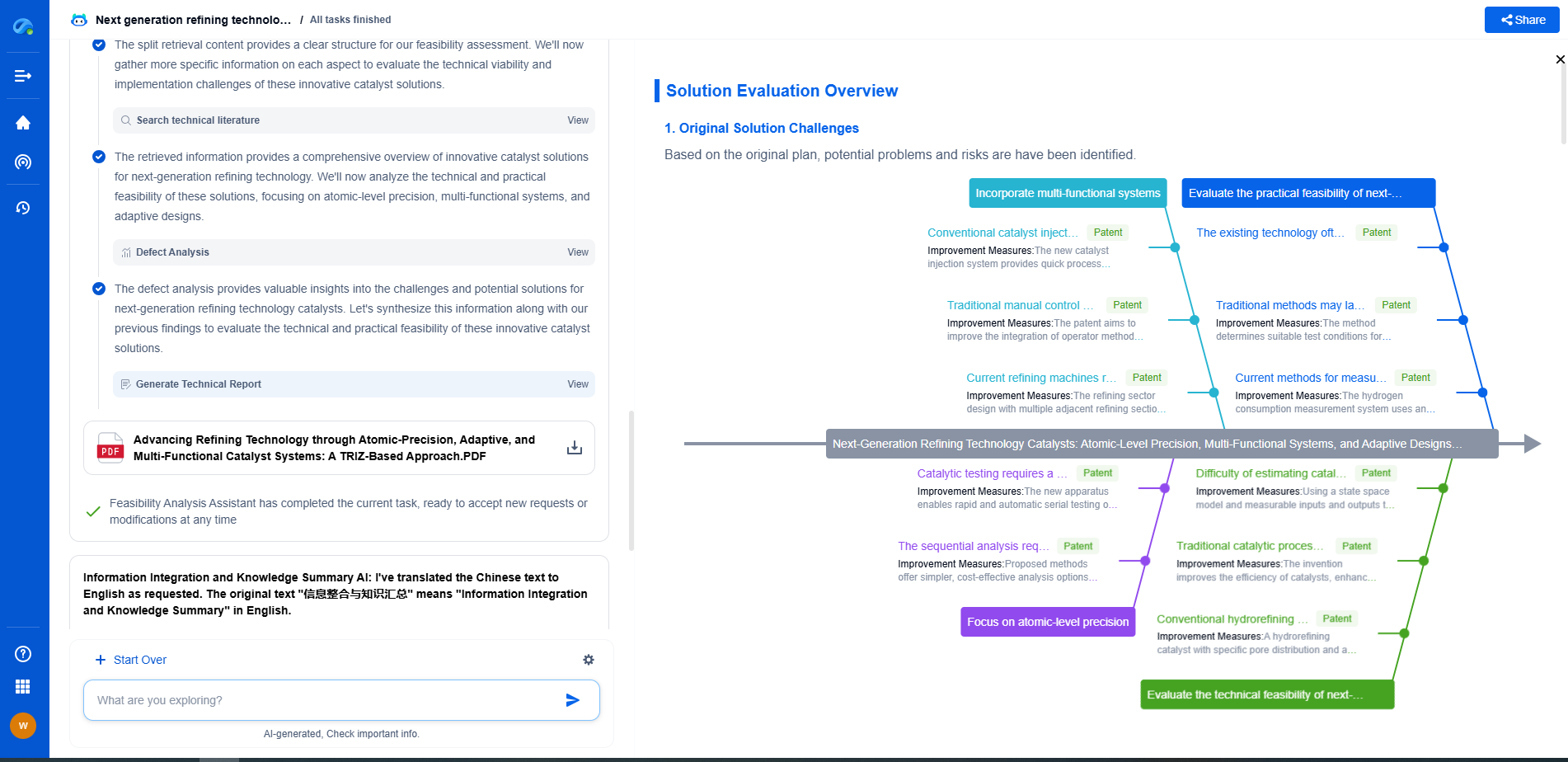

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

📡 Experience Patsnap Eureka today and unlock next-gen insights into digital communication infrastructure, before your competitors do.