Self-Attention in Vision Transformers: Why It Captures Long-Range Dependencies

JUL 10, 2025

Vision Transformers (ViTs) apply self-attention, the mechanism behind Transformer language models, to images. Unlike traditional convolutional neural networks (CNNs), which build features from small local neighborhoods of pixels, ViTs let every region of an image exchange information with every other region within a single layer. This allows them to model long-range dependencies directly, and it has led to strong performance in various vision tasks. Let's explore how self-attention works in Vision Transformers and why it is so effective at capturing long-range dependencies.

Self-Attention: A New Approach to Image Processing

At the heart of Vision Transformers is the self-attention mechanism, which was initially popularized in the context of natural language processing (NLP) with models like the Transformer. In ViTs, this mechanism is adapted to process image data. The idea is simple yet powerful: instead of focusing on local relationships, self-attention considers every part of an image in relation to every other part.
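In symbols, "every part in relation to every other part" is usually written as scaled dot-product attention. The notation here is the conventional one rather than anything defined in this article: $X$ holds one row per image patch ($N$ rows), $W_Q$, $W_K$, $W_V$ are learned projection matrices, and $d_k$ is the width of the key vectors.

```latex
Q = X W_Q, \qquad K = X W_K, \qquad V = X W_V

\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
```

The product $Q K^{\top}$ is an $N \times N$ matrix with one entry per pair of patches, and the softmax is applied row by row, so each patch's output is a weighted average over all $N$ patches.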

This is achieved by dividing an image into a sequence of fixed-size patches, treating these patches similarly to words in a sentence. Each patch is flattened and linearly projected into a fixed-size vector, and a position embedding is added so the model knows where in the image each patch came from. These vectors are then passed through layers of self-attention, which let the model weigh the importance of different parts of the image when making predictions, capturing both local and global context.
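The patch-and-embed step can be sketched in a few lines of NumPy. This is a minimal illustration under assumed settings, not a reference implementation: the 224×224 image, 16×16 patches, embedding width of 64, and the names `patchify` and `embed_patches` are all chosen for the example, the weights are random rather than learned, and the class token that standard ViTs prepend is left out.

```python
import numpy as np

def patchify(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping patches."""
    h, w, c = image.shape
    assert h % patch_size == 0 and w % patch_size == 0, "image must tile evenly"
    gh, gw = h // patch_size, w // patch_size
    # (gh, p, gw, p, c) -> (gh, gw, p, p, c) -> (gh * gw, p * p * c)
    patches = image.reshape(gh, patch_size, gw, patch_size, c)
    patches = patches.transpose(0, 2, 1, 3, 4)
    return patches.reshape(gh * gw, patch_size * patch_size * c)

def embed_patches(patches: np.ndarray, w_embed: np.ndarray,
                  pos_embed: np.ndarray) -> np.ndarray:
    """Linearly project each patch and add a per-position embedding."""
    return patches @ w_embed + pos_embed

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))          # stand-in for a real image
patches = patchify(image, patch_size=16)   # 14 x 14 = 196 patches of 16*16*3 = 768 values

d_model = 64                               # illustrative embedding width
w_embed = rng.normal(scale=0.02, size=(patches.shape[1], d_model))    # random, not learned
pos_embed = rng.normal(scale=0.02, size=(patches.shape[0], d_model))  # random, not learned
tokens = embed_patches(patches, w_embed, pos_embed)

print(patches.shape, tokens.shape)  # (196, 768) (196, 64)
```

The rows of `tokens` play the role that word embeddings play in a language model: one vector per patch, in raster order.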

Capturing Long-Range Dependencies

One of the standout features of self-attention in ViTs is its ability to capture long-range dependencies. Traditional CNNs are constrained by the size of their receptive fields: each layer sees only a small neighborhood, so distant regions of an image can interact only after many layers have been stacked. In contrast, self-attention allows each image patch to attend to all other patches within a single layer, regardless of their spatial distance.
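A minimal single-head version of this computation makes the "all other patches" property concrete. The sketch below assumes 196 patch tokens of width 64 and uses random projection matrices in place of learned ones; the function names are illustrative, and real ViTs use several heads plus residual connections and normalization, all omitted here.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over N tokens."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])  # (N, N): one score per pair of patches
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
n_tokens, d_model = 196, 64                  # e.g. a 14 x 14 grid of patches
x = rng.normal(size=(n_tokens, d_model))     # stand-in for embedded patches
w_q, w_k, w_v = (rng.normal(scale=d_model ** -0.5, size=(d_model, d_model))
                 for _ in range(3))          # random, not learned

out, attn = self_attention(x, w_q, w_k, w_v)

print(attn.shape)       # (196, 196)
print(attn[0, -1] > 0)  # True: the top-left patch puts weight on the bottom-right patch
```

Every entry of `attn` is strictly positive, so in one layer the first patch already receives information from the last one. How much weight a distant patch actually gets is determined by the learned projections, not by its distance.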

This means that ViTs can recognize patterns and relationships that span across the entire image. For example, understanding the relationship between a person's face and their shoes in a photo, or connecting distant parts of a landscape, becomes natural for a Vision Transformer. This holistic view of images can lead to more accurate and nuanced predictions, especially in complex scenes.

Advantages Over Convolutional Networks

The ability to capture long-range dependencies gives Vision Transformers several advantages over traditional CNNs. First, when pre-trained on sufficiently large datasets, ViTs can match or exceed strong CNNs, and the original ViT work reported doing so with less pre-training compute. While CNNs need many stacked layers, or aggressive downsampling, to expand their receptive fields, a ViT has global reach from its very first attention layer.
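The depth a CNN needs in order to "see" the whole image can be estimated with the standard receptive-field recurrence. The sketch below is a back-of-the-envelope calculation under simplifying assumptions (identical layers, no dilation, theoretical rather than effective receptive field), and the helper names are made up for the example.

```python
def receptive_field(layers):
    """Theoretical receptive field, in input pixels, of stacked conv layers.

    `layers` is a list of (kernel_size, stride) pairs, first layer first.
    """
    r, jump = 1, 1
    for kernel_size, stride in layers:
        r += (kernel_size - 1) * jump  # each layer widens the field by (k - 1) input steps
        jump *= stride                 # striding makes later layers step further
    return r

def layers_to_cover(image_size, kernel_size=3, stride=1):
    """Number of identical conv layers needed before one output sees the full width."""
    layers = []
    while receptive_field(layers) < image_size:
        layers.append((kernel_size, stride))
    return len(layers)

print(layers_to_cover(224, kernel_size=3, stride=1))  # 112
print(layers_to_cover(224, kernel_size=3, stride=2))  # 7
```

With plain 3×3 convolutions, it takes over a hundred layers before a single output position depends on the full width of a 224-pixel image. Downsampling brings this down to a handful of layers, which is how practical CNNs cope, whereas a self-attention layer connects all patches at depth one.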

Additionally, ViTs are often reported to be more robust to some variations in image input, such as partial occlusion, because attention can draw on whichever patches remain informative. Self-attention is not inherently invariant to scaling or rotation, however, so robustness to those transformations still has to be learned from data and augmentation. Where it holds, this robustness makes ViTs well-suited for real-world applications where such variations are common.

Challenges and Future Directions

Despite their advantages, Vision Transformers are not without challenges. Because they lack the built-in locality and translation-equivariance priors of convolutions, training these models from scratch requires large datasets, and the cost of self-attention grows quadratically with the number of patches. Both can be a barrier for many practitioners. However, ongoing research is focused on improving the efficiency and scalability of ViTs, making them more accessible to a wider audience.
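Part of the computational burden comes from the attention matrix itself, which has one entry per pair of patches. The rough estimate below counts only the two large matrix products inside one attention layer (scores and weighted sum) and ignores the projections and feed-forward blocks. The patch size of 16 and width of 768 are illustrative choices, and `attention_cost` is a hypothetical helper.

```python
def attention_cost(image_size: int, patch_size: int, d_model: int):
    """Rough per-layer cost of global self-attention on a square image."""
    n_tokens = (image_size // patch_size) ** 2
    score_entries = n_tokens * n_tokens   # size of the N x N attention matrix
    # Q @ K^T and weights @ V each take about N * N * d multiply-adds.
    macs = 2 * score_entries * d_model
    return n_tokens, score_entries, macs

for size in (224, 448, 896):
    n, entries, macs = attention_cost(size, patch_size=16, d_model=768)
    print(f"{size}px: {n} tokens, {entries:,} score entries, {macs:,} multiply-adds")
```

Doubling the image side quadruples the number of patches (196, then 784, then 3136) and multiplies the attention cost by sixteen, which is why high-resolution inputs are expensive for a plain ViT.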

Moreover, hybrid models that combine the strengths of both CNNs and ViTs are being explored. By leveraging the local sensitivity of CNNs and the global awareness of ViTs, these hybrid architectures aim to provide even better performance across a range of vision tasks.

Conclusion

Vision Transformers, with their self-attention mechanisms, have demonstrated a strong ability to capture long-range dependencies in images and have become a standard architecture in computer vision. By considering every part of an image in relation to every other part, ViTs build a global view of a scene that convolutional models reach only through depth. As research continues to advance, we can expect Vision Transformers to play an increasingly prominent role in the future of image processing.

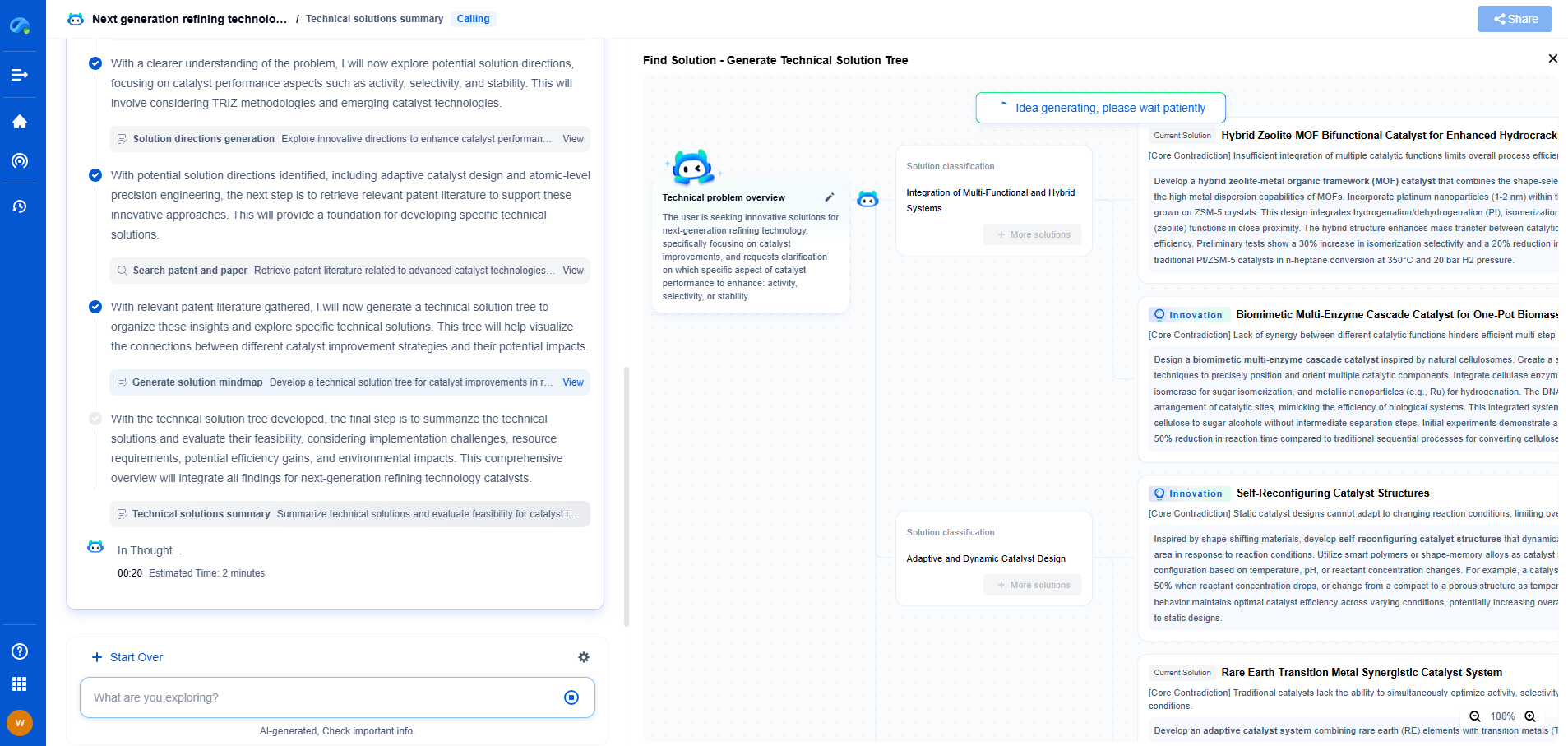

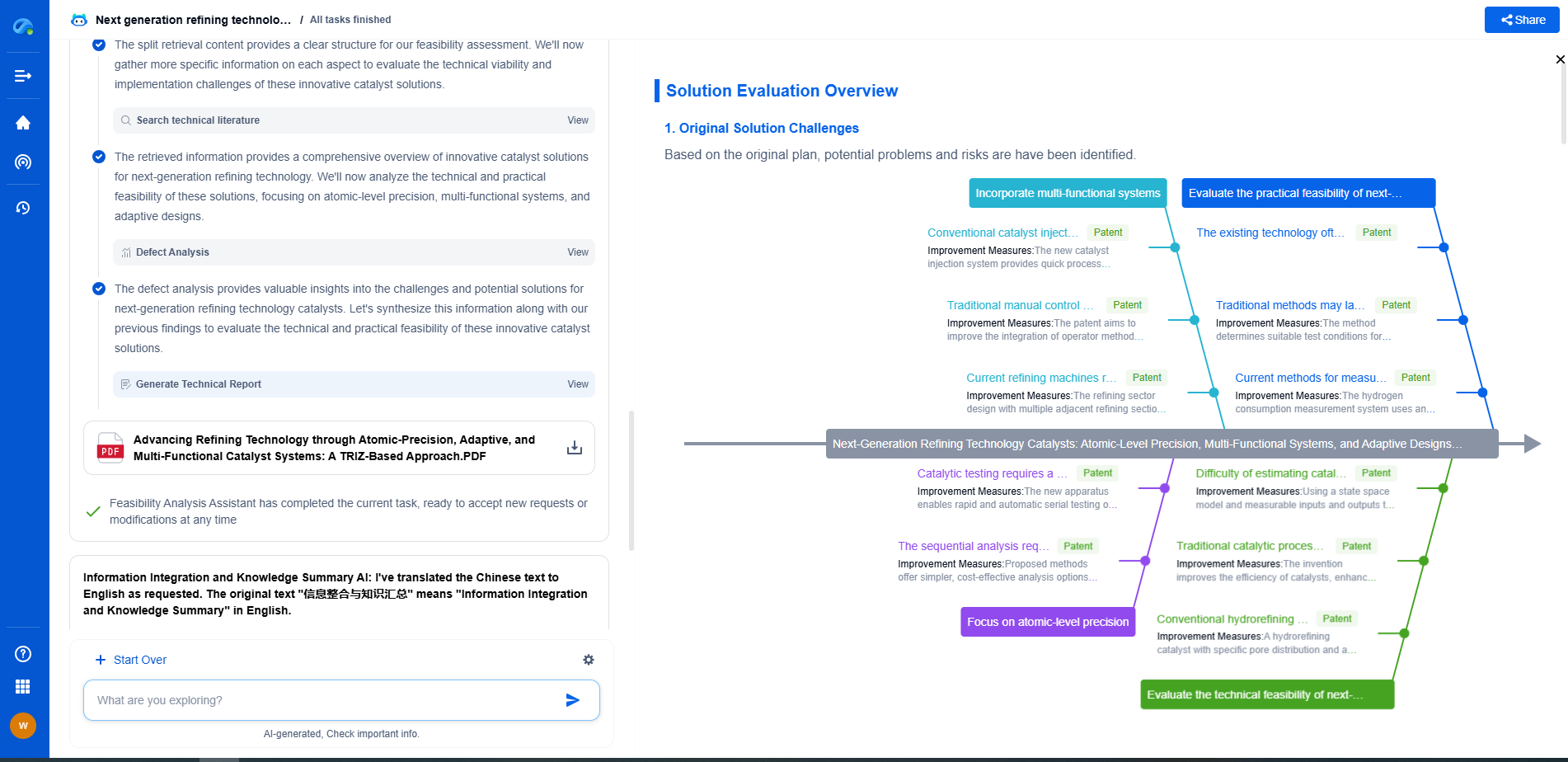
