Serverless Edge Computing: New Challenges for Load Balancing

JUL 14, 2025

Understanding Serverless Edge Computing

To appreciate the challenges of load balancing in serverless edge computing, it's essential to first understand what this computing model entails. Unlike traditional centralized data centers, edge computing pushes data processing closer to where it is generated and consumed—near the 'edge' of the network. Serverless computing, on the other hand, abstracts away the server management layer, allowing developers to focus purely on code without worrying about infrastructure.

Combining the two paradigms, serverless and edge, promises elastic scaling, pay-per-use cost, and lower latency. Achieving these benefits, however, requires rethinking traditional approaches to load balancing.

The Unique Load Balancing Challenges

In a serverless edge environment, load balancing is not just about distributing requests evenly across servers. It involves ensuring efficient allocation of resources across geographically dispersed edge nodes, each with varying capacities and constraints. This introduces several unique challenges:

1. **Dynamic and Distributed Environments**: In traditional computing, load balancers distribute traffic among a relatively fixed number of servers. However, in serverless edge environments, the number and location of resources can change rapidly based on real-time demand. This dynamism makes predicting and managing loads more complex.

2. **Latency Sensitivity**: One of the primary benefits of edge computing is reduced latency. To preserve it, load balancing mechanisms must route each request to a node that is both close to the user and lightly loaded; the nearest node is not the best choice if it is congested. Poor routing negates the latency benefit, frustrating users and degrading application performance.

3. **Resource Constraints**: Edge nodes typically have limited resources compared to centralized data centers. Load balancing must account for these constraints, ensuring that no single node is overwhelmed while still providing the necessary computing power where needed.

4. **Security and Compliance Considerations**: Edge environments often span multiple jurisdictions, each with its own data protection regulations. Load balancers must be aware of these legal boundaries and ensure that data is processed and stored in compliance with local laws, adding another layer of complexity.
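
To make the interplay of latency, capacity, and jurisdiction concrete, here is a minimal Python sketch of a node-selection step. Everything in it is an illustrative assumption rather than an established API: the `EdgeNode` fields, the `pick_node` function, the node names, and the scoring rule (measured latency plus a penalty proportional to utilization, with a hypothetical `congestion_penalty_ms` weight).

```python
from dataclasses import dataclass


@dataclass
class EdgeNode:
    name: str
    region: str        # jurisdiction the node sits in, e.g. "eu" (assumed label)
    latency_ms: float  # measured round-trip time from the client
    capacity: int      # maximum concurrent requests the node can serve
    active: int        # requests currently in flight


def pick_node(nodes, allowed_regions, congestion_penalty_ms=100.0):
    """Return the eligible node with the lowest latency-plus-congestion score."""
    # Compliance and capacity act as hard filters: a node outside the allowed
    # jurisdictions, or one that is already full, is never considered.
    eligible = [
        n for n in nodes
        if n.region in allowed_regions and n.active < n.capacity
    ]
    if not eligible:
        return None  # caller decides whether to queue, reject, or fall back

    def score(n):
        # Latency and congestion are traded off in a single number.
        utilization = n.active / n.capacity
        return n.latency_ms + congestion_penalty_ms * utilization

    return min(eligible, key=score)


nodes = [
    EdgeNode("fra-1", "eu", latency_ms=12.0, capacity=50, active=48),
    EdgeNode("ams-1", "eu", latency_ms=18.0, capacity=50, active=10),
    EdgeNode("nyc-1", "us", latency_ms=8.0, capacity=200, active=20),
]
chosen = pick_node(nodes, allowed_regions={"eu"})
print(chosen.name)
```

In this example the lowest-latency node (`nyc-1`) is excluded because it lies outside the allowed region, and the closer of the two remaining nodes (`fra-1`) loses to `ams-1` because it is almost full. A real balancer would likely tune the penalty weight from observed behavior rather than fix it.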

Strategies for Effective Load Balancing

1. **Intelligent Load Distribution**: AI and machine learning algorithms can improve load balancing by predicting traffic patterns and reallocating resources in real time. They analyze historical data to anticipate spikes in demand and adjust resource distribution accordingly.

2. **Proximity-Based Routing**: Proximity-based routing sends each request to the nearest available edge node, minimizing latency. "Nearest" is best judged by network latency rather than geographic distance, so this requires up-to-date knowledge of the network topology and the real-time status of each node.

3. **Adaptive Resource Management**: Given the variability in edge node capacities, adaptive resource management strategies are crucial. These strategies involve monitoring the health and performance of each node and redistributing workloads to optimize resource utilization.

4. **Enhanced Monitoring and Analytics**: Comprehensive monitoring tools are essential to track the performance of edge nodes and the effectiveness of load balancing strategies. Analytics can provide insights into traffic patterns, helping to refine strategies over time.

5. **Compliance-Aware Load Balancing**: To address legal and compliance challenges, load balancers must incorporate geospatial intelligence, ensuring that data processing complies with jurisdictional requirements. This may involve rerouting traffic to nodes within specific legal boundaries based on data residency requirements.
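
As a rough illustration of the predictive and adaptive strategies above, the following Python sketch forecasts each node's load from its recent history and splits new traffic in proportion to the forecast spare capacity. It deliberately swaps the machine learning model for a much simpler technique, an exponentially weighted moving average, and the function names (`ewma_forecast`, `routing_weights`), node names, and numbers are all hypothetical.

```python
def ewma_forecast(history, alpha=0.5):
    """Exponentially weighted moving average of past request rates."""
    estimate = history[0]
    for observed in history[1:]:
        # Recent observations count more; alpha controls how quickly
        # older samples are forgotten.
        estimate = alpha * observed + (1 - alpha) * estimate
    return estimate


def routing_weights(capacity, history, alpha=0.5):
    """Share of new traffic per node, proportional to forecast spare capacity."""
    headroom = {
        node: max(capacity[node] - ewma_forecast(history[node], alpha), 0.0)
        for node in capacity
    }
    total = sum(headroom.values())
    if total == 0:
        # Every node is forecast to be full: fall back to an even split.
        return {node: 1 / len(headroom) for node in headroom}
    return {node: h / total for node, h in headroom.items()}


# Assumed sample data: requests per second over the last three intervals.
capacity = {"edge-a": 100, "edge-b": 100}
history = {
    "edge-a": [80, 80, 80],
    "edge-b": [20, 20, 20],
}
weights = routing_weights(capacity, history)
print(weights)
```

With these inputs the busier node `edge-a` receives the smaller share of new traffic. Recomputing the weights on every monitoring interval is one simple way to close the loop between monitoring and adaptive resource management; a production system could substitute a learned forecasting model for `ewma_forecast` without changing the surrounding logic.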

Conclusion

Serverless edge computing is reshaping the digital landscape, offering new opportunities and challenges. Effective load balancing in this context requires embracing innovative strategies that account for the dynamic and distributed nature of edge environments. By focusing on intelligent distribution, proximity-based routing, adaptive management, and compliance-aware solutions, organizations can harness the full potential of serverless edge computing while overcoming its inherent challenges. As this technology continues to evolve, so too will the strategies for efficiently managing and balancing loads across the edge.

From 5G NR to SDN and quantum-safe encryption, the digital communication landscape is evolving faster than ever. For R&D teams and IP professionals, tracking protocol shifts, understanding standards like 3GPP and IEEE 802, and monitoring the global patent race are now mission-critical.

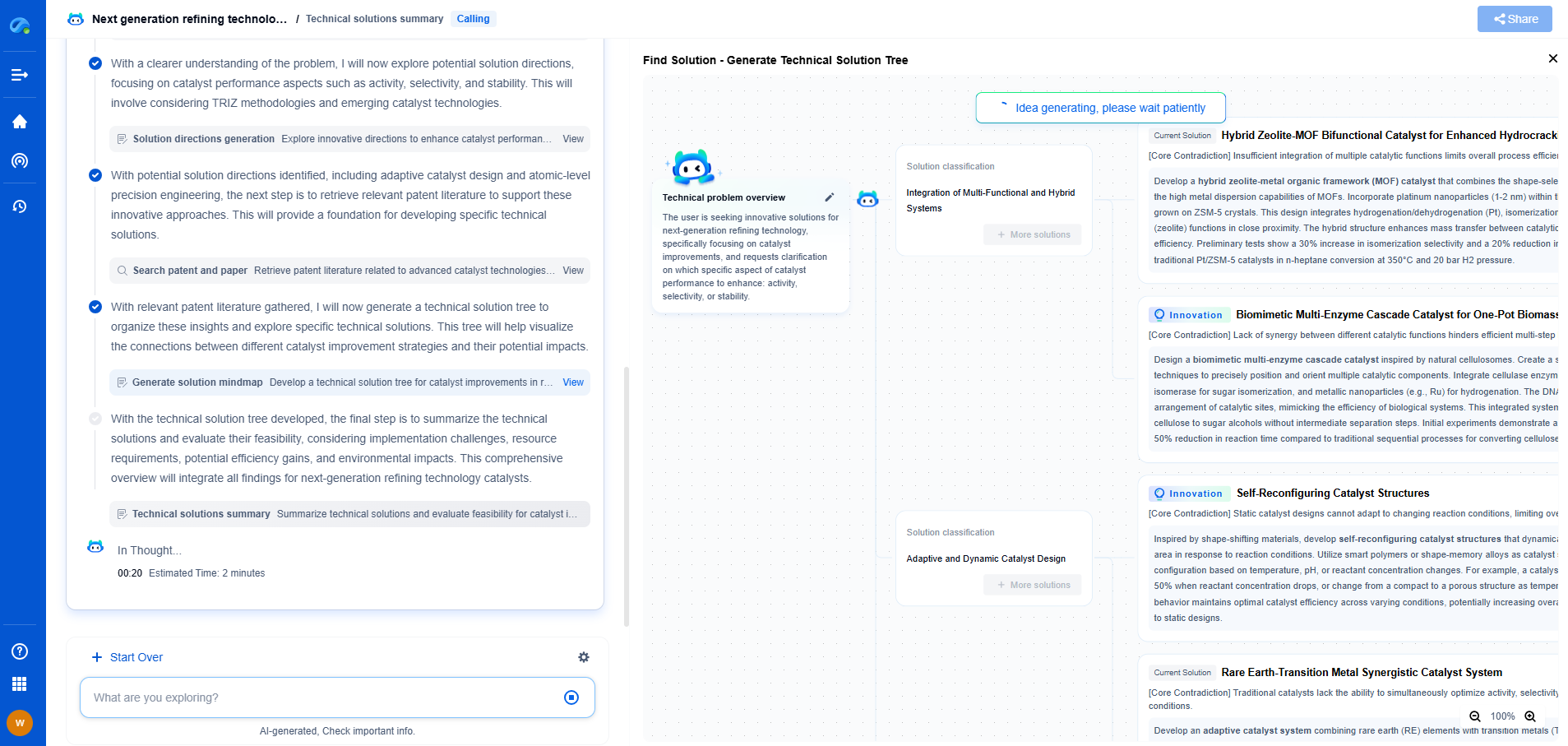

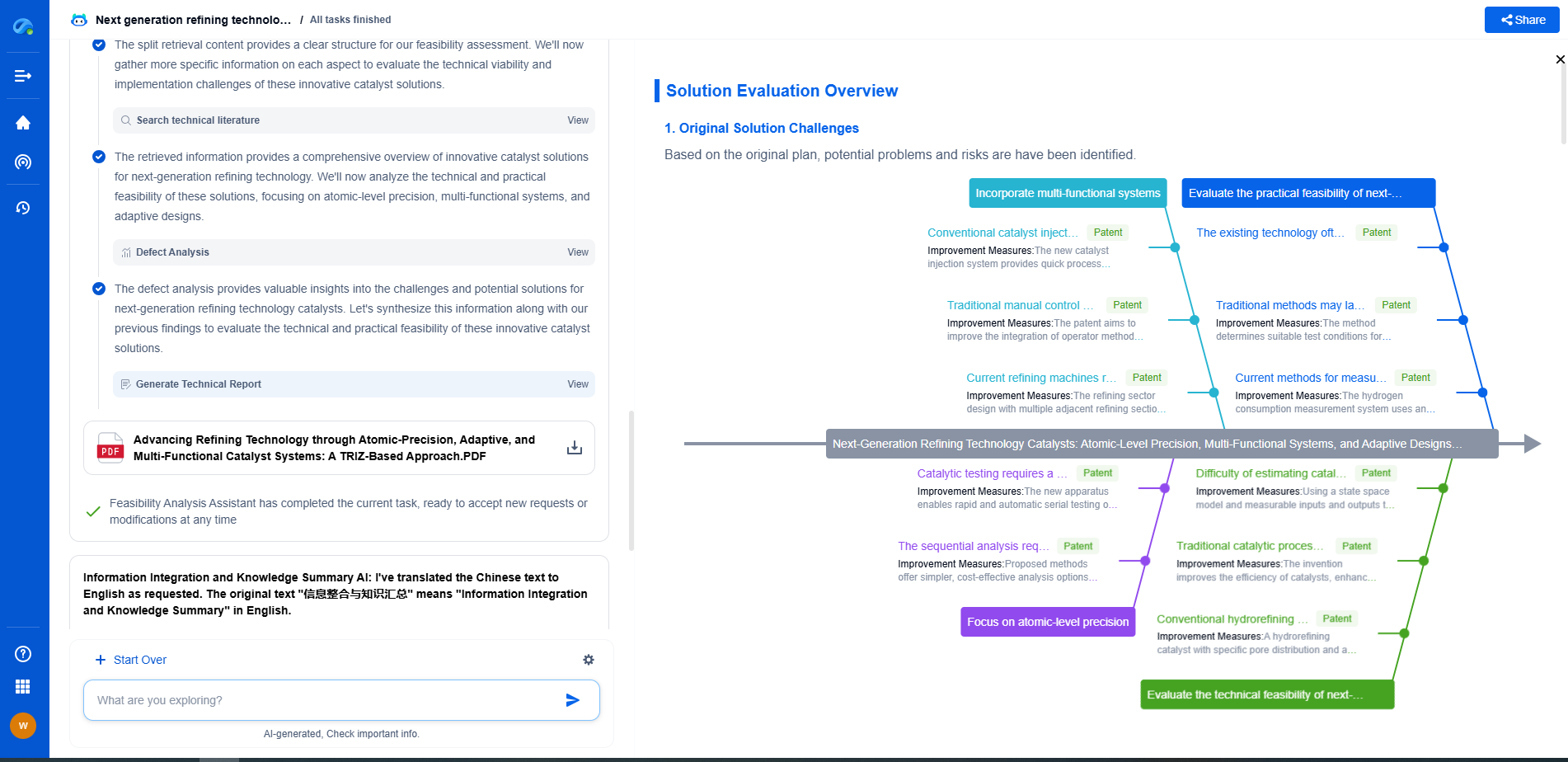

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

📡 Experience Patsnap Eureka today and unlock next-gen insights into digital communication infrastructure, before your competitors do.