Signal Processing in Autonomous Vehicles: Sensor Fusion Explained

JUN 27, 2025

Signal processing plays a pivotal role in the operation of autonomous vehicles, acting as the bridge between raw sensor data and meaningful decision-making processes. These vehicles rely on a variety of sensors to perceive their environment, including cameras, LiDAR, radar, and ultrasonic sensors. Each sensor has its own strengths and limitations, making it crucial to combine or "fuse" their data to create a comprehensive understanding of the vehicle's surroundings. This is where sensor fusion, a critical aspect of signal processing, comes into play.

Understanding Sensor Fusion

Sensor fusion involves integrating data from multiple sensors to produce more accurate, reliable, and comprehensive information than would be possible with any single sensor alone. It enhances the perception capabilities of autonomous vehicles, allowing them to better understand their surroundings and make informed driving decisions.

There are several levels at which sensor fusion can occur:

1. **Data-Level Fusion**: At this level, raw data from different sensors is combined. This usually requires synchronizing the sensor streams in time and transforming them into a common reference frame.

2. **Feature-Level Fusion**: Relevant features are extracted from each sensor's raw data and combined into a single, richer representation. The aim is to improve the quality of the information before it reaches the decision-making stage.

3. **Decision-Level Fusion**: Here, decisions made by individual sensor systems are combined to arrive at a final decision. This level is typically used when sensors operate independently and the system needs to reconcile their separate outputs.
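
As a rough illustration of the third level, the sketch below combines per-sensor classifications with a confidence-weighted vote. The function name `fuse_decisions`, the labels, and the confidence values are all assumptions made for this example; production systems typically use more elaborate probabilistic or learned combiners.

```python
from collections import defaultdict


def fuse_decisions(decisions):
    """Confidence-weighted vote over per-sensor (label, confidence) pairs.

    Returns the winning label and its share of the total confidence.
    """
    if not decisions:
        raise ValueError("need at least one decision")
    scores = defaultdict(float)
    for label, confidence in decisions:
        scores[label] += confidence
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("confidences must sum to a positive value")
    best = max(scores, key=scores.get)
    return best, scores[best] / total


# Hypothetical outputs of three independent sensor pipelines.
decisions = [
    ("pedestrian", 0.9),  # camera classifier
    ("pedestrian", 0.6),  # LiDAR cluster classifier
    ("cyclist", 0.5),     # radar signature classifier
]
label, share = fuse_decisions(decisions)
print(label, round(share, 2))
```

A weighted vote keeps each pipeline independent, which matches the case described above where sensors operate on their own and only their outputs are merged.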

Advantages of Sensor Fusion in Autonomous Vehicles

The primary advantage of sensor fusion is that it compensates for the limitations of individual sensors. For example, cameras provide rich color and texture details but struggle in poor lighting conditions. In contrast, LiDAR systems offer accurate distance measurements that are largely independent of ambient light but carry no color information. By fusing data from these two sensors, an autonomous vehicle can perceive its environment more accurately across a wider range of conditions.
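
One common way to combine the two is to project LiDAR points into the camera image so that pixels gain a depth value. The sketch below uses a plain pinhole camera model and assumes the points have already been transformed into the camera frame (x right, y down, z forward). The function name and the intrinsic parameters are illustrative values, not taken from any particular vehicle or library.

```python
def project_to_image(points_cam, fx, fy, cx, cy, width, height):
    """Project 3D points in the camera frame to pixel coordinates.

    Returns (u, v, depth) for every point that lies in front of the
    camera and falls inside the image bounds.
    """
    hits = []
    for x, y, z in points_cam:
        if z <= 0:  # behind the camera, cannot be seen
            continue
        u = fx * x / z + cx
        v = fy * y / z + cy
        if 0 <= u < width and 0 <= v < height:
            hits.append((u, v, z))
    return hits


# Assumed intrinsics for a 640x480 camera (illustrative only).
lidar_points = [
    (0.0, 0.0, 10.0),   # straight ahead
    (1.0, 0.5, 5.0),    # ahead, slightly right and below the optical axis
    (0.0, 0.0, -2.0),   # behind the camera
    (10.0, 0.0, 1.0),   # far outside the field of view
]
for u, v, depth in project_to_image(lidar_points, 500.0, 500.0, 320.0, 240.0, 640, 480):
    print(f"pixel=({u:.0f}, {v:.0f}) depth={depth:.1f} m")
```

Each surviving tuple pairs a pixel location, where the camera supplies color and texture, with a LiDAR range.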

Another advantage is redundancy. If one sensor fails or provides inaccurate readings, the vehicle can still rely on data from other sensors. This redundancy enhances the reliability and safety of autonomous systems.
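
A minimal sketch of how redundancy can be exploited: readings of the same quantity are compared against their median, outliers are set aside, and the rest are averaged. The sensor names, the distances, and the `max_deviation` threshold are assumptions for illustration; real systems usually rely on statistically grounded gating rather than a fixed threshold.

```python
from statistics import fmean, median


def fuse_redundant(readings, max_deviation):
    """Average the readings that agree with the median.

    readings: dict mapping sensor name to a measurement of the same quantity.
    Returns (fused_value, sorted list of rejected sensor names).
    """
    center = median(readings.values())
    trusted = {
        name: value
        for name, value in readings.items()
        if abs(value - center) <= max_deviation
    }
    if not trusted:
        raise ValueError("no readings agree with the median")
    rejected = sorted(set(readings) - set(trusted))
    return fmean(list(trusted.values())), rejected


# Hypothetical distance-to-obstacle readings in meters; the camera estimate is faulty.
readings = {"radar": 20.3, "lidar": 20.1, "camera": 35.0}
distance, rejected = fuse_redundant(readings, max_deviation=1.0)
print(f"fused distance: {distance:.1f} m, rejected: {rejected}")
```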

Challenges in Implementing Sensor Fusion

Despite its benefits, implementing sensor fusion in autonomous vehicles is not without challenges. One major challenge is that sensors produce data in different formats and at different rates. Cameras, for instance, typically capture tens of frames per second, while a spinning LiDAR commonly completes far fewer sweeps in the same time. Aligning these streams requires accurate timestamping and an algorithm that pairs or interpolates samples taken at different instants.
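
The simplest form of such alignment is nearest-timestamp matching, sketched below. Timestamps are integer milliseconds, the camera list is assumed to be sorted, and the `max_gap` tolerance is an assumed tuning parameter. Interpolation or motion compensation would be needed for higher accuracy.

```python
from bisect import bisect_left


def match_nearest(camera_ts, lidar_ts, max_gap):
    """Pair each LiDAR timestamp with the nearest camera timestamp.

    camera_ts must be sorted in ascending order. Pairs whose time gap
    exceeds max_gap are dropped. Returns a list of (lidar_t, camera_t).
    """
    pairs = []
    for t in lidar_ts:
        i = bisect_left(camera_ts, t)
        # Only the neighbors on either side of the insertion point can be nearest.
        candidates = camera_ts[max(i - 1, 0):i + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda c: abs(c - t))
        if abs(nearest - t) <= max_gap:
            pairs.append((t, nearest))
    return pairs


camera_ts = [0, 33, 67, 100, 133, 167, 200]  # roughly 30 frames per second
lidar_ts = [5, 105, 205, 320]                # roughly 10 sweeps per second
print(match_nearest(camera_ts, lidar_ts, max_gap=10))
# [(5, 0), (105, 100), (205, 200)]
```

The last LiDAR sweep has no camera frame within 10 ms, so it is left unpaired rather than matched to stale data.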

Another challenge is computational complexity. Processing large volumes of data from multiple sensors in real time demands significant computational resources. This has driven the development of specialized hardware and optimization techniques that keep sensor fusion fast enough to meet real-time deadlines.
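
The article does not name a specific optimization, so the following is only one illustrative example: voxel-grid downsampling, which reduces a point cloud by replacing all points inside each cubic cell with their centroid. The function name and the voxel size are assumptions.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points falling in the same cubic voxel with their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    buckets = defaultdict(list)
    for point in points:
        key = tuple(math.floor(coord / voxel_size) for coord in point)
        buckets[key].append(point)
    return [
        tuple(sum(axis) / len(group) for axis in zip(*group))
        for group in buckets.values()
    ]


cloud = [(0.1, 0.1, 0.1), (0.3, 0.3, 0.3), (1.2, 0.1, 0.1)]
reduced = voxel_downsample(cloud, voxel_size=1.0)
print(len(cloud), "->", len(reduced), "points")
```

Larger voxels cut the workload of later stages further, at the cost of spatial detail.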

Real-World Applications of Sensor Fusion

In real-world scenarios, sensor fusion enables numerous applications within autonomous vehicles. For example, it enhances object detection and classification, crucial for tasks such as detecting pedestrians, cyclists, or other vehicles. By combining data from multiple sensors, the vehicle can accurately determine the position, speed, and trajectory of objects in its vicinity.
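
Position and speed estimates of this kind are often produced with a Kalman filter. The sketch below is a one-dimensional constant-velocity filter that accepts position measurements from two sensors with different noise levels. The class name, the noise variances, and the noise-free simulated measurements are assumptions chosen to keep the example small and deterministic.

```python
class ConstantVelocityKalman:
    """1D Kalman filter with state [position, velocity]."""

    def __init__(self, position=0.0, velocity=0.0, variance=100.0, accel_var=1.0):
        self.p, self.v = position, velocity
        self.P = [[variance, 0.0], [0.0, variance]]  # state covariance
        self.accel_var = accel_var                   # assumed process noise

    def predict(self, dt):
        """Propagate state and covariance forward by dt seconds."""
        self.p += self.v * dt
        (a, b), (c, d) = self.P
        q = self.accel_var
        # P = F P F^T + Q with F = [[1, dt], [0, 1]] (white-noise acceleration model).
        self.P = [
            [a + dt * (b + c) + dt * dt * d + q * dt**4 / 4, b + dt * d + q * dt**3 / 2],
            [c + dt * d + q * dt**3 / 2, d + q * dt * dt],
        ]

    def update(self, z, meas_var):
        """Correct the state with a position measurement z of variance meas_var."""
        (a, b), (c, d) = self.P
        s = a + meas_var          # innovation variance
        k0, k1 = a / s, c / s     # Kalman gain
        residual = z - self.p
        self.p += k0 * residual
        self.v += k1 * residual
        self.P = [[(1 - k0) * a, (1 - k0) * b], [c - k1 * a, d - k1 * b]]


# Assumed measurement variances (m^2): LiDAR is treated as more precise than radar.
SENSOR_VAR = {"lidar": 0.01, "radar": 0.25}

kf = ConstantVelocityKalman()
dt = 0.1
for k in range(1, 21):
    true_position = 10.0 + 2.0 * dt * k  # object starts at 10 m, moves at 2 m/s
    sensor = "lidar" if k % 2 else "radar"
    kf.predict(dt)
    kf.update(true_position, SENSOR_VAR[sensor])
print(f"position={kf.p:.2f} m, speed={kf.v:.2f} m/s")
```

Because each update carries its own variance, the filter leans on the more precise sensor without discarding the other. The speed is never measured directly; it is inferred from how the position changes between updates.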

Moreover, sensor fusion is essential for high-definition mapping and localization. Autonomous vehicles need to know their precise location on a map to navigate effectively. Fusing data from GPS, LiDAR, and cameras allows vehicles to achieve high localization accuracy, even in challenging environments like urban canyons where GPS signals may be unreliable.
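
To see why fusion helps when GPS degrades, consider inverse-variance weighting, a standard way to combine independent estimates of the same quantity. In the sketch below each source reports a position along one axis with a variance; all numbers are invented for illustration, and real localization stacks fuse full poses over time rather than single scalars.

```python
def fuse_inverse_variance(estimates):
    """Fuse independent (value, variance) estimates of the same quantity.

    Each estimate is weighted by 1 / variance. Returns (fused_value, fused_variance).
    """
    if not estimates or any(var <= 0 for _, var in estimates):
        raise ValueError("need at least one estimate, all with positive variance")
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    return fused, 1.0 / total


# Hypothetical position estimates in meters as (value, variance).
open_sky = [(100.1, 1.0), (100.4, 0.04), (100.2, 0.25)]       # GPS, LiDAR, camera
urban_canyon = [(108.0, 25.0), (100.4, 0.04), (100.2, 0.25)]  # GPS degraded

for name, estimates in (("open sky", open_sky), ("urban canyon", urban_canyon)):
    value, variance = fuse_inverse_variance(estimates)
    print(f"{name}: {value:.2f} m (variance {variance:.3f})")
```

When the GPS variance grows, its weight shrinks, so the fused position stays close to the LiDAR and camera estimates even though the GPS reading is several meters off.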

Future Trends and Developments

As technology advances, we can expect to see further developments in sensor fusion techniques. Machine learning and artificial intelligence are playing increasingly significant roles in enhancing sensor fusion processes. These technologies can identify patterns and correlations in sensor data that traditional algorithms might miss, leading to improved perception and decision-making.

Additionally, as new sensors and technologies are developed, such as advanced radar systems and thermal imaging, the opportunities for more robust sensor fusion architectures will expand. These innovations promise to contribute to the ongoing evolution and improvement of autonomous vehicle technologies.

Conclusion

Sensor fusion is a cornerstone of signal processing in autonomous vehicles, offering enhanced perception capabilities that are critical for safe and efficient operation. While challenges remain, ongoing research and development in this field are paving the way for more sophisticated and reliable autonomous driving systems. Better integration of diverse sensor data will move us closer to the widespread adoption of fully autonomous vehicles.

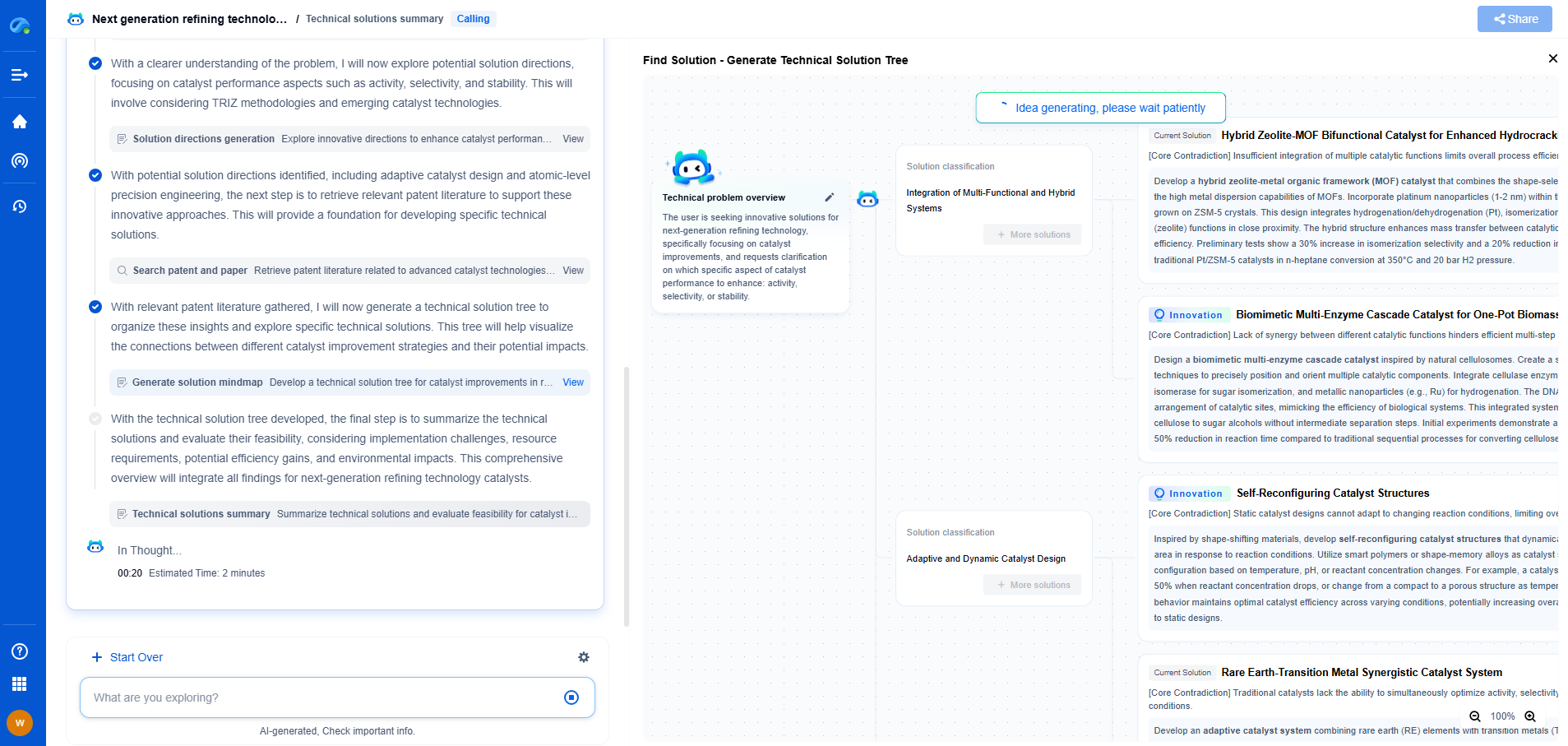

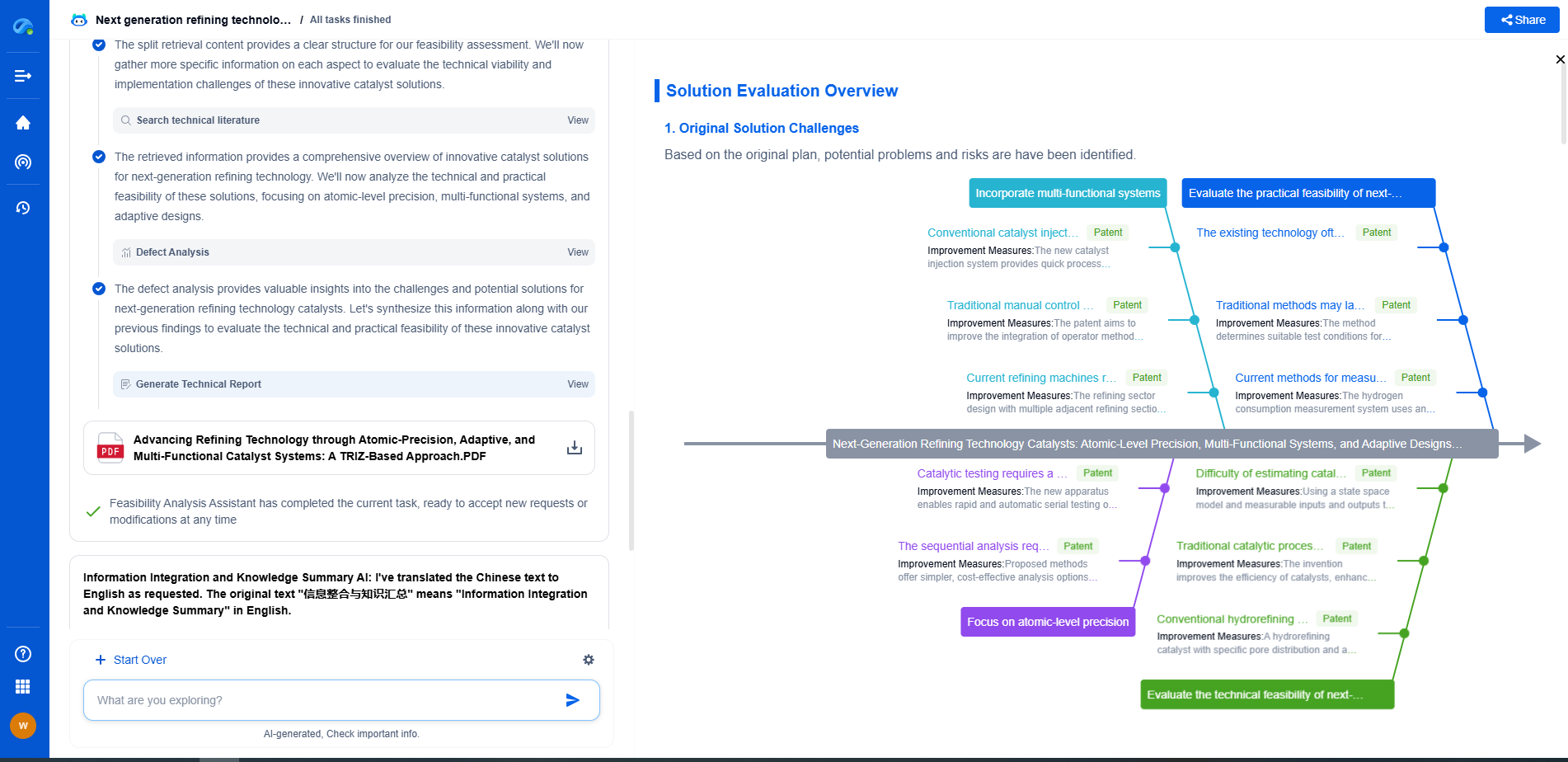

Accelerate Electronic Circuit Innovation with AI-Powered Insights from Patsnap Eureka

The world of electronic circuits is evolving faster than ever—from high-speed analog signal processing to digital modulation systems, PLLs, oscillators, and cutting-edge power management ICs. For R&D engineers, IP professionals, and strategic decision-makers in this space, staying ahead of the curve means navigating a massive and rapidly growing landscape of patents, technical literature, and competitor moves.

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

🚀 Experience the next level of innovation intelligence. Try Patsnap Eureka today and discover how AI can power your breakthroughs in electronic circuit design and strategy. Book a free trial or schedule a personalized demo now.