Sound Directivity Optimization in VR/AR Spatial Audio

JUL 16, 2025

Spatial audio is changing how we experience virtual and augmented reality by making environments more immersive and realistic. Unlike conventional mono or stereo playback, which confines sound to a single point or to the line between two speakers, spatial audio simulates a three-dimensional sound field, so users can perceive sound from any direction, including above, below, and behind. This creates a sense of presence and realism that is crucial for an engaging VR/AR experience. The effectiveness of spatial audio, however, largely depends on one key factor: sound directivity.

What is Sound Directivity?

Sound directivity describes how a source radiates sound unevenly in different directions, typically as a function of angle and frequency. A human voice, for example, is louder and brighter in front of the talker than behind. In the context of VR/AR, optimizing sound directivity means ensuring that sound sources in a virtual environment radiate, and are perceived, in a way that aligns with their visual counterparts. Proper sound directivity can enhance the realism of a scene, making the user feel as though they are truly part of the environment. Conversely, poor directivity can disrupt the experience, breaking the illusion and reducing user engagement.
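To make the idea concrete, one widely used simplification is the first-order directivity pattern, where the gain toward a listener is `alpha + (1 - alpha) * cos(theta)` and `theta` is the angle between the source's facing direction and the direction to the listener. The sketch below is an illustration only, not a method taken from any particular engine; the function names, the 2D geometry, and the frequency-independent gain are all assumptions.

```python
import math


def directivity_gain(alpha: float, off_axis_rad: float) -> float:
    """First-order directivity pattern: alpha + (1 - alpha) * cos(theta).

    alpha = 1.0 is omnidirectional, 0.5 is a cardioid, 0.0 is a figure-eight.
    The absolute value is returned, so a figure-eight's rear lobe keeps its
    magnitude but loses its polarity.
    """
    return abs(alpha + (1.0 - alpha) * math.cos(off_axis_rad))


def off_axis_angle(source_pos, source_forward, listener_pos) -> float:
    """Angle (radians) between the source's forward vector and the
    direction from the source to the listener, in 2D."""
    dx = listener_pos[0] - source_pos[0]
    dy = listener_pos[1] - source_pos[1]
    dist = math.hypot(dx, dy)
    fwd_len = math.hypot(source_forward[0], source_forward[1])
    if dist == 0.0 or fwd_len == 0.0:
        return 0.0  # degenerate geometry: treat the listener as on-axis
    cos_theta = (dx * source_forward[0] + dy * source_forward[1]) / (dist * fwd_len)
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, cos_theta)))


# A cardioid source at the origin facing +x, with a listener off to the side.
theta = off_axis_angle((0.0, 0.0), (1.0, 0.0), (0.0, 2.0))
side_gain = directivity_gain(0.5, theta)
```

With `alpha = 0.5`, a listener directly ahead receives full gain, one at 90 degrees receives half, and one directly behind receives none. Real sources are also frequency dependent, which this sketch deliberately ignores.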

Challenges in Optimizing Sound Directivity

Optimizing sound directivity in VR/AR poses several challenges. First, the spatial resolution of audio must be high enough to ensure accurate localization of sound sources. This requires sophisticated algorithms and advanced processing techniques. Additionally, the physical characteristics of the user's environment, such as room size and acoustics, can significantly affect sound propagation and must be considered when designing spatial audio systems.

Another challenge lies in the hardware limitations of VR/AR devices. Headphones and speakers must be capable of delivering high-fidelity sound with precise spatial cues, which often necessitates bespoke designs and innovations in sound technology. Moreover, individual variations in human anatomy, such as the shape of the ear, can influence how sound is perceived, adding another layer of complexity to the task of optimizing sound directivity.

Approaches to Sound Directivity Optimization

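Several approaches can be employed to optimize sound directivity in VR/AR environments. One common method is binaural audio, which delivers a separate signal to each ear carrying the timing, level, and spectral differences the brain uses to localize sound. Binaural content can be recorded with two microphones placed at the ears of a listener or a dummy head, or it can be synthesized in real time, as is typical in VR/AR. Over headphones, binaural audio can be highly effective in simulating how sound is heard naturally through human ears, thereby enhancing the spatial audio experience.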
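One of the cues a binaural signal carries is the interaural time difference (ITD), the extra time sound takes to reach the far ear. A rough estimate comes from Woodworth's spherical-head approximation, `ITD = (a / c) * (theta + sin(theta))`. The sketch below assumes a far-field source, a rigid spherical head, and an average head radius of 8.75 cm; the constants and function names are illustrative choices, not values from the article.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at roughly 20 degrees C
HEAD_RADIUS = 0.0875    # m, an assumed average head radius


def woodworth_itd(azimuth_rad: float,
                  head_radius: float = HEAD_RADIUS,
                  c: float = SPEED_OF_SOUND) -> float:
    """Interaural time difference in seconds for a far-field source.

    azimuth_rad is measured from straight ahead and must lie in
    [-pi/2, pi/2]; the sign of the result follows the sign of the azimuth.
    """
    if not -math.pi / 2 <= azimuth_rad <= math.pi / 2:
        raise ValueError("azimuth must be within [-pi/2, pi/2]")
    return (head_radius / c) * (azimuth_rad + math.sin(azimuth_rad))


def itd_in_samples(azimuth_rad: float, sample_rate: int = 48000) -> int:
    """ITD rounded to whole samples, usable as a simple delay on the far ear."""
    return round(woodworth_itd(azimuth_rad) * sample_rate)


itd_side = woodworth_itd(math.pi / 2)  # source directly to one side
```

For a source at 90 degrees this model gives roughly 0.66 ms, or about 31 samples at 48 kHz. A full binaural renderer would add level and spectral cues on top of this delay.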

Another approach is the use of head-related transfer functions (HRTFs), which describe how the head, torso, and outer ears filter sound arriving from a given direction before it reaches each ear. By filtering a source signal with the HRTF pair for its direction, developers can simulate how that sound should be perceived, improving localization and directivity. Machine learning algorithms are also increasingly used to adapt sound directivity dynamically based on user interactions and environmental changes.
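In the time domain, applying an HRTF amounts to convolving the mono source signal with a pair of head-related impulse responses (HRIRs), one per ear. The sketch below shows that step with plain Python lists. The two HRIRs are made-up toy values, chosen only so the left ear hears the source earlier and louder; a real renderer would load measured responses (for example from a SOFA file) and use FFT-based convolution.

```python
def convolve(signal, kernel):
    """Direct-form linear convolution of two sequences of floats."""
    if not signal or not kernel:
        return []
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out


def render_binaural(mono, hrir_left, hrir_right):
    """Return (left, right) ear signals for one source direction."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


# Hypothetical toy HRIRs for a source on the listener's left:
# the right-ear response is delayed by two samples and attenuated.
HRIR_LEFT = [1.0, 0.3]
HRIR_RIGHT = [0.0, 0.0, 0.5, 0.2]

impulse = [1.0, 0.0, 0.0]
left, right = render_binaural(impulse, HRIR_LEFT, HRIR_RIGHT)
```

Feeding in a unit impulse returns each HRIR followed by zeros, which is a quick sanity check that the filters are wired to the correct ears.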

The Role of Artificial Intelligence

Artificial intelligence (AI) is playing an increasingly important role in optimizing sound directivity in VR/AR applications. AI algorithms can analyze user data in real time, adjusting sound parameters to keep the audio consistent with what the user sees and does. For instance, AI can be used to model and predict user movements, adjusting sound sources accordingly to maintain accurate spatial audio cues. This adaptability is crucial in creating seamless audio experiences that respond to the dynamic nature of VR/AR environments.
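To show where a movement predictor would plug in, the sketch below uses a simple constant-velocity extrapolation of head yaw. This is a non-learned stand-in, not an AI model; a trained predictor could replace `predict_yaw` behind the same interface. The function names, the sample format, and the yaw-only simplification are assumptions made for illustration.

```python
def wrap_deg(angle: float) -> float:
    """Wrap an angle in degrees into [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def predict_yaw(samples, lookahead_s: float) -> float:
    """Extrapolate head yaw from the last two (timestamp_s, yaw_deg) samples.

    samples is ordered oldest first. With fewer than two samples, or a
    non-increasing timestamp, the latest yaw is returned unchanged.
    """
    if not samples:
        raise ValueError("need at least one tracking sample")
    if len(samples) < 2:
        return wrap_deg(samples[-1][1])
    (t0, y0), (t1, y1) = samples[-2], samples[-1]
    dt = t1 - t0
    if dt <= 0.0:
        return wrap_deg(y1)
    velocity = wrap_deg(y1 - y0) / dt  # deg/s, taking the shortest way around
    return wrap_deg(y1 + velocity * lookahead_s)


def source_azimuth_relative_to_head(source_azimuth_deg: float,
                                    head_yaw_deg: float) -> float:
    """Azimuth of a world-fixed source as seen from the (predicted) head pose."""
    return wrap_deg(source_azimuth_deg - head_yaw_deg)


# Head turning at about 200 deg/s; predict 20 ms ahead to hide render latency.
tracking = [(0.00, 10.0), (0.01, 12.0)]
predicted = predict_yaw(tracking, lookahead_s=0.02)
relative = source_azimuth_relative_to_head(30.0, predicted)
```

Rendering with the predicted pose rather than the last measured one keeps a world-fixed source from appearing to lag behind fast head turns.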

The Future of Spatial Audio in VR/AR

As technology continues to advance, the potential for optimizing sound directivity in VR/AR will only grow. Innovations in hardware, such as improved speaker designs and 3D audio processing chips, will likely enhance the quality and realism of spatial audio experiences. Furthermore, as AI and machine learning technologies evolve, they will offer new possibilities for creating adaptive and personalized audio environments that respond to individual user preferences and behaviors.

In conclusion, sound directivity optimization is a critical component of delivering immersive and realistic spatial audio in VR/AR settings. By addressing current challenges and leveraging emerging technologies, developers can create audio experiences that enhance the sense of presence and realism, ultimately transforming how users interact with virtual and augmented realities.

In the world of vibration damping, structural health monitoring, and acoustic noise suppression, staying ahead requires more than intuition—it demands constant awareness of material innovations, sensor architectures, and IP trends across mechanical, automotive, aerospace, and building acoustics.

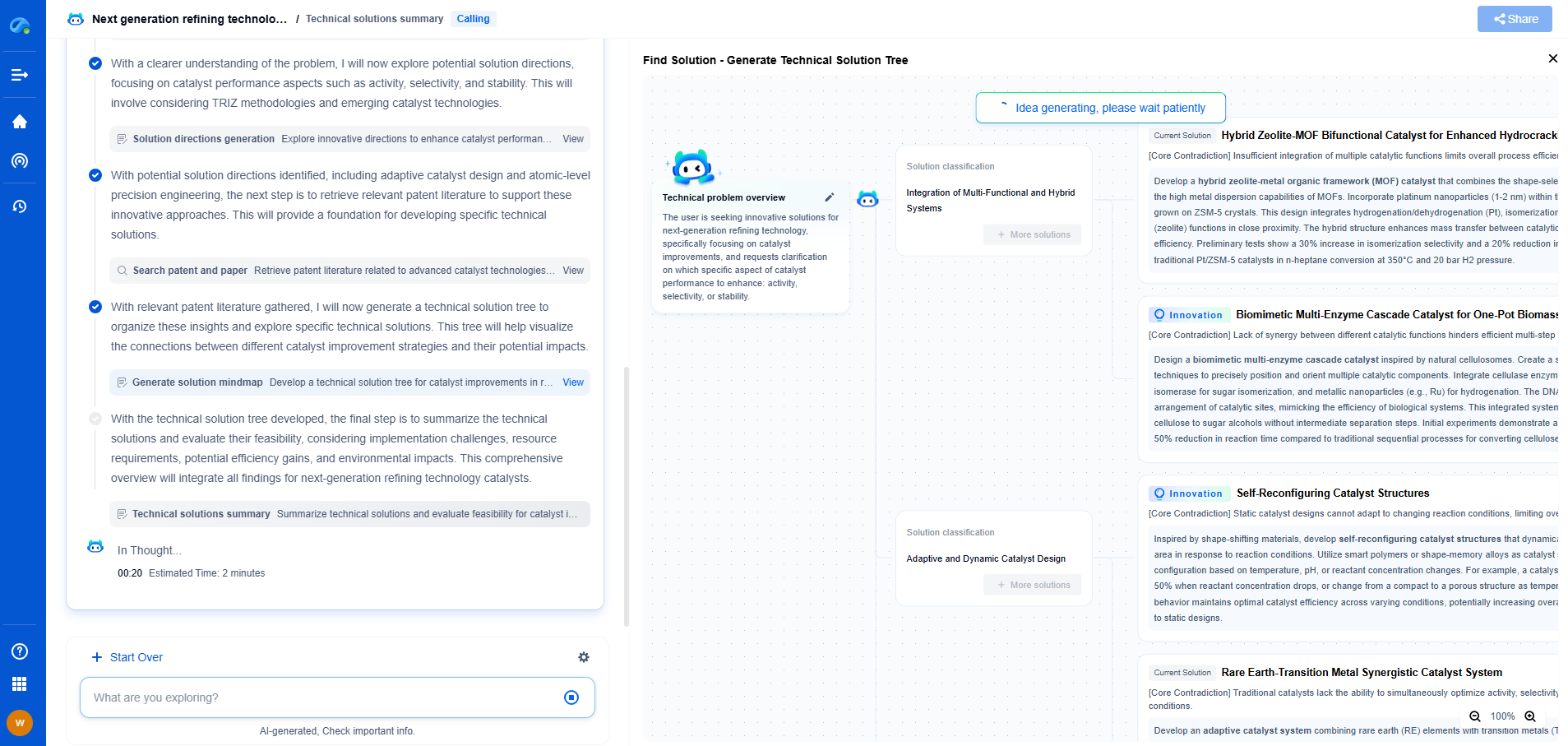

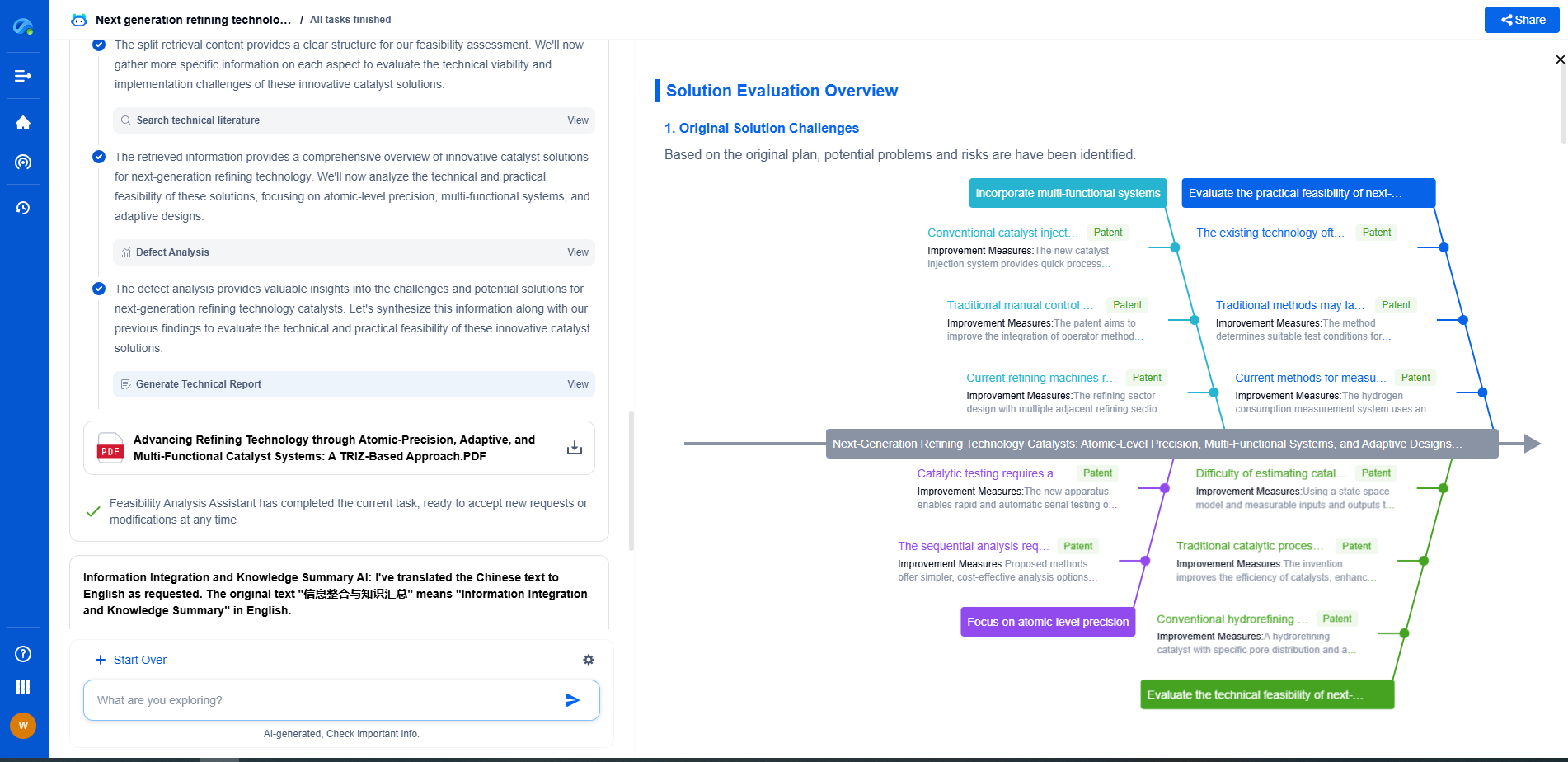

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

⚙️ Bring Eureka into your vibration intelligence workflow—and reduce guesswork in your R&D pipeline. Start your free experience today.