TCP Incast Problem: Solutions for Data Center Networks

JUL 14, 2025

In data center networks, the TCP incast problem has become a significant challenge as demand for high-performance computing and data-intensive applications continues to rise. Incast is a many-to-one traffic pattern: a client requests data from many servers at once (for example, the blocks of a file striped across a distributed storage cluster, or the partial results of a parallel query), and the responses all arrive at the same time. Together they overflow the buffer of the switch port that leads to the receiver, causing packet loss. Because this pattern is common in distributed storage and parallel computing systems, incast can cause severe throughput collapse and sharp increases in latency.

Causes of TCP Incast

To address the TCP incast problem effectively, it is crucial to understand its underlying causes. The primary factor is a rate mismatch at the receiver's switch port. When many servers transmit at once, their aggregate arrival rate far exceeds the rate at which the single egress link toward the receiver can drain them, and the shallow buffers typical of commodity data center switches can absorb only a brief excess before they fill and start dropping packets.
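A back-of-the-envelope model makes the mismatch concrete. The sketch below assumes, purely for illustration, that every sender starts a burst of the same size at the same instant and transmits at the same line rate R as the receiver's link. Arrivals then come in at n·R while the port drains at R, so the queue grows at (n − 1)·R for the burst's duration and peaks at (n − 1) times the burst size. The function name and the 32 KB burst and 128 KB buffer figures are hypothetical, not measurements.

```python
def incast_queue_overflow(n_senders: int, burst_bytes: int, buffer_bytes: int) -> int:
    """Bytes dropped at the receiver's egress port in a simplified incast model.

    Assumptions (illustrative only, not a switch simulator):
    - all senders start their bursts at the same instant,
    - each sender and the receiver's link run at the same rate R,
    - the port drains at R while data arrives at n_senders * R.
    The queue therefore grows at (n - 1) * R for burst_bytes / R seconds,
    peaking at (n - 1) * burst_bytes.
    """
    if n_senders < 1:
        raise ValueError("need at least one sender")
    peak_queue = (n_senders - 1) * burst_bytes
    return max(0, peak_queue - buffer_bytes)


if __name__ == "__main__":
    # Hypothetical 32 KB response per server and 128 KB of buffer for the port.
    for n in (4, 5, 6, 8, 16):
        dropped = incast_queue_overflow(n, 32 * 1024, 128 * 1024)
        print(f"{n:2d} senders -> {dropped} bytes dropped")
```

In this model a single sender never overflows the port, but with these numbers drops begin once six servers respond together, and every additional sender adds a full burst of excess.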

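Another cause lies in how TCP handles congestion. TCP adapts its sending rate based on feedback that arrives one round trip later, but an incast burst can fill the buffer within a single RTT, before any sender has reduced its window. The resulting losses are especially costly. Each flow carries only a few packets, so a lost packet is often not followed by the three duplicate ACKs needed to trigger fast retransmit, and the sender must instead wait for a retransmission timeout (RTO). The minimum RTO is commonly 200 ms (the Linux default), far longer than data center RTTs of tens to hundreds of microseconds, and since the client usually needs every server's response before it can proceed, a single timed-out flow leaves the receiver's link idle for most of the request.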
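The cost of a single timeout can be estimated with simple arithmetic. The sketch below assumes a barrier-synchronized request: the client waits for every sender's block, the transfer otherwise runs at line rate plus one RTT, and each timeout stalls the whole request for one RTO. The function name and the example numbers (16 servers, 64 KB blocks, a 1 Gbps link, a 100 µs RTT) are hypothetical; only the 200 ms figure reflects the common Linux minimum RTO.

```python
def incast_goodput_bps(n_senders: int, block_bytes: int, link_bps: float,
                       rtt_s: float, timeouts: int, rto_s: float) -> float:
    """Approximate receiver goodput for one barrier-synchronized request.

    Illustrative model: total data crosses the bottleneck at link_bps,
    plus one RTT of latency; every retransmission timeout stalls the
    whole request by rto_s because the client waits for the slowest flow.
    """
    total_bits = n_senders * block_bytes * 8
    transfer_s = total_bits / link_bps + rtt_s
    elapsed_s = transfer_s + timeouts * rto_s
    return total_bits / elapsed_s


if __name__ == "__main__":
    args = dict(n_senders=16, block_bytes=64 * 1024, link_bps=1e9, rtt_s=100e-6)
    for timeouts, rto in ((0, 0.2), (1, 0.2), (1, 0.001)):
        g = incast_goodput_bps(**args, timeouts=timeouts, rto_s=rto)
        print(f"timeouts={timeouts}, RTO={rto * 1e3:g} ms -> {g / 1e6:.0f} Mbps")
```

With these illustrative numbers, goodput is about 988 Mbps without a timeout, drops to about 40 Mbps after a single 200 ms timeout, and recovers to about 884 Mbps if the minimum RTO were 1 ms, which is why shrinking RTOmin is a common incast mitigation.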

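Short Round Trip Times (RTTs), typical of data center environments, also contribute to incast. Because intra-data-center RTTs are usually tens to hundreds of microseconds, the bandwidth-delay product of a path is small: at 10 Gbps and a 100 µs RTT it is about 125 KB, fewer than 90 full-size segments. With many concurrent senders, even modest per-flow windows add up to more data in flight than the path and the switch buffer can hold, so the buffer overflows before congestion control can react.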
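The effect of a small bandwidth-delay product (BDP) can be checked numerically. The sketch below assumes, as a simplification, that concurrent flows share the path's BDP plus the switch buffer equally, and reports how many segments each flow can keep outstanding before the buffer overflows. The 100 KB buffer and the 1460-byte MSS are hypothetical example values.

```python
def bdp_bytes(link_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes the path itself can hold in flight."""
    return link_bps * rtt_s / 8


def per_flow_share_segments(n_flows: int, link_bps: float, rtt_s: float,
                            buffer_bytes: float, mss: int = 1460) -> float:
    """Segments each flow may have outstanding before the buffer overflows.

    Assumes the flows split (BDP + buffer) evenly; real sharing is uneven.
    """
    capacity = bdp_bytes(link_bps, rtt_s) + buffer_bytes
    return capacity / (n_flows * mss)


if __name__ == "__main__":
    print(f"BDP: {bdp_bytes(10e9, 100e-6):.0f} bytes")
    for n in (10, 40):
        share = per_flow_share_segments(n, 10e9, 100e-6, 100_000)
        print(f"{n} flows -> {share:.1f} segments per flow")
```

Under these assumptions, 40 synchronized flows get fewer than four segments each, well below the 10-segment initial window (RFC 6928) that many stacks use, so the first round of a synchronized request can already overflow the buffer.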

Mitigating TCP Incast in Data Centers

Given the negative impact of TCP incast on network performance, several strategies have been proposed to mitigate its effects in data center networks.

1. Increasing Buffer Size

One straightforward approach is to increase the buffer size of network switches. Deeper buffers let the switch accommodate more packets before dropping them, but this only delays the onset of loss: each additional sender adds another burst, so the required buffer grows with the fan-in. Expanding buffers is also not always feasible. Commodity switch ASICs use limited on-chip memory, and a deep buffer that fills up adds queueing delay for every other flow sharing the port.
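The latency cost of deep buffers is easy to quantify: a packet arriving behind a full buffer waits for the whole buffer to drain at the link rate. The buffer sizes and the 10 Gbps link below are hypothetical examples chosen for round numbers.

```python
def max_queueing_delay_s(buffer_bytes: int, link_bps: float) -> float:
    """Worst-case wait for a packet queued behind a full buffer draining at link_bps."""
    return buffer_bytes * 8 / link_bps


if __name__ == "__main__":
    for buf in (100_000, 10_000_000):
        delay_us = max_queueing_delay_s(buf, 10e9) * 1e6
        print(f"{buf:>10} bytes at 10 Gbps -> up to {delay_us:.0f} us of queueing")
```

At 10 Gbps, a full 100 KB buffer adds up to 80 µs, comparable to a typical intra-data-center RTT, while a full 10 MB buffer adds up to 8 ms, roughly 80 times a 100 µs RTT, for any latency-sensitive traffic stuck behind it.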

2. Modifying TCP Congestion Control Algorithms

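Enhancing TCP’s congestion control can also help address the incast problem. Proposed modifications include reducing the minimum retransmission timeout (and using fine-grained, sub-millisecond timers) so that lost packets are retransmitted sooner, limiting the initial congestion window so that synchronized senders start with smaller bursts, and deploying algorithms designed for data centers such as Data Center TCP (DCTCP). DCTCP uses Explicit Congestion Notification (ECN) marks rather than packet loss as its congestion signal: switches mark packets once the queue exceeds a threshold, and each sender reduces its window in proportion to the fraction of its packets that were marked instead of halving it. This keeps queues short and leaves buffer headroom to absorb bursts.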
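The core of DCTCP's reaction to ECN marks can be sketched in a few lines, following the update rules in RFC 8257: the sender keeps a moving average α of the fraction of marked packets, α ← (1 − g)·α + g·F, and on congestion sets cwnd ← cwnd·(1 − α/2). This is a simplified per-window model, not a kernel implementation. Real stacks count marked and acknowledged bytes per ACK and run normal slow start, and the class and method names here are hypothetical. The switch-side rule is shown as marking whenever the instantaneous queue exceeds a threshold K, whose value is an assumption.

```python
from dataclasses import dataclass


def should_mark_ce(queue_bytes: int, k_bytes: int) -> bool:
    """Switch side: set the ECN CE mark when the queue exceeds threshold K."""
    return queue_bytes > k_bytes


@dataclass
class DctcpSender:
    """Simplified DCTCP sender state, updated once per window of ACKs."""
    cwnd: float = 10.0   # congestion window, in segments
    alpha: float = 1.0   # estimate of the marked fraction (RFC 8257 starts at 1)
    g: float = 1 / 16    # EWMA gain recommended by RFC 8257

    def on_window(self, acked: int, ecn_marked: int) -> None:
        """Process one RTT's worth of ACKs, ecn_marked of which echoed CE."""
        frac = ecn_marked / acked if acked else 0.0
        # alpha <- (1 - g) * alpha + g * F
        self.alpha = (1 - self.g) * self.alpha + self.g * frac
        if ecn_marked:
            # Cut in proportion to the extent of congestion, not by a fixed half.
            self.cwnd = max(1.0, self.cwnd * (1 - self.alpha / 2))
        else:
            self.cwnd += 1.0  # additive increase, as in congestion avoidance


if __name__ == "__main__":
    s = DctcpSender(cwnd=100.0, alpha=0.0)
    s.on_window(acked=100, ecn_marked=16)
    print(f"alpha={s.alpha:.3f}, cwnd={s.cwnd:.1f}")
```

Where a loss-based sender such as Reno halves its window on any congestion signal, in this sketch a window with 16% of packets marked (starting from α = 0) trims cwnd by only 0.5%, while persistent heavy marking drives α toward 1 and the cut toward one half.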

3. Implementing Application-Level Solutions

At the application level, developers can design systems to make incast less likely. Strategies include staggering transmissions from different servers (for example, with small random delays), limiting how many requests the client keeps outstanding at once, and coordinating when each server sends rather than letting all of them respond at the same synchronization barrier. These measures spread the load over time and keep the number of simultaneous responses small enough for the switch buffers to absorb.
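Staggering and bounded concurrency can be combined in a small client-side scheduler. The sketch below uses Python's asyncio: each request starts after a small random delay, and a semaphore caps how many responses can be in flight at once. `fetch_block`, the server names, and the limit of 4 are hypothetical. The network read is simulated with `asyncio.sleep`, and a suitable limit in practice would depend on the switch buffer and response sizes.

```python
import asyncio
import random


async def fetch_block(server: str, stats: dict) -> bytes:
    """Hypothetical stand-in for reading one block from a storage server."""
    stats["active"] += 1
    stats["peak"] = max(stats["peak"], stats["active"])
    try:
        await asyncio.sleep(0.01)  # simulated transfer time
        return f"block-from-{server}".encode()
    finally:
        stats["active"] -= 1


async def gather_with_limit(servers, max_in_flight=4, max_jitter_s=0.002):
    """Fetch from all servers with jittered starts and bounded concurrency."""
    sem = asyncio.Semaphore(max_in_flight)
    stats = {"active": 0, "peak": 0}

    async def one(server):
        # Desynchronize request start times instead of firing all at once.
        await asyncio.sleep(random.uniform(0, max_jitter_s))
        async with sem:  # at most max_in_flight responses outstanding
            return await fetch_block(server, stats)

    blocks = await asyncio.gather(*(one(s) for s in servers))
    return blocks, stats["peak"]


if __name__ == "__main__":
    servers = [f"s{i}" for i in range(16)]
    blocks, peak = asyncio.run(gather_with_limit(servers, max_in_flight=4))
    print(len(blocks), "blocks, peak concurrency", peak)
```

The trade-off is latency for a single request versus the risk of a timeout: a lower limit serializes more of the work, but as the timeout arithmetic shows, avoiding even one RTO is usually worth several extra milliseconds.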

4. Utilizing Network Coding and Multipath Transport

Network coding and multipath transport are more advanced techniques for mitigating incast. With network coding, senders or intermediate nodes transmit combinations of packets rather than the packets themselves, which can reduce the number of transmissions required and lets a receiver reconstruct lost data from the redundant combinations it did receive instead of waiting for retransmissions. Multipath transport protocols, such as Multipath TCP (MPTCP), split a connection across several network paths to balance load across the fabric. For a single-homed receiver, however, all paths still converge on its last-hop link, which is where incast congestion typically occurs.
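The loss-recovery benefit of coding can be illustrated with its simplest special case. The sketch below is an end-to-end XOR parity code, not full network coding (which, for example in random linear network coding, mixes packets at intermediate nodes over a finite field). The sender appends one parity packet per group, and the receiver can rebuild any single lost packet without waiting for a retransmission timeout. Function names and packet contents are hypothetical.

```python
from __future__ import annotations

from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Bytewise XOR of two equal-length packets."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode_with_parity(packets: list[bytes]) -> list[bytes]:
    """Append one parity packet: the XOR of all data packets."""
    if len({len(p) for p in packets}) != 1:
        raise ValueError("packets must be non-empty and of equal length")
    return packets + [reduce(xor_bytes, packets)]


def recover(received: list[bytes | None]) -> list[bytes]:
    """Rebuild the data packets when at most one packet (data or parity) was lost."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) > 1:
        raise ValueError("a single parity packet can repair only one loss")
    if missing:
        present = [p for p in received if p is not None]
        received = list(received)
        # XOR of everything that arrived equals the one packet that did not.
        received[missing[0]] = reduce(xor_bytes, present)
    return received[:-1]  # drop the parity packet


if __name__ == "__main__":
    data = [b"abcd", b"efgh", b"ijkl"]
    coded = encode_with_parity(data)
    lossy = [coded[0], None, coded[2], coded[3]]  # second data packet lost
    print(recover(lossy) == data)
```

The cost is one extra packet per group (here a 33% overhead for groups of three); larger groups reduce overhead but tolerate proportionally fewer losses.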

5. Implementing Rate Limiting and Traffic Shaping

Rate limiting and traffic shaping are network-level controls on how fast traffic enters the network. By capping the rate and burst size of each server, or group of servers, sending toward a given receiver, network operators can keep the aggregate arrival rate at the bottleneck port close to its link rate, which reduces the likelihood of the buffer overflows that lead to incast.
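A token bucket is the classic mechanism behind both rate limiting and shaping. It allows a sustained rate plus a bounded burst. The sketch below is a minimal sender-side version with hypothetical parameters. As an illustrative sizing rule (an assumption, not a standard), one might give each of N senders about 1/N of the receiver's link rate and choose burst sizes so that N bursts together fit in the switch buffer. The injectable clock makes the behavior easy to test.

```python
import time


class TokenBucket:
    """Token-bucket limiter: sustained rate plus a bounded burst, in bytes."""

    def __init__(self, rate_bytes_per_s: float, burst_bytes: float,
                 clock=time.monotonic):
        self.rate = rate_bytes_per_s
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start full: one burst may go immediately
        self.clock = clock
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now

    def try_send(self, nbytes: int) -> bool:
        """Consume tokens and return True if nbytes may be sent now."""
        self._refill()
        if nbytes <= self.tokens:
            self.tokens -= nbytes
            return True
        return False  # caller should queue or delay the packet


if __name__ == "__main__":
    bucket = TokenBucket(rate_bytes_per_s=125_000_000, burst_bytes=15_000)
    sent = sum(bucket.try_send(1500) for _ in range(20))
    print(f"{sent} of 20 back-to-back packets admitted immediately")
```

Capping the burst bounds how much any one sender can add to a synchronized spike, while the refill rate bounds its long-run share of the bottleneck link.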

Conclusion

The TCP incast problem presents a significant challenge for data center networks, particularly as the demand for high-speed data processing continues to grow. While there is no one-size-fits-all solution, a combination of strategies—including increasing buffer sizes, modifying TCP algorithms, employing application-level synchronization, utilizing advanced networking techniques, and implementing traffic management policies—can effectively mitigate the issue. By understanding and addressing the specific causes of TCP incast, network administrators and developers can improve the performance and reliability of data center networks, ensuring they can meet the demands of modern applications.

From 5G NR to SDN and quantum-safe encryption, the digital communication landscape is evolving faster than ever. For R&D teams and IP professionals, tracking protocol shifts, understanding standards like 3GPP and IEEE 802, and monitoring the global patent race are now mission-critical.

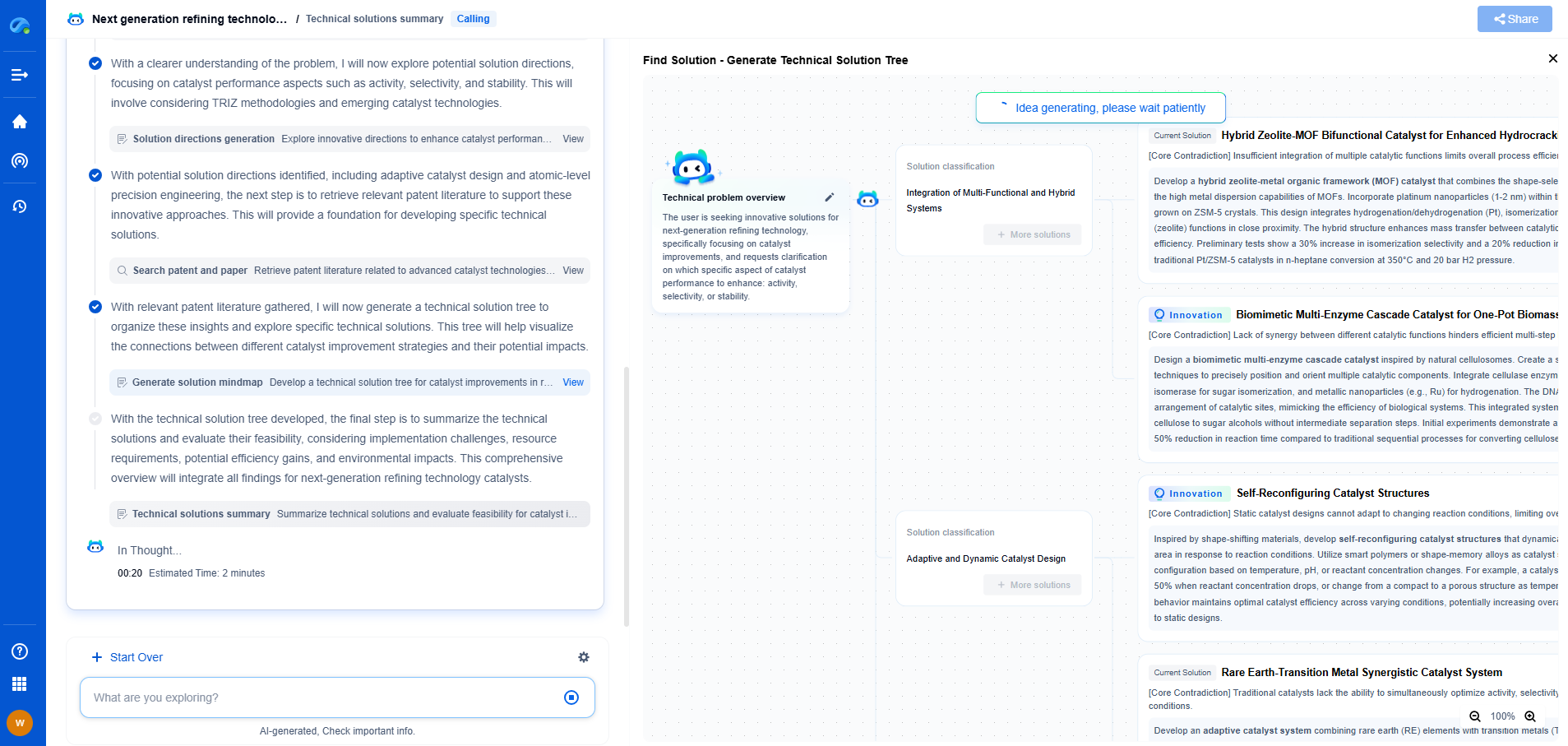

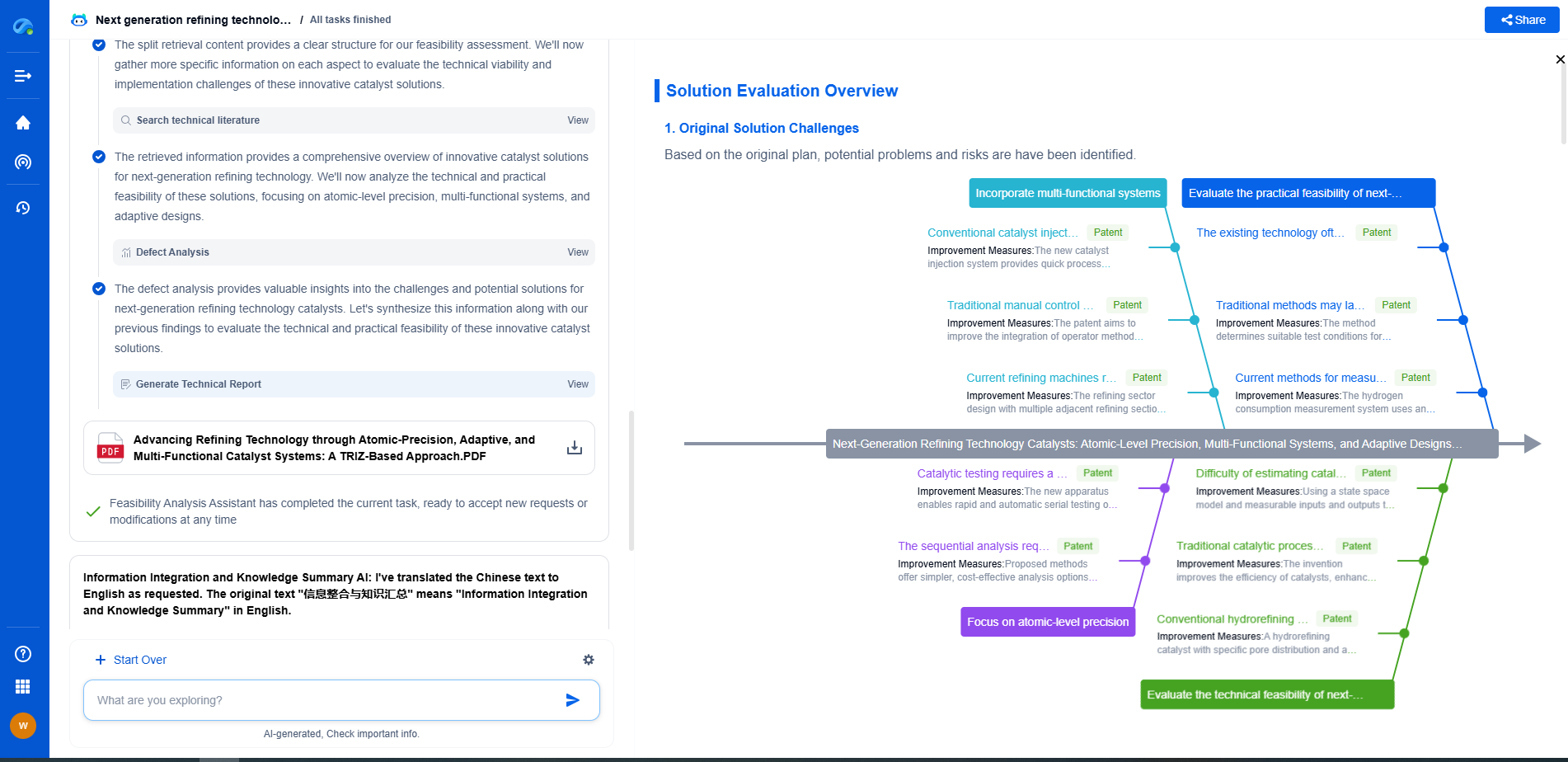

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

📡 Experience Patsnap Eureka today and unlock next-gen insights into digital communication infrastructure, before your competitors do.