The Future of Rendering Engines: Will Neural Models Replace Raster Pipelines?

JUL 10, 2025

The rendering engine landscape is on the cusp of a transformation, driven by the emergence of neural models that promise to revolutionize how we generate images and graphics. For decades, traditional raster pipelines have dominated the industry, providing the backbone for everything from video games to cinematic special effects. However, as neural rendering technology advances, it raises the question: will these new models eventually replace raster pipelines?

The Traditional Raster Pipeline

To understand the potential of neural models, we first need to understand how the raster pipeline works. Rasterization projects 3D geometry, typically triangles, onto the 2D screen and determines which pixels each primitive covers. Each covered pixel is then shaded. This process relies on well-established algorithms that account for geometry, lighting, and texture to produce realistic images. Rasterization is highly efficient and, with the support of powerful GPUs, capable of real-time rendering, but it has its limitations.
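To make the 3D-to-2D conversion above concrete, the sketch below decides which pixels a single triangle covers by testing each pixel center against the triangle's three edges. It is a minimal illustration, not a production pipeline: it assumes the triangle has already been projected to screen space, and it omits depth testing, shading, and texturing. The names `edge` and `rasterize_triangle` are hypothetical.

```python
def edge(ax, ay, bx, by, px, py):
    """Signed area term: its sign tells which side of edge A->B the point P is on."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def rasterize_triangle(v0, v1, v2, width, height):
    """Return a height x width grid of 0/1 coverage for one screen-space triangle."""
    grid = [[0] * width for _ in range(height)]
    area = edge(*v0, *v1, *v2)
    if area == 0:
        return grid  # degenerate triangle covers nothing

    for y in range(height):
        for x in range(width):
            px, py = x + 0.5, y + 0.5  # sample at the pixel center
            w0 = edge(*v1, *v2, px, py)
            w1 = edge(*v2, *v0, px, py)
            w2 = edge(*v0, *v1, px, py)
            # The point is inside when all three terms share the sign of the area,
            # so the test works for either winding order.
            if area > 0:
                inside = w0 >= 0 and w1 >= 0 and w2 >= 0
            else:
                inside = w0 <= 0 and w1 <= 0 and w2 <= 0
            if inside:
                grid[y][x] = 1
    return grid


if __name__ == "__main__":
    for row in rasterize_triangle((0, 0), (4, 0), (0, 4), 4, 4):
        print("".join("#" if c else "." for c in row))
```

A real GPU performs this test in parallel over many pixels and restricts it to the triangle's bounding box. The nested loop here only shows the logic.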

Raster pipelines can struggle with complex lighting scenarios like global illumination and accurate reflections, often requiring additional techniques such as ray tracing to enhance visual fidelity. These add-ons, however, come at the cost of increased computational demand.

The Rise of Neural Rendering

Neural rendering takes a different approach: it uses machine learning models to generate or refine images. Instead of manually programming every aspect of how light interacts with surfaces, developers train neural models on large datasets, and the models learn patterns of natural light and shadow that they can then reproduce in highly realistic images.
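The contrast between programming a lighting rule and learning it from examples can be shown at toy scale. The sketch below is only an analogy under stated assumptions: it fits a two-parameter linear model, not a neural network, and the "dataset" is generated from a hand-written Lambert diffuse rule with an assumed albedo of 0.8. The function names are hypothetical.

```python
def lambert(cos_theta, albedo=0.8):
    """Hand-written rule: diffuse brightness scales with the cosine term."""
    return albedo * max(0.0, cos_theta)


def fit_linear(samples, lr=0.5, steps=2000):
    """Fit brightness ~ w * cos_theta + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in samples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in samples) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Training data: (cosine term, observed brightness) pairs.
# Here they come from the hand-written rule; in practice they would be measured or rendered.
samples = [(i / 10, lambert(i / 10)) for i in range(11)]
w, b = fit_linear(samples)

if __name__ == "__main__":
    print(f"learned slope {w:.3f}, learned offset {b:.3f}")
```

The fitted slope recovers the albedo without the rule ever being written into `fit_linear`. Actual neural renderers replace the linear model with deep networks and learn far richer relationships.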

A key advantage of neural rendering is that, for certain tasks, it can produce photorealistic images with less computational overhead than conventional methods. Techniques like neural style transfer and deep image synthesis have already demonstrated impressive capabilities, pointing toward a future where neural networks might handle tasks that once required complex algorithms and high-end hardware.

Comparing the Two Approaches

The potential for neural models to replace raster pipelines hinges on several factors:

1. Performance: Neural rendering can outperform traditional methods in specific scenarios, such as simulating intricate light interactions or generating faces and scenes that are difficult to distinguish from photographs. However, these models currently struggle with real-time applications because inference is expensive at interactive frame rates. Training adds a separate, up-front computational cost.

2. Flexibility: Neural networks offer flexibility by learning from data, making them adaptable to various styles and artistic needs. In contrast, raster pipelines require explicit programming for different effects and styles.

3. Scalability: While rasterization scales well with current hardware, neural models benefit from advances in AI hardware, suggesting that they might become more viable as dedicated neural processing units become widespread.

Challenges and Opportunities

Despite their promise, neural models face challenges that must be overcome before they can fully supplant raster pipelines:

1. Real-Time Rendering: Achieving real-time performance with neural models remains a significant hurdle. Research is ongoing to optimize these models for faster inference and reduced latency.

2. Data Dependency: Neural rendering requires large datasets for training, which can be a limitation in developing unique or proprietary graphics.

3. Integration: Transitioning from raster pipelines to neural models requires significant changes to the industry's infrastructure and tooling.
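The real-time hurdle in item 1 comes down to simple arithmetic: at a target frame rate, every stage of rendering has to fit inside a fixed time budget per frame. The sketch below computes that budget and checks a set of per-pass timings against it. The timings are hypothetical placeholders, not measurements, and the function names are illustrative.

```python
def frame_budget_ms(target_fps):
    """Time available per frame, in milliseconds, at the given frame rate."""
    return 1000.0 / target_fps


def fits_budget(pass_times_ms, target_fps):
    """True when the summed pass times fit within one frame at target_fps."""
    return sum(pass_times_ms.values()) <= frame_budget_ms(target_fps)


# Hypothetical timings for illustration only.
passes = {"raster": 6.0, "neural_inference": 12.0}

if __name__ == "__main__":
    for fps in (30, 60):
        print(fps, "fps budget:", round(frame_budget_ms(fps), 2), "ms,",
              "fits" if fits_budget(passes, fps) else "over budget")
```

With these placeholder numbers the two passes total 18 ms, which fits a 30 fps budget of about 33.3 ms but not a 60 fps budget of about 16.7 ms. This is why faster inference is the focus of ongoing research.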

On the flip side, neural rendering presents vast opportunities. It opens new avenues for creativity, allowing artists to manipulate images in ways that were previously impossible. Moreover, automating tedious aspects of rendering could free up creative resources, enabling developers to focus on innovation.

The Future Outlook

While neural models are unlikely to completely replace raster pipelines in the immediate future, they will increasingly complement them. Hybrid approaches, where neural networks handle specific tasks while rasterization covers others, could become the norm. Developers and artists will likely adopt a best-of-both-worlds approach, integrating neural models to enhance the capabilities of existing systems.
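One way to picture such a hybrid arrangement is as a chain of stages: a raster stage produces an image, and a second stage post-processes it. The sketch below shows only that structure, under loud assumptions. `raster_pass` is a stand-in that emits a hard-edged test image, and `smooth_pass` is a fixed three-tap filter standing in for where a trained network (for example, a learned denoiser or upscaler) would sit. Neither is a real renderer or a real model, and all names are hypothetical.

```python
from typing import Callable, List

Image = List[List[float]]


def raster_pass(width: int, height: int) -> Image:
    """Stand-in raster stage: left half bright, right half dark, with a hard edge."""
    return [[1.0 if x < width // 2 else 0.0 for x in range(width)]
            for _ in range(height)]


def smooth_pass(image: Image) -> Image:
    """Placeholder for a learned post-process: a fixed 3-tap horizontal average.

    A trained network would replace this function; the filter is NOT a neural model.
    """
    out = []
    for row in image:
        last = len(row) - 1
        out.append([
            (row[max(x - 1, 0)] + row[x] + row[min(x + 1, last)]) / 3.0
            for x in range(len(row))
        ])
    return out


def hybrid_render(raster: Callable[[int, int], Image],
                  post: Callable[[Image], Image],
                  width: int, height: int) -> Image:
    """Run the raster stage, then hand its output to the post-process stage."""
    return post(raster(width, height))


if __name__ == "__main__":
    for row in hybrid_render(raster_pass, smooth_pass, 4, 2):
        print([round(v, 2) for v in row])
```

Because each stage is passed in as a function, either side can be swapped independently, which mirrors the idea of letting neural networks take over specific tasks while rasterization covers the rest.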

As technology progresses, we may see a gradual shift towards neural rendering, especially as the demands for hyper-realistic graphics in gaming, film, and virtual reality continue to grow. The evolution of rendering technology will likely be marked by a convergence of traditional and neural methodologies, paving the way for unprecedented advancements in visual experiences.

Conclusion

The future of rendering engines is not an either-or proposition between neural models and raster pipelines. Instead, it is a dynamic interplay of technologies, each contributing to the ever-evolving landscape of graphic rendering. As neural models advance and hardware becomes more capable, we can anticipate a future where rendering engines leverage the strengths of both approaches to deliver stunning visual experiences. This interplay will define the next generation of rendering technology, transforming how we perceive and interact with digital worlds.

Image processing technologies—from semantic segmentation to photorealistic rendering—are driving the next generation of intelligent systems. For IP analysts and innovation scouts, identifying novel ideas before they go mainstream is essential.

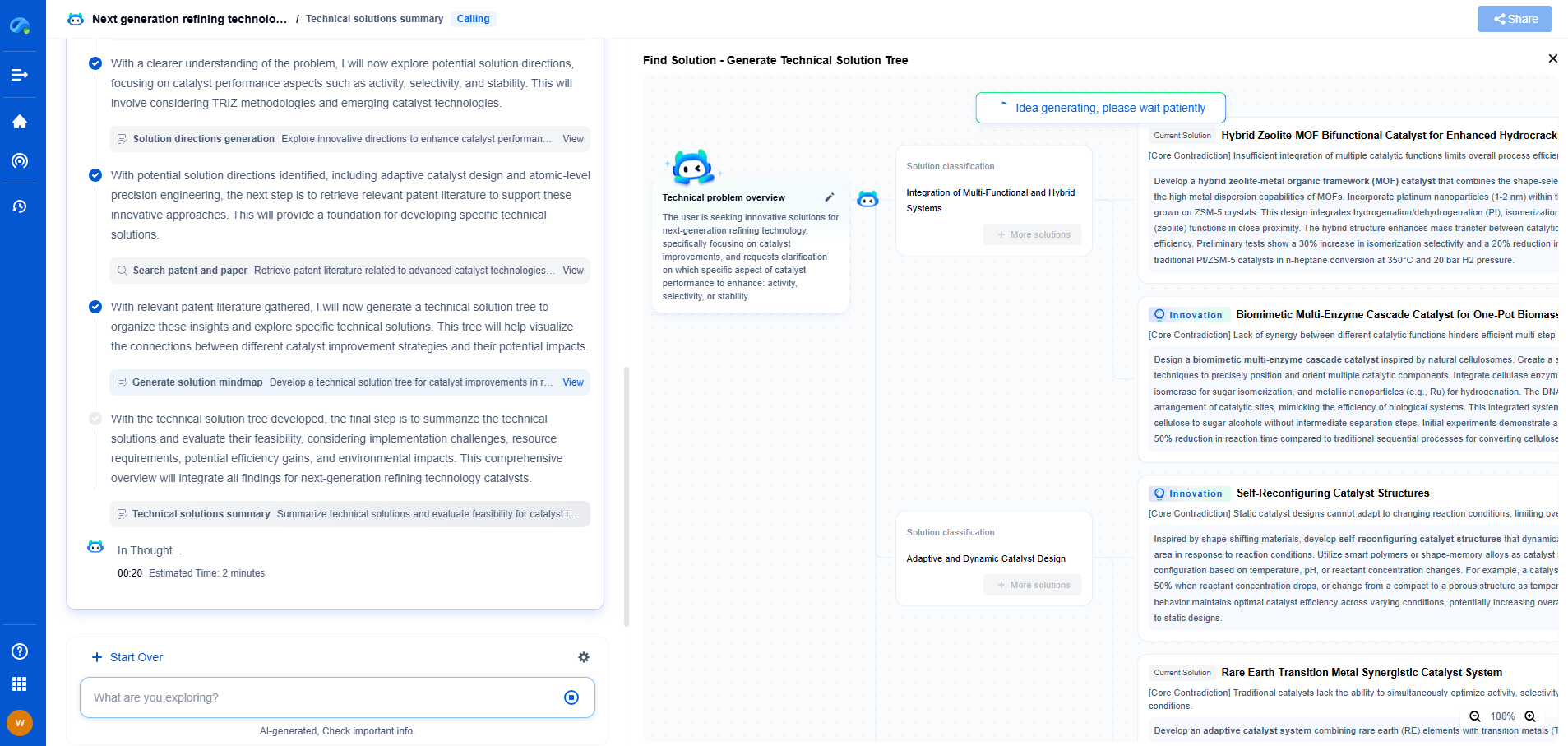

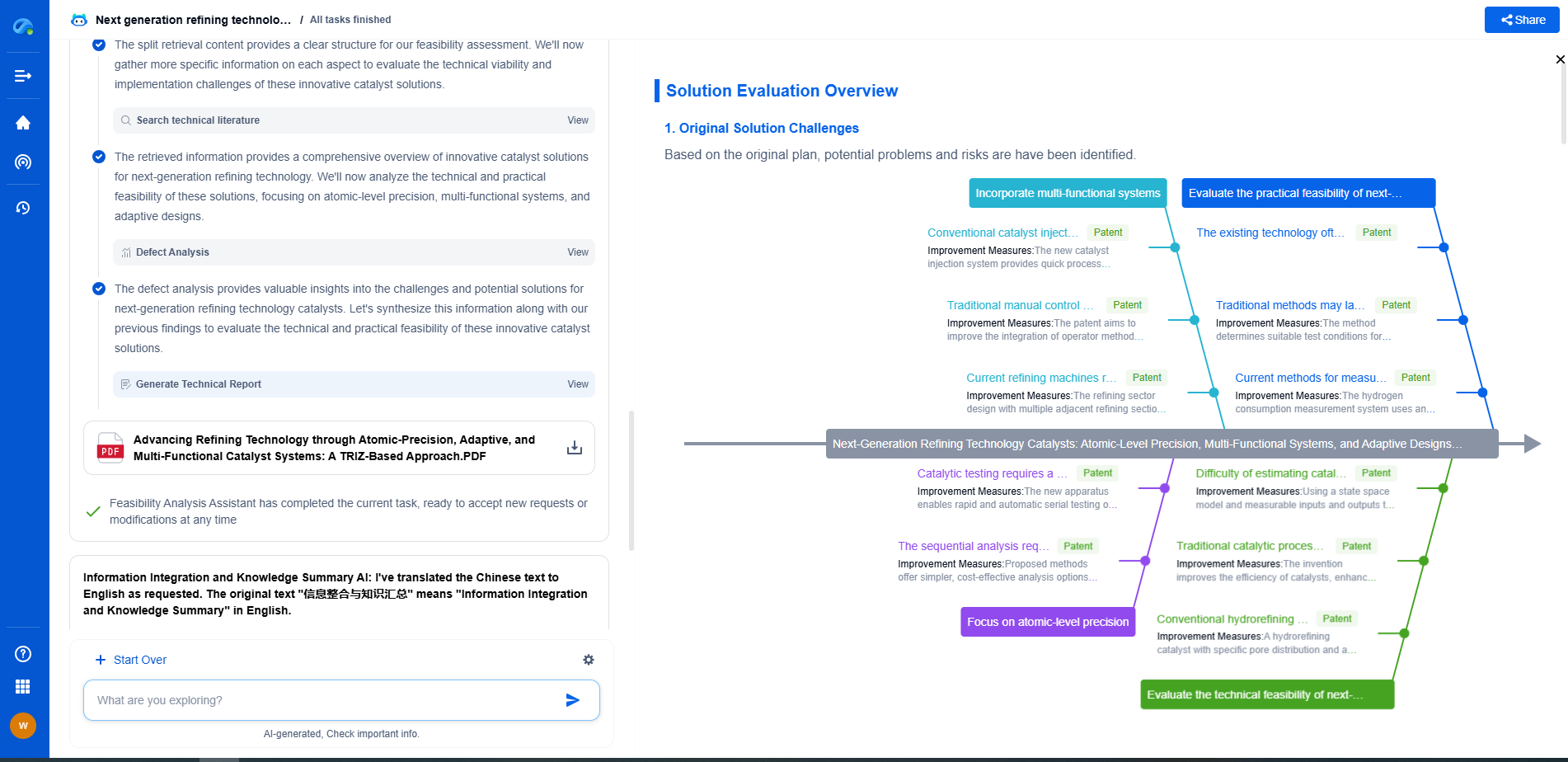

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

🎯 Try Patsnap Eureka now to explore the next wave of breakthroughs in image processing, before anyone else does.