The Latency vs. Throughput Balancing Act in Scheduling

JUL 7, 2025

In system design and performance optimization, two metrics come up again and again: latency and throughput. Both are essential to understanding how a system behaves under load. Latency is the time a single task takes to complete, typically measured as the delay between a request and its response. Throughput is the number of tasks or transactions a system completes in a given period, which makes it a gauge of the system's overall capacity.
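To make the two definitions concrete, here is a minimal sketch that derives both metrics from the same set of task timestamps. The `summarize` function and the sample timestamps are hypothetical and only illustrate the arithmetic.

```python
from statistics import mean

def summarize(tasks):
    """Compute latency and throughput from (submit_time, finish_time) pairs in seconds."""
    # Latency is a per-task quantity: how long each task waited for its result.
    latencies = [finish - submit for submit, finish in tasks]
    # Throughput is a whole-system quantity: tasks completed per unit of wall-clock time.
    window = max(finish for _, finish in tasks) - min(submit for submit, _ in tasks)
    return {
        "mean_latency_s": mean(latencies),
        "max_latency_s": max(latencies),
        "throughput_per_s": len(tasks) / window,
    }

# Hypothetical data: four tasks submitted together and finished one after another.
sample = [(0.0, 0.5), (0.0, 1.0), (0.0, 1.5), (0.0, 2.0)]
stats = summarize(sample)
print(stats)
```

The same run yields one throughput figure but a different latency for every task, which is why the two metrics can move independently.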

The Latency-Throughput Trade-Off

In scheduling, latency and throughput often pull in opposite directions. Raising throughput can raise latency, and optimizing for low latency can constrain throughput. For example, a system that prioritizes throughput may batch tasks so that fixed per-operation overhead is shared across many requests. More tasks are processed overall, but each task may have to wait for its batch to fill and then for the whole batch to finish, so its individual latency goes up.
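The batching trade-off can be sketched with a simple cost model. The model and its numbers are assumptions chosen for illustration: each batch pays a fixed overhead plus a per-task cost, and every task in a batch finishes when the batch finishes. Time spent waiting for a batch to fill is ignored, so real latency would be higher still.

```python
def batch_metrics(batch_size, overhead_s=0.010, per_task_s=0.001):
    """Return (throughput in tasks/s, per-task latency in s) under the assumed model."""
    # One batch costs a fixed overhead plus the work for each task in it.
    batch_time = overhead_s + batch_size * per_task_s
    # Throughput: tasks completed per second of processing time.
    throughput = batch_size / batch_time
    # Latency: each task's result is available only when its batch completes.
    latency = batch_time
    return throughput, latency

for size in (1, 10, 100):
    throughput, latency = batch_metrics(size)
    print(f"batch={size:>3}  throughput={throughput:7.1f}/s  latency={latency * 1000:6.1f} ms")
```

Under these assumptions, larger batches spread the fixed overhead over more tasks, so throughput climbs toward the `1 / per_task_s` limit while the latency of every task grows with the batch size.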

Strategies for Balancing Latency and Throughput

Achieving an optimal balance between latency and throughput involves several strategies:

1. **Task Prioritization**: By prioritizing tasks based on urgency or importance, systems can ensure that critical tasks are completed with lower latency. Implementing priority queues can help in managing this effectively.

2. **Load Balancing**: Distributing tasks evenly across available resources can prevent bottlenecks, thereby reducing latency and maintaining throughput. Load balancers can dynamically allocate tasks to less busy servers or processors.

3. **Concurrency Control**: Running tasks concurrently, for example on multiple threads or across several cores, can improve both latency and throughput by letting the system work on many tasks at once. Access to shared state still has to be coordinated so that concurrent tasks do not interfere with one another.

4. **Adaptive Scheduling**: Adaptive scheduling algorithms adjust to current load conditions. Based on real-time data, they can switch between favoring low latency and favoring high throughput, for instance by processing tasks individually when load is light and batching them when load is heavy.

5. **Caching**: By serving repeated requests from a cache instead of recomputing or refetching the result, systems can respond faster, which lowers latency. Because cached requests never reach the primary processing path, caching also frees capacity there and can improve throughput.
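As an illustration of the first strategy, the following is a minimal sketch of a priority-queue scheduler built on Python's standard `heapq` module. The `PriorityScheduler` class, the task names, and the convention that a lower number means a more urgent task are all assumptions made for this example.

```python
import heapq
import itertools

class PriorityScheduler:
    """Hands out the most urgent task first (lower number = more urgent)."""

    def __init__(self):
        self._heap = []
        # Monotonic counter used as a tie-breaker so that tasks with the
        # same priority are served in submission (FIFO) order.
        self._counter = itertools.count()

    def submit(self, priority, name):
        heapq.heappush(self._heap, (priority, next(self._counter), name))

    def next_task(self):
        """Pop and return the most urgent task name, or None if the queue is empty."""
        if not self._heap:
            return None
        _, _, name = heapq.heappop(self._heap)
        return name

    def __len__(self):
        return len(self._heap)

sched = PriorityScheduler()
sched.submit(2, "nightly-report")
sched.submit(0, "user-request")
sched.submit(1, "cache-refresh")
sched.submit(0, "health-check")

order = [sched.next_task() for _ in range(len(sched))]
print(order)
# ['user-request', 'health-check', 'cache-refresh', 'nightly-report']
```

Strict priority ordering keeps latency low for urgent tasks, but a steady stream of them can starve low-priority work. Schedulers commonly counter this by gradually raising the priority of tasks that have waited a long time.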

The Role of Context

The importance of balancing latency and throughput can vary based on the context and specific requirements of a system. In real-time systems, such as video conferencing or online gaming, low latency is often prioritized to ensure a seamless user experience. Conversely, in high-volume data processing environments, like batch processing in data centers, throughput might be the primary focus to handle massive amounts of data efficiently.

The Human Element

One must not forget the human element in this balancing act. End-user expectations and satisfaction often dictate the acceptable levels of latency and throughput. Understanding user behavior and preferences can guide system design to meet these expectations while maintaining efficient operation.

Conclusion: Striking the Right Balance

Balancing latency and throughput in scheduling is an ongoing challenge that requires careful consideration of system requirements, user needs, and available resources. No single setting suits every workload, so the practical approach is to combine the strategies above and revisit the balance as load and expectations change. As technology evolves, so will the methods for managing these two metrics.

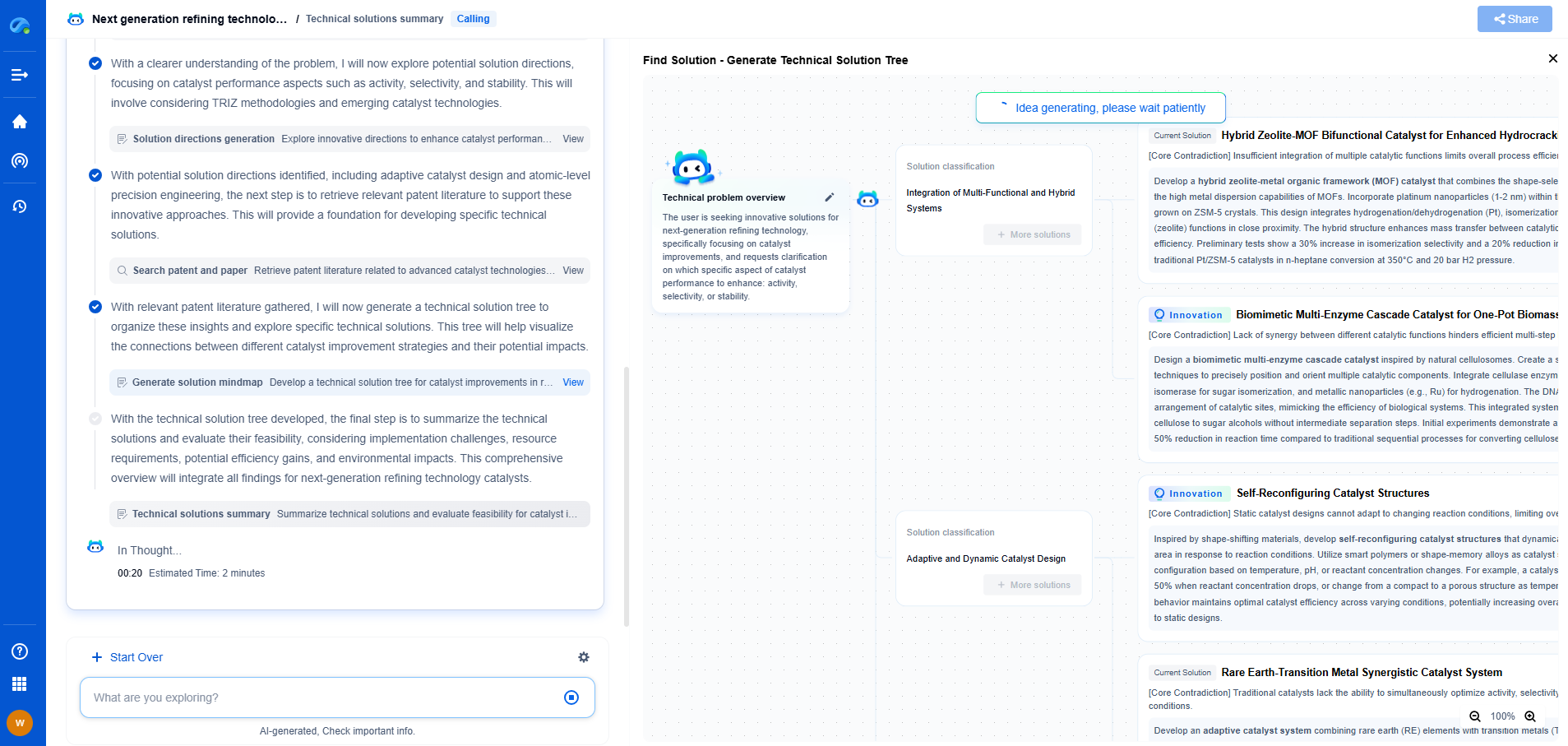

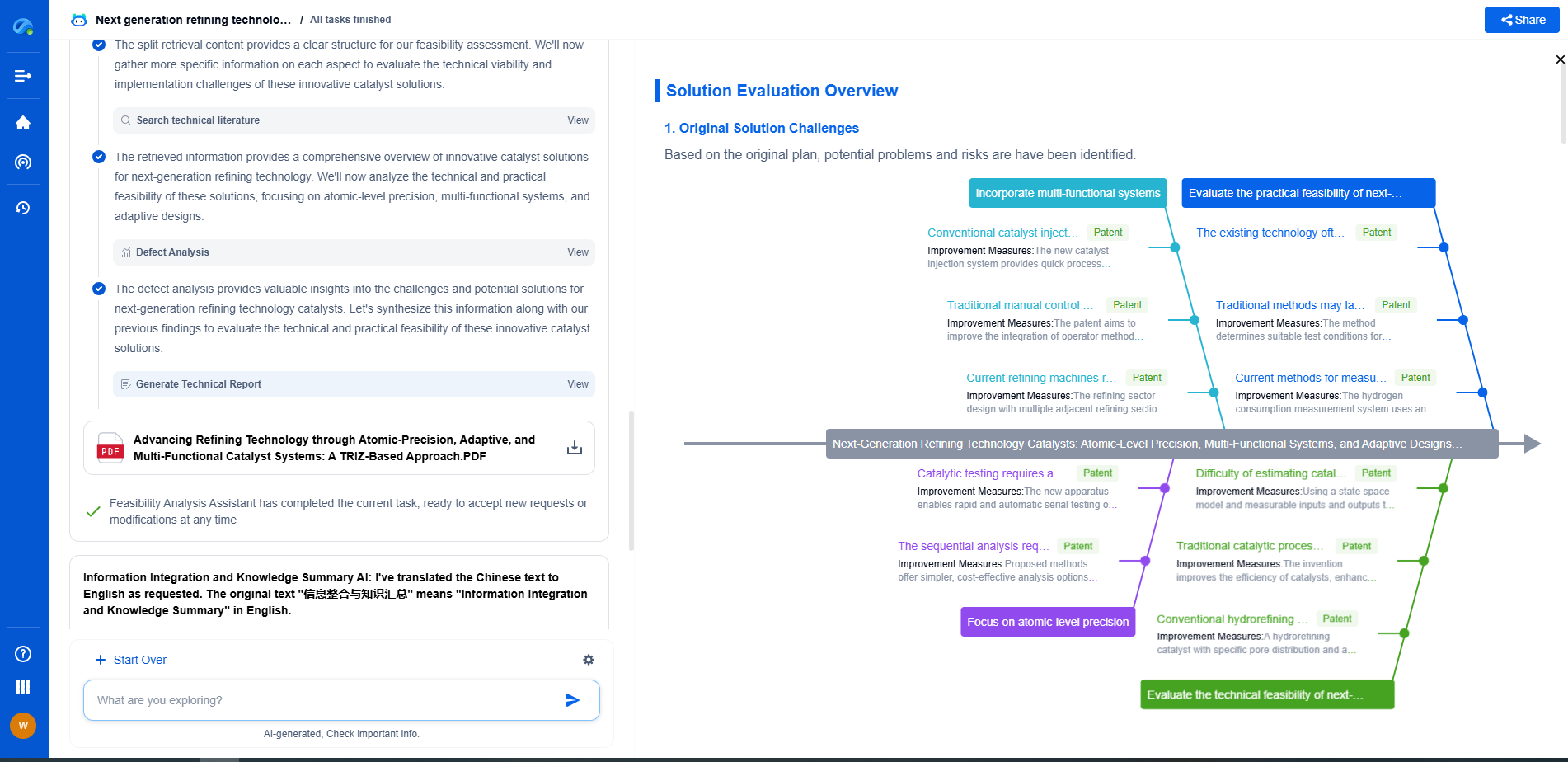
