Troubleshooting Latency in Edge AI Control Applications

JUL 2, 2025

Edge AI processes data and makes decisions close to where the data is generated, which matters most in control applications that require immediate responses. Latency, however, can significantly degrade the performance of these applications. It is inherent in networked systems, and on-device processing adds delays of its own. Troubleshooting starts with understanding where latency comes from and which strategies mitigate its impact.

Sources of Latency

Latency in Edge AI applications can arise from several sources, each contributing to delays in data processing and response times. Identifying these sources is the first step in troubleshooting and addressing latency issues.

1. Network Latency: The time taken for data to travel from the edge device to the server and back can introduce significant delays. Network latency can be exacerbated by bandwidth constraints, network congestion, or the physical distance between the edge device and the data center.

2. Processing Delays: Edge devices often have limited computational resources compared to centralized servers. As a result, processing complex AI models can take longer, contributing to latency. Additionally, the efficiency of the algorithms used can greatly affect processing times.

3. Data Transfer Bottlenecks: The volume of data that needs to be transferred between the edge and the cloud can create bottlenecks, especially if the data is not preprocessed or filtered appropriately at the edge.

4. Resource Contention: Multiple applications running on the same edge device can compete for resources such as CPU, memory, and bandwidth, leading to increased latency.
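One way to attribute delay to the sources listed above is to time each stage of the control cycle separately. The following is a minimal sketch, not a definitive implementation: the `StageTimer` class, the stage names, and the `time.sleep` calls standing in for real work are all hypothetical.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named pipeline stage (hypothetical helper)."""

    def __init__(self):
        # stage name -> list of measured durations in milliseconds
        self.samples = defaultdict(list)

    @contextmanager
    def stage(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            self.samples[name].append(elapsed_ms)

    def mean_ms(self):
        return {name: sum(v) / len(v) for name, v in self.samples.items()}

    def dominant_stage(self):
        """Name of the stage with the largest mean duration."""
        means = self.mean_ms()
        return max(means, key=means.get)


def run_cycle(timer):
    # Assumed stages of one control cycle; the sleeps are placeholders.
    with timer.stage("preprocess"):
        time.sleep(0.001)
    with timer.stage("inference"):
        time.sleep(0.005)
    with timer.stage("network"):
        time.sleep(0.030)


timer = StageTimer()
for _ in range(5):
    run_cycle(timer)

# Print stages from slowest to fastest.
for name, ms in sorted(timer.mean_ms().items(), key=lambda kv: -kv[1]):
    print(f"{name:<12}{ms:8.2f} ms")
```

Timing stages individually, rather than only the end-to-end cycle, shows whether the network, the model, or the preprocessing step deserves attention first.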

Strategies for Reducing Latency

Optimizing latency in Edge AI applications involves a multi-faceted approach that includes technological, architectural, and algorithmic strategies.

1. Edge Computing: Processing data closer to its source reduces how often data must travel to centralized servers, which minimizes network latency. Adding more capable compute hardware at the edge can also reduce processing delays.

2. Efficient Algorithms: Lightweight algorithms that require less computational power reduce processing times. Quantization, pruning, and models designed specifically for edge deployment can make a substantial difference.

3. Data Preprocessing: Implementing data preprocessing techniques to filter, compress, or transform data at the edge can help reduce the volume of data that needs to be transferred, alleviating transfer bottlenecks.

4. Prioritization and Scheduling: Implementing intelligent scheduling and resource allocation techniques can help manage resource contention effectively, ensuring that critical tasks receive the necessary resources promptly.

5. Network Optimization: Techniques such as caching, load balancing, and leveraging Content Delivery Networks (CDNs) can optimize data flow across the network, reducing latency.
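As an illustration of the data preprocessing strategy in item 3, a send-on-delta (deadband) filter forwards a sensor reading only when it has changed by more than a threshold since the last forwarded value. This is a minimal sketch under stated assumptions: the `DeadbandFilter` class, the threshold of 0.5, and the sample readings are made up for the example, and a suitable threshold depends on the signal and the control requirements.

```python
class DeadbandFilter:
    """Send-on-delta filter (hypothetical helper): forward a sample only when it
    differs from the last forwarded value by more than `threshold`."""

    def __init__(self, threshold):
        self.threshold = threshold
        self.last_sent = None

    def should_send(self, value):
        # Always send the first sample; afterwards send only significant changes.
        if self.last_sent is None or abs(value - self.last_sent) > self.threshold:
            self.last_sent = value
            return True
        return False


# Illustrative readings, e.g. a slowly drifting temperature with one jump.
readings = [20.0, 20.1, 20.2, 20.9, 21.0, 21.1, 25.0, 25.1]
deadband = DeadbandFilter(threshold=0.5)
sent = [r for r in readings if deadband.should_send(r)]

print(sent)  # [20.0, 20.9, 25.0]
print(f"forwarded {len(sent)} of {len(readings)} samples")
```

The trade-off is that the receiver sees a coarser signal, so the threshold should stay below the smallest change the controller needs to react to.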

Monitoring and Analysis

To troubleshoot and optimize latency effectively, continuous monitoring and analysis of system performance are essential. Real-time monitoring tools help identify latency spikes and their causes promptly. By analyzing the collected latency metrics, such as per-stage processing time and network round-trip time, developers can pinpoint bottlenecks and implement targeted improvements.
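Spike detection of this kind can be sketched with a rolling baseline. The example below flags a sample as a spike when it exceeds a multiple of the recent median. The `LatencySpikeDetector` class, the window size, and the factor of 3 are assumptions chosen for illustration, not recommended values.

```python
import statistics
from collections import deque


class LatencySpikeDetector:
    """Flags samples far above the rolling median (hypothetical helper)."""

    def __init__(self, window=50, factor=3.0, min_samples=10):
        self.history = deque(maxlen=window)  # most recent latency samples, in ms
        self.factor = factor
        self.min_samples = min_samples

    def observe(self, latency_ms):
        """Record a sample and return True if it is a spike versus the recent median."""
        is_spike = False
        if len(self.history) >= self.min_samples:
            baseline = statistics.median(self.history)
            is_spike = latency_ms > self.factor * baseline
        # The median is robust to occasional outliers, so every sample is kept.
        self.history.append(latency_ms)
        return is_spike


# Illustrative latencies in milliseconds with one outlier at index 13.
samples = [10.0] * 12 + [11.0, 45.0, 10.5]
detector = LatencySpikeDetector()
spikes = [i for i, s in enumerate(samples) if detector.observe(s)]

print(spikes)  # [13]
```

Using the median rather than the mean keeps a single outlier from inflating the baseline and hiding the next spike.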

Additionally, leveraging AI and machine learning for predictive analysis can provide insights into potential future issues, allowing for proactive measures to prevent latency from escalating.
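As a deliberately simple stand-in for predictive analysis, the sketch below fits a straight line to recent latency samples and estimates how many more samples remain before the trend reaches a latency budget. It uses ordinary least squares rather than a machine learning model, and the function names, sample values, and 15 ms budget are all hypothetical.

```python
def fit_line(ys):
    """Ordinary least-squares fit of ys against sample indices 0..n-1.
    Returns (slope, intercept)."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2.0
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def samples_until_budget(ys, budget_ms):
    """Estimate how many more samples until the fitted trend reaches budget_ms.
    Returns 0.0 if the trend is already at or over budget, and None if the
    trend is flat or falling."""
    slope, intercept = fit_line(ys)
    current = intercept + slope * (len(ys) - 1)
    if current >= budget_ms:
        return 0.0
    if slope <= 0:
        return None
    return (budget_ms - current) / slope


# Illustrative history: latency creeping up by 0.5 ms per sample.
history = [10.0, 10.5, 11.0, 11.5, 12.0]
remaining = samples_until_budget(history, budget_ms=15.0)
print(remaining)  # 6.0
```

A linear trend only captures steady drift. Periodic load or sudden regime changes would need a richer model, but the same pattern of forecasting against a budget still applies.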

Conclusion

As Edge AI continues to play a crucial role in control applications, understanding and addressing latency becomes increasingly important. By identifying the sources of latency and combining strategies to mitigate their impact, developers can improve the performance and reliability of Edge AI systems. More efficient algorithms and architectures should make latency less of a hindrance over time, allowing Edge AI to deliver real-time, intelligent control.

Ready to Reinvent How You Work on Control Systems?

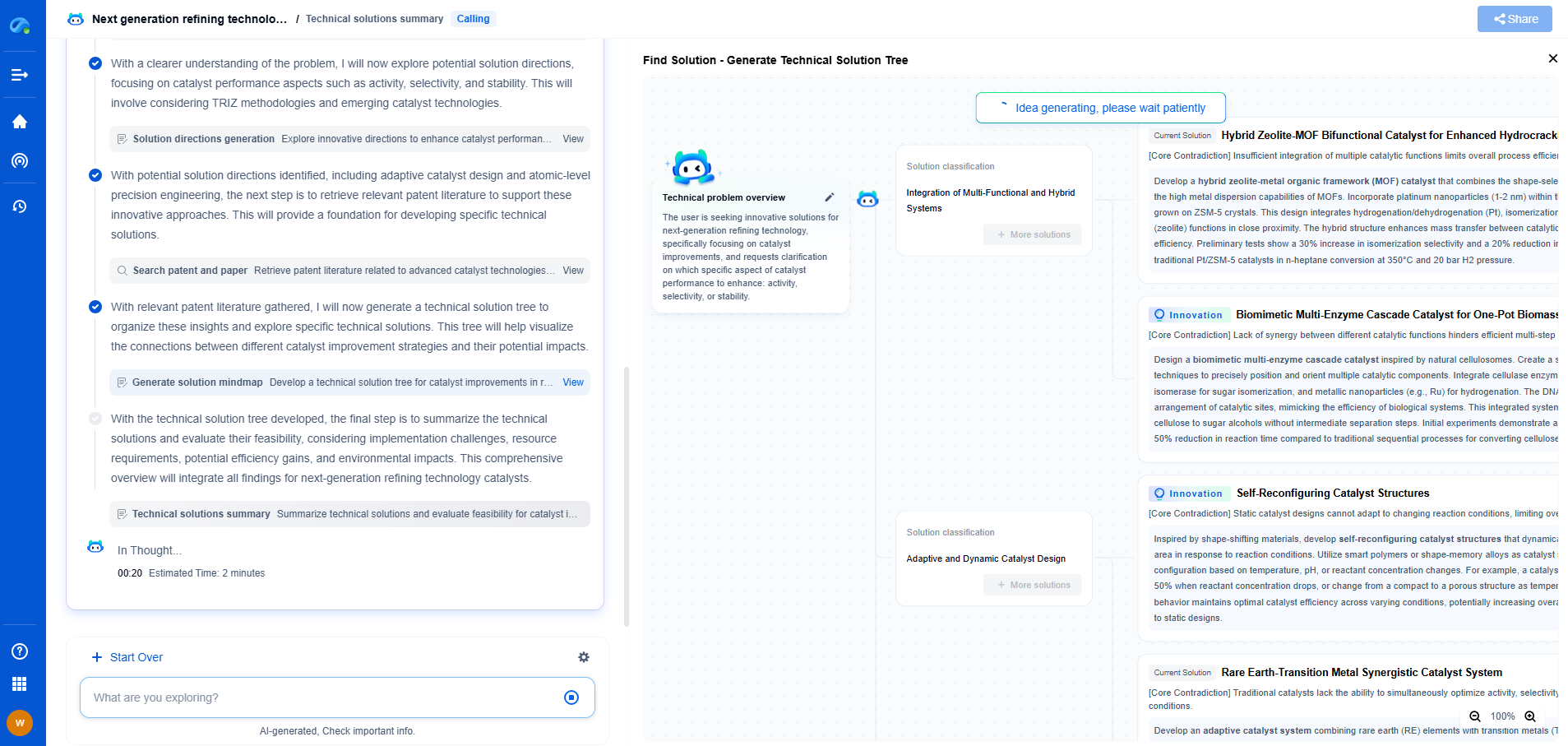

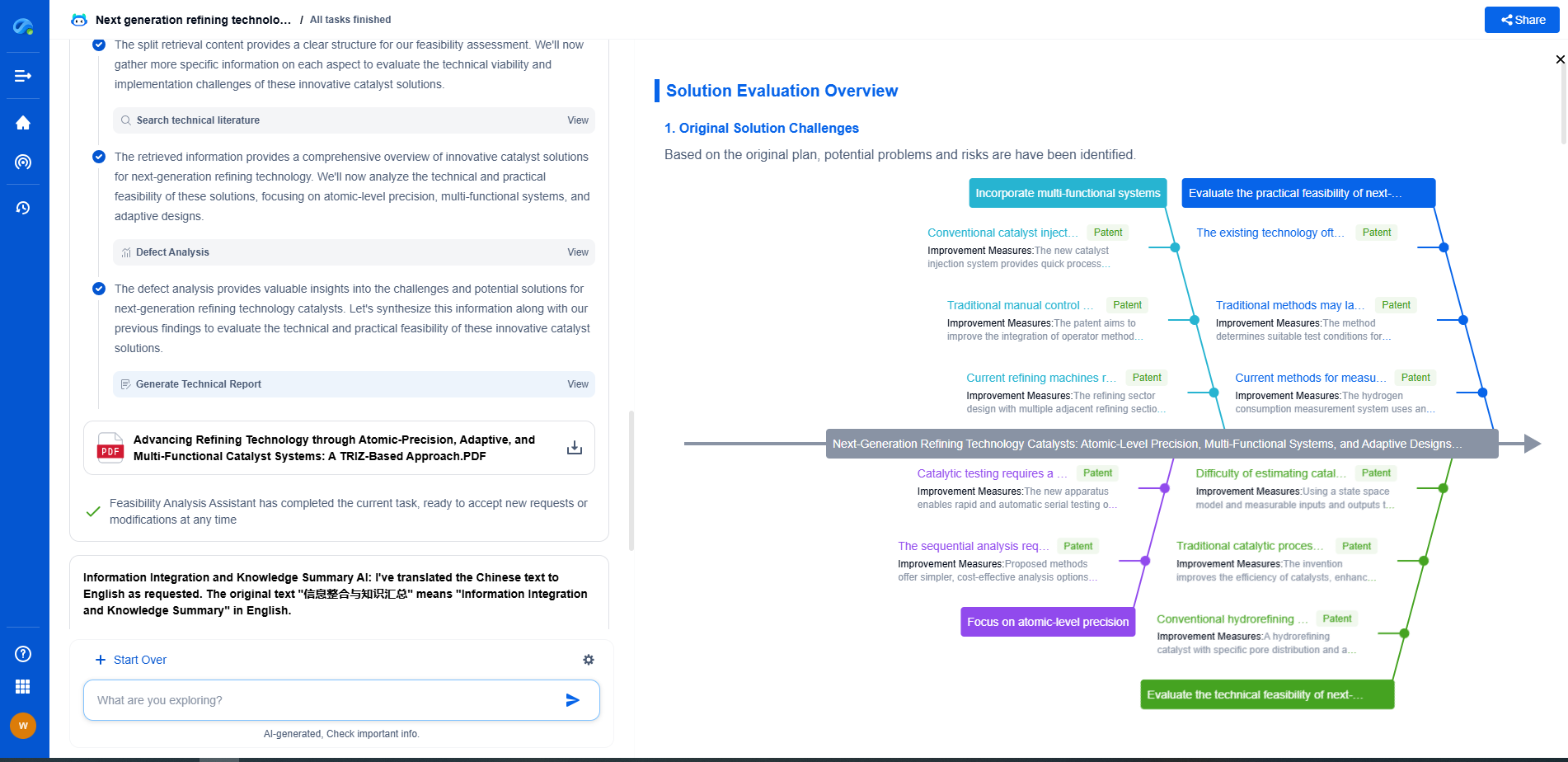

Designing, analyzing, and optimizing control systems involves complex decision-making, from selecting the right sensor configurations to ensuring robust fault tolerance and interoperability. If you’re spending countless hours digging through documentation, standards, patents, or simulation results — it's time for a smarter way to work.

Patsnap Eureka is your intelligent AI Agent, purpose-built for R&D and IP professionals in high-tech industries. Whether you're developing next-gen motion controllers, debugging signal integrity issues, or navigating complex regulatory and patent landscapes in industrial automation, Eureka helps you cut through technical noise and surface the insights that matter—faster.

👉 Experience Patsnap Eureka today — Power up your Control Systems innovation with AI intelligence built for engineers and IP minds.