What Is Neural Network Energy Optimization?

JUN 26, 2025

Neural networks, a cornerstone of artificial intelligence and machine learning, have revolutionized various fields by enabling tasks like image recognition, language translation, and more. However, the energy consumption associated with training and deploying these networks has become a growing concern. As neural networks become larger and more complex, their energy demands increase, raising questions about sustainability and efficiency. Neural network energy optimization seeks to address these concerns by minimizing energy consumption while maintaining or improving performance.

The Importance of Energy Efficiency in Neural Networks

Energy efficiency in neural networks is crucial for several reasons. First, the environmental impact of high energy consumption cannot be overlooked. Data centers housing powerful GPUs and TPUs consume vast amounts of electricity, contributing to carbon emissions. Second, efficient energy use can significantly reduce operational costs, which is vital for companies deploying large-scale neural networks. Finally, optimizing energy consumption can extend the battery life of mobile and embedded devices, making AI applications more practical and widespread.

Methods of Energy Optimization

Several methods have been developed to optimize the energy efficiency of neural networks, each with its unique approach and advantages.

1. Pruning and Quantization

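Pruning involves removing redundant neurons and connections from a network. This reduces the overall size of the model and can lower its energy demands, often with little loss of accuracy when the pruned model is fine-tuned afterward. The savings are easiest to realize when whole channels or neurons are removed, or when the hardware and software can skip zeroed weights. Quantization, on the other hand, reduces the precision of the weights and activations within the network, for example from 32-bit floating point to 8-bit integers. Lower precision can lead to faster computations and less energy consumption, especially when implemented on specialized hardware that supports low-precision arithmetic.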
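As a rough illustration of the two ideas above, the following Python sketch applies magnitude-based pruning (one common criterion, assumed here rather than taken from the article) and symmetric 8-bit quantization to a small list of weights. The function names and weight values are hypothetical and chosen only for the example; real frameworks operate on tensors and typically fine-tune after pruning.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices sorted from smallest to largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric linear quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float values."""
    return [v * scale for v in q]


# Hypothetical weights, for illustration only
weights = [0.82, -0.03, 0.41, 0.002, -0.67, 0.09, -0.15, 0.55]
pruned = magnitude_prune(weights, sparsity=0.5)  # half of the weights become 0.0
q, scale = quantize_int8(pruned)                 # 8-bit integers plus one float scale
restored = dequantize(q, scale)                  # approximation of the pruned weights
```

Each restored value differs from the pruned weight by at most half a quantization step (`scale / 2`), which is the precision given up in exchange for cheaper storage and arithmetic.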

2. Efficient Architectures

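Designing efficient neural network architectures is another way to optimize energy use. Techniques like depthwise separable convolutions, used in models such as MobileNet, split a standard convolution into a per-channel spatial filter followed by a 1x1 convolution, which reduces the number of computations needed and thereby saves energy. Additionally, attention mechanisms can be used to focus on the most relevant parts of the data, reducing the processing spent on irrelevant information. Attention has a cost of its own, however: standard self-attention scales quadratically with sequence length, so any net saving depends on how it is designed.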
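To make the computational saving concrete, the sketch below counts multiply-accumulate operations (MACs) for a standard convolution and for a depthwise separable one. It assumes stride 1 and padding that preserves the spatial size, and the layer dimensions are hypothetical. MAC count is only a proxy for energy, since memory traffic also matters.

```python
def standard_conv_macs(h, w, c_in, c_out, k):
    """MACs for a k x k standard convolution producing an h x w output."""
    return h * w * c_in * c_out * k * k


def depthwise_separable_macs(h, w, c_in, c_out, k):
    """MACs for a k x k depthwise convolution followed by a 1x1 pointwise one."""
    depthwise = h * w * c_in * k * k   # one k x k filter per input channel
    pointwise = h * w * c_in * c_out   # 1x1 convolution mixes the channels
    return depthwise + pointwise


# Hypothetical layer: 56x56 feature map, 64 -> 128 channels, 3x3 kernel
h, w, c_in, c_out, k = 56, 56, 64, 128, 3
standard = standard_conv_macs(h, w, c_in, c_out, k)
separable = depthwise_separable_macs(h, w, c_in, c_out, k)
ratio = separable / standard  # equals 1/c_out + 1/k**2

print(f"standard:  {standard:,} MACs")
print(f"separable: {separable:,} MACs")
print(f"ratio:     {ratio:.3f}")
```

For this layer the separable version needs roughly 12% of the MACs of the standard convolution, matching the closed-form ratio 1/c_out + 1/k².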

3. Adaptive and Dynamic Inference

Adaptive inference allows a neural network to adjust its computational effort based on the task's complexity. For instance, a model might use fewer resources when making predictions on simpler tasks. Dynamic inference goes a step further by enabling the network to decide in real-time which parts of the model to activate, thereby conserving energy on less critical computations.
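One common way to realize this idea is early exiting: cheaper stages run first, and later, more expensive stages run only if the prediction is not yet confident enough. The sketch below is a minimal illustration under that assumption. The stage functions, their costs, and the confidence threshold are all hypothetical stand-ins for real model components.

```python
def early_exit_predict(x, stages, threshold=0.9):
    """Run stages in order and stop at the first confident prediction.

    `stages` is a list of (predict_fn, cost) pairs, where predict_fn(x)
    returns (label, confidence). Returns (label, confidence, cost_spent).
    """
    spent = 0
    label, confidence = None, 0.0
    for predict, cost in stages:
        spent += cost
        label, confidence = predict(x)
        if confidence >= threshold:
            break  # confident enough: skip the remaining stages
    return label, confidence, spent


def cheap_stage(x):
    """Toy classifier: confident only when the input is far from zero."""
    label = "positive" if x >= 0 else "negative"
    return label, min(1.0, 0.5 + abs(x))


def full_stage(x):
    """Toy stand-in for the full model: always confident, but costly."""
    label = "positive" if x >= 0 else "negative"
    return label, 0.99


# Costs are arbitrary relative units, not measured energy
stages = [(cheap_stage, 1), (full_stage, 10)]

easy = early_exit_predict(2.0, stages)   # exits after the cheap stage
hard = early_exit_predict(-0.1, stages)  # falls through to the full stage
```

Here the easy input is resolved at a cost of 1 unit, while the hard input costs 11 units, so the average cost depends on how many inputs the cheap stage can handle on its own.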

4. Hardware and Software Co-optimization

Aligning neural network design with the capabilities of hardware can lead to significant energy savings. Custom hardware accelerators, such as Google's Tensor Processing Units (TPUs) and NVIDIA's Deep Learning Accelerator (DLA), are engineered to perform AI-specific computations more efficiently than general-purpose processors. Co-optimization also involves tailoring software to exploit these hardware capabilities, ensuring that energy use is minimized at both levels.

Challenges and Future Directions

Despite the promising strategies for neural network energy optimization, several challenges remain. Balancing energy efficiency with model accuracy is a delicate task, as aggressive optimization can degrade performance. Furthermore, the diversity of AI applications requires flexible solutions that can adapt to different requirements and constraints.

Future research is likely to focus on developing more sophisticated optimization techniques that can dynamically adjust to varying workloads and hardware environments. Continuous advancements in hardware technology will also play a crucial role, as newer generations of accelerators promise even greater efficiency.

Conclusion

Neural network energy optimization is an essential field of study as AI continues to integrate into every aspect of modern life. By pruning and quantizing models, adopting efficient architectures, leveraging adaptive inference, and aligning software with hardware capabilities, it is possible to create neural networks that are both powerful and sustainable. The pursuit of energy optimization not only addresses the environmental and economic impacts of AI but also paves the way for more practical and accessible AI applications across diverse platforms.

Stay Ahead in Power Systems Innovation

From intelligent microgrids and energy storage integration to dynamic load balancing and DC-DC converter optimization, the power supply systems domain is rapidly evolving to meet the demands of electrification, decarbonization, and energy resilience.

In such a high-stakes environment, how can your R&D and patent strategy keep up?

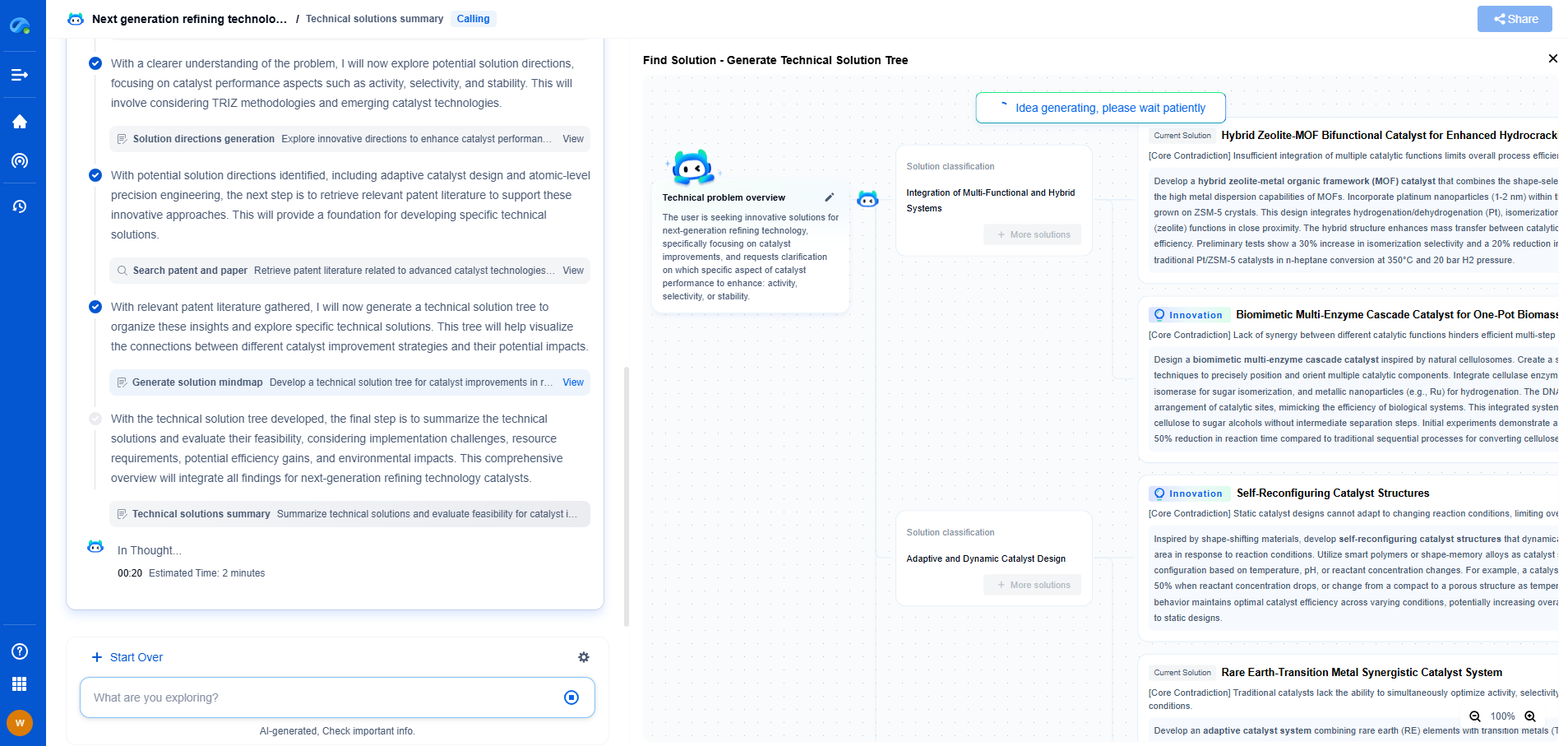

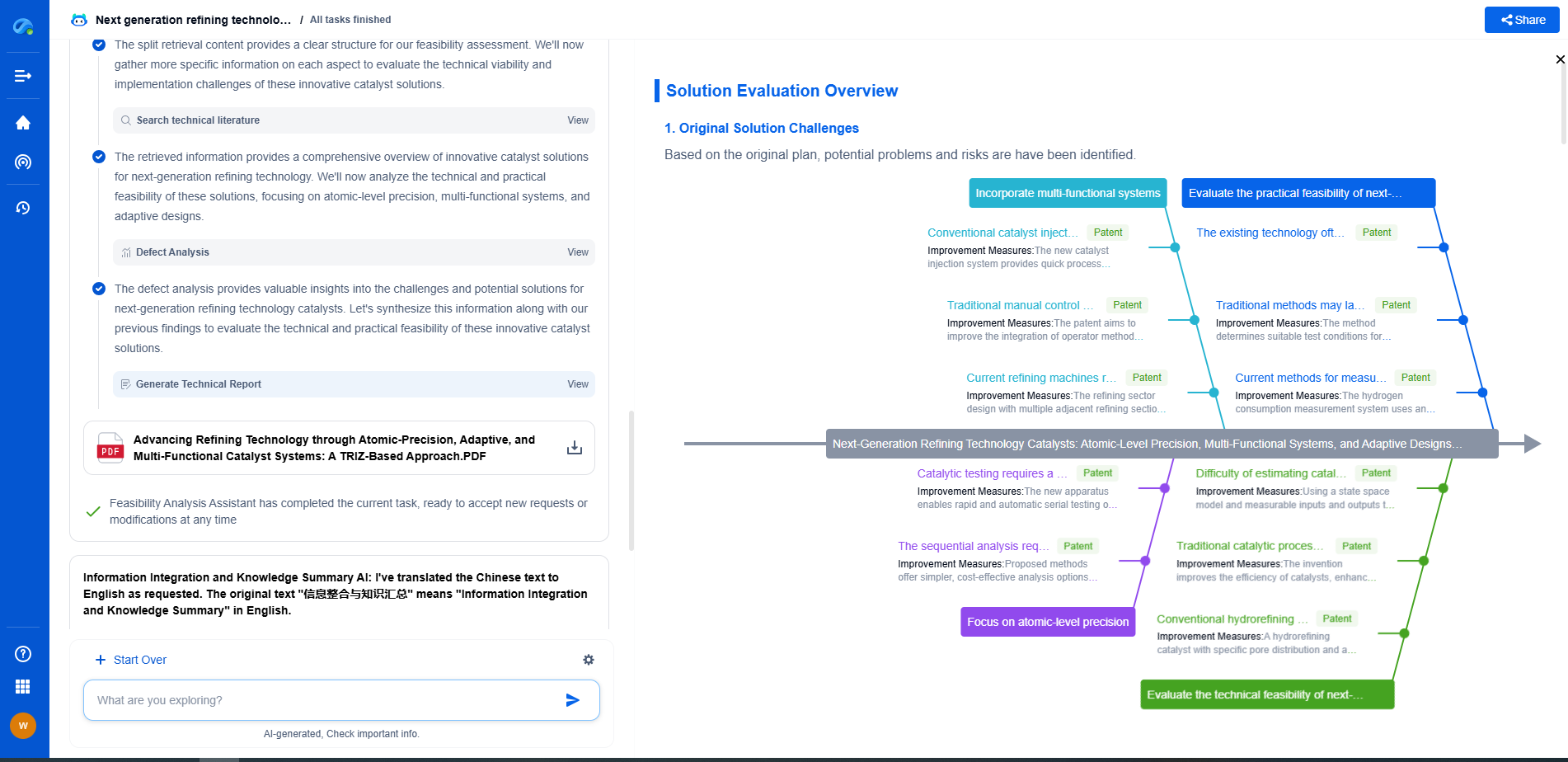

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

👉 Experience how Patsnap Eureka can supercharge your workflow in power systems R&D and IP analysis. Request a live demo or start your trial today.