What Is Reinforcement Learning in Cellular Handover?

JUL 7, 2025

Cellular handover is a critical process in mobile communication systems, enabling seamless connectivity as users move from one cell to another. It transfers an ongoing call or data session from the serving cell to a neighboring cell, typically one with a stronger signal or more spare capacity, so that service continues without interruption and load is spread across the network. Traditionally, handover decisions are made by predefined rules built on factors such as signal strength, distance, and network capacity. A common example triggers a handover when a neighboring cell's received signal exceeds the serving cell's by a fixed margin (hysteresis) for a fixed period (time-to-trigger). Because these parameters are set in advance, such rules can struggle in dynamic environments. Settings tuned for slow-moving users may hand over too late for fast-moving ones, causing dropped connections. Settings tuned the other way may cause repeated back-and-forth "ping-pong" handovers at cell edges. This is where reinforcement learning comes into play, offering a more adaptive approach to cellular handover.
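
To make the conventional approach concrete, here is a minimal Python sketch of one common style of predefined rule, loosely modeled on the LTE/NR "A3" measurement event. The rule hands over when a neighbor's received signal exceeds the serving cell's by a hysteresis margin for a time-to-trigger. The sketch makes several simplifying assumptions: there is a single neighbor, time-to-trigger is counted in measurement samples rather than milliseconds, and there are no cell-specific offsets. The parameter values and the function name (rule_based_handover) are hypothetical.

```python
# Hypothetical parameter values, chosen for illustration only.
HYSTERESIS_DB = 3.0   # neighbor must beat the serving cell by this margin (dB)
TIME_TO_TRIGGER = 3   # condition must hold for this many consecutive samples


def rule_based_handover(serving_rsrp, neighbor_rsrp,
                        hysteresis_db=HYSTERESIS_DB, ttt=TIME_TO_TRIGGER):
    """Return the sample index at which a handover fires, or None if it never does.

    serving_rsrp, neighbor_rsrp: equal-length sequences of received signal
    power samples (dBm) for the serving cell and one neighbor cell.
    """
    consecutive = 0
    for t, (s, n) in enumerate(zip(serving_rsrp, neighbor_rsrp)):
        if n > s + hysteresis_db:
            consecutive += 1          # entering condition holds at this sample
            if consecutive >= ttt:
                return t              # held long enough: trigger handover
        else:
            consecutive = 0           # condition broken: restart the timer
    return None


serving = [-80, -82, -85, -88, -90, -92, -94]   # user walking away from serving cell
neighbor = [-90, -88, -86, -84, -85, -86, -87]  # and toward the neighbor
print(rule_based_handover(serving, neighbor))   # 5
```

Because the margin and time-to-trigger are fixed, the same settings can fire too late for a fast-moving user or too eagerly at a cell edge. This rigidity is what an adaptive approach aims to address.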

Introduction to Reinforcement Learning

Reinforcement learning (RL) is a type of machine learning in which an agent learns to make decisions by interacting with an environment and receiving feedback in the form of rewards or penalties. The agent aims to maximize its cumulative reward over time by trying different actions and learning from their outcomes. Unlike supervised learning, RL does not require labeled examples of correct decisions. Instead, the agent discovers a good strategy (called a policy) by balancing exploration of untried actions against exploitation of actions it already knows work well.
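
The "cumulative reward" is usually formalized as a discounted return. A discount factor gamma between 0 and 1 makes near-term rewards count more than distant ones. A minimal Python sketch follows. The reward values and the choice of gamma are illustrative assumptions, not part of any specific handover scheme.

```python
def discounted_return(rewards, gamma=0.9):
    """Cumulative reward in which a reward k steps ahead is weighted by gamma**k."""
    g = 0.0
    # Work backwards using the recursion G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        g = r + gamma * g
    return g


# Hypothetical episode: +1 for each step with a good connection, -5 for a dropped call.
episode = [1.0, 1.0, -5.0]
print(discounted_return(episode, gamma=0.9))
print(discounted_return(episode, gamma=0.0))  # 1.0: only the immediate reward counts
```

A gamma close to 1 makes the agent care about a dropped call several steps ahead. A gamma of 0 makes it purely short-sighted.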

Applying Reinforcement Learning to Cellular Handover

1. Dynamic Decision-Making

Reinforcement learning introduces a dynamic decision-making process in cellular handover by allowing the network to learn and adapt to changing conditions. Instead of relying solely on static rules, an RL agent can weigh multiple factors in real time, such as user mobility patterns, traffic load, and interference levels. In RL terms, these measurements form the state the agent observes. The choice of whether to hand over, and to which cell, is the action. Outcomes such as throughput, dropped connections, and unnecessary handovers feed into the reward. By continuously learning from these outcomes, RL algorithms can make more informed handover decisions and improve the overall user experience.
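
One way to cast handover as an RL problem is tabular Q-learning, which learns a value Q(s, a) for each state-action pair. The sketch below is a toy built on heavy assumptions. The state is just the bucketed gap between the strongest neighbor's signal and the serving cell's. The only actions are "stay" and "handover" (to that neighbor). The helper names and parameter values are hypothetical. A realistic agent would add features such as speed, cell load, and interference.

```python
from collections import defaultdict

# Hypothetical action set: keep the serving cell, or switch to the strongest neighbor.
ACTIONS = ("stay", "handover")


def discretize_state(serving_rsrp, neighbor_rsrp, step_db=3.0):
    """Bucket the neighbor-minus-serving signal gap (dB) into integers clamped to [-3, 3]."""
    gap = neighbor_rsrp - serving_rsrp
    return max(-3, min(3, int(gap // step_db)))  # floor division, then clamp


def q_update(q, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """One Q-learning step: Q(s,a) += alpha * (r + gamma * max_a' Q(s',a') - Q(s,a))."""
    best_next = max(q[(next_state, a)] for a in ACTIONS)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


q = defaultdict(float)                   # unseen (state, action) pairs start at 0.0
s = discretize_state(-95.0, -88.0)       # neighbor is 7 dB stronger -> bucket 2
s_next = discretize_state(-87.0, -93.0)  # after handover, new serving cell leads by 6 dB -> bucket -2
print(s, s_next, q_update(q, s, "handover", reward=1.0, next_state=s_next))  # 2 -2 0.1
```

Repeating this update over many simulated handovers gradually teaches the table which gap values make a handover worthwhile. No margin has to be fixed in advance.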

2. Balancing Exploration and Exploitation

A key challenge in reinforcement learning is finding the right balance between exploration and exploitation. Exploration means trying new actions to discover their potential rewards. Exploitation means choosing the action currently estimated to yield the highest long-term reward. In cellular handover, RL algorithms need to explore different handover strategies to find better ones while exploiting existing knowledge to keep connections stable. Exploration is costly here, because a poor exploratory handover can drop a real user's call. Managing this balance well can improve handover performance, reducing dropped calls and enhancing network efficiency.
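
Epsilon-greedy selection is one common (though not the only) way to strike this balance. With probability epsilon the agent picks a random action; otherwise it picks the best-valued one. Epsilon is often decayed so that most exploration happens early, for example in simulation. A minimal Python sketch follows. The cell names, Q-values, and decay schedule are hypothetical.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Explore (random action) with probability epsilon, else exploit (best-valued action).

    q_values: dict mapping action name -> estimated value, e.g. one row of a Q-table.
    """
    if rng.random() < epsilon:
        return rng.choice(list(q_values))
    return max(q_values, key=q_values.get)


def decayed_epsilon(step, start=1.0, end=0.05, decay_steps=10_000):
    """Linearly shrink epsilon from start to end over decay_steps, then hold it at end."""
    frac = min(step / decay_steps, 1.0)
    return end + (start - end) * (1.0 - frac)


row = {"stay": 0.4, "handover_cell_2": 0.7, "handover_cell_3": 0.1}  # hypothetical values
rng = random.Random(0)
print(epsilon_greedy(row, epsilon=0.0, rng=rng))  # handover_cell_2 (pure exploitation)
print(decayed_epsilon(0), decayed_epsilon(5_000), decayed_epsilon(20_000))
```

Keeping a small floor (end) on epsilon lets the agent notice when conditions change. The decay limits how often live users are exposed to random choices.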

3. Handling Complex Scenarios

Cellular networks often encounter complex scenarios in which traditional handover algorithms perform poorly. Reinforcement learning can handle these scenarios by considering multiple variables simultaneously and learning from the outcomes of its decisions. For instance, in environments with high user density or fluctuating interference, an RL agent can weigh signal quality, cell load, and recent handover history together and adapt its decisions accordingly. This ability to handle complex, dynamic situations makes RL a promising approach for cellular networks facing unpredictable conditions.
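
In practice, weighing several variables at once usually happens in the reward. The following hypothetical Python function folds throughput, handover overhead, ping-pong handovers, and radio link failures into a single per-step reward. The inputs, weights, and units are all assumptions chosen for illustration and would need tuning for a real network.

```python
def handover_reward(throughput_mbps, handed_over, ping_pong, link_failed,
                    w_tput=1.0, ho_cost=0.5, pp_penalty=2.0, rlf_penalty=10.0):
    """Hypothetical per-step reward combining several outcomes into one number."""
    reward = w_tput * throughput_mbps
    if handed_over:
        reward -= ho_cost        # signaling overhead and brief interruption of any handover
    if ping_pong:
        reward -= pp_penalty     # returned to the previous cell shortly after leaving it
    if link_failed:
        reward -= rlf_penalty    # dropped connection (radio link failure)
    return reward


print(handover_reward(20.0, handed_over=True, ping_pong=False, link_failed=False))  # 19.5
print(handover_reward(5.0, handed_over=True, ping_pong=True, link_failed=False))    # 2.5
print(handover_reward(0.0, handed_over=False, ping_pong=False, link_failed=True))   # -10.0
```

The relative weights encode priorities. Making the link-failure penalty much larger than the handover cost tells the agent that an extra handover is cheap compared with a dropped connection.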

Benefits of Reinforcement Learning in Cellular Handover

1. Improved User Experience

By leveraging reinforcement learning, cellular networks can offer a more consistent and reliable user experience. Better handover decisions can mean fewer dropped calls, fewer unnecessary handovers, and better data session continuity, even in challenging environments. As RL algorithms continually learn and adapt, users benefit from smoother transitions between cells with fewer interruptions.
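
Improvements like these are usually checked with key performance indicators in simulation or field trials. One such indicator is the ping-pong rate: the share of handovers that bounce straight back to the previous cell. The Python sketch below assumes a hypothetical per-user handover log of (time, source cell, target cell) tuples and an illustrative 1-second window. Dropped calls could be counted from a similar log.

```python
def ping_pong_rate(handovers, window_s=1.0):
    """Fraction of handovers that return to the previous cell within window_s seconds.

    handovers: list of (time_s, source_cell, target_cell) tuples for one user, sorted by time.
    """
    if not handovers:
        return 0.0
    ping_pongs = 0
    for (t1, src1, dst1), (t2, src2, dst2) in zip(handovers, handovers[1:]):
        # A -> B followed quickly by B -> A counts as one ping-pong.
        if src2 == dst1 and dst2 == src1 and (t2 - t1) <= window_s:
            ping_pongs += 1
    return ping_pongs / len(handovers)


log = [(0.0, "A", "B"), (0.6, "B", "A"), (5.0, "A", "C"), (9.0, "C", "A")]
print(ping_pong_rate(log))  # 0.25: one quick A -> B -> A out of four handovers
```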

2. Increased Network Efficiency

Reinforcement learning can improve network efficiency by optimizing resource allocation during handover. By taking real-time network conditions into account, RL algorithms can steer users away from congested cells and distribute traffic load more evenly, reducing congestion and making better use of available resources. This improves network performance and can let existing infrastructure support more users.
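
Whether traffic is distributed more evenly can be measured with Jain's fairness index, J = (sum of x_i)^2 / (n * sum of x_i^2). Here it is applied to per-cell loads x_i, assumed to be fractions of each cell's capacity. The index equals 1 when all n cells are equally loaded and falls to 1/n when one cell carries everything. It could serve as an evaluation metric or as a load-balancing term in an RL reward. A minimal Python sketch:

```python
def jain_fairness(loads):
    """Jain's fairness index of per-cell loads: 1.0 if perfectly even, 1/n if one cell has it all."""
    n = len(loads)
    total = sum(loads)
    sq = sum(x * x for x in loads)
    if sq == 0:
        return 1.0  # no load anywhere: treat as perfectly balanced
    return total * total / (n * sq)


print(jain_fairness([0.5, 0.5, 0.5]))  # 1.0: evenly loaded cells
print(jain_fairness([1.0, 0.0, 0.0]))  # about 0.333: all traffic on one cell
print(jain_fairness([0.9, 0.1, 0.2]))  # in between
```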

3. Future-Proofing Cellular Networks

As mobile networks evolve with technologies such as 5G and beyond, cellular handover will grow more complex, with denser deployments and more frequency bands to choose among. An RL agent learns its decision policy from data rather than depending on hand-tuned parameters, so it can be retrained as conditions and technologies change. This makes RL a scalable, adaptable approach to emerging challenges. With its ability to keep learning and improving, RL-based handover can help cellular networks maintain strong performance as technology advances.

Challenges and Considerations

While reinforcement learning offers promising benefits for cellular handover, there are challenges to consider. RL algorithms require significant computational resources and time to train effectively, which can limit real-world deployment. Training directly on a live network is also risky because exploratory decisions affect real users, so agents are often trained in simulation first. Ensuring the safety and reliability of RL-based handover decisions is crucial to maintaining network stability. Addressing these challenges requires careful design and testing of RL models so that they meet the rigorous demands of mobile networks.
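
One common way to keep an RL agent from making unsafe decisions is to wrap it in a rule-based guard. The guard vetoes risky actions and falls back to a conventional choice. The sketch below assumes a hypothetical interface in which the agent proposes a target cell ID and the guard checks it against the latest signal measurements. The -110 dBm floor and the "strongest cell" fallback are illustrative choices, not a standard.

```python
def safe_handover_action(rl_choice, measured_rsrp, min_rsrp_dbm=-110.0):
    """Return the agent's proposed cell if it looks safe, else a conventional fallback.

    rl_choice: cell ID proposed by the RL agent (the serving cell's ID means "stay").
    measured_rsrp: dict mapping cell ID -> latest measured signal power in dBm.
    """
    rsrp = measured_rsrp.get(rl_choice)
    if rsrp is not None and rsrp >= min_rsrp_dbm:
        return rl_choice  # proposal targets a measured cell with adequate signal
    # Veto: the proposed cell was not measured or is too weak, so pick the strongest cell.
    return max(measured_rsrp, key=measured_rsrp.get)


meas = {"A": -105.0, "B": -98.0, "C": -118.0}  # hypothetical measurements
print(safe_handover_action("A", meas))  # A: above the floor, accepted
print(safe_handover_action("C", meas))  # B: C is too weak, fall back to the strongest cell
print(safe_handover_action("Z", meas))  # B: Z was never measured
```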

Conclusion

Reinforcement learning represents a transformative approach to cellular handover, offering dynamic decision-making capabilities and enhanced adaptability to complex network environments. By improving user experience and boosting network efficiency, RL sets the stage for future-proof cellular networks capable of meeting the demands of modern communication. As technology continues to advance, the integration of reinforcement learning into cellular handover processes will play a vital role in shaping the future of mobile connectivity.

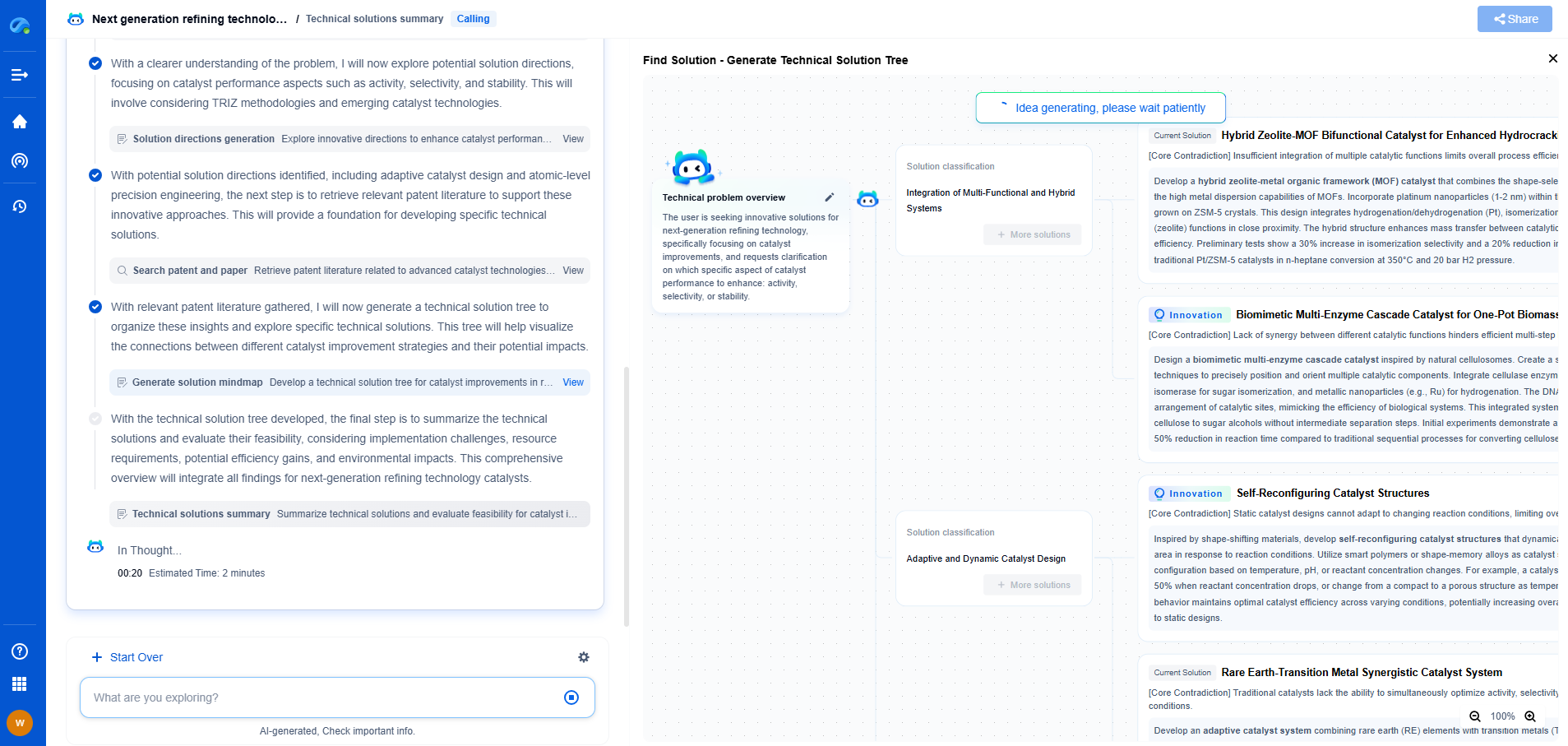

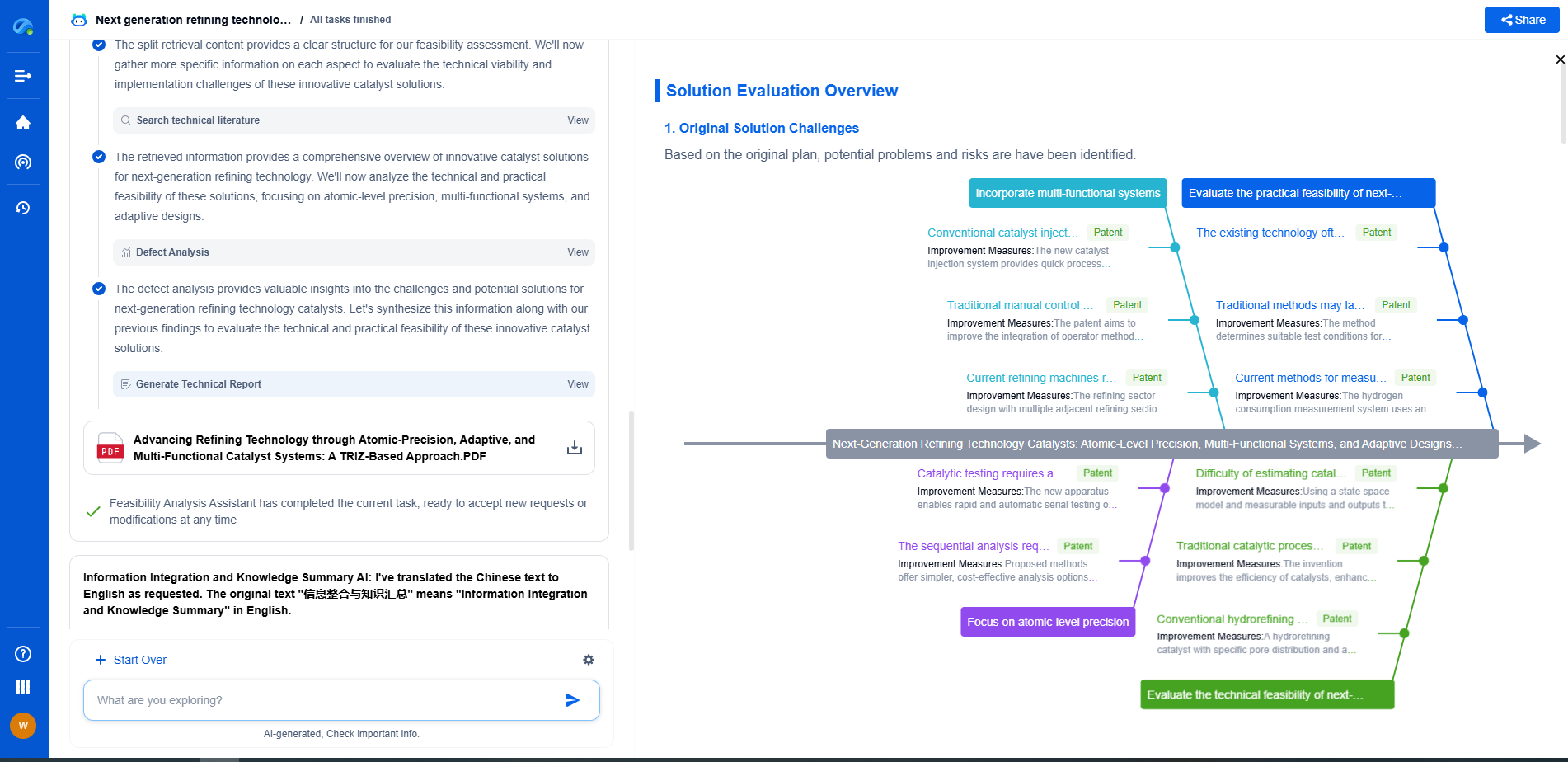

Empower Your Wireless Innovation with Patsnap Eureka

From 5G NR slicing to AI-driven RRM, today’s wireless communication networks are defined by unprecedented complexity and innovation velocity. Whether you’re optimizing handover reliability in ultra-dense networks, exploring mmWave propagation challenges, or analyzing patents for O-RAN interfaces, speed and precision in your R&D and IP workflows are more critical than ever.

Patsnap Eureka, our intelligent AI assistant built for R&D professionals in high-tech sectors, empowers you with real-time expert-level analysis, technology roadmap exploration, and strategic mapping of core patents—all within a seamless, user-friendly interface.

Whether you work in network architecture, protocol design, antenna systems, or spectrum engineering, Patsnap Eureka brings you the intelligence to make faster decisions, uncover novel ideas, and protect what’s next.

🚀 Try Patsnap Eureka today and see how it accelerates wireless communication R&D—one intelligent insight at a time.