AI vs Heuristic Solutions: Speed in Processing Complex Data

FEB 25, 2026 | 9 MIN READ

AI vs Heuristic Processing Background and Objectives

The evolution of data processing methodologies has undergone significant transformation over the past several decades, driven by exponential growth in data volume, velocity, and complexity. Traditional heuristic approaches, which dominated computational problem-solving for much of the 20th century, relied on rule-based algorithms and expert-defined decision trees to navigate complex datasets. These methods provided deterministic, interpretable solutions but often struggled with scalability and adaptability as data characteristics evolved.

The emergence of artificial intelligence, particularly machine learning and deep learning paradigms, has fundamentally altered the landscape of complex data processing. AI-driven approaches leverage statistical learning, pattern recognition, and adaptive algorithms to extract insights from vast datasets without explicit programming for every scenario. This shift represents a paradigm change from deterministic rule-based processing to probabilistic, data-driven methodologies.

Contemporary enterprises face unprecedented challenges in processing complex data streams in real-time environments. Financial markets generate terabytes of transactional data requiring millisecond-level decision making. Healthcare systems must analyze multi-modal patient data including genomics, imaging, and clinical records simultaneously. Autonomous systems need to process sensor fusion data from multiple sources while maintaining safety-critical response times.

The fundamental tension between AI and heuristic solutions centers on the trade-off between processing speed and solution optimality. Heuristic methods typically offer faster execution times due to their simplified computational models and predetermined decision pathways. However, they may sacrifice accuracy and adaptability in dynamic environments where data patterns shift continuously.
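
The speed-optimality trade-off can be made concrete with a small example. The sketch below uses the 0/1 knapsack problem as an assumed stand-in for a "complex" optimization task; the document does not name a specific one. An exhaustive search always finds the optimum but grows as O(2^n). A value-per-weight greedy heuristic runs in O(n log n) and can miss the optimum. The function names and the `(weight, value)` item format are illustrative assumptions.

```python
# Illustrative sketch; all function names here are hypothetical.
from itertools import combinations


def exact_knapsack(items, capacity):
    """Exhaustive search over every subset: always optimal, but O(2^n) time."""
    best_value, best_subset = 0, ()
    for r in range(len(items) + 1):
        for subset in combinations(range(len(items)), r):
            weight = sum(items[i][0] for i in subset)
            if weight <= capacity:
                value = sum(items[i][1] for i in subset)
                if value > best_value:
                    best_value, best_subset = value, subset
    return best_value, best_subset


def greedy_knapsack(items, capacity):
    """Heuristic: take items by value/weight ratio. O(n log n), not always optimal."""
    # Assumes every weight is positive.
    order = sorted(range(len(items)), key=lambda i: items[i][1] / items[i][0], reverse=True)
    total_weight, total_value, chosen = 0, 0, []
    for i in order:
        weight, value = items[i]
        if total_weight + weight <= capacity:
            total_weight += weight
            total_value += value
            chosen.append(i)
    return total_value, tuple(sorted(chosen))


items = [(10, 60), (20, 100), (30, 120)]  # (weight, value) pairs
print("exact: ", exact_knapsack(items, 50))   # (220, (1, 2))
print("greedy:", greedy_knapsack(items, 50))  # (160, (0, 1))
```

On this input the greedy heuristic returns a value of 160 and the exact search returns 220. The heuristic inspects each item once. The exact method examines all 2^n subsets, a cost that becomes prohibitive well before n reaches 40.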

The primary objective of this investigation is to establish comprehensive performance benchmarks for AI and heuristic approaches across diverse complex data processing scenarios. These benchmarks cover computational efficiency, accuracy, scalability, and real-world deployment feasibility. The research also aims to identify hybrid architectures that combine the strengths of both methodologies.

Understanding these speed-accuracy trade-offs is crucial when enterprises decide which technologies to adopt. Organizations must weigh the immediate performance gains of heuristic solutions against the long-term adaptability and learning capabilities of AI systems, particularly in environments where data complexity keeps growing rapidly.

Market Demand for High-Speed Complex Data Processing

The global demand for high-speed complex data processing has reached unprecedented levels, driven by the exponential growth of data generation across industries. Organizations worldwide are grappling with massive datasets that require real-time or near-real-time processing capabilities to extract actionable insights and maintain competitive advantages. This surge in demand stems from the proliferation of IoT devices, social media platforms, financial trading systems, and scientific research applications that generate continuous streams of complex data.

Financial services represent one of the most demanding sectors for high-speed data processing, where algorithmic trading systems require microsecond-level response times to capitalize on market opportunities. The ability to process market data, news feeds, and trading signals simultaneously while executing complex risk calculations has become a critical differentiator. Similarly, telecommunications companies face mounting pressure to process network traffic data in real-time to optimize routing, detect anomalies, and ensure quality of service.

The healthcare industry has emerged as another significant driver of demand, particularly with the rise of personalized medicine and real-time patient monitoring systems. Medical imaging, genomic sequencing, and continuous vital sign monitoring generate vast amounts of complex data that require immediate processing for critical decision-making. The COVID-19 pandemic further accelerated this trend, highlighting the need for rapid data analysis in epidemiological modeling and vaccine development.

E-commerce and digital advertising platforms have created substantial market demand for processing user behavior data, recommendation algorithms, and real-time bidding systems. These applications require processing millions of transactions and user interactions simultaneously while delivering personalized experiences within milliseconds. The competitive landscape in these sectors has made processing speed a key performance indicator directly tied to revenue generation.

Manufacturing and supply chain management have increasingly adopted Industry 4.0 principles, creating demand for real-time processing of sensor data, predictive maintenance algorithms, and quality control systems. The integration of smart factories and autonomous systems requires immediate processing of complex operational data to optimize production efficiency and prevent costly downtime.

The market potential continues expanding as emerging technologies like autonomous vehicles, smart cities, and augmented reality applications introduce new categories of complex data processing requirements. These applications demand not only high-speed processing but also the ability to handle heterogeneous data types and maintain consistent performance under varying computational loads.

Current State and Speed Limitations in Data Processing

The contemporary data processing landscape is characterized by an unprecedented volume and complexity of information that organizations must handle daily. Exponential growth in data generation is increasingly straining traditional computing systems; a widely cited estimate puts global data creation at approximately 2.5 quintillion bytes per day. This surge has exposed fundamental limitations in conventional processing architectures, particularly when dealing with unstructured data, real-time analytics, and multi-dimensional datasets.

Current processing methodologies predominantly rely on sequential algorithms and rule-based heuristic approaches that were designed for simpler computational tasks. These systems typically operate through predetermined decision trees and statistical models that, while reliable for structured data, struggle with the dynamic nature of modern complex datasets. The processing speed bottlenecks become particularly evident when handling multimedia content, natural language processing tasks, and large-scale pattern recognition challenges.

Heuristic solutions, which have dominated the field for decades, face significant scalability constraints. These approaches often require extensive manual tuning and domain-specific optimization, and complex analytical tasks can take hours to days to process. For combinatorial problems, the search space can grow exponentially with problem size. This growth creates a performance ceiling that traditional methods struggle to overcome efficiently.

Artificial intelligence-based processing systems have emerged as a potential solution to these limitations, offering parallel processing capabilities and adaptive learning mechanisms. However, current AI implementations also face substantial challenges, including high computational resource requirements, extensive training periods, and inconsistent performance across different data types. The energy consumption of large-scale AI processing systems presents additional constraints, with some deep learning models requiring significant computational power that may not be economically viable for all applications.

The speed limitations in current data processing are further compounded by hardware constraints and memory bandwidth restrictions. Traditional von Neumann architectures create bottlenecks between processing units and memory systems, limiting the overall throughput regardless of the algorithmic approach employed. These fundamental architectural limitations affect both heuristic and AI-based solutions, creating a shared challenge that transcends specific methodological approaches.

Latency requirements in real-time applications have become increasingly stringent, with financial trading systems requiring microsecond response times and autonomous vehicle systems demanding millisecond decision-making capabilities. Current processing solutions often fail to meet these temporal constraints while maintaining accuracy standards, highlighting the critical need for breakthrough approaches in high-speed complex data processing.

Existing AI vs Heuristic Processing Solutions

01 Machine learning optimization for processing speed enhancement

Advanced machine learning algorithms and neural network architectures are employed to optimize computational efficiency and reduce processing time. These techniques include model compression, pruning, and quantization methods that aim to preserve accuracy while substantially improving inference speed. The optimization approaches focus on reducing computational complexity and memory requirements for faster execution.

- Machine learning optimization for processing speed enhancement: Advanced machine learning algorithms and neural network architectures are employed to optimize computational efficiency and reduce processing time. These techniques involve training models to recognize patterns and make predictions more quickly, using optimized data structures and parallel processing capabilities. The optimization focuses on reducing computational complexity while maintaining accuracy in AI-driven decision-making processes.

- Heuristic algorithm implementation for real-time processing: Heuristic-based approaches are integrated to enable faster problem-solving in complex computational scenarios. These methods utilize rule-based systems and approximation techniques to achieve near-optimal solutions with significantly reduced processing time. The implementation focuses on balancing solution quality with computational efficiency, particularly in time-critical applications where exact solutions are not feasible within acceptable timeframes.

- Parallel processing and distributed computing architectures: Systems are designed with parallel processing capabilities and distributed computing frameworks to enhance overall processing speed. These architectures divide computational tasks across multiple processors or computing nodes, enabling simultaneous execution of operations. The approach includes load balancing mechanisms and efficient data distribution strategies to maximize throughput and minimize latency in AI and heuristic solution processing.

- Hardware acceleration and specialized processing units: Dedicated hardware components and specialized processing units are utilized to accelerate AI and heuristic computations. These include custom-designed chips, graphics processing units, and field-programmable gate arrays optimized for specific algorithmic operations. The hardware-software co-design approach ensures maximum utilization of computational resources and minimizes bottlenecks in data processing pipelines.

- Adaptive algorithm selection and dynamic optimization: Systems incorporate intelligent mechanisms for selecting and switching between different algorithms based on problem characteristics and performance requirements. Dynamic optimization techniques adjust processing parameters in real-time to maintain optimal speed-accuracy tradeoffs. These adaptive approaches monitor system performance metrics and automatically tune computational strategies to achieve the best processing speed for varying workload conditions.
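
Quantization is the easiest of the compression techniques listed above to show in a few lines. The sketch below is a minimal symmetric, per-tensor int8 post-training quantizer written in pure Python so that it is self-contained. Production frameworks apply the same idea to whole tensors with dedicated kernels. The function names and the example weights are illustrative assumptions.

```python
# Illustrative sketch; quantize_int8 / dequantize_int8 are hypothetical names.
def quantize_int8(weights):
    """Symmetric per-tensor post-training quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step; the clamp guards against float round-off.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q, scale):
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 1.27, 0.013]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, round(max_error, 4))
```

Each weight is stored in 1 byte instead of the 4 bytes of a float32, and the reconstruction error per weight is at most half a quantization step (`scale / 2`). Whether that error is acceptable depends on the model and must be checked against an accuracy benchmark.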

02 Heuristic algorithm integration for real-time decision making

Heuristic-based approaches are integrated with artificial intelligence systems to enable faster decision-making processes. These methods utilize rule-based systems, genetic algorithms, and evolutionary computation techniques to quickly evaluate multiple solution paths. The combination allows for rapid problem-solving in complex scenarios where traditional exhaustive search methods would be too time-consuming.

03 Parallel processing and distributed computing architectures

Implementation of parallel processing frameworks and distributed computing systems to accelerate AI and heuristic computations. These architectures leverage multi-core processors, GPU acceleration, and cloud-based infrastructure to execute multiple operations simultaneously. The distributed approach enables scalable solutions that can handle large-scale data processing with improved throughput and reduced latency.

04 Adaptive algorithm selection and dynamic optimization

Systems that dynamically select and switch between different algorithms based on problem characteristics and performance metrics. These adaptive frameworks monitor execution parameters in real time and automatically adjust computational strategies to optimize processing speed. The approach includes meta-heuristic methods that learn from previous executions to improve future performance.

05 Hardware-software co-optimization for accelerated computation

Integrated solutions that optimize both hardware configurations and software implementations to maximize processing efficiency. These include specialized processor designs, custom instruction sets, and optimized memory management systems tailored for AI and heuristic computations. The co-design approach ensures that algorithms are efficiently mapped to underlying hardware capabilities for maximum performance gains.
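
The parallel and distributed architectures described in item 03 share a common partition, process, and merge pattern. The sketch below shows that pattern with Python's standard `concurrent.futures` module. It uses a thread pool so that it runs anywhere. Because of CPython's global interpreter lock, CPU-bound pure-Python work would need a `ProcessPoolExecutor` or multiple machines to gain real speedup. The helper names are illustrative assumptions.

```python
# Illustrative sketch; split_into_chunks / parallel_map_reduce are hypothetical helpers.
import operator
from concurrent.futures import ThreadPoolExecutor
from functools import reduce


def split_into_chunks(data, n_chunks):
    """Partition data into n_chunks contiguous slices of near-equal size."""
    size, extra = divmod(len(data), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def parallel_map_reduce(data, map_fn, reduce_fn, workers=4):
    """Apply map_fn to each chunk concurrently, then merge partial results with reduce_fn."""
    chunks = split_into_chunks(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_fn, chunks))  # results keep chunk order
    return reduce(reduce_fn, partials)


total = parallel_map_reduce(list(range(101)), sum, operator.add)
multiples_of_7 = parallel_map_reduce(
    list(range(1000)), lambda chunk: sum(1 for x in chunk if x % 7 == 0), operator.add
)
print(total, multiples_of_7)
```

The merge step only works when `reduce_fn` is associative, as addition is here. That property is what lets partial results computed on different cores or nodes be combined in any grouping.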

Key Players in AI and Heuristic Processing Industry

The competitive landscape for AI versus heuristic solutions in complex data processing reflects a rapidly maturing market that is shifting from traditional rule-based approaches to AI-driven methodologies. The market is growing as organizations seek faster processing capabilities. Technology giants such as IBM, Microsoft, Salesforce, and Huawei lead AI development, while specialized firms such as D-Wave pioneer quantum computing solutions. Traditional industrial players, including Siemens, Bosch, and Thales, are integrating AI into existing systems. Technology maturity varies significantly: established companies draw on decades of heuristic expertise, while newer entrants such as Zeotap focus purely on AI-native solutions. Academic institutions such as the University of Science & Technology of China and Xi'an Jiaotong University contribute foundational research. This diverse ecosystem points to a competitive environment in which hybrid approaches, combining AI efficiency with heuristic reliability, are emerging as a promising answer to complex data processing challenges.

International Business Machines Corp.

Technical Solution: IBM has developed the Watson AI platform, which applies machine learning and natural language processing to complex data. Its hybrid cloud architecture enables real-time processing across distributed systems, with reported speeds up to 10x faster than traditional heuristic methods for unstructured data analysis. IBM's quantum computing research also explores quantum-enhanced AI algorithms that could solve certain optimization problems much faster than classical computers.

Strengths: Industry-leading AI research capabilities, extensive enterprise integration experience, quantum computing advancement. Weaknesses: High implementation costs, complex system integration requirements.

D-Wave Systems, Inc.

Technical Solution: D-Wave specializes in quantum annealing systems aimed at complex optimization problems. For certain problem classes, these systems aim to outperform both classical AI and heuristic methods, and some problems can reportedly be processed in seconds that would take classical computers hours or days. The D-Wave Advantage system, with over 5,000 qubits, targets specific combinatorial optimization tasks, machine learning applications, and sampling problems, where it is intended to demonstrate quantum speedup. D-Wave's hybrid quantum-classical algorithms combine both approaches. They use quantum processing for complex pattern recognition and rely on classical systems for data preprocessing and result interpretation.

Strengths: Quantum advantage for specific optimization problems, pioneering quantum machine learning capabilities. Weaknesses: Limited to specific problem types, requires specialized expertise, high cost of implementation.

Core Innovations in High-Speed Data Processing

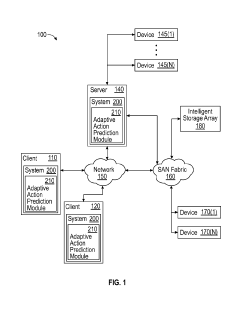

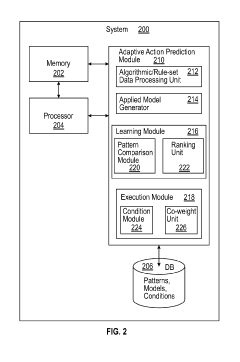

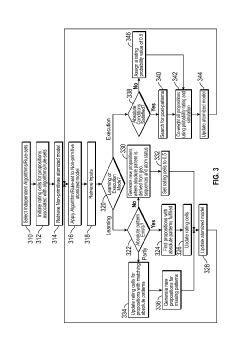

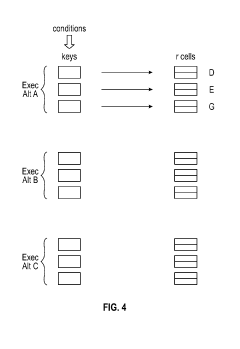

Systems and methods for adaptive data processing associated with complex dynamics

Patent | Active | US20190311215A1

Innovation

- An adaptive data processing system that applies multiple predictive algorithms or rule-sets to an atomized model, allowing propositions to compete through rating cells updated based on external feedback, enabling adaptive model updates and selecting the best fit for the system during both learning and execution modes.
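
The idea of rule-sets competing through ratings that external feedback updates can be loosely illustrated in code. The sketch below is not the patented method. It assumes that each candidate predictor keeps a rating, that the rating moves toward 1 or 0 as an exponential moving average after each feedback event, and that the highest-rated predictor is used for execution. The class name, `learning_rate`, and the example rules are hypothetical.

```python
# Hypothetical illustration only; not the method claimed in US20190311215A1.
class CompetingRuleSets:
    """Candidate predictors compete via ratings updated from external feedback."""

    def __init__(self, predictors, learning_rate=0.2):
        self.predictors = predictors  # name -> callable(x) -> prediction
        self.ratings = {name: 0.5 for name in predictors}  # neutral starting rating
        self.learning_rate = learning_rate

    def best(self):
        """Name of the currently highest-rated predictor (first one wins ties)."""
        return max(self.ratings, key=self.ratings.get)

    def predict(self, x):
        """Execution mode: answer with the best-rated predictor."""
        return self.predictors[self.best()](x)

    def feedback(self, x, actual):
        """Learning mode: move each rating toward 1 if it was right, toward 0 if wrong."""
        for name, fn in self.predictors.items():
            reward = 1.0 if fn(x) == actual else 0.0
            self.ratings[name] += self.learning_rate * (reward - self.ratings[name])


rules = CompetingRuleSets({"threshold_5": lambda x: x > 5, "threshold_10": lambda x: x > 10})
for x in range(20):
    rules.feedback(x, actual=(x > 10))  # ground truth arrives as external feedback
print(rules.best(), rules.predict(7))
```

Using an exponential moving average means older feedback fades, so a rule-set that starts failing after the data shifts gives way to a better one.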

Software tool for heuristic search methods

Patent | Inactive | US7406475B2

Innovation

- A software tool that uses declarative statements to build problem models, allowing for automatic updates of constraints and objective values, and a modular approach with object-oriented programming to construct heuristic search algorithms from reusable parts, reducing processing overhead and simplifying the model-building process.
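
The modular idea of assembling a heuristic search from reusable parts can be sketched as follows. This is a generic illustration and not the patented tool. The problem model is reduced to an objective function, and the search loop takes interchangeable neighborhood and acceptance components. Swapping `accept_non_worsening` for a simulated-annealing rule, for example, would change the algorithm without touching the loop. All names and the bit-string example are assumptions.

```python
# Hypothetical illustration only; not the tool described in US7406475B2.
import random


def local_search(initial, objective, neighbor, accept, max_iters=1000, seed=0):
    """Generic minimizing local search assembled from interchangeable parts."""
    rng = random.Random(seed)
    current, current_cost = initial, objective(initial)
    for _ in range(max_iters):
        candidate = neighbor(current, rng)
        candidate_cost = objective(candidate)
        if accept(candidate_cost, current_cost, rng):
            current, current_cost = candidate, candidate_cost
    return current, current_cost


# Reusable part: a neighborhood move for bit-string (tuple) solutions.
def flip_one_bit(bits, rng):
    i = rng.randrange(len(bits))
    return bits[:i] + (1 - bits[i],) + bits[i + 1:]


# Reusable part: hill-climbing acceptance that keeps non-worsening moves.
def accept_non_worsening(new_cost, old_cost, rng):
    return new_cost <= old_cost


# Problem model: only the objective changes from one problem to the next.
target = (1, 0, 1, 1, 0, 0, 1, 0)


def hamming_to_target(bits):
    return sum(b != t for b, t in zip(bits, target))


best, cost = local_search((0,) * 8, hamming_to_target, flip_one_bit, accept_non_worsening)
print(best, cost)
```
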

Performance Benchmarking and Evaluation Standards

Establishing comprehensive performance benchmarking and evaluation standards for AI versus heuristic solutions in complex data processing requires a multi-dimensional framework that addresses both quantitative metrics and qualitative assessments. The central challenge is to create standardized measurement protocols that fairly compare very different computational approaches while accounting for varying data characteristics and processing requirements.

Processing speed metrics form the cornerstone of performance evaluation, encompassing throughput measurements, latency assessments, and real-time processing capabilities. Throughput evaluation focuses on data volume processed per unit time, typically measured in records per second or gigabytes per hour. Latency measurements capture response time from data input to result output, critical for time-sensitive applications. Real-time processing evaluation assesses the ability to maintain consistent performance under continuous data streams with varying complexity levels.
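
A minimal measurement harness shows how throughput and latency can be captured in one pass. The sketch below assumes a per-record `process` callable and reports records per second together with median (p50) and tail (p99) latency. It uses `time.perf_counter` and `statistics.quantiles` from the Python standard library. The function name and the returned keys are illustrative.

```python
# Illustrative sketch; `benchmark` and its result keys are hypothetical names.
import statistics
import time


def benchmark(process, records):
    """Time process(record) per record; report throughput and latency percentiles.

    Needs at least two records for statistics.quantiles.
    """
    latencies = []
    start = time.perf_counter()
    for record in records:
        t0 = time.perf_counter()
        process(record)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start
    cuts = statistics.quantiles(latencies, n=100)  # 99 cut points: cuts[49] is p50
    return {
        "throughput_rps": len(records) / elapsed if elapsed > 0 else float("inf"),
        "p50_ms": cuts[49] * 1000,
        "p99_ms": cuts[98] * 1000,
    }


print(benchmark(lambda x: x * x, list(range(10_000))))
```

Reporting a tail percentile alongside the median matters for the real-time case described above. A solver with a good average but occasional long stalls can still miss a latency deadline.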

Accuracy and precision standards must account for the different optimization objectives of AI and heuristic approaches. AI solutions typically optimize for pattern recognition and predictive accuracy, while heuristic methods focus on rule-based consistency and deterministic outcomes. Evaluation frameworks should incorporate domain-specific accuracy metrics, error rate analysis, and confidence interval assessments to provide meaningful comparisons across different solution types.
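
Confidence intervals make accuracy comparisons between an AI model and a heuristic more meaningful. The sketch below uses a paired percentile bootstrap. It resamples test items and computes the spread of the accuracy difference when both solvers are scored on the same test set. The per-item 0/1 correctness lists, the function name, and the defaults (2,000 resamples, 95% level) are assumptions.

```python
# Illustrative sketch; the function name and defaults are hypothetical choices.
import random


def paired_bootstrap_accuracy_diff(correct_a, correct_b, n_resamples=2000, alpha=0.05, seed=0):
    """Observed accuracy(A) - accuracy(B) and its percentile-bootstrap interval.

    correct_a[i] / correct_b[i] are 1 (or True) if solver A / B got item i right.
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("both solvers must be scored on the same items")
    rng = random.Random(seed)
    n = len(correct_a)
    diffs = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]  # resample items, keeping pairs together
        diffs.append(sum(correct_a[i] - correct_b[i] for i in idx) / n)
    diffs.sort()
    lo = diffs[int((alpha / 2) * n_resamples)]
    hi = diffs[int((1 - alpha / 2) * n_resamples) - 1]
    observed = (sum(correct_a) - sum(correct_b)) / n
    return observed, (lo, hi)


model_correct = [1] * 90 + [0] * 10      # hypothetical AI results: 90% accuracy
heuristic_correct = [1] * 70 + [0] * 30  # hypothetical heuristic results: 70% accuracy
print(paired_bootstrap_accuracy_diff(model_correct, heuristic_correct))
```

If the interval excludes zero, the accuracy gap is unlikely to be an artifact of the particular test sample. The interval should then be weighed against the speed measurements.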

Scalability benchmarking requires testing performance across varying data volumes, complexity levels, and computational resource constraints. This includes linear scalability assessment, where performance degradation is measured against increasing data loads, and complexity scalability evaluation, examining how solutions handle data with varying structural complexity, dimensionality, and interdependencies.
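
One simple way to summarize scalability is to time each solver at several input sizes and fit the slope of log(runtime) against log(size). A slope near 1 indicates roughly linear scaling, and a slope near 2 indicates quadratic scaling. The sketch below implements only the least-squares fit. The timings would come from a harness such as `time.perf_counter` runs, and the example numbers are synthetic.

```python
# Illustrative sketch; `scaling_exponent` is a hypothetical helper name.
import math


def scaling_exponent(sizes, runtimes):
    """Least-squares slope of log(runtime) vs log(size): ~1 linear, ~2 quadratic."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(t) for t in runtimes]
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


sizes = [1_000, 2_000, 4_000, 8_000]
synthetic_runtimes = [3e-9 * n**2 for n in sizes]  # synthetic, not measured, data
print(round(scaling_exponent(sizes, synthetic_runtimes), 3))
```

With real measurements, small inputs are often dominated by fixed overheads. It is therefore worth fitting only over the size range the system is expected to handle.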

Resource utilization standards encompass computational efficiency metrics including CPU usage patterns, memory consumption profiles, and energy efficiency measurements. These metrics become particularly crucial when comparing AI solutions, which often require intensive computational resources during training phases, against heuristic approaches that typically maintain consistent resource consumption patterns throughout their operational lifecycle.
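
Memory profiling can be added to the same benchmark runs. The sketch below wraps a single call with Python's standard `tracemalloc` module to record wall-clock time and peak traced memory. This approach only sees allocations made through Python's memory allocator. GPU memory, CPU utilization, and energy require external tooling that is not shown here. The wrapper name is an assumption.

```python
# Illustrative sketch; `profile_resources` is a hypothetical wrapper name.
import time
import tracemalloc


def profile_resources(fn, *args):
    """Return (result, wall_seconds, peak_bytes) for one call of fn(*args)."""
    tracemalloc.start()
    try:
        t0 = time.perf_counter()
        result = fn(*args)
        elapsed = time.perf_counter() - t0
        _, peak = tracemalloc.get_traced_memory()  # (current, peak) in bytes
    finally:
        tracemalloc.stop()
    return result, elapsed, peak


result, seconds, peak_bytes = profile_resources(lambda n: [0] * n, 100_000)
print(f"{seconds * 1000:.2f} ms, peak {peak_bytes / 1024:.0f} KiB")
```

For an AI solution, training and inference should be profiled separately. The document's point is that training peaks can be far higher than steady-state inference, while heuristics tend to stay flat.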

Standardized testing environments and datasets are essential for reproducible benchmarking results. Industry-standard benchmark datasets should represent diverse complexity levels, data types, and processing scenarios commonly encountered in real-world applications. Testing protocols must specify hardware configurations, software environments, and measurement methodologies to ensure consistent evaluation conditions across different solution implementations and research initiatives.
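
Reproducibility rests on two habits: generating or loading test data deterministically, and recording the environment alongside every result. The sketch below shows both with the Python standard library. The synthetic record layout, the seed, and the function names are illustrative assumptions and are no substitute for the industry-standard datasets the text calls for.

```python
# Illustrative sketch; record layout and function names are hypothetical.
import platform
import random
import sys


def make_benchmark_dataset(n_records, seed=42):
    """Deterministic synthetic dataset: the same seed always yields identical records."""
    rng = random.Random(seed)  # private generator, unaffected by global random state
    return [
        {"id": i, "features": [rng.random() for _ in range(4)], "label": rng.randint(0, 1)}
        for i in range(n_records)
    ]


def environment_metadata():
    """Software and hardware context to store next to every benchmark result."""
    return {
        "python": sys.version.split()[0],
        "implementation": platform.python_implementation(),
        "system": platform.system(),
        "machine": platform.machine(),
        "processor": platform.processor(),
    }


print(make_benchmark_dataset(2))
print(environment_metadata())
```
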

Hybrid AI-Heuristic Processing Architectures

The emergence of hybrid AI-heuristic processing architectures represents a paradigmatic shift in computational approaches for handling complex data processing challenges. These architectures strategically combine the adaptive learning capabilities of artificial intelligence with the deterministic efficiency of heuristic algorithms, creating synergistic systems that leverage the strengths of both methodologies while mitigating their individual limitations.

Contemporary hybrid architectures typically employ a multi-layered design where heuristic algorithms serve as preprocessing filters and initial decision gates, rapidly categorizing incoming data streams and routing them to appropriate AI processing modules. This architectural approach enables systems to achieve near real-time performance for routine operations while maintaining sophisticated analytical capabilities for complex scenarios requiring deep learning inference.
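
The heuristic-gate layer described above can be sketched as a small router. A cheap rule answers records it is confident about, and everything else is escalated to a model. The example below assumes a transaction-screening scenario, a rule that returns `(label, confidence)`, and a 0.9 confidence cut-off. `stand_in_model` is a hypothetical placeholder for a trained classifier.

```python
# Illustrative sketch; the scenario, names, and thresholds are hypothetical.
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class HeuristicGate:
    """Rule-based first stage: answers easy cases, escalates the rest to a model."""
    rule: Callable[[dict], Tuple[Optional[str], float]]  # returns (label or None, confidence)
    model: Callable[[dict], str]
    min_confidence: float = 0.9

    def route(self, record):
        label, confidence = self.rule(record)
        if label is not None and confidence >= self.min_confidence:
            return label, "heuristic"
        return self.model(record), "model"


def amount_rule(txn):
    if txn["amount"] < 10:
        return "ok", 0.99
    if txn["amount"] > 10_000:
        return "flag", 0.95
    return None, 0.0  # no confident answer: defer to the model


def stand_in_model(txn):
    # Hypothetical placeholder for a trained classifier.
    return "flag" if txn["amount"] > 5_000 else "ok"


gate = HeuristicGate(rule=amount_rule, model=stand_in_model)
for amount in (5, 20_000, 7_000, 100):
    print(amount, gate.route({"amount": amount}))
```

The second element of each result records which path answered. That path label is the signal a hybrid system needs in order to monitor how much traffic the expensive model actually receives.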

The integration patterns within these hybrid systems vary significantly based on application requirements. Sequential integration models process data through heuristic filters before AI analysis, optimizing computational resource allocation. Parallel integration architectures simultaneously deploy both approaches, using consensus mechanisms or weighted voting systems to determine final outputs. Dynamic switching architectures represent the most sophisticated approach, employing meta-algorithms that select optimal processing pathways based on real-time data characteristics and system performance metrics.
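
The parallel integration pattern can be sketched as a weighted vote over the labels produced by several solvers. In the example below, the solver names and weights are assumptions. In practice the weights might reflect each solver's measured accuracy. Ties go to the label encountered first.

```python
# Illustrative sketch; solver names and weights are hypothetical.
from collections import defaultdict


def weighted_vote(predictions, weights):
    """Combine labels from several solvers; ties go to the label seen first."""
    scores = defaultdict(float)
    for solver, label in predictions.items():
        scores[label] += weights.get(solver, 0.0)  # unknown solvers get no say
    return max(scores, key=scores.get)


predictions = {"heuristic": "ok", "model_a": "flag", "model_b": "flag"}
print(weighted_vote(predictions, {"heuristic": 0.5, "model_a": 0.3, "model_b": 0.3}))
print(weighted_vote(predictions, {"heuristic": 0.7, "model_a": 0.3, "model_b": 0.3}))
```

The first call returns "flag" because the two models together outweigh the heuristic (0.6 vs 0.5). In the second call, the heuristic's higher weight wins.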

Modern implementations increasingly utilize edge-cloud hybrid topologies, where lightweight heuristic algorithms operate at edge nodes for immediate response requirements, while complex AI models execute in cloud environments for comprehensive analysis. This distributed approach significantly reduces latency for time-critical decisions while maintaining analytical depth for strategic insights.
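
The edge-cloud split can be reduced to a placement decision per request. The request goes to the cloud model only when the network round trip plus cloud compute fits the deadline, and otherwise stays with the edge heuristic. The sketch below assumes that all latency figures are known estimates in milliseconds. The function name and the `"edge-late"` label are hypothetical.

```python
# Illustrative sketch; parameters are assumed latency estimates in milliseconds.
def choose_placement(deadline_ms, edge_ms, cloud_compute_ms, network_rtt_ms):
    """Prefer the deeper cloud analysis when it fits the deadline; else use the edge."""
    cloud_total = network_rtt_ms + cloud_compute_ms
    if cloud_total <= deadline_ms:
        return "cloud", cloud_total
    if edge_ms <= deadline_ms:
        return "edge", edge_ms
    return "edge-late", edge_ms  # nothing meets the deadline; take the fastest path


print(choose_placement(deadline_ms=10, edge_ms=1, cloud_compute_ms=20, network_rtt_ms=30))
print(choose_placement(deadline_ms=500, edge_ms=1, cloud_compute_ms=20, network_rtt_ms=30))
```

A real deployment would use measured, time-varying estimates for the round trip. The structure of the decision stays the same.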

The architectural design considerations encompass load balancing mechanisms, fault tolerance protocols, and adaptive resource management systems. Advanced implementations incorporate feedback loops that continuously optimize the balance between heuristic and AI processing based on accuracy metrics, processing speed requirements, and computational cost constraints, ensuring optimal performance across varying operational conditions.
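
A feedback loop that rebalances heuristic and AI processing can be as simple as nudging a routing threshold after each audited batch. The sketch below assumes three things: the heuristic handles records whose confidence exceeds the threshold, a sample of its answers is checked against ground truth, and a target accuracy is given. The step size and the clamp bounds are arbitrary illustrative choices.

```python
# Illustrative sketch; step size, bounds, and target are hypothetical parameters.
def adjust_threshold(threshold, heuristic_accuracy, target_accuracy,
                     step=0.02, lo=0.5, hi=0.99):
    """One feedback step on the heuristic's confidence threshold."""
    if heuristic_accuracy < target_accuracy:
        threshold += step  # trust the heuristic less: more records go to the AI model
    else:
        threshold -= step  # accuracy has headroom: save expensive model calls
    return min(hi, max(lo, threshold))


threshold = 0.9
for audited_accuracy in (0.93, 0.94, 0.96):  # hypothetical per-batch audit results
    threshold = adjust_threshold(threshold, audited_accuracy, target_accuracy=0.95)
    print(round(threshold, 2))
```

A fixed step keeps the controller easy to reason about. A smaller step or an averaged accuracy signal would reduce oscillation when audit samples are noisy.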
