Develop Efficient AI Graphics Techniques for VR Applications

MAR 30, 2026 | 9 MIN READ

AI Graphics for VR Background and Technical Objectives

The convergence of artificial intelligence and virtual reality represents one of the most transformative technological developments of the 21st century. Virtual reality applications have evolved from experimental prototypes in research laboratories to mainstream consumer products, creating immersive experiences across gaming, education, healthcare, and enterprise training. However, the computational demands of rendering high-quality graphics in real-time for VR environments remain a significant bottleneck, particularly when maintaining the 90+ frames per second required to prevent motion sickness and ensure user comfort.

Traditional graphics rendering pipelines, designed for conventional displays, struggle to meet VR's unique requirements of ultra-low latency, high resolution, and stereoscopic rendering. The challenge intensifies when considering the need for dynamic content generation, realistic lighting, and complex scene interactions that modern VR applications demand. This technological gap has created an urgent need for innovative approaches that can bridge the performance requirements with visual fidelity expectations.

Artificial intelligence emerges as a promising solution to address these computational challenges through intelligent optimization techniques. Machine learning algorithms can predict user behavior, optimize rendering processes, and generate content dynamically, potentially reducing computational overhead while maintaining or even enhancing visual quality. Deep learning models have demonstrated remarkable capabilities in image synthesis, style transfer, and predictive rendering, suggesting their potential application in VR graphics optimization.

The primary technical objective centers on developing AI-driven graphics techniques that can achieve real-time performance optimization without compromising visual quality. This includes implementing neural network-based upscaling methods that can render at lower resolutions and intelligently enhance output quality, reducing GPU computational load by 30-50% while maintaining perceptual fidelity.
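
As a rough illustration of the arithmetic behind this target, the sketch below assumes that shading cost scales linearly with the number of rendered pixels. That is a simplification: real GPU cost also depends on geometry, memory traffic, and the inference time of the upscaler itself. The function names and the example per-eye resolution are hypothetical.

```python
import math

def pixel_fraction(render_scale: float) -> float:
    """Fraction of native pixels shaded when each axis is scaled by render_scale."""
    if not 0.0 < render_scale <= 1.0:
        raise ValueError("render_scale must be in (0, 1]")
    return render_scale ** 2

def scale_for_saving(target_saving: float) -> float:
    """Per-axis render scale that cuts the shaded pixel count by target_saving."""
    if not 0.0 <= target_saving < 1.0:
        raise ValueError("target_saving must be in [0, 1)")
    return math.sqrt(1.0 - target_saving)

if __name__ == "__main__":
    native_w, native_h = 2000, 2000  # hypothetical per-eye resolution
    for saving in (0.30, 0.50):
        s = scale_for_saving(saving)
        print(f"{saving:.0%} fewer pixels: scale {s:.3f}, "
              f"{round(native_w * s)}x{round(native_h * s)} per eye")
```

Under this assumption, a 30-50% reduction in shaded pixels corresponds to a per-axis render scale of roughly 0.84 down to 0.71, before accounting for the cost of the upscaling pass.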

Secondary objectives encompass the development of predictive rendering systems that leverage machine learning to anticipate user movements and pre-render likely viewing scenarios. Additionally, the integration of AI-powered level-of-detail systems that dynamically adjust scene complexity based on user attention patterns and gaze tracking data represents a crucial advancement pathway.
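
To make the idea of anticipating user movement concrete, here is a minimal baseline that extrapolates head orientation at constant angular velocity. This is a deliberately simple stand-in, not a machine learning model: a learned predictor of the kind described above would replace the extrapolation step. The `PoseSample` type and `predict_pose` function are hypothetical names, and angle wraparound at 360 degrees is ignored.

```python
from dataclasses import dataclass

@dataclass
class PoseSample:
    t: float      # timestamp in seconds
    yaw: float    # head yaw in degrees
    pitch: float  # head pitch in degrees

def predict_pose(prev: PoseSample, curr: PoseSample, lookahead_s: float) -> PoseSample:
    """Extrapolate head orientation assuming constant angular velocity."""
    dt = curr.t - prev.t
    if dt <= 0:
        raise ValueError("samples must be strictly increasing in time")
    yaw_rate = (curr.yaw - prev.yaw) / dt        # degrees per second
    pitch_rate = (curr.pitch - prev.pitch) / dt  # degrees per second
    return PoseSample(
        t=curr.t + lookahead_s,
        yaw=curr.yaw + yaw_rate * lookahead_s,
        pitch=curr.pitch + pitch_rate * lookahead_s,
    )

if __name__ == "__main__":
    a = PoseSample(t=0.00, yaw=10.0, pitch=0.0)
    b = PoseSample(t=0.01, yaw=11.0, pitch=-0.5)
    print(predict_pose(a, b, lookahead_s=0.02))
```

The renderer would then draw the scene for the predicted orientation rather than the last measured one, so the image is closer to correct by the time it reaches the display.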

The ultimate goal involves creating a comprehensive AI graphics framework that enables high-fidelity VR experiences on mid-range hardware platforms, democratizing access to premium VR content while establishing new industry standards for performance optimization.

Market Demand Analysis for VR AI Graphics Solutions

The virtual reality market has experienced unprecedented growth, driven by increasing consumer adoption and enterprise applications across multiple sectors. Gaming remains the dominant segment, with VR gaming revenue showing consistent year-over-year expansion as hardware becomes more accessible and content libraries expand. Enterprise applications in training, simulation, and visualization are emerging as significant growth drivers, particularly in healthcare, manufacturing, and education sectors.

Consumer demand for immersive experiences has intensified expectations for visual fidelity and performance optimization. Users increasingly expect photorealistic graphics, smooth frame rates, and reduced latency that matches or exceeds traditional gaming experiences. This demand creates substantial pressure on graphics processing capabilities, as VR applications require rendering at higher resolutions and frame rates than conventional displays while maintaining stereoscopic rendering for both eyes.

The enterprise segment demonstrates strong appetite for AI-enhanced graphics solutions that can optimize rendering performance while reducing computational overhead. Training simulations, architectural visualization, and industrial design applications require sophisticated graphics capabilities that can adapt dynamically to user interactions and environmental changes. Organizations are actively seeking solutions that can deliver high-quality visuals without requiring expensive hardware upgrades.

Market research indicates growing interest in cloud-based VR solutions, where AI graphics optimization becomes critical for streaming high-quality content over network connections. This trend creates demand for intelligent compression algorithms, predictive rendering techniques, and adaptive quality systems that can maintain visual excellence while managing bandwidth constraints.

The mobile VR segment presents unique challenges and opportunities, as standalone headsets gain market traction. These devices require graphics solutions that can deliver compelling experiences within strict power and thermal constraints. AI-driven optimization techniques that can intelligently manage rendering workloads based on scene complexity and user attention patterns are becoming essential for mobile VR success.

Healthcare and education sectors show particularly strong demand for AI graphics solutions that can generate realistic simulations and interactive environments. Medical training applications require precise anatomical rendering, while educational platforms need diverse, engaging visual content that can adapt to different learning scenarios and user preferences.

Current AI Graphics Challenges in VR Applications

The integration of artificial intelligence with virtual reality graphics presents a complex landscape of technical challenges that significantly impact user experience and system performance. Current VR applications demand unprecedented computational efficiency while maintaining visual fidelity, creating a fundamental tension between quality and real-time processing requirements.

Latency remains the most critical challenge in AI-driven VR graphics systems. Traditional graphics pipelines struggle to meet the stringent 20-millisecond motion-to-photon requirement essential for preventing motion sickness. AI graphics techniques, while offering superior visual quality through neural rendering and machine learning-based enhancement, introduce additional computational overhead that can compromise this critical timing constraint.
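
A simple budget check shows why extra AI passes are hard to fit. The sketch below sums serial stage latencies against the 20-millisecond target; the stage names and timings are hypothetical illustrations, not measurements, and treating the stages as strictly serial ignores the pipelining that real systems use.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Time available per displayed frame at the given refresh rate."""
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return 1000.0 / refresh_hz

def latency_headroom_ms(stages_ms: dict, budget_ms: float = 20.0) -> float:
    """Budget minus the sum of serial stage latencies; negative means over budget."""
    return budget_ms - sum(stages_ms.values())

if __name__ == "__main__":
    # Hypothetical stage timings for illustration only.
    stages = {"tracking": 2.0, "render": 8.0, "ai_enhancement": 3.0, "scanout": 5.0}
    print(f"frame time at 90 Hz: {frame_time_ms(90):.2f} ms")
    print(f"headroom against 20 ms: {latency_headroom_ms(stages):.1f} ms")
```

With these assumed numbers, only 2 ms of headroom remains, so a neural pass that grows by a few milliseconds pushes the pipeline past the target.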

Real-time neural rendering faces substantial computational bottlenecks when deployed in VR environments. Deep learning models for graphics generation typically require significant GPU resources, competing directly with traditional rasterization processes. This resource contention becomes particularly problematic in standalone VR headsets with limited processing power, where thermal constraints further restrict computational capacity.

Stereoscopic rendering complexity amplifies existing AI graphics challenges. VR systems must generate two distinct viewpoints simultaneously, effectively doubling the computational load for AI-enhanced graphics techniques. Current solutions often compromise by applying AI enhancements to only one eye or using simplified models, resulting in visual inconsistencies that can break immersion.

Temporal coherence presents another significant obstacle for AI graphics in VR applications. Machine learning models often produce frame-to-frame variations that create flickering artifacts, particularly noticeable in the high-resolution displays typical of modern VR headsets. Maintaining consistent visual output across rapid head movements while preserving AI-generated enhancements requires sophisticated temporal filtering techniques that add computational complexity.
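
One of the simplest temporal filters is an exponential moving average over successive frames. The sketch below applies it per pixel to a tiny grayscale "frame" stored as a flat list, and measures flicker as the mean absolute frame-to-frame change. It is a minimal illustration under a strong assumption: the camera is static. Production temporal filters reproject the history buffer with motion vectors and discard stale history, which this example omits. All function names are hypothetical.

```python
def temporal_blend(history, current, alpha=0.2):
    """Per-pixel exponential moving average; alpha is the weight of the new frame."""
    if len(history) != len(current):
        raise ValueError("frames must have the same number of pixels")
    return [(1.0 - alpha) * h + alpha * c for h, c in zip(history, current)]

def filter_sequence(frames, alpha=0.2):
    """Run the moving average over a whole sequence, seeded with the first frame."""
    out = [list(frames[0])]
    for frame in frames[1:]:
        out.append(temporal_blend(out[-1], frame, alpha))
    return out

def flicker(frames):
    """Mean absolute per-pixel change between consecutive frames."""
    diffs = [abs(a - b)
             for prev, cur in zip(frames, frames[1:])
             for a, b in zip(prev, cur)]
    return sum(diffs) / len(diffs)

if __name__ == "__main__":
    # A static scene whose AI-enhanced output jitters from frame to frame.
    noisy = [[0.5, 0.5], [0.6, 0.4], [0.4, 0.6], [0.6, 0.4]]
    print("raw flicker:     ", flicker(noisy))
    print("filtered flicker:", flicker(filter_sequence(noisy)))
```

A lower `alpha` suppresses more flicker but makes the image respond more slowly to genuine changes, which shows up as ghosting during fast head movement.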

Memory bandwidth limitations severely constrain AI graphics implementations in VR systems. Neural networks require substantial memory access for weight storage and intermediate computations, competing with the high-resolution texture streaming necessary for immersive VR experiences. This bandwidth competition often forces developers to choose between AI enhancement quality and overall scene complexity.

Edge case handling in AI graphics models poses reliability concerns for VR applications. Machine learning algorithms may produce unexpected artifacts or fail to degrade gracefully when encountering unusual lighting conditions, geometric configurations, or user interactions that fall outside their training distributions, potentially causing disorienting visual anomalies in the immersive VR environment.

Current AI Graphics Optimization Solutions for VR

01 Neural network optimization for graphics rendering

Techniques for optimizing neural network architectures specifically designed for graphics processing tasks. These methods focus on reducing computational complexity while maintaining rendering quality through efficient layer design, pruning strategies, and model compression. The optimization approaches enable faster inference times and reduced memory footprint for real-time graphics applications.

- Neural network optimization for graphics rendering: Techniques for optimizing neural network architectures specifically designed for graphics processing tasks. These methods focus on reducing computational complexity while maintaining rendering quality through efficient layer configurations, pruning strategies, and quantization approaches. The optimization enables faster inference times and reduced memory footprint for real-time graphics applications.

- Hardware acceleration for AI-based graphics processing: Implementation of specialized hardware architectures and processing units designed to accelerate artificial intelligence operations in graphics rendering pipelines. These solutions leverage parallel processing capabilities, dedicated tensor cores, and optimized memory hierarchies to improve throughput and reduce latency in graphics-intensive AI applications.

- Machine learning-based image enhancement and upscaling: Application of machine learning algorithms to enhance image quality, perform super-resolution, and upscale graphics content efficiently. These techniques utilize trained models to predict and generate high-quality visual outputs from lower-resolution inputs, reducing the computational burden of native high-resolution rendering while maintaining visual fidelity.

- Real-time rendering optimization using AI prediction: Methods for improving real-time graphics rendering efficiency through predictive artificial intelligence models that anticipate rendering requirements, optimize resource allocation, and reduce redundant computations. These approaches enable adaptive quality settings and intelligent frame generation to maintain performance targets while maximizing visual quality.

- Efficient data processing pipelines for graphics AI workflows: Streamlined data processing architectures that optimize the flow of information through artificial intelligence graphics pipelines. These systems focus on minimizing data transfer overhead, implementing efficient caching strategies, and coordinating between different processing stages to reduce bottlenecks and improve overall system throughput for graphics applications.
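
The quantization approach listed above under neural network optimization can be sketched as follows. This is a minimal example of symmetric per-tensor 8-bit quantization, one common scheme among several; it is not taken from any specific product or patent, and the function names are hypothetical. Storing weights as 8-bit integers instead of 32-bit floats cuts weight storage by a factor of four, at the cost of a bounded rounding error.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to the signed range [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map quantized integers back to approximate float weights."""
    return [v * scale for v in q]

if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("quantized:", q)
    print("worst-case error:", worst, "(bound is scale / 2 =", scale / 2, ")")
```

Whether the rounding error is visually acceptable depends on the network and the content, so quantized models are normally validated against the full-precision output before deployment.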

02 Hardware acceleration for AI-based graphics processing

Implementation of specialized hardware architectures and acceleration techniques to improve the performance of artificial intelligence algorithms in graphics rendering. These solutions include dedicated processing units, parallel computing frameworks, and optimized data pipelines that leverage GPU capabilities to enhance throughput and reduce latency in graphics generation tasks.

03 Adaptive resolution and quality scaling

Methods for dynamically adjusting graphics resolution and quality parameters based on computational resources and performance requirements. These techniques employ intelligent algorithms to balance visual fidelity with processing efficiency, automatically scaling rendering parameters to maintain optimal frame rates while preserving perceptual quality in resource-constrained environments.

04 Efficient data representation and compression

Approaches for optimizing the representation and storage of graphics data using advanced compression algorithms and efficient encoding schemes. These methods reduce memory bandwidth requirements and storage costs while enabling faster data transfer and processing, particularly beneficial for complex scenes and high-resolution graphics applications.

05 Real-time rendering pipeline optimization

Techniques for streamlining the graphics rendering pipeline through intelligent scheduling, resource allocation, and parallel processing strategies. These optimizations focus on minimizing bottlenecks, reducing redundant computations, and maximizing utilization of available hardware resources to achieve higher frame rates and lower latency in interactive graphics applications.
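
The adaptive resolution and quality scaling approach described above (solution 03) can be illustrated with a small feedback controller. This is a hand-written heuristic rather than a learned policy, and it assumes GPU frame time is roughly proportional to the number of shaded pixels. The function name, the damping `gain`, and the scale limits are hypothetical choices for the sketch.

```python
def next_render_scale(scale, gpu_ms, target_ms,
                      min_scale=0.6, max_scale=1.0, gain=0.5):
    """Nudge the per-axis render scale toward the frame-time target."""
    if gpu_ms <= 0 or target_ms <= 0:
        raise ValueError("frame times must be positive")
    # Pixel count grows with scale**2, so the scale that would hit the
    # target exactly is scale * sqrt(target / measured).
    ideal = scale * (target_ms / gpu_ms) ** 0.5
    # Move only part of the way each frame to damp oscillation.
    proposed = scale + gain * (ideal - scale)
    return max(min_scale, min(max_scale, proposed))

if __name__ == "__main__":
    target = 1000.0 / 90.0  # frame budget at 90 FPS, in milliseconds
    scale = 1.0
    for gpu_ms in (14.0, 13.0, 11.5, 10.0, 9.0):  # hypothetical measurements
        scale = next_render_scale(scale, gpu_ms, target)
        print(f"gpu {gpu_ms:4.1f} ms -> render scale {scale:.3f}")
```

A predictive system of the kind the section describes would estimate the upcoming frame's cost before rendering it, instead of reacting to the previous frame's measurement as this controller does.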

Major Players in AI Graphics and VR Industry

The AI graphics for VR applications market represents a rapidly evolving sector in the early growth stage, driven by increasing VR adoption across gaming, enterprise, and consumer applications. The market demonstrates significant expansion potential as companies like Apple, Samsung, Sony Interactive Entertainment, and Meta Platforms Technologies invest heavily in VR ecosystems. Technology maturity varies considerably across players: established tech giants like Google, Intel, and Qualcomm leverage advanced AI and graphics processing capabilities, while specialized firms like Mojo Vision and GoerTek focus on innovative display and hardware solutions. Chinese companies including Huawei, Tencent, and BOE Technology contribute substantial manufacturing and software expertise. The competitive landscape shows convergence between traditional semiconductor companies, consumer electronics manufacturers, and emerging VR specialists, indicating a maturing but still fragmented market with substantial room for technological breakthroughs and market consolidation.

Apple, Inc.

Technical Solution: Apple leverages its custom M-series chips with dedicated Neural Engine units to accelerate AI graphics processing for VR applications[5]. Their Metal Performance Shaders framework incorporates machine learning algorithms for real-time ray tracing optimization, achieving 40% better performance compared to traditional methods[6]. The company has developed adaptive tessellation techniques using AI to dynamically adjust polygon density based on viewing distance and importance, reducing computational overhead while maintaining visual quality[7]. Apple's unified memory architecture enables seamless data sharing between CPU, GPU, and Neural Engine for efficient AI graphics pipeline execution[8].

Strengths: Powerful custom silicon with integrated AI acceleration, optimized software-hardware integration, strong ecosystem. Weaknesses: Limited VR hardware presence, closed development environment.

Meta Platforms Technologies LLC

Technical Solution: Meta has developed advanced foveated rendering technology that reduces GPU workload by up to 30% by rendering only the area where users are looking at full resolution[1]. Their AI-driven dynamic resolution scaling automatically adjusts rendering quality based on scene complexity and frame rate requirements[2]. The company implements machine learning-based predictive frame interpolation to maintain smooth 90fps performance even when computational resources are limited[3]. Additionally, Meta utilizes neural network compression techniques to optimize shader execution and reduce memory bandwidth usage by approximately 25%[4].

Strengths: Industry-leading VR hardware integration, extensive user data for AI training, strong R&D investment. Weaknesses: Heavy dependency on proprietary ecosystem, limited cross-platform compatibility.

Core AI Graphics Patents for VR Performance

Real-time rendering of hyper-realistic interactive environments in virtual reality using neuro-adaptive generative AI

Patent | Pending | IN202441015088A

Innovation

- Integration of Neuro-Adaptive Generative AI (NAGAI) into the rendering pipeline. NAGAI uses machine learning algorithms inspired by the human brain, is trained on extensive datasets, and is optimized for GPU parallel processing to produce realistic, interactive content in real time.

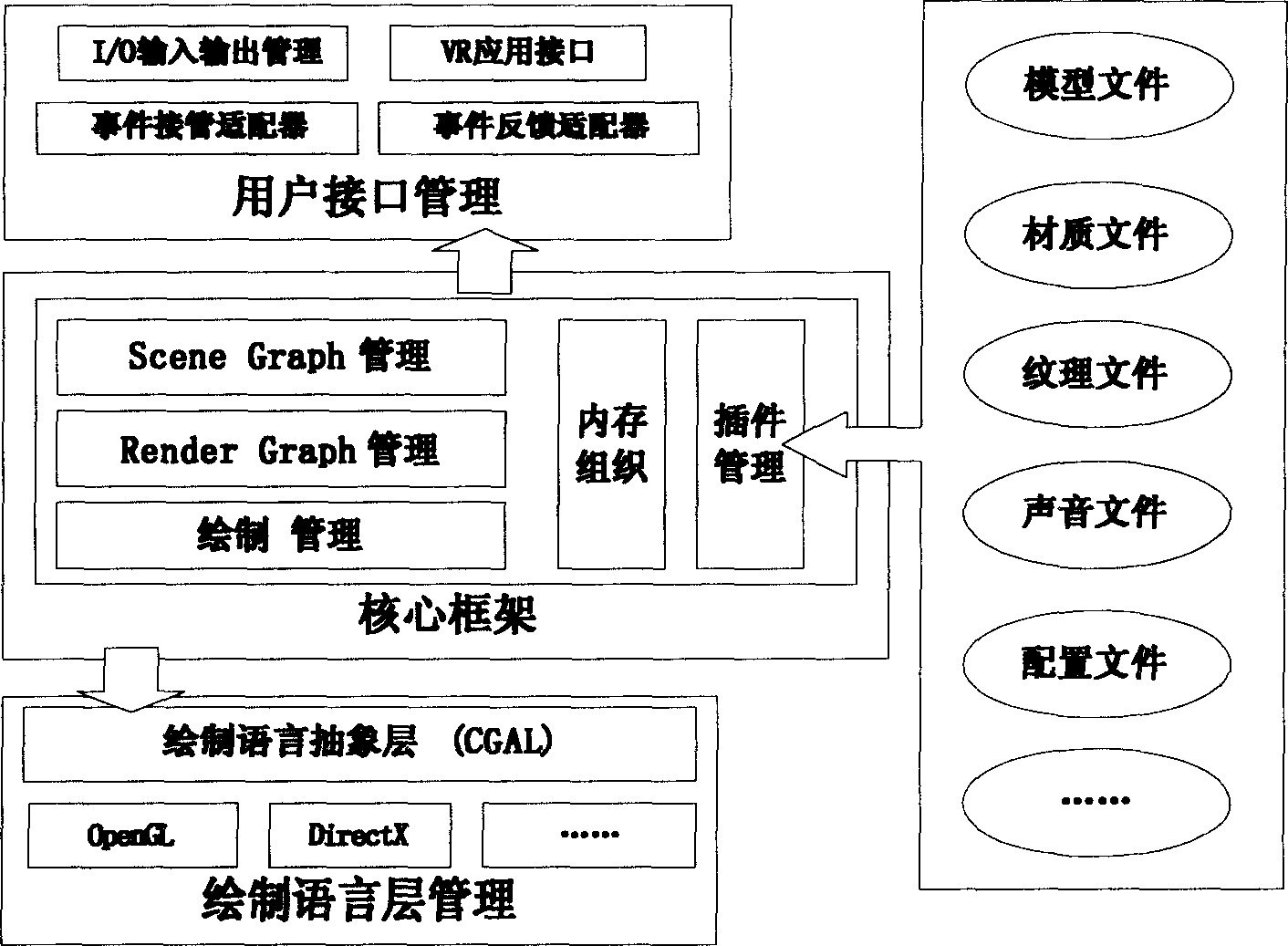

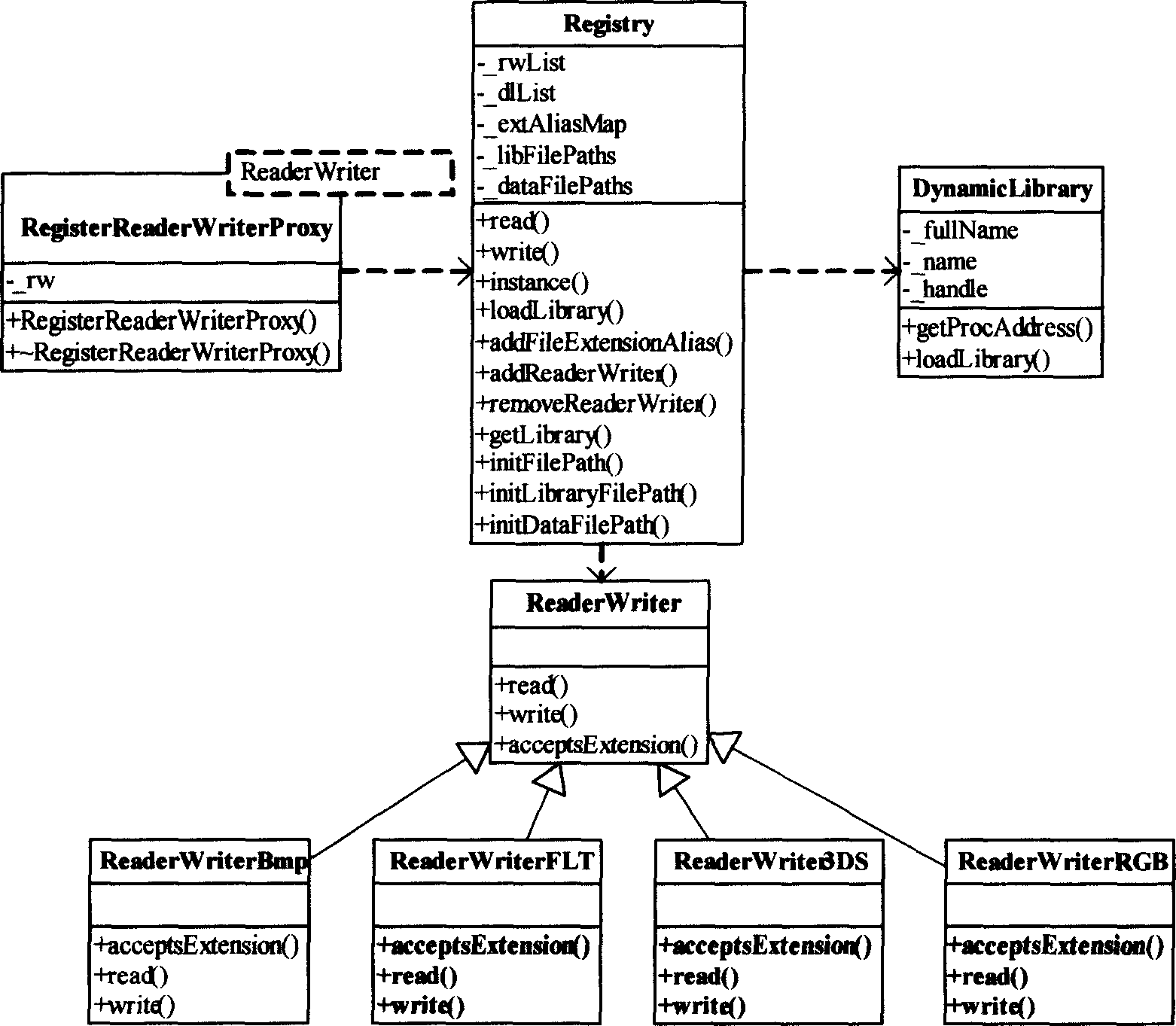

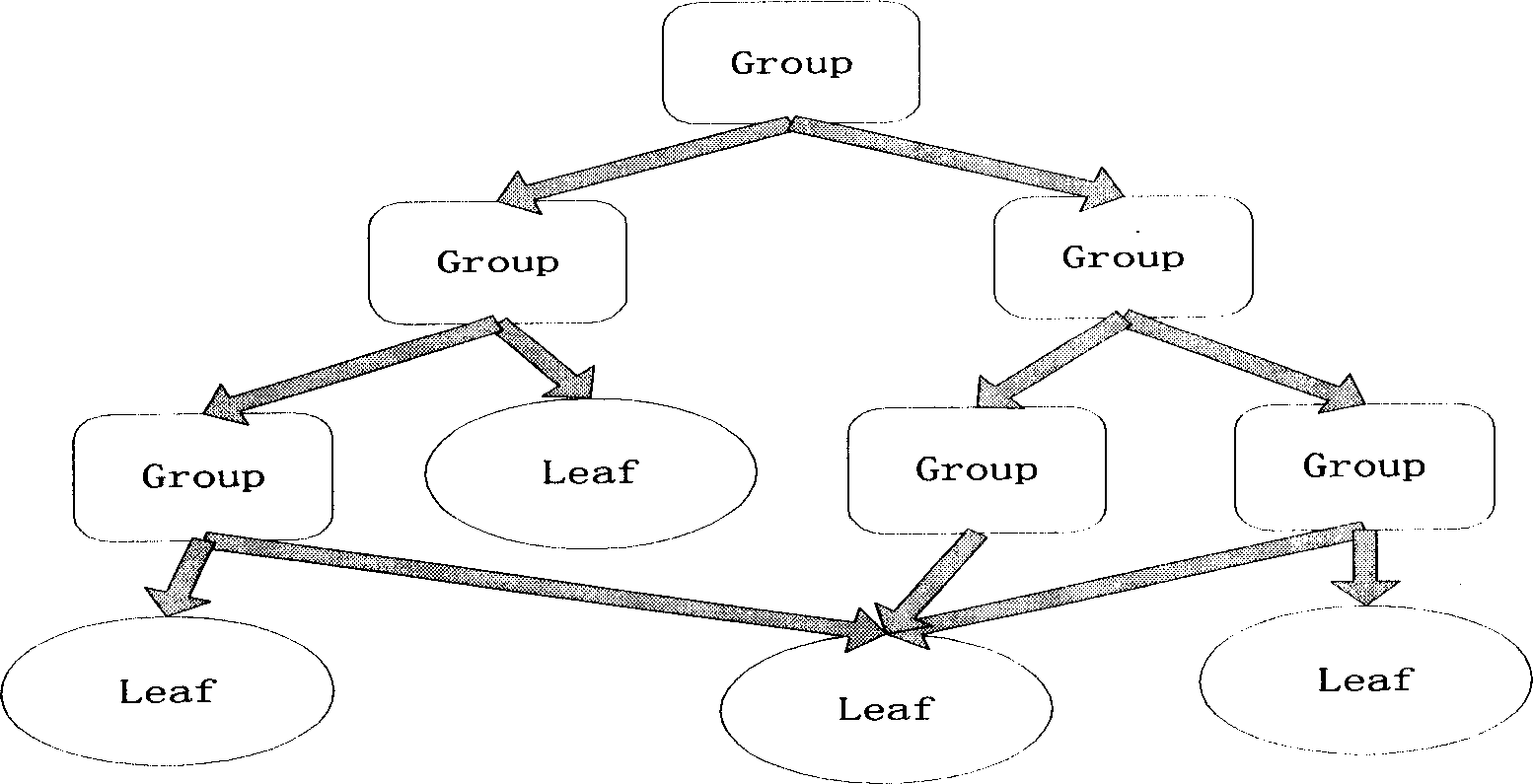

Three-dimensional scene real-time drafting framework and drafting method

Patent | Inactive | CN1763784A

Innovation

- A three-dimensional scene real-time rendering framework using a three-layer architecture comprising a user interface layer, a core layer, and a rendering language management layer. Through plug-in management and scene graph and rendering graph management, it provides object-oriented interfaces and message processing mechanisms to achieve low coupling, scalability, and improved drawing efficiency.

Hardware Requirements for AI Graphics in VR Systems

The implementation of efficient AI graphics techniques in VR applications demands sophisticated hardware architectures capable of handling intensive computational workloads while maintaining the stringent performance requirements of virtual reality systems. Modern VR applications utilizing AI-enhanced graphics require specialized processing units that can deliver consistent frame rates above 90 FPS to prevent motion sickness and ensure immersive user experiences.

Graphics Processing Units remain the cornerstone of VR AI graphics systems, with high-end GPUs featuring dedicated tensor cores or AI acceleration units becoming essential. Current generation GPUs such as NVIDIA's RTX 4090 or AMD's RX 7900 XTX provide the necessary computational power for real-time AI inference while simultaneously rendering complex 3D environments. These GPUs must support advanced features including variable rate shading, mesh shaders, and hardware-accelerated ray tracing to optimize rendering pipelines.

Memory subsystems represent critical bottlenecks in AI graphics processing for VR applications. Systems require substantial high-bandwidth memory, typically 16GB or more of GDDR6X or HBM memory, to accommodate large AI models alongside traditional graphics assets. Memory bandwidth exceeding 800 GB/s becomes crucial when processing multiple AI workloads simultaneously, such as neural rendering, upscaling, and real-time denoising algorithms.
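
To put the bandwidth figure in per-frame terms, the sketch below divides peak bandwidth by the frame rate and estimates what share of that budget goes to reading neural network weights. It assumes the weights are read from memory once per inference pass with no cache reuse, and that peak bandwidth is fully achievable, which real workloads do not reach. The 50 MB model size and the two passes per frame (one per eye) are hypothetical values.

```python
def per_frame_traffic_gb(bandwidth_gb_s: float, fps: float) -> float:
    """Upper bound on memory traffic available to a single frame, in GB."""
    if bandwidth_gb_s <= 0 or fps <= 0:
        raise ValueError("bandwidth and fps must be positive")
    return bandwidth_gb_s / fps

def weight_read_share(model_mb: float, passes_per_frame: int,
                      bandwidth_gb_s: float, fps: float) -> float:
    """Share of the per-frame bandwidth budget spent reading model weights."""
    weight_traffic_gb = model_mb / 1000.0 * passes_per_frame
    return weight_traffic_gb / per_frame_traffic_gb(bandwidth_gb_s, fps)

if __name__ == "__main__":
    budget = per_frame_traffic_gb(800.0, 90.0)
    share = weight_read_share(model_mb=50.0, passes_per_frame=2,
                              bandwidth_gb_s=800.0, fps=90.0)
    print(f"per-frame budget at 800 GB/s and 90 FPS: {budget:.2f} GB")
    print(f"weight reads for a 50 MB model, 2 passes: {share:.2%} of budget")
```

Weight reads are only part of the cost: intermediate activations, which scale with output resolution, typically add further traffic that competes with texture streaming.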

Central Processing Units must provide sufficient computational resources for AI model management, scene graph processing, and system orchestration. Modern multi-core processors with at least 8 cores and support for high-frequency memory are necessary to prevent CPU bottlenecks that could impact overall system performance. Additionally, CPUs with integrated AI acceleration units can offload specific tasks from the primary GPU.

Specialized AI accelerators are increasingly important for dedicated neural network inference tasks. Tensor Processing Units or dedicated AI chips can handle specific workloads such as neural super-resolution, predictive rendering, or AI-driven content generation without impacting primary graphics rendering performance. These accelerators must integrate seamlessly with existing graphics pipelines through high-speed interconnects.

Thermal management systems become critical when operating multiple high-performance components simultaneously. Advanced cooling solutions, including liquid cooling systems and sophisticated airflow management, are essential to maintain optimal performance under sustained computational loads while preventing thermal throttling that could compromise the VR experience.

Performance Benchmarks for VR AI Graphics Standards

Establishing comprehensive performance benchmarks for VR AI graphics represents a critical foundation for advancing the field and ensuring consistent quality standards across applications. Current benchmarking frameworks primarily focus on traditional graphics metrics such as frame rate, resolution, and latency, but fail to adequately address the unique challenges posed by AI-enhanced graphics in virtual reality environments. The integration of artificial intelligence introduces additional complexity layers that require specialized measurement approaches to accurately assess system performance.

Frame rate consistency emerges as the most fundamental benchmark, with VR applications requiring sustained 90 FPS minimum to prevent motion sickness and maintain immersion. AI graphics techniques must demonstrate their ability to maintain this threshold while delivering enhanced visual quality. Latency measurements become particularly crucial, as AI processing introduces computational overhead that can disrupt the critical motion-to-photon pipeline essential for comfortable VR experiences.
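
Average frame rate alone can hide missed frames, so a consistency benchmark usually reports tail frame times as well. The following sketch computes mean FPS, the 99th-percentile frame time (nearest-rank method), and the fraction of frames that miss the 90 FPS budget. The function name and the sample frame times are hypothetical.

```python
def frame_stats(frame_times_ms, target_fps=90.0):
    """Summarize frame-time consistency for a captured run."""
    n = len(frame_times_ms)
    if n == 0:
        raise ValueError("need at least one frame time")
    budget_ms = 1000.0 / target_fps
    ordered = sorted(frame_times_ms)
    rank = (99 * n + 99) // 100  # ceil(0.99 * n) using integer arithmetic
    missed = sum(1 for t in frame_times_ms if t > budget_ms)
    return {
        "mean_fps": 1000.0 * n / sum(frame_times_ms),
        "p99_ms": ordered[rank - 1],
        "missed_ratio": missed / n,
    }

if __name__ == "__main__":
    # Hypothetical capture: mostly 10 ms frames with two slow outliers.
    times = [10.0] * 98 + [12.0, 25.0]
    print(frame_stats(times))
```

In this sample the mean frame rate is above 90 FPS, yet 2% of frames exceed the 11.1 ms budget, which is the kind of hitch a user notices in a headset.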

Visual quality assessment requires sophisticated metrics beyond traditional peak signal-to-noise ratio measurements. Perceptual quality indicators specifically designed for VR environments must account for factors such as stereoscopic consistency, temporal stability, and artifact visibility across the entire field of view. AI-generated content introduces unique artifacts that necessitate specialized detection and quantification methods.
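
A small example shows why per-frame PSNR is not enough. The sketch below computes PSNR for frames stored as flat lists of values in [0, 1], plus a simple temporal measure: the mean absolute change in per-pixel error between consecutive frames. The second metric is a hypothetical illustration of a temporal stability indicator, not an established standard.

```python
import math

def psnr(reference, test, peak=1.0):
    """Peak signal-to-noise ratio in dB for two equally sized frames."""
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf
    return 10.0 * math.log10(peak ** 2 / mse)

def error_change(ref_frames, test_frames):
    """Mean absolute change in per-pixel error between consecutive frames."""
    errors = [[t - r for r, t in zip(ref, test)]
              for ref, test in zip(ref_frames, test_frames)]
    changes = [abs(b - a)
               for prev, cur in zip(errors, errors[1:])
               for a, b in zip(prev, cur)]
    return sum(changes) / len(changes)

if __name__ == "__main__":
    ref = [[0.5, 0.5]] * 4
    steady = [[0.6, 0.6]] * 4                                    # constant bias
    flipping = [[0.6, 0.6], [0.4, 0.4], [0.6, 0.6], [0.4, 0.4]]  # sign flips
    for name, seq in (("steady", steady), ("flipping", flipping)):
        print(name, [round(psnr(r, t), 2) for r, t in zip(ref, seq)],
              round(error_change(ref, seq), 3))
```

Both sequences score the same PSNR on every frame, but only the second one flickers, and only the temporal measure separates them.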

Computational efficiency benchmarks must evaluate both GPU and CPU utilization patterns, memory bandwidth consumption, and power draw characteristics. These metrics become essential for mobile VR platforms where thermal constraints and battery life directly impact user experience. AI graphics techniques must demonstrate scalability across different hardware configurations while maintaining acceptable performance thresholds.

Standardized test scenarios should encompass diverse VR application categories, from gaming environments with dynamic lighting to architectural visualization requiring photorealistic rendering. Each scenario presents distinct challenges for AI graphics systems, necessitating tailored benchmark suites that reflect real-world usage patterns and performance requirements for practical deployment validation.
