Diffusion Policy for Forensics: How It Affects Signal Accuracy

APR 14, 2026 | 9 MIN READ

Diffusion Policy Background and Forensic Signal Goals

Diffusion models represent a paradigm shift in generative artificial intelligence, fundamentally altering how complex data distributions are learned and synthesized. Originally developed for image generation tasks, these probabilistic models operate through a forward diffusion process that gradually adds noise to data until it becomes pure Gaussian noise, followed by a reverse denoising process that reconstructs meaningful data from random noise. The mathematical foundation relies on stochastic differential equations and variational inference, enabling precise control over the generation process through learned probability distributions.
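
As a minimal sketch of the forward (noising) half of this process, the standard DDPM closed form x_t = sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * eps can be written in a few lines of plain Python. The linear noise schedule, the 1000-step count, and the names `alpha_bar` and `forward_diffuse` are illustrative assumptions, not parameters taken from any forensic tool.

```python
import math
import random


def alpha_bar(t, num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_s) for s = 1..t under a linear schedule."""
    product = 1.0
    for s in range(1, t + 1):
        beta = beta_start + (beta_end - beta_start) * (s - 1) / (num_steps - 1)
        product *= 1.0 - beta
    return product


def forward_diffuse(x0, t, rng, num_steps=1000):
    """Sample x_t ~ N(sqrt(abar_t) * x0, (1 - abar_t) * I) in one shot."""
    abar = alpha_bar(t, num_steps)
    signal_scale = math.sqrt(abar)
    noise_scale = math.sqrt(1.0 - abar)
    return [signal_scale * v + noise_scale * rng.gauss(0.0, 1.0) for v in x0]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
clean = [math.sin(2 * math.pi * i / 32) for i in range(64)]
slightly_noisy = forward_diffuse(clean, t=10, rng=rng)
almost_pure_noise = forward_diffuse(clean, t=1000, rng=rng)
```

At small `t` the signal term dominates and the waveform is nearly intact; at the final step `alpha_bar` is close to zero, so the sample is essentially Gaussian noise. The reverse process, which requires a trained denoising network, is deliberately left out of this sketch.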

The evolution of diffusion models traces back to thermodynamic principles and non-equilibrium statistical physics, where researchers observed how systems naturally progress from ordered to disordered states. Early implementations in machine learning focused primarily on computer vision applications, demonstrating remarkable capabilities in generating high-fidelity images with unprecedented quality and diversity. The breakthrough came with the development of denoising diffusion probabilistic models (DDPMs) and score-based generative models, which provided tractable training procedures and stable convergence properties.

Recent advances have extended diffusion model applications beyond traditional generative tasks into policy learning and decision-making frameworks. Diffusion policies leverage the inherent smoothness and multimodal capabilities of diffusion processes to model complex behavioral patterns and sequential decision-making scenarios. This approach addresses fundamental limitations in conventional policy optimization methods, particularly in handling high-dimensional action spaces and capturing diverse behavioral modes within a single unified framework.

In forensic science applications, the integration of diffusion policies presents both opportunities and significant challenges for preserving signal accuracy. Traditional forensic signal processing relies heavily on deterministic algorithms and established mathematical transforms, whose repeatable outputs support chain-of-custody and evidence-integrity requirements. Introducing probabilistic generative models into this domain requires careful consideration of how stochastic processes might affect the reliability and admissibility of forensic evidence.

The primary technical objectives for implementing diffusion policies in forensic contexts center on maintaining signal fidelity while enhancing analytical capabilities. Key goals include preserving original signal characteristics during processing, ensuring reproducible results across different computational environments, and providing quantifiable uncertainty measures for forensic conclusions. Additionally, the technology aims to improve detection sensitivity for subtle signal anomalies that might indicate tampering or manipulation, while simultaneously reducing false positive rates that could compromise investigative accuracy.
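
One way to make the reproducibility and uncertainty goals concrete is to fix the random seed of every stochastic run and to report the spread across an ensemble of runs. The sketch below assumes a hypothetical `stochastic_enhance` function that merely adds seeded noise; it stands in for a real diffusion sampler, and only the seeding and ensemble pattern is the point.

```python
import random
import statistics


def stochastic_enhance(signal, seed):
    """Hypothetical stand-in for a stochastic enhancer: adds small seeded noise.

    A real diffusion sampler would go here; only the seeding pattern matters.
    """
    rng = random.Random(seed)
    return [v + rng.gauss(0.0, 0.05) for v in signal]


def ensemble_summary(signal, seeds):
    """Run the enhancer once per seed; return per-sample mean and std deviation."""
    runs = [stochastic_enhance(signal, s) for s in seeds]
    means = [statistics.fmean(col) for col in zip(*runs)]
    spreads = [statistics.stdev(col) for col in zip(*runs)]
    return means, spreads


signal = [0.0, 0.5, 1.0, 0.5, 0.0]
seeds = list(range(20))
means, spreads = ensemble_summary(signal, seeds)

# Same seed, same output: the run can be repeated exactly for an audit.
repeatable = stochastic_enhance(signal, 7) == stochastic_enhance(signal, 7)
```

The per-sample standard deviation gives a simple, quantifiable uncertainty band around the reported output. Seeding fixes only the random draws; whether a real neural sampler produces bit-identical results across hardware or library versions is a separate question that would need its own validation.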

Regulatory compliance represents another critical objective, as forensic applications must adhere to strict legal standards for evidence processing and analysis. The implementation of diffusion policies must demonstrate verifiable accuracy metrics, maintain audit trails for all processing steps, and provide clear documentation of algorithmic decision-making processes that can withstand legal scrutiny in courtroom environments.

Market Demand for Enhanced Digital Forensic Accuracy

The digital forensics market is experiencing unprecedented growth driven by escalating cybersecurity threats, regulatory compliance requirements, and the exponential increase in digital evidence volumes. Law enforcement agencies, corporate security teams, and legal professionals are demanding more sophisticated tools capable of processing complex digital artifacts with higher precision and reliability.

Traditional forensic analysis methods face significant limitations when dealing with modern digital evidence, particularly in scenarios involving encrypted data, steganography, and sophisticated data manipulation techniques. The emergence of deepfakes, AI-generated content, and advanced obfuscation methods has created critical gaps in existing forensic capabilities, necessitating innovative approaches to signal analysis and evidence authentication.

Government agencies worldwide are allocating substantial budgets toward enhancing their digital forensic capabilities. The increasing frequency of state-sponsored cyberattacks, ransomware incidents, and digital fraud cases has elevated the importance of accurate signal detection and analysis in forensic investigations. Courts are demanding higher standards of evidence authenticity, creating pressure for forensic tools that can provide mathematically verifiable results.

Corporate enterprises face mounting pressure to implement robust digital forensic capabilities for incident response, intellectual property protection, and regulatory compliance. Industries such as financial services, healthcare, and telecommunications require forensic solutions that can accurately distinguish between legitimate signals and manipulated data while maintaining chain of custody integrity.

The proliferation of Internet of Things devices, mobile communications, and cloud-based services has expanded the digital evidence landscape exponentially. Forensic investigators need advanced signal processing capabilities to extract meaningful information from increasingly complex data sources while ensuring accuracy standards that meet legal admissibility requirements.

Emerging technologies like blockchain forensics, cryptocurrency tracing, and social media analysis are creating new market segments within digital forensics. These applications demand enhanced signal accuracy to trace transaction patterns, identify communication networks, and establish digital fingerprints with sufficient precision for legal proceedings.

The market is particularly focused on solutions that can automate complex analysis processes while maintaining human-interpretable results, addressing the shortage of skilled forensic analysts and the need for scalable investigation capabilities across diverse digital platforms and communication protocols.

Current Forensic Signal Processing Challenges and Limitations

Forensic signal processing faces significant challenges in maintaining signal integrity while extracting meaningful evidence from digital data. Traditional forensic methods often struggle with the inherent trade-off between noise reduction and preservation of critical forensic features. Conventional filtering techniques, while effective at removing unwanted artifacts, frequently introduce distortions that can compromise the authenticity and admissibility of digital evidence in legal proceedings.
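
The trade-off between noise reduction and feature preservation can be illustrated with the simplest conventional filter, a moving average. In this sketch the test signal, the window sizes, and the idea of a single-sample transient standing in for a "forensic feature" are all illustrative assumptions.

```python
def moving_average(signal, window):
    """Centered moving average; edges use the samples that are available."""
    half = window // 2
    out = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        out.append(sum(signal[lo:hi]) / (hi - lo))
    return out


# A flat signal with one sharp transient (the hypothetical "forensic feature").
signal = [0.0] * 21
signal[10] = 1.0

for window in (1, 3, 5, 9):
    peak = max(moving_average(signal, window))
    print(f"window={window}: transient peak = {peak:.3f}")
```

The loop prints peaks of 1.000, 0.333, 0.200, and 0.111. Averaging N independent noise samples reduces noise variance by a factor of N, so wider windows do suppress more noise, but the same averaging flattens the transient to 1/N of its original height.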

The complexity of modern digital signals presents substantial obstacles for forensic analysts. Multi-layered compression algorithms, sophisticated encoding schemes, and intentional obfuscation techniques employed by malicious actors create environments where standard signal processing approaches fail to deliver reliable results. These challenges are particularly pronounced when dealing with multimedia evidence, where lossy compression and format conversions can mask or eliminate crucial forensic markers.

Current forensic signal processing workflows suffer from limited adaptability to diverse signal types and sources. Existing methodologies often require manual parameter tuning and domain-specific expertise, making them unsuitable for automated processing of large-scale digital evidence collections. The lack of standardized approaches across different forensic laboratories further compounds these limitations, leading to inconsistent results and potential challenges to evidence validity.

Temporal and spatial resolution constraints represent another critical limitation in contemporary forensic signal processing. Many existing techniques struggle to maintain fine-grained detail while simultaneously providing robust noise suppression. This limitation is particularly problematic when analyzing degraded or partially corrupted signals, where traditional reconstruction methods may introduce artifacts that could be mistaken for genuine forensic evidence.

The emergence of adversarial attacks specifically targeting forensic analysis tools has exposed fundamental vulnerabilities in current signal processing frameworks. These attacks exploit the deterministic nature of traditional algorithms, introducing carefully crafted perturbations that can fool automated detection systems while remaining imperceptible to human observers. Such vulnerabilities highlight the urgent need for more robust and adaptive signal processing approaches.

Computational efficiency remains a persistent challenge, particularly when processing high-resolution multimedia content or large datasets. Current forensic signal processing techniques often require significant computational resources and extended processing times, limiting their practical applicability in time-sensitive investigations. The scalability issues become more pronounced when dealing with emerging data formats and increasing file sizes in modern digital environments.

Existing Diffusion Policy Solutions for Signal Enhancement

01 Signal processing and filtering techniques for improved accuracy

Advanced signal processing methods including digital filtering, noise reduction algorithms, and adaptive filtering techniques are employed to enhance the accuracy of diffusion policy signals. These techniques help eliminate interference and improve signal-to-noise ratio, resulting in more reliable signal transmission and reception. Multi-stage filtering and error correction mechanisms are implemented to ensure signal integrity throughout the diffusion process.

- Signal processing and filtering techniques for improved accuracy: Advanced signal processing methods including digital filtering, noise reduction algorithms, and adaptive filtering techniques are employed to enhance the accuracy of diffusion policy signals. These methods help eliminate interference and improve signal-to-noise ratio, resulting in more reliable signal transmission and reception. Various filtering architectures and signal conditioning circuits are utilized to optimize signal quality before processing.

- Error correction and compensation mechanisms: Implementation of error detection and correction algorithms to maintain signal accuracy during diffusion processes. These mechanisms include feedback loops, calibration procedures, and compensation techniques that adjust for signal degradation or distortion. The systems continuously monitor signal quality and apply corrective measures to ensure accurate signal propagation throughout the diffusion policy network.

- Multi-channel signal synchronization and coordination: Techniques for synchronizing multiple signal channels to ensure coherent and accurate diffusion policy implementation. This involves timing control mechanisms, phase alignment methods, and coordination protocols that maintain signal integrity across different transmission paths. The synchronization ensures that signals from various sources are properly aligned and processed to achieve optimal accuracy.

- Adaptive signal strength and power control: Dynamic adjustment of signal strength and transmission power based on environmental conditions and system requirements. These adaptive mechanisms optimize signal propagation by adjusting parameters in real-time to maintain accuracy under varying conditions. Power control algorithms balance between signal quality and energy efficiency while ensuring reliable diffusion policy signal transmission.

- Machine learning and predictive modeling for signal optimization: Application of artificial intelligence and machine learning algorithms to predict and optimize signal behavior in diffusion policy systems. These methods analyze historical signal patterns, identify potential accuracy issues, and proactively adjust system parameters. Predictive models help anticipate signal degradation and implement preventive measures to maintain high accuracy levels throughout the diffusion process.

02 Calibration and compensation methods for signal accuracy

Calibration procedures and compensation algorithms are utilized to maintain signal accuracy in diffusion policy systems. These methods involve periodic calibration of signal parameters, temperature compensation, and drift correction to account for environmental variations and system aging. Automatic calibration routines and real-time adjustment mechanisms ensure consistent signal accuracy over extended operational periods.

03 Multi-channel and diversity techniques for enhanced signal reliability

Implementation of multi-channel transmission and diversity reception techniques improves signal accuracy by providing redundancy and alternative signal paths. These approaches include spatial diversity, frequency diversity, and time diversity methods that reduce the impact of signal fading and interference. Combining signals from multiple channels through optimal weighting and selection algorithms enhances overall system accuracy.

04 Adaptive modulation and coding for signal optimization

Adaptive modulation and coding schemes dynamically adjust transmission parameters based on channel conditions to optimize signal accuracy. These techniques monitor signal quality metrics and automatically select appropriate modulation formats, coding rates, and power levels to maintain target accuracy levels. Forward error correction and adaptive equalization further enhance signal reliability under varying channel conditions.

05 Machine learning and prediction algorithms for signal accuracy improvement

Machine learning techniques and predictive algorithms are applied to analyze signal patterns and optimize diffusion policy parameters for improved accuracy. These methods include neural networks, pattern recognition, and statistical prediction models that learn from historical data to anticipate signal degradation and proactively adjust system parameters. Real-time learning and adaptation capabilities enable continuous improvement of signal accuracy performance.

Key Players in Digital Forensics and Signal Processing

The diffusion policy technology for forensics represents an emerging field at the intersection of AI-driven signal processing and digital forensics, currently in its early development stage with significant growth potential. The market remains nascent but shows promise as organizations increasingly require sophisticated signal accuracy solutions for forensic applications. Technology maturity varies considerably across industry players, with established telecommunications and technology giants like Huawei Technologies, Qualcomm, and NEC Corp leading infrastructure development, while specialized companies such as Agilent Technologies and Siemens AG contribute advanced analytical instrumentation. Research institutions including CEA and University of California provide foundational research, though commercial implementations remain limited. The competitive landscape indicates fragmented development with no dominant market leader, suggesting opportunities for breakthrough innovations in signal processing accuracy and forensic application deployment.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed advanced signal processing technologies for forensic applications, incorporating diffusion-based algorithms for enhanced signal reconstruction and analysis. Their approach focuses on maintaining signal integrity while applying noise reduction techniques that preserve critical forensic evidence. The company's solution utilizes adaptive diffusion parameters that adjust based on signal characteristics, ensuring optimal balance between noise suppression and detail preservation. Their implementation includes real-time processing capabilities for live forensic analysis and batch processing for comprehensive evidence examination. The technology demonstrates particular strength in handling corrupted or degraded signals commonly encountered in forensic investigations.

Strengths: Strong R&D capabilities and comprehensive signal processing expertise. Weaknesses: Limited specialized forensic market presence compared to telecommunications focus.

QUALCOMM, Inc.

Technical Solution: QUALCOMM's approach to diffusion policy in forensics leverages their extensive digital signal processing expertise, particularly in wireless communications. Their solution implements sophisticated algorithms that can reconstruct degraded signals while maintaining forensic-grade accuracy standards. The technology incorporates machine learning-enhanced diffusion models that adapt to various signal types and degradation patterns. QUALCOMM's implementation focuses on mobile and wireless forensic applications, where signal integrity is crucial for legal proceedings. Their system provides configurable diffusion parameters allowing forensic analysts to balance between signal clarity and authenticity preservation based on specific case requirements.

Strengths: Leading expertise in wireless signal processing and mobile technologies. Weaknesses: Primary focus on consumer electronics rather than specialized forensic applications.

Core Diffusion Algorithms for Forensic Signal Accuracy

Rapid and sensitive method for detection of biological targets

Patent | Active | US20160370375A1

Innovation

- A method involving a cross-linker molecule with at least two moieties of a peroxidase enzyme substrate, which initiates a free-radical chain reaction to site-directed deposit detectable reporter molecules, ensuring precise localization and stability at target sites with peroxidase activity.

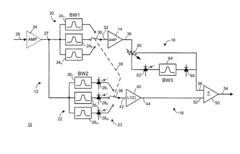

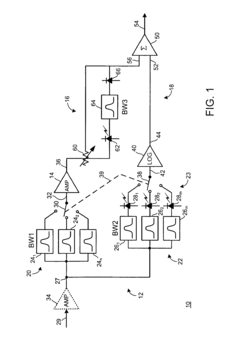

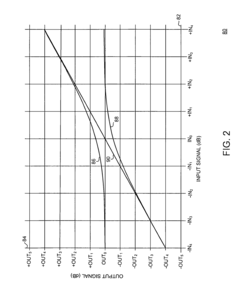

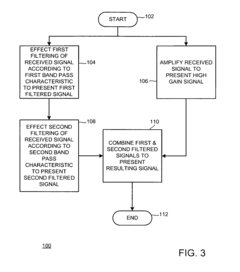

Method and apparatus for treating a received signal to present a resulting signal with improved signal accuracy

Patent | Inactive | US8190028B2

Innovation

- The apparatus employs a method involving multiple bandwidth-limited filtering stages and amplification to produce a resulting signal with improved accuracy, using first and second bandpass filtering units, an amplifying unit, and a combining section to handle signals with varying bandwidths, ensuring a seamless and continuous response.

Legal Framework for Digital Evidence and Signal Processing

The legal framework governing digital evidence and signal processing in forensic investigations has evolved significantly to address the challenges posed by advanced technologies like diffusion policies. Traditional evidence admissibility standards, including the Daubert standard in the United States and similar frameworks globally, require scientific evidence to be reliable, relevant, and based on sound methodology. These principles directly impact how diffusion-based signal processing techniques are evaluated in legal contexts.

Digital evidence authentication presents unique challenges when diffusion policies are applied to forensic signals. Courts must determine whether processed signals maintain their evidentiary value after undergoing diffusion-based enhancement or reconstruction. The legal system requires clear chain of custody documentation and validation that any signal processing does not materially alter the original evidence. This creates tension between the need for signal clarity and legal requirements for evidence integrity.

International standards such as ISO/IEC 27037, which covers the identification, collection, acquisition, and preservation of digital evidence, and ASTM E2916, which standardizes terminology for digital and multimedia evidence examination, provide guidance for handling evidence consistently. However, these standards were developed before the widespread adoption of diffusion-based techniques, creating gaps in regulatory coverage. Legal practitioners must navigate between leveraging advanced signal processing capabilities and ensuring compliance with existing evidence standards.

The admissibility of diffusion-processed signals often depends on expert testimony regarding the methodology's scientific validity. Courts evaluate whether the processing technique is generally accepted in the relevant scientific community, whether it has been peer-reviewed, and its known error rates. The probabilistic nature of diffusion models introduces additional complexity, as legal systems traditionally prefer deterministic processes with clear, reproducible outcomes.
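
A "known error rate" is typically estimated on a validation set with ground-truth labels. The sketch below computes false positive and false negative rates for a tamper detector; the function name `error_rates` and the eight toy labels are made up for illustration and do not represent measured results.

```python
def error_rates(predictions, ground_truth):
    """Return (false_positive_rate, false_negative_rate) for boolean labels.

    True means "flagged as tampered" in predictions and "actually tampered"
    in ground_truth.
    """
    fp = sum(p and not g for p, g in zip(predictions, ground_truth))
    fn = sum(g and not p for p, g in zip(predictions, ground_truth))
    negatives = sum(not g for g in ground_truth)
    positives = sum(bool(g) for g in ground_truth)
    fpr = fp / negatives if negatives else float("nan")
    fnr = fn / positives if positives else float("nan")
    return fpr, fnr


# Toy labels, invented purely to exercise the function.
predictions = [True, True, False, False, True, False, False, True]
ground_truth = [True, False, False, False, True, True, False, True]
fpr, fnr = error_rates(predictions, ground_truth)
```

With these toy labels one of four authentic items is wrongly flagged and one of four tampered items is missed, so both rates are 0.25. For a stochastic method, such rates would presumably need to be reported across repeated runs rather than from a single pass.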

Jurisdictional variations in digital evidence standards create additional challenges for forensic practitioners. While some jurisdictions have adapted their frameworks to accommodate advanced signal processing techniques, others maintain stricter interpretations of original evidence requirements. This disparity affects the global applicability of diffusion-based forensic methods and influences how investigators approach signal processing in different legal contexts.

The burden of proof regarding signal accuracy and processing methodology typically falls on the presenting party. This requires comprehensive documentation of diffusion parameters, validation procedures, and accuracy metrics. Legal frameworks increasingly demand transparency in algorithmic decision-making, necessitating explainable diffusion models that can withstand cross-examination and judicial scrutiny while maintaining their technical effectiveness.
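
The documentation requirement above can be sketched as a hash-chained processing log: each record stores the step name, its parameters, and SHA-256 digests of the input and output, and is itself hashed together with the previous record. The record layout, the function names, and the example parameters (`seed`, `steps`) are assumptions for illustration, not a format prescribed by any standard.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def append_record(log, step, params, input_bytes, output_bytes):
    """Append a record whose hash covers its content and the previous record's hash."""
    record = {
        "step": step,
        "params": params,
        "input_sha256": sha256_hex(input_bytes),
        "output_sha256": sha256_hex(output_bytes),
        "prev_hash": log[-1]["record_hash"] if log else "",
    }
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    record["record_hash"] = sha256_hex(canonical)
    log.append(record)
    return record


def verify_log(log):
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev = ""
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if body["prev_hash"] != prev:
            return False
        canonical = json.dumps(body, sort_keys=True).encode("utf-8")
        if sha256_hex(canonical) != record["record_hash"]:
            return False
        prev = record["record_hash"]
    return True


log = []
original = b"raw evidence bytes"
enhanced = b"enhanced copy bytes"  # placeholder for a processed copy
append_record(log, "acquire", {}, original, original)
append_record(log, "diffusion_enhance", {"seed": 7, "steps": 50}, original, enhanced)
```

Calling `verify_log(log)` returns `True` for the untouched log and `False` once any stored parameter or digest is altered. Because the enhancement step records the digest of the original as its input, the log also shows that processing was applied to a copy while the original remained unchanged.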

Privacy Implications of Advanced Forensic Signal Methods

The implementation of diffusion policy frameworks in forensic signal processing introduces significant privacy concerns that extend beyond traditional data protection paradigms. These advanced methods, while enhancing signal accuracy and reconstruction capabilities, create new vulnerabilities in personal data exposure and unauthorized surveillance applications.

Diffusion-based forensic techniques can reconstruct degraded or partially corrupted signals with high fidelity. This reconstruction power raises concerns about the potential recovery of sensitive information from seemingly anonymized or corrupted data sources. Personal communications, biometric signatures, and behavioral patterns embedded within signal data become susceptible to extraction, even when privacy safeguards were originally applied. Because a reconstruction is drawn from a learned distribution, recovered details may be plausible rather than verified, which compounds the risk when they are attributed to a specific person.

The probabilistic nature of diffusion models enables the generation of synthetic data that closely resembles authentic signals, creating challenges in distinguishing between genuine and artificially generated evidence. This capability introduces risks of deepfake-like manipulations in forensic contexts, where malicious actors could potentially fabricate convincing signal evidence for fraudulent purposes or to compromise individual privacy through false attribution.

Cross-domain signal correlation represents another critical privacy dimension. Advanced diffusion policies can identify patterns and relationships across multiple signal types and sources, potentially linking disparate data points to create comprehensive profiles of individuals without their knowledge or consent. This capability enables sophisticated tracking and surveillance applications that transcend traditional privacy boundaries.

The temporal persistence of diffusion models poses long-term privacy risks. A trained model retains its learned representations of signal characteristics for as long as its weights are kept, creating durable digital fingerprints that could be exploited years after the initial data collection. Such persistence challenges existing privacy frameworks that assume data degrades or is deleted over time.

Regulatory compliance becomes increasingly complex as diffusion-enhanced forensic methods operate in legal gray areas. Current privacy legislation may not adequately address the sophisticated inference capabilities of these systems, creating gaps in protection against unauthorized signal analysis and reconstruction. Organizations implementing these technologies must navigate evolving regulatory landscapes while ensuring ethical deployment practices.
