Edge Computing Latency vs Reliability: Trade-offs in Distributed Systems

MAR 26, 2026 | 9 MIN READ

Edge Computing Latency-Reliability Background and Objectives

Edge computing has emerged as a transformative paradigm in distributed systems architecture, fundamentally reshaping how computational resources are deployed and utilized across network infrastructures. This technological evolution represents a strategic shift from centralized cloud computing models toward decentralized processing capabilities positioned closer to data sources and end users. The proliferation of Internet of Things devices, autonomous vehicles, industrial automation systems, and real-time applications has created unprecedented demands for low-latency processing and high-reliability service delivery.

The historical development of edge computing can be traced back to content delivery networks and early distributed computing concepts, but has gained significant momentum over the past decade. Initial implementations focused primarily on reducing bandwidth costs and improving content delivery speeds. However, the landscape has evolved dramatically with the advent of 5G networks, artificial intelligence at the edge, and mission-critical applications requiring sub-millisecond response times.

Contemporary edge computing environments face a fundamental challenge in balancing latency optimization against reliability assurance. This trade-off represents one of the most critical design considerations in distributed systems architecture. Applications demanding ultra-low latency, such as autonomous driving systems or industrial control mechanisms, often require computational decisions to be made with minimal data validation and redundancy checks. Conversely, applications prioritizing reliability, including financial transactions or healthcare monitoring systems, typically implement extensive verification protocols that inherently introduce processing delays.

The technical objectives driving current research and development efforts center on developing adaptive frameworks capable of dynamically adjusting the latency-reliability balance based on application requirements and network conditions. These objectives include creating intelligent resource allocation algorithms, implementing predictive failure detection mechanisms, and establishing quality-of-service guarantees across heterogeneous edge environments.
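
As a rough illustration of what "dynamically adjusting the latency-reliability balance" could mean in code, the sketch below picks the most reliable mechanism that still fits a request deadline. The policy names, added latencies, and success probabilities are hypothetical values chosen for illustration, not measurements from any real system.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Policy:
    name: str
    added_latency_ms: float      # hypothetical cost of the reliability mechanism
    success_probability: float   # hypothetical per-request success rate


# Illustrative catalogue only; real values would come from profiling.
POLICIES: List[Policy] = [
    Policy("no-verification", 0.0, 0.990),
    Policy("checksum-only", 0.5, 0.999),
    Policy("dual-execution", 3.0, 0.9999),
    Policy("quorum-commit", 12.0, 0.99999),
]


def choose_policy(deadline_ms: float, base_latency_ms: float,
                  min_success: float) -> Optional[Policy]:
    """Return the most reliable policy that meets the deadline, or None."""
    feasible = [
        p for p in POLICIES
        if base_latency_ms + p.added_latency_ms <= deadline_ms
        and p.success_probability >= min_success
    ]
    if not feasible:
        return None  # the two requirements cannot both be met
    return max(feasible, key=lambda p: p.success_probability)


if __name__ == "__main__":
    print(choose_policy(deadline_ms=10, base_latency_ms=5, min_success=0.999))
```

Returning `None` when no policy is feasible makes the trade-off explicit: the caller has to relax either the deadline or the reliability target.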

Furthermore, the integration of machine learning techniques into edge computing infrastructure aims to enable autonomous decision-making regarding resource allocation and service prioritization. The ultimate goal involves achieving optimal performance characteristics while maintaining system stability and meeting diverse application requirements across various deployment scenarios.

Market Demand for Low-Latency Reliable Edge Solutions

The global edge computing market is experiencing unprecedented growth driven by the increasing demand for ultra-low latency applications and highly reliable distributed systems. Industries across sectors are recognizing the critical need for computing infrastructure that can deliver both minimal response times and consistent performance, creating substantial market opportunities for innovative edge solutions.

Telecommunications operators represent one of the largest market segments demanding low-latency reliable edge solutions. The deployment of 5G networks requires edge infrastructure capable of supporting network slicing, ultra-reliable low-latency communications, and massive machine-type communications. These applications cannot tolerate the latency introduced by traditional centralized cloud architectures, driving significant investment in edge computing platforms that can maintain sub-millisecond response times while ensuring carrier-grade reliability.

Industrial automation and manufacturing sectors are increasingly adopting edge computing solutions to support real-time control systems, predictive maintenance, and quality assurance processes. Manufacturing environments require computing systems that can process sensor data locally while maintaining deterministic behavior and fault tolerance. The convergence of Industry 4.0 initiatives with edge computing is creating substantial demand for solutions that can balance the need for immediate response with the reliability requirements of mission-critical industrial processes.

Autonomous vehicle development and smart transportation infrastructure represent emerging high-growth markets for low-latency reliable edge solutions. Vehicle-to-everything communication systems require edge computing platforms capable of processing safety-critical decisions within strict timing constraints while maintaining extremely high reliability standards. The automotive industry's stringent safety requirements are driving demand for edge solutions that can gracefully handle the latency-reliability trade-off without compromising passenger safety.

Healthcare and medical device sectors are increasingly adopting edge computing for real-time patient monitoring, surgical robotics, and diagnostic imaging applications. These use cases demand computing solutions that can process medical data with minimal delay while ensuring the reliability and accuracy required for patient safety. The regulatory environment in healthcare further emphasizes the need for edge solutions that can demonstrate consistent performance under various operational conditions.

Gaming and entertainment industries are driving demand for edge computing solutions that can deliver immersive experiences through cloud gaming, augmented reality, and virtual reality applications. These applications require edge infrastructure capable of maintaining consistent low latency while handling variable workloads and ensuring service availability across distributed user bases.

The financial services sector is adopting edge computing for high-frequency trading, fraud detection, and real-time risk assessment applications. These use cases require computing solutions that can process transactions with minimal latency while maintaining the reliability and consistency required for financial operations.

Current Edge Computing Trade-off Challenges and Constraints

Edge computing systems face fundamental constraints that create inherent trade-offs between latency optimization and reliability assurance. The distributed nature of edge infrastructure introduces multiple failure points across heterogeneous hardware deployments, network connections, and software stacks. These systems must operate with limited computational resources while maintaining service quality, creating tension between performance requirements and fault tolerance mechanisms.

Resource scarcity represents a primary constraint in edge environments. Edge nodes typically possess limited processing power, memory, and storage compared to centralized cloud facilities. This scarcity forces system designers to make difficult choices between implementing comprehensive redundancy mechanisms and maintaining low-latency response times. Reliability features such as data replication, consensus protocols, and error correction consume valuable computational cycles that could otherwise be dedicated to application processing.

Network connectivity variability poses another significant challenge. Edge deployments often rely on wireless connections or shared network infrastructure that experiences fluctuating bandwidth and intermittent connectivity. These conditions create uncertainty in data synchronization timing and complicate the implementation of distributed consensus mechanisms. Systems must balance aggressive caching strategies that reduce latency against the risk of serving stale data during network partitions.
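
The caching trade-off described above can be sketched as a TTL cache that is allowed to serve a bounded amount of stale data when the origin is unreachable. The class name `EdgeCache`, the `fetch` callback, and the use of `ConnectionError` to signal a partition are assumptions made for this example.

```python
import time
from typing import Any, Callable, Dict, Tuple


class EdgeCache:
    """TTL cache that may serve stale entries while the origin is unreachable."""

    def __init__(self, ttl_s: float, max_stale_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl_s = ttl_s                # age up to which an entry counts as fresh
        self.max_stale_s = max_stale_s    # extra age tolerated during a partition
        self._clock = clock
        self._store: Dict[str, Tuple[Any, float]] = {}

    def get(self, key: str, fetch: Callable[[str], Any]) -> Tuple[Any, str]:
        """Return (value, status); status is 'fresh', 'refreshed' or 'stale'."""
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[1] <= self.ttl_s:
            return entry[0], "fresh"          # low-latency path, no network call
        try:
            value = fetch(key)                # may be slow or fail
        except ConnectionError:
            if entry is not None and now - entry[1] <= self.ttl_s + self.max_stale_s:
                return entry[0], "stale"      # availability over freshness
            raise                             # too old to trust: surface the failure
        self._store[key] = (value, now)
        return value, "refreshed"
```

Raising `max_stale_s` favors availability during partitions; lowering it favors freshness at the cost of more failed reads.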

Geographic distribution amplifies coordination complexity across edge nodes. Maintaining consistency across geographically dispersed edge locations requires sophisticated synchronization protocols that introduce additional latency overhead. The CAP theorem states that a distributed data store cannot simultaneously guarantee consistency, availability, and partition tolerance. Because network partitions cannot be ruled out at the edge, systems must in practice choose between consistency and availability for as long as a partition lasts, and they make that choice based on application requirements.
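
One common way to expose this choice as a tunable is quorum replication, which the text above does not name but which illustrates the point. With N replicas, a write quorum W and a read quorum R, reads are guaranteed to overlap the latest acknowledged write when R + W > N, and an operation's latency is roughly that of the slowest replica in its quorum. The function names and round-trip times below are illustrative assumptions.

```python
from typing import Dict, List


def quorum_properties(n: int, w: int, r: int) -> Dict[str, object]:
    """Summarise what a given (N, W, R) configuration buys."""
    if not (1 <= w <= n and 1 <= r <= n):
        raise ValueError("quorum sizes must be between 1 and n")
    return {
        "reads_see_latest_write": r + w > n,   # read and write sets must intersect
        "write_tolerates_failures": n - w,     # replicas that may be down on write
        "read_tolerates_failures": n - r,      # replicas that may be down on read
    }


def quorum_latency_ms(replica_rtts_ms: List[float], quorum: int) -> float:
    """Latency when waiting for the `quorum` fastest replicas to answer."""
    return sorted(replica_rtts_ms)[quorum - 1]


if __name__ == "__main__":
    rtts = [2.0, 8.0, 40.0]  # hypothetical: local node, nearby node, remote region
    for w in (1, 2, 3):
        print(w, quorum_latency_ms(rtts, w), quorum_properties(3, w, 2))
```

In this sketch, waiting for a single local acknowledgement keeps latency low, while waiting for all three replicas ties every write to the slowest link.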

Hardware heterogeneity creates additional constraints as edge deployments often incorporate diverse device types with varying capabilities and reliability profiles. This diversity complicates the implementation of uniform reliability mechanisms and requires adaptive strategies that can accommodate different performance characteristics. Load balancing and failover mechanisms must account for these variations while minimizing service disruption.
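
A minimal sketch of capacity-aware load balancing with failover follows, assuming each node reports a processing rate and a backlog. The `Node` fields and the example fleet are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    ops_per_ms: float    # hypothetical processing capacity
    queued_ops: float    # work already waiting on the node
    healthy: bool = True


def pick_node(nodes: List[Node], task_ops: float) -> Optional[Node]:
    """Choose the healthy node with the lowest estimated completion time."""
    candidates = [n for n in nodes if n.healthy]
    if not candidates:
        return None  # nothing left to fail over to
    return min(candidates, key=lambda n: (n.queued_ops + task_ops) / n.ops_per_ms)


if __name__ == "__main__":
    fleet = [
        Node("gateway", ops_per_ms=10, queued_ops=100),
        Node("micro-server", ops_per_ms=50, queued_ops=1000),
        Node("sensor-hub", ops_per_ms=2, queued_ops=0),
    ]
    print(pick_node(fleet, task_ops=50).name)   # lowest estimated finish time
    fleet[0].healthy = False                    # simulate a node failure
    print(pick_node(fleet, task_ops=50).name)   # failover to the next best node
```

Ranking by estimated completion time rather than by queue length alone is what lets a fast but busy node compete fairly with a slow but idle one.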

Energy consumption limitations further constrain design choices, particularly in battery-powered or resource-constrained edge devices. Reliability mechanisms such as continuous health monitoring, frequent checkpointing, and redundant processing increase power consumption, potentially reducing system availability through battery depletion. These constraints necessitate careful optimization of reliability features to balance protection against failures with operational sustainability.
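
To make the energy cost of monitoring concrete, the following back-of-the-envelope sketch estimates battery life as a function of the health-check interval. All figures are hypothetical and radio wake-up costs are folded into a single per-check energy value.

```python
def battery_life_hours(capacity_mwh: float, idle_mw: float,
                       check_energy_mj: float, check_interval_s: float) -> float:
    """Estimate battery life given a periodic health check.

    One check costs `check_energy_mj` millijoules; spread over the interval
    this adds check_energy_mj / check_interval_s milliwatts on average
    (mJ per second equals mW).
    """
    average_mw = idle_mw + check_energy_mj / check_interval_s
    return capacity_mwh / average_mw


if __name__ == "__main__":
    for interval in (10, 100, 1000):
        hours = battery_life_hours(10_000, idle_mw=50,
                                   check_energy_mj=500, check_interval_s=interval)
        print(f"check every {interval:>4} s -> {hours:6.1f} h")
```

Under these assumed numbers, checking every 10 seconds halves battery life compared with an idle device, while checking every 100 seconds costs under 10 percent.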

Existing Latency-Reliability Optimization Approaches

01 Task offloading and resource allocation optimization

Edge computing systems can optimize latency and reliability through intelligent task offloading mechanisms that determine whether computational tasks should be processed locally or at edge servers. Resource allocation algorithms dynamically distribute computing resources based on task requirements, network conditions, and server capabilities. These methods consider factors such as transmission delay, processing time, and energy consumption to minimize overall latency while maintaining service reliability. Advanced scheduling strategies and load balancing techniques ensure efficient utilization of edge resources.

- Task offloading and resource allocation optimization: Edge computing systems can optimize latency and reliability through intelligent task offloading mechanisms that determine whether computational tasks should be processed locally or at edge servers. Resource allocation algorithms dynamically distribute computing resources based on task requirements, network conditions, and server capabilities. These methods consider factors such as transmission delay, processing time, and energy consumption to make optimal offloading decisions that minimize overall latency while maintaining system reliability.

- Network slicing and quality of service management: Network slicing technology enables the creation of multiple virtual networks on shared physical infrastructure, allowing different service requirements to be met simultaneously. Quality of service management mechanisms prioritize critical applications and allocate network resources accordingly to ensure low latency and high reliability for time-sensitive services. These approaches implement dynamic resource reservation and traffic management strategies to maintain performance guarantees across diverse edge computing scenarios.

- Edge server deployment and topology optimization: Strategic placement of edge servers closer to end users reduces transmission distances and minimizes latency in edge computing architectures. Topology optimization techniques determine optimal locations and configurations for edge nodes based on user distribution, traffic patterns, and service requirements. Multi-tier edge computing architectures can be designed to balance processing capabilities across different levels, enabling efficient load distribution and improved system reliability through redundancy.

- Caching and content delivery strategies: Intelligent caching mechanisms at edge nodes store frequently accessed data closer to users, significantly reducing access latency and improving content delivery reliability. Predictive caching algorithms analyze usage patterns to proactively cache content before requests occur. Distributed caching strategies coordinate multiple edge servers to maintain data consistency while maximizing cache hit rates, thereby enhancing overall system performance and reducing dependency on distant cloud resources.

- Fault tolerance and reliability enhancement mechanisms: Edge computing systems implement various fault tolerance mechanisms to maintain service continuity and reliability in the presence of failures. Redundancy strategies deploy backup resources and failover mechanisms to ensure uninterrupted service delivery. Real-time monitoring and anomaly detection systems identify potential issues before they impact performance. Recovery protocols enable rapid restoration of services following disruptions, while load balancing techniques distribute workloads to prevent single points of failure and maintain consistent low-latency performance.
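
The task offloading decision described at the start of this section can be sketched with a simple latency model: local execution costs compute time only, while offloading adds a network round trip and the time to upload the input. The function names and all numeric values are assumptions for illustration, and the model ignores energy, queueing, and result download time.

```python
def local_latency_s(cycles: float, local_hz: float) -> float:
    """Time to run the task on the device itself."""
    return cycles / local_hz


def offload_latency_s(input_bits: float, uplink_bps: float,
                      cycles: float, edge_hz: float, rtt_s: float = 0.0) -> float:
    """Round trip + upload of the input + execution on the edge server."""
    return rtt_s + input_bits / uplink_bps + cycles / edge_hz


def should_offload(cycles: float, local_hz: float, input_bits: float,
                   uplink_bps: float, edge_hz: float, rtt_s: float = 0.0) -> bool:
    """Offload only when the modelled edge latency beats local execution."""
    return (offload_latency_s(input_bits, uplink_bps, cycles, edge_hz, rtt_s)
            < local_latency_s(cycles, local_hz))


if __name__ == "__main__":
    task = dict(cycles=1e9, local_hz=1e9, input_bits=8e6, edge_hz=10e9, rtt_s=0.01)
    print(should_offload(uplink_bps=20e6, **task))  # good link
    print(should_offload(uplink_bps=2e6, **task))   # congested link
```

The same task flips from "offload" to "run locally" when the uplink degrades, which is why offloading policies need current network measurements rather than static rules.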

02 Network architecture and communication protocols

Optimized network architectures and communication protocols are essential for reducing latency in edge computing environments. This includes the design of hierarchical edge computing frameworks, multi-tier architectures, and efficient data transmission mechanisms. Protocol optimization focuses on reducing handshake overhead, minimizing packet loss, and ensuring reliable data delivery. Network slicing and quality of service mechanisms can be implemented to guarantee performance requirements for latency-sensitive applications.

03 Caching and content delivery strategies

Edge caching mechanisms improve both latency and reliability by storing frequently accessed data closer to end users. Intelligent caching policies predict content popularity and pre-fetch data to edge nodes, reducing retrieval time and network congestion. Content delivery strategies include distributed storage systems, data replication across multiple edge servers, and cache replacement algorithms that optimize hit rates. These approaches minimize data access latency while ensuring content availability and system reliability.

04 Fault tolerance and redundancy mechanisms

Reliability in edge computing is enhanced through fault tolerance mechanisms and redundancy strategies. These include backup server deployment, failover protocols, and error recovery procedures that maintain service continuity during node failures or network disruptions. Redundant data storage and processing capabilities ensure that critical tasks can be completed even when individual edge nodes become unavailable. Health monitoring systems detect anomalies and trigger automatic recovery processes to minimize service interruption.

05 Predictive analytics and machine learning optimization

Machine learning and predictive analytics techniques are applied to optimize edge computing performance by forecasting network conditions, user behavior, and resource demands. These methods enable proactive resource provisioning, adaptive task scheduling, and intelligent routing decisions that reduce latency. Prediction models analyze historical data patterns to anticipate congestion, optimize cache placement, and improve overall system reliability. Reinforcement learning algorithms continuously adapt strategies based on real-time feedback to maintain optimal performance.
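
As a deliberately simple stand-in for the learned predictors described above, the sketch below forecasts link latency with an exponentially weighted moving average (EWMA) and flags expected congestion when the estimate crosses a threshold. The class name, the smoothing factor, and the threshold are illustrative assumptions; a production system would likely use a richer model.

```python
from typing import Optional


class EwmaLatencyPredictor:
    """Smooths latency samples and flags likely congestion."""

    def __init__(self, alpha: float = 0.2, threshold_ms: float = 20.0):
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha                  # weight given to the newest sample
        self.threshold_ms = threshold_ms    # hypothetical congestion threshold
        self.estimate_ms: Optional[float] = None

    def observe(self, sample_ms: float) -> float:
        """Fold a new measurement into the running estimate and return it."""
        if self.estimate_ms is None:
            self.estimate_ms = sample_ms
        else:
            self.estimate_ms = (self.alpha * sample_ms
                                + (1 - self.alpha) * self.estimate_ms)
        return self.estimate_ms

    def congestion_expected(self) -> bool:
        return self.estimate_ms is not None and self.estimate_ms > self.threshold_ms


if __name__ == "__main__":
    predictor = EwmaLatencyPredictor(alpha=0.5, threshold_ms=20.0)
    for sample in (10.0, 20.0, 40.0):
        print(predictor.observe(sample), predictor.congestion_expected())
```

A larger `alpha` reacts faster to congestion but is more easily misled by a single slow sample, which is a small-scale version of the same latency-reliability tension.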

Major Players in Edge Computing Infrastructure Market

The market for edge solutions that address the latency-reliability trade-off is evolving rapidly and remains in an early growth stage, driven by increasing demand for real-time processing and distributed applications. It shows significant expansion potential as enterprises seek to balance performance optimization with system dependability. Technology maturity varies considerably across market players, with established infrastructure giants like Intel, Qualcomm, and Samsung Electronics leading in hardware optimization and processing capabilities. Telecommunications leaders including Ericsson, NTT Docomo, and China Unicom drive network infrastructure development, while enterprise solution providers such as IBM, VMware, and Microsoft Technology Licensing advance software-defined approaches. Academic institutions like MIT and Southeast University contribute foundational research, indicating a strong innovation pipeline. The competitive landscape shows convergence between traditional hardware manufacturers, cloud service providers, and telecom operators, suggesting that consolidation and standardization efforts are accelerating market maturation.

QUALCOMM, Inc.

Technical Solution: Qualcomm addresses edge computing latency-reliability trade-offs through their Snapdragon Edge AI platforms, implementing distributed intelligence across 5G networks. Their solution uses machine learning algorithms to predict network congestion and dynamically route computational tasks between edge nodes and mobile devices. The system employs adaptive quality-of-service mechanisms that can sacrifice non-critical processing accuracy to maintain low latency for time-sensitive applications, while ensuring reliability through multi-path redundancy and edge-to-edge communication protocols.

Strengths: Excellent mobile integration and 5G optimization. Weaknesses: Limited performance in high-compute server applications compared to x86 solutions.

Intel Corp.

Technical Solution: Intel's edge computing solution focuses on adaptive latency management through their Edge Insights platform, utilizing dynamic workload distribution across edge nodes. Their approach employs predictive analytics to anticipate network conditions and automatically adjust computation placement between local edge devices and cloud resources. The system implements intelligent caching mechanisms and uses hardware-accelerated processing with their Xeon processors and FPGA integration to minimize latency while maintaining service reliability through redundant processing paths and real-time failover mechanisms.

Strengths: Strong hardware integration and mature ecosystem. Weaknesses: Higher power consumption and cost compared to ARM-based solutions.

Core Technologies for Edge System Performance Balance

Jitter-less distributed function-as-a-service using flavor clustering

Patent | Active | US20210021485A1

Innovation

- Jitter-less distributed FaaS is implemented through flavor clustering: FaaS functions are mapped to underlying hardware architectures that expose quality-of-service (QoS) control points, a jitter controller enforces bounded latency, and network resources are allocated to create a jitter-less Software Defined Wide Area Network (SD-WAN), giving deterministic execution across multiple edge nodes.

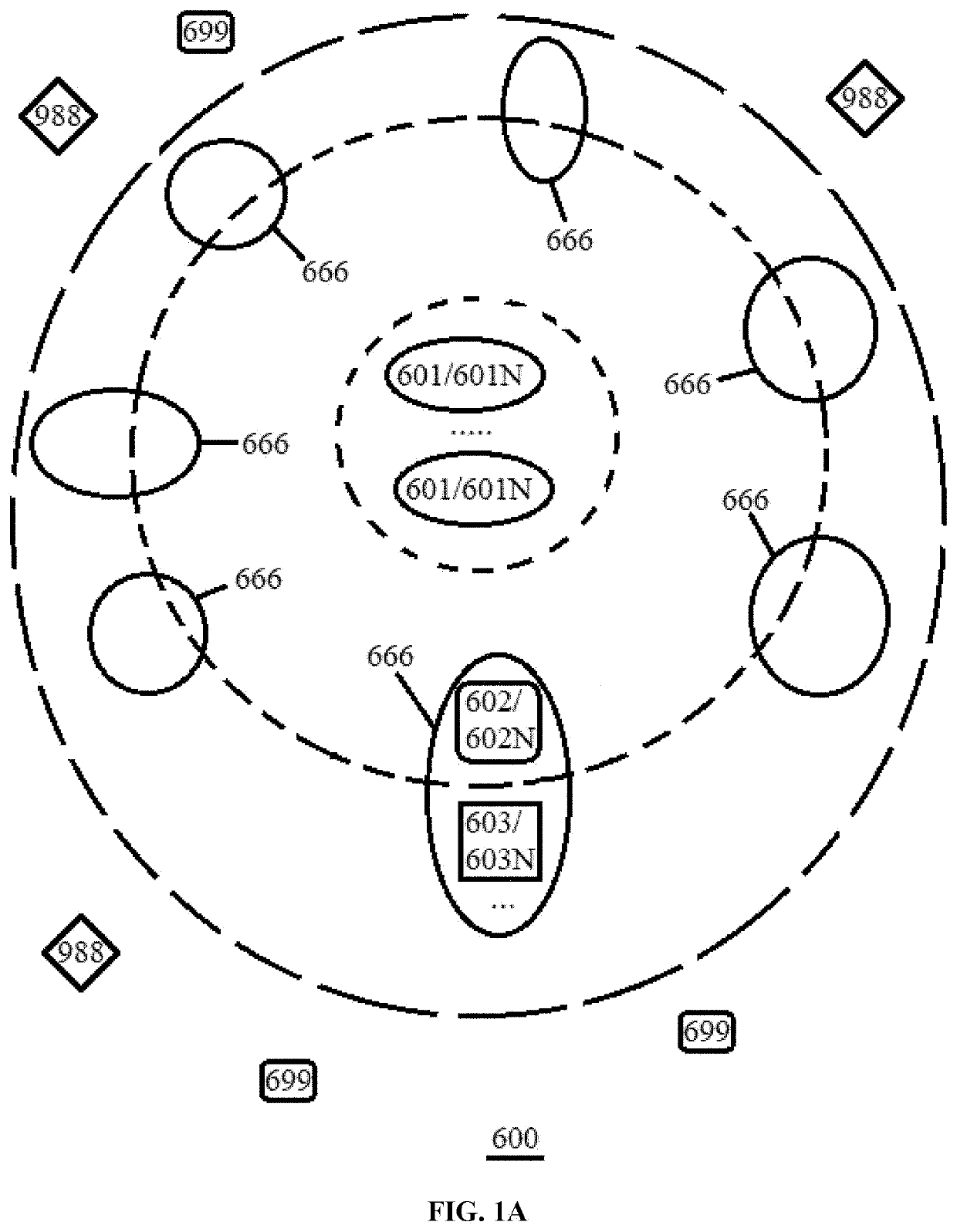

Method of scaling reliability of computing network

Patent | Active | US20210336839A1

Innovation

- A hierarchical computing network is proposed, where multiple service nodes are organized into layers with redundancy and incentives for participants to share spare capacity, allowing for linear scaling of uptime and reliability, and enabling end-users to choose service levels based on their needs.
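
One way to read "linear scaling" of reliability with redundancy is in terms of "nines" of availability. The short derivation below is a textbook argument under the assumption of independent node failures; it is not taken from the patent itself, and the symbols a, u, n, and N are introduced here for illustration.

```latex
% Assumption: each of n redundant nodes is up with probability a,
% failures are independent, and the service is up if any node is up.
u = 1 - a, \qquad A_n = 1 - u^{n}

% Define the number of "nines" of an availability A as
N(A) = -\log_{10}(1 - A)

% Then redundancy multiplies the nines linearly:
N(A_n) = -\log_{10}\!\left(u^{n}\right) = n \cdot N(a)

% Example: a = 0.99 (two nines), n = 3
% A_3 = 1 - (10^{-2})^{3} = 1 - 10^{-6} = 0.999999 \quad (\text{six nines})
```

In practice, correlated failures such as a shared power feed or a common software bug break the independence assumption, so real gains are smaller than this bound suggests.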

Network Standards and Edge Computing Compliance

Network standards play a crucial role in defining the operational framework for edge computing systems, particularly when addressing the fundamental trade-offs between latency and reliability in distributed architectures. The IEEE 802.11 family of standards, including Wi-Fi 6 (802.11ax) and the emerging Wi-Fi 7 (802.11be), defines the physical-layer and MAC-layer mechanisms for wireless edge connectivity, and those mechanisms bound the latency and reliability that a wireless edge deployment can achieve.

The 5G New Radio (NR) specifications define Ultra-Reliable Low-Latency Communication (URLLC), introduced in 3GPP Release 15 and enhanced in Releases 16 and 17, which targets user-plane latency on the order of 1 ms with 99.999% reliability for mission-critical edge applications. Meeting these targets pushes system architects toward specific redundancy mechanisms and failover protocols, which in turn affects the latency-reliability balance.

Edge computing compliance requirements vary significantly across different network standards. The ETSI Multi-access Edge Computing (MEC) framework defines standardized APIs and service interfaces that must maintain consistent performance metrics across distributed nodes. Compliance with these standards often necessitates additional protocol overhead, introducing latency penalties while enhancing system interoperability and reliability.

Network Function Virtualization (NFV) standards, particularly ETSI NFV-SOL specifications, establish mandatory orchestration and management protocols for edge deployments. These compliance requirements introduce standardized monitoring and reporting mechanisms that consume computational resources and network bandwidth, creating measurable impacts on both latency performance and system reliability metrics.

Guidance from the Industrial Internet Consortium (IIC), including its testbed work, recommends specific security and communication protocols for industrial edge computing applications. Following this guidance requires implementation of encrypted communication channels and authenticated device interactions, adding cryptographic processing delays while significantly improving system security and operational reliability.

Time-Sensitive Networking standards such as IEEE 802.1CB Frame Replication and Elimination for Reliability (FRER) specifically address the latency-reliability trade-off by defining standardized redundant transmission mechanisms: frames are replicated over disjoint paths and duplicates are discarded at the receiving end. These protocols enable deterministic network behavior but require careful configuration to balance the additional network overhead against improved fault tolerance capabilities in distributed edge computing environments.
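
The idea behind frame replication and elimination can be sketched in a few lines: the sender tags each frame with a sequence number and sends a copy on every path, and the receiver forwards the first copy it sees and drops later ones. This is a simplified illustration with hypothetical names and a bounded history window; it is not the recovery algorithm specified in IEEE 802.1CB.

```python
from collections import deque
from typing import Deque, Iterable, List, Set, Tuple


class DuplicateEliminator:
    """Accepts the first copy of each sequence number, drops the rest."""

    def __init__(self, history: int = 64):
        self._history = history           # how many recent sequence numbers to remember
        self._seen: Set[int] = set()
        self._order: Deque[int] = deque()

    def accept(self, seq: int) -> bool:
        if seq in self._seen:
            return False                  # duplicate arriving via another path
        self._seen.add(seq)
        self._order.append(seq)
        if len(self._order) > self._history:
            self._seen.discard(self._order.popleft())  # bound memory use
        return True


def deliver(arrivals: Iterable[Tuple[int, str]],
            eliminator: DuplicateEliminator) -> List[Tuple[int, str]]:
    """Filter frames, given in arrival order, down to one copy each."""
    return [(seq, data) for seq, data in arrivals if eliminator.accept(seq)]


if __name__ == "__main__":
    # Path A lost frame 2 and path B lost frame 3; the merged stream is still complete.
    merged = [(1, "a"), (1, "a"), (2, "b"), (3, "c"), (4, "d"), (4, "d")]
    print(deliver(merged, DuplicateEliminator()))
```

The cost of this reliability is visible in the example: every frame consumes bandwidth on both paths even though only one copy is delivered.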

Security Implications in Distributed Edge Architectures

The distributed nature of edge computing architectures introduces significant security vulnerabilities that directly impact the fundamental trade-offs between latency and reliability. Edge nodes, positioned at network peripheries, present expanded attack surfaces that traditional centralized security models cannot adequately address. These distributed endpoints often operate with limited computational resources, constraining the implementation of robust security mechanisms that might otherwise enhance system reliability.

Authentication and authorization mechanisms in edge environments face unique challenges when balancing performance requirements with security rigor. Lightweight authentication protocols designed to minimize latency may compromise the thoroughness of identity verification, potentially allowing unauthorized access to critical edge resources. Conversely, comprehensive security validation processes can introduce substantial delays that undermine the low-latency advantages that edge computing promises to deliver.
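
To make the trade-off concrete, the following Python sketch shows one possible lightweight scheme: an HMAC-signed token that an edge node can verify locally, with no round trip to a central identity service. The token layout, the `SECRET` value, and the function names are assumptions for illustration and do not follow any particular standard.

```python
import hashlib
import hmac
import time
from typing import Optional

SECRET = b"demo-shared-secret"  # hypothetical key shared by issuer and edge node


def issue_token(device_id: str, ttl_s: int, now: Optional[float] = None) -> str:
    """Create a token of the form '<device_id>:<expiry>:<hex tag>'."""
    now = time.time() if now is None else now
    payload = f"{device_id}:{int(now) + ttl_s}"
    tag = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}:{tag}"


def verify_token(token: str, now: Optional[float] = None) -> bool:
    """Verify signature and expiry locally; no network call is made."""
    now = time.time() if now is None else now
    try:
        device_id, expires, tag = token.rsplit(":", 2)
        expiry = int(expires)
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SECRET, f"{device_id}:{expires}".encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(tag.encode(), expected.encode()):
        return False
    return now < expiry


token = issue_token("sensor-7", ttl_s=60, now=1000.0)
print(verify_token(token, now=1030.0))  # True
print(verify_token(token, now=1061.0))  # False (expired)
```

Local verification keeps the check fast, but a revoked device stays trusted until its token expires. A shorter `ttl_s` narrows that window at the cost of more frequent token issuance.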

Data integrity and confidentiality concerns become particularly acute in distributed edge architectures where information traverses multiple network segments and processing nodes. End-to-end encryption, while essential for protecting sensitive data, introduces computational overhead and transmission delays that can significantly impact system responsiveness. The challenge intensifies when considering dynamic key management across geographically dispersed edge nodes, where synchronization delays can create security gaps or performance bottlenecks.
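
One way to reason about key synchronization delays is a rotation scheme with a grace window, sketched below in Python. This is an assumption-laden illustration rather than a recommended design. Because the standard library has no authenticated encryption, the sketch uses HMAC tags to stand in for protected messages. `MASTER_KEY`, `ROTATION_PERIOD_S`, and the `grace_epochs` parameter are hypothetical.

```python
import hashlib
import hmac
from typing import Tuple

ROTATION_PERIOD_S = 300          # hypothetical: keys rotate every five minutes
MASTER_KEY = b"demo-master-key"  # hypothetical secret provisioned on every node


def epoch_of(t: float) -> int:
    """Map a timestamp to its key epoch."""
    return int(t // ROTATION_PERIOD_S)


def epoch_key(epoch: int) -> bytes:
    """Derive the per-epoch key from the master key."""
    return hmac.new(MASTER_KEY, str(epoch).encode(), hashlib.sha256).digest()


def sign(message: bytes, t: float) -> Tuple[int, bytes]:
    """Tag a message with the key of the sender's current epoch."""
    epoch = epoch_of(t)
    return epoch, hmac.new(epoch_key(epoch), message, hashlib.sha256).digest()


def verify(message: bytes, epoch: int, tag: bytes, t: float, grace_epochs: int = 1) -> bool:
    """Accept tags from the current epoch or up to grace_epochs earlier ones."""
    current = epoch_of(t)
    if not (current - grace_epochs <= epoch <= current):
        return False
    expected = hmac.new(epoch_key(epoch), message, hashlib.sha256).digest()
    return hmac.compare_digest(tag, expected)


epoch, tag = sign(b"reading=21.5", t=290.0)                           # signed in epoch 0
print(verify(b"reading=21.5", epoch, tag, t=310.0))                   # True: within grace window
print(verify(b"reading=21.5", epoch, tag, t=310.0, grace_epochs=0))   # False: strict rotation
print(verify(b"reading=21.5", epoch, tag, t=610.0))                   # False: too old
```

The `grace_epochs` setting captures the tension described above: a value of zero rejects messages that were in flight during rotation, while a larger value keeps old keys usable for longer.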

Network segmentation and isolation strategies in edge deployments must carefully balance security containment with operational flexibility. Overly restrictive network policies can impede the rapid data exchange necessary for maintaining low latency, while permissive configurations may enable lateral movement of security threats across the distributed infrastructure. This tension becomes particularly evident during fault recovery scenarios, where security protocols may conflict with rapid failover mechanisms designed to maintain service reliability.

The proliferation of Internet of Things devices and sensors connected to edge nodes creates additional security complexity. These endpoints often lack sophisticated security capabilities, yet their compromise can cascade through the distributed system, affecting both data integrity and service availability. Managing security updates and patches across thousands of distributed devices presents logistical challenges that can leave systems vulnerable for extended periods, directly impacting overall reliability metrics.
