How to Optimize Neural Networks for Image Recognition

FEB 27, 2026 · 9 MIN READ

Neural Network Image Recognition Background and Objectives

Neural network optimization for image recognition has emerged as one of the most transformative technological domains in artificial intelligence, fundamentally reshaping how machines perceive and interpret visual information. The field traces its origins to early perceptron models in the 1950s, evolving through decades of theoretical breakthroughs and computational advances to reach today's sophisticated deep learning architectures.

The development of neural networks for image recognition passed through several pivotal moments, beginning with the convolutional neural networks (CNNs) introduced by Yann LeCun in the late 1980s. The breakthrough arrived in 2012, when AlexNet demonstrated unprecedented performance on the ImageNet dataset and ignited widespread interest in deep learning. Subsequent innovations, including VGG, ResNet, and more recently Vision Transformers, have continued to push the boundaries of what machines can achieve in visual understanding tasks.

Current technological evolution trends indicate a shift toward more efficient architectures that balance accuracy with computational requirements. The industry is witnessing growing emphasis on mobile-optimized models, real-time processing capabilities, and energy-efficient designs. Emerging paradigms such as neural architecture search, pruning techniques, and quantization methods are becoming increasingly important for practical deployment scenarios.

The primary technical objectives driving neural network optimization for image recognition center around achieving superior accuracy while maintaining computational efficiency. Organizations seek to develop models that can process high-resolution images with minimal latency, operate effectively on resource-constrained devices, and generalize well across diverse visual domains. Key performance metrics include classification accuracy, inference speed, memory footprint, and power consumption.

Strategic goals encompass enabling real-world applications across industries including autonomous vehicles, medical imaging, manufacturing quality control, and consumer electronics. The technology aims to bridge the gap between laboratory performance and practical deployment, ensuring robust operation in varied environmental conditions and lighting scenarios.

Future aspirations involve developing neural networks that can approach human-level visual understanding while operating within practical computational constraints. This includes advancing few-shot learning capabilities, improving robustness against adversarial attacks, and creating more interpretable models that can explain their decision-making processes. The ultimate objective is establishing neural network systems that seamlessly integrate into existing technological ecosystems while delivering consistent, reliable image recognition performance across diverse application domains.

Market Demand for Optimized Image Recognition Systems

The global image recognition market has experienced unprecedented growth driven by the proliferation of artificial intelligence applications across diverse industries. Healthcare organizations increasingly rely on optimized neural networks for medical imaging diagnostics, where enhanced accuracy and reduced processing time directly impact patient outcomes. The demand for real-time analysis of X-rays, MRIs, and CT scans has created substantial market opportunities for advanced image recognition solutions.

Autonomous vehicle manufacturers represent another critical demand driver, requiring neural networks capable of processing visual data with exceptional speed and precision. These systems must identify pedestrians, traffic signs, road conditions, and obstacles within milliseconds while maintaining high accuracy rates under varying environmental conditions. The stringent safety requirements have intensified the need for optimized neural architectures that can operate reliably in resource-constrained automotive computing environments.

Security and surveillance sectors demonstrate robust demand for optimized image recognition systems capable of facial recognition, object detection, and behavioral analysis. Government agencies, airports, and commercial facilities seek solutions that can process multiple video streams simultaneously while maintaining accuracy across diverse lighting conditions and camera angles. The growing emphasis on public safety has accelerated adoption of advanced neural network optimization techniques.

Manufacturing industries increasingly implement computer vision systems for quality control, defect detection, and automated inspection processes. These applications require neural networks optimized for specific industrial environments, capable of identifying minute defects in products ranging from semiconductors to automotive components. The push toward Industry 4.0 has created substantial demand for customized image recognition solutions.

Consumer electronics manufacturers integrate optimized image recognition capabilities into smartphones, smart cameras, and IoT devices. The constraint of limited computational resources in mobile devices has driven demand for neural network optimization techniques that maintain performance while reducing power consumption and memory requirements.

E-commerce platforms utilize image recognition for product search, recommendation systems, and inventory management. The volume of visual content processed daily necessitates highly optimized neural networks capable of handling massive datasets efficiently while delivering accurate results for enhanced user experiences.

Current State and Challenges in Neural Network Optimization

Neural network optimization for image recognition has reached a sophisticated level of maturity, yet it still faces significant technical barriers that limit further advancement. Current state-of-the-art architectures such as Vision Transformers, EfficientNets, and ResNet variants perform remarkably well across image classification benchmarks, matching or exceeding reported human-level top-5 accuracy on datasets like ImageNet. However, these achievements come with substantial computational overhead and energy consumption, which pose practical deployment challenges.

The computational complexity remains one of the most pressing constraints in neural network optimization. Modern deep learning models for image recognition typically require millions to billions of parameters, demanding extensive GPU resources for both training and inference. This computational burden translates directly into increased operational costs and energy consumption, making deployment particularly challenging for edge computing scenarios and resource-constrained environments.
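
To make the scale of "millions to billions of parameters" concrete, the following is a minimal sketch of how the parameter count and multiply-accumulate (MAC) count of a single standard convolution layer can be estimated. The function name `conv2d_cost` and the example layer shape are illustrative assumptions, not figures taken from any specific model discussed here.

```python
def conv2d_cost(in_channels, out_channels, kernel_size, out_height, out_width, bias=True):
    """Estimate parameters and MACs for one standard (dense) 2D convolution.

    Assumes a square kernel and no grouping; out_height/out_width are the
    spatial size of the layer's output feature map.
    """
    weights_per_filter = kernel_size * kernel_size * in_channels
    params = weights_per_filter * out_channels + (out_channels if bias else 0)
    # Every output position of every output channel needs one dot product
    # of length weights_per_filter.
    macs = weights_per_filter * out_channels * out_height * out_width
    return params, macs


if __name__ == "__main__":
    # Hypothetical first layer: 3x3 kernel, RGB input, 64 filters, 224x224 output.
    params, macs = conv2d_cost(3, 64, 3, 224, 224)
    print(f"parameters: {params:,}  MACs per image: {macs:,}")
```

Summing this estimate over all layers gives a rough first-order view of why inference cost grows quickly with resolution and channel width, even before memory traffic is considered.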

Memory limitations present another critical bottleneck in current optimization efforts. Large-scale neural networks often exceed available GPU memory during training, necessitating complex memory management strategies such as gradient checkpointing, model parallelism, and mixed-precision training. These workarounds introduce additional complexity and may compromise training stability or convergence speed.

Training efficiency challenges persist despite advances in optimization algorithms and hardware acceleration. Current neural networks for image recognition require extensive training periods, often spanning days or weeks on high-performance computing clusters. The training process remains sensitive to hyperparameter selection, initialization strategies, and learning rate scheduling, requiring significant expertise and computational resources for optimal configuration.
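
As one illustration of the learning rate scheduling mentioned above, here is a minimal sketch of a linear warmup followed by cosine decay, a commonly used schedule for image models. The function name `learning_rate` and all default values (`base_lr`, `warmup_steps`, `min_lr`) are assumptions chosen for the example, not recommended settings.

```python
import math


def learning_rate(step, total_steps, base_lr=0.1, warmup_steps=500, min_lr=0.0):
    """Linear warmup to base_lr, then cosine decay toward min_lr."""
    if step < warmup_steps:
        # Ramp up linearly so early updates with a fresh initialization stay small.
        return base_lr * (step + 1) / warmup_steps
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min(1.0, (step - warmup_steps) / decay_steps)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    for step in (0, 499, 5000, 10000):
        print(step, round(learning_rate(step, total_steps=10000), 5))
```

The warmup length and peak rate are themselves hyperparameters, which is part of why configuration remains sensitive in practice.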

Generalization and robustness issues continue to plague neural network optimization efforts. While models achieve excellent performance on standard benchmarks, they often struggle with domain shift, adversarial attacks, and out-of-distribution samples. This limitation restricts their reliability in real-world applications where input conditions may vary significantly from training data distributions.

The interpretability and explainability gap represents a fundamental challenge in neural network optimization. Current architectures operate as black boxes, making it difficult to understand decision-making processes or identify failure modes. This opacity complicates debugging, optimization, and regulatory compliance in critical applications such as medical imaging or autonomous systems.

Hardware-software co-optimization remains an emerging challenge as specialized accelerators and neuromorphic computing platforms require tailored optimization strategies that differ significantly from traditional GPU-based approaches.

Current Neural Network Optimization Solutions

01 Neural network architecture optimization and structure design

This category focuses on optimizing the structure and architecture of neural networks to improve performance and efficiency. Techniques include designing novel network topologies, optimizing layer configurations, and developing specialized architectures for specific tasks. Methods involve automated architecture search, pruning redundant connections, and creating more efficient computational graphs to reduce complexity while maintaining or improving accuracy.
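
Pruning redundant connections, mentioned above, can be sketched in its simplest form as unstructured magnitude pruning: the weights with the smallest absolute values are set to zero. This is a minimal illustration on a flat list of weights; the function name `magnitude_prune` and the example values are hypothetical, and real pipelines usually fine-tune the model after pruning.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    num_to_drop = int(len(weights) * sparsity)
    if num_to_drop == 0:
        return list(weights)
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(order[:num_to_drop])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    layer = [0.5, -0.05, 0.8, 0.01, -0.3, 0.02]  # made-up weights
    print(magnitude_prune(layer, sparsity=0.5))
```

Unstructured sparsity like this reduces the number of nonzero weights, but it only translates into speed or energy savings on hardware and kernels that exploit sparsity.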

02 Training algorithm and learning rate optimization

This approach concentrates on improving the training process through advanced optimization algorithms and adaptive learning rate strategies. Techniques include gradient descent variants, momentum-based methods, and dynamic learning rate scheduling. The focus is on accelerating convergence, avoiding local minima, and improving the stability of the training process through better parameter update mechanisms and loss function optimization.

03 Hardware acceleration and computational efficiency

This category addresses optimization through hardware-specific implementations and computational efficiency improvements. Methods include leveraging specialized processors, parallel computing architectures, and memory optimization techniques. The focus is on reducing computational overhead, minimizing latency, and improving throughput through efficient resource utilization and hardware-software co-design strategies.

04 Model compression and quantization techniques

This approach focuses on reducing model size and computational requirements while preserving performance. Techniques include weight quantization, knowledge distillation, and low-rank factorization. The goal is to create lightweight models suitable for deployment on resource-constrained devices by reducing precision requirements, eliminating redundant parameters, and compressing model representations without significant accuracy loss.

05 Hyperparameter tuning and automated optimization

This category encompasses methods for automatically selecting and optimizing hyperparameters to improve neural network performance. Approaches include grid search, random search, Bayesian optimization, and evolutionary algorithms. The focus is on systematically exploring the hyperparameter space to find optimal configurations for batch size, regularization parameters, network depth, and other critical settings that affect model performance and generalization.
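
Of the approaches listed, random search is the simplest to sketch. The example below samples configurations from a small search space and keeps the one with the lowest validation loss. Everything here is an assumption for illustration: the search space, the function names, and in particular `toy_validation_loss`, which is a synthetic stand-in for actually training and evaluating a model.

```python
import math
import random


def sample_config(rng):
    """Draw one configuration; learning rate and weight decay are log-uniform."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -1),
        "batch_size": rng.choice([16, 32, 64, 128]),
        "weight_decay": 10 ** rng.uniform(-6, -2),
    }


def toy_validation_loss(cfg):
    # Hypothetical objective with its minimum near lr=1e-3, wd=1e-4, batch=64.
    # In a real search this would train a model and return its validation loss.
    return (
        (math.log10(cfg["learning_rate"]) + 3) ** 2
        + (math.log10(cfg["weight_decay"]) + 4) ** 2
        + 0.1 * abs(math.log2(cfg["batch_size"]) - 6)
    )


def random_search(objective, n_trials=50, seed=0):
    """Evaluate n_trials random configurations and return the best plus all trials."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        cfg = sample_config(rng)
        trials.append((objective(cfg), cfg))
    best = min(trials, key=lambda trial: trial[0])
    return best, trials


if __name__ == "__main__":
    (best_loss, best_cfg), _ = random_search(toy_validation_loss)
    print(best_loss, best_cfg)
```

Sampling the learning rate on a log scale is a deliberate choice: useful values typically span several orders of magnitude, so a uniform draw on the raw scale would waste most trials on the largest values.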

Key Players in Neural Network and Image Recognition Industry

The neural network optimization for image recognition field represents a mature and highly competitive landscape characterized by rapid technological advancement and substantial market growth. The industry has evolved from early-stage research to widespread commercial deployment, with global market size reaching billions annually and projected continued expansion driven by AI adoption across sectors. Technology maturity varies significantly among market participants, with established giants like Samsung Electronics, Huawei Technologies, and Microsoft Technology Licensing leading through extensive R&D investments and comprehensive AI platforms. Chinese companies including Tencent Technology and Beijing Sensetime Technology, along with South Korea's NAVER Corp, demonstrate strong capabilities in deep learning and computer vision applications. Traditional technology leaders such as Canon, Sony Semiconductor Solutions, and Panasonic Automotive Systems leverage decades of imaging expertise to optimize neural architectures. Academic institutions like Shanghai Jiao Tong University and Politecnico di Torino contribute fundamental research breakthroughs, while specialized firms like StradVision focus on domain-specific optimization for automotive applications, creating a diverse ecosystem spanning hardware acceleration, algorithm development, and industry-specific implementations.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung's optimization approach focuses on hardware-software co-design through their Exynos processors with integrated Neural Processing Units (NPUs) capable of delivering up to 26 TOPS of AI performance. They implement on-device model optimization techniques including 8-bit and 16-bit quantization, channel pruning, and layer fusion to maximize efficiency on mobile devices. Samsung develops custom memory architectures with Processing-in-Memory (PIM) technology to reduce data movement overhead during neural network inference. Their optimization framework includes adaptive batch sizing and dynamic voltage frequency scaling to balance performance and power consumption for image recognition applications.

Strengths: Advanced semiconductor manufacturing capabilities, integrated mobile ecosystem. Weaknesses: Limited software ecosystem compared to pure AI companies, focus primarily on consumer electronics applications.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed the Ascend AI processor series specifically optimized for neural network inference and training in image recognition tasks. Their approach includes model compression techniques such as pruning and quantization to reduce computational complexity while maintaining accuracy. The company implements knowledge distillation methods to transfer learning from large teacher models to smaller student models suitable for mobile deployment. Huawei's MindSpore framework provides automatic differentiation and graph optimization capabilities, enabling efficient neural network execution across different hardware platforms including their Kirin chipsets with dedicated Neural Processing Units (NPUs).

Strengths: Integrated hardware-software optimization, strong mobile AI capabilities. Weaknesses: Limited global market access due to trade restrictions, ecosystem constraints outside China.

Core Innovations in Neural Network Architecture Design

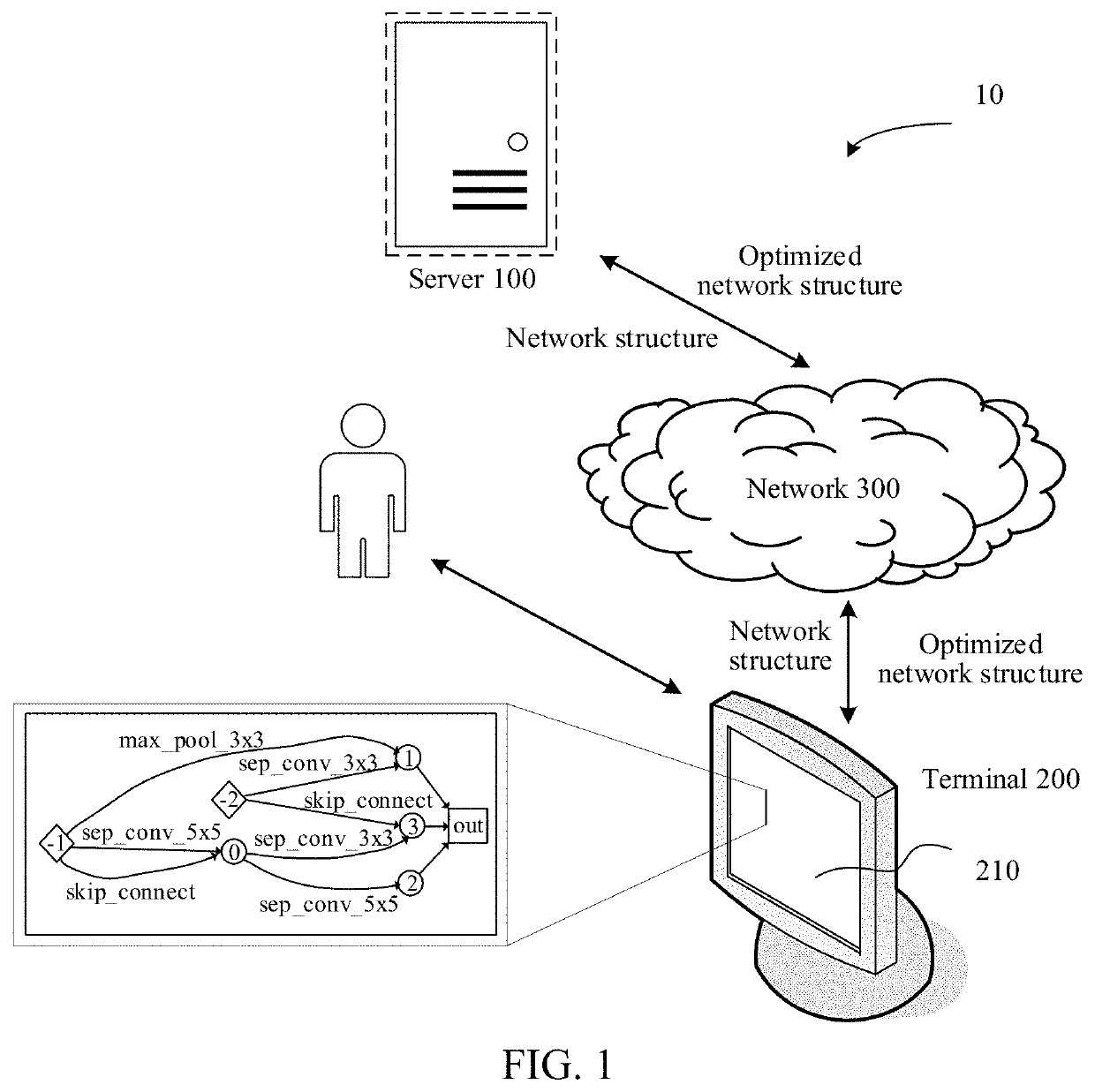

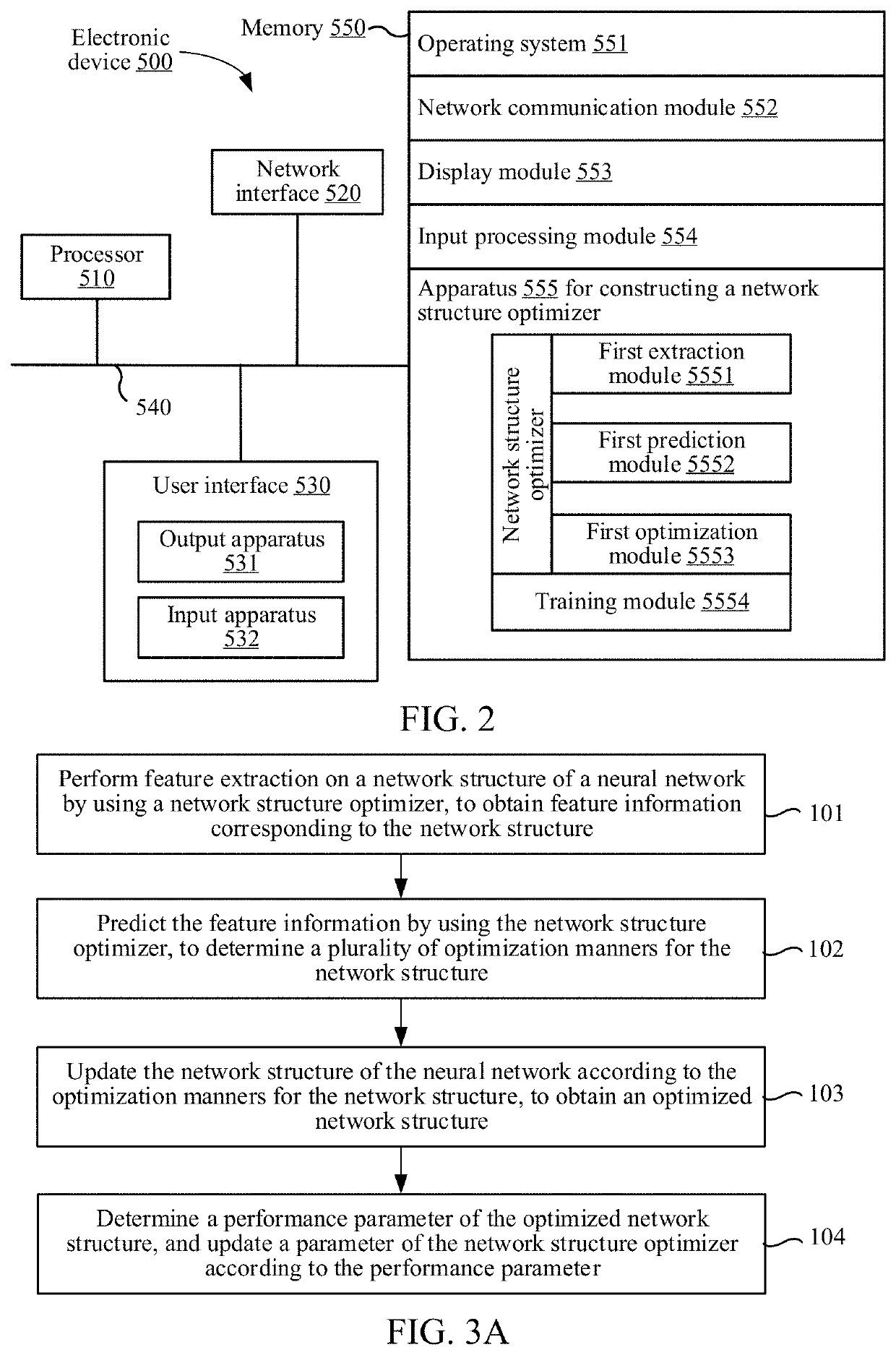

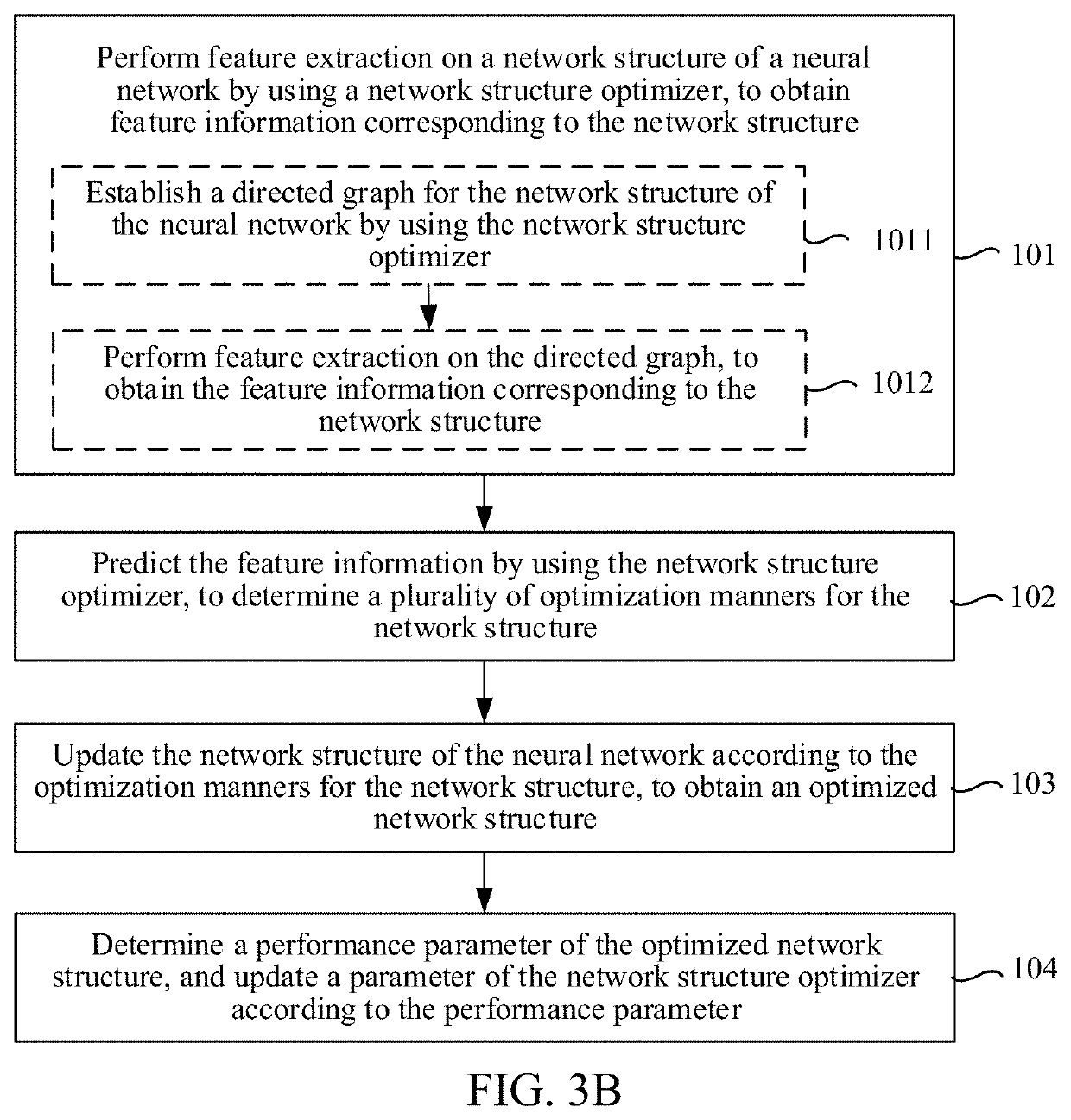

Method and apparatus for constructing network structure optimizer, and computer-readable storage medium

Patent (Pending): US20220044094A1

Innovation

- A network structure optimizer is employed to perform feature extraction, predict optimization manners, and update the network structure, removing redundant operations and optimizing the architecture without additional calculation costs.

Method for optimizing on-device neural network model by using sub-kernel searching module and device using the same

Patent: WO2021230463A1

Innovation

- The method employs a Sub-kernel Searching Module (SSM) to generate a small neural network model whose architecture is matched to the edge device's computing power and environment. The SSM identifies the device's constraints and generates state vectors for specific sub-kernels, which reduces the computational load and the number of weights and allows the sub-architectures to be improved continuously.

Data Privacy and Security in Neural Network Applications

Data privacy and security represent critical considerations in neural network applications for image recognition, particularly as these systems increasingly handle sensitive visual data across healthcare, surveillance, and personal device applications. The inherent vulnerability of neural networks to various attack vectors, combined with stringent regulatory requirements, necessitates comprehensive security frameworks that protect both training data and inference processes.

Privacy-preserving techniques have emerged as fundamental components in secure neural network deployment. Differential privacy mechanisms add calibrated noise to training datasets or model outputs, ensuring individual data points cannot be reverse-engineered from model behavior. Federated learning architectures enable distributed training across multiple devices without centralizing sensitive image data, allowing healthcare institutions or mobile device manufacturers to collaborate on model development while maintaining data sovereignty. Homomorphic encryption techniques permit computation on encrypted image data, though computational overhead remains a significant implementation challenge.
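
In neural network training, differential privacy is commonly applied to gradients rather than to raw images: each example's gradient is clipped to a maximum norm, and Gaussian noise calibrated to that norm is added before averaging (the DP-SGD recipe). The following is a minimal sketch of that single step on plain Python lists. The function name and the default `clip_norm` and `noise_multiplier` values are illustrative assumptions, and the sketch does not track the resulting privacy budget.

```python
import math
import random


def dp_average_gradient(per_example_grads, clip_norm=1.0, noise_multiplier=1.1, rng=None):
    """Clip each per-example gradient, sum, add Gaussian noise, then average."""
    rng = rng or random.Random()
    dim = len(per_example_grads[0])
    total = [0.0] * dim
    for grad in per_example_grads:
        norm = math.sqrt(sum(g * g for g in grad))
        # Scale down only gradients whose L2 norm exceeds clip_norm.
        scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
        for i, g in enumerate(grad):
            total[i] += g * scale
    # Noise standard deviation is proportional to the clipping bound,
    # which is the sensitivity of the summed gradient to any one example.
    sigma = noise_multiplier * clip_norm
    batch_size = len(per_example_grads)
    return [(t + rng.gauss(0.0, sigma)) / batch_size for t in total]


if __name__ == "__main__":
    grads = [[3.0, 4.0], [0.3, 0.4]]  # made-up per-example gradients
    print(dp_average_gradient(grads, rng=random.Random(0)))
```

Clipping bounds how much any single image can influence the update, which is what allows the added noise to mask an individual's contribution.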

Adversarial attacks pose substantial security risks to image recognition systems, with techniques like adversarial examples capable of fooling neural networks through imperceptible pixel modifications. Gradient-based attacks, including Fast Gradient Sign Method and Projected Gradient Descent, can systematically generate malicious inputs that cause misclassification. Defense mechanisms encompass adversarial training, where models learn from adversarial examples during training, and certified defense approaches that provide mathematical guarantees against specific attack types.
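
The Fast Gradient Sign Method can be sketched on a model small enough that the input gradient has a closed form. The example below uses a logistic regression classifier as a stand-in for an image model: for binary cross-entropy loss, the gradient with respect to the input is (p - y) * w, and FGSM moves each input value by epsilon in the direction of that gradient's sign. The weights, input, and epsilon are made-up values for illustration; attacking a deep network would use automatic differentiation instead.

```python
import math


def predict(weights, bias, x):
    """Probability of class 1 under a logistic regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fgsm(weights, bias, x, label, epsilon=0.1):
    """Return x perturbed by epsilon * sign(dLoss/dx), clipped to [0, 1]."""
    p = predict(weights, bias, x)
    # Gradient of binary cross-entropy with respect to the input.
    grad = [(p - label) * w for w in weights]
    signs = [(g > 0) - (g < 0) for g in grad]
    return [min(1.0, max(0.0, xi + epsilon * s)) for xi, s in zip(x, signs)]


if __name__ == "__main__":
    w, b = [2.0, -3.0, 1.0], 0.0   # hypothetical trained weights
    x, y = [0.8, 0.2, 0.5], 1      # hypothetical normalized pixel values, true label 1
    x_adv = fgsm(w, b, x, y)
    print("clean confidence:", predict(w, b, x))
    print("adversarial confidence:", predict(w, b, x_adv))
```

Adversarial training, mentioned above as a defense, amounts to generating inputs like `x_adv` during training and including them in the loss.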

Model extraction and membership inference attacks represent emerging threat vectors where attackers attempt to steal proprietary neural network architectures or determine whether specific images were used in training datasets. These attacks exploit model confidence scores and prediction patterns, potentially exposing intellectual property and violating individual privacy rights. Countermeasures include output perturbation, query limiting, and ensemble-based obfuscation techniques.

Secure multi-party computation protocols enable collaborative neural network training and inference across untrusted parties, utilizing cryptographic techniques to ensure data confidentiality throughout the computational process. These approaches, while computationally intensive, provide strong security guarantees for applications requiring absolute data protection, such as medical imaging analysis involving multiple healthcare providers.

Energy Efficiency and Sustainability in Neural Computing

Energy efficiency has emerged as a critical consideration in neural network optimization for image recognition, driven by the exponential growth in computational demands and environmental concerns. Modern deep learning models for computer vision tasks consume substantial amounts of energy during both training and inference phases, with large-scale models requiring thousands of kilowatt-hours for training alone. This energy consumption translates directly into carbon emissions and operational costs, making sustainability a paramount concern for organizations deploying image recognition systems at scale.

The pursuit of energy-efficient neural architectures has led to significant innovations in model design and optimization techniques. Knowledge distillation enables the creation of smaller, more efficient student networks that maintain competitive accuracy while consuming significantly fewer computational resources. Pruning methodologies systematically remove redundant parameters and connections, reducing both memory footprint and energy consumption without substantial performance degradation. These approaches can achieve energy reductions of 50-90% compared to their full-scale counterparts.
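
Knowledge distillation is usually implemented as a combined loss: the student is trained to match the teacher's temperature-softened output distribution while still fitting the true label. The sketch below computes that loss for a single example, following the common formulation in which the soft term is scaled by the square of the temperature. The function names and the default `temperature` and `alpha` values are illustrative assumptions.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with optional temperature scaling."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label, temperature=4.0, alpha=0.5):
    """Weighted sum of soft-target KL divergence and hard-label cross-entropy."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student) on the softened distributions.
    soft = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    # Standard cross-entropy against the ground-truth class at temperature 1.
    hard = -math.log(softmax(student_logits)[label])
    return alpha * temperature ** 2 * soft + (1 - alpha) * hard


if __name__ == "__main__":
    teacher = [5.0, 1.0, 0.0]   # made-up logits from a large model
    student = [2.0, 1.5, 0.5]   # made-up logits from a small model
    print(distillation_loss(student, teacher, label=0))
```

A higher temperature exposes more of the teacher's relative preferences among wrong classes, which is the extra signal the smaller student learns from.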

Quantization represents another pivotal strategy for enhancing energy efficiency in neural computing. By reducing the precision of weights and activations from 32-bit floating-point to 8-bit or even binary representations, quantization dramatically decreases memory bandwidth requirements and computational complexity. This reduction in numerical precision directly correlates with lower energy consumption in both storage and arithmetic operations, making it particularly valuable for edge deployment scenarios.
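
The move from 32-bit floating-point to 8-bit integers can be sketched with symmetric per-tensor quantization, one of the simplest schemes: a single scale maps the largest absolute weight to 127, and each weight is rounded to the nearest integer step. The function names and sample weights below are assumptions for illustration; production toolchains add calibration, per-channel scales, and activation quantization.

```python
def quantize_symmetric_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [-0.62, -0.1, 0.0, 0.27, 0.9]  # made-up layer weights
    q, scale = quantize_symmetric_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", q)
    print("scale:", scale, "worst-case error:", worst)
```

Storing each weight in 8 bits instead of 32 cuts weight memory by a factor of four, and the rounding error per weight is bounded by half of one quantization step (`scale / 2`).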

Hardware-software co-design is also reshaping energy efficiency in neural network deployment. Specialized accelerators such as neuromorphic chips and dedicated AI processors are designed to execute neural network operations at low energy per operation. On workloads that match their design, these custom silicon solutions can achieve orders-of-magnitude improvements in energy efficiency over general-purpose processors while maintaining the computational throughput required for real-time image recognition.

Sustainability extends beyond immediate energy consumption to the entire lifecycle of neural computing systems, including hardware manufacturing, data center infrastructure, cooling requirements, and end-of-life disposal. Green computing initiatives promote the use of renewable energy sources for training large-scale models and the adoption of carbon-aware scheduling algorithms, which run training jobs during periods when grid carbon intensity is low.
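Carbon-aware scheduling, mentioned above, can be sketched as choosing the start time that minimizes average grid carbon intensity over the job's duration. The example below is a simplified assumption of such a scheduler: the function name `best_start_hour` and the hourly forecast values are hypothetical, and a real system would pull forecasts from a grid data provider and account for deadlines and job preemption.

```python
def best_start_hour(forecast, job_hours):
    """Find the contiguous window with the lowest mean carbon intensity.

    forecast  -- hourly grid carbon intensity forecast (gCO2/kWh)
    job_hours -- how many consecutive hours the training job needs
    Returns (start_index, mean_intensity).
    """
    if job_hours <= 0 or job_hours > len(forecast):
        raise ValueError("job_hours must be between 1 and len(forecast)")
    window = sum(forecast[:job_hours])
    best_start, best_sum = 0, window
    # Slide the window one hour at a time, updating the running sum.
    for start in range(1, len(forecast) - job_hours + 1):
        window += forecast[start + job_hours - 1] - forecast[start - 1]
        if window < best_sum:
            best_start, best_sum = start, window
    return best_start, best_sum / job_hours


# Made-up 8-hour forecast in gCO2/kWh; the job needs 3 consecutive hours.
forecast = [420, 390, 310, 180, 150, 170, 260, 400]
start, mean_intensity = best_start_hour(forecast, job_hours=3)
print(start, round(mean_intensity, 1))
```

The sliding-window sum keeps the search linear in the forecast length, which matters little at hourly resolution but keeps the sketch usable for finer-grained forecasts.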

Emerging paradigms such as federated learning and edge computing contribute to sustainability by reducing the need for centralized data processing and minimizing data transmission requirements. These distributed approaches enable image recognition capabilities to operate closer to data sources, reducing network energy consumption and improving overall system efficiency while maintaining privacy and security standards.
