Inverse Design vs Analytical Approaches: Reliability

APR 22, 2026 · 9 MIN READ

Inverse Design vs Analytical Methods Background and Objectives

Design methodologies in engineering and materials science have shifted from traditional analytical approaches toward inverse design strategies. Analytical methods, rooted in centuries of scientific development, follow a forward process: designers specify material properties or geometric parameters and predict the resulting performance through established physical laws and mathematical models. This conventional approach has dominated engineering practice since the Industrial Revolution, providing predictable and well-understood pathways to solution development.

Inverse design departs from this traditional framework by using computational algorithms to work backward from desired performance specifications to the material compositions, structures, or system configurations that meet them. This methodology uses advanced optimization techniques, machine learning algorithms, and high-throughput computational screening to explore design spaces too large to investigate through conventional means. The approach has gained significant momentum in the past two decades, particularly with advances in artificial intelligence and computational power.
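To make the forward and backward directions concrete, here is a minimal sketch using a textbook case that is not taken from the report: the tip deflection of an end-loaded cantilever with a rectangular cross-section. The function names, load, dimensions, and target deflection are all illustrative assumptions. The forward model predicts deflection from a chosen height; the inverse step searches for the height that meets a target deflection.

```python
def tip_deflection(height_m, force_n=100.0, length_m=1.0,
                   width_m=0.02, youngs_pa=200e9):
    """Forward (analytical) model: delta = F * L^3 / (3 * E * I)."""
    inertia = width_m * height_m ** 3 / 12.0  # rectangular section
    return force_n * length_m ** 3 / (3.0 * youngs_pa * inertia)


def inverse_design_height(target_deflection_m, lo=1e-3, hi=0.2, tol=1e-9):
    """Inverse step: bisection on height until the target deflection is met.

    Relies on deflection decreasing monotonically as height grows.
    """
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if tip_deflection(mid) > target_deflection_m:
            lo = mid  # beam too flexible, so the section must be taller
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Hypothetical specification: 5 mm tip deflection under the default load.
height = inverse_design_height(0.005)
print(f"height = {height * 1000:.2f} mm, "
      f"check deflection = {tip_deflection(height) * 1000:.3f} mm")
```

Because this forward model is monotonic in a single parameter, the inverse problem has exactly one solution. The reliability questions discussed in this report arise when the forward model is nonlinear in many parameters and that guarantee disappears.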

The reliability comparison between these methodologies has emerged as a critical research frontier, driven by increasing demands for robust, predictable design outcomes in high-stakes applications. Industries ranging from aerospace and automotive to pharmaceuticals and renewable energy require design approaches that not only deliver optimal performance but also provide quantifiable confidence levels and risk assessments. This reliability imperative has intensified as systems become more complex and failure consequences more severe.

Current technological objectives focus on establishing comprehensive reliability frameworks that can evaluate both methodologies across multiple dimensions, including prediction accuracy, uncertainty quantification, failure mode identification, and long-term performance stability. The goal extends beyond simple performance comparison to developing hybrid approaches that combine the interpretability and physical grounding of analytical methods with the optimization power and design space exploration capabilities of inverse design.

The strategic importance of this reliability assessment lies in enabling informed decision-making for technology adoption, regulatory compliance, and risk management across diverse industrial sectors where design methodology selection directly impacts product safety, performance, and market success.

Market Demand for Reliable Design Optimization Solutions

The global engineering design optimization market is experiencing unprecedented growth driven by increasing demands for reliable, efficient, and cost-effective design solutions across multiple industries. Traditional analytical approaches, while mathematically rigorous, often fall short in addressing complex multi-objective optimization problems where reliability constraints are paramount. This gap has created substantial market opportunities for advanced inverse design methodologies that can systematically incorporate reliability considerations into the design process.

Aerospace and automotive industries represent the largest market segments demanding reliable design optimization solutions. These sectors face stringent safety regulations and performance requirements where design failures can result in catastrophic consequences. The growing complexity of modern aircraft and vehicles, coupled with the need for weight reduction and fuel efficiency, has intensified the demand for sophisticated design tools that can balance performance optimization with reliability assurance.

The semiconductor and electronics industry constitutes another rapidly expanding market segment. As device miniaturization continues and performance requirements escalate, traditional trial-and-error design approaches become increasingly inadequate. Manufacturers require design optimization solutions that can predict and mitigate reliability issues early in the development cycle, reducing time-to-market and development costs while ensuring product longevity.

Manufacturing industries are increasingly recognizing the economic value of reliable design optimization. The cost of product recalls, warranty claims, and reputation damage associated with unreliable products has driven companies to invest heavily in advanced design methodologies. This trend is particularly pronounced in consumer electronics, medical devices, and industrial equipment sectors where product reliability directly impacts brand value and market competitiveness.

Emerging markets in renewable energy and electric vehicles are creating new demand patterns for reliable design optimization solutions. These industries require innovative approaches to handle the unique challenges of energy storage systems, power electronics, and sustainable materials, where long-term reliability is crucial for commercial viability and environmental impact.

The integration of artificial intelligence and machine learning technologies with inverse design approaches is opening new market opportunities. Organizations seek solutions that can learn from historical failure data and incorporate probabilistic reliability models into the design optimization process, enabling more robust and dependable product development workflows.

Current Reliability Challenges in Inverse Design Methods

Inverse design methods face significant reliability challenges that stem from their computational architecture and optimization processes. Unlike analytical approaches, which provide deterministic solutions based on established physical principles, inverse design relies on iterative algorithms that can converge to different local optima, leading to inconsistent results across runs or initial conditions.

The non-uniqueness problem represents one of the most critical reliability issues in inverse design. Multiple design configurations can theoretically produce identical or nearly identical target responses, making it difficult to identify the optimal solution. This ambiguity becomes particularly pronounced in complex design spaces where the relationship between design parameters and performance metrics is highly nonlinear.

Convergence stability poses another substantial challenge, as gradient-based optimization algorithms commonly used in inverse design can exhibit sensitivity to initial parameter values and numerical precision. Small perturbations in starting conditions may lead to dramatically different final designs, undermining confidence in the methodology's reproducibility and raising questions about solution robustness.
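The sensitivity to starting conditions can be illustrated with a small sketch. The one-dimensional objective below is a hypothetical stand-in for a design loss, chosen only because it has two minima; the step size and starting points are likewise assumptions. Plain gradient descent is run from several starts and the distinct end points are collected.

```python
def objective(x):
    """Hypothetical non-convex design loss with two local minima."""
    return x ** 4 - 3.0 * x ** 2 + x


def gradient(x):
    """Analytical derivative of the objective."""
    return 4.0 * x ** 3 - 6.0 * x + 1.0


def gradient_descent(start, learning_rate=0.01, steps=5000):
    """Fixed-step gradient descent from a given starting point."""
    x = start
    for _ in range(steps):
        x -= learning_rate * gradient(x)
    return x


starts = [-2.0, -0.5, 0.5, 2.0]
finals = [gradient_descent(s) for s in starts]

# Runs that differ only in their starting point settle in different minima.
distinct_optima = sorted({round(x, 3) for x in finals})
best = min(finals, key=objective)

for s, x in zip(starts, finals):
    print(f"start {s:+.1f} -> x = {x:+.4f}, loss = {objective(x):+.4f}")
print("distinct optima:", distinct_optima, "best:", round(best, 4))
```

Starts on the left of the central hump end near x = -1.30 (the lower minimum), while starts on the right end near x = 1.13. A multi-start run of this kind is one simple way to estimate how often an optimizer reaches the best known solution, which is a practical proxy for reproducibility.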

Computational noise and discretization errors further compromise reliability in inverse design workflows. Finite element analysis, electromagnetic simulations, and other numerical methods introduce inherent approximations that can accumulate throughout the optimization process. These errors may cause the algorithm to converge to suboptimal solutions or fail to identify feasible designs altogether.

The black-box nature of many inverse design algorithms creates additional reliability concerns, as designers often lack insight into why specific solutions emerge. This opacity makes it challenging to validate results, assess solution quality, or troubleshoot when algorithms fail to converge to acceptable designs.

Fabrication constraints and manufacturing tolerances represent practical reliability challenges that analytical approaches handle more predictably. Inverse design solutions may be highly sensitive to geometric variations, material property fluctuations, or processing imperfections, leading to significant performance degradation in real-world implementations compared to simulated predictions.
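A quick way to probe this sensitivity is to perturb the nominal design with random fabrication error and compare average performance. The sketch below is illustrative only: the Gaussian-shaped response, the nominal dimension, the tolerance, and both candidate designs are assumptions, not data from any real device.

```python
import math
import random


def performance(x, peak_x, peak_value, width):
    """Hypothetical response: a Gaussian-shaped peak around the nominal design."""
    return peak_value * math.exp(-((x - peak_x) / width) ** 2)


def mean_performance_under_tolerance(peak_value, width, sigma,
                                     n_samples=20000, seed=0):
    """Average performance when the fabricated dimension has Gaussian error."""
    rng = random.Random(seed)
    nominal = 0.5  # assumed nominal dimension, arbitrary units
    total = 0.0
    for _ in range(n_samples):
        fabricated = nominal + rng.gauss(0.0, sigma)
        total += performance(fabricated, nominal, peak_value, width)
    return total / n_samples


# Design A: best nominal performance, but a very narrow optimum.
sharp = mean_performance_under_tolerance(peak_value=1.0, width=0.01, sigma=0.02)
# Design B: slightly lower nominal performance, much broader optimum.
broad = mean_performance_under_tolerance(peak_value=0.9, width=0.1, sigma=0.02)

print(f"sharp optimum: nominal 1.00, mean under tolerance {sharp:.3f}")
print(f"broad optimum: nominal 0.90, mean under tolerance {broad:.3f}")
```

Under these assumptions the design with the better simulated optimum loses roughly two thirds of its performance once tolerance is applied, while the broader design keeps most of its performance. Adding a tolerance-averaged term like this to the objective is one common way to steer an optimizer toward robust designs.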

Cross-validation and benchmarking difficulties arise because inverse design problems often lack established ground truth solutions for comparison. This limitation makes it challenging to assess algorithm performance objectively and establish confidence intervals for design predictions, particularly when dealing with novel or unconventional design configurations.

Existing Reliability Assessment Solutions for Design Methods

01 Machine learning and AI-based inverse design methods

Advanced computational techniques utilizing machine learning algorithms and artificial intelligence are employed for inverse design processes. These methods enable automated optimization and prediction of design parameters by learning from existing data patterns. The approaches improve design efficiency by reducing iteration cycles and enhancing the accuracy of parameter selection through data-driven models.

- Machine learning and AI-based inverse design methods: Advanced computational techniques utilizing machine learning algorithms and artificial intelligence are employed for inverse design processes. These methods enable automated optimization and prediction of design parameters by learning from existing data patterns. The approaches enhance reliability through iterative refinement and validation against multiple design constraints, allowing for efficient exploration of design spaces that would be impractical through traditional methods.

- Statistical analysis and probabilistic reliability assessment: Reliability evaluation frameworks incorporate statistical methods and probabilistic models to assess design robustness and failure rates. These analytical approaches utilize Monte Carlo simulations, Bayesian inference, and uncertainty quantification techniques to predict system performance under various conditions. The methods provide confidence intervals and reliability metrics that guide design decisions and validate inverse design outcomes.

- Optimization algorithms for inverse problem solving: Sophisticated optimization techniques are applied to solve inverse design problems by iteratively adjusting parameters to meet specified performance criteria. These algorithms include gradient-based methods, genetic algorithms, and multi-objective optimization strategies that balance competing design requirements. The approaches ensure convergence to optimal or near-optimal solutions while maintaining computational efficiency and design feasibility.

- Validation and verification methodologies: Comprehensive validation frameworks are established to verify the accuracy and reliability of inverse design results through experimental testing and simulation comparisons. These methodologies include cross-validation techniques, sensitivity analysis, and benchmark testing against known solutions. The verification processes ensure that inverse design solutions meet specified tolerances and perform reliably under real-world conditions.

- Data-driven analytical frameworks and modeling: Integrated analytical frameworks leverage large datasets and computational models to support inverse design processes and reliability assessment. These systems combine finite element analysis, computational fluid dynamics, and empirical data to create predictive models that inform design decisions. The frameworks enable rapid prototyping and testing of design alternatives while maintaining high reliability standards through continuous data feedback and model refinement.

02 Reliability analysis and verification frameworks

Systematic frameworks for assessing and verifying the reliability of inverse design solutions are implemented through statistical analysis and validation methods. These frameworks incorporate uncertainty quantification, sensitivity analysis, and robustness testing to ensure design outcomes meet specified reliability criteria. Multiple verification stages are integrated to validate the consistency and dependability of analytical results.

03 Optimization algorithms for inverse problem solving

Specialized optimization algorithms are developed to solve complex inverse problems by iteratively refining design parameters. These algorithms employ gradient-based methods, evolutionary strategies, or hybrid approaches to navigate solution spaces efficiently. The techniques balance computational cost with solution accuracy while handling multi-objective constraints and non-linear relationships in the design space.

04 Analytical modeling and simulation techniques

Comprehensive analytical models and simulation tools are utilized to predict system behavior and validate inverse design outcomes. These techniques integrate physics-based modeling with numerical methods to simulate real-world conditions and assess design performance. The approaches enable rapid prototyping and testing of design alternatives before physical implementation.

05 Data-driven reliability assessment methods

Statistical and probabilistic methods are applied to evaluate reliability using historical data and experimental results. These assessment techniques incorporate failure mode analysis, lifetime prediction models, and confidence interval estimation to quantify design reliability. The methods enable continuous improvement through feedback loops that refine design parameters based on performance data.

Key Players in Computational Design and Optimization Industry

Reliability assessment of inverse design versus analytical approaches is an emerging field in the early stages of development, with significant growth potential driven by advances in AI and computing. The market remains fragmented across multiple sectors including semiconductors, energy, and materials science. Technology maturity varies considerably: leading academic institutions such as Zhejiang University, Beihang University, and Princeton University conduct foundational research, while industrial players such as Samsung Electronics, Apple, Siemens AG, and Fujitsu are implementing practical applications. Companies like Avathon and specialized consulting firms like MathConsult are developing AI-powered solutions to bridge reliability gaps between traditional analytical methods and emerging inverse design approaches, indicating increasing commercial viability and potential for cross-industry adoption.

Fujitsu Ltd.

Technical Solution: Fujitsu has developed inverse design methodologies for quantum computing systems and high-performance computing architectures, with particular focus on reliability optimization for quantum devices and classical computing systems. Their approach combines quantum-inspired optimization algorithms with traditional reliability engineering principles to design fault-tolerant systems. The company employs inverse design techniques for thermal management and electromagnetic interference mitigation in data centers and computing systems. Fujitsu's methodology integrates predictive analytics with inverse optimization to enhance system reliability while maintaining computational performance requirements.

Strengths: Strong quantum computing expertise, advanced thermal management solutions, comprehensive system-level approach. Weaknesses: Limited to computing applications, high complexity in quantum system implementation, requires specialized expertise for deployment.

Apple, Inc.

Technical Solution: Apple utilizes inverse design principles primarily in semiconductor and antenna design for mobile devices, emphasizing reliability through iterative optimization processes. Their approach combines topology optimization with reliability constraints to develop compact, high-performance components. The company focuses on inverse design for thermal management systems and electromagnetic compatibility, using advanced simulation tools to ensure product reliability under various operating conditions. Apple's methodology integrates machine learning-based inverse design with traditional analytical validation methods to achieve optimal performance-reliability trade-offs in consumer electronics.

Strengths: Advanced computational resources, strong focus on miniaturization and performance optimization, extensive testing validation. Weaknesses: Limited to consumer electronics applications, proprietary methods with limited academic collaboration.

Core Innovations in Inverse Design Reliability Enhancement

Inverse design system and training method thereof

Patent (Pending): TW202402035A

Innovation

- A generative model based on a controllable generative adversarial network (cGAN) is used to efficiently design nano-optical devices with desired properties: the inverse design system is trained to generate the structural patterns associated with those devices.

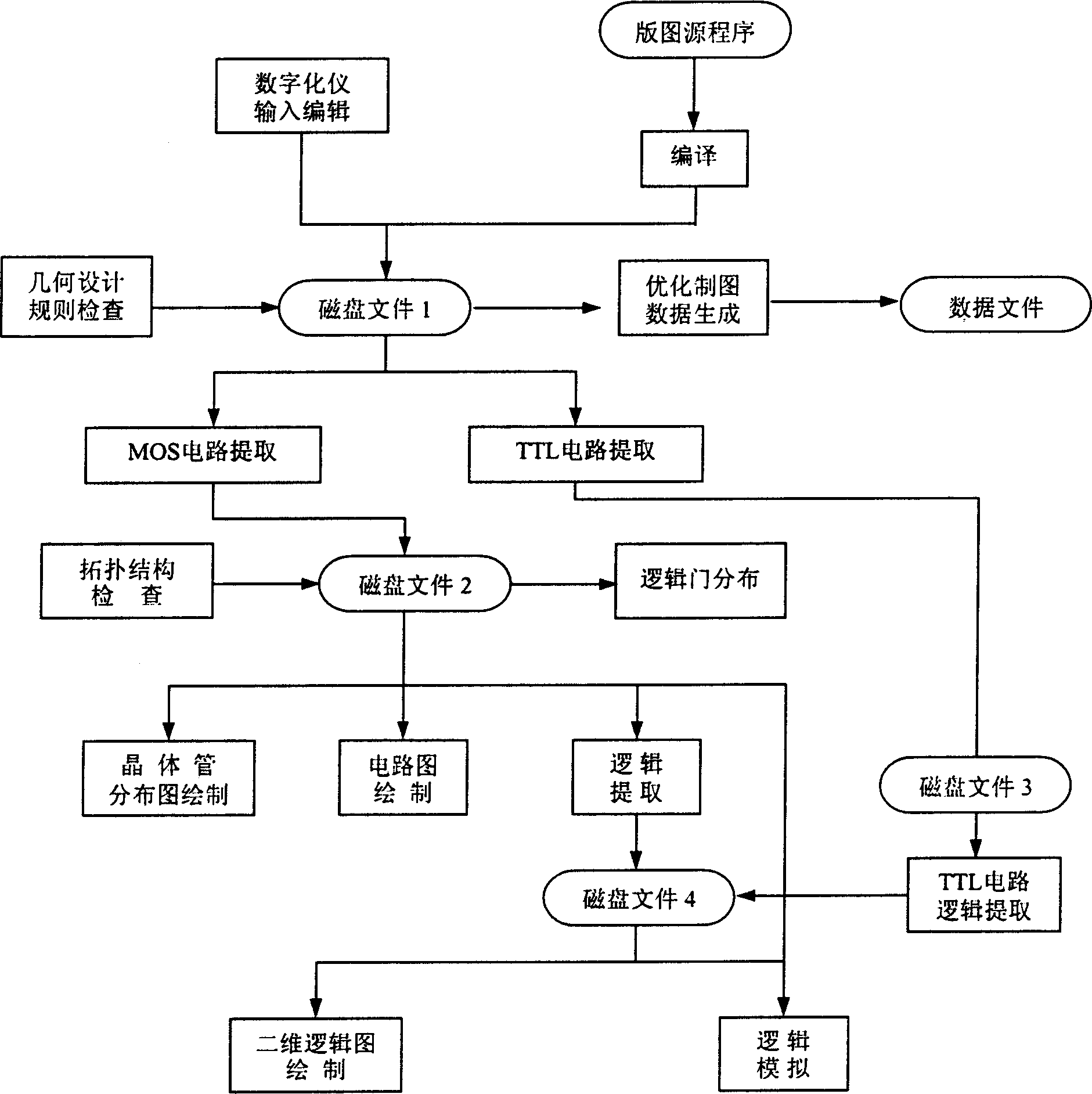

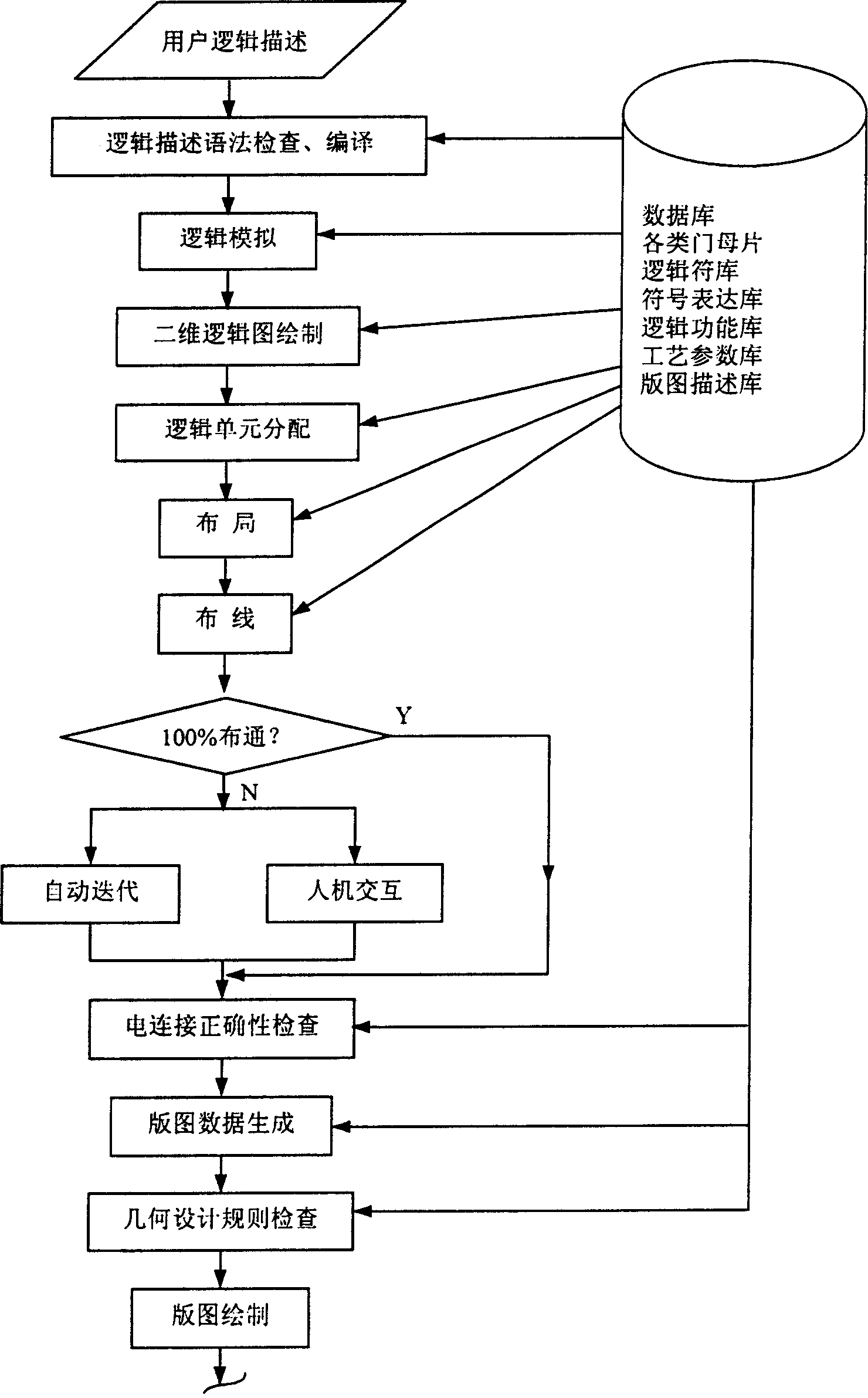

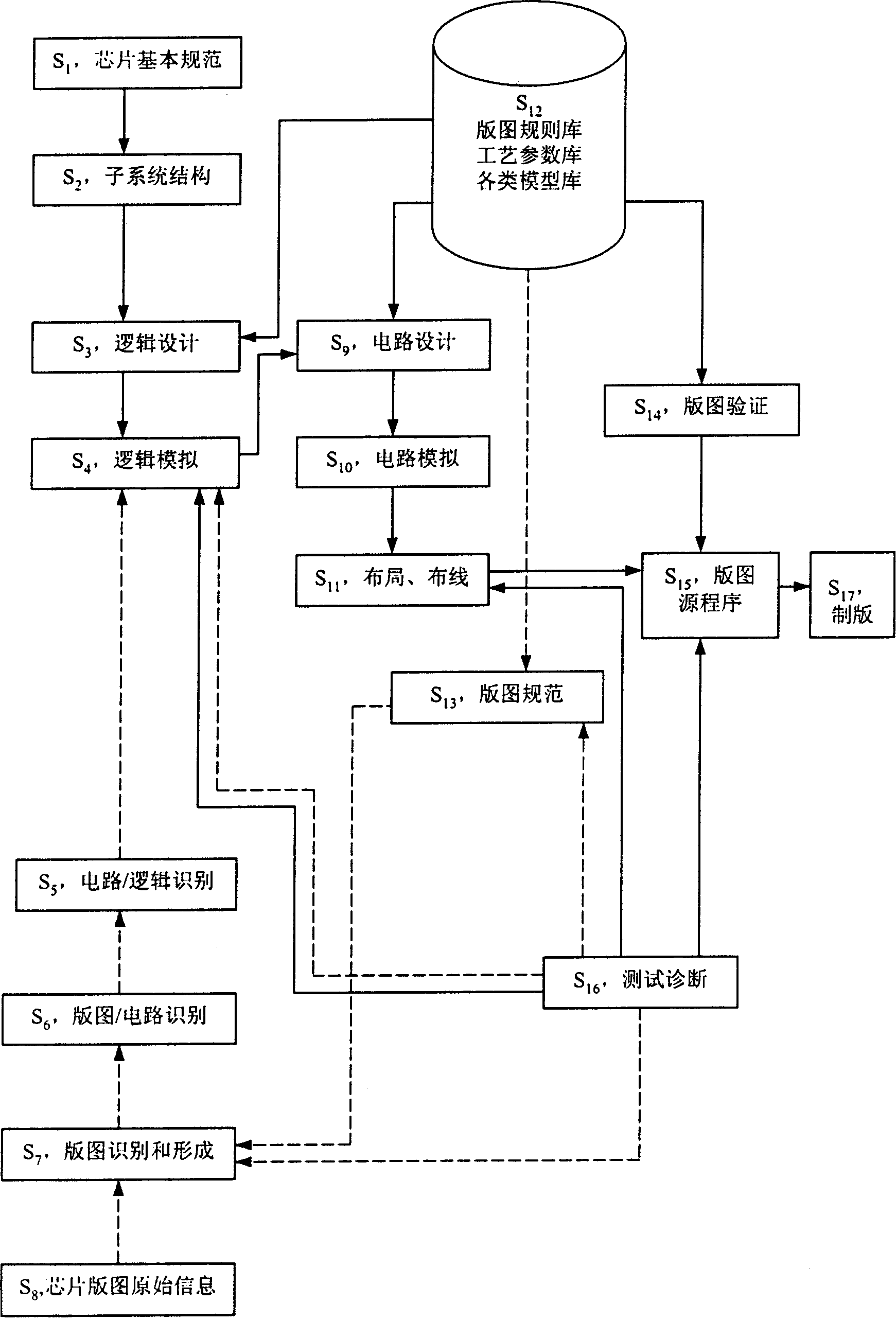

Bidirectional technique system of integrated circuit design

Patent (Inactive): CN1523660A

Innovation

- A bidirectional technology system for integrated circuit design that integrates reverse analysis and forward design. It realizes a two-way process between the original chip layout information and the logical structure, combining digitization, layout recognition, logic simulation, and other techniques into a mutually compatible system.

Validation Standards for Computational Design Methods

The establishment of robust validation standards for computational design methods represents a critical foundation for ensuring reliability in both inverse design and analytical approaches. Current validation frameworks primarily rely on experimental benchmarking, cross-validation with established analytical solutions, and statistical verification methods. However, the complexity of modern computational design problems necessitates more sophisticated validation protocols that can adequately assess the accuracy and reliability of different methodological approaches.

Traditional validation standards have been developed primarily around analytical methods, where closed-form solutions provide clear benchmarks for accuracy assessment. These standards typically involve comparing computational results against known theoretical solutions, experimental data, or established design codes. The validation process often includes mesh convergence studies, sensitivity analyses, and uncertainty quantification to ensure computational reliability.
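The idea behind a mesh convergence study can be sketched on a problem with a known closed-form answer. The example below is an illustrative stand-in rather than a real finite element workflow: it treats trapezoidal integration of sin(x) on [0, π], whose exact value is 2, as the "simulation", refines the grid twice, and estimates the observed order of convergence and a Richardson-extrapolated value. The grid sizes are assumptions.

```python
import math


def trapezoid(f, a, b, n):
    """Composite trapezoidal rule with n equal intervals."""
    h = (b - a) / n
    interior = sum(f(a + i * h) for i in range(1, n))
    return h * (0.5 * f(a) + interior + 0.5 * f(b))


# Three grids, each refined by a factor of two.
coarse, medium, fine = (trapezoid(math.sin, 0.0, math.pi, n) for n in (8, 16, 32))

# Observed order of convergence from successive differences (refinement ratio 2).
observed_order = math.log2(abs(coarse - medium) / abs(medium - fine))

# Richardson extrapolation toward the grid-independent value.
richardson = fine + (fine - medium) / (2.0 ** observed_order - 1.0)

exact = 2.0  # closed-form benchmark
print(f"observed order = {observed_order:.3f}")
print(f"fine-grid error = {abs(fine - exact):.2e}, "
      f"extrapolated error = {abs(richardson - exact):.2e}")
```

An observed order close to the theoretical order of the scheme (2 for the trapezoidal rule) suggests the grids are in the asymptotic range, which is what makes the comparison against the closed-form benchmark meaningful.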

For inverse design methods, validation presents unique challenges due to the inherently iterative and optimization-driven nature of these approaches. Standard validation protocols must account for convergence criteria, objective function formulation, and the potential for multiple optimal solutions. The validation framework needs to assess not only the final design outcome but also the optimization path and computational efficiency.

Emerging validation standards are incorporating machine learning validation techniques, including cross-validation, holdout testing, and bootstrap sampling methods. These approaches are particularly relevant for inverse design methods that utilize artificial intelligence and machine learning algorithms. The validation process must evaluate both the training performance and generalization capability of these computational methods.
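As a minimal sketch of the cross-validation idea, the example below runs k-fold validation on a deliberately simple surrogate: a least-squares line fitted to synthetic data. The data, the linear model, and the helper names `fit_line` and `k_fold_rmse` are all assumptions made for illustration; a real inverse design surrogate would be far richer, but the fold logic is the same.

```python
import math
import random


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def k_fold_rmse(xs, ys, k=5, seed=0):
    """Return the held-out RMSE of the fitted line for each of k folds."""
    indices = list(range(len(xs)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    scores = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in indices if i not in held]
        slope, intercept = fit_line([xs[i] for i in train],
                                    [ys[i] for i in train])
        squared = [(ys[i] - (slope * xs[i] + intercept)) ** 2 for i in held_out]
        scores.append(math.sqrt(sum(squared) / len(squared)))
    return scores


# Synthetic data (assumed): a linear response with Gaussian noise.
rng = random.Random(1)
xs = [i / 10.0 for i in range(50)]
ys = [2.0 * x + 1.0 + rng.gauss(0.0, 0.1) for x in xs]

scores = k_fold_rmse(xs, ys)
print("per-fold RMSE:", [round(s, 3) for s in scores])
print("mean RMSE:", round(sum(scores) / len(scores), 3))
```

The spread of the per-fold errors, not just their mean, is the useful signal here: a large spread indicates that the model's accuracy depends on which data it happened to see, which is a generalization risk.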

Industry-specific validation standards are being developed to address domain-specific requirements in aerospace, automotive, biomedical, and structural engineering applications. These standards define acceptable error tolerances, required validation datasets, and certification procedures for computational design methods. The integration of uncertainty quantification and reliability assessment into validation protocols ensures that computational design methods meet industry safety and performance requirements.

The development of standardized benchmark problems and reference datasets facilitates consistent validation across different computational design approaches. These benchmarks enable direct comparison between inverse design and analytical methods, providing quantitative metrics for reliability assessment and method selection in practical engineering applications.

Risk Assessment Frameworks for Design Method Selection

The selection between inverse design and analytical approaches requires comprehensive risk assessment frameworks that systematically evaluate the reliability implications of each methodology. These frameworks must address the fundamental uncertainty characteristics inherent in both approaches, where inverse design methods often exhibit higher computational complexity but potentially superior optimization outcomes, while analytical approaches provide more predictable but potentially limited solutions.

A multi-criteria risk assessment framework should incorporate quantitative reliability metrics including convergence probability, solution stability, and computational resource requirements. For inverse design approaches, the framework must evaluate the risk of local optima entrapment, gradient explosion scenarios, and the reliability of objective function formulations. Analytical methods require assessment of model approximation errors, boundary condition sensitivity, and the validity range of underlying assumptions.

Probabilistic risk modeling forms the cornerstone of effective framework implementation, utilizing Monte Carlo simulations to characterize uncertainty propagation through design processes. The framework should establish confidence intervals for design outcomes, enabling decision-makers to quantify the trade-offs between solution optimality and reliability assurance. Statistical process control methods can be integrated to monitor design method performance over multiple iterations.
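A minimal Monte Carlo sketch of uncertainty propagation follows. The model (axial stress as force divided by area), the input distributions, and the stress limit are hypothetical values chosen for illustration. The sketch reports the mean outcome, an empirical 95% interval of the outcome distribution, and the estimated probability of exceeding the limit.

```python
import random
import statistics


def simulate_stress(rng):
    """One random draw of axial stress = force / area (assumed inputs)."""
    force_n = rng.gauss(1000.0, 50.0)    # assumed load scatter
    area_m2 = rng.gauss(1.0e-4, 2.0e-6)  # assumed manufacturing scatter
    return force_n / area_m2


def monte_carlo(n=20000, limit_pa=1.1e7, seed=0):
    """Propagate input uncertainty and summarize the outcome distribution."""
    rng = random.Random(seed)
    samples = sorted(simulate_stress(rng) for _ in range(n))
    lower = samples[int(0.025 * n)]      # empirical 2.5th percentile
    upper = samples[int(0.975 * n) - 1]  # empirical 97.5th percentile
    p_exceed = sum(s > limit_pa for s in samples) / n
    return statistics.fmean(samples), (lower, upper), p_exceed


mean_stress, interval, p_exceed = monte_carlo()
print(f"mean stress = {mean_stress:.3e} Pa")
print(f"95% interval = [{interval[0]:.3e}, {interval[1]:.3e}] Pa")
print(f"P(stress > limit) = {p_exceed:.3f}")
```

The exceedance probability is the quantity a risk framework would compare against an industry-specific tolerance threshold. The same loop applies unchanged when `simulate_stress` is replaced by an analytical formula or by a design produced through inverse optimization.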

Industry-specific risk tolerance thresholds must be incorporated into the selection framework, recognizing that aerospace applications demand higher reliability assurance compared to consumer product development. The framework should include failure mode analysis capabilities, identifying potential points of method breakdown and establishing contingency protocols for design process recovery.

Implementation considerations include the development of standardized risk scoring matrices that weight factors such as design complexity, time constraints, available computational resources, and required accuracy levels. The framework should provide clear decision trees that guide engineers toward the most appropriate method based on project-specific risk profiles and organizational capabilities.
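A weighted risk scoring matrix of this kind can be sketched in a few lines. The factor names follow the paragraph above, but every weight and score here is a made-up placeholder on a 1 (low risk) to 5 (high risk) scale; a real matrix would be calibrated against project history.

```python
# Hypothetical weights for each factor (they sum to 1.0 here, but need not).
WEIGHTS = {
    "design_complexity": 0.3,
    "time_constraints": 0.2,
    "compute_resources": 0.2,
    "required_accuracy": 0.3,
}

# Hypothetical risk scores per method for one example project.
RISK_SCORES = {
    "inverse_design": {
        "design_complexity": 2, "time_constraints": 4,
        "compute_resources": 5, "required_accuracy": 3,
    },
    "analytical": {
        "design_complexity": 5, "time_constraints": 2,
        "compute_resources": 1, "required_accuracy": 2,
    },
}


def weighted_risk(scores, weights):
    """Weighted average risk; lower means lower overall risk."""
    total_weight = sum(weights.values())
    return sum(weights[f] * scores[f] for f in weights) / total_weight


risk = {method: weighted_risk(scores, WEIGHTS)
        for method, scores in RISK_SCORES.items()}
recommended = min(risk, key=risk.get)

for method, value in risk.items():
    print(f"{method}: weighted risk = {value:.2f}")
print("recommended method:", recommended)
```

With these placeholder numbers the analytical route scores lower overall risk. Shifting weight toward design complexity, where the analytical score is worst, would reverse the recommendation, which is why the weights themselves need to be justified and reviewed.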

Validation protocols within the framework must establish benchmarking procedures against known solutions, enabling continuous calibration of risk assessment accuracy. Regular framework updates should incorporate lessons learned from completed projects, ensuring the risk assessment methodology evolves with advancing design capabilities and changing industry requirements.
