Optimizing Sensor Fusion for Real-Time Telemetry Applications

APR 3, 2026 | 9 MIN READ

Sensor Fusion Telemetry Background and Objectives

Sensor fusion technology has emerged as a critical enabler for modern telemetry systems, representing the convergence of multiple sensing modalities to create comprehensive situational awareness. The evolution of this field traces back to early military applications in the 1960s, where radar and sonar data were combined for target tracking. Over subsequent decades, the technology expanded into civilian domains including aerospace, automotive, and industrial monitoring systems.

The fundamental principle underlying sensor fusion involves the intelligent combination of data from heterogeneous sensors to produce information that is more accurate, complete, and reliable than any individual sensor could provide. This approach addresses inherent limitations of single-sensor systems, such as measurement noise, environmental interference, and coverage gaps. Modern implementations leverage advanced algorithms including Kalman filters, particle filters, and machine learning techniques to process multi-modal sensor streams.
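The claim that a fused estimate can be more accurate than any single sensor can be illustrated with inverse-variance weighting, the static special case of a Kalman update. The sketch below is a minimal illustration, assuming independent, unbiased sensors with known noise variances; the function name and the altitude readings are hypothetical.

```python
def fuse_inverse_variance(estimates):
    """Fuse independent (value, variance) estimates of the same quantity.

    Each estimate is weighted by 1 / variance, so noisier sensors count less.
    Assumes the sensor errors are independent and unbiased.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    fused_variance = 1.0 / total  # never larger than the smallest input variance
    return fused_value, fused_variance


# Hypothetical altitude readings in metres, given as (value, variance).
gps = (102.0, 4.0)
barometer = (100.0, 1.0)
value, variance = fuse_inverse_variance([gps, barometer])
# value is about 100.4 m with variance about 0.8, below either sensor alone
```

The fused variance is smaller than the best individual variance, which is the quantitative sense in which fusion is "more accurate" under these assumptions.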

Contemporary telemetry applications demand increasingly sophisticated fusion capabilities to handle diverse sensor types including inertial measurement units, GPS receivers, cameras, LiDAR systems, and environmental sensors. The integration challenge extends beyond simple data aggregation to encompass temporal synchronization, spatial alignment, and uncertainty quantification across different measurement domains.

The primary objective of optimizing sensor fusion for real-time telemetry centers on achieving minimal latency while maintaining high accuracy and reliability standards. This requires developing algorithms capable of processing multiple data streams simultaneously within strict timing constraints, typically measured in milliseconds for critical applications such as autonomous vehicle navigation or aircraft flight control systems.

Key performance targets include fusion latency below 10 milliseconds for safety-critical applications, centimeter-level position accuracy, and system reliability above 99.9% across the expected range of environmental conditions. The optimization effort also aims to minimize computational resource requirements while keeping fused estimates robust against sensor failures and environmental disturbances.

The technological advancement trajectory focuses on implementing adaptive fusion strategies that can dynamically adjust processing parameters based on real-time assessment of sensor quality and environmental conditions. This includes developing fault-tolerant architectures capable of graceful degradation when individual sensors fail, ensuring continuous operation of telemetry systems in mission-critical scenarios.

Real-Time Telemetry Market Demand Analysis

The real-time telemetry market is experiencing unprecedented growth driven by the proliferation of IoT devices, autonomous systems, and industrial automation applications. Industries ranging from aerospace and defense to automotive and healthcare are increasingly demanding sophisticated telemetry solutions that can process and transmit sensor data with minimal latency. This surge in demand stems from the critical need for instantaneous decision-making capabilities in mission-critical applications where delays can result in system failures or safety hazards.

Aerospace and defense sectors represent the most mature segment of the real-time telemetry market, with applications spanning satellite communications, missile guidance systems, and unmanned aerial vehicles. These applications require sensor fusion capabilities that can integrate data from multiple sources including GPS, inertial measurement units, radar, and optical sensors while maintaining strict timing constraints and reliability standards.

The automotive industry has emerged as a rapidly expanding market segment, particularly with the advancement of autonomous driving technologies. Modern vehicles incorporate dozens of sensors including LiDAR, cameras, radar, and ultrasonic sensors that must be fused in real time to enable safe navigation and collision avoidance. The complexity of urban driving environments demands increasingly sophisticated sensor fusion algorithms capable of handling dynamic scenarios with response times on the order of milliseconds.

Industrial automation and smart manufacturing applications are driving significant demand for real-time telemetry systems that can monitor equipment health, optimize production processes, and predict maintenance requirements. These systems must integrate data from vibration sensors, temperature monitors, pressure gauges, and other instrumentation to provide comprehensive situational awareness for industrial operators.

Healthcare and medical device markets are witnessing growing adoption of real-time telemetry for patient monitoring, surgical robotics, and diagnostic equipment. These applications require sensor fusion capabilities that can combine physiological measurements, imaging data, and environmental parameters while ensuring patient safety and regulatory compliance.

The telecommunications infrastructure sector is increasingly relying on real-time telemetry for network optimization, fault detection, and quality of service monitoring. The deployment of 5G networks and edge computing architectures is creating new opportunities for distributed sensor fusion applications that can process telemetry data closer to the source, reducing latency and bandwidth requirements.

Market demand is particularly strong for solutions that can address the challenges of heterogeneous sensor integration, where different sensor types with varying sampling rates, data formats, and accuracy levels must be combined effectively. Organizations are seeking telemetry systems that can adapt to changing operational conditions while maintaining consistent performance and reliability standards across diverse deployment environments.

Current Sensor Fusion Challenges in Telemetry Systems

Real-time telemetry systems face significant challenges in achieving optimal sensor fusion performance, primarily stemming from the inherent complexity of processing multiple heterogeneous data streams simultaneously. The fundamental challenge lies in synchronizing data from sensors operating at different sampling rates, measurement accuracies, and temporal characteristics while maintaining system responsiveness under strict latency constraints.

Data synchronization represents one of the most critical obstacles in current telemetry applications. Sensors often operate with varying update frequencies, creating temporal misalignment issues that can severely impact fusion accuracy. GPS sensors typically update at 1-10 Hz, while inertial measurement units may operate at 100-1000 Hz, and radar systems might provide updates at irregular intervals. This temporal disparity requires sophisticated buffering and interpolation mechanisms that introduce computational overhead and potential accuracy degradation.
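The interpolation step mentioned above can be sketched as follows: samples from a slow sensor are resampled at the timestamps of a fast one. This is a minimal sketch, assuming all timestamps share one clock and are sorted; the function name and the sample values are hypothetical.

```python
from bisect import bisect_right


def interpolate_at(timestamps, values, t):
    """Linearly interpolate a slow sensor's samples at time t (seconds).

    timestamps must be sorted ascending. Outside the sampled range the
    nearest sample is held rather than extrapolated.
    """
    if not timestamps or len(timestamps) != len(values):
        raise ValueError("timestamps and values must be non-empty and equal length")
    if t <= timestamps[0]:
        return values[0]
    if t >= timestamps[-1]:
        return values[-1]
    i = bisect_right(timestamps, t)  # first sample strictly after t
    t0, t1 = timestamps[i - 1], timestamps[i]
    v0, v1 = values[i - 1], values[i]
    alpha = (t - t0) / (t1 - t0)
    return v0 + alpha * (v1 - v0)


# Hypothetical 1 Hz GPS positions resampled at faster IMU timestamps.
gps_t = [0.0, 1.0, 2.0]
gps_x = [0.0, 10.0, 30.0]
imu_t = [0.25, 0.5, 1.5]
aligned = [interpolate_at(gps_t, gps_x, t) for t in imu_t]
```

Interpolating between two samples requires the later sample to have arrived, so a live system either buffers (adding latency) or extrapolates from past samples (adding error). That is the overhead and accuracy trade-off the paragraph describes.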

Computational resource limitations pose another significant constraint, particularly in embedded telemetry systems where processing power, memory, and energy consumption are strictly bounded. Traditional Kalman filtering approaches, while mathematically robust, often struggle to scale efficiently when dealing with high-dimensional state spaces or multiple sensor modalities. For a dense filter, the cost of each update grows roughly cubically with the number of state variables, and every additional sensor adds further measurement-update work, making real-time implementation challenging for resource-constrained platforms.
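For reference, the standard linear Kalman filter recursion is written out below with the dense-matrix operation counts that drive its cost. The symbols are the conventional ones and are not taken from the source: $n$ is the state dimension, $m$ the measurement dimension, $F$ the state transition matrix, $H$ the measurement matrix, and $Q$, $R$ the process and measurement noise covariances.

```latex
\begin{aligned}
\text{Predict:}\quad & \hat{x}_{k|k-1} = F\,\hat{x}_{k-1|k-1}, \qquad
  P_{k|k-1} = F\,P_{k-1|k-1}\,F^{\top} + Q \\
\text{Update:}\quad & S_k = H\,P_{k|k-1}\,H^{\top} + R, \qquad
  K_k = P_{k|k-1}\,H^{\top} S_k^{-1} \\
& \hat{x}_{k|k} = \hat{x}_{k|k-1} + K_k\,\bigl(z_k - H\,\hat{x}_{k|k-1}\bigr), \qquad
  P_{k|k} = (I - K_k H)\,P_{k|k-1} \\
\text{Cost per step:}\quad & O(n^3) \text{ for } F P F^{\top}, \quad
  O(n^2 m + n m^2) \text{ for the gain products}, \quad
  O(m^3) \text{ for } S_k^{-1}
\end{aligned}
```

Stacking more sensors into one measurement vector increases $m$, while tracking more quantities increases $n$; processing sensors sequentially or exploiting sparsity in $F$ and $H$ are common ways to reduce these counts.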

Sensor reliability and fault tolerance present ongoing challenges in maintaining system integrity. Individual sensors may experience temporary failures, degraded performance due to environmental conditions, or systematic biases that evolve over time. Current fusion algorithms often lack robust mechanisms for detecting and compensating for sensor anomalies in real-time, leading to potential system-wide performance degradation or catastrophic failures in critical applications.
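One widely used way to detect sensor anomalies in real time is innovation gating: a measurement is rejected when it lies implausibly far from the filter's prediction. The sketch below covers the scalar case and is not a method described by the source; the function name is hypothetical, and the default threshold of 6.635 is the 99% quantile of a chi-square distribution with one degree of freedom.

```python
def passes_gate(measurement, predicted, predicted_var, sensor_var, threshold=6.635):
    """Return True if a scalar measurement is statistically consistent
    with the prediction.

    The normalized innovation squared (NIS) is compared against a
    chi-square threshold; 6.635 corresponds to 99% with 1 degree of freedom.
    """
    innovation = measurement - predicted
    innovation_var = predicted_var + sensor_var
    if innovation_var <= 0.0:
        raise ValueError("variances must sum to a positive value")
    nis = innovation * innovation / innovation_var
    return nis <= threshold


# Hypothetical readings against a prediction of 10.0 with variance 0.5.
plausible = passes_gate(10.5, 10.0, 0.5, 0.5)   # small deviation is accepted
outlier = passes_gate(20.0, 10.0, 0.5, 0.5)     # large jump is rejected
```

A rejected sample can simply be skipped for that cycle; repeated rejections from one sensor are a reasonable trigger for marking it degraded.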

Environmental interference and dynamic operating conditions further complicate sensor fusion optimization. Telemetry systems must operate across diverse environments where electromagnetic interference, temperature variations, vibrations, and other external factors can significantly impact sensor performance. The challenge lies in developing adaptive fusion algorithms that can automatically adjust their behavior based on changing environmental conditions while maintaining consistent performance metrics.

Latency management remains a persistent challenge, particularly in applications requiring sub-millisecond response times. The trade-off between fusion accuracy and processing speed creates a fundamental tension in system design. While more sophisticated fusion algorithms can improve accuracy, they typically require additional computational time that may violate real-time constraints in time-critical applications such as autonomous navigation or industrial control systems.

Existing Real-Time Sensor Fusion Solutions

01 Multi-sensor data fusion algorithms and methods

Advanced algorithms are employed to combine data from multiple sensors to improve accuracy and reliability. These methods include Kalman filtering, Bayesian inference, and machine learning approaches that process heterogeneous sensor inputs. The fusion algorithms handle data synchronization, weighting, and conflict resolution to produce optimized output that is more accurate than individual sensor readings.

- Sensor fusion for autonomous vehicle perception: Integration of camera, radar, lidar, and ultrasonic sensors to create comprehensive environmental perception for autonomous driving systems. The fusion process combines complementary strengths of different sensor modalities to detect objects, estimate distances, and recognize road conditions under various weather and lighting scenarios. This approach enhances safety and decision-making capabilities in autonomous navigation.

- Optimization of sensor fusion architecture and hardware: Design and implementation of efficient hardware architectures and processing units specifically optimized for sensor fusion tasks. This includes edge computing solutions, dedicated fusion processors, and distributed computing frameworks that reduce latency and power consumption. The optimization focuses on real-time processing capabilities while maintaining computational efficiency and system reliability.

- Adaptive sensor fusion with dynamic weighting: Techniques for dynamically adjusting the contribution of each sensor based on environmental conditions, sensor reliability, and data quality metrics. The system continuously evaluates sensor performance and adapts fusion parameters in real-time to maintain optimal accuracy. This approach handles sensor degradation, temporary failures, and varying operational conditions to ensure robust performance.

- Machine learning-based sensor fusion optimization: Application of deep learning and artificial intelligence techniques to optimize sensor fusion processes. Neural networks are trained to learn optimal fusion strategies from large datasets, automatically discovering patterns and relationships between sensor modalities. These methods enable adaptive fusion that improves over time and can handle complex, non-linear sensor interactions for enhanced performance.
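The dynamic weighting idea in the list above can be sketched with a simple heuristic: each sensor's weight is the inverse of its recent mean squared disagreement with the fused value, and sensors that stop reporting are left out of that cycle. This is an illustrative assumption, not a method described by any vendor in this report; the class name, window size, and sensor names are hypothetical.

```python
from collections import deque


class AdaptiveWeightedFusion:
    """Weights each sensor by the inverse of its recent squared residuals."""

    def __init__(self, sensor_names, window=20, floor=1e-6):
        self.residuals = {name: deque(maxlen=window) for name in sensor_names}
        self.floor = floor      # avoids division by zero for perfect agreement
        self.estimate = None

    def _variance(self, name):
        history = self.residuals[name]
        if not history:
            return 1.0          # neutral prior before any residuals exist
        return max(sum(r * r for r in history) / len(history), self.floor)

    def update(self, readings):
        """readings maps sensor name to value; failed sensors are omitted."""
        if not readings:
            return self.estimate            # hold the last estimate
        weights = {name: 1.0 / self._variance(name) for name in readings}
        total = sum(weights.values())
        fused = sum(weights[name] * value for name, value in readings.items()) / total
        for name, value in readings.items():
            self.residuals[name].append(value - fused)
        self.estimate = fused
        return fused


# Hypothetical run: sensors "a" and "b" agree, sensor "c" oscillates by +/- 6.
fusion = AdaptiveWeightedFusion(["a", "b", "c"])
for step in range(40):
    noisy = 16.0 if step % 2 == 0 else 4.0
    fused = fusion.update({"a": 10.0, "b": 10.0, "c": noisy})
degraded = fusion.update({"a": 10.0, "b": 10.0})   # "c" has dropped out
```

With only two sensors this residual-based heuristic cannot tell which one is wrong, so it needs at least three sources or an independent quality signal such as a filter innovation.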

02 Sensor fusion for autonomous vehicle perception

Integration of camera, radar, LiDAR, and ultrasonic sensors to create comprehensive environmental perception for autonomous driving systems. The fusion process combines complementary strengths of different sensor modalities to detect objects, estimate distances, and understand road conditions under various weather and lighting scenarios. This approach enhances safety and decision-making capabilities in autonomous navigation.

03 Optimization of sensor fusion architecture and hardware configuration

Design and optimization of the physical and logical architecture for sensor fusion systems, including sensor placement, communication protocols, and computational resource allocation. This involves determining optimal sensor configurations, reducing latency, minimizing power consumption, and improving processing efficiency through hardware acceleration and distributed computing approaches.

04 Adaptive and dynamic sensor fusion strategies

Techniques that dynamically adjust fusion parameters and strategies based on environmental conditions, sensor availability, and data quality. These adaptive methods include real-time sensor selection, dynamic weighting adjustment, and fault-tolerant mechanisms that maintain system performance when individual sensors fail or provide unreliable data. The systems can learn and optimize fusion strategies through continuous operation.

05 Sensor fusion for positioning and navigation systems

Integration of GPS, inertial measurement units, magnetometers, and other positioning sensors to achieve high-precision localization and navigation. The fusion techniques compensate for individual sensor limitations such as GPS signal loss in urban canyons or IMU drift over time. These systems provide continuous and accurate position estimation for applications in robotics, drones, and mobile devices.
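How GPS and IMU data compensate for each other can be sketched in one dimension: the IMU propagates position at a high rate (dead reckoning), and each GPS fix pulls the estimate back to limit drift. This is a deliberately simplified complementary-style blend, assuming a fixed correction gain; the class name and gain are hypothetical, and a production system would typically use a Kalman filter that also corrects velocity and sensor bias.

```python
class GpsImuBlend:
    """1-D position estimator: IMU dead reckoning with GPS corrections."""

    def __init__(self, position=0.0, velocity=0.0, gain=0.2):
        self.position = position
        self.velocity = velocity
        self.gain = gain    # fraction of the GPS error removed per fix

    def predict(self, accel, dt):
        """Propagate the state with one IMU acceleration sample (high rate)."""
        self.position += self.velocity * dt + 0.5 * accel * dt * dt
        self.velocity += accel * dt

    def correct(self, gps_position):
        """Blend in a GPS fix (low rate); skipped during GPS outages."""
        self.position += self.gain * (gps_position - self.position)


# Hypothetical run: 1 s of 100 Hz IMU data at constant velocity, then one fix.
estimator = GpsImuBlend(position=0.0, velocity=1.0)
for _ in range(100):
    estimator.predict(accel=0.0, dt=0.01)
before_fix = estimator.position
estimator.correct(gps_position=2.0)
after_fix = estimator.position
```

During a GPS outage only `predict` runs, so the estimate stays continuous but accumulates IMU drift until the next fix arrives.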

Major Players in Sensor Fusion and Telemetry Industry

The sensor fusion optimization landscape for real-time telemetry applications represents a rapidly maturing market driven by increasing demand for autonomous systems and IoT deployments. The industry has progressed from experimental phases to commercial implementation, with market growth accelerated by automotive, aerospace, and industrial automation sectors. Technology maturity varies significantly across players, with established giants like Intel Corp., Bosch, and Mitsubishi Electric leading in hardware integration and processing capabilities, while specialized firms like Digital Global Systems and u-blox AG focus on RF spectrum management and positioning technologies. Defense contractors including Lockheed Martin and L3 Technologies drive high-precision applications, whereas emerging players like Helsing GmbH introduce AI-enhanced fusion algorithms. The competitive landscape shows convergence between traditional sensor manufacturers, semiconductor companies, and software-driven solution providers, indicating technology consolidation and cross-industry collaboration trends.

Robert Bosch GmbH

Technical Solution: Bosch implements multi-sensor fusion architecture combining radar, camera, and ultrasonic sensors for automotive and industrial telemetry systems. Their solution employs Kalman filtering and particle filter algorithms for real-time state estimation, processing sensor data at frequencies up to 100Hz for critical safety applications. The company's sensor fusion platform integrates proprietary MEMS sensors with advanced signal processing algorithms, enabling precise motion detection and environmental perception. Bosch's approach includes adaptive sensor weighting based on environmental conditions and sensor reliability metrics, optimizing performance across diverse operational scenarios including harsh industrial environments and automotive applications.

Strengths: Extensive automotive industry experience, robust MEMS sensor technology, proven reliability in safety-critical applications. Weaknesses: Limited flexibility for non-automotive applications, proprietary ecosystem constraints.

Continental Teves AG & Co. oHG

Technical Solution: Continental develops integrated sensor fusion solutions for automotive telemetry applications, combining radar, camera, and LiDAR data streams for advanced driver assistance systems. Their platform utilizes centralized processing architecture with dedicated automotive-grade processors, achieving sub-10ms latency for critical safety functions. The system implements probabilistic sensor fusion algorithms with uncertainty quantification, enabling robust performance in adverse weather conditions. Continental's approach includes predictive sensor health monitoring and automatic reconfiguration capabilities, ensuring continuous operation even with partial sensor failures. Their solution supports vehicle-to-everything (V2X) communication integration for enhanced situational awareness.

Strengths: Automotive-grade reliability, extensive validation in production vehicles, strong integration with vehicle systems. Weaknesses: Limited applicability outside automotive domain, high development costs for custom implementations.

Core Patents in Optimized Sensor Fusion Algorithms

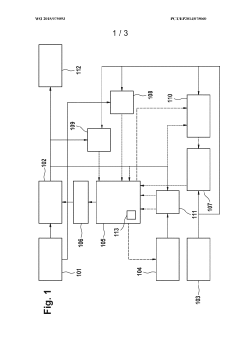

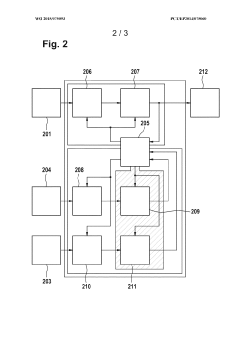

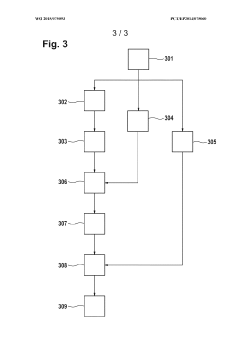

Arrangement and method for sensor fusion

Patent (Inactive): EP3105715A1

Innovation

- An arrangement and method for sensor fusion using a fusion machine that generates a factor graph from received sensor data, with configurable graph sections and rules, allowing for easy adaptation to various sensor constellations and tasks through configuration data and rules, processed using an inference engine.

Method, fusion filter, and system for fusing sensor signals with different temporal signal output delays into a fusion data set

Patent: WO2015075093A1

Innovation

- A method that fuses sensor signals with different temporal output delays by determining error values through comparison with other sensor systems. The error values are assumed to remain constant between adjustments, which allows accurate real-time fusion of sensor data while avoiding redundant corrections and maintaining data consistency.

Data Privacy and Security in Telemetry Systems

Real-time telemetry applications face unprecedented data privacy and security challenges as sensor fusion systems collect, process, and transmit vast amounts of sensitive information across distributed networks. The integration of multiple sensor modalities creates complex data streams that require robust protection mechanisms to prevent unauthorized access, data breaches, and malicious interference. These systems often handle critical infrastructure data, personal information, and proprietary operational metrics that demand comprehensive security frameworks.

Data encryption is a primary defense mechanism for telemetry systems, with end-to-end encryption protecting confidentiality throughout the sensor-to-cloud pipeline and authenticated encryption modes or message authentication codes protecting integrity. Algorithms such as AES-256 and elliptic curve cryptography provide strong security at a computational cost that is often compatible with real-time processing constraints. However, the challenge lies in balancing encryption overhead with the stringent latency requirements of real-time applications, particularly in resource-constrained edge computing environments.
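Integrity protection for a telemetry frame can be sketched with an HMAC-SHA256 tag using only the Python standard library. Note the substitution: this sketch provides integrity and authenticity but not confidentiality, so a deployed system would more likely use an authenticated encryption mode such as AES-256-GCM from a vetted cryptography library. The frame layout, key handling, and function names are hypothetical.

```python
import hashlib
import hmac
import json

TAG_LENGTH = 32  # bytes in a SHA-256 digest


def sign_frame(key: bytes, frame: dict) -> bytes:
    """Serialize a telemetry frame and append an HMAC-SHA256 tag."""
    payload = json.dumps(frame, sort_keys=True, separators=(",", ":")).encode("utf-8")
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return payload + tag


def verify_frame(key: bytes, message: bytes) -> dict:
    """Check the tag and return the frame; raise ValueError if it was altered."""
    payload, tag = message[:-TAG_LENGTH], message[-TAG_LENGTH:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):   # constant-time comparison
        raise ValueError("integrity check failed")
    return json.loads(payload.decode("utf-8"))


# Hypothetical key and frame; real keys would come from a provisioning process.
key = b"example-shared-secret-not-for-production"
message = sign_frame(key, {"sensor": "imu-1", "seq": 42, "accel_x": 0.12})
frame = verify_frame(key, message)
```

Computing one HMAC per frame is cheap relative to most fusion workloads, which is relevant to the latency trade-off discussed above.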

Authentication and access control mechanisms form critical security layers, implementing multi-factor authentication, certificate-based device identification, and role-based access controls. Zero-trust architecture principles are increasingly adopted, requiring continuous verification of all system components and data flows. Blockchain-based authentication systems are emerging as promising solutions for distributed telemetry networks, providing immutable audit trails and decentralized trust mechanisms.

Privacy-preserving techniques such as differential privacy and homomorphic encryption enable secure data analytics without exposing raw sensor data. These approaches allow organizations to extract valuable insights from fused sensor data while maintaining individual privacy and regulatory compliance. Federated learning frameworks further enhance privacy by enabling distributed model training without centralizing sensitive data.
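As an illustration of differential privacy, the sketch below releases the mean of a set of sensor readings with Laplace noise calibrated to the query's sensitivity. It is a minimal sketch of the Laplace mechanism, assuming each reading is clipped to known bounds and that the number of readings is public; the function name, bounds, and sample values are hypothetical.

```python
import random


def private_mean(values, lower, upper, epsilon, rng=None):
    """Release the mean of bounded readings with epsilon-differential privacy.

    Readings are clipped to [lower, upper], so changing one reading moves the
    mean by at most (upper - lower) / n. Laplace noise with scale
    sensitivity / epsilon is then added.
    """
    if not values:
        raise ValueError("values must be non-empty")
    if epsilon <= 0 or upper <= lower:
        raise ValueError("epsilon must be positive and upper must exceed lower")
    rng = rng or random.Random()
    clipped = [min(max(v, lower), upper) for v in values]
    sensitivity = (upper - lower) / len(clipped)
    scale = sensitivity / epsilon
    # The difference of two i.i.d. exponentials is Laplace-distributed.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return sum(clipped) / len(clipped) + noise


# Hypothetical body-temperature readings in degrees Celsius.
readings = [36.5, 37.0, 36.8, 37.2]
released = private_mean(readings, lower=35.0, upper=42.0, epsilon=1.0,
                        rng=random.Random(7))
```

Smaller values of `epsilon` give stronger privacy but noisier results, so the privacy budget has to be chosen against the accuracy the analytics require.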

Regulatory compliance presents ongoing challenges, with frameworks like GDPR, CCPA, and industry-specific standards requiring continuous adaptation of security protocols. Data minimization principles, consent management systems, and automated compliance monitoring tools are becoming essential components of modern telemetry architectures.

Emerging threats include adversarial attacks on sensor fusion algorithms, side-channel attacks exploiting timing information, and sophisticated man-in-the-middle attacks targeting communication protocols. Advanced threat detection systems utilizing machine learning algorithms can identify anomalous patterns and potential security breaches in real-time, enabling rapid response and system isolation when necessary.

Performance Benchmarking for Fusion Algorithms

Performance benchmarking for sensor fusion algorithms in real-time telemetry applications requires comprehensive evaluation frameworks that assess multiple dimensions of algorithmic effectiveness. The benchmarking process must establish standardized metrics that capture both accuracy and computational efficiency, as these factors directly impact the viability of fusion algorithms in time-critical telemetry systems. Key performance indicators include fusion accuracy measured through root mean square error, computational latency expressed in processing time per data frame, memory utilization patterns, and throughput capacity under varying data loads.
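Two of the indicators named above, root mean square error and per-frame latency, can be measured with a small harness like the following. This is a minimal sketch, assuming the fusion algorithm is exposed as a function that takes one frame and returns one estimate; `mean_fusion` and the sample frames are hypothetical stand-ins.

```python
import math
import time


def rmse(estimates, truth):
    """Root mean square error between estimates and ground truth."""
    if not estimates or len(estimates) != len(truth):
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((e - t) ** 2 for e, t in zip(estimates, truth)) / len(truth))


def benchmark(fuse, frames):
    """Run fuse on every frame; return outputs and per-frame latency in ms."""
    outputs, latencies_ms = [], []
    for frame in frames:
        start = time.perf_counter()
        outputs.append(fuse(frame))
        latencies_ms.append((time.perf_counter() - start) * 1e3)
    return outputs, latencies_ms


def percentile(samples, q):
    """Nearest-rank percentile, useful for tail latency such as q=99."""
    ordered = sorted(samples)
    rank = max(0, math.ceil(q / 100.0 * len(ordered)) - 1)
    return ordered[rank]


def mean_fusion(frame):
    """Hypothetical stand-in for a fusion algorithm: average the readings."""
    return sum(frame) / len(frame)


frames = [[1.0, 3.0], [2.0, 2.0], [4.0, 6.0]]
ground_truth = [2.0, 2.0, 4.0]
outputs, latencies_ms = benchmark(mean_fusion, frames)
accuracy = rmse(outputs, ground_truth)
tail_latency_ms = percentile(latencies_ms, 99)
```

For real-time systems the tail percentile usually matters more than the mean, because a single late frame can violate a deadline even when average latency looks comfortable.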

Standardized datasets play a crucial role in enabling consistent performance comparisons across different fusion algorithms. Public benchmarks such as the KITTI dataset for automotive applications, together with custom telemetry datasets from aerospace and industrial monitoring systems, provide reference points for algorithm evaluation. These datasets incorporate various sensor modalities including IMU, GPS, LiDAR, and camera data with ground truth annotations, enabling objective assessment of fusion algorithm performance under controlled conditions.

Real-time performance evaluation requires specialized testing environments that simulate operational constraints. Hardware-in-the-loop testing platforms equipped with multi-core processors, FPGA accelerators, and GPU computing units provide realistic assessment of algorithm performance under actual deployment conditions. Benchmarking protocols must account for varying sensor sampling rates, data synchronization challenges, and communication latencies that affect real-world telemetry applications.
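One concrete consequence of varying sampling rates is that streams must be brought onto a common time base before fused output can be compared against ground truth. The sketch below assumes linear interpolation is acceptable for the slower stream, which may not hold for fast-changing signals; the function names and the sample rates are illustrative assumptions, not part of any specific benchmarking protocol.

```python
from bisect import bisect_left


def interpolate_at(timestamps, values, t):
    """Linearly interpolate a sampled signal at time t.

    `timestamps` must be sorted ascending. Queries outside the sampled
    range are clamped to the first or last value.
    """
    if not timestamps or len(timestamps) != len(values):
        raise ValueError("timestamps and values must be non-empty and equal length")
    if t <= timestamps[0]:
        return values[0]
    if t >= timestamps[-1]:
        return values[-1]
    i = bisect_left(timestamps, t)  # first index with timestamps[i] >= t
    t0, t1 = timestamps[i - 1], timestamps[i]
    v0, v1 = values[i - 1], values[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)


def align_to(reference_times, timestamps, values):
    """Resample a slow stream onto the timestamps of a faster reference stream."""
    return [interpolate_at(timestamps, values, t) for t in reference_times]


# Illustrative data: a 1 Hz position stream resampled onto faster sample times.
slow_times = [0.0, 1.0, 2.0]
slow_values = [0.0, 10.0, 20.0]
fast_times = [0.0, 0.25, 0.5, 1.5]
aligned = align_to(fast_times, slow_times, slow_values)
print(aligned)  # [0.0, 2.5, 5.0, 15.0]
```

A benchmarking protocol would typically record which alignment method was used, since the interpolation error itself contributes to the measured fusion error.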

Comparative analysis methodologies enable systematic evaluation of different fusion approaches, including Kalman filtering variants, particle filters, and machine learning-based fusion techniques. Performance profiling tools measure how execution time is distributed across algorithm components, identifying computational bottlenecks and optimization opportunities. Statistical significance testing helps confirm that performance differences between algorithms are meaningful rather than attributable to measurement variance.
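As one way to carry out the significance test mentioned above, the sketch below applies a paired sign-flip permutation test to per-run latencies of two algorithms measured on the same inputs. The choice of test is an assumption made for illustration (a t-test or Wilcoxon test would be equally reasonable), the function name is hypothetical, and the latency figures are made-up sample data.

```python
import random
import statistics


def paired_permutation_test(a, b, n_resamples=10000, seed=0):
    """Two-sided paired permutation test on the mean difference a - b.

    Under the null hypothesis the sign of each paired difference is
    arbitrary, so signs are flipped at random and the resampled mean
    differences are compared with the observed one.
    """
    if len(a) != len(b) or not a:
        raise ValueError("samples must be non-empty and paired")
    rng = random.Random(seed)  # fixed seed keeps the result reproducible
    diffs = [x - y for x, y in zip(a, b)]
    observed = abs(statistics.mean(diffs))
    hits = 0
    for _ in range(n_resamples):
        flipped = [d if rng.random() < 0.5 else -d for d in diffs]
        if abs(statistics.mean(flipped)) >= observed:
            hits += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    return (hits + 1) / (n_resamples + 1)


# Made-up per-run latencies (ms) for two algorithms on the same ten inputs.
latency_a = [5.1, 5.3, 4.9, 5.2, 5.0, 5.4, 5.1, 5.2, 5.0, 5.3]
latency_b = [4.1, 4.2, 4.0, 4.3, 4.1, 4.2, 4.0, 4.1, 4.2, 4.3]
p_value = paired_permutation_test(latency_a, latency_b)
print(f"p = {p_value:.4f}")
```

A paired test fits benchmarking well because both algorithms see the same frames, so input-to-input variation cancels out of the comparison.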

Scalability benchmarking addresses the critical requirement for fusion algorithms to maintain performance as sensor networks expand. Load testing protocols evaluate algorithm behavior under increasing sensor counts, higher data rates, and extended operational periods. These assessments reveal performance degradation patterns and help establish operational limits for different fusion approaches in large-scale telemetry deployments.
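A load test of this kind can be sketched as a loop that repeats the same fusion step with progressively more simulated sensors and records the mean time per frame. Everything below is an illustrative assumption: inverse-variance weighting is used only as a simple stand-in for a real fusion algorithm, the synthetic readings are arbitrary, and the names `weighted_average_fusion` and `load_test` are hypothetical.

```python
import time


def weighted_average_fusion(readings, variances):
    """Inverse-variance weighted average of redundant scalar readings."""
    weights = [1.0 / v for v in variances]
    return sum(w * r for w, r in zip(weights, readings)) / sum(weights)


def load_test(fuse, sensor_counts, frames_per_step=200):
    """Return (sensor_count, mean_latency_ms) for each simulated sensor count."""
    results = []
    for n in sensor_counts:
        # Synthetic, deterministic inputs; a real test would replay recorded data.
        readings = [float(i % 7) for i in range(n)]
        variances = [1.0 + (i % 3) for i in range(n)]
        start = time.perf_counter()
        for _ in range(frames_per_step):
            fuse(readings, variances)
        elapsed = time.perf_counter() - start
        results.append((n, elapsed / frames_per_step * 1000.0))
    return results


scaling = load_test(weighted_average_fusion, [10, 100, 1000])
for count, latency_ms in scaling:
    print(f"{count:5d} sensors: {latency_ms:.4f} ms per frame")
```

Plotting latency against sensor count from such a run shows where the growth stops being roughly linear, which is one practical way to locate the operational limit for a given approach.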
