Optimizing Sensor Fusion in Autonomous Vehicles with World Models

APR 13, 2026 | 9 MIN READ

Autonomous Vehicle Sensor Fusion Background and Objectives

Autonomous vehicles represent one of the most transformative technological developments in modern transportation, fundamentally reshaping how we perceive mobility and safety. Autonomous driving technology has progressed through distinct phases, from basic driver assistance systems in the 1990s to today's sophisticated Level 4 autonomous capabilities. This progression has been marked by rapid improvements in computational power, artificial intelligence algorithms, and sensor technologies.

The historical development of sensor fusion in autonomous vehicles traces back to early military applications in the 1980s, where multiple sensor modalities were first integrated for navigation purposes. The transition to civilian automotive applications began in earnest during the 2000s, with pioneering efforts by research institutions and automotive manufacturers. Key milestones include the DARPA Grand Challenges of 2004-2007, which demonstrated the feasibility of autonomous navigation, and Google's self-driving car project, which began in 2009 and later became Waymo.

Current technological trends indicate a shift toward more sophisticated sensor fusion architectures that incorporate world models as fundamental components. World models represent learned representations of the environment that enable predictive reasoning and improved decision-making under uncertainty. This approach addresses critical limitations of traditional sensor fusion methods, which often struggle with sensor degradation, environmental variability, and real-time processing constraints.

The primary technical objectives driving current research focus on achieving robust perception capabilities across diverse operating conditions. These include maintaining accurate environmental understanding during adverse weather conditions, handling sensor failures gracefully, and reducing computational overhead while improving prediction accuracy. The integration of world models aims to create more resilient systems that can anticipate and adapt to dynamic scenarios.

Contemporary autonomous vehicle systems must process data from multiple sensor modalities including LiDAR, cameras, radar, and inertial measurement units. The challenge lies in optimally combining these heterogeneous data streams to create coherent, accurate representations of the vehicle's surroundings. World models offer a promising framework for achieving this integration by learning temporal and spatial relationships within the environment, enabling more sophisticated predictive capabilities than traditional rule-based fusion approaches.

The strategic importance of advancing sensor fusion with world models extends beyond immediate performance improvements. These technologies are essential for achieving higher levels of autonomy, reducing accident rates, and enabling widespread deployment of autonomous vehicles in complex urban environments.

Market Demand for Advanced Autonomous Driving Systems

The global autonomous vehicle market is experiencing unprecedented growth driven by increasing consumer demand for enhanced safety, convenience, and mobility solutions. Advanced sensor fusion technologies utilizing world models represent a critical component in meeting these evolving market expectations, as consumers and fleet operators seek vehicles capable of operating safely across diverse environmental conditions and complex traffic scenarios.

Regulatory frameworks worldwide are accelerating the adoption of advanced driver assistance systems and autonomous driving capabilities. The European Union's General Safety Regulation mandates various ADAS features in new vehicles, while similar initiatives in North America and Asia-Pacific regions are creating substantial market pull for sophisticated sensor fusion solutions. These regulatory requirements establish minimum performance standards that drive demand for more robust and reliable autonomous driving systems.

Commercial fleet operators, particularly in ride-hailing, delivery, and logistics sectors, represent a significant demand driver for advanced autonomous driving systems. These operators seek to reduce operational costs, improve safety records, and enhance service reliability through deployment of vehicles equipped with sophisticated sensor fusion capabilities. The economic benefits of reduced driver costs and improved operational efficiency create strong market incentives for adopting world model-enhanced autonomous systems.

Urban mobility challenges, including traffic congestion, parking limitations, and environmental concerns, are generating substantial consumer interest in autonomous vehicle solutions. Metropolitan areas worldwide are implementing smart city initiatives that favor autonomous vehicles, creating favorable market conditions for advanced sensor fusion technologies that enable reliable urban navigation and integration with intelligent transportation infrastructure.

The aging population in developed markets presents additional demand factors, as autonomous vehicles offer mobility solutions for individuals who may face driving limitations. This demographic trend, combined with increasing urbanization rates globally, expands the addressable market for autonomous driving systems that can operate safely and efficiently in complex environments through advanced sensor fusion and world model integration.

Enterprise adoption across industries including mining, agriculture, and construction is driving demand for specialized autonomous vehicle applications. These sectors require robust sensor fusion capabilities to operate in challenging environments where traditional GPS-based navigation may be insufficient, highlighting the market need for world model-enhanced systems that can maintain operational reliability across diverse conditions.

Current Sensor Fusion Challenges in Autonomous Vehicles

Sensor fusion in autonomous vehicles faces significant computational complexity when processing multiple data streams simultaneously. Current systems must integrate information from LiDAR, cameras, radar, IMU, and GPS sensors in real time, creating substantial processing bottlenecks. The heterogeneous nature of these sensors, each operating at a different frequency and producing a different data format, complicates the fusion process and often leads to latency issues that can compromise vehicle safety.

Temporal synchronization represents another critical challenge in existing sensor fusion architectures. Different sensors capture environmental data at varying time intervals, with cameras typically operating at 30-60 Hz while LiDAR systems may function at 10-20 Hz. This temporal misalignment creates difficulties in correlating sensor readings to construct coherent environmental representations, particularly in dynamic scenarios where objects move rapidly between sensor measurements.
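One common way to handle this kind of rate mismatch is to resample the faster stream at the timestamps of the slower one. The following is a minimal sketch, assuming both sensors report timestamps in seconds on a shared clock; the helper name `interpolate_at` and the sample data are hypothetical and only illustrate the idea.

```python
from bisect import bisect_left

def interpolate_at(timestamps, values, t_query):
    """Linearly interpolate a scalar signal at t_query.

    timestamps must be sorted ascending. Returns None when t_query lies
    outside the sampled interval (this sketch does not extrapolate).
    """
    if not timestamps or t_query < timestamps[0] or t_query > timestamps[-1]:
        return None
    i = bisect_left(timestamps, t_query)
    if timestamps[i] == t_query:
        return values[i]
    t0, t1 = timestamps[i - 1], timestamps[i]
    w = (t_query - t0) / (t1 - t0)
    return values[i - 1] + w * (values[i] - values[i - 1])

# Hypothetical data: a 30 Hz camera track of an object moving at 15 m/s.
camera_t = [k / 30.0 for k in range(7)]
camera_x = [15.0 * t for t in camera_t]

# Estimate where the camera track was at a LiDAR timestamp that falls
# between two camera frames.
lidar_t = 0.05
x_at_lidar = interpolate_at(camera_t, camera_x, lidar_t)
```

Linear interpolation is only reasonable when object motion is close to constant between samples; fast manoeuvres would call for a motion model instead.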

Environmental robustness remains a persistent issue across current sensor fusion implementations. Weather conditions such as heavy rain, snow, or fog can severely degrade sensor performance, with cameras losing visibility and LiDAR experiencing signal attenuation. Current fusion algorithms struggle to dynamically adjust sensor weightings based on environmental conditions, often resulting in degraded perception accuracy when individual sensors fail or provide unreliable data.
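To make "adjusting sensor weightings" concrete, here is a minimal sketch of inverse-variance weighting in which a per-sensor reliability score inflates the variance of a degraded sensor. The function name `fuse_ranges`, the reliability score, and all numbers are assumptions for illustration, not a description of any production system.

```python
def fuse_ranges(estimates):
    """Inverse-variance weighted fusion of scalar range estimates.

    estimates: list of (value_m, variance_m2, reliability) tuples.
    reliability is a hypothetical health score in [0, 1]; lower values
    inflate the variance so the sensor contributes less, and 0 drops
    the sensor entirely.
    Returns (fused_value, fused_variance).
    """
    weighted = []
    for value, variance, reliability in estimates:
        if reliability <= 0.0:
            continue  # treat the sensor as failed
        effective_variance = variance / reliability
        weighted.append((value, 1.0 / effective_variance))
    if not weighted:
        raise ValueError("no usable sensor estimates")
    total_weight = sum(w for _, w in weighted)
    fused = sum(v * w for v, w in weighted) / total_weight
    return fused, 1.0 / total_weight

# Clear weather: camera and radar are trusted equally.
clear = fuse_ranges([(20.0, 1.0, 1.0), (21.0, 1.0, 1.0)])

# Fog (hypothetical): camera reliability drops, so radar dominates.
fog = fuse_ranges([(20.0, 1.0, 0.25), (21.0, 1.0, 1.0)])
```

In the fog case the fused range moves toward the radar reading and the fused variance increases, which is the behaviour a downstream planner would want to see.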

Calibration drift and sensor degradation pose ongoing operational challenges for deployed autonomous vehicle systems. Over time, mechanical vibrations, temperature fluctuations, and component aging can cause sensors to drift from their initial calibration parameters. Current systems lack robust mechanisms for detecting and compensating for these gradual changes, leading to accumulated errors in sensor fusion outputs that can compromise navigation accuracy.
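As an illustration of what detecting gradual drift can involve, the sketch below tracks an exponentially weighted moving average of the residual between two sensors that measure the same quantity. The class name `DriftMonitor`, the smoothing factor, and the threshold are hypothetical choices; a real system would need tuning and a more careful statistical test.

```python
class DriftMonitor:
    """Flag a persistent bias in cross-sensor residuals."""

    def __init__(self, alpha=0.1, threshold=0.5):
        self.alpha = alpha          # smoothing factor for the moving average
        self.threshold = threshold  # bias magnitude that counts as drift
        self.ewma = 0.0

    def update(self, residual):
        """Fold in one residual; return True when drift is suspected."""
        self.ewma = (1.0 - self.alpha) * self.ewma + self.alpha * residual
        return abs(self.ewma) > self.threshold

# Zero-mean noise (hypothetical) should not trigger the monitor.
noise_monitor = DriftMonitor()
noise_flags = [noise_monitor.update(r) for r in [0.2, -0.2] * 10]

# A constant 1.0 bias should eventually trigger it.
bias_monitor = DriftMonitor()
bias_flags = [bias_monitor.update(1.0) for _ in range(10)]
```

The moving average suppresses zero-mean noise while letting a sustained offset accumulate, which is why the second monitor fires after several updates rather than immediately.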

The integration of semantic understanding with geometric perception presents another significant hurdle. While current sensor fusion techniques excel at detecting and tracking objects geometrically, they often struggle to maintain consistent semantic classifications across different sensor modalities. This limitation becomes particularly problematic in complex urban environments where accurate object classification is crucial for safe navigation decisions.

Scalability constraints in current sensor fusion architectures limit their ability to incorporate additional sensors or adapt to new sensor technologies. Most existing systems are designed around fixed sensor configurations, making it difficult to integrate emerging sensor types or adjust to different vehicle platforms without substantial system redesign and revalidation efforts.

Existing Sensor Fusion Solutions with World Models

01 Multi-sensor data fusion algorithms and methods

Advanced algorithms are employed to combine data from multiple sensors to improve accuracy and reliability. These methods include Kalman filtering, Bayesian inference, and machine learning approaches that process heterogeneous sensor inputs. The fusion algorithms handle data synchronization, weighting, and conflict resolution to produce optimized output that is more accurate than individual sensor readings.

- Sensor fusion for autonomous vehicle perception: Integration of camera, radar, lidar, and ultrasonic sensors to create comprehensive environmental perception for autonomous driving systems. The fusion process combines complementary strengths of different sensor modalities to detect objects, estimate distances, and understand road conditions under various weather and lighting scenarios. This approach enhances safety and decision-making capabilities in autonomous navigation.

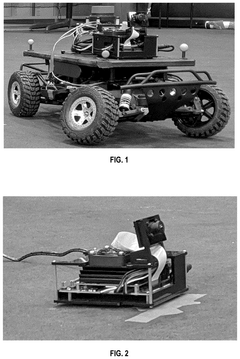

- Optimization of sensor fusion architecture and hardware configuration: Design and optimization of the physical and computational architecture for sensor fusion systems, including sensor placement, processing unit selection, and communication protocols. This involves determining optimal sensor configurations, reducing computational complexity, and minimizing latency while maximizing fusion performance. Hardware-software co-design approaches are utilized to achieve efficient real-time processing.

- Adaptive and dynamic sensor fusion strategies: Techniques that dynamically adjust fusion parameters and sensor weights based on real-time conditions and sensor reliability. These adaptive methods monitor sensor health, detect anomalies, and reconfigure the fusion process to maintain optimal performance under changing environmental conditions or sensor degradation. The systems can automatically switch between different fusion modes based on operational requirements.

- Sensor fusion for industrial and IoT applications: Application of sensor fusion optimization in industrial monitoring, smart manufacturing, and Internet of Things environments. Multiple sensors measuring temperature, pressure, vibration, and other parameters are fused to enable predictive maintenance, quality control, and process optimization. The fusion systems are designed for energy efficiency, scalability, and integration with existing industrial infrastructure.
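The Kalman filtering named under solution 01 can be illustrated with a one-dimensional sketch. It assumes a constant-position model and scalar measurements with known variances; the function names and the sample ranges are hypothetical.

```python
def kalman_predict(x, p, q):
    """Constant-position model: state unchanged, variance grows by q."""
    return x, p + q

def kalman_update(x, p, z, r):
    """Fold measurement z (variance r) into estimate x (variance p)."""
    k = p / (p + r)            # Kalman gain: how much to trust z
    x_new = x + k * (z - x)
    p_new = (1.0 - k) * p
    return x_new, p_new

# Start from a vague prior and fuse two hypothetical range readings:
# a precise LiDAR range followed by a noisier radar range.
x, p = 0.0, 1000.0
for z, r in [(10.2, 0.04), (9.7, 0.25)]:
    x, p = kalman_predict(x, p, q=0.01)
    x, p = kalman_update(x, p, z, r)
```

The resulting estimate sits closer to the lower-variance measurement, which is the weighting behaviour the paragraph on fusion algorithms describes.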

02 Sensor fusion for autonomous vehicle perception

Integration of camera, radar, lidar, and ultrasonic sensors to create comprehensive environmental perception for autonomous driving systems. The fusion optimization enhances object detection, tracking, and classification capabilities while reducing false positives. This approach improves decision-making for path planning and obstacle avoidance in complex driving scenarios.

03 Real-time sensor fusion processing optimization

Techniques for reducing computational latency and improving processing efficiency in sensor fusion systems. These optimizations include parallel processing architectures, edge computing implementations, and resource allocation strategies. The methods ensure that fused sensor data is available with minimal delay for time-critical applications.

04 Adaptive sensor fusion with dynamic weighting

Systems that dynamically adjust the contribution of individual sensors based on environmental conditions and sensor reliability. The optimization includes confidence scoring, fault detection, and automatic reconfiguration when sensors degrade or fail. This adaptive approach maintains system performance across varying operational conditions.

05 Sensor fusion calibration and alignment optimization

Methods for precise spatial and temporal calibration of multiple sensors to ensure accurate data fusion. These techniques address sensor mounting variations, timing synchronization, and coordinate system transformations. Optimization algorithms minimize calibration errors and maintain alignment accuracy over the system lifecycle.
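The coordinate system transformations mentioned above amount to applying each sensor's extrinsic calibration, a rotation plus a translation, to its measurements. The sketch below shows that step for a single point; the mounting values and the function names are hypothetical, and only yaw is modelled to keep the example short.

```python
import math

def yaw_rotation(yaw_rad):
    """3x3 rotation matrix for a rotation about the vertical (z) axis."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def transform_point(rotation, translation, point):
    """Map a point from the sensor frame to the vehicle frame: p_v = R p_s + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )

# Hypothetical extrinsics: sensor mounted 1.2 m forward and 1.8 m up,
# rotated 90 degrees about the vertical axis.
R = yaw_rotation(math.pi / 2)
t = (1.2, 0.0, 1.8)

# A return 10 m straight ahead of the sensor, expressed in the vehicle frame.
p_vehicle = transform_point(R, t, (10.0, 0.0, 0.0))
```

An error of even a fraction of a degree in the rotation grows with range, which is why calibration accuracy has to be maintained over the system lifecycle.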

Key Players in Autonomous Vehicle Sensor Technology

The autonomous vehicle sensor fusion market is growing rapidly, driven by increasing demand for advanced driver assistance systems and fully autonomous capabilities. The industry is currently in a transitional phase between Level 2 and Level 3 automation, with significant investments flowing into research and development. The market is projected to expand further as regulatory frameworks evolve and consumer acceptance grows. Technology maturity varies significantly across players, with established automotive suppliers like Robert Bosch GmbH, Continental Teves, and Infineon Technologies providing foundational sensor technologies, while specialized companies such as Waymo LLC and GM Cruise Holdings lead in advanced AI-driven fusion algorithms. Traditional automakers including Toyota Motor Corp., BMW, and Nissan are integrating these technologies into production vehicles, whereas emerging players like BYD and other Chinese manufacturers are rapidly advancing their capabilities. The competitive landscape shows a clear division between hardware providers, software developers, and integrated solution providers, with world models representing the cutting-edge approach to sensor fusion optimization.

Robert Bosch GmbH

Technical Solution: Bosch has developed an integrated sensor fusion platform that combines radar, camera, and ultrasonic sensors with their proprietary world modeling algorithms. Their approach focuses on creating a unified environmental representation that processes multi-modal sensor data through advanced Kalman filtering and machine learning techniques. The system employs hierarchical world models that operate at different temporal and spatial scales, enabling both immediate obstacle detection and long-term path planning. Bosch's solution emphasizes cost-effectiveness and scalability, utilizing optimized algorithms that can run on automotive-grade processors while maintaining high accuracy in diverse driving conditions.

Strengths: Strong automotive industry partnerships and cost-effective solutions suitable for mass production vehicles. Weaknesses: May have less advanced AI capabilities compared to pure-play autonomous driving companies.

Waymo LLC

Technical Solution: Waymo employs a comprehensive sensor fusion architecture that integrates LiDAR, cameras, and radar data through advanced machine learning algorithms and world models. Their system uses deep neural networks to create detailed 3D representations of the environment, enabling real-time object detection, tracking, and prediction. The world model continuously updates based on sensor inputs, allowing the vehicle to anticipate and respond to dynamic scenarios. Waymo's approach emphasizes redundancy and cross-validation between sensor modalities to ensure robust perception even when individual sensors face limitations due to weather or environmental conditions.

Strengths: Industry-leading autonomous driving experience with extensive real-world testing data and proven safety record. Weaknesses: High computational requirements and dependency on expensive LiDAR systems may limit scalability.

Core Innovations in Multi-Modal Sensor Integration

World model generation and correction for autonomous vehicles

Patent pending: US20250003766A1

Innovation

- The system generates and corrects a world model in real-time or near real-time using sensor data from LiDAR, radar, cameras, and IMU, incorporating semantic and geometric corrections to ensure accurate navigation-relevant features, including road conditions and traffic signals, by comparing sensor data with static map data.

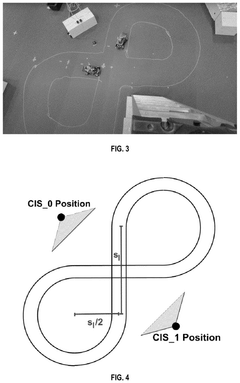

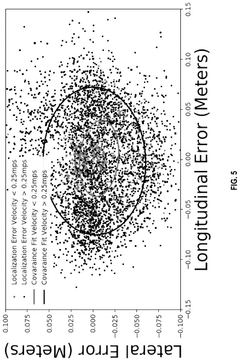

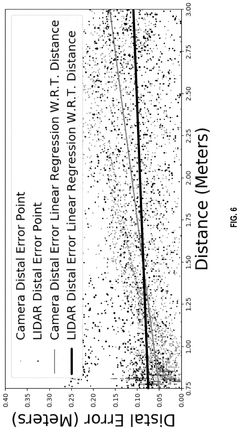

Systems and methods for cooperative sensor fusion by parameterized covariance generation in connected autonomous vehicles

Patent pending: US20250026371A1

Innovation

- A parameterized covariance generation system that estimates errors using key predictor terms correlated with sensing and localization accuracy, incorporating a tiered fusion model with local and global sensor fusion steps, and using measured distance and velocity to generate accurate covariance matrices.
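To give a feel for what generating a covariance from predictor terms can look like, the sketch below builds a diagonal position covariance that grows with measured distance and speed. This is an illustrative assumption only: the quadratic form, the coefficients, and the function name `parameterized_covariance` are hypothetical and are not taken from the patent.

```python
def parameterized_covariance(distance_m, speed_mps,
                             base_var=0.05, k_dist=0.0004, k_speed=0.002):
    """Return a 2x2 diagonal position covariance.

    Hypothetical model: var = base_var + k_dist * d^2 + k_speed * v^2.
    The coefficients are placeholders, not values from any real system.
    """
    var = base_var + k_dist * distance_m ** 2 + k_speed * speed_mps ** 2
    return [[var, 0.0], [0.0, var]]

# A nearby, stationary object versus a distant, moving one.
near_cov = parameterized_covariance(distance_m=10.0, speed_mps=0.0)
far_cov = parameterized_covariance(distance_m=50.0, speed_mps=10.0)
```

A receiving vehicle could then weight a shared detection by such a covariance, so that reports made at long range or high speed count for less in the fused estimate.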

Safety Standards and Regulations for Autonomous Vehicles

The regulatory landscape for autonomous vehicles with advanced sensor fusion and world model technologies is rapidly evolving across multiple jurisdictions. Current safety standards primarily focus on functional safety requirements outlined in ISO 26262, which establishes systematic approaches for automotive safety lifecycle management. However, these existing frameworks were not specifically designed to address the complexities introduced by machine learning-based world models and multi-sensor fusion systems.

The Society of Automotive Engineers (SAE) J3016 standard defines automation levels from 0 to 5, providing a foundational framework for regulatory classification. Level 4 and 5 autonomous vehicles utilizing sophisticated sensor fusion with world models face particular scrutiny under emerging regulations. The European Union's Type Approval Framework and the United States' Federal Motor Vehicle Safety Standards are being continuously updated to accommodate these advanced systems.

Key regulatory challenges emerge from the probabilistic nature of world model predictions and sensor fusion algorithms. Traditional deterministic safety validation methods struggle to adequately assess systems that rely on continuous learning and environmental interpretation. Regulators are developing new testing protocols that incorporate scenario-based validation, requiring demonstration of safe performance across thousands of edge cases and environmental conditions.

Data privacy and cybersecurity regulations significantly impact sensor fusion implementations. The General Data Protection Regulation (GDPR) in Europe and similar frameworks globally impose strict requirements on how sensor data is collected, processed, and stored. World models that continuously learn from environmental data must comply with these privacy standards while maintaining operational effectiveness.

Certification processes are evolving to include simulation-based validation methodologies. Regulators increasingly accept virtual testing environments for validating sensor fusion algorithms and world model performance, recognizing the impracticality of real-world testing for all possible scenarios. However, these simulation frameworks must meet stringent accuracy and coverage requirements to gain regulatory approval.

International harmonization efforts are underway through organizations like the World Forum for Harmonization of Vehicle Regulations (UNECE WP.29), aiming to establish consistent global standards for autonomous vehicle technologies incorporating advanced sensor fusion and world modeling capabilities.

Real-Time Processing Requirements for AV Sensor Systems

Real-time processing in autonomous vehicle sensor systems represents one of the most critical technical challenges in achieving safe and reliable self-driving capabilities. The stringent latency requirements demand that sensor data from cameras, LiDAR, radar, and IMU units be processed within milliseconds to enable timely decision-making. Current industry standards typically require end-to-end processing latencies of less than 100 milliseconds for critical safety functions, with perception pipelines needing to operate at frequencies of 10-30 Hz depending on the driving scenario.
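The figures above translate into a simple budget check: a pipeline running at 10-30 Hz has roughly 33-100 ms per frame, and the stage latencies must sum to less than the 100 ms end-to-end limit. The sketch below works through that arithmetic; the stage names and per-stage latencies are hypothetical.

```python
def frame_period_ms(rate_hz):
    """Time available per frame at a given pipeline frequency."""
    return 1000.0 / rate_hz

def check_budget(stage_latencies_ms, budget_ms=100.0):
    """Return (total latency, whether it fits the end-to-end budget)."""
    total = sum(stage_latencies_ms.values())
    return total, total <= budget_ms

# Hypothetical per-stage latencies in milliseconds.
stages = {
    "capture": 15.0,
    "perception": 40.0,
    "fusion": 12.0,
    "planning": 20.0,
    "actuation": 8.0,
}

total_ms, fits = check_budget(stages)
period_10hz = frame_period_ms(10.0)
period_30hz = frame_period_ms(30.0)
```

With these assumed numbers the pipeline fits the 100 ms budget with 5 ms to spare, but it could not complete a full pass within one 30 Hz frame period without pipelining stages across frames.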

The computational complexity of sensor fusion algorithms creates significant bottlenecks in meeting these real-time constraints. Modern autonomous vehicles generate terabytes of data per hour from multiple sensor modalities, requiring sophisticated processing architectures to handle the massive data throughput. Edge computing platforms equipped with specialized hardware accelerators, including GPUs, FPGAs, and dedicated AI chips, have become essential components for achieving the necessary processing speeds while maintaining power efficiency within vehicle constraints.

Latency optimization strategies focus on several key areas including algorithmic efficiency, hardware acceleration, and data pipeline optimization. Techniques such as temporal data buffering, predictive processing, and selective attention mechanisms help reduce computational overhead while maintaining accuracy. Multi-threaded processing architectures enable parallel execution of sensor fusion tasks, allowing different sensor modalities to be processed simultaneously rather than sequentially.
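The parallel execution described above can be sketched with a thread pool that runs one task per sensor modality. This is a minimal illustration, assuming placeholder per-modality functions; the names `summarize_camera`, `summarize_lidar`, `summarize_radar`, and `process_in_parallel` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def summarize_camera(pixels):
    """Placeholder camera step: mean pixel intensity."""
    return sum(pixels) / len(pixels)

def summarize_lidar(ranges_m):
    """Placeholder LiDAR step: nearest return in metres."""
    return min(ranges_m)

def summarize_radar(speeds_mps):
    """Placeholder radar step: largest absolute radial speed."""
    return max(abs(v) for v in speeds_mps)

def process_in_parallel(handlers, inputs):
    """Submit one task per modality and collect the results by name."""
    with ThreadPoolExecutor(max_workers=len(handlers)) as pool:
        futures = {name: pool.submit(fn, inputs[name])
                   for name, fn in handlers.items()}
        return {name: future.result() for name, future in futures.items()}

handlers = {
    "camera": summarize_camera,
    "lidar": summarize_lidar,
    "radar": summarize_radar,
}
inputs = {
    "camera": [10, 20, 30],
    "lidar": [12.5, 7.25, 30.0],
    "radar": [-3.0, 1.5],
}
results = process_in_parallel(handlers, inputs)
```

In CPython, threads mainly help when the per-modality work releases the interpreter lock, as native or GPU-backed libraries typically do; pure-Python computation like these placeholders would not actually run faster this way.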

The integration of world models introduces additional computational demands that must be balanced against real-time requirements. Dynamic model updating, state prediction, and uncertainty quantification all require significant processing resources. Advanced scheduling algorithms and priority-based processing ensure that critical safety functions receive computational resources first, while less time-sensitive tasks can be deferred or processed at lower frequencies.
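The priority-based processing described above can be sketched as a priority queue drained against a per-frame compute budget: safety-critical tasks run first and anything that no longer fits is deferred. Task names, costs, and the function name `schedule` are hypothetical.

```python
import heapq

def schedule(tasks, budget_ms):
    """Greedy priority scheduling within a time budget.

    tasks: list of (priority, name, cost_ms); a lower priority number
    means more critical. Returns (run, deferred) lists of task names.
    """
    # The index breaks ties so equal priorities keep submission order.
    heap = [(prio, idx, name, cost)
            for idx, (prio, name, cost) in enumerate(tasks)]
    heapq.heapify(heap)
    run, deferred, remaining = [], [], budget_ms
    while heap:
        _, _, name, cost = heapq.heappop(heap)
        if cost <= remaining:
            run.append(name)
            remaining -= cost
        else:
            deferred.append(name)  # retry in a later frame
    return run, deferred

# Hypothetical workload for one 100 ms frame.
tasks = [
    (2, "world_model_update", 50.0),
    (0, "emergency_braking_check", 10.0),
    (3, "map_refinement", 40.0),
    (1, "object_tracking", 30.0),
]
run, deferred = schedule(tasks, budget_ms=100.0)
```

Here the braking check, tracking, and world model update consume 90 ms, so the 40 ms map refinement is deferred to a later frame.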

Power consumption constraints further complicate real-time processing requirements, as autonomous vehicles must balance computational performance with energy efficiency. Thermal management becomes crucial when high-performance processors operate continuously in automotive environments, requiring sophisticated cooling systems and dynamic performance scaling to prevent overheating while maintaining processing capabilities.
