AI Techniques in Graphics vs Classic Techniques: Render Speed

MAR 30, 2026 · 9 MIN READ

AI Graphics Rendering Background and Speed Objectives

The evolution of computer graphics rendering has undergone a fundamental transformation over the past decade, driven by the convergence of artificial intelligence and traditional rasterization techniques. Classic rendering methods, established since the 1970s, have relied on mathematical algorithms for polygon rasterization, texture mapping, and lighting calculations. These approaches achieved predictable performance through optimized hardware pipelines but faced inherent limitations in handling complex visual phenomena such as global illumination, realistic material interactions, and dynamic lighting scenarios.

The emergence of AI-driven rendering techniques represents a shift in how graphics processing units handle visual computation. Machine learning models, particularly neural networks, have proven effective at denoising ray-traced images, reducing the number of rays that must be traced, and generating photorealistic textures. Upscaling techniques such as NVIDIA's DLSS, which uses a neural network, and AMD's FSR, whose earlier versions rely on hand-tuned spatial and temporal algorithms rather than machine learning, improve performance by rendering at lower resolutions and reconstructing the output at display resolution.

Contemporary graphics applications demand high rendering speeds while maintaining visual fidelity across diverse platforms, from mobile devices to high-end gaming systems. Competitive games typically target consistent frame rates of 60 FPS or higher, while virtual reality applications commonly target 90 FPS or more to reduce the risk of motion sickness. Professional visualization sectors, including architectural rendering and film production, prioritize photorealistic quality alongside reasonable processing times for iterative design workflows.
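These frame-rate targets translate directly into a time budget per frame, which is the number a renderer actually has to hit. The following is a minimal sketch of that arithmetic; the function name `frame_budget_ms` is an illustrative assumption, not part of any graphics API.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Return the time available to produce one frame, in milliseconds."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


# Budgets for a few common targets (60 and 90 FPS are the ones named above).
for fps in (30, 60, 90, 120):
    print(f"{fps:>3} FPS -> {frame_budget_ms(fps):.2f} ms per frame")
```

Moving from 60 FPS to 90 FPS shrinks the budget from about 16.7 ms to about 11.1 ms, so everything in the pipeline, including any AI inference pass, has to fit in roughly two thirds of the time.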

The primary objective of integrating AI techniques into graphics rendering is to achieve a better performance-to-quality ratio than traditional methods. Specific targets include reducing the average time per displayed frame by 40-60% through intelligent frame interpolation, minimizing computational overhead for complex lighting calculations, and enabling real-time ray tracing on mid-range hardware. Frame interpolation raises the displayed frame rate but does not by itself lower input latency, because an interpolated frame can only be shown once the following rendered frame is available. Additionally, AI-enhanced rendering aims to maintain temporal stability across animated sequences while reducing artifacts commonly associated with traditional upscaling and anti-aliasing techniques.

Modern rendering pipelines increasingly adopt hybrid approaches that combine the reliability of classic rasterization with the efficiency gains of AI acceleration. This integration strategy addresses the growing demand for immersive visual experiences across gaming, simulation, and interactive media applications while ensuring backward compatibility with existing graphics infrastructure.

Market Demand for High-Speed AI-Enhanced Rendering

The entertainment and media industry represents the largest segment driving demand for high-speed AI-enhanced rendering technologies. Gaming companies require real-time rendering capabilities that can deliver photorealistic graphics while maintaining consistent frame rates above 60 FPS. Major gaming studios are increasingly adopting upscaling techniques like DLSS and FSR to achieve higher output resolutions without proportional increases in computational overhead. The film and animation sector demands accelerated rendering pipelines to reduce production timelines, with studios seeking solutions that can cut rendering times from weeks to days for complex scenes.

Enterprise visualization markets are experiencing rapid growth in demand for AI-enhanced rendering solutions. Architectural firms, automotive manufacturers, and product design companies require interactive 3D visualization tools that can render complex models in real-time during client presentations and design reviews. The ability to modify materials, lighting, and geometry while maintaining smooth visual feedback has become a critical competitive advantage in these sectors.

Cloud gaming and streaming services represent an emerging high-growth market segment. These platforms require efficient rendering solutions that can deliver high-quality graphics while minimizing bandwidth requirements and latency. AI-enhanced rendering techniques that can maintain visual fidelity at lower computational costs are essential for the economic viability of cloud-based gaming services, particularly as user expectations for visual quality continue to rise.

The virtual and augmented reality markets are driving specialized demand for ultra-low latency rendering solutions. VR applications require consistent frame rates to prevent motion sickness, while AR applications need real-time integration of virtual objects with real-world environments. AI-enhanced rendering techniques that can predict and pre-render frames or optimize rendering pipelines for specific hardware configurations are becoming increasingly valuable.

Professional visualization sectors including medical imaging, scientific simulation, and engineering analysis are adopting AI-enhanced rendering for improved workflow efficiency. These applications often involve large datasets that benefit from intelligent rendering optimizations, such as adaptive level-of-detail algorithms and predictive caching systems that can anticipate user interactions and pre-compute relevant visualizations.

Current AI vs Classic Rendering Performance Landscape

The contemporary rendering landscape presents a stark dichotomy between traditional rasterization techniques and emerging AI-powered methodologies, each demonstrating distinct performance characteristics across various computational scenarios. Traditional rendering pipelines, built upon decades of optimization in GPU architectures, continue to dominate real-time applications through highly parallelized rasterization processes that can achieve consistent frame rates exceeding 60 FPS in complex gaming environments.

Classic techniques leverage specialized hardware acceleration through dedicated graphics processing units, utilizing established algorithms such as z-buffering, texture mapping, and shader-based lighting calculations. These methods demonstrate predictable performance scaling with hardware capabilities, allowing developers to optimize rendering workloads through established techniques like level-of-detail management, occlusion culling, and temporal reprojection.
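Z-buffering, the first of the classic algorithms named above, fits in a few lines. The sketch below is a CPU-side illustration only: real GPUs perform this depth test in fixed-function hardware, and the `fragments` tuple layout and the function name are assumptions made for the example.

```python
import math


def rasterize_with_zbuffer(width, height, fragments):
    """Resolve visibility per pixel with a depth buffer.

    fragments: iterable of (x, y, depth, color); smaller depth means closer.
    Returns a height x width grid holding the color of the nearest fragment.
    """
    depth = [[math.inf] * width for _ in range(height)]
    color = [[None] * width for _ in range(height)]
    for x, y, z, c in fragments:
        # Keep a fragment only if it is inside the image and nearer than
        # whatever has already been written to that pixel.
        if 0 <= x < width and 0 <= y < height and z < depth[y][x]:
            depth[y][x] = z
            color[y][x] = c
    return color


frags = [
    (0, 0, 5.0, "red"),
    (0, 0, 2.0, "blue"),   # closer than red, so it wins pixel (0, 0)
    (1, 0, 3.0, "green"),
    (0, 0, 9.0, "grey"),   # farther than blue, so it is rejected
]
image = rasterize_with_zbuffer(2, 1, frags)
print(image)  # [['blue', 'green']]
```

The cost is one comparison and at most one write per fragment, regardless of submission order, which is part of why rasterization performance scales so predictably.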

AI-driven rendering approaches, particularly neural rendering and machine learning-enhanced graphics pipelines, exhibit fundamentally different performance profiles. Upscalers such as DLSS, which is based on deep learning, and FSR, whose earlier versions are hand-tuned rather than learned, render at lower resolutions and reconstruct a higher-resolution output, with reported performance improvements of 40-70% while maintaining visual fidelity close to native resolution rendering.
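Much of the gain from upscaling comes from simple pixel arithmetic: shading cost scales roughly with the number of pixels rendered, and that number falls with the square of the per-axis render scale. The sketch below illustrates this; the scale values are arbitrary examples rather than the presets of any particular upscaler.

```python
def shaded_pixel_fraction(render_scale: float) -> float:
    """Fraction of output pixels actually shaded when each axis is
    scaled by render_scale (1.0 means native resolution)."""
    if not 0.0 < render_scale <= 1.0:
        raise ValueError("render_scale must be in (0, 1]")
    return render_scale ** 2


output_w, output_h = 3840, 2160  # 4K output, chosen for illustration
for scale in (1.0, 0.75, 0.5):
    w, h = round(output_w * scale), round(output_h * scale)
    share = shaded_pixel_fraction(scale)
    print(f"scale {scale:.2f}: render {w}x{h}, {share:.0%} of output pixels")
```

The achieved speedup is smaller than the pixel ratio suggests, because the upscaling pass has its own cost and not every stage of a frame scales with resolution.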

Neural radiance fields and differentiable rendering techniques present mixed performance outcomes, excelling in photorealistic scene reconstruction but requiring substantial computational overhead during training phases. Real-time inference performance varies significantly based on network architecture complexity, with lightweight models achieving interactive frame rates while high-fidelity implementations often necessitate offline processing.

Hybrid approaches increasingly dominate the performance landscape, combining traditional rasterization for geometric processing with AI acceleration for specific rendering tasks such as denoising, anti-aliasing, and global illumination approximation. These integrated solutions demonstrate superior performance characteristics compared to purely classical or AI-based approaches, leveraging the computational efficiency of established graphics pipelines while incorporating machine learning enhancements for quality improvements.

Current benchmarking data indicates that AI techniques excel in scenarios requiring complex lighting simulation, material appearance modeling, and temporal stability enhancement, while traditional methods maintain advantages in geometric complexity handling and memory efficiency for large-scale scenes.

Existing AI and Classic Rendering Speed Solutions

01 AI-accelerated rendering using neural networks

Artificial intelligence techniques employ neural networks and machine learning models to accelerate graphics rendering processes. These methods can predict and generate intermediate frames, optimize rendering paths, and reduce computational overhead compared to traditional rasterization approaches. Deep learning models are trained to understand scene composition and can intelligently skip unnecessary calculations while maintaining visual quality.

- Real-time ray tracing optimization: Advanced techniques combine artificial intelligence with ray tracing algorithms to improve rendering speed. These methods utilize intelligent sampling, denoising algorithms, and predictive models to reduce the number of rays needed for photorealistic results. The approach significantly outperforms classic ray tracing methods by selectively focusing computational resources on visually important areas.

- Hybrid rendering pipelines: Hybrid approaches integrate both artificial intelligence-based techniques and traditional rendering methods to balance speed and quality. These systems dynamically switch between different rendering modes based on scene complexity and performance requirements. The combination leverages the strengths of both methodologies to achieve faster frame rates while maintaining acceptable visual fidelity.

- Procedural content generation with AI: Artificial intelligence techniques enable automated generation of graphics content, reducing manual modeling time and accelerating the overall rendering workflow. These methods use generative models to create textures, geometry, and lighting configurations that would traditionally require extensive artist input. The automated approach significantly speeds up content creation compared to conventional manual techniques.

- Adaptive level-of-detail rendering: Intelligent systems dynamically adjust rendering quality and detail levels based on viewing distance, scene importance, and available computational resources. These techniques use predictive algorithms to determine optimal detail levels in real-time, resulting in faster rendering speeds compared to fixed-detail classic approaches. The adaptive methods maintain visual quality where needed while reducing unnecessary computations in less critical areas.
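As a baseline for the adaptive level-of-detail item above, the sketch below shows the classic distance-threshold heuristic that predictive approaches aim to improve on. It is not a learned or predictive method, and the threshold values and the name `select_lod` are hypothetical.

```python
from bisect import bisect_right

# Hypothetical switch distances in scene units: closer than 10 uses LOD 0
# (full detail), 10 to 30 uses LOD 1, 30 to 80 uses LOD 2, beyond uses LOD 3.
LOD_DISTANCES = [10.0, 30.0, 80.0]


def select_lod(distance: float, thresholds=LOD_DISTANCES) -> int:
    """Return the mesh detail level for an object at the given distance.

    0 is the most detailed mesh; higher indices are progressively coarser.
    """
    if distance < 0:
        raise ValueError("distance must be non-negative")
    return bisect_right(thresholds, distance)


for d in (5.0, 10.0, 50.0, 200.0):
    print(f"distance {d:>5}: LOD {select_lod(d)}")
```

An adaptive system would replace the fixed table with a decision that also accounts for factors such as screen coverage, scene importance, and the remaining frame budget.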

02 Real-time ray tracing optimization

Advanced techniques combine AI-based denoising with hardware-accelerated ray tracing to achieve faster rendering speeds. These methods utilize intelligent sampling strategies and noise reduction algorithms to produce high-quality images with fewer samples per pixel, significantly reducing render times compared to classic ray tracing implementations that require extensive sampling for clean results.

03 Hybrid rendering pipelines

Modern graphics systems implement hybrid approaches that intelligently switch between AI-driven techniques and traditional rendering methods based on scene complexity and performance requirements. These adaptive systems analyze rendering workloads in real-time and allocate computational resources efficiently, combining the speed advantages of both methodologies to optimize overall performance.

04 Texture and material synthesis using AI

Machine learning algorithms can generate and synthesize textures and materials procedurally, reducing memory bandwidth requirements and preprocessing time compared to traditional texture mapping techniques. These AI-driven methods can create high-resolution details on-demand during rendering, eliminating the need for storing and loading large texture assets, thereby improving rendering throughput.

05 Predictive frame generation and interpolation

AI techniques enable predictive rendering where future frames are anticipated and partially computed based on motion vectors and scene analysis. These methods can interpolate frames intelligently to maintain smooth frame rates, reducing the actual number of fully rendered frames needed. This approach offers significant speed improvements over classic techniques that must render every frame from scratch without prediction capabilities.
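To make the idea of generated frames concrete, the sketch below shows the simplest possible form: a linear blend of two rendered frames, plus the resulting displayed frame rate. This is a deliberately naive stand-in. Production frame generation uses motion vectors and learned models rather than a plain blend, and both function names are assumptions for illustration.

```python
def blend_frames(prev_frame, next_frame, t=0.5):
    """Linearly blend two equally sized grayscale frames (lists of rows).

    t=0 returns prev_frame, t=1 returns next_frame.
    """
    if len(prev_frame) != len(next_frame) or any(
        len(a) != len(b) for a, b in zip(prev_frame, next_frame)
    ):
        raise ValueError("frames must have identical dimensions")
    return [
        [(1.0 - t) * a + t * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(prev_frame, next_frame)
    ]


def displayed_fps(rendered_fps: float, generated_per_rendered: int = 1) -> float:
    """Displayed frame rate when extra frames are inserted between rendered ones."""
    return rendered_fps * (1 + generated_per_rendered)


middle = blend_frames([[0.0, 1.0]], [[1.0, 1.0]])
print(middle)             # [[0.5, 1.0]]
print(displayed_fps(60))  # 120
```

Because interpolation needs the next rendered frame before it can produce the in-between one, it improves smoothness at the cost of some added latency, which is why frame rate and responsiveness should be measured separately.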

Major Players in AI Graphics and Rendering Industry

The market for AI-accelerated versus classic rendering is a rapidly evolving competitive landscape, currently in its growth phase, with significant expansion potential as traditional rendering approaches face disruption from AI-accelerated solutions. Technology maturity varies considerably across market players. Established graphics leaders like Adobe, Autodesk, and Sony Interactive Entertainment are integrating AI capabilities into existing pipelines, while semiconductor companies such as Intel, AMD, Huawei, and MediaTek develop specialized hardware acceleration. Cloud rendering specialists like Jiangsu Zanqi Technology and AI-focused companies such as Google and Baidu are pioneering novel approaches. Traditional graphics software companies are extending their classic techniques with AI enhancements, while large technology companies use their AI expertise to enter graphics markets, creating a diverse ecosystem in which render speed optimization increasingly depends on AI integration capabilities.

Adobe, Inc.

Technical Solution: Adobe has integrated AI-powered rendering acceleration into their Creative Cloud suite, particularly through their Sensei AI platform. Their approach combines traditional graphics techniques with machine learning algorithms to enhance rendering speed in applications like After Effects and Premiere Pro. Adobe's AI techniques include intelligent frame interpolation, AI-assisted ray tracing optimization, and neural network-based denoising that can reduce render times by up to 50% compared to classical methods. Their technology focuses on content-aware rendering optimizations that selectively apply computational resources based on scene complexity and visual importance, making it particularly effective for video production and motion graphics workflows.

Strengths: Deep integration with creative workflows, proven AI platform, strong content creation ecosystem.

Weaknesses: Primarily software-focused, limited real-time gaming applications, subscription-based model dependency.

Autodesk, Inc.

Technical Solution: Autodesk has integrated AI-powered rendering acceleration into their professional 3D software suite including Maya, 3ds Max, and Arnold renderer. Their approach combines machine learning algorithms with traditional ray tracing to optimize rendering workflows for professional content creation. Autodesk's AI techniques include neural network-based denoising that can reduce render times by 60-80% while maintaining production-quality output, intelligent sampling algorithms that focus computational resources on visually important areas, and AI-driven material optimization that automatically adjusts shader complexity based on viewing distance and lighting conditions. Their cloud rendering services leverage AI to predict optimal resource allocation and reduce overall rendering costs for large-scale productions.

Strengths: Industry-standard professional tools, proven rendering expertise, strong cloud infrastructure.

Weaknesses: High licensing costs, primarily focused on offline rendering, limited real-time applications.

Core AI Algorithms for Accelerated Graphics Processing

Machine learning based image attribute determination

Patent · Active · US20220092848A1

Innovation

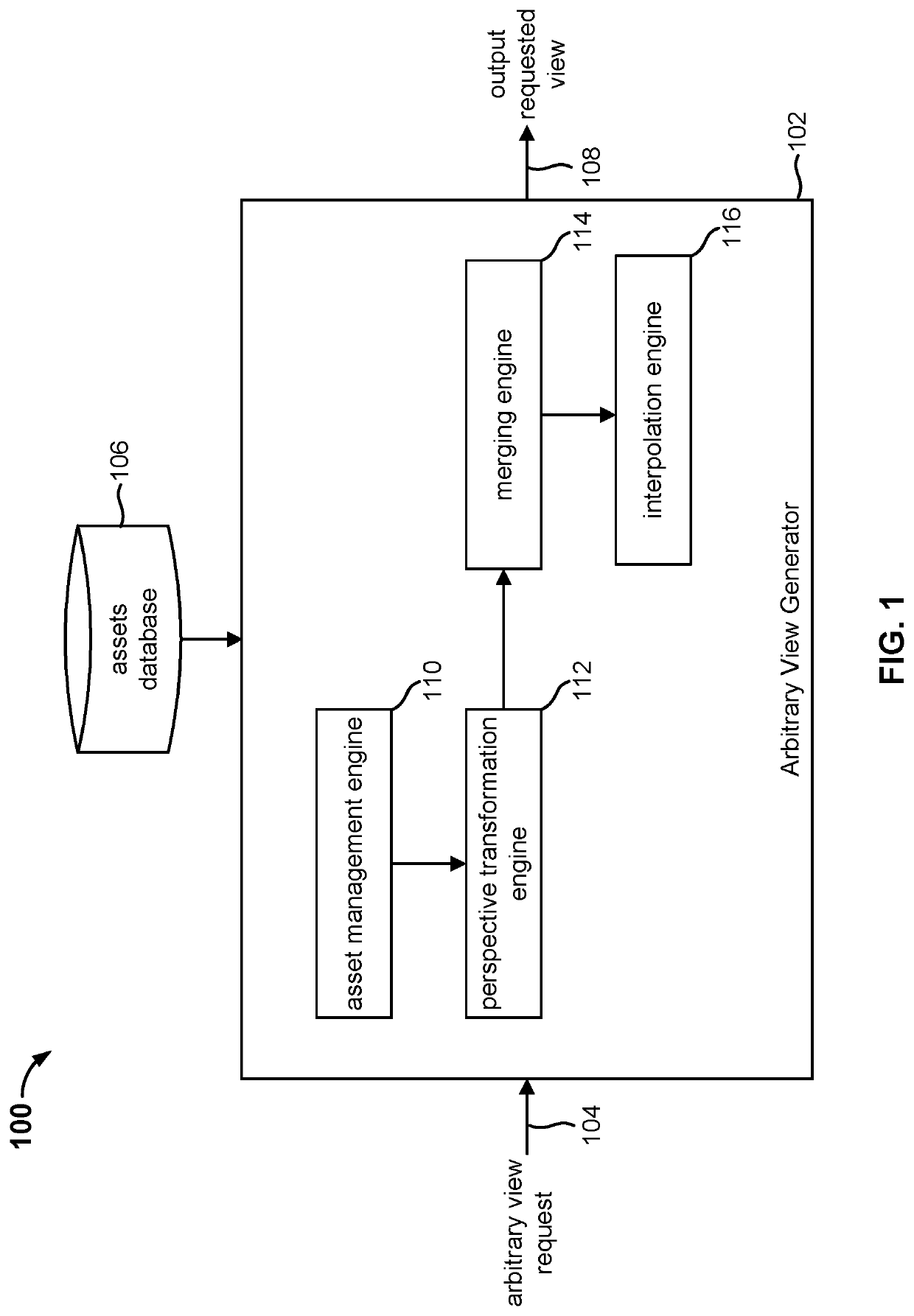

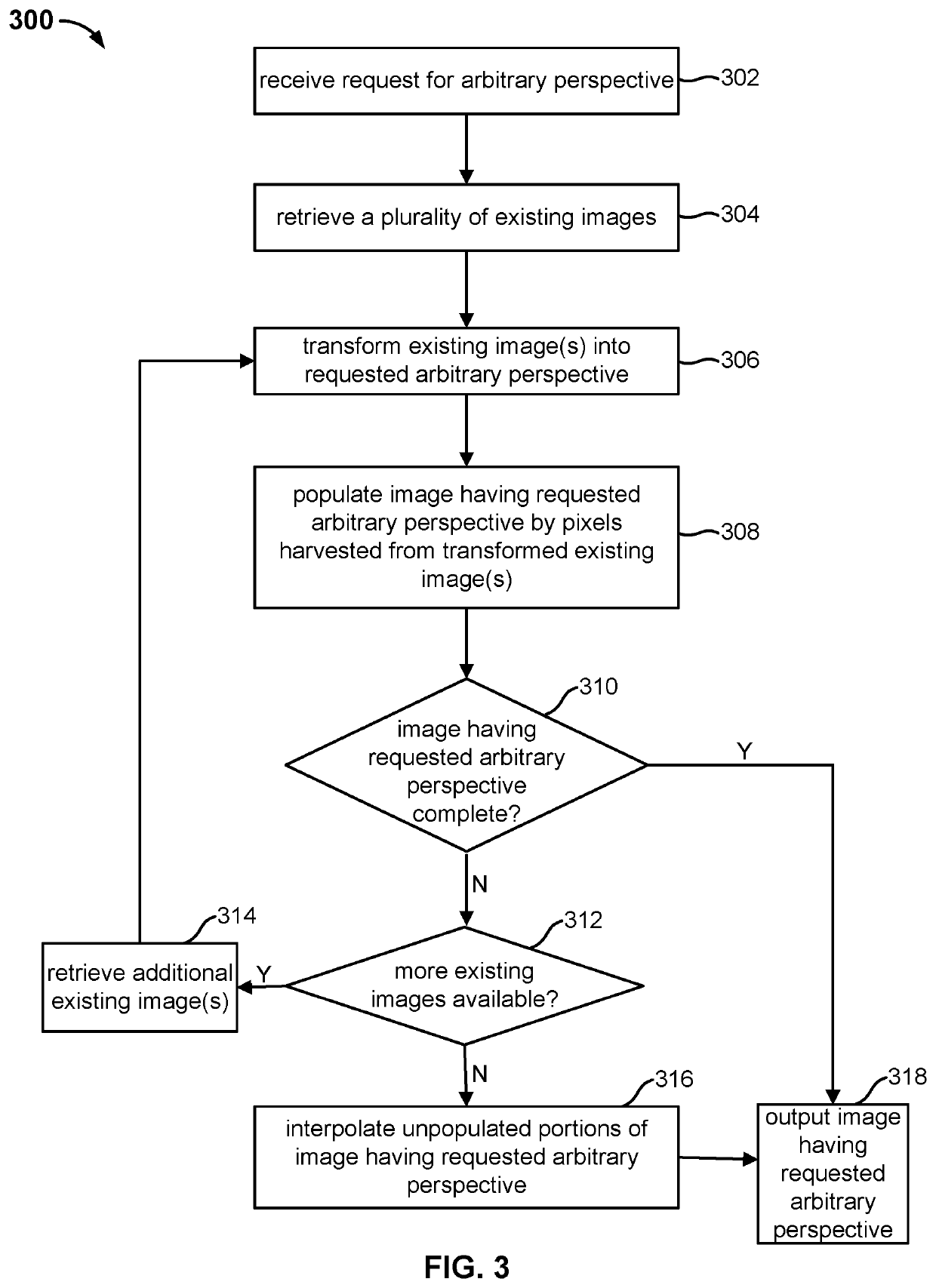

- A system and method for generating an arbitrary view of a scene with low processing overhead, using a database of high-definition images and metadata to transform and combine existing views, allowing for fast generation of high-quality outputs by harvesting pixels from multiple perspectives and interpolating missing data as needed.

Arbitrary view generation

Patent · Active · US20210125402A1

Innovation

- The system generates arbitrary views of a scene by using a database of high-definition images with associated camera attributes, employing perspective transformation and interpolation to create new views with minimal processing overhead, allowing for fast and high-quality rendering.

Hardware Requirements for AI Graphics Acceleration

The implementation of AI-driven graphics techniques demands significantly more computational resources compared to traditional rendering methods. Modern AI graphics acceleration requires specialized hardware architectures designed to handle the parallel processing demands of neural networks and machine learning algorithms used in real-time rendering applications.

Graphics Processing Units remain the cornerstone of AI graphics acceleration, but the requirements have evolved beyond conventional gaming GPUs. High-end consumer cards like the RTX 4090 or professional-grade solutions such as the A6000 provide CUDA cores for general compute, RT cores for ray tracing, and Tensor Cores for neural network inference. These workloads benefit from substantial VRAM capacity, typically 16GB or more, to hold large neural network models and high-resolution texture data simultaneously.

Dedicated matrix-math hardware has emerged as a critical component for optimal performance. Examples include the Tensor Cores integrated into recent GPUs and standalone accelerators such as Tensor Processing Units (TPUs) and NPUs. These units excel at the matrix operations fundamental to neural network inference and can offer better performance-per-watt ratios than general-purpose shader cores. Pairing such accelerators with conventional graphics hardware creates hybrid systems capable of distributing AI workloads efficiently.

Memory bandwidth is a critical bottleneck in AI graphics applications. High-bandwidth memory such as HBM2E or HBM3 on data-center accelerators, and GDDR6X on high-end consumer cards, provides the data throughput needed to feed AI models with texture data, geometry information, and intermediate processing results. Demanding scenarios can call for memory bandwidth approaching or exceeding 1TB/s to maintain real-time performance.
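A rough way to reason about bandwidth pressure is to multiply the bytes touched per frame by the frame rate. The sketch below does exactly that. Every input value is a hypothetical placeholder chosen for illustration, and real traffic depends heavily on caching, compression, and the specific pipeline.

```python
def bandwidth_gb_per_s(width: int, height: int, bytes_per_pixel: int,
                       passes: int, fps: float) -> float:
    """Estimate memory traffic in GB/s for full-screen buffer reads and writes.

    passes is the number of times each pixel's data is read or written
    per frame across all render and inference stages.
    """
    bytes_per_frame = width * height * bytes_per_pixel * passes
    return bytes_per_frame * fps / 1e9


# Hypothetical workload: 4K output, 16 bytes of per-pixel data,
# touched 10 times per frame, at 60 FPS.
estimate = bandwidth_gb_per_s(3840, 2160, 16, 10, 60)
print(f"{estimate:.1f} GB/s")  # 79.6 GB/s
```

This covers only screen-sized buffers. Texture fetches, geometry, and network weights add to the total, which is how demanding workloads climb toward the figures quoted above.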

CPU requirements have also intensified, as AI graphics pipelines often involve complex preprocessing and post-processing stages. Multi-core processors with high clock speeds and large cache hierarchies help coordinate AI inference engines and traditional graphics pipelines. High-end implementations may call for processors with 16 or more cores and support for advanced vector instructions.

Storage infrastructure must accommodate the substantial data requirements of AI graphics models. NVMe SSDs with high sequential read speeds become essential for loading large neural network weights and training data. Enterprise applications may require distributed storage systems to handle the massive datasets associated with AI-enhanced rendering workflows.

Quality vs Speed Trade-offs in AI Rendering Systems

The fundamental tension between rendering quality and speed represents one of the most critical challenges in modern AI-powered graphics systems. Traditional rendering pipelines have long established predictable trade-offs where higher quality outputs require proportionally longer processing times. However, AI-driven rendering introduces new paradigms that fundamentally alter this relationship, creating both opportunities and complexities in balancing visual fidelity against performance requirements.

Neural rendering techniques demonstrate remarkable capabilities in achieving high-quality outputs through learned approximations rather than exhaustive computational processes. Deep learning models can compress complex lighting calculations, material interactions, and geometric details into efficient inference operations. This approach enables certain AI systems to produce visually compelling results at speeds that would be impossible with traditional ray tracing or rasterization methods for equivalent quality levels.

The quality-speed relationship in AI rendering systems exhibits non-linear characteristics that differ significantly from classical approaches. While traditional methods show relatively predictable scaling where doubling quality often requires quadrupling processing time, AI systems can achieve dramatic quality improvements with minimal speed penalties once properly trained. However, this advantage comes with the caveat that training these models requires substantial upfront computational investment.
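One classical case where the "double the quality, quadruple the time" relationship holds exactly is Monte Carlo sampling, as used in path tracing. Assuming $N$ independent samples per pixel with per-sample standard deviation $\sigma$ (both symbols introduced here for illustration), the standard error of the pixel estimate is:

```latex
\mathrm{SE}(N) = \frac{\sigma}{\sqrt{N}},
\qquad
\mathrm{SE}(4N) = \frac{\sigma}{\sqrt{4N}} = \frac{1}{2}\,\mathrm{SE}(N)
```

Halving the noise therefore takes four times as many samples, and roughly four times the render time. A trained denoiser sidesteps this curve by estimating a clean image from a low-sample input at a fixed inference cost.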

Real-time AI rendering applications must navigate dynamic quality adjustment mechanisms to maintain consistent frame rates. Adaptive sampling techniques, temporal upscaling, and progressive refinement allow systems to prioritize speed during rapid scene changes while maintaining quality during static moments. These intelligent trade-off mechanisms represent a significant advancement over fixed-quality traditional rendering pipelines.
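A common form of dynamic quality adjustment is a feedback loop that lowers the render scale when a frame runs over budget and raises it again when there is headroom. The sketch below is a minimal version of such a controller; the step size, clamp limits, and headroom factor are illustrative assumptions rather than values from any engine.

```python
def next_render_scale(scale: float, frame_ms: float, budget_ms: float,
                      step: float = 0.05, lo: float = 0.5, hi: float = 1.0,
                      headroom: float = 0.9) -> float:
    """Return the render scale to use for the next frame.

    Drops the scale when the last frame exceeded the budget, raises it when
    the frame finished comfortably early, and otherwise leaves it unchanged.
    """
    if frame_ms > budget_ms:
        scale -= step
    elif frame_ms < budget_ms * headroom:
        scale += step
    return min(hi, max(lo, scale))


# Simulated frame times against a 60 FPS budget of about 16.7 ms.
scale = 1.0
for frame_ms in (20.0, 19.0, 16.0, 12.0):
    scale = next_render_scale(scale, frame_ms, budget_ms=16.7)
    print(f"frame took {frame_ms:.1f} ms -> next scale {scale:.2f}")
```

The dead band between `budget_ms * headroom` and `budget_ms` keeps the scale from oscillating when frame times hover near the target.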

The emergence of specialized hardware architectures, particularly tensor processing units and dedicated AI accelerators, has fundamentally shifted the quality-speed equation for neural rendering. These platforms can execute AI inference operations with dramatically improved efficiency compared to general-purpose graphics processors, enabling quality levels previously achievable only through offline rendering to be realized in interactive applications.

Contemporary AI rendering frameworks increasingly employ hybrid approaches that dynamically balance neural and traditional techniques based on scene complexity and performance requirements. This adaptive methodology allows systems to leverage the strengths of both paradigms, using AI acceleration for complex phenomena while falling back to classical methods for simpler geometric operations where traditional approaches remain more efficient.
