AI vs Statistical Methods: Effectiveness in Trend Analysis

FEB 25, 2026 | 9 MIN READ

AI vs Statistical Methods Background and Objectives

Trend analysis has shifted over the past several decades from purely statistical approaches toward increasingly sophisticated AI-driven solutions. Traditional statistical methods, rooted in mathematical foundations established in the early 20th century, have long been the cornerstone for identifying patterns and predicting future trends in domains such as finance, economics, and business intelligence.

Statistical methods such as time series analysis, regression models, and autoregressive integrated moving average (ARIMA) have demonstrated consistent reliability in trend identification and forecasting. These approaches leverage established mathematical principles, offering interpretable results and well-understood confidence intervals. However, their effectiveness often diminishes when confronted with complex, non-linear relationships and high-dimensional datasets that characterize modern business environments.
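To make the interpretability point concrete, the following minimal sketch fits a straight-line trend by ordinary least squares and reports an approximate 95% interval for the slope. The function names are illustrative, and the interval uses the normal quantile 1.96 as a large-sample assumption; a short series would call for a t-distribution quantile instead.

```python
import math


def fit_linear_trend(y):
    """Least-squares fit of y[t] = intercept + slope * t for t = 0..n-1.

    Returns (intercept, slope, slope_se), where slope_se is the usual
    standard error of the slope under independent, equal-variance errors.
    """
    n = len(y)
    if n < 3:
        raise ValueError("need at least 3 observations")
    t_mean = (n - 1) / 2
    y_mean = sum(y) / n
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    sxy = sum((t - t_mean) * (yt - y_mean) for t, yt in enumerate(y))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    # Residual variance uses n - 2 degrees of freedom (two fitted parameters).
    ssr = sum((yt - (intercept + slope * t)) ** 2 for t, yt in enumerate(y))
    slope_se = math.sqrt(ssr / (n - 2) / sxx)
    return intercept, slope, slope_se


def slope_interval(slope, slope_se, z=1.96):
    """Approximate two-sided interval for the slope (z = 1.96 is an assumption)."""
    return slope - z * slope_se, slope + z * slope_se
```

Every number returned here has a direct reading (level, change per period, uncertainty of that change), which is the kind of transparency the paragraph above attributes to statistical methods.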

The emergence of artificial intelligence, particularly machine learning and deep learning, has introduced new capabilities in trend analysis. AI-powered systems can process vast amounts of unstructured data, identify subtle patterns that traditional methods may miss, and adapt to changing market conditions in real time. Ensemble methods and neural networks, including Long Short-Term Memory (LSTM) networks, have shown strong performance in capturing complex temporal dependencies and non-linear relationships.

The primary objective of this comparative analysis is to establish a comprehensive framework for evaluating the relative effectiveness of AI versus statistical methods in trend analysis applications. This evaluation encompasses accuracy metrics, computational efficiency, interpretability requirements, and practical implementation considerations across different industry contexts.

Furthermore, this research aims to identify optimal scenarios for each methodological approach, recognizing that neither AI nor statistical methods represent universal solutions. The goal extends to developing hybrid frameworks that leverage the strengths of both approaches, potentially offering superior performance compared to standalone implementations.

The investigation seeks to provide actionable insights for organizations facing critical decisions about trend analysis infrastructure investments, helping them align technology choices with specific business requirements, data characteristics, and analytical objectives while accounting for long-term scalability and maintenance.

Market Demand for Advanced Trend Analysis Solutions

The global trend analysis market is growing rapidly, driven by the exponential increase in data generation across industries. Organizations worldwide recognize the critical importance of accurate trend prediction for strategic decision-making, risk management, and competitive advantage. This demand stems from the need to process vast amounts of structured and unstructured data from diverse sources including social media, IoT devices, financial markets, and consumer behavior patterns.

The financial services sector is one of the most significant demand drivers for advanced trend analysis solutions. Investment firms, banks, and insurance companies require sophisticated analytical capabilities to identify market patterns, assess risk exposure, and optimize portfolio performance. The complexity of modern financial instruments and the volatility of global markets have intensified the need for more accurate and responsive trend analysis methodologies that can outperform traditional statistical approaches.

The healthcare and pharmaceutical industries increasingly demand advanced trend analysis solutions for drug discovery, epidemiological studies, and patient outcome prediction. The COVID-19 pandemic highlighted the critical importance of accurate trend forecasting in public health management, accelerating adoption of AI-powered analytical tools. Medical research institutions and healthcare providers are seeking solutions that can process complex biological data and identify subtle patterns that traditional statistical methods might overlook.

The e-commerce and retail sectors are driving substantial demand for trend analysis solutions to understand consumer behavior, optimize inventory management, and predict market shifts. The rapid growth of online commerce has generated massive datasets requiring sophisticated analytical approaches to extract actionable insights. Companies are particularly interested in solutions that can integrate multiple data sources and identify trends in real time.

Manufacturing and supply chain management represent emerging high-demand sectors for advanced trend analysis. Industry 4.0 initiatives and the increasing complexity of global supply networks have created urgent needs for predictive analytics that can anticipate disruptions, optimize production schedules, and improve operational efficiency. The recent supply chain challenges have further emphasized the importance of robust trend forecasting capabilities.

The demand landscape is characterized by a clear preference for solutions that combine the interpretability of statistical methods with the pattern recognition capabilities of AI approaches. Organizations are seeking hybrid solutions that can provide both accuracy and transparency in their analytical processes, reflecting the ongoing debate between AI and statistical methodologies in trend analysis applications.

Current State and Challenges in AI and Statistical Approaches

The contemporary landscape of trend analysis presents a complex dichotomy between artificial intelligence methodologies and traditional statistical approaches, each demonstrating distinct strengths and limitations in their current implementations. Statistical methods, rooted in decades of mathematical rigor, continue to serve as the backbone for many analytical frameworks, offering interpretable results through established techniques such as ARIMA models, exponential smoothing, and regression analysis. These approaches provide transparent mathematical foundations that enable practitioners to understand the underlying assumptions and validate results through well-established statistical tests.
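As an illustration of how transparent these classical techniques are, here is a minimal sketch of simple exponential smoothing, one of the methods named above. The function name and the choice to initialize the level with the first observation are assumptions made for this example; production libraries offer several initialization schemes.

```python
def simple_exponential_smoothing(series, alpha):
    """Return (levels, next_forecast) for simple exponential smoothing.

    level[0] = series[0]                       (initialization assumed here)
    level[t] = alpha * series[t] + (1 - alpha) * level[t - 1]
    The one-step-ahead forecast is the most recent level.
    """
    if not series:
        raise ValueError("series must not be empty")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    levels = [float(series[0])]
    for value in series[1:]:
        levels.append(alpha * value + (1 - alpha) * levels[-1])
    return levels, levels[-1]
```

The single parameter `alpha` controls how quickly older observations are discounted, so an analyst can state exactly why a forecast moved.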

Artificial intelligence approaches, particularly machine learning and deep learning models, have gained significant traction in trend analysis applications. Ensemble methods and neural networks, including LSTM and transformer architectures, can outperform classical models at capturing complex, non-linear patterns within large datasets. These AI-driven solutions excel at processing high-dimensional data and identifying subtle correlations that traditional statistical methods might overlook.

However, both paradigms face substantial challenges in real-world implementations. Statistical methods often struggle with non-stationary data, complex seasonality patterns, and the assumption of linear relationships that may not hold in dynamic market conditions. The rigid mathematical frameworks can become inadequate when dealing with rapidly changing environments or datasets containing multiple interacting variables with varying temporal dependencies.

AI approaches encounter different but equally significant obstacles. The "black box" nature of many machine learning models creates interpretability challenges, making it difficult for analysts to understand decision-making processes or explain results to stakeholders. Additionally, AI models require substantial computational resources and extensive training data, which may not always be available or representative of future conditions.

Data quality and availability represent universal challenges across both methodologies. Incomplete datasets, measurement errors, and temporal inconsistencies affect model performance regardless of the chosen approach. The challenge becomes more pronounced when dealing with emerging markets or novel phenomena where historical data may be limited or non-representative.

Integration complexity emerges as another critical challenge, as organizations often struggle to determine optimal combinations of AI and statistical methods. The lack of standardized frameworks for hybrid approaches creates implementation difficulties and increases the risk of suboptimal solution selection for specific trend analysis requirements.
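One simple way to combine the two families, sketched here under stated assumptions, is to weight each model's forecast by the inverse of its recent mean absolute error. This is not a standardized framework and not any vendor's method; the model names, the error window, and the `eps` guard are all hypothetical choices for illustration.

```python
def inverse_error_weights(recent_errors, eps=1e-9):
    """Map model name -> weight proportional to 1 / mean absolute recent error.

    recent_errors: dict of model name -> list of recent forecast errors.
    eps guards against division by zero for a model with no recent error.
    """
    inverse = {}
    for name, errors in recent_errors.items():
        if not errors:
            raise ValueError(f"no recent errors for model {name!r}")
        mean_abs_error = sum(abs(e) for e in errors) / len(errors)
        inverse[name] = 1.0 / (mean_abs_error + eps)
    total = sum(inverse.values())
    return {name: value / total for name, value in inverse.items()}


def combine_forecasts(forecasts, weights):
    """Weighted average of per-model forecasts (weights are assumed to sum to 1)."""
    return sum(weights[name] * forecasts[name] for name in forecasts)


# Hypothetical usage: the statistical model has been more accurate recently,
# so it receives the larger weight.
weights = inverse_error_weights({"statistical": [1.0, -1.0], "ml": [3.0, -3.0]})
blended = combine_forecasts({"statistical": 100.0, "ml": 120.0}, weights)
```

Because the weights are recomputed from recent errors, the blend shifts toward whichever component has been tracking the data better, while each component's contribution remains inspectable.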

Existing AI and Statistical Trend Analysis Solutions

01 Machine learning algorithms for predictive modeling and pattern recognition

Artificial intelligence methods, particularly machine learning algorithms, demonstrate enhanced capabilities in identifying complex patterns and making predictions from large datasets. These approaches can automatically learn features and relationships without explicit programming, offering advantages in handling non-linear relationships and high-dimensional data compared to traditional statistical methods.

02 Hybrid approaches combining AI and statistical methods

Integration of artificial intelligence techniques with conventional statistical methods creates hybrid frameworks that leverage the strengths of both approaches. These combined methodologies can provide improved accuracy, interpretability, and robustness by utilizing statistical foundations for validation while employing AI for complex pattern detection and automated feature extraction.

03 Statistical inference and hypothesis testing frameworks

Traditional statistical methods provide rigorous mathematical frameworks for hypothesis testing, confidence intervals, and statistical inference. These approaches offer well-established theoretical foundations, interpretability, and reliability in scenarios requiring formal statistical validation and uncertainty quantification. They remain effective for structured data analysis where assumptions can be validated and results need to be explainable.

04 Deep learning and neural network architectures for complex data analysis

Advanced neural network architectures and deep learning techniques enable processing of unstructured and complex data types. These methods excel in tasks involving image recognition, natural language processing, and sequential data analysis, providing capabilities that extend beyond traditional statistical approaches in handling large-scale, multi-modal datasets.

05 Comparative evaluation metrics and performance assessment

Systematic frameworks for comparing the effectiveness of artificial intelligence and statistical methods involve multiple evaluation criteria including accuracy, computational efficiency, interpretability, and generalization capability. These assessment methodologies enable objective comparison across different application domains and help determine the most appropriate approach for specific use cases.

Domain-specific applications and method optimization: Different domains require tailored approaches where either AI or statistical methods may prove more effective. Optimization techniques adapt methodologies to specific use cases, considering factors such as data volume, complexity, interpretability requirements, and computational resources to maximize effectiveness in particular application contexts.

Key Players in AI and Statistical Analytics Industry

The competitive landscape for AI versus statistical methods in trend analysis reflects a rapidly evolving industry transitioning from traditional statistical approaches to AI-driven solutions. The market demonstrates significant growth potential, driven by increasing demand for predictive analytics across sectors. Technology maturity varies considerably among key players. Established technology giants like IBM, Huawei, Samsung Electronics, and Oracle leverage their extensive R&D capabilities to integrate advanced AI algorithms with traditional statistical frameworks. Financial institutions including Bank of America and ICBC are implementing hybrid approaches for market forecasting. Specialized AI companies such as Nichefire, Intelus, WISSEE, and Nowcasting.ai represent the emerging wave of pure AI-focused solutions, while traditional players like Siemens and NEC Laboratories bridge industrial applications with modern analytics, indicating a maturing ecosystem where AI increasingly complements rather than replaces statistical methods.

International Business Machines Corp.

Technical Solution: IBM has developed the Watson Analytics platform, which combines AI and statistical methods for comprehensive trend analysis. Its approach integrates machine learning algorithms with traditional statistical models to provide hybrid forecasting capabilities. The platform uses deep learning neural networks for pattern recognition while maintaining statistical regression models for baseline predictions. IBM's solution offers automated feature selection and model ensemble techniques that dynamically weight AI versus statistical approaches based on data characteristics and prediction horizons.

Strengths: Mature enterprise platform with proven scalability and reliability. Weaknesses: High implementation costs and complexity requiring specialized expertise for optimal configuration.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung utilizes hybrid forecasting systems combining AI neural networks with statistical time series models for demand forecasting and market trend analysis in consumer electronics. Their approach integrates convolutional neural networks for pattern recognition with traditional statistical methods like moving averages and exponential smoothing for baseline predictions. The system employs ensemble learning techniques that dynamically weight AI and statistical components based on forecast horizon and market volatility, enabling adaptive prediction strategies for rapidly changing technology markets.

Strengths: Strong hardware-software integration capabilities with proven performance in consumer electronics forecasting and global market reach. Weaknesses: Limited focus on general-purpose analytics solutions and primarily optimized for manufacturing and supply chain applications.

Core Innovations in AI-Driven Trend Analysis Technologies

Time-series forecasting based on artificial intelligence and statistical methods ensemble

Patent | Pending | EP4553724A1

Innovation

- The DI/SM-TS-RIV technology employs a multi-parameter algorithm that autonomously synthesizes a suite of AI and statistical techniques through automatic machine learning, enabling robust and precise time series predictions.

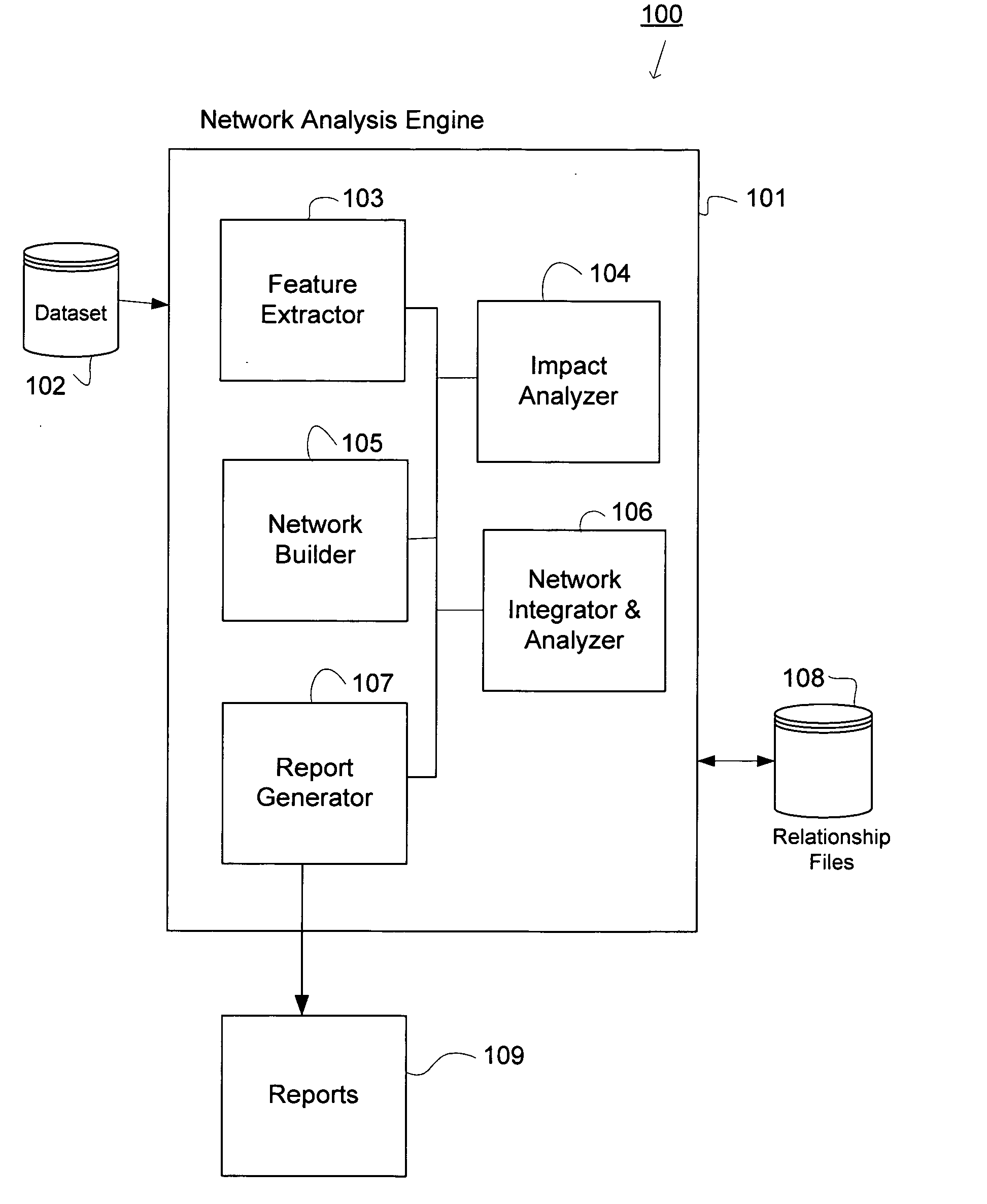

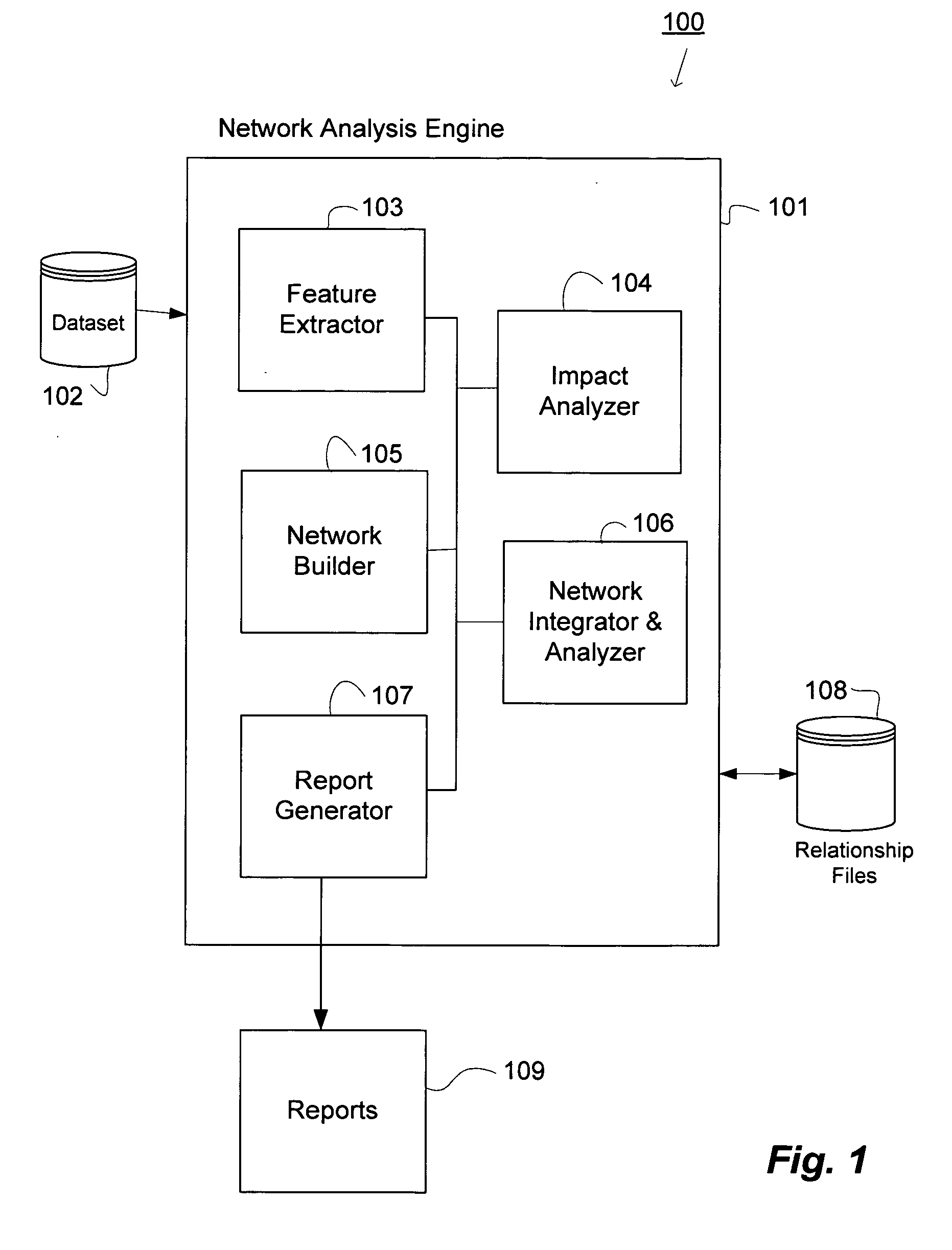

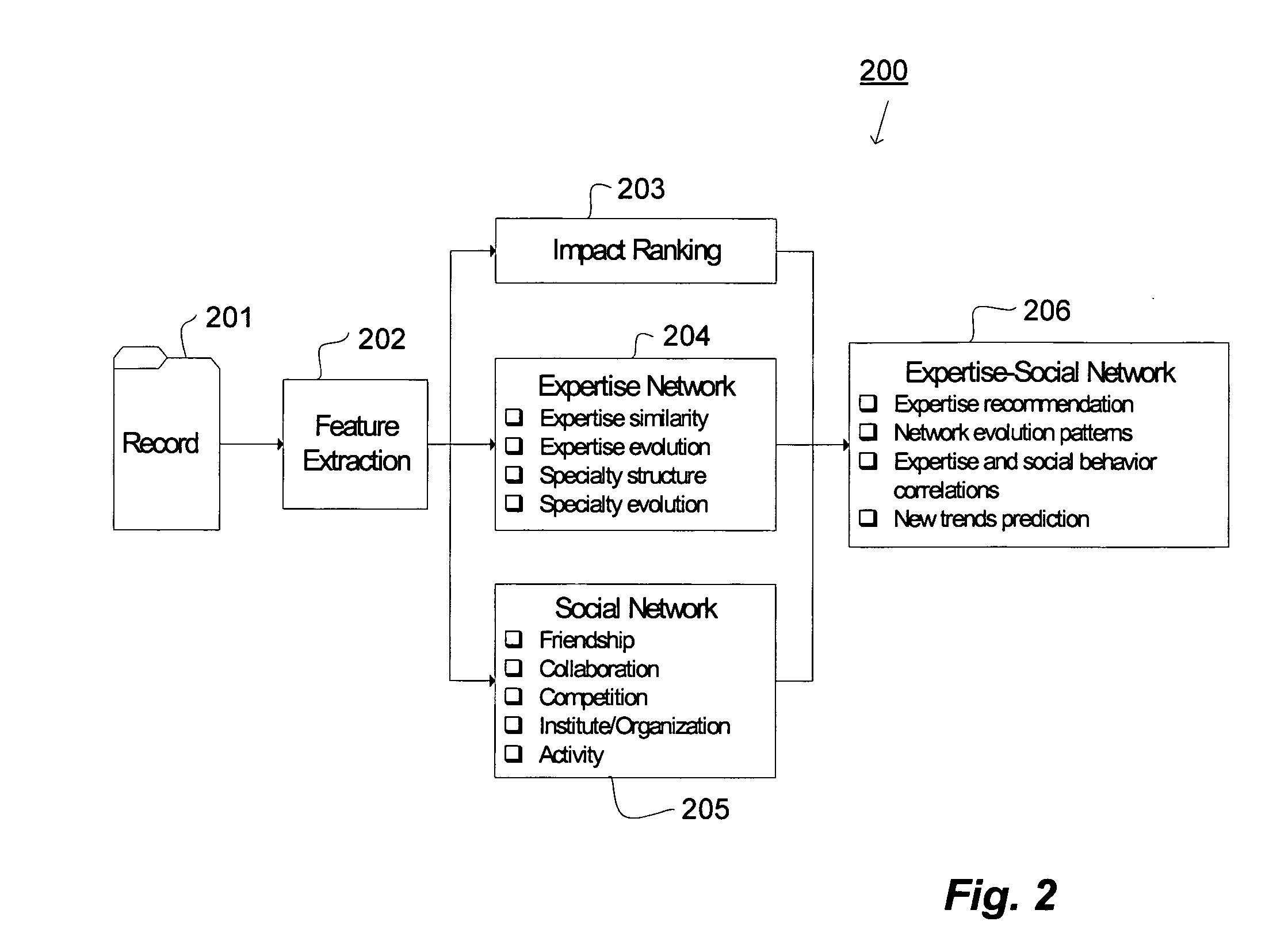

System and methods for data analysis and trend prediction

Patent | Inactive | US20060184464A1

Innovation

- A system and method that builds an expertise-social network by generating nodes from datasets, determining relationships using heuristic algorithms, and integrating expertise and social network analysis to provide impact rankings, correlation analysis, and prediction of future trends.

Data Privacy and Governance in AI Trend Analysis

Data privacy and governance represent critical considerations when implementing AI-driven trend analysis systems, particularly as organizations increasingly rely on large-scale data processing to extract meaningful insights. The intersection of artificial intelligence and statistical methods in trend analysis creates unique privacy challenges that require comprehensive governance frameworks to address regulatory compliance, ethical data usage, and stakeholder trust.

The fundamental privacy concerns in AI trend analysis stem from the technology's capacity to process vast amounts of potentially sensitive data, including personal information, behavioral patterns, and proprietary business metrics. Unlike traditional statistical methods that often work with aggregated or anonymized datasets, AI systems frequently require access to granular, real-time data to achieve optimal performance. This creates tension between analytical effectiveness and privacy protection, necessitating sophisticated data governance strategies.

Regulatory frameworks such as GDPR, CCPA, and emerging AI-specific legislation impose stringent requirements on data collection, processing, and retention practices. Organizations must implement privacy-by-design principles, ensuring that data protection measures are integrated throughout the AI trend analysis pipeline rather than added as an afterthought. This includes establishing clear data lineage tracking, implementing robust access controls, and maintaining comprehensive audit trails.

Technical privacy-preserving approaches have emerged to address these challenges while maintaining analytical capabilities. Differential privacy techniques add calibrated random noise to data or query results, limiting what can be inferred about any individual while preserving statistical utility for trend analysis. Federated learning enables AI models to learn from distributed data sources without centralizing sensitive information, which is particularly valuable for cross-organizational trend analysis initiatives.
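The noise-adding idea behind differential privacy can be sketched with the Laplace mechanism applied to a mean. This is a minimal illustration, not a vetted privacy implementation: the function names are hypothetical, the clipping bounds and `epsilon` are inputs the caller must choose, and the record count is assumed to be public.

```python
import random


def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise as the difference of two exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_mean(values, lower, upper, epsilon, rng=None):
    """Mean of values clipped to [lower, upper], plus Laplace noise.

    With n records (treated as public), changing one record moves the
    clipped mean by at most (upper - lower) / n, so noise with scale
    sensitivity / epsilon is added. Smaller epsilon means more noise.
    """
    if not values:
        raise ValueError("values must not be empty")
    if epsilon <= 0 or upper <= lower:
        raise ValueError("need epsilon > 0 and upper > lower")
    rng = rng or random.Random()
    clipped = [min(max(v, lower), upper) for v in values]
    sensitivity = (upper - lower) / len(clipped)
    true_mean = sum(clipped) / len(clipped)
    return true_mean + laplace_noise(sensitivity / epsilon, rng)
```

The trade-off described above is visible in the single `epsilon` parameter: a small value protects individuals more strongly but blurs the aggregate that the trend analysis depends on.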

Data governance frameworks must establish clear policies for data classification, handling procedures, and retention schedules specific to AI trend analysis applications. This includes defining roles and responsibilities for data stewardship, implementing automated compliance monitoring systems, and establishing incident response procedures for potential privacy breaches. Organizations must also address algorithmic transparency requirements, ensuring that AI-driven trend analysis results can be explained and validated when necessary.

The governance structure should encompass both technical and organizational measures, including regular privacy impact assessments, ongoing model monitoring for bias and fairness, and stakeholder engagement processes to maintain public trust in AI-driven analytical capabilities.

Performance Benchmarking Framework for Method Comparison

Establishing a robust performance benchmarking framework is essential for conducting objective comparisons between AI-based methods and traditional statistical approaches in trend analysis applications. The framework must encompass multiple evaluation dimensions to capture the nuanced differences in methodology effectiveness across various analytical scenarios.

The foundation of any comprehensive benchmarking framework lies in defining standardized datasets that represent diverse trend patterns commonly encountered in real-world applications. These datasets should include linear trends, seasonal variations, cyclical patterns, structural breaks, and noise-corrupted signals with varying complexity levels. Synthetic datasets with known ground truth parameters enable precise accuracy measurements, while real-world datasets from financial markets, economic indicators, and operational metrics provide practical validation contexts.
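A synthetic benchmark series with known ground truth can be generated from the components listed above. The sketch below is one possible construction; the parameter names and default values are assumptions chosen for illustration, and cyclical patterns with irregular periods are omitted for brevity.

```python
import math
import random


def synthetic_series(n, slope=0.5, season_period=12, season_amp=3.0,
                     break_at=None, break_shift=0.0, noise_sd=1.0, seed=0):
    """Generate trend + seasonality + optional level shift + Gaussian noise.

    All components are known exactly, so a method's recovered trend can be
    compared against the true slope and the true break location.
    """
    rng = random.Random(seed)  # fixed seed keeps the benchmark reproducible
    series = []
    for t in range(n):
        trend = slope * t
        seasonal = season_amp * math.sin(2 * math.pi * t / season_period)
        shift = break_shift if break_at is not None and t >= break_at else 0.0
        noise = rng.gauss(0.0, noise_sd)
        series.append(trend + seasonal + shift + noise)
    return series
```

Varying `noise_sd`, `season_amp`, and `break_shift` produces the range of complexity levels a benchmark needs, while the fixed seed lets every method see exactly the same data.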

Evaluation metrics form the core component of the benchmarking framework, requiring careful selection to address different aspects of trend analysis performance. Accuracy metrics such as Mean Absolute Error (MAE), Root Mean Square Error (RMSE), and Mean Absolute Percentage Error (MAPE) quantify prediction precision. Directional accuracy measures assess the ability to correctly identify trend directions, which is often more critical than precise magnitude predictions in business applications.
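The metrics named above are short enough to write out directly. In this sketch, directional accuracy is defined as the share of steps where the predicted move from the previous actual value has the same sign as the actual move; that definition is one common convention and an assumption here, as is raising an error when MAPE meets a zero actual value.

```python
import math


def mae(actual, predicted):
    """Mean Absolute Error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root Mean Square Error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mape(actual, predicted):
    """Mean Absolute Percentage Error, in percent; undefined for zero actuals."""
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return 100.0 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


def _sign(x):
    return (x > 0) - (x < 0)


def directional_accuracy(actual, predicted):
    """Share of steps where predicted and actual moves have the same sign.

    Moves are measured from the previous actual value.
    """
    pairs = list(zip(actual, actual[1:], predicted[1:]))
    if not pairs:
        raise ValueError("need at least two observations")
    hits = sum(_sign(a - prev) == _sign(p - prev) for prev, a, p in pairs)
    return hits / len(pairs)
```

A forecast can score well on MAE or RMSE yet call the direction wrong, which is why the text treats directional accuracy as a separate measure.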

Temporal consistency metrics evaluate how well methods maintain performance across different time horizons and varying data frequencies. Robustness indicators measure sensitivity to outliers, missing data, and parameter variations. Computational efficiency metrics including training time, inference speed, and memory requirements are crucial for practical deployment considerations.

The framework must incorporate standardized experimental protocols to ensure fair comparisons. This includes consistent data preprocessing procedures, cross-validation strategies appropriate for time series data, and statistical significance testing methodologies. Rolling window validation and walk-forward analysis protocols are particularly important for trend analysis applications where temporal dependencies cannot be ignored.
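Walk-forward validation can be expressed as a generator of train and test index ranges in which the test window always follows the training window in time. The function below is a minimal sketch with hypothetical parameter names; `expanding=True` grows the training window from the start of the series, while `expanding=False` gives a fixed-length rolling window.

```python
def walk_forward_splits(n_obs, initial_train, horizon=1, step=1, expanding=True):
    """Yield (train_range, test_range) pairs for time-ordered validation.

    The test range always starts right after the training range ends, so no
    future observation leaks into training.
    """
    if initial_train < 1 or horizon < 1 or step < 1:
        raise ValueError("initial_train, horizon and step must be positive")
    start = initial_train
    while start + horizon <= n_obs:
        train_start = 0 if expanding else start - initial_train
        yield range(train_start, start), range(start, start + horizon)
        start += step


# Hypothetical usage: score a naive "last value" forecast at each split.
series = [10, 12, 11, 13, 14, 16]
abs_errors = [
    abs(series[test[0]] - series[train[-1]])
    for train, test in walk_forward_splits(len(series), initial_train=3)
]
```

Running AI and statistical candidates through the same sequence of splits keeps the comparison fair, since each model sees identical training data before each forecast.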

Implementation considerations require establishing baseline performance thresholds and defining clear criteria for method superiority across different use cases. The framework should accommodate both automated evaluation pipelines and manual expert assessment procedures to capture qualitative aspects that quantitative metrics might miss.
