Comparing Data Density: Near-Memory vs Conventional Storage

APR 24, 2026 | 9 MIN READ

Near-Memory Computing Background and Density Goals

Near-memory computing represents a paradigm shift in computer architecture that addresses the growing disparity between processor performance and memory access latency, commonly known as the "memory wall" problem. This architectural approach integrates computational capabilities directly within or adjacent to memory subsystems, fundamentally altering how data processing occurs in modern computing systems. The evolution from traditional von Neumann architectures to near-memory computing reflects decades of research aimed at overcoming bandwidth limitations and energy inefficiencies inherent in conventional storage hierarchies.

The historical development of near-memory computing can be traced back to early research in the 1990s, when researchers first recognized the potential benefits of processing data closer to its storage location. Initial concepts focused on simple operations within memory controllers, but advances in semiconductor manufacturing and 3D integration have enabled more sophisticated implementations. The emergence of high-bandwidth memory technologies such as HBM, together with processing-in-memory solutions, has accelerated the practical deployment of near-memory computing architectures across various application domains.

Contemporary near-memory computing encompasses multiple implementation strategies, including processing-in-memory, near-data computing, and memory-centric architectures. These approaches share the common objective of reducing data movement overhead while maximizing computational throughput per unit of energy consumed. The integration of specialized processing elements within memory stacks or memory controllers enables parallel execution of operations on large datasets without the traditional bottlenecks associated with data transfer between separate processing and storage units.

The primary density goals for near-memory computing systems center on achieving optimal balance between computational capability, memory capacity, and energy efficiency within constrained physical footprints. Current research targets include maximizing memory density while incorporating sufficient processing power to handle compute-intensive workloads effectively. Advanced packaging technologies and through-silicon via implementations are enabling higher integration densities, with goals of achieving terabyte-scale memory capacities alongside meaningful computational resources.

Future density objectives focus on leveraging emerging memory technologies, including resistive RAM, phase-change memory, and magnetic RAM, which offer both storage and computational capabilities within single devices. These technologies promise to blur the traditional boundaries between memory and processing, enabling unprecedented levels of integration density while maintaining performance characteristics suitable for demanding applications in artificial intelligence, data analytics, and scientific computing domains.

Market Demand for High-Density Storage Solutions

The global storage market is experiencing unprecedented demand for high-density solutions driven by exponential data growth across multiple sectors. Enterprise data centers, cloud service providers, and hyperscale computing facilities are generating massive volumes of data that require efficient storage architectures capable of handling both capacity and performance requirements simultaneously.

Traditional storage hierarchies are being challenged by emerging workloads including artificial intelligence, machine learning, and real-time analytics applications. These workloads demand not only vast storage capacity but also rapid data access patterns that conventional storage systems struggle to accommodate efficiently. The gap between processor performance and storage access speeds continues to widen, creating bottlenecks that impact overall system performance.

Near-memory storage technologies are gaining significant traction as organizations seek to bridge the performance gap between volatile memory and persistent storage. The market demand for these solutions is particularly strong in high-performance computing environments, financial trading systems, and data-intensive scientific research applications where microsecond-level latencies can translate to substantial competitive advantages or research breakthroughs.

Cloud computing infrastructure represents one of the largest demand drivers for high-density storage solutions. Major cloud providers are continuously expanding their data center footprints while simultaneously seeking to maximize storage density per rack unit to optimize operational costs and energy efficiency. This dual requirement for capacity and space efficiency is reshaping storage architecture preferences across the industry.

The emergence of edge computing applications is creating additional demand for compact, high-density storage solutions that can operate in distributed environments with limited physical space and power constraints. Internet of Things deployments, autonomous vehicle systems, and smart city infrastructure require storage solutions that can deliver high capacity within stringent form factor limitations.

Memory-centric computing architectures are driving demand for storage solutions that can seamlessly integrate with system memory hierarchies. Applications requiring large in-memory datasets, such as graph databases and real-time recommendation engines, are pushing the boundaries of traditional storage paradigms and creating market opportunities for hybrid memory-storage solutions that combine the benefits of both technologies.

Current State of Near-Memory vs Conventional Storage

Near-memory computing represents a paradigm shift in data processing architecture, where computational capabilities are integrated directly adjacent to or within memory modules. This approach fundamentally challenges conventional storage hierarchies by positioning processing elements closer to data sources, thereby reducing data movement overhead and improving overall system efficiency. Current implementations include processing-in-memory (PIM) technologies, near-data computing architectures, and hybrid memory-compute modules that blur traditional boundaries between storage and processing units.

The technological landscape reveals significant disparities in data density capabilities between these two approaches. Conventional storage systems, particularly enterprise-grade solutions, have achieved remarkable density improvements through techniques such as 3D NAND stacking, shingled magnetic recording (SMR), and heat-assisted magnetic recording (HAMR). Modern conventional storage can deliver capacities exceeding 15 TB per 3.5-inch HDD and over 100 TB for the largest enterprise SSDs built on multi-level cell NAND.

Near-memory storage solutions currently face density constraints due to the integration requirements of processing logic alongside memory cells. Contemporary near-memory implementations typically achieve 30-50% lower raw storage density compared to equivalent conventional memory technologies. This reduction stems from the additional silicon area required for processing units, interconnects, and thermal management components. However, recent advances in through-silicon via (TSV) technology and 3D integration techniques are beginning to narrow this gap.
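
As a rough illustration of the arithmetic behind this density penalty, the sketch below models a fixed die area split between memory cells and processing logic. The function name, the 16 Gbit baseline, and the linear area-to-capacity assumption are hypothetical choices for illustration, not measurements from any product.

```python
def effective_capacity_gbit(baseline_gbit: float, logic_area_fraction: float) -> float:
    """Capacity left when a fraction of a fixed die area is given to logic.

    Assumption (illustrative only): capacity scales linearly with the area
    devoted to memory cells, ignoring peripheral circuits and process effects.
    """
    if not 0.0 <= logic_area_fraction < 1.0:
        raise ValueError("logic_area_fraction must be in [0, 1)")
    return baseline_gbit * (1.0 - logic_area_fraction)


if __name__ == "__main__":
    baseline = 16.0  # hypothetical capacity of a conventional die, in Gbit
    for fraction in (0.30, 0.40, 0.50):
        capacity = effective_capacity_gbit(baseline, fraction)
        print(f"logic area {fraction:.0%}: {capacity:.1f} Gbit")
```

Under this simple model, giving 30-50% of the area to logic, interconnect, and thermal structures maps directly onto a 30-50% loss in raw capacity; real designs will deviate from the linear assumption.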

Manufacturing challenges significantly impact the current state of both technologies. Near-memory solutions require sophisticated fabrication processes that combine memory cell optimization with logic circuit integration, leading to higher production costs and yield challenges. Conventional storage benefits from mature manufacturing ecosystems and economies of scale, enabling cost-effective high-density production. The complexity of near-memory manufacturing currently limits widespread adoption and contributes to higher per-bit costs.

Performance characteristics reveal contrasting strengths between the two approaches. While conventional storage excels in raw capacity and cost per bit, near-memory solutions demonstrate superior bandwidth utilization and reduced latency for data-intensive applications. Current near-memory implementations can achieve 10-100x improvement in effective data throughput for specific workloads, despite lower absolute storage density. This performance advantage becomes particularly pronounced in applications requiring frequent data access patterns and computational operations on stored data.
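
Why the gain is workload-specific can be seen in a minimal bandwidth-bound model, sketched below. It assumes a single pass over the data, a host link that is slower than the memory-internal bandwidth, and a near-memory unit that still has to ship its results to the host. All names and figures are hypothetical.

```python
def scan_time_s(data_gb: float, bandwidth_gb_s: float) -> float:
    """Time to stream data once at a sustained bandwidth (bandwidth-bound model)."""
    if bandwidth_gb_s <= 0:
        raise ValueError("bandwidth must be positive")
    return data_gb / bandwidth_gb_s


def near_memory_speedup(dataset_gb: float, host_link_gb_s: float,
                        internal_gb_s: float, result_fraction: float) -> float:
    """Speedup of processing in place versus moving the whole dataset to the host.

    result_fraction is the share of the dataset that must still cross the
    host link as results (hypothetical parameter, 0.0 to 1.0).
    """
    conventional = scan_time_s(dataset_gb, host_link_gb_s)
    near = (scan_time_s(dataset_gb, internal_gb_s)
            + scan_time_s(dataset_gb * result_fraction, host_link_gb_s))
    return conventional / near


if __name__ == "__main__":
    # Illustrative numbers only: 100 GB dataset, 25 GB/s host link, 500 GB/s internal.
    for fraction in (0.01, 0.10, 1.00):
        s = near_memory_speedup(100.0, 25.0, 500.0, fraction)
        print(f"result fraction {fraction:.2f}: speedup {s:.2f}x")
```

In this model the benefit shrinks as more of the data has to leave the memory device, which is consistent with the large gains being reported only for specific workloads such as filtering or reduction.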

The integration ecosystem presents another critical differentiator in the current technological landscape. Conventional storage systems benefit from standardized interfaces, mature software stacks, and extensive compatibility across diverse computing platforms. Near-memory solutions require specialized programming models, modified operating system support, and application-specific optimization to realize their full potential, creating adoption barriers in existing infrastructure environments.

Existing Data Density Optimization Solutions

01 Near-memory computing architectures for enhanced data density

Near-memory computing architectures integrate processing capabilities closer to memory storage, enabling higher data density through reduced data movement and improved bandwidth utilization. These architectures employ processing-in-memory or processing-near-memory techniques to minimize the distance between computation and data storage, thereby increasing effective data density and reducing latency. The integration allows for more efficient use of physical space while maintaining or improving performance characteristics.

02 Multi-level memory hierarchies for optimized storage density

Multi-level memory hierarchies combine different types of storage technologies to optimize data density across various performance tiers. These systems utilize cache memories, main memories, and storage devices in coordinated configurations to maximize overall data density while balancing access speed and capacity requirements. The hierarchical approach enables efficient data placement strategies that improve both density and performance metrics.

03 Advanced memory cell structures for increased storage density

Advanced memory cell structures employ innovative designs to increase the number of bits stored per unit area. These structures include three-dimensional stacking arrangements, multi-bit storage per cell, and novel transistor configurations that enable higher integration density. The designs focus on minimizing cell size while maintaining reliability and performance characteristics, resulting in significant improvements in overall storage density.

04 Data compression and encoding techniques for effective density improvement

Data compression and encoding techniques enhance effective storage density by reducing the physical space required to store information. These methods include various compression algorithms, encoding schemes, and data deduplication approaches that minimize redundancy. The techniques can be applied at different levels of the storage hierarchy to maximize capacity utilization without requiring changes to physical storage media.

05 Hybrid storage systems combining different memory technologies

Hybrid storage systems integrate multiple memory and storage technologies to achieve optimal data density and performance characteristics. These systems combine volatile and non-volatile memories, such as DRAM with flash or emerging memory technologies, to leverage the advantages of each technology type. The hybrid approach enables higher overall system density through intelligent data placement and migration strategies that match data characteristics with appropriate storage media.
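
One simple form such a placement strategy could take is a greedy policy that fills the fastest tier with the most frequently accessed objects first. The sketch below is a hypothetical illustration; the `Tier` class, the tier names, and the object tuples are assumptions, and production systems also handle migration, endurance, and changing access patterns.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    capacity_gb: float
    used_gb: float = 0.0


def place_by_hotness(objects, tiers):
    """Greedy placement: hottest objects go to the fastest tier with room.

    objects: iterable of (name, size_gb, accesses_per_day) tuples (hypothetical).
    tiers:   list of Tier ordered from fastest/least dense to slowest/densest.
    Returns a dict mapping object name to tier name.
    """
    placement = {}
    for name, size_gb, _hits in sorted(objects, key=lambda o: o[2], reverse=True):
        for tier in tiers:
            if tier.used_gb + size_gb <= tier.capacity_gb:
                tier.used_gb += size_gb
                placement[name] = tier.name
                break
        else:
            raise ValueError(f"no tier has room for {name}")
    return placement


if __name__ == "__main__":
    tiers = [Tier("dram", 8.0), Tier("flash", 100.0)]
    objects = [("index", 6.0, 1000), ("logs", 50.0, 2), ("cache", 4.0, 500)]
    print(place_by_hotness(objects, tiers))
```

The hottest object lands in the small, fast tier, and objects that no longer fit spill into the denser tier.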

Key Players in Near-Memory and Storage Industry

Near-memory and high-density storage form a rapidly evolving segment of the broader memory and storage industry, currently moving from early adoption toward mainstream deployment. Growth is driven by high-performance computing and AI workloads that require faster data access. Technology maturity varies considerably across the competitive landscape. Established memory leaders such as Samsung Electronics, Micron Technology, and Western Digital Technologies are advancing processing-in-memory and storage-class memory solutions, while specialized companies such as Avalanche Technology and DapuStor focus on non-volatile memory architectures. Research institutions, including the Institute of Computing Technology of the Chinese Academy of Sciences and universities such as Zhejiang University, contribute fundamental research. Infrastructure vendors such as IBM, Huawei, and Hewlett Packard Enterprise integrate these technologies into enterprise solutions, so the ecosystem spans semiconductor innovation through system-level implementation.

Micron Technology, Inc.

Technical Solution: Micron has pioneered near-data computing architectures through their development of computational storage devices and memory-centric processing solutions. Their technology stack includes intelligent SSDs with embedded processing capabilities that can execute data analytics, compression, and filtering operations directly within the storage layer. This approach significantly reduces data movement between storage and compute resources, improving overall system data density by keeping frequently accessed data closer to processing units and minimizing bandwidth bottlenecks in data-intensive applications.

Strengths: Strong expertise in memory technologies and computational storage innovation. Weaknesses: Limited ecosystem support compared to traditional storage solutions, requiring specialized software stacks.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed advanced near-memory computing solutions including Processing-in-Memory (PIM) technology integrated with their DRAM and storage products. Their approach focuses on embedding computational units directly within memory arrays, enabling data processing at the source without traditional data movement overhead. The company's PIM-enabled memory devices can perform operations like vector addition, matrix multiplication, and neural network inference directly within the memory substrate, achieving significant improvements in data density utilization and energy efficiency compared to conventional von Neumann architectures.

Strengths: Market leadership in memory manufacturing, proven PIM technology integration. Weaknesses: Higher manufacturing complexity and cost compared to conventional memory solutions.

Core Innovations in Near-Memory Architecture Design

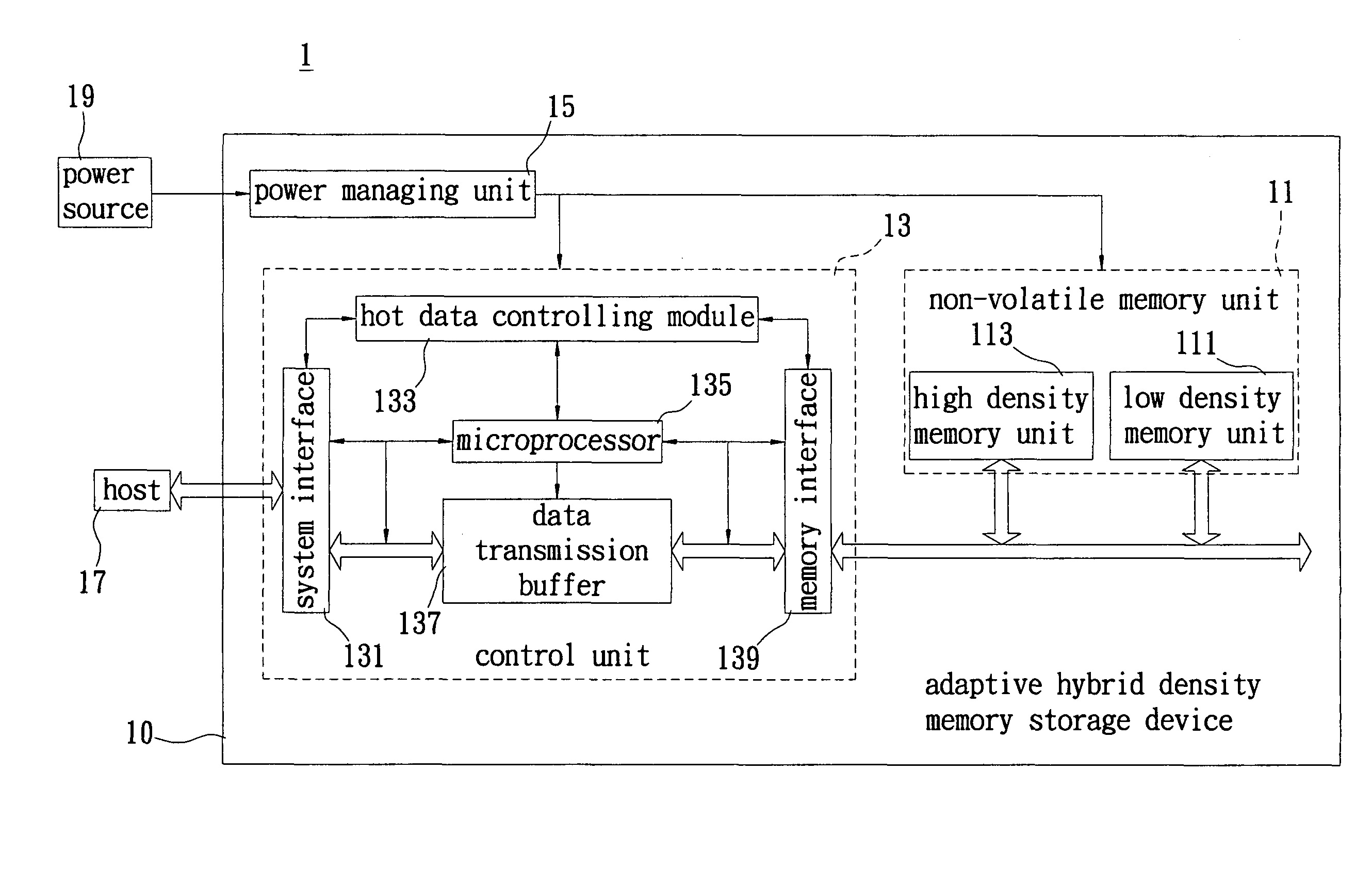

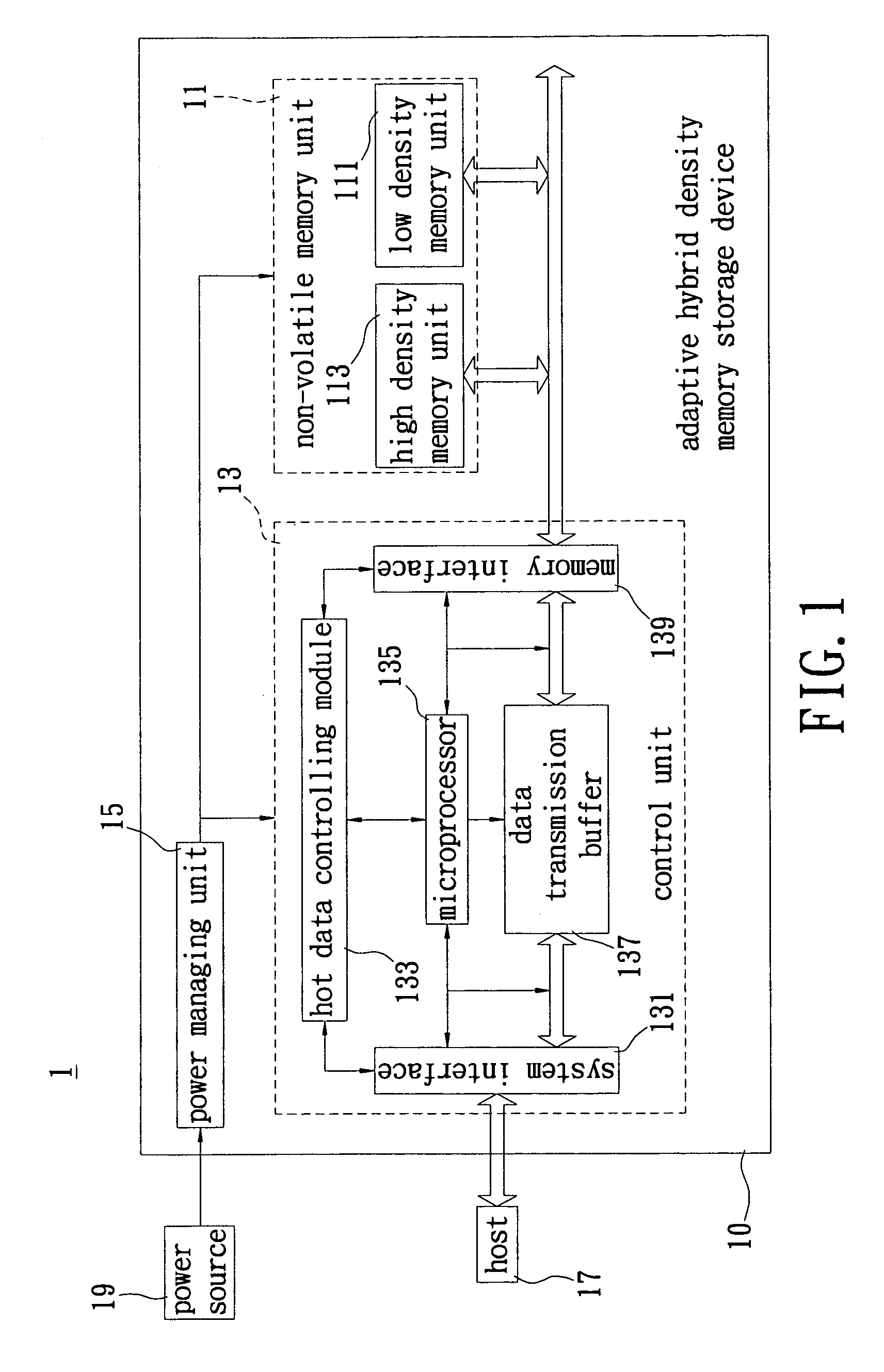

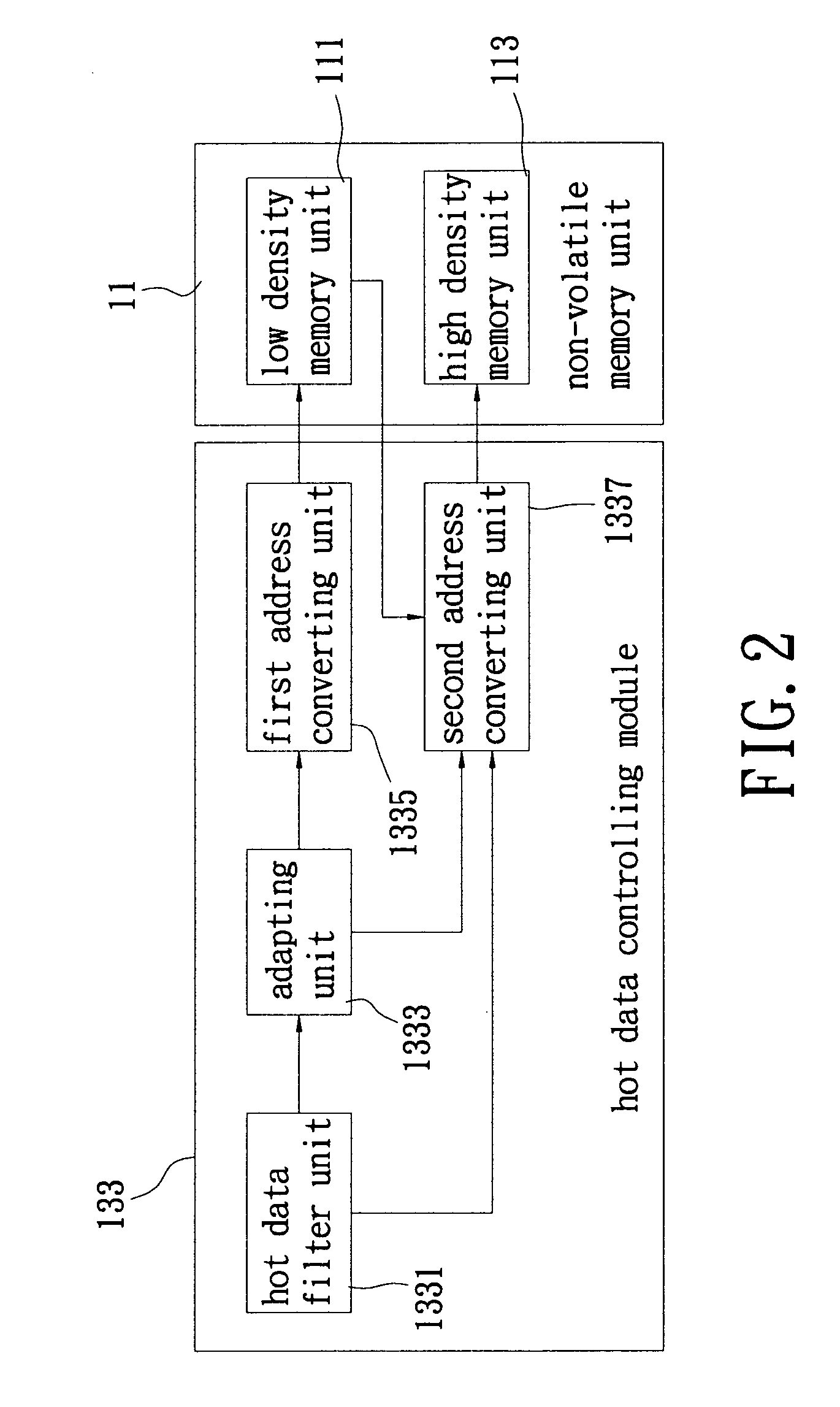

Adaptive hybrid density memory storage device and control method thereof

Patent | Active | US8171207B2

Innovation

- An adaptive hybrid density memory storage device and control method that utilizes a hot data filter to determine data length and a wearing rate analysis to decide the location of writing data between low and high density memory units, ensuring efficient data placement and balanced erase cycles.
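
The general idea of combining a hot-data filter with a wear check can be sketched as follows. This is a loose, hypothetical reading of the summary above and not the patented method: the length threshold, the wear margin, and every name in the code are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class MemoryUnit:
    name: str
    rated_erase_cycles: int  # endurance rating of the unit
    erase_count: int = 0

    @property
    def wear_rate(self) -> float:
        """Fraction of rated endurance already consumed."""
        return self.erase_count / self.rated_erase_cycles


def choose_unit(write_len_bytes: int, low_density: MemoryUnit,
                high_density: MemoryUnit, hot_len_threshold: int = 4096,
                wear_margin: float = 0.10) -> MemoryUnit:
    """Pick a target unit for a write.

    Assumptions (illustrative only): short writes are treated as hot data and
    prefer the low-density unit; long writes prefer the high-density unit. The
    preference is overridden only when the preferred unit is ahead of the
    other in wear by more than wear_margin.
    """
    is_hot = write_len_bytes <= hot_len_threshold
    preferred, other = ((low_density, high_density) if is_hot
                        else (high_density, low_density))
    if preferred.wear_rate - other.wear_rate > wear_margin:
        return other
    return preferred


if __name__ == "__main__":
    low = MemoryUnit("low-density", rated_erase_cycles=100_000, erase_count=1_000)
    high = MemoryUnit("high-density", rated_erase_cycles=10_000, erase_count=500)
    print(choose_unit(512, low, high).name)      # short write
    print(choose_unit(1 << 20, low, high).name)  # long write
```

The margin keeps the hot-data decision in charge during normal operation and lets the wear check intervene only when erase cycles become noticeably unbalanced.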

Memory system having hybrid density memory and methods for wear-leveling management and file distribution management thereof

Patent | Active | US8015346B2

Innovation

- A memory system that employs weighting factors based on endurance counts to evenly distribute erase counts across blocks, using a sampling table to select storage positions and a reference table for file distribution to optimize storage performance.
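
A generic way to bias writes toward less-worn blocks is weighted random selection, sketched below. This is an illustrative stand-in rather than the patent's sampling-table mechanism: the weight definition (remaining endurance fraction) and the function names are assumptions.

```python
import random


def remaining_weights(erase_counts, endurance_limits):
    """Weight each block by the fraction of its endurance that is left."""
    return [max(limit - count, 0) / limit
            for count, limit in zip(erase_counts, endurance_limits)]


def pick_block(erase_counts, endurance_limits, rng):
    """Choose a block index with probability proportional to remaining endurance.

    rng is a random.Random instance so that callers can seed it.
    """
    weights = remaining_weights(erase_counts, endurance_limits)
    if sum(weights) == 0:
        raise RuntimeError("all blocks have reached their endurance limit")
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)
    counts = [0, 500, 1000]       # hypothetical erase counts
    limits = [1000, 1000, 1000]   # hypothetical endurance ratings
    for _ in range(300):
        counts[pick_block(counts, limits, rng)] += 1
    print(counts)
```

Because fresher blocks carry larger weights, erase counts drift toward each other over time, and a block that has reached its limit is never selected.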

Performance Trade-offs in Data Density Architectures

The fundamental performance trade-offs in data density architectures between near-memory and conventional storage systems present complex optimization challenges that significantly impact overall system efficiency. These trade-offs manifest across multiple dimensions including latency, bandwidth, power consumption, and cost per bit, creating a multifaceted decision matrix for system architects.

Near-memory computing architectures improve effective throughput per stored bit by reducing data movement overhead, enabling processing elements to operate directly on data held in high-bandwidth memory layers. This approach mitigates, rather than eliminates, the memory wall bottleneck by shortening the distance between computation and storage, which lowers access latency. However, this performance gain comes at the expense of higher per-bit storage costs and increased power density, particularly in processing-in-memory or processing-near-memory configurations.

Conventional storage architectures maintain cost advantages through economies of scale and mature manufacturing processes, offering significantly lower cost per bit for large-scale data storage. The hierarchical memory structure in conventional systems provides flexibility in balancing performance and cost through intelligent data placement strategies. Nevertheless, these systems suffer from inherent bandwidth limitations and latency penalties associated with data movement across the memory hierarchy, particularly when handling data-intensive workloads with irregular access patterns.

The performance trade-offs become particularly pronounced in applications requiring high data throughput with concurrent processing demands. Near-memory architectures excel in scenarios involving streaming data processing, graph analytics, and machine learning inference where data locality can be effectively exploited. Conversely, conventional storage systems demonstrate superior performance in applications with predictable access patterns and where data persistence requirements outweigh immediate processing needs.

Energy efficiency considerations further complicate the trade-off analysis, as near-memory solutions reduce data movement energy costs but may increase static power consumption due to the integration of processing elements within memory arrays. The optimal architecture selection depends heavily on workload characteristics, performance requirements, and cost constraints specific to each application domain.

Energy Efficiency Considerations in Dense Storage Systems

Energy efficiency has emerged as a critical design consideration in dense storage systems, particularly when evaluating the trade-offs between near-memory and conventional storage architectures. The power consumption characteristics of these two approaches differ significantly, with near-memory storage typically exhibiting higher instantaneous power draw but potentially lower overall energy consumption due to reduced data movement overhead.

Near-memory storage systems tend to be more energy efficient in workloads that access data frequently. By positioning storage elements closer to processing units, these architectures avoid much of the energy overhead of long-distance data transfers across traditional memory hierarchies. Lower latency also reduces energy per operation, because processors spend less time stalled waiting for data.

Conventional storage systems, while consuming less power per storage unit, often require substantial energy for data movement operations. The energy cost of transferring data through multiple cache levels and across system buses can exceed the actual computation energy requirements. This inefficiency becomes particularly pronounced in data-intensive applications where large datasets must be repeatedly accessed from remote storage locations.

Energy metrics show a consistent pattern when comparing these architectures. Near-memory solutions achieve higher performance per watt in compute-intensive scenarios, while conventional storage retains the advantage where data must be kept for long periods and is accessed infrequently. Near-memory designs typically become the more energy-efficient choice once data access frequency exceeds a workload-dependent threshold, which makes workload characterization essential for optimal energy efficiency.
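One way to reason about the break-even point is a two-term model: a near-memory design pays extra static power but saves some energy on every access. Under that assumption, the break-even access rate is the extra static power divided by the energy saved per access. The sketch below works through this; the function names and all wattage and per-access figures are hypothetical and serve only to illustrate the arithmetic.

```python
def break_even_access_rate(extra_static_power_w: float,
                           energy_saved_per_access_j: float) -> float:
    """Access rate (accesses per second) above which the near-memory design
    uses less total energy, under the simple two-term model described above."""
    if energy_saved_per_access_j <= 0:
        raise ValueError("near-memory must save energy per access to break even")
    return extra_static_power_w / energy_saved_per_access_j


def total_energy_j(static_power_w: float, energy_per_access_j: float,
                   access_rate_hz: float, duration_s: float) -> float:
    """Static energy plus per-access energy over the given duration."""
    return (static_power_w + energy_per_access_j * access_rate_hz) * duration_s


if __name__ == "__main__":
    # Hypothetical figures, not measurements.
    conv_static_w, conv_access_j = 1.0, 10e-9
    near_static_w, near_access_j = 1.5, 5e-9

    threshold = break_even_access_rate(near_static_w - conv_static_w,
                                       conv_access_j - near_access_j)
    print(f"break-even at roughly {threshold:.3g} accesses per second")

    for rate in (5e7, 2e8):
        conv = total_energy_j(conv_static_w, conv_access_j, rate, 1.0)
        near = total_energy_j(near_static_w, near_access_j, rate, 1.0)
        winner = "near-memory" if near < conv else "conventional"
        print(f"rate {rate:.0e}/s: lower energy with {winner}")
```

The model ignores effects such as thermal throttling and idle-state transitions, so it is best treated as a first-order estimate that motivates measuring the actual access rate of a workload.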

Dynamic power management strategies further differentiate these approaches. Near-memory systems can implement fine-grained power gating and voltage scaling at the storage element level, enabling rapid transitions between active and dormant states. Conventional storage systems rely more heavily on bulk power management techniques, which may not provide the same level of energy optimization granularity.
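The difference between fine-grained and bulk power management can be sketched with a toy leakage model. The example assumes a device split into banks, an activity trace listing which banks are in use during each interval, and a fixed leakage power per powered bank. All of these, including the function name and the numeric values, are hypothetical, and the wake-up cost of a gated bank is deliberately ignored.

```python
from typing import Sequence, Set


def leakage_energy_j(trace: Sequence[Set[int]], num_banks: int,
                     leak_w_per_bank: float, interval_s: float,
                     fine_grained: bool) -> float:
    """Leakage energy over an activity trace.

    trace holds one set of active bank ids per time interval.
    fine_grained=True  : only the banks in use are powered (per-bank gating).
    fine_grained=False : the whole device is powered whenever any bank is in
                         use, and gated only when every bank is idle.
    """
    powered_bank_intervals = 0
    for active in trace:
        if fine_grained:
            powered_bank_intervals += len(active)
        elif active:
            powered_bank_intervals += num_banks
    return powered_bank_intervals * leak_w_per_bank * interval_s


if __name__ == "__main__":
    # Hypothetical trace: four intervals on a four-bank device.
    trace = [{0}, {0, 1}, set(), {3}]
    fine = leakage_energy_j(trace, 4, 0.01, 1.0, fine_grained=True)
    bulk = leakage_energy_j(trace, 4, 0.01, 1.0, fine_grained=False)
    print(f"fine-grained gating: {fine:.2f} J, bulk gating: {bulk:.2f} J")
```

In this toy model the gap between the two results grows as activity becomes sparser, which is the situation where per-element gating is most useful. A more realistic model would also charge energy and latency for each wake-up transition.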

Thermal considerations also affect energy efficiency in dense storage deployments. Near-memory architectures can distribute heat generation more evenly across the system, potentially reducing cooling requirements. However, integrating processing elements into memory raises local power density, which may necessitate more sophisticated thermal management and add to system energy consumption beyond the storage subsystem itself.
