Comparing Persistent Memory Write Coalescing Mechanisms in Databases

MAY 13, 2026 · 9 MIN READ

Persistent Memory Database Evolution and Objectives

Persistent memory changes database storage architecture by bridging the traditional gap between volatile memory and non-volatile storage. It emerged in response to the limitations of conventional storage hierarchies, in which databases relied on DRAM for performance and on disk for persistence, creating significant bottlenecks in data-intensive applications.

The evolution of persistent memory databases began with the introduction of 3D XPoint memory, developed jointly by Intel and Micron, and with NVDIMM implementations. Early adoption focused on using persistent memory as a high-performance storage tier, but the technology's characteristics soon revealed opportunities for fundamental architectural redesigns. Database systems optimized for disk-based storage had to be rethought to exploit persistent memory's byte-addressability and near-DRAM performance.

Write coalescing mechanisms emerged as a critical optimization technique within this evolution. As database workloads increasingly demanded higher throughput and lower latency, the ability to efficiently batch and optimize write operations to persistent memory became paramount. The challenge lies in balancing write performance, data consistency, and durability guarantees while maximizing the utilization of persistent memory's unique properties.

Current technological objectives center on developing sophisticated write coalescing strategies that can intelligently aggregate multiple small writes into larger, more efficient operations. These mechanisms must address the inherent trade-offs between write amplification, latency, and consistency requirements. Advanced coalescing algorithms aim to minimize the overhead associated with persistence operations while maintaining ACID properties essential for database reliability.

The primary goal driving this technological advancement is achieving near-memory performance for persistent operations without compromising data integrity. Modern implementations focus on adaptive coalescing strategies that can dynamically adjust based on workload characteristics, memory bandwidth utilization, and application-specific requirements. This includes developing intelligent buffering mechanisms, optimized flush strategies, and hardware-aware algorithms that leverage persistent memory's unique characteristics.

Future objectives emphasize creating unified frameworks that can seamlessly integrate multiple coalescing approaches, enabling database systems to automatically select optimal strategies based on real-time performance metrics and workload patterns. The ultimate vision involves transparent persistence layers that deliver both the performance benefits of in-memory computing and the reliability guarantees of traditional persistent storage systems.

Market Demand for High-Performance Database Systems

The global database market is experiencing unprecedented growth driven by the exponential increase in data generation and the critical need for real-time processing capabilities. Organizations across industries are generating massive volumes of structured and unstructured data that require sophisticated storage and retrieval mechanisms. Traditional database systems are increasingly challenged by performance bottlenecks, particularly in write-intensive applications where data persistence and consistency are paramount.

Enterprise applications demand sub-millisecond response times for transaction processing, real-time analytics, and concurrent user access. Financial trading platforms, e-commerce systems, and IoT applications generate continuous streams of data that must be processed and stored with minimal latency. The emergence of persistent memory technologies has created new opportunities to bridge the performance gap between volatile memory and traditional storage systems.

Cloud computing adoption has intensified the demand for scalable database solutions that can handle dynamic workloads efficiently. Organizations are migrating mission-critical applications to cloud environments, requiring database systems that can maintain consistent performance under varying load conditions. The shift toward microservices architectures and containerized deployments further emphasizes the need for databases that can optimize write operations through advanced coalescing mechanisms.

Memory-centric computing paradigms are reshaping database architecture requirements. Applications in artificial intelligence, machine learning, and real-time analytics require databases that can leverage persistent memory's unique characteristics to accelerate data processing workflows. The ability to maintain data persistence while achieving near-DRAM performance levels has become a critical differentiator in competitive markets.

Industry verticals including telecommunications, healthcare, and autonomous systems are driving demand for databases that can handle high-frequency write operations with guaranteed durability. These sectors require systems that can use write coalescing to reduce wear on persistent memory devices while maintaining ACID compliance. The growing adoption of edge computing further amplifies the need for efficient write mechanisms that can operate in resource-constrained environments.

The convergence of 5G networks, edge computing, and IoT deployments is creating new market segments that prioritize write performance optimization. Database vendors are responding by developing specialized solutions that leverage persistent memory write coalescing to achieve superior performance characteristics compared to traditional disk-based systems.

Current State of PM Write Coalescing Technologies

Persistent memory write coalescing technologies have emerged as critical optimization mechanisms for database systems leveraging non-volatile memory architectures. Current implementations primarily focus on reducing write amplification and improving transaction throughput by aggregating multiple small writes into larger, more efficient operations before committing to persistent storage.

The dominant approach in contemporary database systems involves buffer-based coalescing mechanisms, where write operations are temporarily accumulated in volatile memory buffers before being flushed to persistent memory. Intel's PMDK library provides foundational support through its libpmem interface, enabling applications to implement custom coalescing strategies. Major database vendors have adopted variations of this approach, with each implementing proprietary optimizations tailored to their specific storage engines.
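
The buffer-based approach described above can be sketched in a few lines of Python. This is a minimal illustration, not the design of any particular database or of PMDK: the class `CoalescingBuffer`, the `persist` callback, and the `max_pending` threshold are all hypothetical names, and the callback merely stands in for whatever flush path a real engine would use.

```python
from typing import Callable, Dict, List, Tuple

Batch = List[Tuple[int, bytes]]


class CoalescingBuffer:
    """Volatile staging buffer that merges writes before persisting them."""

    def __init__(self, persist: Callable[[Batch], None], max_pending: int = 4) -> None:
        self._persist = persist          # hypothetical stand-in for the PM flush path
        self._max_pending = max_pending  # flush threshold, in distinct addresses
        self._pending: Dict[int, bytes] = {}

    def write(self, addr: int, data: bytes) -> None:
        # A later write to the same address replaces the earlier one,
        # so only the final value is ever handed to the persist callback.
        self._pending[addr] = data
        if len(self._pending) >= self._max_pending:
            self.flush()

    def flush(self) -> None:
        if not self._pending:
            return
        batch = sorted(self._pending.items())  # ascending address order
        self._persist(batch)
        self._pending.clear()


# Usage: five logical writes result in two persist calls.
persisted_batches: List[Batch] = []
buf = CoalescingBuffer(persisted_batches.append, max_pending=3)
for addr, data in [(0, b"a"), (64, b"b"), (0, b"c"), (128, b"d"), (64, b"e")]:
    buf.write(addr, data)
buf.flush()
```

Anything still in the volatile buffer is lost on a crash, so a real system would have to pair such a buffer with logging or delay acknowledging writes until `flush` has completed.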

Log-structured coalescing represents another prevalent technique, particularly effective in write-intensive workloads. This method batches transaction logs and metadata updates, reducing the frequency of expensive persistence operations. Systems like Apache Cassandra and RocksDB have demonstrated significant performance improvements through log-based write coalescing, achieving up to 40% reduction in write latency under high-concurrency scenarios.
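
As a rough illustration of log batching, the sketch below groups log records and persists each group with a single operation, in the style usually called group commit. It is not taken from Cassandra or RocksDB; `GroupCommitLog`, `group_size`, and the 4-byte length prefix are assumptions made for the example, and the `durable` byte array only stands in for a persistent log region.

```python
from typing import List


class GroupCommitLog:
    """Stages log records and persists them in groups."""

    def __init__(self, group_size: int) -> None:
        self.group_size = group_size
        self.durable = bytearray()   # stand-in for the persistent log region
        self.persist_calls = 0       # how many persistence operations were issued
        self._staged: List[bytes] = []

    def append(self, record: bytes) -> None:
        self._staged.append(record)
        if len(self._staged) >= self.group_size:
            self.commit()

    def commit(self) -> None:
        if not self._staged:
            return
        # Length-prefix each record so the log can be parsed during recovery.
        payload = b"".join(len(r).to_bytes(4, "little") + r for r in self._staged)
        self.durable += payload      # one append covers the whole group
        self.persist_calls += 1
        self._staged.clear()


# Usage: ten records with a group size of four need three persistence operations.
log = GroupCommitLog(group_size=4)
for i in range(10):
    log.append(f"txn-{i}".encode())
log.commit()
```

The trade-off is visible in the structure: a transaction whose record is still staged cannot be reported as committed, so larger groups reduce persistence operations at the cost of commit latency.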

Hardware-assisted coalescing mechanisms are gaining traction, leveraging features like Intel's eADR (enhanced Asynchronous DRAM Refresh) and write-combining buffers. With eADR, the CPU caches fall inside the persistence domain, so repeated stores to the same cache line can merge in cache without explicit flush instructions; write-combining buffers and the memory controller can merge adjacent writes further down the path. These mechanisms need little or no application-level intervention. However, their effectiveness varies significantly with access patterns and memory alignment.

Current challenges include managing consistency guarantees during coalescing operations, particularly in ACID-compliant database systems. The temporal gap between write initiation and actual persistence creates complex failure scenarios that require sophisticated recovery mechanisms. Additionally, determining optimal coalescing window sizes remains an active area of research, as overly aggressive batching can increase transaction latency while insufficient coalescing fails to maximize throughput benefits.

Recent developments focus on adaptive coalescing algorithms that dynamically adjust batching parameters based on workload characteristics and system performance metrics. These intelligent mechanisms show promise for addressing the inherent trade-offs between latency and throughput optimization in persistent memory database architectures.
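
One simple way to picture an adaptive policy is a feedback rule that grows the batch size while latency stays under a target and cuts it back when the target is exceeded. The sketch below uses an additive-increase, multiplicative-decrease rule; this specific rule, the function name `next_batch_size`, the bounds, and the latency figures fed into it are all assumptions for illustration, not a description of any published algorithm or measured system.

```python
def next_batch_size(current: int, observed_latency_us: float,
                    target_latency_us: float,
                    min_size: int = 1, max_size: int = 64) -> int:
    """Return the batch size to use for the next coalescing window."""
    if observed_latency_us > target_latency_us:
        proposed = current // 2   # back off quickly when latency is too high
    else:
        proposed = current + 1    # otherwise probe for more throughput
    return max(min_size, min(max_size, proposed))


# Usage with made-up latency samples (microseconds) and a 100 us target.
size = 8
history = []
for latency in [50.0, 60.0, 150.0, 40.0]:
    size = next_batch_size(size, latency, target_latency_us=100.0)
    history.append(size)
# history is now [9, 10, 5, 6]
```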

Existing Write Coalescing Implementation Approaches

01 Buffer-based write coalescing mechanisms

Write coalescing mechanisms that utilize buffer structures to combine multiple small write operations into larger, more efficient writes to persistent memory. These mechanisms typically employ temporary storage areas where incoming write requests are accumulated and merged before being committed to the persistent storage medium, reducing the overall number of write operations and improving system performance.

- Buffer-based write coalescing mechanisms: Write coalescing techniques that utilize buffer structures to temporarily hold multiple write operations before committing them to persistent memory. These mechanisms aggregate small writes into larger, more efficient write operations, reducing the overall number of memory transactions and improving system performance. The buffer-based approach allows for intelligent merging of adjacent or overlapping write requests.

- Cache-line aligned write coalescing: Optimization techniques that align write operations with cache line boundaries to maximize coalescing efficiency in persistent memory systems. This approach ensures that multiple writes targeting the same cache line are combined before being written to persistent storage, minimizing memory bandwidth usage and reducing write amplification effects.

- Temporal write coalescing with delay mechanisms: Systems that implement time-based delays to allow multiple write operations to accumulate before flushing to persistent memory. These mechanisms use configurable time windows or thresholds to determine optimal coalescing opportunities, balancing between write latency and coalescing effectiveness for improved overall system throughput.

- Hardware-accelerated write coalescing controllers: Dedicated hardware components and controllers designed specifically for managing write coalescing operations in persistent memory architectures. These specialized units handle the detection, aggregation, and scheduling of write operations at the hardware level, providing low-latency coalescing with minimal software overhead.

- Multi-level write coalescing hierarchies: Advanced architectures that implement multiple layers of write coalescing across different levels of the memory hierarchy. These systems coordinate coalescing operations between various cache levels, memory controllers, and persistent storage interfaces to achieve optimal write efficiency and minimize redundant data transfers throughout the entire memory subsystem.
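
To make the cache-line aligned variant in the list above concrete, the following sketch maps a set of small writes onto the cache lines they touch, so that each line needs to be flushed only once. The 64-byte line size and the helper name `lines_touched` are assumptions for the example.

```python
from typing import Iterable, List, Tuple

CACHE_LINE = 64  # bytes; assumed line size


def lines_touched(writes: Iterable[Tuple[int, int]]) -> List[int]:
    """Return the sorted base addresses of every cache line covered by the writes.

    Each write is an (address, length) pair.
    """
    lines = set()
    for addr, length in writes:
        if length <= 0:
            continue
        first = addr // CACHE_LINE
        last = (addr + length - 1) // CACHE_LINE
        lines.update(range(first, last + 1))
    return [n * CACHE_LINE for n in sorted(lines)]


# Usage: four writes collapse to three cache-line flushes.
writes = [(0, 8), (8, 8), (56, 16), (200, 4)]
flush_targets = lines_touched(writes)
# flush_targets is [0, 64, 192]; the write at offset 56 straddles two lines.
```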

02 Cache-based write coalescing optimization

Techniques that leverage cache hierarchies to optimize write coalescing for persistent memory systems. These approaches use various cache levels to temporarily hold write data, allowing the system to identify and merge related write operations before they reach the persistent memory layer, thereby reducing write amplification and improving overall system efficiency.

03 Address-based write coalescing strategies

Methods that analyze memory addresses and access patterns to intelligently coalesce write operations targeting nearby or related memory locations. These strategies examine the spatial and temporal locality of write requests to determine optimal coalescing opportunities, enabling more efficient utilization of persistent memory bandwidth and reducing wear on storage devices.

04 Transaction-based write coalescing protocols

Protocols that implement write coalescing within transactional memory frameworks for persistent storage systems. These mechanisms ensure atomicity and consistency while combining multiple write operations into single transactions, providing both performance benefits through reduced write operations and maintaining data integrity guarantees required for persistent memory applications.

05 Hardware-accelerated write coalescing implementations

Hardware-based solutions that implement write coalescing mechanisms directly in memory controllers or specialized processing units. These implementations provide low-latency write coalescing capabilities by utilizing dedicated hardware resources to identify, buffer, and merge write operations at the hardware level, offering superior performance compared to software-only approaches.
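
Of the approaches above, the address-based strategy is the easiest to show in isolation. The sketch below merges pending writes whose address ranges overlap or touch into single contiguous ranges. It handles ranges only; deciding which data wins where writes overlap is left out. The function name `merge_ranges` is a hypothetical helper.

```python
from typing import List, Tuple


def merge_ranges(writes: List[Tuple[int, int]]) -> List[Tuple[int, int]]:
    """Merge overlapping or adjacent (start, length) ranges into contiguous ones."""
    merged: List[List[int]] = []          # each entry is [start, end), end exclusive
    for start, length in sorted(writes):
        end = start + length
        if merged and start <= merged[-1][1]:
            # Overlaps or touches the previous range: extend it.
            merged[-1][1] = max(merged[-1][1], end)
        else:
            merged.append([start, end])
    return [(s, e - s) for s, e in merged]


# Usage: five pending writes become three contiguous ranges.
coalesced = merge_ranges([(100, 20), (0, 16), (16, 16), (110, 30), (300, 8)])
# coalesced is [(0, 32), (100, 40), (300, 8)]
```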

Major Database and Storage Technology Vendors

The persistent memory write coalescing mechanisms in databases represent a rapidly evolving technological domain currently in the growth phase, driven by increasing demand for high-performance data processing and storage optimization. The market demonstrates significant expansion potential as enterprises seek enhanced database performance and reduced latency. Technology maturity varies considerably across key players, with established semiconductor leaders like Intel, Micron Technology, and Samsung Electro-Mechanics advancing hardware-level persistent memory solutions, while database specialists including Oracle and SAP focus on software optimization mechanisms. Chinese technology giants Huawei and ZTE, alongside research institutions like Huazhong University of Science & Technology, contribute innovative approaches to memory management architectures. The competitive landscape shows convergence between hardware manufacturers developing next-generation persistent memory technologies and software companies implementing sophisticated coalescing algorithms, indicating a maturing ecosystem where integrated hardware-software solutions are becoming increasingly critical for competitive advantage in high-performance database applications.

Intel Corp.

Technical Solution: Intel has developed comprehensive persistent memory solutions including Intel Optane DC Persistent Memory with advanced write coalescing mechanisms. Their approach utilizes hardware-level write combining buffers and memory controllers that can aggregate multiple small writes into larger, more efficient transactions before committing to persistent storage. The technology incorporates caching algorithms that delay and batch writes to reduce media wear and improve performance. Intel's persistent memory architecture includes specialized drivers and APIs that enable database systems to optimize write patterns, supporting both volatile and non-volatile usage modes with integration into existing database infrastructures.

Strengths: Industry-leading hardware integration, proven scalability in enterprise environments, comprehensive software stack support. Weaknesses: Higher cost compared to traditional storage solutions, limited availability and vendor lock-in concerns.

Oracle International Corp.

Technical Solution: Oracle has implemented sophisticated write coalescing mechanisms in their database systems specifically optimized for persistent memory technologies. Their approach includes the Oracle Database In-Memory option with persistent memory support, featuring intelligent write batching algorithms that group related database operations before committing to persistent storage. The system employs adaptive coalescing strategies that analyze workload patterns and dynamically adjust write grouping policies to minimize latency while ensuring data consistency. Oracle's implementation includes specialized buffer management techniques and transaction log optimization that work seamlessly with persistent memory characteristics, providing both performance benefits and durability guarantees for enterprise database workloads.

Strengths: Deep database expertise, enterprise-grade reliability, integrated with comprehensive database management features. Weaknesses: Proprietary solutions with high licensing costs, limited flexibility for custom implementations.

Key Patents in PM Write Optimization Techniques

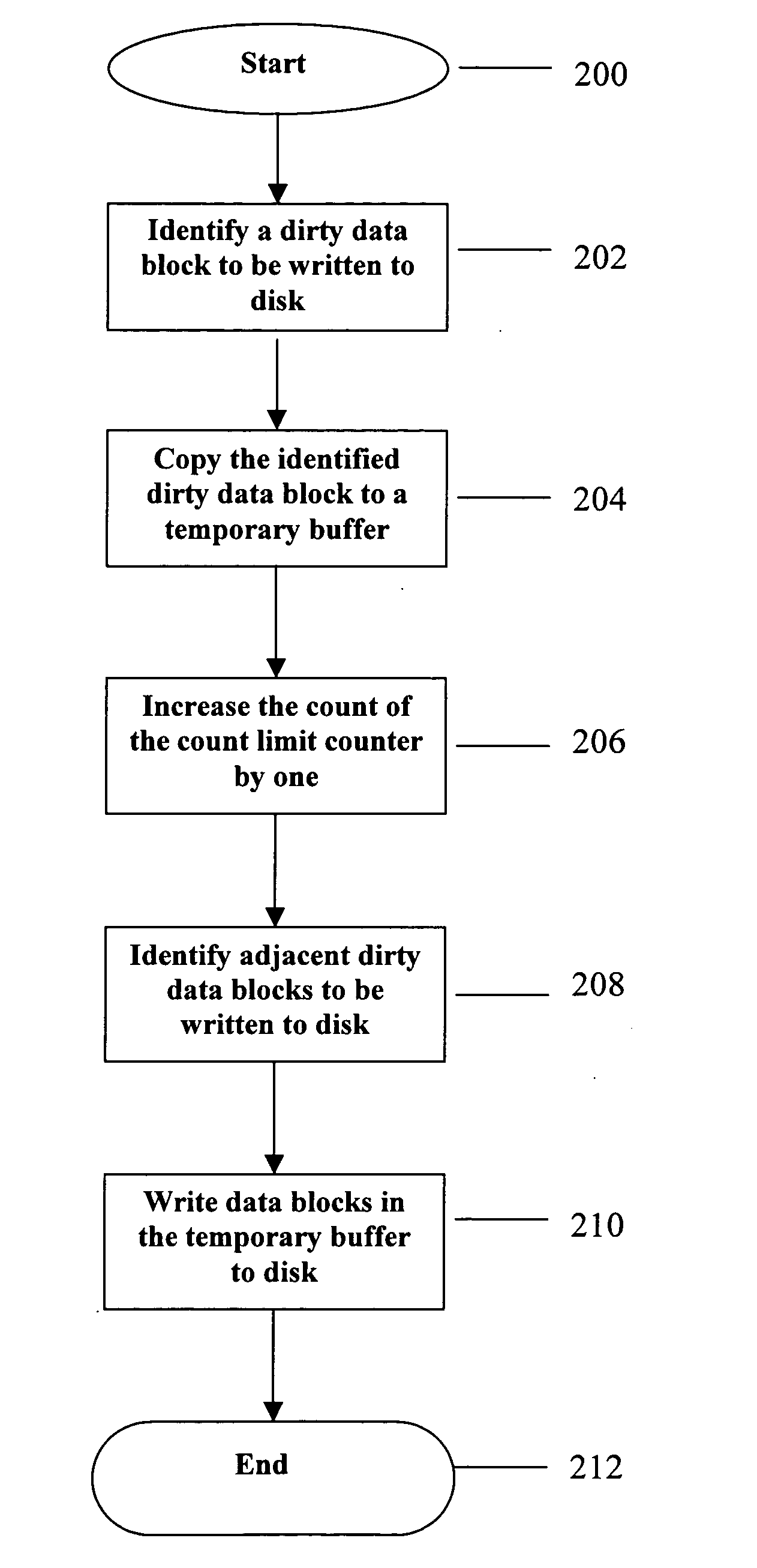

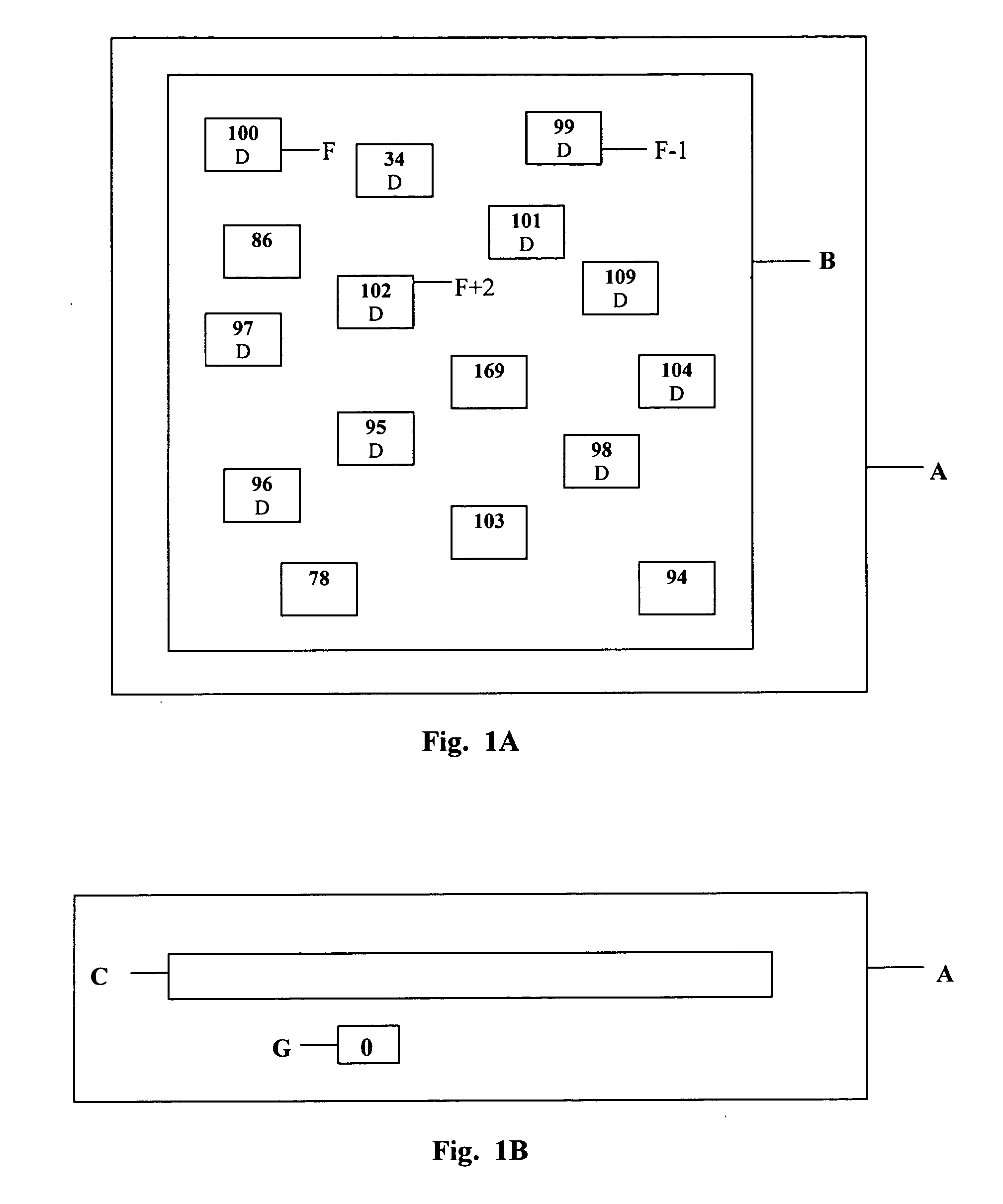

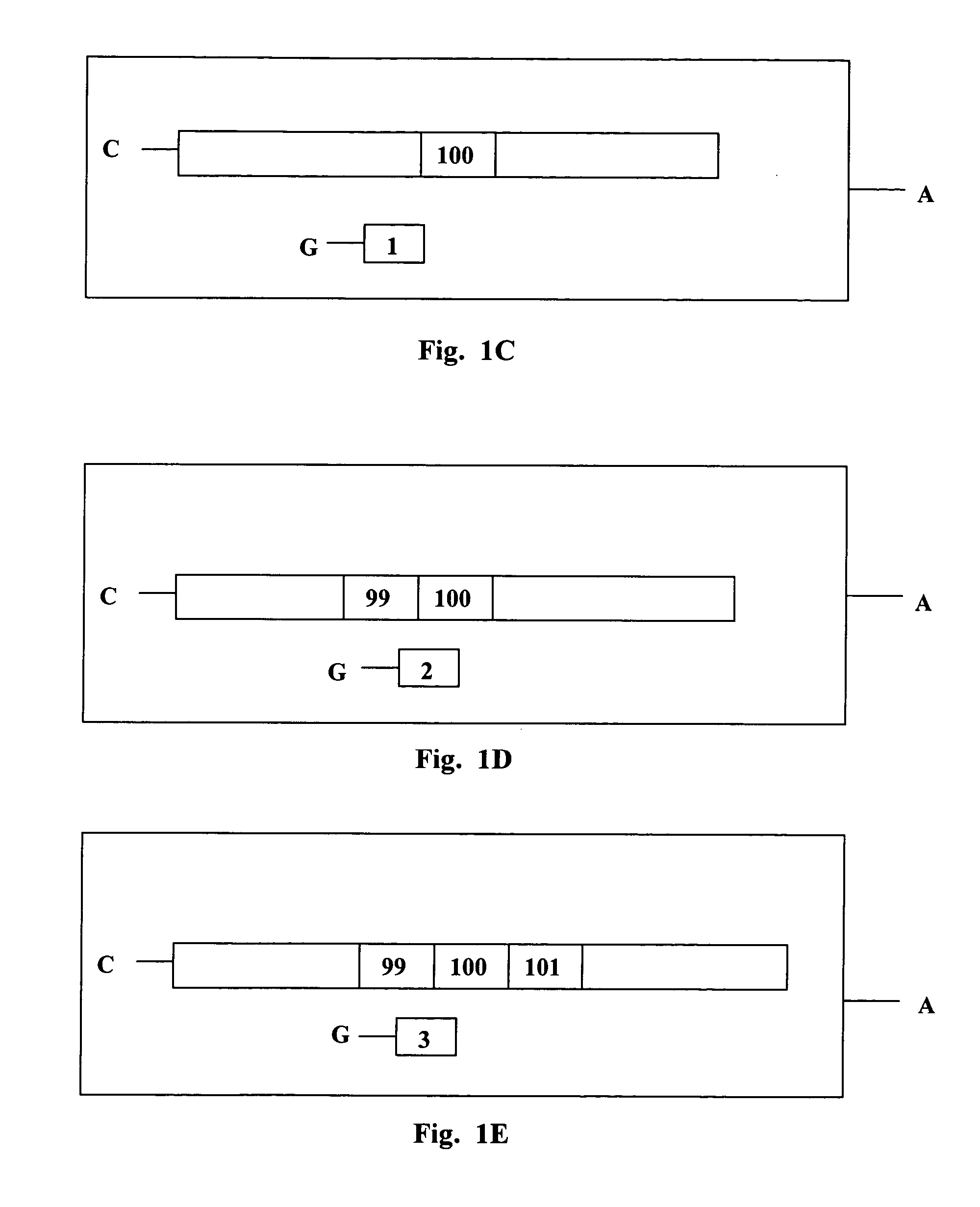

Reducing disk IO by full-cache write-merging

Patent (Inactive): US20050044311A1

Innovation

- The method involves coalescing writes by identifying and writing adjacent dirty data blocks in a buffer cache as a single IO operation, reducing disk head movements and maintaining high IO throughput without requiring additional disks or changes to storage management.

Techniques for reducing memory write operations using coalescing memory buffers and difference information

Patent (Active): US20170269855A1

Innovation

- A system and method that reduce write operations in memory by identifying and delaying operations that shorten its lifetime, using difference information to minimize the number of write operations and extend the memory's usability period.
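
The general idea of using difference information can be illustrated by comparing a new image of a region against the stored one and writing back only the chunks that changed. This is a generic sketch of the idea, not the method claimed in the patent; the 64-byte chunk size and the helper name `changed_chunks` are assumptions.

```python
from typing import List, Tuple


def changed_chunks(old: bytes, new: bytes, chunk: int = 64) -> List[Tuple[int, bytes]]:
    """Return (offset, data) for every chunk of `new` that differs from `old`."""
    if len(old) != len(new):
        raise ValueError("old and new images must have the same length")
    return [
        (off, new[off:off + chunk])
        for off in range(0, len(new), chunk)
        if old[off:off + chunk] != new[off:off + chunk]
    ]


# Usage: two modified bytes in a 256-byte region require two chunk writes, not four.
old = bytes(256)
updated = bytearray(old)
updated[70] = 1
updated[200] = 2
delta = changed_chunks(old, bytes(updated))
# The changed chunks start at offsets 64 and 192.
```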

Performance Benchmarking Standards for PM Databases

Establishing standardized performance benchmarking frameworks for persistent memory databases requires comprehensive evaluation methodologies that address the unique characteristics of PM storage systems. Current benchmarking approaches often fail to capture the nuanced performance implications of write coalescing mechanisms, necessitating specialized metrics and testing protocols designed specifically for PM-enabled database systems.

The foundation of effective PM database benchmarking lies in developing workload-specific performance indicators that reflect real-world usage patterns. Traditional database benchmarks like TPC-C and YCSB require substantial modifications to accurately assess PM write coalescing efficiency. Key metrics should include write amplification ratios, coalescing effectiveness percentages, and latency distributions under varying write burst scenarios. These measurements must account for the asymmetric read-write performance characteristics inherent in persistent memory technologies.
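
The first two metrics can be written down directly. Write amplification is conventionally the ratio of bytes written to the medium to bytes the application asked to write. The text does not define "coalescing effectiveness", so the definition below (the percentage of logical writes that did not need their own persistence operation) is an assumption, as are the function names.

```python
def write_amplification(media_bytes: int, logical_bytes: int) -> float:
    """Bytes physically written to the medium per byte written by the application."""
    if logical_bytes <= 0:
        raise ValueError("logical_bytes must be positive")
    return media_bytes / logical_bytes


def coalescing_effectiveness(logical_writes: int, persist_ops: int) -> float:
    """Percentage of logical writes absorbed by coalescing (assumed definition)."""
    if logical_writes <= 0:
        raise ValueError("logical_writes must be positive")
    return 100.0 * (logical_writes - persist_ops) / logical_writes


# Usage with illustrative counts, not measurements.
wa = write_amplification(media_bytes=256, logical_bytes=64)           # 4.0
eff = coalescing_effectiveness(logical_writes=5, persist_ops=2)       # 60.0
```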

Standardized testing environments should incorporate diverse hardware configurations representing different PM technologies, including Intel Optane DC Persistent Memory and emerging storage-class memory solutions. Benchmark suites must evaluate performance across multiple dimensions: transaction throughput under mixed workloads, recovery time objectives following system failures, and energy consumption patterns during intensive write operations. The testing framework should also assess scalability characteristics as database sizes approach PM capacity limits.

Reproducibility standards constitute another critical component of PM database benchmarking. Standardized configuration parameters, including PM allocation strategies, write coalescing buffer sizes, and persistence guarantee levels, must be clearly defined and consistently applied across different testing scenarios. Documentation requirements should specify hardware specifications, software versions, and environmental conditions to ensure meaningful performance comparisons between different coalescing mechanisms.

Industry collaboration remains essential for establishing widely-adopted benchmarking standards. Leading database vendors, hardware manufacturers, and academic institutions must contribute to developing comprehensive benchmark suites that reflect diverse application requirements. These standards should evolve continuously to accommodate emerging PM technologies and evolving database architectures, ensuring long-term relevance and applicability across the rapidly advancing persistent memory ecosystem.

Energy Efficiency Considerations in PM Systems

Energy efficiency has emerged as a critical design consideration in persistent memory systems, particularly when implementing write coalescing mechanisms in database applications. The unique characteristics of persistent memory technologies, including their higher energy consumption compared to traditional DRAM and their position in the memory hierarchy, necessitate careful optimization strategies to minimize power overhead while maintaining performance benefits.

Write coalescing mechanisms in persistent memory systems present distinct energy trade-offs that differ significantly from volatile memory implementations. The energy cost of persistent memory writes is substantially higher due to the underlying storage technology requirements, whether using 3D XPoint, phase-change memory, or other non-volatile technologies. Coalescing multiple small writes into larger, sequential operations can reduce the total energy consumption by minimizing the overhead associated with individual write operations and reducing the frequency of expensive persistence operations.
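
The argument in the paragraph above can be expressed as a first-order model in which each persistence operation carries a fixed overhead on top of a per-byte cost. The model and its parameters are assumptions for illustration; the values used below are arbitrary units, not measurements of any device.

```python
def write_energy(persist_ops: int, total_bytes: int,
                 energy_per_op: float, energy_per_byte: float) -> float:
    """First-order energy estimate: fixed cost per operation plus cost per byte."""
    return persist_ops * energy_per_op + total_bytes * energy_per_byte


# Same 6400 bytes written as 100 small operations or as 25 coalesced ones.
uncoalesced = write_energy(100, 6400, energy_per_op=10.0, energy_per_byte=1.0)
coalesced = write_energy(25, 6400, energy_per_op=10.0, energy_per_byte=1.0)
# Under this model only the per-operation term shrinks: 7400.0 versus 6650.0.
```

Under this model, coalescing saves energy only to the extent that the fixed per-operation cost is significant relative to the per-byte cost.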

Buffer management strategies play a crucial role in energy optimization within write coalescing systems. Intelligent buffering can reduce the number of actual persistent memory accesses by temporarily holding data in lower-power DRAM before committing coalesced writes. However, this approach must balance energy savings against the risk of data loss and the additional energy overhead of maintaining consistency between volatile and persistent storage layers.

The temporal aspects of write coalescing directly impact energy efficiency through their influence on system idle states and power management. Aggressive coalescing with longer delay windows can enable more effective power gating and reduce dynamic power consumption, but may conflict with durability requirements and increase the energy cost of maintaining intermediate state. Conversely, immediate write-through approaches minimize buffering energy but increase the frequency of high-energy persistent memory operations.

Hardware-software co-design approaches offer promising avenues for energy-efficient write coalescing in persistent memory systems. Advanced power management features, including selective memory bank activation and dynamic voltage scaling, can be coordinated with database-level coalescing policies to optimize energy consumption patterns. Additionally, emerging persistent memory controllers with built-in coalescing capabilities can reduce software overhead while providing more granular energy management controls.

The evaluation of energy efficiency in persistent memory write coalescing mechanisms requires comprehensive measurement methodologies that account for both dynamic and static power consumption across the entire memory subsystem, including controllers, interconnects, and the persistent memory devices themselves.
