Persistent Memory vs External Storage for High-Velocity Applications

MAY 13, 2026 | 9 MIN READ

Persistent Memory Technology Background and Objectives

Persistent memory technology represents a revolutionary paradigm shift in computer storage architecture, bridging the traditional gap between volatile memory and non-volatile storage. This technology emerged from the fundamental need to address the growing performance bottlenecks in data-intensive applications where conventional storage hierarchies create significant latency penalties. The evolution began with early research into phase-change memory and memristor technologies in the 2000s, progressing through Intel's 3D XPoint technology development, and culminating in commercial products like Intel Optane DC Persistent Memory.

The historical development trajectory shows persistent memory addressing critical limitations in traditional storage systems. Conventional architectures force applications to navigate between fast but volatile DRAM and slower but persistent storage devices, creating performance gaps that become increasingly problematic as data volumes and processing demands escalate. This dichotomy has historically required complex software architectures with extensive caching mechanisms, data serialization processes, and recovery procedures that introduce both complexity and performance overhead.

Current persistent memory technologies aim to flatten the traditional storage hierarchy by providing byte-addressable, directly accessible non-volatile memory that combines speed approaching that of DRAM with the persistence of traditional storage. The technology operates at the intersection of memory and storage, offering access latencies measured in hundreds of nanoseconds rather than the tens of microseconds typical of NVMe SSDs or the milliseconds of hard disk drives.

The primary technical objectives center on achieving near-memory performance while maintaining data persistence across power cycles. This involves developing memory controllers capable of managing both volatile and non-volatile access patterns, implementing efficient wear-leveling algorithms to ensure longevity, and creating programming models that allow applications to directly manipulate persistent data structures without traditional file system overhead.

For high-velocity applications, persistent memory technology targets specific performance and reliability goals. These include reducing application restart times by eliminating lengthy data loading phases, minimizing transaction commit latencies by removing storage I/O bottlenecks, and enabling real-time analytics on persistent datasets without the overhead of moving data between memory and storage tiers. The technology also aims to simplify application architectures by providing a unified memory-storage interface that reduces the complexity of data management in performance-critical scenarios.

Market Demand for High-Velocity Storage Solutions

The global demand for high-velocity storage solutions has experienced unprecedented growth driven by the exponential increase in data generation and the need for real-time processing capabilities. Organizations across industries are grappling with applications that require microsecond-level response times, creating a substantial market opportunity for advanced storage technologies that can bridge the performance gap between traditional storage and system memory.

Financial services represent one of the most demanding sectors for high-velocity storage, where algorithmic trading platforms require ultra-low latency data access to maintain competitive advantages. High-frequency trading systems process millions of transactions per second, making storage performance a critical factor in profitability. Similarly, fraud detection systems must analyze transaction patterns in real-time, necessitating storage solutions that can deliver consistent sub-millisecond response times.

The telecommunications industry has emerged as another significant driver of demand, particularly with the rollout of 5G networks and edge computing infrastructure. Network function virtualization and software-defined networking applications require storage systems capable of handling massive concurrent connections while maintaining quality of service guarantees. Edge computing deployments specifically demand storage solutions that combine high performance with reliability in distributed environments.

Gaming and entertainment sectors continue to push storage performance boundaries, with modern video games requiring seamless asset streaming and virtual reality applications demanding consistent frame rates. Cloud gaming services have intensified these requirements, as any storage-induced latency directly impacts user experience across network connections.

Database and analytics workloads constitute a substantial portion of the high-velocity storage market. In-memory databases, real-time analytics platforms, and machine learning inference engines all benefit significantly from storage technologies that can eliminate traditional I/O bottlenecks. The growing adoption of artificial intelligence applications has further amplified demand for storage solutions that can support both training and inference workloads with minimal latency overhead.

Enterprise applications increasingly require storage systems that can handle mixed workloads with predictable performance characteristics. Virtualization environments, containerized applications, and microservices architectures all benefit from storage technologies that can provide consistent performance across diverse application requirements while maintaining cost-effectiveness at scale.

Current State of Persistent Memory vs External Storage

The persistent memory landscape has undergone significant transformation over the past decade, with Intel's 3D XPoint technology leading the charge through products like Optane DC Persistent Memory. This technology bridges the traditional gap between volatile DRAM and non-volatile storage, offering byte-addressable access with persistence capabilities. However, Intel's discontinuation of Optane in 2022 has created uncertainty in the market, though the underlying concepts continue to drive innovation across the industry.

Current persistent memory implementations primarily utilize phase-change memory (PCM), resistive RAM (ReRAM), and magnetoresistive RAM (MRAM) technologies. These solutions typically deliver latencies in the range of 100-300 nanoseconds for read operations, significantly faster than traditional NAND flash storage but slower than conventional DRAM. Write operations generally exhibit higher latencies, often 2-10 times slower than reads, presenting challenges for write-intensive applications.

External storage systems have simultaneously evolved to address high-velocity application demands through NVMe, which has become the dominant interface for enterprise SSDs. Modern NVMe drives achieve sequential read speeds exceeding 7 GB/s and random performance surpassing 1 million IOPS. PCIe 5.0 and the emerging PCIe 6.0 standard raise the bandwidth ceiling further by doubling the per-lane rate to 32 GT/s and 64 GT/s respectively. A x4 link, the usual width for an NVMe drive, then offers roughly 16 GB/s and 32 GB/s per direction, and a x8 link roughly 32 GB/s and 64 GB/s.
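Quoted link maximums only make sense alongside a lane count, so a rough calculation helps when comparing figures. The sketch below assumes 32 GT/s per lane with 128b/130b encoding for PCIe 5.0, and treats the PCIe 6.0 result as a raw upper bound that ignores protocol overhead; the function name and the `efficiency` parameter are illustrative, not part of any specification.

```python
def pcie_bandwidth_gbs(gt_per_s: float, lanes: int, efficiency: float = 1.0) -> float:
    """Approximate one-direction link bandwidth in GB/s.

    gt_per_s   -- per-lane signaling rate in gigatransfers per second
    lanes      -- link width (x4 is typical for an NVMe drive)
    efficiency -- fraction of raw bits carrying payload (an assumption)
    """
    return gt_per_s * lanes * efficiency / 8.0


# PCIe 5.0: 32 GT/s per lane, 128b/130b encoding
gen5_x4 = pcie_bandwidth_gbs(32.0, 4, 128 / 130)
gen5_x16 = pcie_bandwidth_gbs(32.0, 16, 128 / 130)

# PCIe 6.0: 64 GT/s per lane, shown as a raw figure with no overhead applied
gen6_x4_raw = pcie_bandwidth_gbs(64.0, 4)

print(f"PCIe 5.0 x4:  {gen5_x4:.2f} GB/s")
print(f"PCIe 5.0 x16: {gen5_x16:.2f} GB/s")
print(f"PCIe 6.0 x4 (raw): {gen6_x4_raw:.2f} GB/s")
```

Real drives deliver less than the link maximum, since controller, flash, and protocol overheads all sit below this ceiling.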

Storage-class memory represents an emerging middle ground, combining aspects of both persistent memory and external storage. Technologies like Samsung's Z-NAND and Western Digital's ReRAM-based solutions offer improved latency characteristics compared to traditional NAND while maintaining the familiar block-based access patterns that existing applications can readily adopt.

The current technical challenge lies in optimizing software stacks to fully exploit persistent memory capabilities. Traditional storage I/O paths introduce unnecessary overhead when dealing with byte-addressable persistent memory, leading to the development of specialized programming models like PMDK (Persistent Memory Development Kit) and new file systems such as NOVA and SplitFS.
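To give a feel for the load/store programming model, here is a minimal sketch using Python's standard `mmap` module. It is not PMDK: an ordinary temporary file stands in for a DAX-mapped persistent memory region, and `mmap.flush()` stands in for the cache-line flush and fence that a persistent memory library would issue. The record layout and function names are hypothetical.

```python
import mmap
import os
import struct
import tempfile

RECORD = struct.Struct("<Q")  # one 8-byte little-endian counter (assumed layout)


def persist_counter(path: str, value: int) -> None:
    """Store a value through a memory mapping, then flush it to the backing file."""
    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), RECORD.size) as m:
            RECORD.pack_into(m, 0, value)  # plain store into mapped memory, no write() call
            m.flush()                      # stand-in for a cache-line flush plus fence


def load_counter(path: str) -> int:
    """Read the value back through the regular file interface."""
    with open(path, "rb") as f:
        return RECORD.unpack(f.read(RECORD.size))[0]


def demo() -> int:
    fd, path = tempfile.mkstemp()
    try:
        os.write(fd, b"\x00" * RECORD.size)  # the file must be sized before mapping
        os.close(fd)
        persist_counter(path, 42)
        return load_counter(path)
    finally:
        os.remove(path)


print(demo())
```

The point of the sketch is the shape of the code path: the update is a memory store followed by an explicit flush, with no block-oriented read or write call in between.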

Memory tiering and hybrid approaches are gaining traction as practical solutions for high-velocity applications. These systems automatically migrate frequently accessed data to faster tiers while maintaining less critical data on cost-effective storage layers, optimizing both performance and economic efficiency for diverse workload requirements.
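The migration policy can be sketched in a few lines. The example below is a deliberately simple assumption-laden model: two Python dictionaries stand in for the fast and slow tiers, promotion is triggered by an access-count threshold, and the least-accessed resident is demoted when the fast tier is full. `TwoTierStore`, `promote_after`, and the policy itself are hypothetical and not taken from any particular product.

```python
from collections import Counter


class TwoTierStore:
    """Toy two-tier store: hot keys migrate to a small fast tier."""

    def __init__(self, fast_capacity: int, promote_after: int = 3):
        self.fast = {}            # stands in for persistent memory or DRAM
        self.slow = {}            # stands in for external storage
        self.hits = Counter()     # access counts per key
        self.fast_capacity = fast_capacity
        self.promote_after = promote_after

    def put(self, key, value):
        # New data lands in the slow tier; a key already in the fast tier is updated in place.
        if key in self.fast:
            self.fast[key] = value
        else:
            self.slow[key] = value

    def get(self, key):
        self.hits[key] += 1
        if key in self.fast:
            return self.fast[key]
        value = self.slow[key]
        if self.hits[key] >= self.promote_after:
            self._promote(key)
        return value

    def _promote(self, key):
        if self.fast_capacity <= 0:
            return
        if len(self.fast) >= self.fast_capacity:
            # Demote the least-accessed resident and reset its count to avoid thrashing.
            coldest = min(self.fast, key=lambda k: self.hits[k])
            self.slow[coldest] = self.fast.pop(coldest)
            self.hits[coldest] = 0
        self.fast[key] = self.slow.pop(key)


store = TwoTierStore(fast_capacity=1, promote_after=2)
store.put("a", 1)
store.put("b", 2)
store.get("a")
store.get("a")  # second access promotes "a"
```

Production tiering systems usually track recency as well as frequency and migrate data in the background rather than on the read path.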

Current Solutions for High-Velocity Data Processing

01 Memory management and optimization techniques

Various techniques for managing and optimizing memory performance in persistent storage systems. These methods focus on improving data access patterns, reducing latency, and enhancing overall system efficiency through advanced memory management algorithms and data structures.

- Memory management and optimization techniques: Various techniques for managing and optimizing memory performance in persistent storage systems. These methods focus on improving data access patterns, reducing latency, and enhancing overall system efficiency through advanced memory management algorithms and data structures.

- Storage interface and controller technologies: Advanced controller architectures and interface technologies designed to bridge the gap between persistent memory and external storage devices. These solutions provide enhanced data transfer rates, improved reliability, and better integration between different storage tiers.

- Data caching and buffering mechanisms: Sophisticated caching strategies and buffering techniques that optimize data flow between persistent memory and external storage systems. These approaches minimize access times and improve overall system performance by intelligently managing data placement and retrieval operations.

- Storage virtualization and abstraction layers: Technologies that create abstraction layers between applications and underlying storage hardware, enabling seamless integration of persistent memory with traditional external storage. These solutions provide unified storage interfaces and improve system scalability and flexibility.

- Performance monitoring and adaptive optimization: Systems and methods for real-time monitoring of storage performance metrics and implementing adaptive optimization strategies. These technologies dynamically adjust system parameters to maintain optimal performance across varying workloads and usage patterns.

02 Storage interface and controller optimization

Technologies for optimizing storage controllers and interfaces to improve data transfer rates and reduce bottlenecks between persistent memory and external storage devices. These solutions enhance communication protocols and data pathways for better performance.

03 Caching and buffering mechanisms

Advanced caching strategies and buffering techniques designed to bridge the performance gap between different storage tiers. These mechanisms optimize data placement and retrieval to maximize system throughput and minimize access delays.

04 Data compression and storage efficiency

Methods for improving storage efficiency through data compression, deduplication, and space optimization techniques. These approaches reduce storage requirements while maintaining or improving access performance in persistent memory systems.

05 Performance monitoring and adaptive optimization

Systems for monitoring storage performance metrics and implementing adaptive optimization strategies. These solutions dynamically adjust system parameters and configurations to maintain optimal performance under varying workload conditions.
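As one illustration of the compression and deduplication approach described under item 04 above, the sketch below stores each distinct block once, keyed by its content hash, and compresses it with `zlib`. The `DedupStore` class and its method names are hypothetical; real systems typically deduplicate fixed or variable-size chunks rather than whole objects.

```python
import hashlib
import zlib


class DedupStore:
    """Toy content-addressed store with compression."""

    def __init__(self):
        self.blocks = {}  # SHA-256 digest -> compressed bytes
        self.names = {}   # object name -> digest

    def put(self, name: str, data: bytes) -> None:
        digest = hashlib.sha256(data).hexdigest()
        if digest not in self.blocks:        # identical content is stored only once
            self.blocks[digest] = zlib.compress(data)
        self.names[name] = digest

    def get(self, name: str) -> bytes:
        return zlib.decompress(self.blocks[self.names[name]])

    def stored_bytes(self) -> int:
        """Physical bytes held after deduplication and compression."""
        return sum(len(b) for b in self.blocks.values())


dedup = DedupStore()
payload = b"x" * 4096
dedup.put("first", payload)
dedup.put("second", payload)  # same content, so no new block is stored
```

The trade-off is extra CPU time on every write and read, which matters more on persistent memory than on slower media because the device itself contributes so little latency.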

Key Players in Persistent Memory and Storage Industry

The persistent memory versus external storage landscape for high-velocity applications represents a rapidly evolving market driven by increasing demands for real-time data processing and ultra-low latency requirements. The industry is transitioning from traditional storage hierarchies toward memory-centric architectures, with the market experiencing significant growth as enterprises seek to eliminate storage bottlenecks in high-performance computing environments. Technology maturity varies considerably across players, with established semiconductor giants like Intel, Samsung Electronics, and KIOXIA leading in persistent memory innovations, while companies such as SunRise Memory and FADU focus on next-generation memory architectures. Traditional storage leaders including Western Digital Technologies and NetApp are adapting their portfolios to bridge conventional and emerging memory technologies. The competitive landscape also features major cloud infrastructure providers like Alibaba Group and technology integrators such as Huawei Technologies, who are implementing these solutions at scale to support data-intensive applications requiring microsecond-level response times.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung offers advanced persistent memory solutions through their Z-NAND and Storage Class Memory (SCM) technologies, combining the benefits of NAND flash with DRAM-like performance characteristics. Their approach focuses on ultra-low latency storage solutions that can serve as both high-speed cache and persistent storage for high-velocity applications. Samsung's persistent memory products feature enhanced endurance, reduced latency compared to traditional SSDs, and support for byte-addressable operations, making them suitable for real-time data processing and in-memory computing workloads that require data persistence.

Strengths: Strong manufacturing capabilities, competitive pricing, good integration with existing storage ecosystems. Weaknesses: Less mature ecosystem compared to Intel's solutions, limited software optimization tools for application developers.

Intel Corp.

Technical Solution: Intel has developed comprehensive persistent memory solutions including Intel Optane DC Persistent Memory, which bridges the gap between DRAM and storage with byte-addressable non-volatile memory. The technology provides near-DRAM performance with storage-class persistence, enabling applications to maintain data across power cycles while achieving sub-microsecond access times. Intel's persistent memory architecture supports both Memory Mode and App Direct Mode, allowing applications to use it either as large-capacity volatile memory or as persistent storage with direct CPU access, eliminating traditional I/O stack overhead for high-velocity applications.

Strengths: Industry-leading persistent memory technology with proven enterprise deployment, excellent performance characteristics. Weaknesses: Higher cost per GB compared to traditional storage, limited capacity scaling compared to external storage solutions.

Core Technologies in Persistent Memory Architecture

Computing method and apparatus with persistent memory

Patent WO2016094003A1

Innovation

- A computing method and apparatus that dynamically allocates regions of persistent memory as either volatile or persistent, allowing for direct access by the CPU and reducing the need for data copying through metadata management, enabling a 2-level memory configuration where persistent memory can serve both volatile and persistent data needs.

Persistent memory storage engine device based on log structure and control method thereof

Patent US20210019257A1 (Active)

Innovation

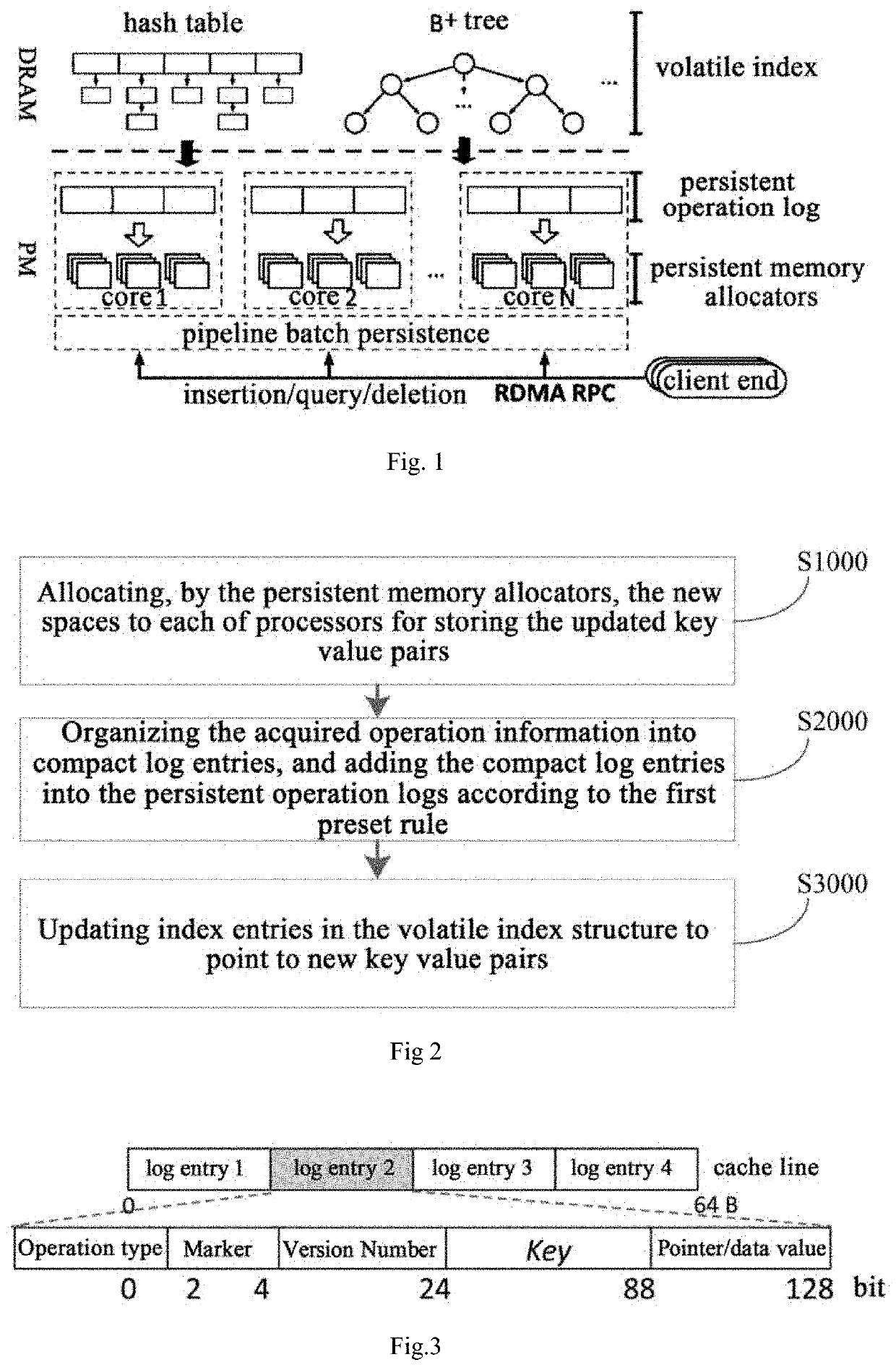

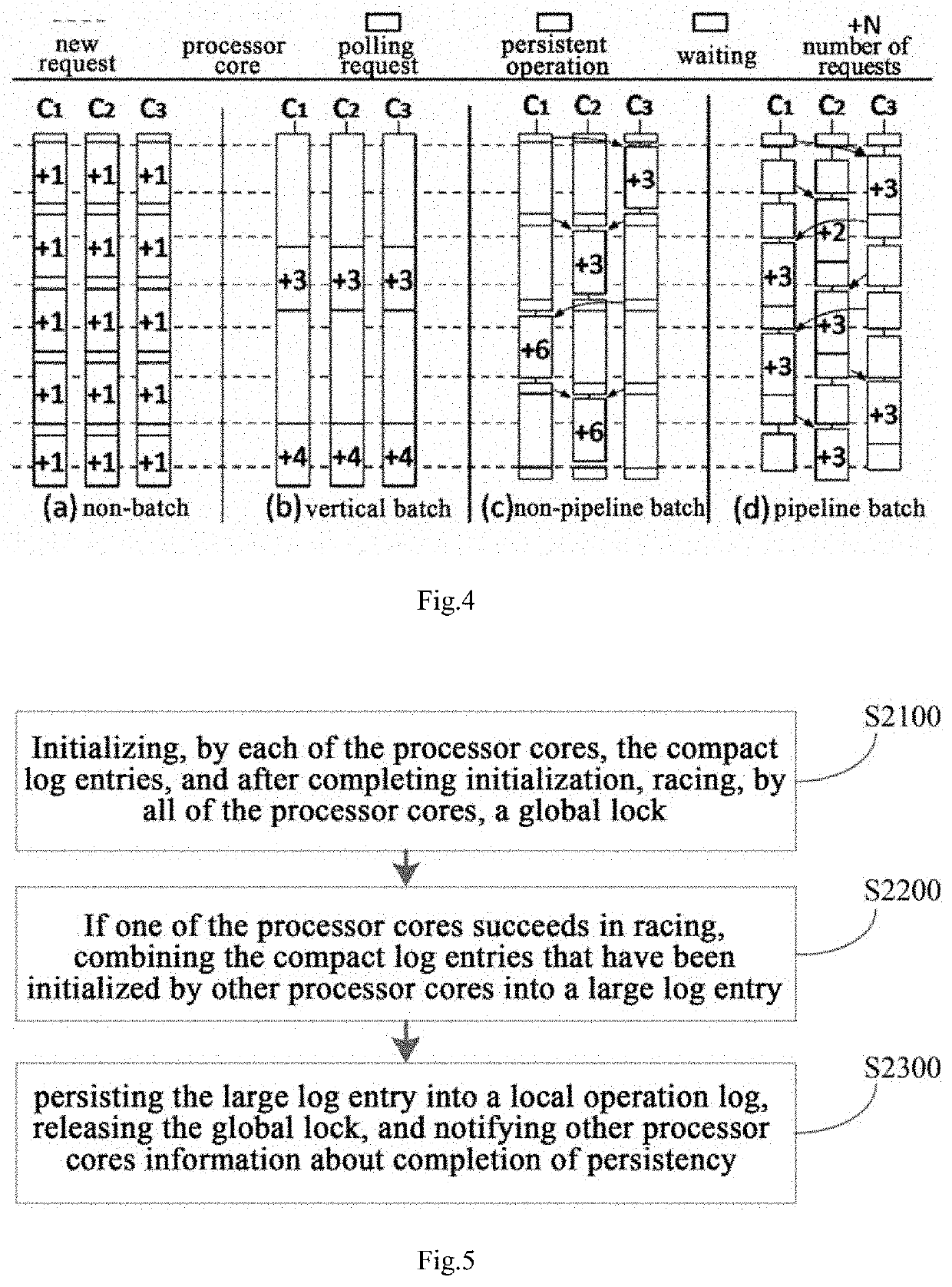

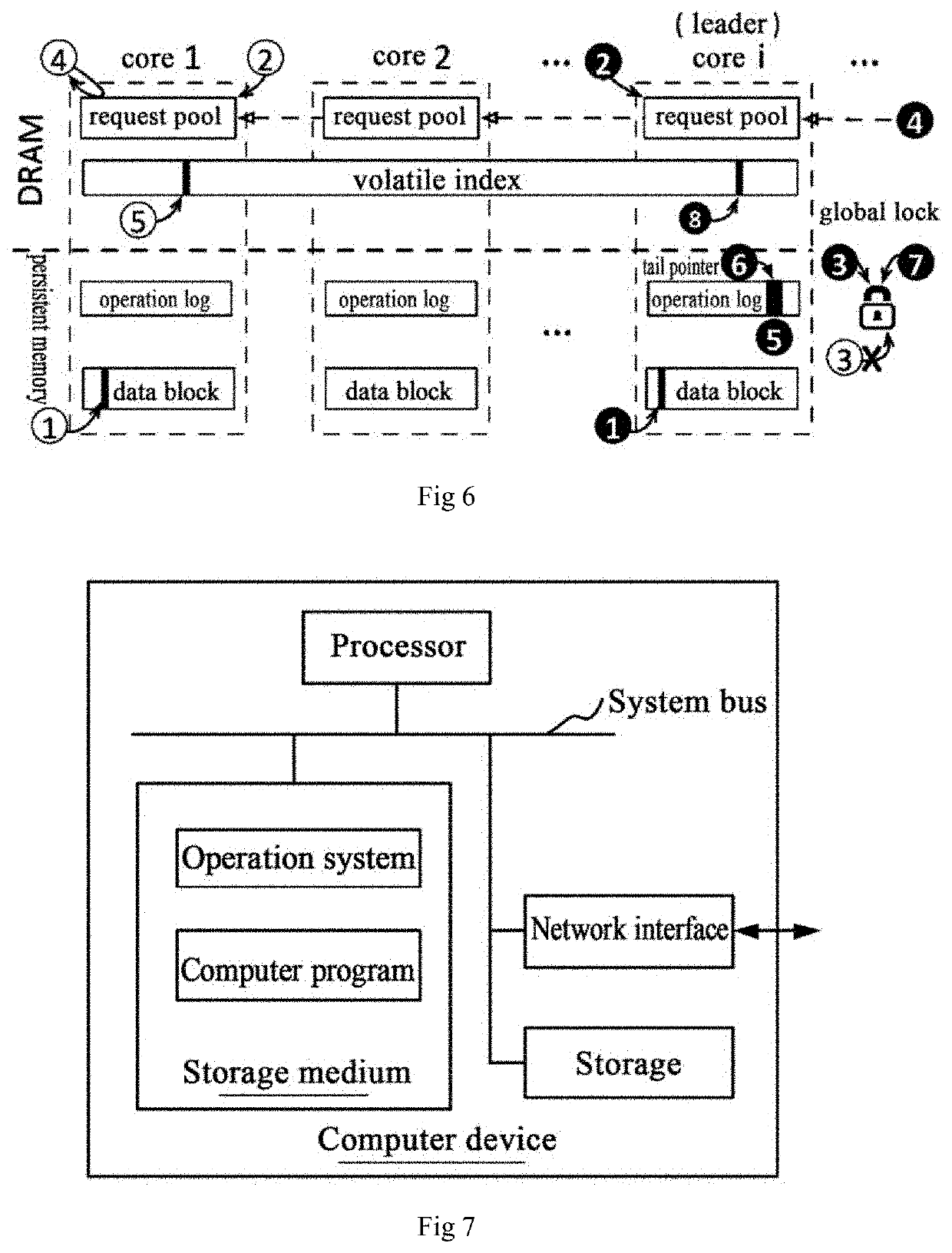

- A redesigned log-structured persistent memory key-value storage engine that includes persistent memory allocators, operation logs, and a volatile index structure, utilizing batch persistency and pipeline batch persistence technology to reduce latency while maintaining high system throughput, with global locking and memory region management to synchronize processor cores and optimize memory allocation.
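The general shape of a log-structured key-value engine with a volatile index and batched persistence can be sketched as follows. This is not the patented design: it is single-threaded, uses an ordinary file with `os.fsync` in place of persistent memory, and omits the allocators, pipelining, and locking the patent describes. The record layout, `LogKV`, and `batch_size` are assumptions made for illustration.

```python
import os
import struct
import tempfile

HEADER = struct.Struct("<II")  # key length, value length (hypothetical record layout)


class LogKV:
    """Append-only operation log with a volatile index and batched persistence."""

    def __init__(self, path, batch_size=4):
        self.path = path
        self.batch_size = batch_size
        self.index = {}    # volatile index, rebuilt from the log on open
        self.pending = []  # encoded records waiting for the next batch flush
        self._recover()
        self.log = open(path, "ab")

    def _recover(self):
        if not os.path.exists(self.path):
            return
        with open(self.path, "rb") as f:
            data = f.read()
        pos = 0
        while pos + HEADER.size <= len(data):
            klen, vlen = HEADER.unpack_from(data, pos)
            start = pos + HEADER.size
            end = start + klen + vlen
            if end > len(data):
                break  # incomplete tail record from an interrupted write
            self.index[data[start:start + klen]] = data[start + klen:end]
            pos = end

    def put(self, key, value):
        self.pending.append(HEADER.pack(len(key), len(value)) + key + value)
        self.index[key] = value  # visible immediately, durable only after sync()
        if len(self.pending) >= self.batch_size:
            self.sync()

    def sync(self):
        if self.pending:
            self.log.write(b"".join(self.pending))
            self.log.flush()
            os.fsync(self.log.fileno())  # one durability barrier per batch
            self.pending.clear()

    def get(self, key):
        return self.index.get(key)

    def close(self):
        self.sync()
        self.log.close()


def demo():
    path = os.path.join(tempfile.mkdtemp(), "kv.log")
    kv = LogKV(path, batch_size=2)
    kv.put(b"a", b"1")
    kv.put(b"b", b"2")
    kv.put(b"a", b"3")
    kv.close()
    reopened = LogKV(path)  # the index is rebuilt by replaying the log
    result = (reopened.get(b"a"), reopened.get(b"b"))
    reopened.close()
    return result
```

Batching amortizes the cost of the durability barrier across several operations, at the price of a window in which acknowledged writes are visible but not yet durable.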

Performance Benchmarking and Evaluation Metrics

Performance evaluation of persistent memory versus external storage in high-velocity applications requires comprehensive benchmarking methodologies that capture the nuanced differences between these storage paradigms. Traditional storage benchmarks often fail to adequately represent the unique characteristics of persistent memory technologies, necessitating specialized evaluation frameworks that account for byte-addressability, near-DRAM latencies, and persistence guarantees.

Latency measurements constitute the primary differentiator between persistent memory and external storage solutions. Persistent memory technologies typically exhibit sub-microsecond access times, ranging from 100-300 nanoseconds for read operations, while external storage is one to two orders of magnitude slower: high-performance NVMe SSDs typically respond in tens of microseconds, and millisecond-level latencies appear with hard disk drives or under heavy queuing. Evaluation metrics must capture not only average latency but also tail latencies, particularly the 99th and 99.9th percentiles, which significantly impact application responsiveness in high-velocity scenarios.
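A small example shows why averages alone are not enough. The sketch below uses the nearest-rank percentile definition, which is one of several in common use, and a synthetic sample set in which 1% of requests are slow; the sample values are invented for illustration and are not measurements.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile of a non-empty sample list, for 0 < p <= 100."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def latency_summary(samples_ns):
    """Mean plus the percentiles usually reported for latency-sensitive systems."""
    return {
        "mean": sum(samples_ns) / len(samples_ns),
        "p50": percentile(samples_ns, 50),
        "p99": percentile(samples_ns, 99),
        "p99.9": percentile(samples_ns, 99.9),
    }


# Synthetic data: 990 fast requests at 100 ns and 10 slow ones at 5000 ns.
samples = [100] * 990 + [5000] * 10
summary = latency_summary(samples)
print(summary)
```

Here the median and 99th percentile both report 100 ns, the mean is inflated to 149 ns, and only the 99.9th percentile exposes the 5000 ns outliers.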

Throughput benchmarking requires distinct approaches for each storage type. Persistent memory evaluation focuses on memory bandwidth utilization, typically measured in GB/s, while external storage assessment emphasizes IOPS capabilities. Mixed workload patterns, including random and sequential access patterns with varying block sizes, provide comprehensive performance profiles. Queue depth variations reveal how each technology scales under concurrent access scenarios.
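Bandwidth and IOPS are two views of the same quantity, linked by block size, and queue depth relates to both through Little's law. The helper functions below are a minimal sketch of that arithmetic; the example inputs are round numbers chosen for illustration, not benchmark results.

```python
def bandwidth_gbs(iops: float, block_bytes: int) -> float:
    """Bandwidth in GB/s implied by an IOPS figure at a given block size."""
    return iops * block_bytes / 1e9


def iops_from_queue(queue_depth: int, latency_us: float) -> float:
    """Little's law: sustained IOPS when queue_depth requests are always in flight
    and each completes in latency_us microseconds."""
    return queue_depth * 1e6 / latency_us


# One million 4 KiB operations per second
bw = bandwidth_gbs(1_000_000, 4096)

# 32 outstanding requests at 100 microseconds each
iops = iops_from_queue(32, 100.0)

print(f"{bw:.3f} GB/s, {iops:.0f} IOPS")
```

The second formula also explains why low-latency media need far less concurrency: at a few hundred nanoseconds per access, a single outstanding request already yields millions of operations per second.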

Consistency and durability metrics present unique challenges for persistent memory evaluation. Unlike external storage with well-defined write completion semantics, persistent memory requires assessment of cache flush operations, memory ordering constraints, and persistence domain boundaries. Benchmarks must measure the overhead of ensuring data persistence through mechanisms like cache line flushes and memory barriers.

Application-specific evaluation metrics prove crucial for high-velocity scenarios. Database transaction processing benchmarks, real-time analytics workloads, and streaming data ingestion patterns reveal performance characteristics under realistic usage conditions. Memory allocation and deallocation patterns, garbage collection impacts, and memory fragmentation effects require specialized measurement approaches for persistent memory systems.

Power consumption and thermal characteristics represent increasingly important evaluation dimensions. Persistent memory technologies demonstrate different power profiles compared to external storage, particularly during idle states and under varying workload intensities. Energy efficiency metrics, measured as operations per watt, provide insights into total cost of ownership considerations for large-scale deployments.

Cost-Benefit Analysis for Enterprise Adoption

The cost-benefit analysis for enterprise adoption of persistent memory versus external storage in high-velocity applications reveals significant financial implications that extend beyond initial capital expenditure. Organizations must evaluate both direct and indirect costs while considering long-term operational benefits and strategic advantages.

Initial capital investment represents the most visible cost component. Persistent memory technologies typically command premium pricing compared to traditional external storage solutions, with costs typically 3-5 times higher per gigabyte. However, a per-gigabyte comparison is misleading on its own: total cost of ownership also reflects that persistent memory can remove the need for complex storage area networks, reduce server counts through improved efficiency, and lower licensing costs for storage management software.

Operational expenditure analysis demonstrates where persistent memory delivers substantial value. Power consumption reductions of 40-60% are achievable due to eliminated storage controller overhead and reduced data movement operations. Cooling requirements decrease proportionally, while datacenter space utilization improves through server consolidation. These factors contribute to annual operational savings that can offset higher initial investments within 18-24 months for high-velocity workloads.
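The payback claim reduces to simple arithmetic, sketched below. The dollar figures are hypothetical placeholders chosen only to land inside the 18-24 month range mentioned above; they are not drawn from any deployment.

```python
def payback_months(extra_capex: float, monthly_savings: float) -> float:
    """Months needed for operational savings to cover the additional up-front cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive for a payback to exist")
    return extra_capex / monthly_savings


# Hypothetical inputs: 120,000 extra capital cost, 6,000 saved per month
months = payback_months(120_000, 6_000)
print(f"Payback in {months:.0f} months")
```

A fuller model would discount future savings and include migration and software rework costs, both of which lengthen the payback period.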

Performance-driven cost benefits emerge through application acceleration and infrastructure optimization. Reduced latency enables higher transaction throughput with fewer servers, while improved application response times translate to enhanced user experience and potential revenue increases. Database licensing costs decrease significantly when fewer CPU cores are required to achieve equivalent performance levels.

Risk mitigation costs can favor persistent memory adoption through faster recovery and simplified disaster recovery architectures. Traditional external storage systems require complex backup and replication strategies; persistent memory's durability across power cycles shortens restart and recovery times and can reduce some of these operational burdens and associated costs, although data held in a failed server still needs replication or backup to remain available.

The financial justification strengthens for enterprises processing high-frequency transactions, real-time analytics, or latency-sensitive applications where performance improvements directly correlate with business value creation and competitive advantage.
