Compute Express Link vs InfiniBand: Cost-Effectiveness in Clusters

APR 13, 2026 · 9 MIN READ

CXL vs InfiniBand Technology Background and Objectives

Compute Express Link (CXL) is an open industry-standard, high-speed interconnect designed to maintain cache coherency between CPUs and attached devices. Originally developed by Intel and now governed by the CXL Consortium, whose members include AMD, Arm, and other major technology companies, CXL builds on the PCIe physical layer (PCIe 5.0 for CXL 1.1 and 2.0, PCIe 6.0 for CXL 3.x) and adds three protocols: CXL.io for I/O, CXL.cache for device caching of host memory, and CXL.mem for host access to device-attached memory. The technology addresses the growing demand for heterogeneous computing architectures in which processors, accelerators, and memory resources must work closely together.
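The three protocols combine into the device types defined by the CXL specification: Type 1 devices use CXL.io and CXL.cache, Type 2 devices use all three, and Type 3 devices (such as memory expanders) use CXL.io and CXL.mem. The Python sketch below only encodes that mapping; the `device_type_for` helper is a hypothetical illustration, not part of any CXL software stack.

```python
from enum import Enum


class Protocol(Enum):
    IO = "CXL.io"        # PCIe-based discovery, configuration and DMA
    CACHE = "CXL.cache"  # device coherently caches host memory
    MEM = "CXL.mem"      # host load/store access to device-attached memory


# Protocol mix per CXL device type.
DEVICE_TYPES = {
    1: frozenset({Protocol.IO, Protocol.CACHE}),                # caching accelerator, no exposed memory
    2: frozenset({Protocol.IO, Protocol.CACHE, Protocol.MEM}),  # accelerator with its own memory
    3: frozenset({Protocol.IO, Protocol.MEM}),                  # memory expander / pooled memory
}


def device_type_for(caches_host_memory: bool, exposes_memory: bool) -> int:
    """Map a device's capabilities to a CXL device type (hypothetical helper)."""
    if caches_host_memory and exposes_memory:
        return 2
    if caches_host_memory:
        return 1
    if exposes_memory:
        return 3
    raise ValueError("neither capability: this is an ordinary PCIe device")


# A CXL memory-expansion module exposes memory but does not cache host memory.
print(device_type_for(caches_host_memory=False, exposes_memory=True))  # 3
```

Memory-expansion modules, the most common CXL product class today, are Type 3 devices, which is why CXL.mem dominates cost discussions about memory pooling.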

InfiniBand, established over two decades ago, has evolved into a mature high-performance computing interconnect standard widely adopted in enterprise data centers and supercomputing environments. Originally developed by the InfiniBand Trade Association, this technology provides low-latency, high-bandwidth communication capabilities essential for distributed computing applications. InfiniBand's architecture supports advanced features including remote direct memory access (RDMA), hardware-based transport protocols, and sophisticated quality of service mechanisms.

The fundamental distinction between these technologies lies in their architectural approaches and target applications. CXL is a cache-coherent interconnect focused primarily on processor-to-device communication within individual servers, enabling direct memory sharing between CPUs and accelerators such as GPUs, FPGAs, and specialized processors. This approach reduces the traditional bottlenecks associated with moving data between processing elements and across memory hierarchies.

InfiniBand functions as a network fabric connecting multiple independent computing nodes, facilitating high-speed inter-node communication across distributed systems. Its strength lies in enabling scalable cluster architectures where hundreds or thousands of servers can communicate efficiently through switched fabric topologies.
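Switch and cable counts drive much of the hardware cost of such a fabric. As a minimal sketch, assuming a non-blocking two-level (leaf/spine) fat tree built from identical switches with an even port count (`radix`), the counts follow directly from the switch radix; real InfiniBand deployments may instead use three-level trees, oversubscription, or other topologies, and `two_level_fat_tree` is a hypothetical helper.

```python
import math


def two_level_fat_tree(hosts: int, radix: int) -> dict[str, int]:
    """Size a non-blocking leaf/spine fat tree built from identical switches.

    Each leaf switch splits its ports evenly: radix // 2 host ports and
    radix // 2 uplinks spread across the spine switches.
    """
    if radix % 2:
        raise ValueError("radix must be even")
    down = radix // 2
    leaves = math.ceil(hosts / down)
    if leaves > radix:
        # Beyond `radix` leaves, a leaf's uplinks can no longer reach every
        # spine, so a third switching tier would be required.
        raise ValueError(f"two tiers support at most {radix * down} hosts")
    uplinks = leaves * down
    spines = math.ceil(uplinks / radix)
    return {
        "leaves": leaves,
        "spines": spines,
        "switches": leaves + spines,
        "host_links": hosts,
        "fabric_links": uplinks,
    }


# 40 leaves + 20 spines = 60 switches for 800 hosts with 40-port switches.
print(two_level_fat_tree(hosts=800, radix=40))
```

Because the two-level limit is radix²/2 hosts, higher-radix switches let a cluster stay at two tiers longer, avoiding the extra switches and optics a third tier would add.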

The primary objective of comparing these technologies centers on evaluating their cost-effectiveness in cluster computing environments. This analysis must consider multiple dimensions including initial hardware costs, implementation complexity, performance characteristics, scalability limitations, and long-term operational expenses. Understanding the total cost of ownership becomes crucial as organizations seek to optimize their computing infrastructure investments while meeting demanding performance requirements.

Performance objectives differ significantly between the two approaches. CXL aims to maximize single-node efficiency by reducing memory access bottlenecks and enabling transparent resource sharing between heterogeneous processing elements. InfiniBand targets cluster-wide performance by minimizing inter-node communication latency and maximizing aggregate network throughput across distributed workloads.

The evaluation framework must also consider emerging trends in artificial intelligence, machine learning, and data analytics workloads that increasingly demand both high computational density and efficient data movement capabilities. These applications challenge traditional assumptions about optimal cluster architectures and interconnect technologies.

Market Demand for Cost-Effective Cluster Interconnects

The global high-performance computing market continues to grow strongly, driven by increasing demand from artificial intelligence, machine learning, scientific computing, and data analytics applications. Organizations across industries are seeking interconnect solutions that deliver strong performance while remaining cost-efficient, creating substantial market opportunities for both established and emerging technologies.

Enterprise data centers and cloud service providers represent the largest segment of demand for cost-effective cluster interconnects. These organizations require solutions that can scale efficiently while managing total cost of ownership. The growing adoption of GPU-accelerated computing for AI workloads has intensified the need for high-bandwidth, low-latency interconnects that can handle massive data transfers without creating bottlenecks.

Research institutions and academic organizations constitute another significant market segment, where budget constraints often drive the selection of interconnect technologies. These environments typically prioritize cost-effectiveness over absolute peak performance, making them ideal candidates for evaluating alternative interconnect solutions that offer favorable price-performance ratios.

The emergence of edge computing and distributed AI inference applications has created new market dynamics. Organizations deploying smaller-scale clusters at edge locations require interconnect solutions that provide adequate performance at reduced costs compared to traditional high-end options. This trend has expanded the addressable market for cost-optimized interconnect technologies.

Manufacturing and automotive industries are increasingly adopting HPC clusters for simulation, modeling, and autonomous vehicle development. These sectors demonstrate strong sensitivity to infrastructure costs while requiring reliable, high-performance interconnects. The market demand from these industries emphasizes practical cost-effectiveness over theoretical maximum performance specifications.

Financial services organizations running risk analysis, algorithmic trading, and fraud detection workloads represent another growing market segment. These applications require consistent low-latency performance but operate under strict budget controls, driving demand for interconnect solutions that optimize cost per transaction or calculation.

The market trend toward hybrid and multi-cloud deployments has created additional complexity in interconnect selection. Organizations seek solutions that provide flexibility across different deployment models while maintaining cost predictability. This requirement has increased interest in interconnect technologies that offer competitive performance characteristics at various price points, enabling more strategic infrastructure investments.

Current State of CXL and InfiniBand in HPC Clusters

Compute Express Link has emerged as an important interconnect technology in high-performance computing environments, changing how processors, memory, and accelerators communicate within a node. CXL is supported in production hardware from major vendors, including Intel and AMD server processors and Samsung CXL memory modules, and enables cache-coherent memory sharing across heterogeneous computing elements. CXL 1.1 and 2.0 run over the PCIe 5.0 physical layer at 32 GT/s per lane, and CXL 3.x runs over PCIe 6.0 at 64 GT/s per lane; an x16 link at 64 GT/s provides roughly 128 GB/s of raw bandwidth per direction. Its latency is low enough for memory expansion, although CXL-attached memory remains slower to access than local DRAM.

InfiniBand remains a leading HPC interconnect, particularly in large-scale supercomputing installations, where its proven reliability and performance have established its market position. HDR InfiniBand delivers 200 Gb/s and NDR 400 Gb/s per 4x port, with sub-microsecond latencies that remain among the lowest available in production environments. Major deployments span national laboratories, research institutions, and enterprise HPC centers, and NVIDIA's acquisition of Mellanox has strengthened InfiniBand's position in AI and machine learning clusters.
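The rates quoted above use different units (GT/s per lane for CXL, Gb/s per port for InfiniBand). A small conversion sketch puts them on one scale in GB/s per direction. It assumes 128b/130b encoding for PCIe 5.0 and deliberately ignores PCIe 6.0 FLIT-mode overhead, so the 64 GT/s figure is a raw upper bound; the function names are illustrative only.

```python
def serial_link_gb_per_s(gt_per_s: float, lanes: int, efficiency: float = 1.0) -> float:
    """Per-direction bandwidth in GB/s of a multi-lane serial link.

    gt_per_s is the per-lane transfer rate; efficiency is the fraction of the
    line rate left after encoding (128/130 for PCIe 5.0). PCIe 6.0 FLIT-mode
    overhead is not modelled, so efficiency=1.0 gives a raw figure.
    """
    return gt_per_s * lanes * efficiency / 8


def port_gb_per_s(gbit_per_s: float) -> float:
    """Convert a nominal network port data rate in Gb/s to GB/s."""
    return gbit_per_s / 8


cxl_gen5_x16 = serial_link_gb_per_s(32, 16, 128 / 130)  # CXL 1.1/2.0 over PCIe 5.0
cxl_gen6_x16 = serial_link_gb_per_s(64, 16)              # CXL 3.x over PCIe 6.0, raw
ib_hdr = port_gb_per_s(200)                              # HDR 4x port
ib_ndr = port_gb_per_s(400)                              # NDR 4x port
print(f"{cxl_gen5_x16:.1f} {cxl_gen6_x16:.1f} {ib_hdr:.1f} {ib_ndr:.1f}")
# 63.0 128.0 25.0 50.0
```

The comparison illustrates scale only: the CXL figure describes one host-to-device link inside a server, while the InfiniBand figure describes one network port between servers.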

The current deployment patterns reveal distinct application domains for each technology. CXL primarily addresses memory disaggregation and near-data computing scenarios within individual nodes or small cluster configurations, where its cache-coherent memory model provides significant advantages for memory-bound applications. InfiniBand maintains its stronghold in large-scale distributed computing environments, particularly in MPI-based scientific computing workloads that require high-bandwidth, low-latency inter-node communication across thousands of compute nodes.

Recent market developments indicate growing convergence between these technologies, with several vendors exploring hybrid architectures that leverage CXL for intra-node communication and InfiniBand for inter-node connectivity. This approach aims to optimize both memory hierarchy efficiency and network performance, addressing the diverse requirements of modern HPC workloads that increasingly combine traditional simulation tasks with data-intensive analytics and machine learning components.

Published evaluations suggest that CXL benefits memory-intensive applications whose working sets exceed local DRAM, although CXL-attached memory adds access latency relative to local DRAM, while InfiniBand remains superior in communication-intensive parallel computing. Maturity also differs significantly: InfiniBand is a mature, battle-tested solution, whereas CXL is an emerging technology with substantial growth potential but limited large-scale deployment experience.

Current Interconnect Solutions for Cluster Computing

01 CXL protocol implementation and optimization for cost-effective memory expansion

Compute Express Link (CXL) technology enables cost-effective memory pooling and expansion by providing a high-bandwidth, low-latency interconnect between processors and memory devices. The protocol allows memory to be allocated dynamically and shared across multiple hosts, reducing the need for dedicated memory per device. Implementation techniques focus on optimizing cache-coherency mechanisms and memory access patterns to maximize performance while minimizing hardware costs. The technology supports various memory types and configurations, enabling flexible deployment options that balance performance requirements with budget constraints.

- CXL protocol implementation and optimization: Compute Express Link (CXL) protocol implementations focus on optimizing memory coherency, cache management, and data transfer efficiency between processors and devices. These implementations include protocol layer enhancements, transaction ordering mechanisms, and latency reduction techniques to improve overall system performance and cost-effectiveness in data center environments.

- InfiniBand network architecture and fabric management: InfiniBand network architectures incorporate advanced fabric management techniques, including adaptive routing, congestion control, and quality of service mechanisms. These technologies enable efficient data transmission across high-performance computing clusters while optimizing resource utilization and reducing operational costs through improved bandwidth management and reduced latency.

- Hybrid interconnect solutions combining multiple protocols: Hybrid interconnect architectures integrate multiple communication protocols to leverage the advantages of different technologies. These solutions provide flexible connectivity options, enabling systems to dynamically select the most cost-effective protocol based on workload requirements, distance, bandwidth needs, and power consumption constraints.

- Resource pooling and disaggregation techniques: Resource pooling technologies enable the disaggregation of computing, memory, and storage resources across interconnect fabrics. These approaches improve resource utilization rates, reduce hardware redundancy, and lower total cost of ownership by allowing dynamic allocation of resources based on demand and enabling more efficient scaling of infrastructure.

- Performance monitoring and cost optimization analytics: Performance monitoring systems provide real-time analytics for interconnect technologies, tracking metrics such as bandwidth utilization, latency, error rates, and power consumption. These monitoring solutions enable data-driven decisions for optimizing cost-effectiveness by identifying bottlenecks, predicting failures, and recommending configuration adjustments to maximize return on investment.
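The cost argument behind resource pooling is that a shared pool only has to cover the peak of combined demand, while dedicated provisioning must cover every host's individual peak. A minimal sketch with hypothetical demand traces shows the difference; it assumes the hosts' peaks do not coincide and ignores per-host minimum local DRAM, safety headroom, allocation granularity, and the extra latency of pooled CXL memory.

```python
def dedicated_capacity_gb(demand: list[list[int]]) -> int:
    """Each host is provisioned for its own peak demand."""
    return sum(max(host) for host in demand)


def pooled_capacity_gb(demand: list[list[int]]) -> int:
    """A shared pool only has to cover the peak of the combined demand."""
    return max(sum(interval) for interval in zip(*demand))


# Hypothetical memory demand (GB) for three hosts over four intervals.
demand = [
    [64, 200, 80, 64],
    [180, 60, 70, 90],
    [50, 70, 190, 60],
]
print(dedicated_capacity_gb(demand), pooled_capacity_gb(demand))  # 570 340
```

The saving depends entirely on how well the hosts' peaks are staggered; if all hosts peak together, pooled and dedicated capacity converge.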

02 InfiniBand network architecture and resource utilization optimization

InfiniBand provides high-performance networking with a focus on maximizing resource utilization and reducing total cost of ownership. The architecture supports advanced features such as remote direct memory access (RDMA) and quality of service (QoS) mechanisms that improve efficiency. Network topology optimization and intelligent routing algorithms help reduce the number of required switches and cables, lowering infrastructure costs. Virtualization capabilities enable multiple applications to share the same physical infrastructure, improving cost-effectiveness through better resource utilization and reduced hardware requirements.

03 Hybrid interconnect solutions combining CXL and traditional fabrics

Hybrid approaches integrate CXL with existing interconnect technologies to provide cost-optimized solutions for diverse workloads. These implementations leverage the strengths of different protocols, using CXL for memory-centric operations and other fabrics for network-intensive tasks. The integration strategies include protocol translation layers and unified management interfaces that simplify deployment and reduce operational costs. Resource allocation algorithms dynamically assign workloads to the most cost-effective interconnect based on performance requirements and current utilization patterns.

04 Performance monitoring and cost analysis frameworks for interconnect selection

Comprehensive monitoring and analysis tools enable data-driven decisions for interconnect technology selection based on cost-effectiveness metrics. These frameworks collect performance data including bandwidth utilization, latency measurements, and power consumption to calculate total cost of ownership. Predictive modeling capabilities help forecast future requirements and identify optimal upgrade paths. The systems provide comparative analysis between different interconnect options, considering factors such as initial investment, operational expenses, scalability potential, and application-specific performance characteristics.

05 Power efficiency and thermal management for reduced operational costs

Advanced power management techniques for both CXL and InfiniBand implementations reduce operational expenses through lower energy consumption. Dynamic power scaling adjusts interconnect performance to workload demand, minimizing unnecessary power draw during idle or low-activity periods. Thermal optimization strategies reduce cooling requirements and associated costs through improved heat-dissipation designs and intelligent thermal throttling. Energy-efficient signaling methods and low-power idle states contribute to overall cost savings while maintaining performance adequate for most applications.
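The savings from low-power idle states can be estimated with a residency-weighted average: each power state contributes its power multiplied by the fraction of time spent in it. The sketch below assumes just two states and uses hypothetical wattages, idle residency, and PUE (power usage effectiveness, which folds cooling overhead into the energy figure); none of these values come from vendor data.

```python
HOURS_PER_YEAR = 8760


def average_power_w(active_w: float, idle_w: float, idle_fraction: float) -> float:
    """Residency-weighted average power of one link or port."""
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")
    return active_w * (1.0 - idle_fraction) + idle_w * idle_fraction


def annual_facility_kwh(avg_w: float, ports: int, pue: float) -> float:
    """Yearly energy for identical ports; PUE folds in cooling and facility overhead."""
    return avg_w * ports * HOURS_PER_YEAR * pue / 1000


# Hypothetical figures: 10 W active, 2 W in a low-power idle state, idle 60% of the time.
always_on = annual_facility_kwh(10.0, ports=100, pue=1.5)
with_idle = annual_facility_kwh(average_power_w(10.0, 2.0, 0.6), ports=100, pue=1.5)
print(f"saved {always_on - with_idle:.0f} kWh per year")
```

Real links have several intermediate power states and exit latencies, so measured residency data would replace the single `idle_fraction` assumption.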

Major Players in CXL and InfiniBand Ecosystem

The competitive landscape for Compute Express Link and InfiniBand in cost-effective clusters reflects a market that is both established and evolving rapidly, driven by AI and high-performance computing demand, with annual spending in the billions of dollars. The industry is moving from InfiniBand's traditional dominance toward growing CXL adoption, and the two technologies differ sharply in maturity. InfiniBand is an established, proven interconnect championed by companies such as Mellanox Technologies (now owned by NVIDIA), Intel Corp., and IBM. CXL is a next-generation technology whose development Intel Corp. leads alongside Samsung Electronics, Hewlett Packard Enterprise, and Oracle International Corp. Chinese vendors, including Inspur, Dawning Information Industry, and xFusion Digital Technologies, are accelerating their domestic capabilities. The cost-effectiveness debate pits InfiniBand's proven performance against CXL's promised efficiency and standardization benefits, producing a competitive environment in which established networking vendors compete with emerging semiconductor innovators.

International Business Machines Corp.

Technical Solution: IBM provides comprehensive cluster solutions supporting both CXL and InfiniBand technologies through their Power Systems and x86-based offerings. Their approach focuses on hybrid architectures that can leverage CXL for memory-centric workloads and InfiniBand for network-intensive applications. IBM's OpenPOWER platform integrates CXL-compatible interfaces for accelerator connectivity while maintaining InfiniBand support for inter-node communication. They emphasize total cost of ownership optimization through workload-specific technology selection, offering consulting services to help enterprises choose between CXL and InfiniBand based on specific performance requirements and budget constraints.

Strengths: Comprehensive platform support, strong enterprise consulting capabilities, flexible hybrid solutions. Weaknesses: Higher complexity in hybrid deployments, premium pricing for enterprise solutions.

Intel Corp.

Technical Solution: Intel developed CXL (Compute Express Link) as a next-generation interconnect that provides cache-coherent connectivity between CPUs and accelerators. For coherent memory access, the CXL.cache and CXL.mem protocols offer lower latency than conventional PCIe transactions, while reuse of the PCIe physical layer and existing infrastructure keeps costs down. Intel's CXL implementation supports memory pooling and disaggregation, enabling flexible resource allocation in data centers. CXL 1.1 and 2.0 run at 32 GT/s per lane over PCIe 5.0, and CXL 3.x doubles this to 64 GT/s over PCIe 6.0; all versions carry the CXL.io, CXL.cache, and CXL.mem protocols. Intel positions CXL as a more cost-effective alternative to InfiniBand for certain cluster applications, particularly those requiring memory expansion and accelerator connectivity rather than pure network performance.

Strengths: Industry leadership in CXL development, broad ecosystem support, cost-effective infrastructure reuse. Weaknesses: Limited to shorter distances, newer technology with less deployment experience compared to InfiniBand.

Core Patents in CXL and InfiniBand Technologies

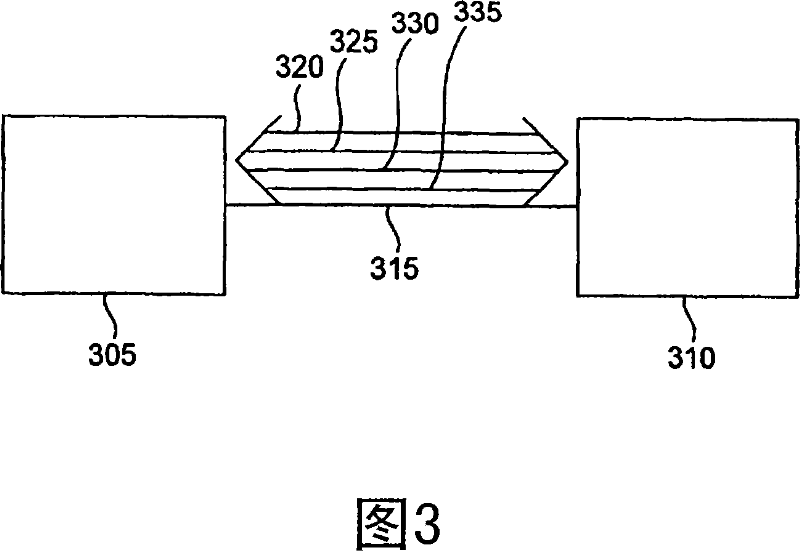

Bandwidth-based memory scheduling method and device, equipment and medium

Patent CN118093181A (pending)

Innovation

- The dynamic memory allocator reads memory environment variables, uses performance counters and memory-latency probing tools to monitor the bandwidth occupancy of local memory, checks whether preset conditions are met based on memory type and bandwidth occupancy, and allocates memory accordingly so that DDR and CXL memory are used reliably and in reasonable proportion.
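The abstract does not state the exact preset conditions, so the following is only a minimal sketch of a bandwidth-based placement policy in the same spirit, not the patented method. It assumes a single hypothetical bandwidth-occupancy threshold (0.8) and takes the occupancy value as given; how performance counters are actually read is out of scope, and `MemoryState` and `choose_tier` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class MemoryState:
    ddr_bw_util: float  # fraction of local DDR bandwidth in use (0..1), e.g. from performance counters
    ddr_free_gb: float
    cxl_free_gb: float


def choose_tier(request_gb: float, state: MemoryState, bw_threshold: float = 0.8) -> str:
    """Pick 'ddr' or 'cxl' for a new allocation (hypothetical policy).

    Local DDR is preferred while its bandwidth occupancy is below the threshold;
    above it, or when DDR is short of capacity, the request spills to CXL memory.
    """
    if state.ddr_free_gb >= request_gb and state.ddr_bw_util < bw_threshold:
        return "ddr"
    if state.cxl_free_gb >= request_gb:
        return "cxl"
    if state.ddr_free_gb >= request_gb:
        return "ddr"  # CXL is full: use busy DDR rather than fail
    raise MemoryError(f"no tier has {request_gb} GB free")


print(choose_tier(4, MemoryState(ddr_bw_util=0.9, ddr_free_gb=64, cxl_free_gb=256)))  # cxl
```

Spilling on bandwidth rather than only on capacity reflects the idea that CXL memory adds bandwidth as well as capacity, at the cost of higher per-access latency.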

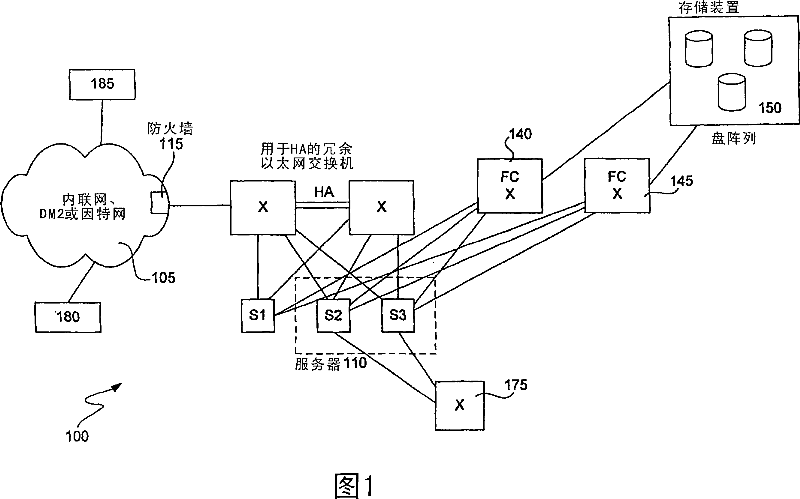

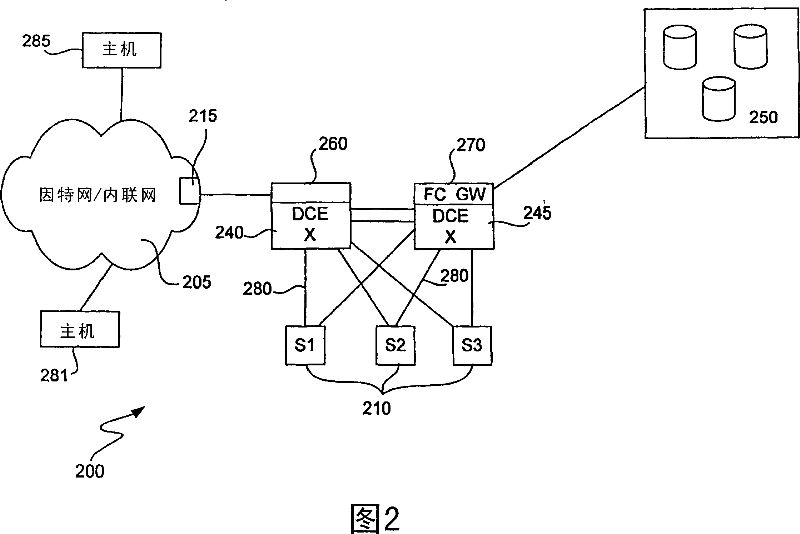

Fibre Channel over Ethernet

Patent CN101044717A (inactive)

Innovation

- Implements a Low Latency Ethernet (LLE) solution in the data center: multiple virtual lanes (VLs) manage network traffic, credit and bandwidth-guarantee mechanisms dynamically adjust the behavior and bandwidth of each VL, and active buffer management combined with explicit congestion notification enables high-bandwidth, low-latency network transmission.
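InfiniBand itself uses credit-based link-level flow control per virtual lane, and the patent applies a similar credit idea to Ethernet. The simplified sender-side model below shows the core rule: a packet may leave on a lane only when the receiver has advertised buffer space (a credit) for that lane, so buffers never overflow and a stalled lane does not block the others. The class and method names are hypothetical, and bandwidth guarantees, arbitration weights, and congestion notification are not modelled.

```python
from collections import deque


class CreditedLink:
    """Sender side of credit-based flow control with one credit pool per virtual lane."""

    def __init__(self, credits_per_lane: dict[int, int]):
        self.credits = dict(credits_per_lane)
        self.queues = {vl: deque() for vl in credits_per_lane}

    def enqueue(self, vl: int, packet: str) -> None:
        self.queues[vl].append(packet)

    def transmit(self) -> list[tuple[int, str]]:
        """Send every queued packet that currently has a credit; return what was sent."""
        sent = []
        for vl, queue in self.queues.items():
            while queue and self.credits[vl] > 0:
                self.credits[vl] -= 1  # one receive buffer consumed
                sent.append((vl, queue.popleft()))
        return sent

    def return_credits(self, vl: int, n: int) -> None:
        """The receiver freed n buffers on this lane."""
        self.credits[vl] += n


link = CreditedLink({0: 2, 1: 1})
for pkt in ("a0", "a1", "a2"):
    link.enqueue(0, pkt)
for pkt in ("b0", "b1"):
    link.enqueue(1, pkt)
print(link.transmit())     # [(0, 'a0'), (0, 'a1'), (1, 'b0')]
link.return_credits(0, 1)  # receiver drained one VL 0 buffer
print(link.transmit())     # [(0, 'a2')]
```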

Total Cost of Ownership Analysis Framework

The Total Cost of Ownership (TCO) analysis framework for evaluating Compute Express Link (CXL) versus InfiniBand in cluster environments requires a comprehensive multi-dimensional approach that captures both direct and indirect costs throughout the technology lifecycle. This framework establishes standardized methodologies for quantifying financial impacts across procurement, deployment, operation, and maintenance phases.

The framework begins with capital expenditure assessment, encompassing hardware acquisition costs for switches, adapters, cables, and associated infrastructure components. For CXL implementations, this includes PCIe-based devices and memory expansion modules, while InfiniBand requires specialized network interface cards, switches, and high-speed copper or fiber optic cabling. The analysis must account for scalability factors, as initial deployment costs may differ significantly from expansion costs due to economies of scale and technology maturation.

Operational expenditure evaluation forms the second pillar, incorporating power consumption patterns, cooling requirements, and facility space utilization. Because CXL reuses existing PCIe infrastructure, it can add less power overhead than InfiniBand's dedicated adapters and switches, although the two are not direct substitutes for every workload. The framework must therefore consider performance-per-watt metrics and thermal management implications, particularly in high-density cluster configurations where cooling costs can represent a substantial ongoing expense.

The framework incorporates lifecycle management costs, including software licensing, maintenance contracts, and technical support requirements. InfiniBand's mature ecosystem often provides comprehensive vendor support packages, while CXL's emerging nature may require additional investment in specialized expertise and training programs. Migration costs from existing infrastructure must also be quantified, considering data transfer requirements and potential downtime impacts.

Risk assessment components address technology obsolescence, vendor lock-in scenarios, and future upgrade pathways. The framework evaluates total economic impact over typical cluster lifecycles of three to five years, incorporating depreciation schedules and residual value considerations. This holistic approach enables organizations to make informed decisions based on comprehensive financial modeling rather than initial acquisition costs alone.
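The framework's capital, operational, lifecycle, and residual-value components can be combined into a single lifecycle figure. The sketch below is one simple way to do so: the discount rate and the residual-value fraction are assumptions added for illustration, tax depreciation schedules are omitted, migration and risk costs would enter as further terms, and all input numbers are hypothetical rather than vendor pricing. `InterconnectOption` and `lifecycle_tco` are illustrative names.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class InterconnectOption:
    name: str
    capex: float                    # adapters, switches, cables, memory modules, installation
    power_kw: float                 # average interconnect power draw
    annual_support: float           # maintenance contracts, licences, training
    residual_fraction: float = 0.1  # value recovered at end of life (hypothetical)


def lifecycle_tco(opt: InterconnectOption, years: int, usd_per_kwh: float,
                  pue: float, discount_rate: float = 0.0) -> float:
    """Capex + discounted yearly opex - discounted residual value."""
    yearly_energy = opt.power_kw * HOURS_PER_YEAR * pue * usd_per_kwh
    yearly_opex = yearly_energy + opt.annual_support
    opex = sum(yearly_opex / (1 + discount_rate) ** y for y in range(1, years + 1))
    residual = opt.capex * opt.residual_fraction / (1 + discount_rate) ** years
    return opt.capex + opex - residual


# Entirely hypothetical inputs, for illustrating the calculation only.
option = InterconnectOption("example", capex=100_000, power_kw=2.0, annual_support=10_000)
print(f"{lifecycle_tco(option, years=5, usd_per_kwh=0.10, pue=1.5):,.0f}")
```

Evaluating a CXL-based and an InfiniBand-based option with the same function over the same three-to-five-year horizon keeps the comparison on equal terms.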

Performance-Cost Trade-offs in Cluster Architecture

The performance-cost trade-offs between Compute Express Link (CXL) and InfiniBand in cluster architectures present distinct optimization pathways. CXL's integration with existing PCIe infrastructure offers near-term cost advantages through reduced hardware complexity and simpler deployment, while InfiniBand's specialized architecture commands premium pricing but offers higher network bandwidth efficiency and more predictable latency.

Performance scaling behaviors differ significantly between these interconnect technologies. CXL exposes attached and pooled memory through ordinary load/store semantics, and CXL 3.x adds coherent memory sharing across hosts, so memory-bound applications can often gain capacity or bandwidth with few or no software modifications. This reduces total cost of ownership by limiting application porting effort and development cycles. InfiniBand, by contrast, delivers its full bandwidth and latency advantages through RDMA-capable communication libraries such as MPI implementations and the verbs interface, which can mean higher initial development investment but potentially superior peak performance for bandwidth-intensive workloads.

Cost-effectiveness also varies with cluster scale. Small to medium-scale deployments typically favor CXL because of lower entry barriers and reduced infrastructure complexity. CXL reuses the PCIe physical layer and form factors, so it builds on the existing PCIe component ecosystem and can deliver incremental performance improvements. It does, however, require CXL-capable processors and devices, so older servers still need a hardware refresh to adopt it. Large-scale enterprise clusters often justify InfiniBand's premium costs through higher aggregate throughput and lower per-transaction processing overhead.
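The scale-dependent preference described above can be illustrated with a toy linear cost model. Each option has a fixed cost (for example switches and fabric management), a per-node cost, and a delivered throughput per node. The sketch below scans node counts to find where the option with the higher fixed cost but higher per-node throughput becomes at least as cost-effective per unit of throughput. This is a hypothetical model: the parameters are placeholders, and real scaling is rarely linear.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InterconnectModel:
    """Toy linear cost model; every parameter is a hypothetical input, not vendor data."""
    fixed_cost: float           # fabric-wide costs such as switches and management
    cost_per_node: float        # adapters, cables and switch ports per node
    throughput_per_node: float  # delivered work per node, in arbitrary units

    def cost_per_throughput(self, nodes: int) -> float:
        total_cost = self.fixed_cost + self.cost_per_node * nodes
        return total_cost / (self.throughput_per_node * nodes)


def crossover_nodes(option_a: InterconnectModel, option_b: InterconnectModel,
                    max_nodes: int = 100_000) -> Optional[int]:
    """Smallest node count at which option_b costs no more per unit of throughput than option_a."""
    for n in range(1, max_nodes + 1):
        if option_b.cost_per_throughput(n) <= option_a.cost_per_throughput(n):
            return n
    return None  # option_b never catches up within max_nodes
```

For example, with option A at zero fixed cost, 10 per node and throughput 1, and option B at fixed cost 1000, 10 per node and throughput 2, the crossover falls at 100 nodes. Below that size the lower-entry-cost option is cheaper per unit of work.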

Power efficiency further complicates the cost-performance comparison. In direct-attached configurations, CXL runs over links already present in standard server architectures and needs no separate network fabric, so it may add less power per node and reduce operating expenses over an extended deployment. InfiniBand's dedicated switching infrastructure and host channel adapters consume additional power. For well-optimized applications, however, the higher throughput they enable can still yield better throughput per watt at the cluster level.
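Power draw translates into operating expense roughly as shown below. This sketch assumes a constant average draw, a flat electricity price, and a facility power usage effectiveness (PUE) multiplier to account for cooling overhead. It ignores leap days and tariff changes. The function names are illustrative, and the wattages would come from the actual adapters, switches, and servers being compared.

```python
HOURS_PER_YEAR = 365 * 24  # ignores leap days


def energy_cost(avg_watts: float, years: float, price_per_kwh: float, pue: float = 1.0) -> float:
    """Electricity cost of equipment drawing avg_watts continuously, scaled by facility PUE."""
    kwh = avg_watts * HOURS_PER_YEAR * years / 1000
    return kwh * pue * price_per_kwh


def throughput_per_watt(throughput: float, avg_watts: float) -> float:
    """Delivered throughput (any unit) per watt of average power draw."""
    if avg_watts <= 0:
        raise ValueError("avg_watts must be positive")
    return throughput / avg_watts
```

Comparing both metrics side by side captures the tension in the paragraph above. A fabric can raise `energy_cost` in absolute terms while still improving `throughput_per_watt` if it delivers proportionally more work.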

The total cost of ownership analysis must incorporate maintenance complexity and operational overhead. CXL's alignment with standard IT infrastructure reduces specialized training requirements and simplifies troubleshooting procedures. InfiniBand deployments demand specialized expertise for network optimization and performance tuning, increasing operational costs but enabling fine-grained performance optimization for mission-critical applications requiring maximum computational efficiency.