Compute Express Link vs PCIe: Bandwidth Efficiency

APR 13, 2026 · 9 MIN READ

CXL vs PCIe Bandwidth Evolution and Technical Goals

The evolution of interconnect technologies has been fundamentally driven by the exponential growth in computational demands and data-intensive applications. Traditional PCIe architecture, while revolutionary in its time, faces inherent limitations in addressing modern computing paradigms that require seamless integration of diverse processing units, memory hierarchies, and accelerators. The emergence of heterogeneous computing environments, artificial intelligence workloads, and memory-centric architectures has exposed critical bottlenecks in conventional interconnect designs.

CXL departs from the traditional PCIe approach by adding cache-coherent, memory-semantic protocols on top of the PCIe physical layer, which enables resource disaggregation and pooling. Unlike PCIe's device-centric model, in which data typically moves through explicit DMA transfers, CXL lets processing elements access shared memory with ordinary load/store operations. This addresses data movement overhead, which has become a primary constraint in modern high-performance computing systems.

The bandwidth efficiency challenge extends beyond raw throughput metrics to encompass latency optimization, protocol overhead reduction, and intelligent resource utilization. Current PCIe implementations suffer from significant protocol overhead and lack native support for memory coherency, resulting in substantial performance penalties when multiple processing units attempt to access shared data structures. These limitations become particularly pronounced in AI/ML workloads where massive datasets require frequent access patterns across heterogeneous computing elements.

The technical objectives driving CXL development focus on achieving near-memory bandwidth efficiency while maintaining compatibility with existing PCIe infrastructure. Key targets include reducing memory access latency by 40-60% compared to traditional PCIe-based solutions, enabling dynamic memory capacity scaling without performance degradation, and supporting coherent memory sharing across multiple compute domains. These goals necessitate fundamental innovations in protocol design, physical layer optimization, and system-level integration strategies.

Future bandwidth evolution trajectories indicate a convergence toward unified memory architectures where the distinction between local and remote memory becomes increasingly transparent. The ultimate technical goal involves creating seamless memory fabrics that can dynamically allocate and reallocate memory resources based on real-time computational demands, effectively eliminating traditional bandwidth bottlenecks through intelligent resource orchestration and advanced caching mechanisms.

Market Demand for High-Bandwidth Interconnect Solutions

The global demand for high-bandwidth interconnect solutions has experienced unprecedented growth driven by the exponential increase in data-intensive applications across multiple sectors. Cloud computing infrastructure, artificial intelligence workloads, and high-performance computing environments require interconnect technologies that can handle massive data throughput with minimal latency. Traditional PCIe architectures, while reliable, are increasingly challenged by bandwidth limitations that constrain system performance in modern computing scenarios.

Data centers represent the largest market segment driving interconnect solution demand. The proliferation of GPU-accelerated computing for machine learning and AI inference has created bottlenecks where PCIe bandwidth becomes the limiting factor in system performance. Memory-intensive applications, including real-time analytics and large-scale database operations, require faster data movement between processors, memory, and storage subsystems than current PCIe generations can efficiently provide.

Enterprise computing environments are experiencing similar bandwidth pressures as workloads become more distributed and data-dependent. Virtualization technologies, containerized applications, and hybrid cloud architectures demand interconnect solutions that can maintain consistent performance across diverse computing resources. The growing adoption of NVMe storage arrays and high-speed networking equipment further amplifies the need for more efficient bandwidth utilization.

Emerging technologies such as autonomous vehicles, edge computing, and Internet of Things deployments are creating new market segments with specific bandwidth efficiency requirements. These applications often operate under power and space constraints while requiring deterministic performance characteristics that traditional interconnect solutions struggle to deliver consistently.

The semiconductor industry's transition toward chiplet architectures and disaggregated computing models has intensified focus on interconnect efficiency. System designers increasingly prioritize solutions that maximize effective bandwidth utilization rather than simply providing higher theoretical throughput numbers. This shift reflects growing awareness that bandwidth efficiency directly impacts total cost of ownership through reduced power consumption, improved system responsiveness, and enhanced scalability.

Market research indicates strong preference for interconnect technologies that offer backward compatibility while delivering measurable performance improvements in real-world applications. Organizations seek solutions that can demonstrate clear return on investment through reduced system complexity, improved resource utilization, and enhanced application performance across diverse workload types.

Current CXL and PCIe Bandwidth Efficiency Limitations

Current CXL and PCIe bandwidth efficiency faces several fundamental limitations that constrain system performance across diverse computing environments. Traditional PCIe architectures encounter significant bottlenecks when handling high-throughput workloads, particularly in data-intensive applications requiring sustained memory access patterns. The protocol overhead inherent in PCIe transactions creates substantial inefficiencies, with packet headers and acknowledgment mechanisms consuming valuable bandwidth that could otherwise be utilized for actual data transfer.
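
The effect of per-packet overhead on usable bandwidth can be illustrated with a short calculation. This is a minimal sketch, not a model of any specific PCIe generation: the default of 24 overhead bytes per packet (framing, header, and link CRC) is an assumption, and acknowledgment and flow-control traffic is not modeled.

```python
def payload_efficiency(payload_bytes: int, overhead_bytes: int = 24) -> float:
    """Fraction of bytes on the wire that carry payload for one packet.

    overhead_bytes is an assumed per-packet cost (framing + header + CRC);
    the real figure depends on the PCIe generation and header format.
    """
    if payload_bytes <= 0 or overhead_bytes < 0:
        raise ValueError("payload must be positive and overhead non-negative")
    return payload_bytes / (payload_bytes + overhead_bytes)


# Small payloads pay proportionally more for the fixed per-packet overhead.
for size in (64, 256, 512):
    print(f"{size:4d} B payload -> {payload_efficiency(size):.1%} of wire bytes")
```

Under these assumptions, efficiency rises with payload size, which is why small transfers are penalized most by protocol overhead.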

Memory coherency is another critical limitation. PCIe has no native coherency, so software must keep shared data consistent, while CXL's hardware coherency protocols add latency and consume bandwidth when multiple processors access shared memory simultaneously. The problem is most visible in multi-socket server configurations where memory accesses span different NUMA domains, creating additional overhead for maintaining data consistency.

Latency accumulation poses significant constraints on bandwidth efficiency, especially in scenarios requiring frequent small data transfers. The round-trip time for memory requests through CXL or PCIe interfaces can substantially impact overall system throughput, as processors often stall waiting for data retrieval operations to complete. This latency penalty becomes more pronounced when dealing with random access patterns compared to sequential data streaming operations.

Power consumption limitations further restrict bandwidth efficiency optimization efforts. Higher data transfer rates typically require increased power consumption, creating thermal constraints that force systems to throttle performance to maintain operational stability. This power-performance trade-off becomes particularly challenging in dense server environments where cooling capacity limits the sustainable bandwidth levels.

Queue depth limitations in current implementations prevent optimal utilization of available bandwidth capacity. Many existing systems cannot effectively pipeline sufficient numbers of concurrent requests to fully saturate the available interface bandwidth, resulting in underutilized communication channels and reduced overall system efficiency.
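
The relationship between latency, queue depth, and achievable bandwidth follows Little's law: the data in flight equals bandwidth multiplied by latency. The sketch below is illustrative only; the function names and the example figures (64 GB/s link, 200 ns round trip, 64-byte requests) are assumptions, not measurements. It uses decimal units, where 1 GB/s equals 1 byte per nanosecond.

```python
import math


def requests_needed(link_gb_s: float, latency_ns: float, request_bytes: int) -> int:
    """Outstanding requests required to keep the link full (Little's law)."""
    # 1 GB/s == 1 byte/ns, so link_gb_s * latency_ns is the bytes in flight.
    return math.ceil(link_gb_s * latency_ns / request_bytes)


def achievable_gb_s(queue_depth: int, latency_ns: float,
                    request_bytes: int, link_gb_s: float) -> float:
    """Bandwidth reachable with a fixed queue depth, capped at link capacity."""
    return min(link_gb_s, queue_depth * request_bytes / latency_ns)


# Assumed example figures, chosen only to show the shape of the trade-off.
print(requests_needed(64, 200, 64))      # requests needed to saturate the link
print(achievable_gb_s(32, 200, 64, 64))  # bandwidth with only 32 in flight
```

With these assumed numbers, a queue depth of 32 leaves most of the link idle, which is the underutilization the paragraph above describes.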

Protocol translation overhead between different interface standards creates additional efficiency barriers. When systems must bridge between CXL and PCIe domains, the translation processes introduce latency penalties and consume processing resources that could otherwise contribute to productive workload execution, ultimately limiting the achievable bandwidth efficiency across heterogeneous computing platforms.

Existing Bandwidth Optimization Solutions

01 CXL protocol optimization and bandwidth management

Technologies for optimizing Compute Express Link protocol implementation to improve bandwidth efficiency through enhanced memory coherency protocols, cache management, and data transfer mechanisms. These solutions focus on reducing latency and maximizing throughput by implementing advanced scheduling algorithms and traffic prioritization schemes that coordinate CXL devices with host processors and make more efficient use of the bandwidth between processors and memory devices.

02 PCIe lane configuration and dynamic bandwidth allocation

Methods for dynamically configuring PCIe lanes and allocating bandwidth based on real-time system requirements. These approaches monitor traffic patterns and apply adaptive link width adjustment, intelligent lane switching, and quality-of-service mechanisms that prioritize critical data transfers. The techniques let systems scale bandwidth allocation with workload demands while maintaining performance across all connected devices.

03 Hybrid CXL-PCIe interconnect architectures

Integrated architectures that combine CXL and PCIe interfaces to maximize overall system bandwidth efficiency across different workload types. These designs implement intelligent switching between protocols, shared physical layer resources, and unified controllers that manage data flow between protocol domains. The solutions provide backward compatibility while improving performance through protocol-specific optimizations and resource sharing strategies.

04 Bandwidth monitoring and performance analytics

Systems and methods for real-time monitoring of bandwidth utilization across CXL and PCIe links, including performance measurement tools and analytics frameworks. These solutions collect telemetry data and performance counters, analyze traffic patterns, and predict congestion to guide system configuration. The technologies help identify bottlenecks and apply corrective measures that improve overall link efficiency.

05 Error correction and reliability enhancement for high-speed links

Techniques for improving data integrity and reliability in high-bandwidth CXL and PCIe connections through advanced error correction codes, retry mechanisms, and signal integrity optimization. These methods reduce the bandwidth overhead of retransmission and error recovery while maintaining data accuracy. The approaches include forward error correction, pre-emphasis, and adaptive equalization techniques that sustain efficient bandwidth utilization even under challenging signal conditions.
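
The bandwidth monitoring approach described above can be sketched as a utilization calculation over two counter readings. This is a hedged illustration: `CounterSample` and `link_utilization` are hypothetical names, the byte counter stands in for whatever telemetry a given platform exposes, and counter wraparound is not handled.

```python
from dataclasses import dataclass


@dataclass
class CounterSample:
    """One reading of a (hypothetical) cumulative link byte counter."""
    timestamp_s: float
    bytes_transferred: int


def link_utilization(prev: CounterSample, curr: CounterSample,
                     link_capacity_gb_s: float) -> float:
    """Fraction of link capacity used between two samples (decimal GB/s)."""
    elapsed = curr.timestamp_s - prev.timestamp_s
    if elapsed <= 0:
        raise ValueError("samples must be in increasing time order")
    delta_bytes = curr.bytes_transferred - prev.bytes_transferred
    observed_gb_s = delta_bytes / elapsed / 1e9
    return observed_gb_s / link_capacity_gb_s


# Assumed example: 32 GB moved in one second on a 64 GB/s link.
before = CounterSample(timestamp_s=0.0, bytes_transferred=0)
after = CounterSample(timestamp_s=1.0, bytes_transferred=32_000_000_000)
print(f"utilization: {link_utilization(before, after, 64.0):.0%}")
```

A sustained value near 1.0 would point to a saturated link, while a low value under heavy load suggests the bottleneck lies elsewhere, for example in latency or queue depth.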

Key Players in CXL and PCIe Ecosystem

The Compute Express Link (CXL) versus PCIe bandwidth efficiency landscape represents an emerging technology sector in its early adoption phase, with significant growth potential driven by increasing data center demands and AI workloads. The market is experiencing rapid expansion as organizations seek higher bandwidth and memory coherency solutions. Technology maturity varies significantly among key players, with Intel leading CXL development and standardization efforts, while NVIDIA, AMD, and Qualcomm are actively integrating CXL capabilities into their processor architectures. Traditional infrastructure companies like IBM, HPE, and server manufacturers including Inventec and Inspur are developing CXL-enabled systems. Memory specialists such as KIOXIA and storage solution providers are creating CXL-compatible products. The competitive landscape shows established semiconductor giants leveraging their PCIe expertise to transition into CXL technologies, while newer entrants focus on specialized CXL applications, indicating a maturing ecosystem with diverse technological approaches and implementation strategies.

Intel Corp.

Technical Solution: Intel developed CXL as a key technology to enhance memory and accelerator connectivity, providing a cache-coherent interconnect that maintains backward compatibility with PCIe. Its CXL implementation supports multiple device types, including accelerators, memory expanders, and smart NICs, at per-lane signaling rates of up to 64 GT/s and with lower memory access latency than traditional PCIe-based solutions. Intel's approach focuses on seamless integration with existing PCIe infrastructure while adding memory semantics and cache coherency protocols intended to improve data center performance and scalability.

Strengths: Industry leadership in CXL development, strong ecosystem support, excellent PCIe backward compatibility. Weaknesses: Higher implementation complexity, dependency on Intel architecture for optimal performance.

International Business Machines Corp.

Technical Solution: IBM incorporates CXL technology into its enterprise server solutions and Power processor architectures, focusing on bandwidth efficiency improvements for mission-critical applications. Its implementation emphasizes enterprise-grade reliability, scalability, and performance, with CXL-enabled memory expansion and accelerator connectivity. IBM integrates CXL capabilities with its existing high-bandwidth memory architectures to improve system performance for database and analytics workloads. The company's CXL solutions support its hybrid cloud infrastructure requirements while maintaining backward compatibility with existing PCIe-based systems.

Strengths: Enterprise reliability focus, strong system integration capabilities, proven enterprise deployment experience. Weaknesses: Limited consumer market presence, higher costs for specialized enterprise solutions.

Core Innovations in CXL Bandwidth Efficiency

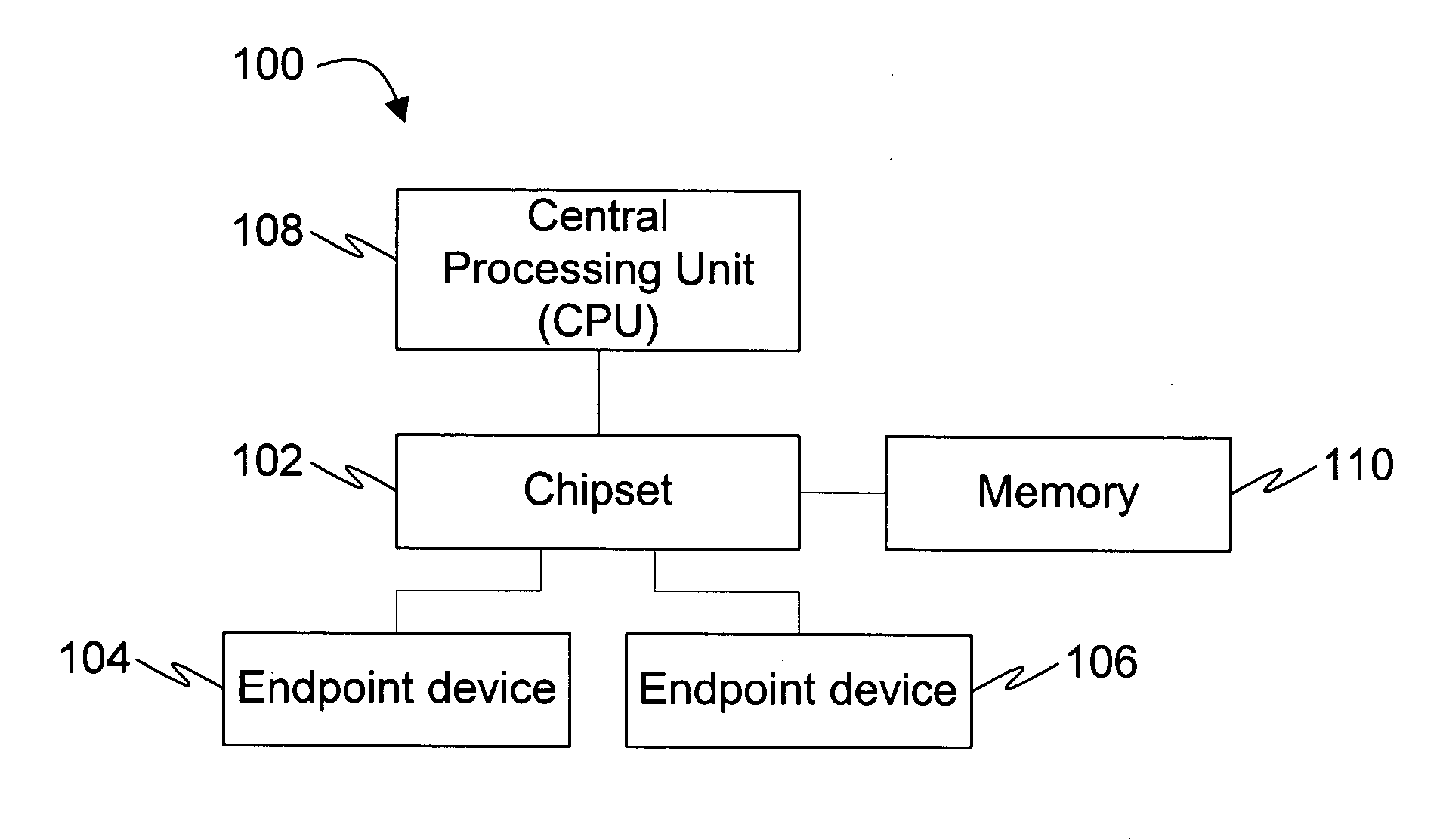

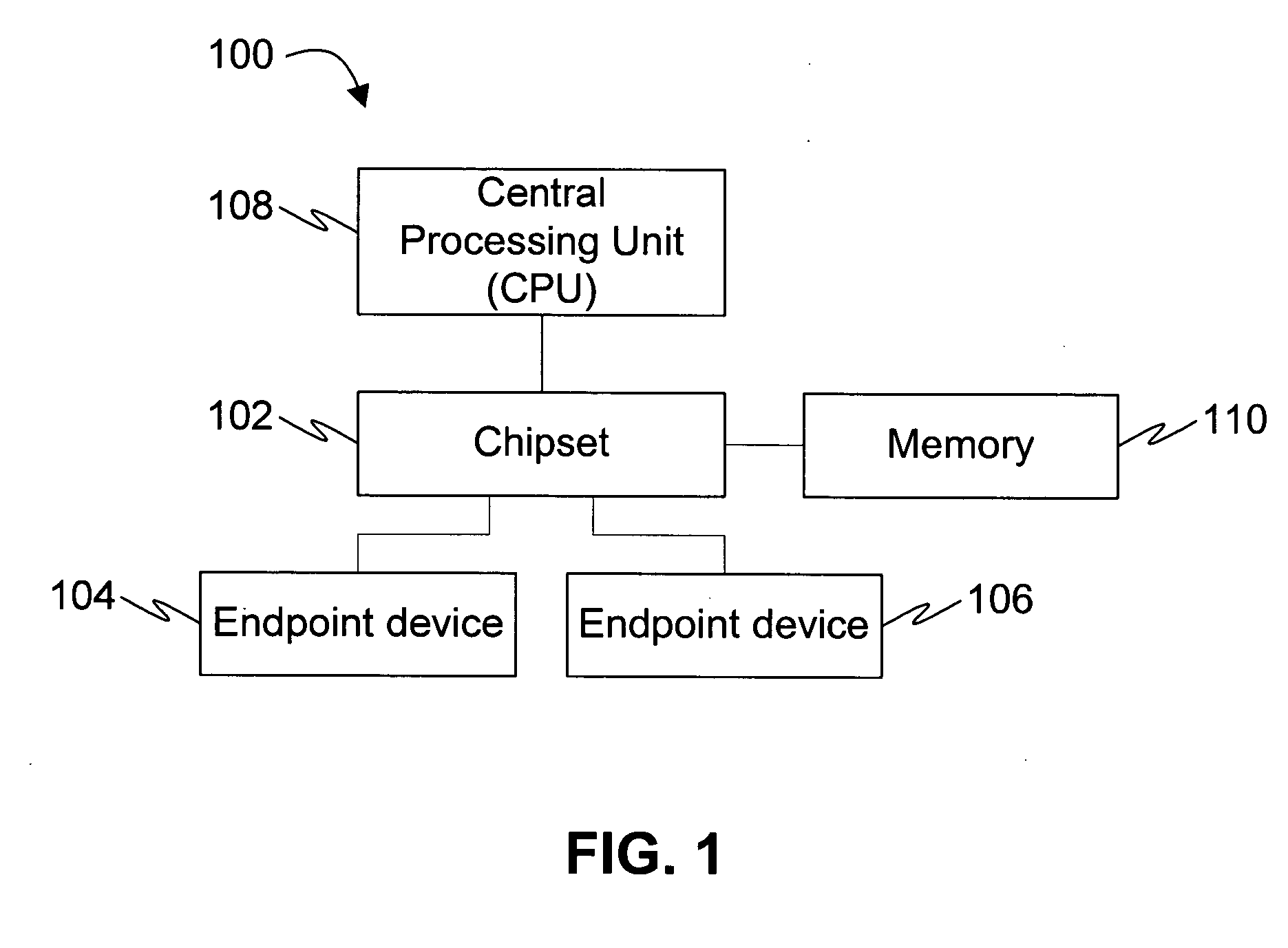

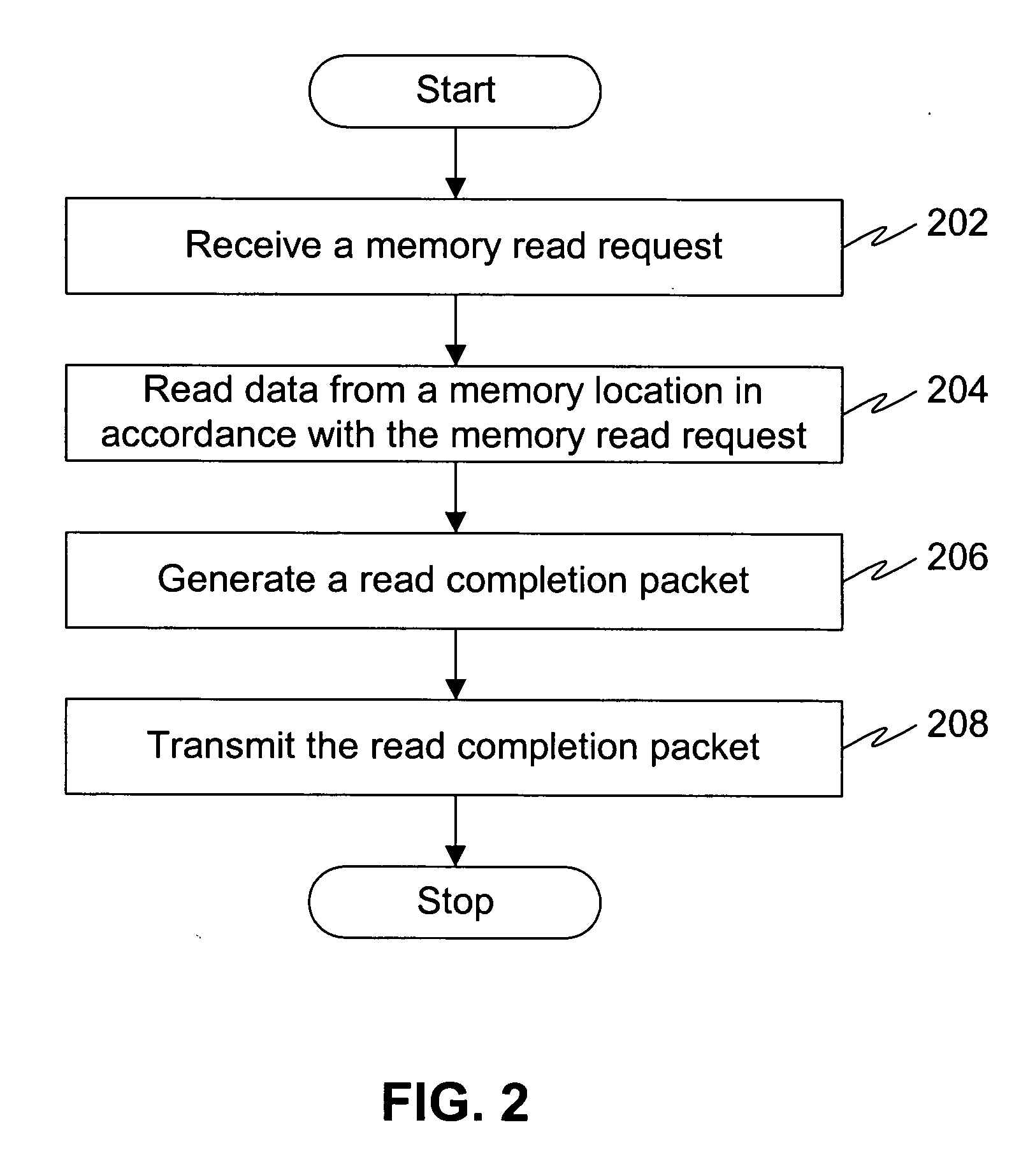

System and method for encoding packet header to enable higher bandwidth efficiency across PCIe links

Patent · Active · US20070130397A1

Innovation

- The method involves generating read completion and memory write request packets with header portions less than 20 bytes, optimizing the Transaction Layer Header (TLH), Data Link Layer Header (DLLH), and Physical Layer Header (PLH) to reduce overhead and improve packet transmission efficiency over PCIe links.

Maximizing bandwidth utilization by selecting appropriate mode of operation for PCIe card

Patent · Pending · US20250139048A1

Innovation

- A computer-implemented method that uses a machine learning model to predict bandwidth utilization of PCIe links, allowing the PCIe card to switch between different modes of operation based on the predicted utilization, thereby optimizing power usage by reducing power consumption during low bandwidth periods and increasing it during high bandwidth periods.
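
The mode-switching idea in the summary above can be sketched as a small control loop. This is not the patent's method: an exponential moving average stands in for the machine learning predictor, and the mode names, smoothing factor, and thresholds are all hypothetical. Two thresholds are used so the mode does not flap when utilization hovers near a single cutoff.

```python
def choose_modes(utilization_samples, alpha=0.5, high=0.6, low=0.3):
    """Return the selected mode after each utilization sample (0.0 to 1.0).

    An exponential moving average is a stand-in predictor (an assumption,
    not the patented model). Hysteresis: switch up at `high`, down at `low`.
    """
    predicted = None
    mode = "low_power"  # hypothetical mode name
    modes = []
    for sample in utilization_samples:
        if predicted is None:
            predicted = sample
        else:
            predicted = alpha * sample + (1 - alpha) * predicted
        if predicted >= high:
            mode = "high_performance"  # hypothetical mode name
        elif predicted <= low:
            mode = "low_power"
        modes.append(mode)
    return modes


print(choose_modes([0.1, 0.9, 0.9, 0.2, 0.1]))
```

In this sketch the card moves to the high-performance mode only after utilization stays high for two samples, and drops back once the smoothed estimate falls below the lower threshold.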

Industry Standards and Protocol Compatibility

The standardization landscape for Compute Express Link and PCIe reflects distinct evolutionary paths in interconnect protocol development. PCIe is governed by the PCI-SIG consortium, which has maintained backward compatibility across generations while incrementally advancing bandwidth. The specification follows a well-defined revision cycle, with each generation roughly doubling per-lane bandwidth over its predecessor while preserving protocol compatibility with previous versions.
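
The generational bandwidth progression can be made concrete with a short calculation from per-lane transfer rates and line encoding. This is a simplified sketch: it covers only encoding overhead, so packet headers, flow control, and the CRC/FEC bytes inside PCIe 6.0 flits are not modeled, and the function name is illustrative.

```python
# Per-lane transfer rate (GT/s) and line-encoding efficiency per generation.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),      # 8b/10b encoding
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
    4: (16.0, 128 / 130),
    5: (32.0, 128 / 130),
    6: (64.0, 1.0),        # PAM4 flit mode; in-flit CRC/FEC overhead not modeled
}


def raw_bandwidth_gb_s(generation: int, lanes: int = 16) -> float:
    """Per-direction bandwidth in GB/s after line encoding only."""
    rate_gt_s, encoding = PCIE_GENERATIONS[generation]
    return rate_gt_s * encoding * lanes / 8  # 8 bits per byte


for gen in sorted(PCIE_GENERATIONS):
    print(f"PCIe {gen}.0 x16: {raw_bandwidth_gb_s(gen):6.1f} GB/s per direction")
```

The PCIe 2.0 to 3.0 step shows why "doubling" refers to bandwidth rather than signaling rate: the rate rose from 5 to 8 GT/s, and the switch from 8b/10b to 128b/130b encoding supplied the rest.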

CXL represents a newer standardization approach, built on PCIe's physical layer but adding new protocols defined by the CXL Consortium. This consortium includes major industry players such as Intel, AMD, Arm, and numerous memory and accelerator manufacturers. The CXL specification defines three protocols: CXL.io, a PCIe-based protocol for device discovery, configuration, and I/O; CXL.cache, which lets a device coherently cache host memory; and CXL.mem, which lets the host access device-attached memory with load/store semantics.

Protocol compatibility with PCIe is central to CXL's design. CXL devices remain backward compatible with PCIe infrastructure because the protocol is negotiated during link training. When a CXL-capable device is attached to a host or slot that supports only PCIe, the link falls back to PCIe operation, so the device still works in existing systems. This compatibility strategy eliminates the need for immediate infrastructure overhauls while enabling gradual adoption of CXL capabilities.
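
The fallback behavior can be sketched as a simple capability intersection. This is a greatly simplified model: real negotiation happens in hardware during link training, and the `negotiate` function and `LinkMode` type are illustrative assumptions rather than any real API. The sketch treats CXL.io as required for CXL mode.

```python
from enum import Enum
from typing import FrozenSet, Iterable, Tuple


class LinkMode(Enum):
    PCIE = "pcie"
    CXL = "cxl"


CXL_PROTOCOLS = frozenset({"CXL.io", "CXL.cache", "CXL.mem"})


def negotiate(host_supports: Iterable[str],
              device_supports: Iterable[str]) -> Tuple[LinkMode, FrozenSet[str]]:
    """Pick the link mode and the CXL protocols both sides support.

    Falls back to plain PCIe when either side lacks CXL.io.
    """
    common = frozenset(host_supports) & frozenset(device_supports) & CXL_PROTOCOLS
    if "CXL.io" not in common:
        return LinkMode.PCIE, frozenset()
    return LinkMode.CXL, common


# A memory expander (CXL.io + CXL.mem) on a CXL host, then on a PCIe-only host.
expander = {"CXL.io", "CXL.mem"}
print(negotiate({"CXL.io", "CXL.cache", "CXL.mem"}, expander))
print(negotiate(set(), expander))
```

The second call shows the compatibility path: with no CXL support on the host side, the same device comes up as an ordinary PCIe device.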

The standardization timeline reflects coordination between the two consortiums. The PCIe 5.0 and 6.0 specifications provide the high-speed physical layer that CXL builds on: CXL 1.x and 2.0 run on the PCIe 5.0 physical layer, and CXL 3.x moves to PCIe 6.0. This relationship lets CXL benefit from PCIe's mature electrical and mechanical standards while adding cache coherency and memory-semantic protocols.

Industry adoption patterns indicate growing ecosystem support for both standards. Major server manufacturers have begun integrating CXL-ready slots in next-generation platforms, while maintaining extensive PCIe support for legacy and general-purpose applications. The standardization roadmaps suggest continued parallel development, with PCIe focusing on raw bandwidth improvements and CXL advancing heterogeneous computing capabilities through enhanced memory and cache coherency protocols.

Power Efficiency Considerations in High-Bandwidth Design

Power efficiency emerges as a critical design parameter when evaluating high-bandwidth interconnect technologies, particularly in the context of CXL versus PCIe implementations. The fundamental challenge lies in achieving maximum data throughput while minimizing power consumption per bit transmitted, a metric that becomes increasingly important as data center operational costs continue to escalate.
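
The power-per-bit metric mentioned above can be computed directly from link power and sustained throughput. The sketch below is illustrative; the function name and the example figures (10 W, 64 GB/s) are assumptions chosen only to show the arithmetic, not measured values for CXL or PCIe.

```python
def energy_per_bit_pj(link_power_watts: float, throughput_gb_s: float) -> float:
    """Energy per transferred bit in picojoules (decimal GB/s throughput)."""
    if throughput_gb_s <= 0:
        raise ValueError("throughput must be positive")
    bits_per_second = throughput_gb_s * 1e9 * 8
    return link_power_watts / bits_per_second * 1e12


# Assumed example: a link drawing 10 W while sustaining 64 GB/s.
print(f"{energy_per_bit_pj(10.0, 64.0):.2f} pJ/bit")
```

Because the metric divides by sustained throughput, a link that draws the same power but delivers less useful data scores worse, which ties power efficiency back to protocol overhead and utilization.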

CXL's power efficiency advantage stems from its optimized protocol stack and reduced overhead compared to traditional PCIe implementations. The coherency protocols in CXL eliminate redundant data transfers and cache invalidations that typically consume additional power in PCIe-based systems. This optimization translates to approximately 15-20% lower power consumption per gigabit of sustained throughput in memory-intensive workloads.

Because CXL runs on the PCIe electrical physical layer, the two share the same signaling and equalization, and differences in power efficiency arise mainly in the link and protocol layers. Adaptive power management adjusts link state based on utilization and signal quality requirements. These mechanisms keep baseline power consumption low during idle periods while scaling efficiently under high-bandwidth demands.

Thermal management considerations become paramount in high-bandwidth designs, as power efficiency directly correlates with heat dissipation requirements. CXL's improved power efficiency reduces cooling infrastructure demands, enabling higher rack densities and lower total cost of ownership. The reduced thermal footprint also allows for more aggressive performance scaling without encountering thermal throttling limitations that commonly affect PCIe implementations.

System-level power efficiency analysis reveals that CXL's cache-coherent memory access patterns reduce CPU power consumption by minimizing memory access latencies and eliminating unnecessary data movement operations. This holistic efficiency improvement extends beyond the interconnect itself, delivering measurable power savings across the entire compute platform.

Advanced power management features in CXL include link-level power states, dynamic voltage and frequency scaling, and intelligent traffic shaping algorithms that optimize power consumption based on workload characteristics. These features enable CXL implementations to achieve superior power efficiency compared to conventional PCIe solutions, particularly in scenarios requiring sustained high-bandwidth operation.
