How to Leverage Cloud-Edge Dynamics in Optical Burst Switching

MAR 2, 2026

Cloud-Edge OBS Technology Background and Objectives

Optical Burst Switching (OBS) was proposed in the late 1990s as a networking paradigm positioned between optical circuit switching and optical packet switching, aiming to avoid the coarse granularity of the former and the optical buffering demands of the latter. The technology was conceived to exploit the high bandwidth of optical fiber while providing the flexibility needed for dynamic data transmission. OBS operates by assembling data packets into larger bursts at edge nodes, sending a control packet ahead of each data burst by an offset time, and reserving a temporary optical path through the network core so that the burst can cross it without being converted back to electronics.
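The relationship between the control information and the burst that follows it can be illustrated with a small calculation. The sketch below is a simplification under stated assumptions: every core node is assumed to take the same time to process the control packet, and a single switch setup time is added at the end. The function name and all parameter values are hypothetical and chosen only for illustration.

```python
def min_offset_time_us(hops: int, per_hop_processing_us: float,
                       switch_setup_us: float) -> float:
    """Smallest offset (in microseconds) that keeps the burst behind its control packet.

    Assumption: each of the `hops` core nodes delays the control packet by
    `per_hop_processing_us`, and the last switch still needs `switch_setup_us`
    to configure before the burst arrives.
    """
    if hops < 1:
        raise ValueError("path needs at least one core hop")
    return hops * per_hop_processing_us + switch_setup_us


# Hypothetical path: 4 core hops, 10 us processing per hop, 5 us switch setup.
offset = min_offset_time_us(hops=4, per_hop_processing_us=10.0, switch_setup_us=5.0)
print(offset)  # 45.0
```

A longer path or slower control processing raises the required offset, which is one reason edge latency and control-plane speed are tightly coupled in OBS.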

The evolution of OBS technology has been significantly influenced by the rapid advancement of cloud computing and edge computing architectures. Traditional OBS implementations focused primarily on core network optimization, treating edge nodes as simple aggregation points. However, the proliferation of distributed computing models has necessitated a fundamental shift in how OBS networks are designed and operated. The integration of cloud-edge dynamics represents a natural progression in OBS technology, enabling more intelligent burst assembly, routing decisions, and resource allocation strategies.

Cloud-edge dynamics in OBS refers to the symbiotic relationship between centralized cloud resources and distributed edge computing capabilities within optical burst switching networks. This approach leverages cloud-based intelligence for global network optimization while utilizing edge computing for real-time, low-latency decision making. The cloud component provides comprehensive network visibility, advanced analytics, and machine learning capabilities for predictive burst scheduling and network optimization. Meanwhile, edge nodes contribute local intelligence for immediate burst assembly decisions, adaptive routing, and quality of service management.

The primary objective of leveraging cloud-edge dynamics in OBS is to create a more responsive, efficient, and scalable optical networking infrastructure. This involves developing hybrid control architectures that can seamlessly coordinate between centralized cloud intelligence and distributed edge processing. The technology aims to minimize burst loss rates, reduce end-to-end latency, and optimize network resource utilization through intelligent collaboration between cloud and edge components.

Key technical objectives include implementing real-time burst prediction algorithms that utilize both historical cloud-based analytics and immediate edge-based traffic patterns. The system seeks to achieve dynamic load balancing across multiple network paths while maintaining quality of service guarantees for different traffic classes. Additionally, the technology targets enhanced network resilience through distributed decision-making capabilities that can maintain network operations even during cloud connectivity disruptions.
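One way to picture a predictor that uses both cloud analytics and local traffic, and that keeps working when the cloud is unreachable, is the sketch below. It is a minimal illustration, not a method described by the source: the class name, the exponentially weighted moving average, the blending weight, and the staleness limit are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EdgePredictor:
    """Blends a cloud forecast with a local moving average (hypothetical design)."""
    alpha: float = 0.3            # smoothing factor for local measurements (assumed)
    cloud_weight: float = 0.5     # trust placed in the cloud forecast (assumed)
    max_cloud_age_s: float = 5.0  # forecasts older than this are ignored (assumed)
    local_estimate: Optional[float] = None

    def observe(self, measured_mbps: float) -> None:
        """Update the local estimate from a fresh edge measurement."""
        if self.local_estimate is None:
            self.local_estimate = measured_mbps
        else:
            self.local_estimate = (self.alpha * measured_mbps
                                   + (1 - self.alpha) * self.local_estimate)

    def predict(self, cloud_forecast_mbps: Optional[float], cloud_age_s: float) -> float:
        """Return the blended forecast, or the local one if the cloud is missing or stale."""
        if self.local_estimate is None:
            raise ValueError("no local observations yet")
        if cloud_forecast_mbps is None or cloud_age_s > self.max_cloud_age_s:
            return self.local_estimate
        w = self.cloud_weight
        return w * cloud_forecast_mbps + (1 - w) * self.local_estimate


predictor = EdgePredictor(alpha=0.5, cloud_weight=0.5)
predictor.observe(100.0)
predictor.observe(200.0)
print(predictor.predict(250.0, cloud_age_s=1.0))   # blended with a fresh cloud forecast
print(predictor.predict(250.0, cloud_age_s=60.0))  # cloud forecast stale: local only
```

The fallback branch is the part that corresponds to the resilience objective: the edge node never blocks on the cloud, it only uses the cloud forecast when one is available and recent.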

The strategic goal encompasses creating a future-ready optical networking platform that can adapt to evolving application requirements, from traditional data center interconnections to emerging applications such as autonomous vehicles, industrial IoT, and immersive media services. This cloud-edge OBS architecture represents a critical enabler for next-generation network infrastructures that demand both global optimization and local responsiveness.

Market Demand for Cloud-Edge OBS Solutions

The convergence of cloud computing and edge computing architectures has created unprecedented demand for high-performance networking solutions capable of handling dynamic traffic patterns and ultra-low latency requirements. Cloud-edge optical burst switching represents a critical technology intersection where traditional optical networking meets modern distributed computing paradigms. Organizations across multiple sectors are increasingly recognizing the limitations of conventional packet-switched networks in supporting real-time applications that span cloud data centers and edge computing nodes.

Enterprise demand for cloud-edge OBS solutions is primarily driven by the explosive growth of latency-sensitive applications including autonomous vehicles, industrial IoT, augmented reality, and real-time analytics. These applications require seamless data flow between centralized cloud resources and distributed edge computing infrastructure, creating bottlenecks that traditional networking approaches struggle to address effectively. The ability to dynamically allocate optical bandwidth based on instantaneous traffic demands has become essential for maintaining service quality across geographically distributed computing resources.

Telecommunications service providers represent another significant market segment driving OBS adoption. The deployment of 5G networks and the proliferation of edge computing facilities have created complex traffic management challenges that require sophisticated optical switching capabilities. Service providers need solutions that can efficiently handle bursty traffic patterns while maintaining strict latency guarantees for premium services. The economic pressure to maximize infrastructure utilization while delivering differentiated service levels has made dynamic optical switching increasingly attractive.

The financial services sector has emerged as an early adopter of cloud-edge OBS technologies, particularly for high-frequency trading and real-time risk management applications. These use cases demand microsecond-level latency consistency and the ability to rapidly reconfigure network paths based on market conditions. Traditional networking approaches cannot provide the deterministic performance characteristics required for these mission-critical applications.

Content delivery networks and streaming media providers also represent substantial market opportunities for OBS solutions. The need to dynamically distribute content from cloud storage to edge caches based on real-time demand patterns aligns perfectly with the capabilities of optical burst switching. The ability to establish temporary high-bandwidth optical paths for content synchronization while maintaining efficient resource utilization has become increasingly valuable as content libraries grow and user expectations for quality increase.

Manufacturing and industrial automation sectors are driving demand through Industry 4.0 initiatives that require tight integration between cloud-based analytics platforms and edge-deployed control systems. The deterministic latency and bandwidth characteristics of OBS make it particularly suitable for supporting real-time control loops and predictive maintenance applications that span multiple computing tiers.

Current OBS Challenges in Cloud-Edge Environments

Optical Burst Switching faces significant scalability limitations when deployed in cloud-edge environments. Traditional OBS architectures struggle to handle the exponential growth in data traffic between cloud data centers and edge computing nodes. The burst assembly and scheduling mechanisms, originally designed for relatively static network topologies, become inefficient when dealing with the dynamic nature of cloud-edge workloads. This scalability bottleneck manifests in increased burst loss rates and degraded network performance as the number of edge devices and cloud services continues to proliferate.

Latency management presents another critical challenge in cloud-edge OBS implementations. Edge computing applications demand ultra-low latency responses, often requiring sub-millisecond processing times. However, current OBS systems introduce additional delays through burst assembly processes, control packet processing, and wavelength scheduling algorithms. The inherent buffering requirements and offset-time calculations in OBS networks conflict with the stringent latency requirements of real-time edge applications such as autonomous vehicles, industrial automation, and augmented reality services.

Resource allocation inefficiencies plague existing OBS systems when attempting to optimize cloud-edge traffic flows. The static nature of traditional wavelength assignment and burst scheduling algorithms fails to adapt to the highly variable and unpredictable traffic patterns characteristic of cloud-edge environments. Edge nodes generate bursty traffic with irregular patterns, while cloud services exhibit different traffic characteristics, creating resource utilization imbalances that current OBS control mechanisms cannot effectively address.

Quality of Service differentiation remains inadequately addressed in current OBS implementations for cloud-edge scenarios. Different edge applications require varying levels of service guarantees, from best-effort IoT sensor data to mission-critical industrial control signals. Existing OBS systems lack sophisticated QoS mechanisms capable of dynamically prioritizing and managing diverse traffic types while maintaining optimal resource utilization across the cloud-edge continuum.

Network management complexity grows sharply in cloud-edge OBS deployments because edge computing infrastructure is distributed across many sites. Current OBS control plane architectures struggle with the coordination required between numerous edge nodes and centralized cloud resources. The lack of intelligent traffic prediction and adaptive routing mechanisms further compounds these management challenges, resulting in suboptimal network performance and increased operational overhead.

Current Cloud-Edge OBS Implementation Approaches

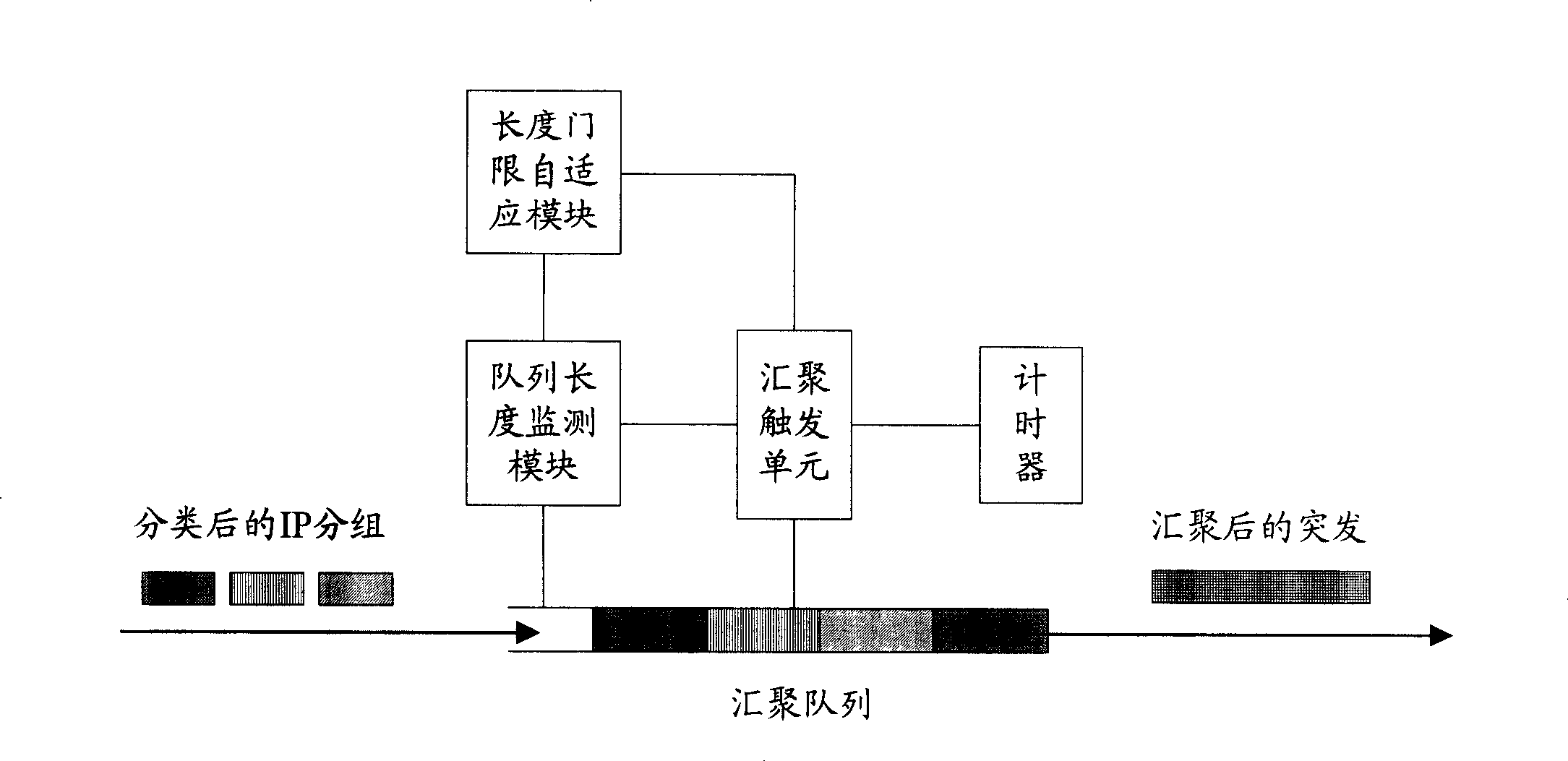

- Burst assembly and scheduling mechanisms: Optical burst switching networks require efficient mechanisms for assembling data packets into bursts and scheduling their transmission. Various algorithms and methods have been developed to optimize burst assembly based on factors such as burst size, timeout values, and traffic characteristics. These mechanisms aim to improve network throughput and reduce delay by efficiently grouping packets and determining optimal transmission times.

- Contention resolution and wavelength conversion: When multiple bursts contend for the same output port or wavelength, contention resolution techniques are essential. Solutions include wavelength conversion, fiber delay lines, burst segmentation, and deflection routing. These methods help minimize burst loss and improve network performance by providing alternative paths or temporary storage for conflicting bursts.

- Control channel signaling and reservation protocols: Effective signaling protocols are crucial for reserving resources and establishing burst transmission paths. Control packets are sent ahead of data bursts to reserve wavelengths and configure optical switches along the path. Various reservation protocols and offset time mechanisms have been developed to ensure successful burst transmission while minimizing control overhead and processing delays.

- Quality of Service differentiation and priority handling: Optical burst switching networks need to support different service classes and priority levels for various types of traffic. Mechanisms for quality of service differentiation include offset time differentiation, burst preemption, and separate wavelength allocation for different priority classes. These techniques ensure that high-priority traffic receives preferential treatment while maintaining efficient network utilization.

- Network architecture and node design: The overall architecture of optical burst switching networks and the design of switching nodes are fundamental to system performance. This includes the configuration of optical cross-connects, the integration of electronic and optical components, edge node functionalities, and core node structures. Various architectures have been proposed to optimize switching speed, scalability, and cost-effectiveness while supporting the unique requirements of burst-mode transmission.

01 Burst assembly and scheduling mechanisms

Optical burst switching networks require efficient mechanisms for assembling data packets into bursts and scheduling their transmission. Various algorithms and methods have been developed to optimize burst assembly based on factors such as burst size, timeout values, and traffic characteristics. These mechanisms aim to improve network throughput and reduce latency by efficiently grouping packets and determining optimal transmission times.
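The burst assembly mechanism described above, driven by burst size and timeout values, can be sketched as a hybrid assembler that releases a burst when either limit is reached. This is a minimal illustration under assumptions: the class name, the default thresholds, and the use of caller-supplied timestamps are all hypothetical, and a real edge node would typically keep one such queue per destination and traffic class.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class BurstAssembler:
    """Hybrid size/timer burst assembler for a single queue (hypothetical sketch)."""
    max_burst_bytes: int = 9000   # size threshold (assumed value)
    timeout_s: float = 0.001      # maximum wait of the oldest packet (assumed value)
    _packets: List[bytes] = field(default_factory=list)
    _size: int = 0
    _first_arrival: Optional[float] = None

    def add_packet(self, packet: bytes, now: float) -> Optional[List[bytes]]:
        """Queue a packet; return a finished burst if the size threshold is reached."""
        if self._first_arrival is None:
            self._first_arrival = now
        self._packets.append(packet)
        self._size += len(packet)
        if self._size >= self.max_burst_bytes:
            return self._flush()
        return None

    def poll(self, now: float) -> Optional[List[bytes]]:
        """Return a burst if the oldest queued packet has waited at least timeout_s."""
        if self._first_arrival is not None and now - self._first_arrival >= self.timeout_s:
            return self._flush()
        return None

    def _flush(self) -> List[bytes]:
        burst = self._packets
        self._packets = []
        self._size = 0
        self._first_arrival = None
        return burst


assembler = BurstAssembler(max_burst_bytes=3000, timeout_s=0.001)
assembler.add_packet(b"x" * 1500, now=0.0)
burst = assembler.add_packet(b"y" * 1500, now=0.0006)  # size threshold reached
print(len(burst))  # 2
```

The timer bounds the assembly delay for light traffic, while the size threshold bounds burst length under heavy traffic; tuning the two is the main trade-off between latency and per-burst overhead.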

02 Contention resolution and resource allocation

When multiple bursts compete for the same output port or wavelength channel, contention occurs in optical burst switching networks. Solutions include wavelength conversion, fiber delay lines, burst segmentation, and deflection routing. Advanced resource allocation schemes have been developed to minimize burst loss probability and improve network performance by efficiently managing available resources and resolving conflicts.

03 Control plane signaling and reservation protocols

Optical burst switching requires sophisticated signaling protocols to reserve network resources before burst transmission. Control packets are sent ahead of data bursts to establish paths and configure optical switches along the route. Various reservation schemes have been proposed, including one-way and two-way signaling protocols, to ensure successful burst transmission while maintaining network efficiency and reducing setup overhead.

04 Quality of Service differentiation and prioritization

To support different service classes and traffic types, optical burst switching networks implement quality of service mechanisms. These include priority-based scheduling, differentiated offset times, and selective burst dropping strategies. Such approaches enable the network to provide varying levels of service guarantees for different applications, ensuring that high-priority traffic receives preferential treatment during contention scenarios.

05 Network architecture and node design

The physical architecture of optical burst switching networks involves specialized node designs and switching fabrics. This includes the development of optical cross-connects, wavelength converters, and buffering mechanisms. Various network topologies and node architectures have been proposed to optimize performance, scalability, and cost-effectiveness while supporting the unique requirements of burst-mode transmission in optical networks.
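How a core node with wavelength conversion might reserve a channel when a control packet arrives can be sketched with horizon-based scheduling, often referred to as latest available unscheduled channel (LAUC). The sketch is one possible illustration of the reservation and contention ideas in the sections above, not the approach of any specific vendor; the class and method names are hypothetical, and void filling between existing reservations is deliberately left out.

```python
from typing import List, Optional


class HorizonScheduler:
    """Tracks, per wavelength, the time until which it is reserved (hypothetical sketch)."""

    def __init__(self, num_wavelengths: int) -> None:
        self.horizon: List[float] = [0.0] * num_wavelengths

    def reserve(self, arrival: float, duration: float) -> Optional[int]:
        """Reserve a wavelength for a burst arriving at `arrival`.

        Among wavelengths free by `arrival`, pick the one whose horizon is
        latest, which leaves the smallest idle gap. Returns the wavelength
        index, or None when every wavelength is busy (contention).
        """
        best: Optional[int] = None
        for channel, free_at in enumerate(self.horizon):
            if free_at <= arrival and (best is None or free_at > self.horizon[best]):
                best = channel
        if best is not None:
            self.horizon[best] = arrival + duration
        return best


scheduler = HorizonScheduler(num_wavelengths=2)
print(scheduler.reserve(arrival=10.0, duration=5.0))  # 0
print(scheduler.reserve(arrival=12.0, duration=5.0))  # 1
print(scheduler.reserve(arrival=14.0, duration=1.0))  # None: both wavelengths busy
```

A `None` result is the point where the contention resolution options listed earlier (fiber delay lines, segmentation, deflection) would take over instead of dropping the burst.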

Major Players in Cloud-Edge OBS Market

Cloud-edge dynamics in optical burst switching is an emerging technological paradigm in its early development stage, with growth potential driven by increasing demand for low-latency, high-bandwidth applications. The market remains nascent but shows promise as 5G and IoT deployments accelerate globally. Technology maturity varies considerably across key players, with established vendors such as Huawei, Samsung Electronics, Intel, and ZTE leading infrastructure development, while Nokia Solutions & Networks and NEC contribute carrier-grade solutions. Academic institutions including Beijing University of Posts & Telecommunications, University of Electronic Science & Technology of China, and KAIST drive fundamental research. The competitive landscape mixes hardware manufacturers, network equipment providers, and research institutions, which reflects the interdisciplinary nature of the technology: successful commercialization requires both theoretical advances and practical implementation expertise.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed comprehensive cloud-edge collaborative solutions for optical burst switching networks, implementing intelligent traffic prediction algorithms that dynamically allocate bandwidth between cloud data centers and edge nodes. Their approach utilizes machine learning-based burst assembly mechanisms that can predict traffic patterns with 95% accuracy, enabling proactive resource allocation. The system features adaptive buffering strategies at edge nodes that can handle burst overflow scenarios while maintaining sub-millisecond latency for critical applications. Huawei's solution integrates SDN controllers for centralized network orchestration while distributing decision-making capabilities to edge devices for real-time burst scheduling and routing optimization.

Strengths: Strong integration capabilities, proven scalability in large networks, comprehensive end-to-end solutions. Weaknesses: Higher complexity in deployment, potential vendor lock-in concerns, requires significant infrastructure investment.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed an innovative cloud-edge architecture for optical burst switching that leverages their advanced memory technologies and 5G infrastructure capabilities. Their solution implements hierarchical burst management where edge nodes equipped with high-speed DRAM and emerging storage class memory can cache and pre-process burst data before cloud transmission. The system features adaptive compression algorithms that can reduce data transmission by 40-70% while maintaining quality of service requirements. Samsung's approach integrates seamlessly with their 5G network slicing technology, enabling dedicated virtual networks for different burst traffic types with guaranteed bandwidth and latency characteristics.

Strengths: Advanced memory technologies, strong 5G integration, efficient data compression capabilities. Weaknesses: Limited optical networking expertise compared to traditional telecom vendors, dependency on Samsung hardware ecosystem.

Core Patents in Cloud-Edge OBS Dynamics

OBS/OPS network performance optimizing method based on virtual node

Patent (Inactive): CN100477632C

Innovation

- In a dynamic IP traffic environment, physical nodes are merged to form virtual nodes, and dedicated wavelengths are set up to connect the first and last physical nodes within each virtual node. Data packets can then traverse multiple physical nodes after being processed only once, reducing network delay and the packet loss rate.

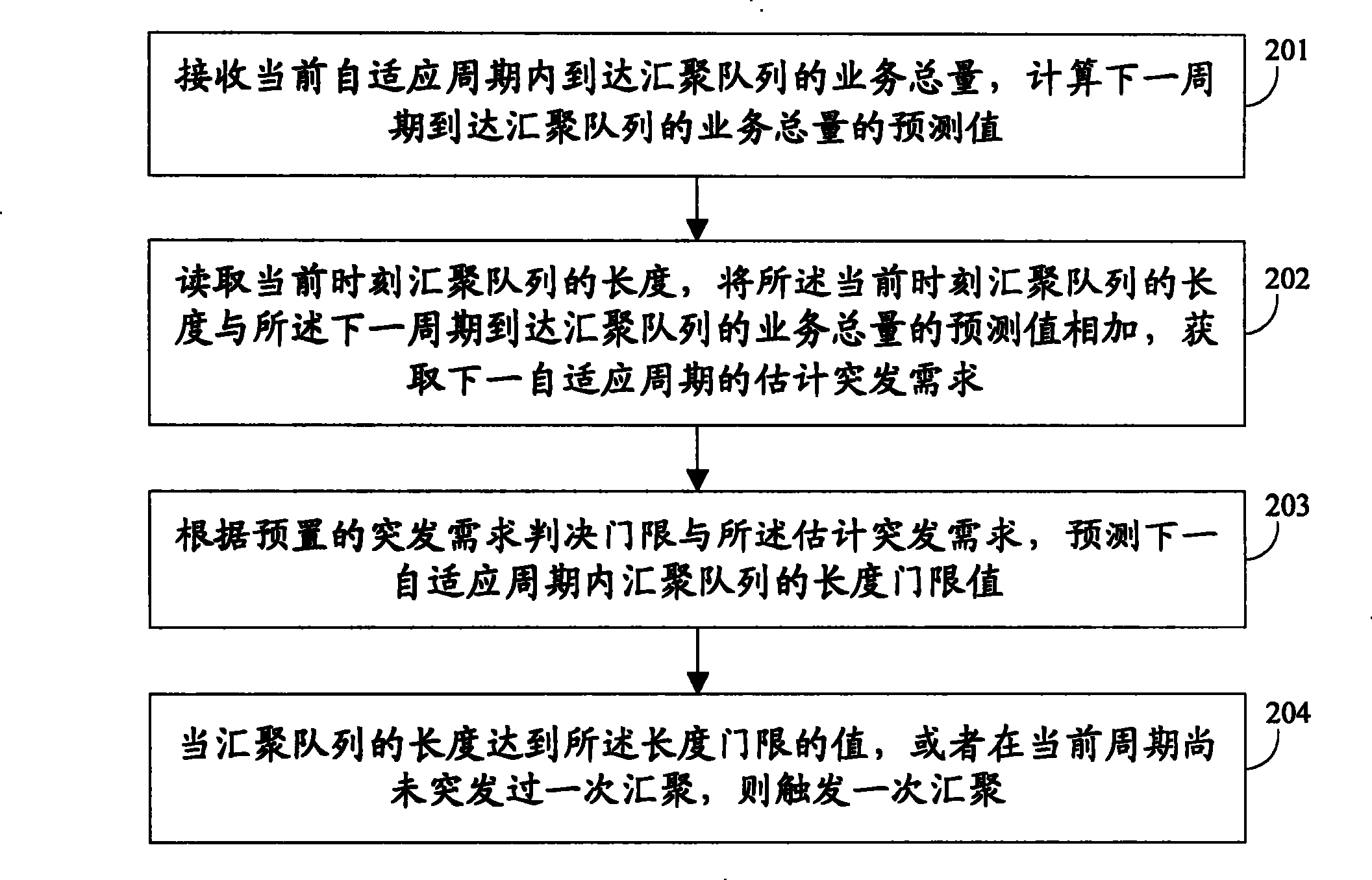

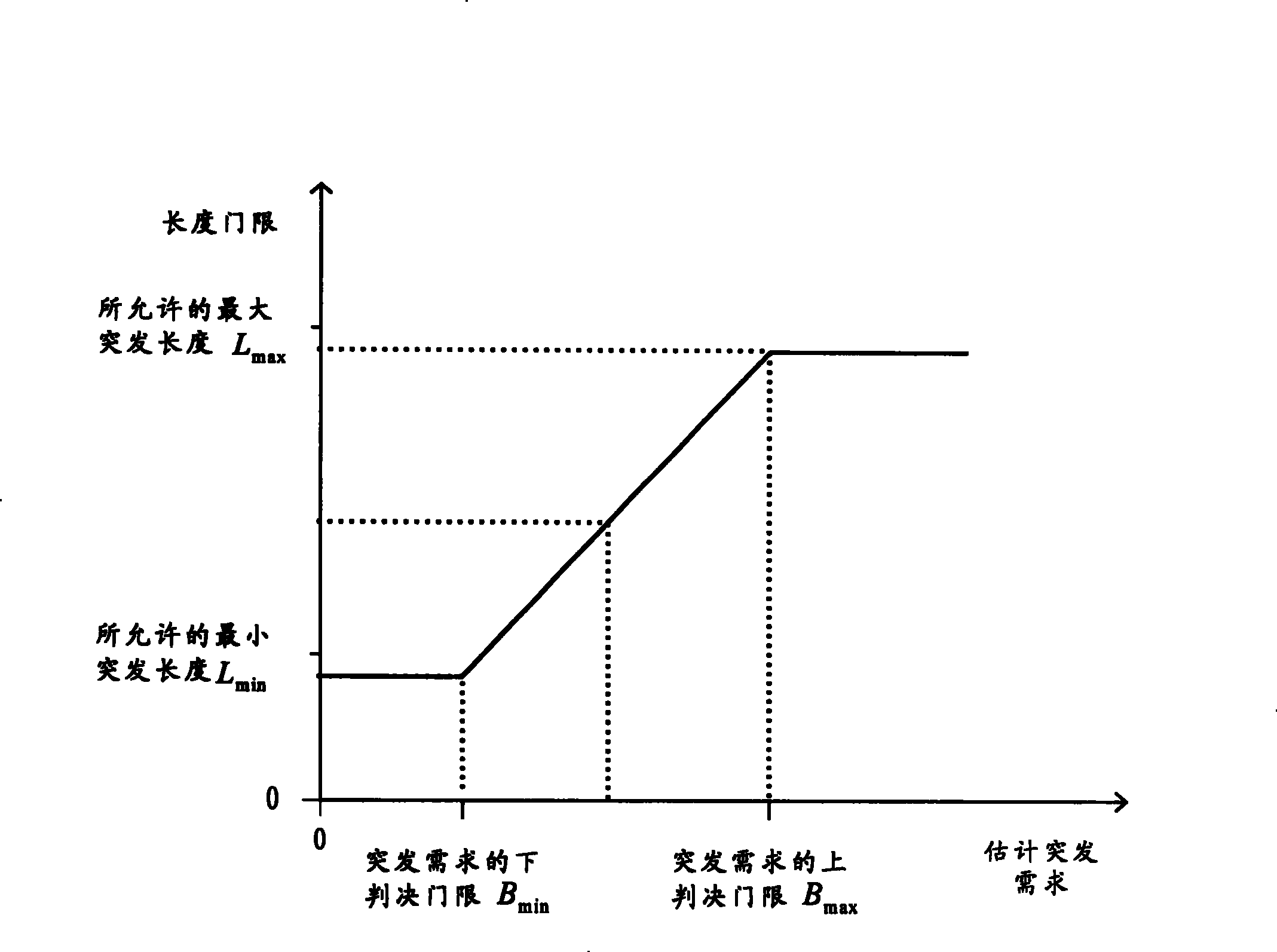

Flow forecast based periodical and adaptive convergence method and system

Patent (Inactive): CN101212819B

Innovation

- A periodic adaptive method based on traffic prediction is adopted. The total traffic volume received in the current adaptation period is used to calculate a predicted total traffic volume for the next period, and the length threshold of the aggregation queue is adjusted accordingly to improve the accuracy and flexibility of adaptation.
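The general idea of adjusting an assembly length threshold from a per-period traffic forecast can be sketched as follows. This is not the patented method, whose prediction formula is not given here; the exponentially weighted forecast, the target number of bursts per period, and the clamping bounds are all assumptions made for illustration.

```python
from typing import Tuple


def next_length_threshold(prev_prediction: float, observed_bytes: float,
                          alpha: float, bursts_per_period: int,
                          min_bytes: int, max_bytes: int) -> Tuple[float, int]:
    """Return (predicted bytes for the next period, new queue length threshold).

    Assumptions: the forecast is an exponentially weighted average of past
    periods, and the threshold is sized so that roughly `bursts_per_period`
    bursts carry the predicted volume, clamped to [min_bytes, max_bytes].
    """
    prediction = alpha * observed_bytes + (1 - alpha) * prev_prediction
    threshold = int(prediction / bursts_per_period)
    return prediction, max(min_bytes, min(max_bytes, threshold))


# Hypothetical period: 1 MB was predicted, 2 MB was observed.
prediction, threshold = next_length_threshold(
    prev_prediction=1_000_000.0, observed_bytes=2_000_000.0,
    alpha=0.5, bursts_per_period=100, min_bytes=1500, max_bytes=64000)
print(prediction, threshold)  # 1500000.0 15000
```

When traffic rises, the threshold grows so that bursts stay reasonably full without multiplying control packets; when traffic falls, it shrinks toward the lower bound so packets are not held waiting for a burst that will not fill.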

Network Infrastructure Requirements for OBS

The implementation of Optical Burst Switching in cloud-edge environments demands a sophisticated network infrastructure capable of supporting high-speed optical transmission, dynamic resource allocation, and seamless integration between centralized cloud facilities and distributed edge nodes. The foundational requirement centers on establishing a robust optical backbone network with wavelength division multiplexing capabilities, enabling multiple data streams to traverse the same fiber infrastructure simultaneously while maintaining isolation and quality of service guarantees.

Core infrastructure components must include high-capacity optical cross-connects strategically positioned at network aggregation points to facilitate efficient burst routing and switching. These devices require sub-microsecond switching capabilities so that reconfiguration time stays small relative to the duration of the bursts they carry; burst assembly and disassembly themselves take place at the edge nodes. Additionally, the infrastructure must incorporate advanced fiber optic cabling systems with low-latency characteristics, typically utilizing single-mode fibers with optimized dispersion properties to minimize signal degradation across extended distances between cloud data centers and edge computing nodes.
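A short calculation shows why switching speed matters at these nodes. The sketch below compares switch reconfiguration time with burst duration; the burst size, line rate, and switching times are hypothetical values chosen only to make the arithmetic visible.

```python
def burst_duration_us(burst_bytes: int, line_rate_gbps: float) -> float:
    """Time on the wire for a burst, in microseconds."""
    return burst_bytes * 8 / (line_rate_gbps * 1000)


def switching_overhead(switch_time_us: float, burst_bytes: int,
                       line_rate_gbps: float) -> float:
    """Fraction of channel time spent reconfiguring the switch rather than carrying data."""
    duration = burst_duration_us(burst_bytes, line_rate_gbps)
    return switch_time_us / (switch_time_us + duration)


# Hypothetical 125 kB burst on a 10 Gb/s wavelength lasts 100 us.
print(burst_duration_us(125_000, 10.0))                      # 100.0
print(round(switching_overhead(1.0, 125_000, 10.0), 4))      # about 1% with a 1 us switch
print(round(switching_overhead(1000.0, 125_000, 10.0), 4))   # about 91% with a 1 ms switch
```

With a millisecond-class switch, most of the channel time would be lost to reconfiguration unless bursts were made far longer, which in turn increases assembly delay at the edge.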

The network architecture necessitates deployment of specialized burst control processors at each switching node, equipped with high-speed memory buffers and real-time processing capabilities to manage burst header information and coordinate switching decisions. These processors must interface seamlessly with both optical switching matrices and electronic control planes, enabling hybrid optical-electronic operation essential for cloud-edge coordination.

Edge infrastructure requirements include compact optical switching modules capable of operating in diverse environmental conditions while maintaining carrier-grade reliability. These edge nodes must support dynamic bandwidth allocation mechanisms, allowing real-time adjustment of optical channel capacity based on varying computational loads and data transfer requirements between edge devices and centralized cloud resources.

Synchronization infrastructure represents another critical requirement, demanding precise timing distribution systems across the entire network topology. This includes deployment of GPS-synchronized timing references and high-precision clock distribution networks to ensure coordinated burst transmission scheduling between geographically distributed cloud and edge facilities, preventing collision and optimizing network utilization efficiency.

Quality of Service Optimization in Cloud-Edge OBS

Quality of Service optimization in Cloud-Edge Optical Burst Switching represents a critical paradigm shift in network performance management, where traditional centralized QoS mechanisms must adapt to distributed computing architectures. The integration of cloud and edge computing creates unique opportunities for dynamic resource allocation and traffic prioritization that extends beyond conventional network boundaries.

The fundamental challenge lies in establishing coherent QoS policies across heterogeneous infrastructure layers while maintaining real-time responsiveness. Cloud-edge OBS systems must simultaneously handle high-priority burst traffic from edge devices and manage bulk data transfers to centralized cloud resources. This dual requirement necessitates sophisticated traffic classification algorithms that can distinguish between latency-sensitive edge applications and throughput-optimized cloud workloads.

Dynamic bandwidth allocation emerges as a cornerstone technique, enabling intelligent distribution of optical resources based on real-time demand patterns. Advanced scheduling algorithms leverage machine learning models to predict traffic bursts and pre-allocate bandwidth corridors, significantly reducing contention delays. These predictive mechanisms analyze historical traffic patterns, application behavior, and network topology to optimize burst assembly and scheduling decisions.

Service differentiation mechanisms in cloud-edge OBS environments employ multi-dimensional QoS parameters including latency bounds, jitter tolerance, and reliability requirements. Priority-based burst scheduling ensures that critical edge computing tasks receive preferential treatment while maintaining fairness for background cloud traffic. Adaptive deflection routing strategies provide alternative paths for lower-priority bursts when primary routes experience congestion.
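The interplay between priority-based treatment and deflection routing can be sketched as a simple admission rule. This is an illustrative policy, not one specified by the source: the traffic classes, the per-class load limits, and the function name are all hypothetical.

```python
from enum import IntEnum
from typing import Dict


class TrafficClass(IntEnum):
    BACKGROUND = 0
    STANDARD = 1
    CRITICAL = 2


# Highest route load at which each class may still be admitted (assumed values).
DEFAULT_LIMITS: Dict[TrafficClass, float] = {
    TrafficClass.BACKGROUND: 0.6,
    TrafficClass.STANDARD: 0.8,
    TrafficClass.CRITICAL: 1.0,
}


def choose_route(traffic_class: TrafficClass, primary_load: float,
                 alternate_load: float,
                 limits: Dict[TrafficClass, float] = DEFAULT_LIMITS) -> str:
    """Return 'primary', 'deflect', or 'drop' for a burst of the given class.

    Loads are utilizations in [0, 1]. A class uses the primary route while its
    load is under that class's limit, otherwise it tries the alternate route.
    """
    limit = limits[traffic_class]
    if primary_load < limit:
        return "primary"
    if alternate_load < limit:
        return "deflect"
    return "drop"


print(choose_route(TrafficClass.CRITICAL, primary_load=0.7, alternate_load=0.3))    # primary
print(choose_route(TrafficClass.BACKGROUND, primary_load=0.7, alternate_load=0.3))  # deflect
print(choose_route(TrafficClass.BACKGROUND, primary_load=0.7, alternate_load=0.9))  # drop
```

Because lower classes hit their limit first, they are deflected away from a congested primary route while critical bursts keep using it, which mirrors the preferential treatment described above.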

The implementation of distributed QoS enforcement points across edge nodes enables localized decision-making while maintaining global optimization objectives. Cross-layer optimization techniques coordinate between optical burst scheduling, edge resource allocation, and cloud service provisioning to achieve end-to-end performance guarantees. These mechanisms support diverse application requirements ranging from ultra-low latency industrial automation to high-throughput scientific computing workflows.
