Improving Energy Efficiency in Data Centers with Active Memory Expansion

MAR 19, 2026 · 9 MIN READ

Active Memory Expansion Technology Background and Objectives

Active Memory Expansion (AME) technology has emerged as a critical innovation in the evolution of data center infrastructure, addressing the growing tension between computational demands and energy efficiency constraints. This technology represents a paradigm shift from traditional static memory allocation models to dynamic, intelligent memory management systems that can adapt to real-time workload requirements while optimizing power consumption.

The historical development of memory management in data centers has been characterized by incremental improvements in memory density and speed, but with limited focus on energy optimization. Traditional approaches relied on over-provisioning memory resources to ensure performance, resulting in significant energy waste during periods of low utilization. The advent of virtualization and cloud computing has intensified these challenges, as data centers must now support highly variable and unpredictable workloads while maintaining strict service level agreements.

Active Memory Expansion technology builds upon decades of research in memory hierarchy optimization, dynamic resource allocation, and power management. The foundational concepts trace back to early work in virtual memory systems and have evolved through contributions in areas such as memory compression, tiered storage architectures, and intelligent caching mechanisms. Recent advances in machine learning and predictive analytics have enabled more sophisticated approaches to memory management that can anticipate workload patterns and proactively adjust resource allocation.

The primary objective of implementing AME technology in data centers is to achieve substantial improvements in energy efficiency while maintaining or enhancing system performance. This involves developing intelligent algorithms that can dynamically expand and contract memory allocation based on real-time demand, thereby reducing idle power consumption during low-utilization periods. The technology aims to optimize the trade-off between memory availability and energy consumption through predictive modeling and adaptive resource management.
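The expand-and-contract behaviour described above can be sketched as a small controller. This is a minimal illustration, not an implementation from any vendor: `MemoryPool`, `wanted_blocks`, `resize`, the 20% headroom, and the shrink margin are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class MemoryPool:
    """Hypothetical pool of equally sized memory blocks that can be powered on or off."""
    total_blocks: int
    active_blocks: int


def wanted_blocks(demand_blocks: int, total_blocks: int, headroom_pct: int = 20) -> int:
    """Blocks needed for current demand plus headroom, clamped to [1, total_blocks]."""
    # Integer ceiling of demand * (1 + headroom) avoids floating-point rounding surprises.
    with_headroom = (demand_blocks * (100 + headroom_pct) + 99) // 100
    return max(1, min(total_blocks, with_headroom))


def resize(pool: MemoryPool, demand_blocks: int, shrink_margin: int = 2) -> int:
    """Expand immediately when demand rises; contract only when the surplus is large."""
    target = wanted_blocks(demand_blocks, pool.total_blocks)
    if target > pool.active_blocks:
        pool.active_blocks = target          # never starve the workload
    elif pool.active_blocks - target >= shrink_margin:
        pool.active_blocks = target          # hysteresis: ignore small dips in demand
    return pool.active_blocks


if __name__ == "__main__":
    pool = MemoryPool(total_blocks=16, active_blocks=8)
    for demand in (10, 9, 4, 100):
        print(f"demand={demand:3d} -> active={resize(pool, demand)}")
```

The asymmetry is deliberate: growing at once protects performance, while the shrink margin keeps the pool from oscillating when demand hovers around a boundary.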

Secondary objectives include improving overall system reliability through better resource utilization, reducing operational costs associated with energy consumption, and enabling more sustainable data center operations. The technology also seeks to provide greater flexibility in workload management, allowing data centers to handle peak demands more efficiently without requiring permanent infrastructure expansion.

The ultimate goal is to establish AME as a foundational technology for next-generation data centers, enabling a new class of energy-aware computing infrastructure that can automatically balance performance requirements with environmental sustainability objectives while reducing total cost of ownership.

Market Demand for Energy-Efficient Data Center Solutions

The global data center industry is experiencing unprecedented growth driven by digital transformation, cloud computing adoption, and the exponential increase in data generation. This surge has created urgent market demand for energy-efficient solutions: by commonly cited estimates, data centers consume roughly one to three percent of global electricity. Organizations are increasingly prioritizing sustainability initiatives while also seeking to reduce operational expenditures, making energy efficiency a business imperative rather than merely an environmental consideration.

Enterprise customers are demanding comprehensive energy optimization solutions that can deliver measurable reductions in power consumption without compromising performance or reliability. The market shows particularly strong demand from hyperscale cloud providers, colocation facilities, and enterprise data centers facing rising electricity costs and regulatory pressure to meet carbon neutrality goals. Active memory expansion technologies are gaining traction as they address the growing memory wall problem while potentially reducing overall system power consumption through improved resource utilization.

Financial drivers are compelling organizations to invest in energy-efficient infrastructure as electricity costs represent a significant portion of total cost of ownership. The market demonstrates willingness to adopt innovative memory technologies that can optimize workload distribution and reduce the need for energy-intensive storage tier access. Companies are specifically seeking solutions that can improve memory bandwidth utilization while maintaining or enhancing application performance metrics.

Regulatory frameworks worldwide are establishing stricter energy efficiency standards for data centers, creating mandatory compliance requirements that drive market adoption of advanced technologies. The European Union's Energy Efficiency Directive and similar regulations in other regions are establishing performance benchmarks that necessitate deployment of cutting-edge solutions like active memory expansion systems.

The market opportunity extends beyond traditional data center operators to include edge computing deployments, where power constraints and thermal limitations make energy-efficient memory solutions particularly valuable. Organizations operating distributed computing infrastructure are actively seeking technologies that can maximize computational density while minimizing power footprint, creating substantial demand for innovative memory architectures that can deliver superior performance per watt metrics.

Current State and Challenges of Data Center Memory Systems

Data center memory systems currently face significant challenges in balancing performance demands with energy efficiency requirements. Traditional memory architectures rely heavily on DRAM, which consumes substantial power through constant refresh cycles and high-speed operations. Modern data centers typically allocate 15-25% of their total power budget to memory subsystems, making memory one of the largest energy consumers after processors.

The existing memory hierarchy presents inherent inefficiencies due to the performance gap between different storage tiers. DRAM provides fast access times but requires continuous power for data retention, while storage-class memory technologies like NVDIMM and Intel Optane offer persistence but with higher latency. This creates a complex trade-off between speed, capacity, and power consumption that current systems struggle to optimize effectively.

Memory utilization patterns in data centers reveal significant inefficiencies in resource allocation. Studies indicate that average memory utilization across enterprise workloads ranges from 40-60%, meaning substantial portions of provisioned memory remain idle while still consuming power. This underutilization stems from static memory allocation policies that cannot dynamically adapt to varying workload demands throughout operational cycles.
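To make the scale of this idle overhead concrete, the utilization range here can be combined with the 15-25% memory power share quoted earlier in this section. The sketch below assumes, as a simplification, that memory power scales linearly with provisioned capacity and that idle memory draws as much as memory in use; `idle_memory_power_share` is a hypothetical helper, not a standard metric.

```python
def idle_memory_power_share(memory_share: float, utilization: float) -> float:
    """Fraction of total power spent on provisioned-but-idle memory.

    memory_share: memory's fraction of the total power budget (0..1).
    utilization:  fraction of provisioned memory actually in use (0..1).
    Assumes power is proportional to provisioned capacity (a simplification).
    """
    if not (0.0 <= memory_share <= 1.0 and 0.0 <= utilization <= 1.0):
        raise ValueError("memory_share and utilization must be within [0, 1]")
    return memory_share * (1.0 - utilization)


if __name__ == "__main__":
    # Best case: small memory share, high utilization. Worst case: the opposite.
    best = idle_memory_power_share(memory_share=0.15, utilization=0.60)
    worst = idle_memory_power_share(memory_share=0.25, utilization=0.40)
    print(f"idle memory accounts for {best:.1%} to {worst:.1%} of total power")
```

Under these assumptions, idle memory alone would account for somewhere between about 6% and 15% of the total power budget.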

Thermal management represents another critical challenge in current memory systems. High-density memory configurations generate substantial heat, requiring additional cooling infrastructure that further increases overall energy consumption. The concentration of memory modules in server chassis creates hotspots that can lead to thermal throttling, reducing system performance while maintaining high power draw.

Current memory expansion technologies face limitations in achieving true energy proportionality. Traditional approaches involve adding more physical memory modules, which linearly increases power consumption regardless of actual utilization. Existing virtualization and containerization technologies provide some improvement through better resource sharing, but they cannot address the fundamental energy overhead of maintaining large memory pools.

The lack of fine-grained power management capabilities in conventional memory systems prevents optimization at the application level. Most current implementations operate memory subsystems at fixed power states, unable to dynamically scale energy consumption based on real-time workload characteristics or performance requirements.

Emerging memory technologies like persistent memory and near-data computing show promise but face integration challenges with existing infrastructure. The complexity of managing hybrid memory systems, combined with software stack limitations, creates barriers to implementing more energy-efficient memory architectures in production environments.

Existing Active Memory Expansion Solutions

01 Dynamic memory allocation and power management

Technologies for dynamically allocating memory resources based on workload demands while implementing power management strategies. This includes techniques for selectively powering down unused memory banks or regions, adjusting memory refresh rates, and implementing sleep states for memory modules when not actively in use. These approaches reduce overall power consumption while maintaining system performance by ensuring memory resources are available when needed.

- Dynamic memory allocation and power management: Technologies for dynamically allocating memory resources based on workload demands while implementing power management strategies. This includes techniques for selectively powering down unused memory segments, adjusting memory refresh rates, and implementing adaptive voltage scaling to reduce energy consumption during memory expansion operations. The approach enables systems to balance performance requirements with energy efficiency by monitoring memory usage patterns and adjusting power states accordingly.

- Memory compression and deduplication techniques: Methods for expanding effective memory capacity through data compression and deduplication algorithms that reduce physical memory requirements. These techniques identify redundant data patterns and compress memory contents to increase available memory space without additional hardware. The approach improves energy efficiency by reducing the number of memory accesses and minimizing data transfer operations, thereby lowering overall power consumption while effectively expanding memory capacity.

- Tiered memory architecture with energy-aware data placement: Implementation of hierarchical memory systems that utilize multiple memory tiers with different performance and power characteristics. This includes intelligent data placement algorithms that migrate frequently accessed data to faster, more power-efficient memory layers while moving less critical data to slower, lower-power storage. The system optimizes energy consumption by matching data access patterns with appropriate memory technologies and implementing predictive algorithms for data migration.

- Virtual memory management with reduced overhead: Advanced virtual memory management techniques that minimize energy overhead associated with address translation and page table management during memory expansion. This includes optimized page table structures, efficient translation lookaside buffer management, and reduced context switching overhead. The methods focus on decreasing the computational and memory access costs of virtual memory operations, thereby improving overall system energy efficiency when expanding available memory resources.

- Adaptive memory refresh and retention optimization: Techniques for optimizing memory refresh operations and data retention strategies to reduce energy consumption in expanded memory configurations. This includes variable refresh rate control based on temperature and data criticality, selective refresh of active memory regions, and implementation of error correction codes that allow for reduced refresh frequencies. The approach significantly decreases power consumption associated with maintaining data integrity in large memory systems while ensuring reliable operation.
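Of the techniques listed above, compression combined with deduplication is the easiest to illustrate in a few lines. The sketch below is a toy user-space model, not how any operating system or hardware compressor is actually built: `CompressedPageStore` and its methods are hypothetical, and it uses `zlib` and SHA-256 from the Python standard library purely for convenience.

```python
import hashlib
import zlib

PAGE_SIZE = 4096  # bytes; a common page size, assumed here for illustration


class CompressedPageStore:
    """Toy page store: identical pages are stored once, and every stored page is compressed."""

    def __init__(self) -> None:
        self._blobs: dict[bytes, bytes] = {}   # content digest -> compressed page
        self._pages: dict[int, bytes] = {}     # page number -> content digest

    def write(self, page_no: int, data: bytes) -> None:
        if len(data) != PAGE_SIZE:
            raise ValueError("page must be exactly PAGE_SIZE bytes")
        digest = hashlib.sha256(data).digest()
        if digest not in self._blobs:          # deduplication: store new content only once
            self._blobs[digest] = zlib.compress(data)
        self._pages[page_no] = digest
        # Note: blobs orphaned by overwrites are not reclaimed in this sketch.

    def read(self, page_no: int) -> bytes:
        return zlib.decompress(self._blobs[self._pages[page_no]])

    def unique_pages(self) -> int:
        return len(self._blobs)

    def expansion_factor(self) -> float:
        """Logical bytes presented to the workload per physical byte actually stored."""
        logical = len(self._pages) * PAGE_SIZE
        physical = sum(len(blob) for blob in self._blobs.values())
        return logical / physical


if __name__ == "__main__":
    store = CompressedPageStore()
    store.write(0, bytes(PAGE_SIZE))           # zero-filled page
    store.write(1, bytes(PAGE_SIZE))           # duplicate of page 0
    store.write(2, b"ab" * (PAGE_SIZE // 2))   # highly compressible pattern
    print("unique pages stored:", store.unique_pages())
    print("expansion factor > 1:", store.expansion_factor() > 1)
```

The trade-off the sketch hides is CPU time: every read pays for decompression, so real systems typically compress only pages that are accessed infrequently.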

02 Memory compression and deduplication techniques

Methods for reducing the physical memory footprint through compression algorithms and deduplication of redundant data. By compressing data before storage and identifying duplicate memory pages, these techniques effectively expand available memory capacity without requiring additional physical memory modules. This reduces the energy required per unit of effective memory capacity and decreases the need for energy-intensive memory access operations.

03 Tiered memory architecture with heterogeneous memory types

Implementation of multi-tier memory hierarchies combining different memory technologies with varying performance and power characteristics. This includes pairing high-speed, power-intensive memory with lower-power, higher-capacity memory types. Intelligent data placement and migration algorithms move frequently accessed data to faster tiers while relegating less critical data to more energy-efficient storage, optimizing the balance between performance and energy consumption.

04 Adaptive memory refresh optimization

Techniques for optimizing memory refresh operations to reduce energy consumption while maintaining data integrity. This includes variable refresh rate adjustment based on temperature, memory content criticality, and error rates. By reducing unnecessary refresh cycles and implementing selective refresh strategies for different memory regions, significant energy savings can be achieved in dynamic memory systems without compromising reliability.

05 Virtual memory management with energy-aware paging

Energy-efficient virtual memory systems that incorporate power consumption considerations into page replacement and memory allocation decisions. These systems implement algorithms that consider both access patterns and energy costs when determining which pages to keep in physical memory versus secondary storage. This includes predictive paging strategies and intelligent prefetching that minimize energy-intensive memory operations while maintaining application performance.
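One way to picture a page-replacement decision that weighs both access patterns and energy is a cost function over eviction candidates. The sketch below is an assumption-laden illustration: the `Page` type, the cost weights, and the `1 / (1 + age)` reuse estimate are all hypothetical and not taken from any specific system.

```python
from dataclasses import dataclass


@dataclass
class Page:
    number: int
    last_access: int   # logical timestamp of the most recent access
    dirty: bool        # dirty pages must be written back before eviction


def eviction_cost(page: Page, now: int,
                  writeback_cost: float = 5.0,
                  refault_cost: float = 10.0) -> float:
    """Estimated energy cost (arbitrary units) of evicting this page."""
    age = now - page.last_access
    reuse_likelihood = 1.0 / (1.0 + age)        # crude proxy: older pages are less likely to return
    cost = refault_cost * reuse_likelihood      # expected cost of faulting the page back in
    if page.dirty:
        cost += writeback_cost                  # writing to secondary storage costs energy now
    return cost


def choose_victim(pages: list[Page], now: int) -> Page:
    """Pick the page whose eviction is expected to cost the least energy."""
    return min(pages, key=lambda p: eviction_cost(p, now))


if __name__ == "__main__":
    pages = [
        Page(number=1, last_access=90, dirty=False),  # recently used
        Page(number=2, last_access=10, dirty=True),   # oldest, but needs a writeback
        Page(number=3, last_access=20, dirty=False),  # old and clean
    ]
    print("victim:", choose_victim(pages, now=100).number)
```

With these weights the policy evicts page 3 rather than page 2, the page that plain least-recently-used replacement would choose, because evicting the clean page avoids a writeback.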

Key Players in Data Center Memory and Energy Management Industry

The market for improving data center energy efficiency through active memory expansion is a rapidly evolving sector driven by escalating computational demands and sustainability imperatives. The industry is transitioning from early adoption to mainstream implementation, with market size expanding as enterprises prioritize operational cost reduction and environmental compliance. Technology maturity varies considerably across market participants: established companies such as IBM, Intel, and Huawei lead advanced memory architecture development, while Lenovo, Dell, and GlobalFoundries focus on hardware optimization. Infrastructure specialists including Schneider Electric and Zonit provide complementary power management technologies. Chinese players like Inspur and academic institutions such as Beijing Institute of Technology contribute emerging innovations. The result is a competitive landscape in which traditional computing leaders compete alongside specialized energy management providers and emerging technology developers.

International Business Machines Corp.

Technical Solution: IBM has developed comprehensive active memory expansion solutions that utilize intelligent memory tiering and compression technologies to optimize data center energy efficiency. Their approach combines hardware-based memory compression with software-defined memory management, enabling dynamic allocation of memory resources based on workload demands. The system employs predictive analytics to anticipate memory usage patterns and proactively moves less frequently accessed data to lower-power memory tiers. IBM's solution integrates with their Power Systems architecture, providing up to 40% reduction in memory-related power consumption while maintaining application performance through advanced caching mechanisms and real-time memory optimization algorithms.

Strengths: Mature enterprise-grade solutions with proven scalability and comprehensive system integration capabilities. Weaknesses: Higher implementation costs and complexity requiring specialized expertise for deployment and maintenance.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei's active memory expansion solution integrates their Kunpeng processors with intelligent memory management systems designed specifically for energy-efficient data center operations. Their technology employs adaptive memory compression algorithms and dynamic memory pooling to optimize resource utilization across server clusters. The system features real-time workload analysis capabilities that automatically adjust memory allocation and power states based on application demands. Huawei's approach includes proprietary memory controllers that can reduce memory subsystem power consumption by up to 30% through intelligent voltage scaling and selective memory bank activation. The solution also incorporates AI-driven predictive analytics to anticipate memory requirements and preemptively optimize memory configurations for maximum energy efficiency while ensuring consistent application performance.

Strengths: Cost-effective solutions with strong AI integration and comprehensive data center ecosystem support. Weaknesses: Limited market presence in some regions and concerns about technology transfer restrictions.

Core Innovations in Energy-Efficient Memory Architecture

Data center energy efficiency optimization method based on reinforcement learning

Patent · Active · CN110609474A

Innovation

- A reinforcement-learning method for data center energy efficiency optimization: an Actor-Critic network model is trained and updated to automatically adjust the cooling tower fan frequency and cooling pump frequency, optimizing the power drawn by cooling-side equipment. The optimal strategy is explored by combining a gradient descent algorithm with a random process.

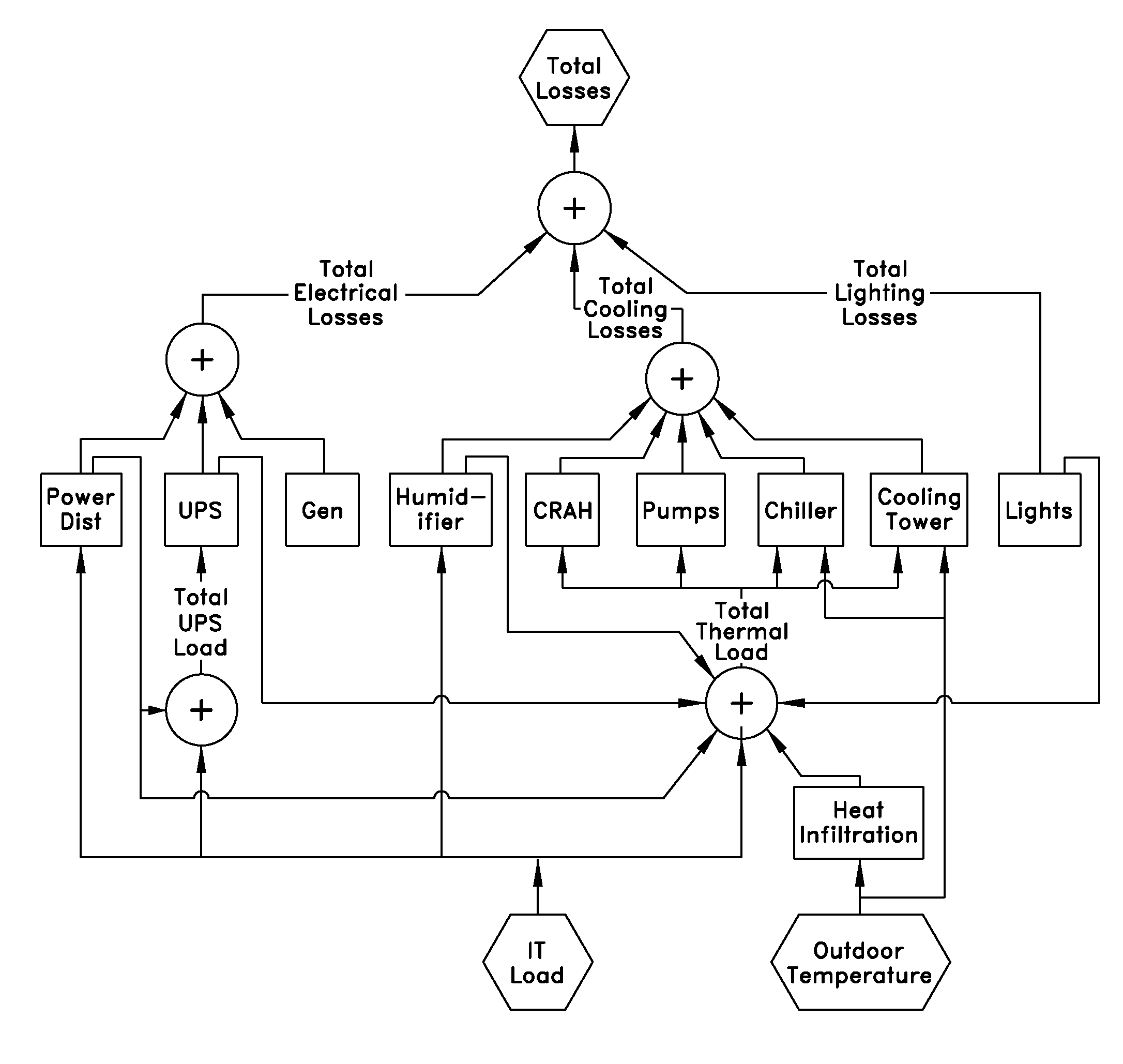

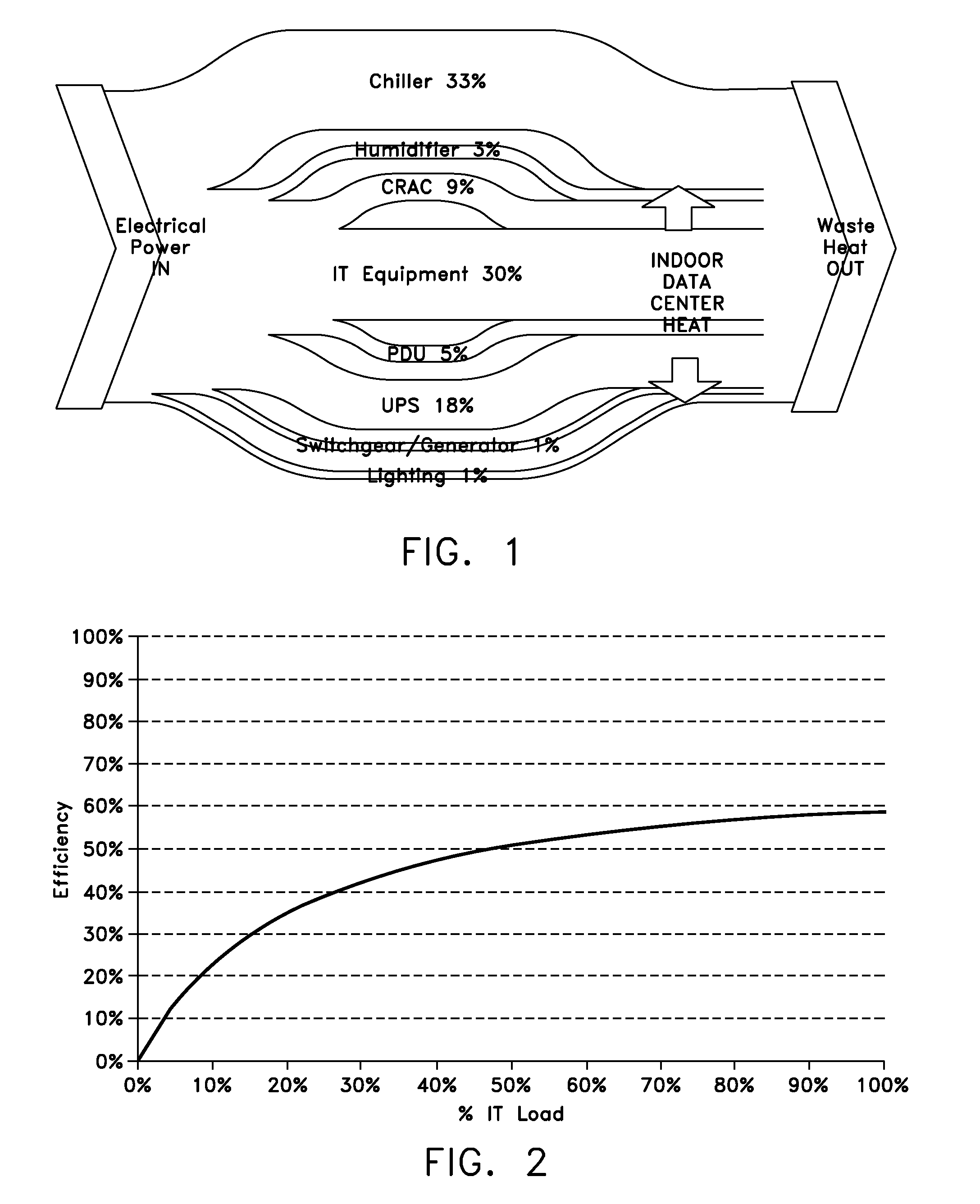

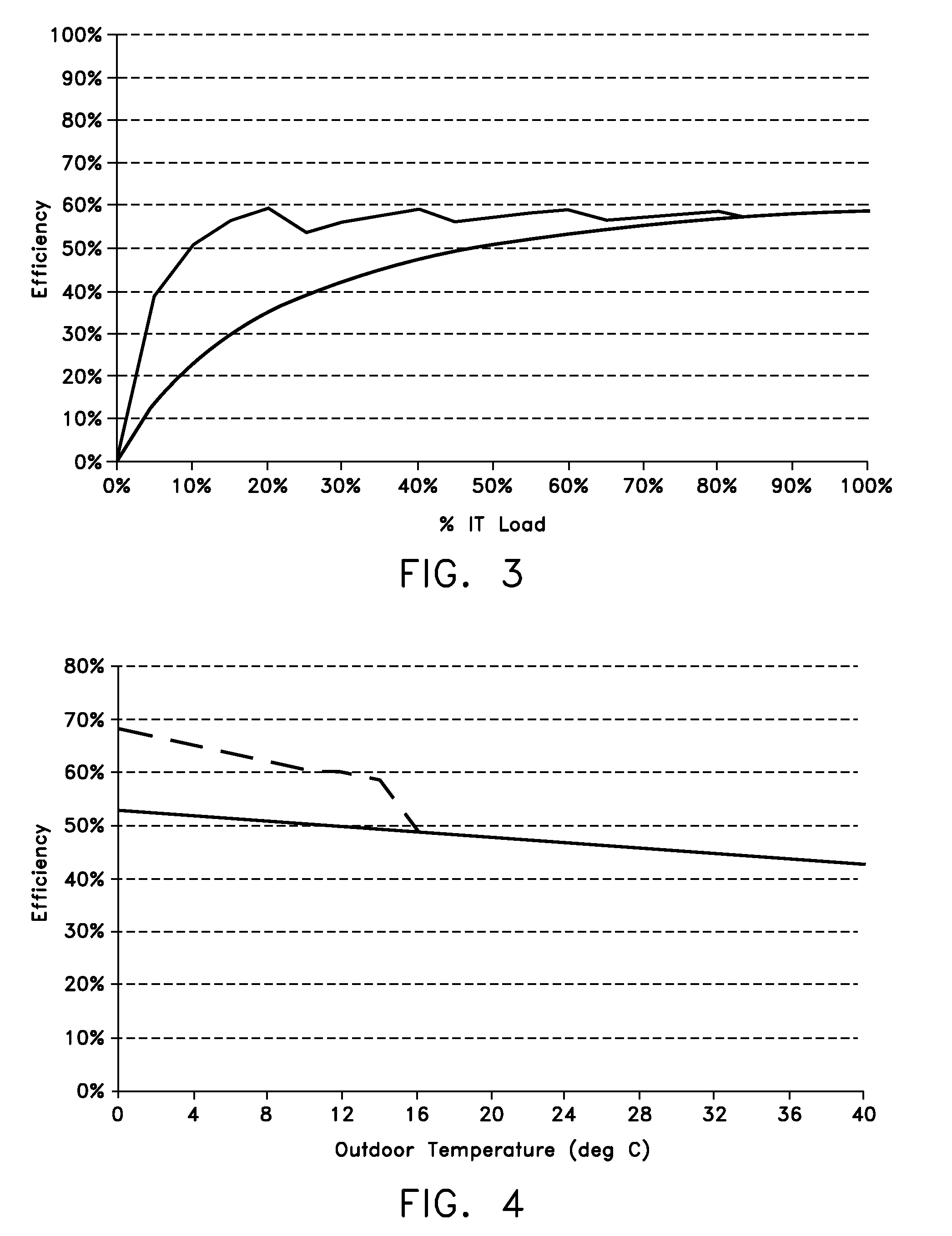

Electrical efficiency measurement for data centers

Patent · Inactive · US20090112522A1

Innovation

- A method and system for managing and modeling data center power efficiency through initial and ongoing power measurements, using a processor-based management system to establish efficiency models, identify device contributions to losses, and provide warnings for deviations from benchmark performance levels, incorporating climate data and device characteristics to optimize power and cooling configurations.

Environmental Regulations and Green Computing Policies

The global regulatory landscape for data center energy efficiency has undergone significant transformation in recent years, driven by mounting concerns over carbon emissions and energy consumption. The European Union's Energy Efficiency Directive mandates that large data centers report their power usage effectiveness (PUE) metrics and implement energy management systems. Similarly, the EU Taxonomy Regulation establishes criteria for environmentally sustainable economic activities, directly impacting data center operations and investment decisions.
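For reference, power usage effectiveness (PUE) is conventionally defined as the ratio of the total energy a facility consumes to the energy delivered to its IT equipment, so a value of 1.0 would mean no overhead for cooling, power distribution, or lighting. The symbols below are chosen for this note rather than taken from the directive.

```latex
\mathrm{PUE} = \frac{E_{\text{total facility}}}{E_{\text{IT equipment}}}, \qquad \mathrm{PUE} \ge 1
```

Because memory power is part of the IT equipment term, memory savings reduce the denominator directly and the numerator through both the IT load and the cooling needed to remove its heat. A facility's PUE can therefore stay flat, or even rise, while its absolute energy use falls.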

In the United States, the Environmental Protection Agency's ENERGY STAR program for data centers provides voluntary guidelines for energy efficiency improvements, while California's Title 24 Building Energy Efficiency Standards impose mandatory requirements for new data center construction. These regulations increasingly emphasize the adoption of advanced technologies, including memory expansion solutions that can reduce overall system energy consumption through improved resource utilization.

Green computing policies worldwide are converging on similar principles that directly support active memory expansion technologies. The Singapore Green Plan 2030 includes specific targets for data center energy efficiency, encouraging the deployment of innovative cooling and memory management solutions. Japan's Top Runner Program sets efficiency benchmarks that drive adoption of technologies like active memory expansion, which can significantly reduce the number of physical servers required for equivalent computational capacity.

Carbon pricing mechanisms and renewable energy mandates are reshaping data center investment priorities across multiple jurisdictions. The UK's Climate Change Levy and similar carbon tax structures in Nordic countries create economic incentives for deploying energy-efficient technologies. These policies make active memory expansion particularly attractive, as it enables higher server utilization rates and reduces the overall carbon footprint per unit of computational output.

Emerging regulatory frameworks specifically address memory and storage efficiency in data centers. The proposed EU Ecodesign Regulation for servers includes provisions for memory utilization metrics, while China's national standards for green data centers explicitly recognize memory expansion technologies as qualifying efficiency measures. These developments signal a regulatory shift toward recognizing and incentivizing advanced memory management as a critical component of sustainable computing infrastructure.

Cost-Benefit Analysis of Active Memory Implementation

The economic evaluation of active memory expansion in data centers reveals a complex investment landscape with significant long-term benefits despite substantial upfront costs. Initial capital expenditure typically ranges from $2,000 to $5,000 per server for comprehensive active memory solutions, including specialized memory controllers, expanded DRAM modules, and intelligent caching systems. This investment represents an approximately 15-25% increase in total server procurement costs, creating immediate budget pressure for data center operators.

However, operational cost savings emerge rapidly through reduced energy consumption. Active memory expansion systems demonstrate a 20-35% reduction in overall server power consumption by optimizing memory access patterns and reducing the time CPUs sit idle waiting on memory. For a typical 1,000-server data center, this translates to annual electricity savings of $180,000 to $320,000, assuming average power costs of $0.08 per kWh. Additional cooling cost reductions of 15-20% further enhance operational savings, as lower heat generation reduces HVAC system workload.
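The savings figures above follow from simple arithmetic once an average per-server power draw is fixed, which the text does not state. The sketch below works the calculation in both directions under the assumption of continuous year-round operation; the function names are hypothetical.

```python
HOURS_PER_YEAR = 8760  # assumes servers run continuously


def annual_savings_usd(servers: int, avg_server_kw: float,
                       reduction: float, usd_per_kwh: float) -> float:
    """Annual electricity savings for a fractional reduction in server power."""
    baseline_kwh = servers * avg_server_kw * HOURS_PER_YEAR
    return baseline_kwh * reduction * usd_per_kwh


def implied_server_kw(savings_usd: float, servers: int,
                      reduction: float, usd_per_kwh: float) -> float:
    """Average per-server draw (kW) that would produce the stated savings."""
    return savings_usd / (servers * HOURS_PER_YEAR * reduction * usd_per_kwh)


if __name__ == "__main__":
    low = implied_server_kw(180_000, servers=1000, reduction=0.20, usd_per_kwh=0.08)
    high = implied_server_kw(320_000, servers=1000, reduction=0.35, usd_per_kwh=0.08)
    print(f"implied average draw: {low:.2f} kW to {high:.2f} kW per server")
```

Read this way, the quoted range corresponds to an average draw of roughly 1.3 kW per server. Fleets with lower-power servers would see proportionally smaller savings.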

The technology delivers substantial performance-related cost benefits through improved server utilization rates. Active memory expansion enables 40-60% higher virtual machine density per physical server, effectively reducing the total number of required servers for equivalent workloads. This consolidation effect generates significant savings in real estate, power distribution infrastructure, and network equipment costs, often exceeding $500,000 annually for medium-scale deployments.

Return on investment calculations indicate payback periods of 18-24 months for most implementations, with net present value becoming positive within the second operational year. The total cost of ownership analysis over a five-year period shows a 25-40% reduction compared to traditional memory architectures, primarily driven by energy savings and improved resource utilization.
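Payback and net present value are straightforward to compute once capital cost and annual savings are fixed. The sketch below uses the simple (undiscounted) payback definition and end-of-year cash flows; the function names and the flat-savings assumption are illustrative, not taken from the analysis described above.

```python
def payback_months(capex: float, annual_savings: float) -> float:
    """Simple payback period in months, ignoring discounting."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return 12.0 * capex / annual_savings


def required_annual_savings(capex: float, target_months: float) -> float:
    """Annual savings needed to recover capex within target_months."""
    return 12.0 * capex / target_months


def npv(capex: float, annual_savings: float, years: int, discount_rate: float) -> float:
    """Net present value with flat savings received at the end of each year."""
    discounted = sum(annual_savings / (1.0 + discount_rate) ** t
                     for t in range(1, years + 1))
    return discounted - capex


if __name__ == "__main__":
    capex_per_server = 2000.0  # lower bound of the per-server cost range quoted earlier
    for months in (18, 24):
        need = required_annual_savings(capex_per_server, months)
        print(f"{months}-month payback needs about ${need:,.0f} per server per year")
```

At the $2,000 lower bound, an 18-24 month payback requires roughly $1,000 to $1,333 in combined annual savings per server. Whether a deployment reaches that level depends on how much of the consolidation and cooling savings is realized alongside the electricity savings.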

Risk factors include potential compatibility issues with legacy applications and the need for specialized technical expertise, which may increase implementation costs by 10-15%. However, the compelling economic case, combined with growing energy cost pressures and sustainability requirements, makes active memory expansion a financially attractive investment for most data center operators seeking long-term operational efficiency improvements.
