Near-Memory Computing vs Virtualization: Efficiency Breakdown

APR 24, 2026 · 9 MIN READ

Near-Memory Computing Evolution and Efficiency Goals

Near-memory computing has emerged as a response to the performance bottlenecks caused by the von Neumann architecture's separation of processing and memory units. The motivation traces back to the mid-1990s, when researchers identified the "memory wall" problem: processor speed was improving faster than memory speed, so data movement between processors and memory became the primary constraint on system performance and energy efficiency.

The foundational concept of processing-in-memory (PIM) gained momentum through pioneering research at institutions like Carnegie Mellon and Stanford, where early prototypes demonstrated the potential for embedding computational capabilities directly within memory arrays. These initial efforts focused on simple arithmetic operations performed within DRAM structures, establishing the groundwork for more sophisticated near-memory computing architectures.

The evolution accelerated significantly around 2010-2015 with the advent of 3D memory technologies and advanced semiconductor manufacturing processes. Companies like Samsung, SK Hynix, and Micron began developing memory devices with integrated processing elements, while research institutions explored novel memory technologies such as resistive RAM (ReRAM) and phase-change memory (PCM) that inherently supported computational operations.

Modern near-memory computing encompasses a spectrum of approaches, from simple data filtering and aggregation operations performed within memory controllers to complex neural network inference executed directly on memory chips. The technology has evolved to address specific computational patterns prevalent in big data analytics, machine learning workloads, and graph processing applications where traditional architectures suffer from excessive data movement overhead.

The primary technical objectives driving near-memory computing development center on achieving substantial improvements in energy efficiency, typically targeting 10-100x reductions in data movement energy compared to conventional architectures. Performance goals focus on eliminating memory bandwidth bottlenecks that constrain modern applications, particularly in scenarios involving large dataset processing where memory access patterns exhibit poor spatial locality.
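As a rough illustration of where a 10-100x target could come from, the sketch below compares the energy spent moving a dataset across an off-chip link with the energy spent moving it over a short near-memory path. The per-byte energy figures, the dataset size, and the function name are placeholder assumptions chosen only to make the arithmetic concrete; they are not measurements from any device.

```python
def data_movement_energy_joules(bytes_moved: int, picojoules_per_byte: float) -> float:
    """Energy in joules to move `bytes_moved` bytes at a given per-byte cost."""
    return bytes_moved * picojoules_per_byte * 1e-12


# Hypothetical per-byte costs (assumptions, not measured values).
OFF_CHIP_PJ_PER_BYTE = 100.0   # processor <-> DRAM over the memory bus
NEAR_MEMORY_PJ_PER_BYTE = 5.0  # logic placed next to the memory arrays

dataset_bytes = 64 * 2**30  # one full scan of a 64 GiB dataset

conventional = data_movement_energy_joules(dataset_bytes, OFF_CHIP_PJ_PER_BYTE)
near_memory = data_movement_energy_joules(dataset_bytes, NEAR_MEMORY_PJ_PER_BYTE)
reduction = conventional / near_memory

print(f"conventional: {conventional:.2f} J, near-memory: {near_memory:.2f} J, "
      f"reduction: {reduction:.0f}x")
```

In this simple model the reduction factor is just the ratio of the two per-byte costs, so the achievable gain depends on how much of a workload's traffic can actually stay on the near-memory path.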

Contemporary research initiatives aim to establish standardized interfaces and programming models that enable seamless integration of near-memory computing capabilities into existing software ecosystems. The ultimate vision encompasses heterogeneous computing environments where near-memory processing units collaborate with traditional processors to optimize overall system efficiency while maintaining programming simplicity and compatibility with established development frameworks.

Market Demand for High-Performance Computing Solutions

The global high-performance computing market is experiencing unprecedented growth driven by the exponential increase in data-intensive applications across multiple industries. Organizations are increasingly demanding computing solutions that can handle complex workloads including artificial intelligence, machine learning, scientific simulations, and real-time analytics. This surge in computational requirements has created a critical need for architectures that can deliver superior performance while maintaining energy efficiency and cost-effectiveness.

Traditional computing architectures face significant bottlenecks when processing large datasets, particularly due to the memory wall problem where data movement between processors and memory becomes the primary performance constraint. Near-memory computing emerges as a compelling solution by bringing computation closer to data storage, thereby reducing latency and energy consumption. Simultaneously, virtualization technologies continue to evolve, offering improved resource utilization and workload management capabilities that appeal to enterprises seeking operational flexibility.

The enterprise sector represents the largest demand segment, with organizations requiring scalable computing solutions for business intelligence, financial modeling, and customer analytics. Cloud service providers constitute another major market segment, continuously seeking technologies that can improve their infrastructure efficiency and reduce operational costs. The competition between near-memory computing and virtualization approaches reflects the industry's search for optimal performance-to-cost ratios in different deployment scenarios.

Research institutions and academic organizations drive demand for specialized high-performance computing solutions capable of handling scientific workloads such as climate modeling, genomics research, and particle physics simulations. These applications often require sustained computational performance over extended periods, making efficiency considerations paramount in technology selection decisions.

The telecommunications industry's transition to edge computing and the automotive sector's advancement in autonomous vehicle development have created new market segments demanding low-latency, high-throughput computing solutions. These emerging applications require architectures that can process data streams in real-time while maintaining strict power consumption constraints, influencing the comparative evaluation of near-memory computing versus virtualization technologies.

Manufacturing and industrial automation sectors increasingly rely on high-performance computing for predictive maintenance, quality control, and supply chain optimization. The growing adoption of Industry 4.0 principles has amplified the demand for computing solutions that can integrate seamlessly with existing infrastructure while delivering measurable performance improvements across diverse operational environments.

Current NMC and Virtualization Performance Limitations

Near-Memory Computing architectures face significant performance bottlenecks stemming from memory bandwidth limitations and data movement overhead. Current NMC implementations struggle with the fundamental challenge of processing-in-memory efficiency, where computational units integrated near DRAM arrays exhibit limited processing capabilities compared to traditional CPU cores. The bandwidth between processing elements and memory cells, while improved over conventional architectures, still constrains throughput for memory-intensive workloads. Additionally, the programming complexity of NMC systems creates substantial overhead in task scheduling and data orchestration.

Virtualization technologies encounter distinct performance degradation patterns that compound efficiency challenges in modern computing environments. Hypervisor overhead introduces latency penalties ranging from 5-15% for CPU-intensive tasks and up to 30% for I/O operations. Memory virtualization mechanisms, including shadow page tables and nested page tables, create additional translation layers that significantly impact memory access patterns. The virtualization of hardware resources leads to resource contention issues, particularly in multi-tenant environments where virtual machines compete for shared physical resources.
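To make the cost of the extra translation layer concrete, the sketch below counts worst-case memory references for a nested (two-dimensional) page walk on a TLB miss and applies the overhead ranges quoted above to a baseline run time. It assumes no page-walk caches, and the function names and the 100-second baseline are illustrative choices rather than part of any hypervisor API.

```python
def nested_walk_references(guest_levels: int, host_levels: int) -> int:
    """Worst-case memory references for a two-dimensional page walk.

    Every guest page-table pointer, plus the final guest-physical address,
    must itself be translated through the host page table, which gives
    (guest_levels + 1) * (host_levels + 1) - 1 references in total.
    """
    return (guest_levels + 1) * (host_levels + 1) - 1


def virtualized_runtime(native_seconds: float, overhead_fraction: float) -> float:
    """Run time after applying a fractional virtualization overhead."""
    return native_seconds * (1.0 + overhead_fraction)


native_refs = 4  # a native 4-level walk reads 4 page-table entries
nested_refs = nested_walk_references(guest_levels=4, host_levels=4)
print(f"native walk: {native_refs} references, nested walk: {nested_refs} references")

# Overhead ranges taken from the paragraph above; baseline is an assumption.
for label, overhead in [("CPU, low", 0.05), ("CPU, high", 0.15), ("I/O, worst", 0.30)]:
    print(f"{label}: {virtualized_runtime(100.0, overhead):.1f} s")
```

Real processors cache intermediate translations, so the average cost is usually far below the worst case; the count is meant only to show why TLB misses are more expensive under nested paging than on native hardware.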

The intersection of NMC and virtualization presents compounded performance limitations that exceed the sum of individual technology constraints. Virtualized NMC deployments suffer from double-layer abstraction penalties, where hypervisor memory management conflicts with NMC's direct memory access requirements. The virtualization layer disrupts the tight coupling between processing elements and memory that NMC architectures depend upon for efficiency gains. This results in degraded memory locality and increased data movement overhead.

Current measurement frameworks inadequately capture the nuanced performance characteristics of hybrid NMC-virtualization systems. Traditional benchmarking tools fail to account for the complex interaction patterns between virtualized resource allocation and near-memory processing efficiency. The lack of standardized performance metrics specifically designed for virtualized NMC environments hampers accurate efficiency assessment and optimization efforts.

Thermal and power management constraints further exacerbate performance limitations in both technologies. NMC systems generate concentrated heat loads near memory arrays, requiring aggressive thermal throttling that reduces processing frequency. Virtualization overhead increases overall system power consumption while simultaneously reducing the effectiveness of power management policies designed for native hardware execution, creating a compounding effect on energy efficiency.

Existing NMC and Virtualization Implementation Approaches

01 Memory-side processing architecture for reducing data movement

Near-memory computing architectures place processing units adjacent to or within memory modules to minimize data transfer overhead. This approach reduces latency and power consumption by performing computations closer to where data resides, rather than moving large amounts of data to distant processors. The architecture typically involves specialized processing elements integrated with memory controllers or embedded within memory chips themselves.

- Memory-side processing architecture for reducing data movement: Near-memory computing architectures place processing units adjacent to or within memory modules to minimize data transfer overhead. This approach reduces latency and energy consumption by performing computations closer to where data resides, rather than moving large amounts of data to distant processors. The architecture typically involves specialized processing elements integrated with memory controllers or embedded within memory chips themselves, enabling efficient execution of memory-intensive operations.

- Virtual machine memory management optimization: Virtualization efficiency is enhanced through advanced memory management techniques that optimize how virtual machines access and utilize physical memory resources. These techniques include memory page sharing, dynamic memory allocation, and intelligent caching strategies that reduce memory overhead in virtualized environments. The methods enable multiple virtual machines to coexist efficiently while maintaining performance isolation and minimizing resource contention.

- Hardware-assisted virtualization with near-memory acceleration: Integration of hardware acceleration features with near-memory computing capabilities improves virtualization performance by offloading specific tasks to specialized units located near memory. This combination leverages hardware extensions for virtualization support while utilizing proximity to memory for faster data access patterns. The approach reduces hypervisor overhead and improves overall system throughput in virtualized environments.

- Memory bandwidth optimization in virtualized systems: Techniques for maximizing memory bandwidth utilization in virtualized environments address the bottleneck created by multiple virtual machines competing for memory resources. These solutions implement intelligent scheduling algorithms, memory access prioritization, and bandwidth allocation mechanisms that ensure fair and efficient distribution of memory bandwidth among virtual machines. The methods prevent performance degradation caused by memory bandwidth saturation.

- Distributed memory computing for virtualization scalability: Distributed memory architectures enable scalable virtualization by distributing memory resources across multiple nodes while maintaining coherent access patterns. This approach allows virtual machines to access memory resources beyond local boundaries, supporting larger-scale deployments and improved resource utilization. The system coordinates memory operations across distributed components to maintain consistency and performance.
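The memory page sharing mentioned in the list above can be sketched as content-based deduplication: pages with identical contents across virtual machines are backed by a single physical frame. The example below is a minimal illustration under that assumption; the function name, the toy page contents, and the VM labels are hypothetical and do not reflect any specific hypervisor's implementation.

```python
import hashlib
from collections import defaultdict

PAGE_SIZE = 4096


def share_identical_pages(vm_pages: dict[str, list[bytes]]) -> tuple[int, int]:
    """Return (total_guest_pages, unique_physical_frames) across all VMs.

    Pages are bucketed by SHA-256 digest and then compared byte for byte,
    so a hash collision can never merge two different pages.
    """
    buckets: dict[bytes, list[bytes]] = defaultdict(list)
    total = 0
    for pages in vm_pages.values():
        for page in pages:
            total += 1
            candidates = buckets[hashlib.sha256(page).digest()]
            if not any(page == stored for stored in candidates):
                candidates.append(page)  # first copy becomes the shared frame
    unique = sum(len(frames) for frames in buckets.values())
    return total, unique


zero_page = bytes(PAGE_SIZE)
lib_page = b"\x7fELF".ljust(PAGE_SIZE, b"\x00")
vms = {
    "vm-a": [zero_page, lib_page, b"a" * PAGE_SIZE],
    "vm-b": [zero_page, lib_page, b"b" * PAGE_SIZE],
}
total, unique = share_identical_pages(vms)
print(f"{total} guest pages backed by {unique} physical frames")
```

A production design would also need to mark shared frames copy-on-write so that a write from one virtual machine does not become visible to the others; that step is omitted here.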

02 Virtual machine memory management optimization

Virtualization efficiency is enhanced through improved memory management techniques that optimize how virtual machines access and utilize physical memory resources. These methods include advanced page table management, memory deduplication, and dynamic memory allocation strategies that reduce overhead in virtualized environments. The techniques enable better resource utilization and improved performance for multiple concurrent virtual machines.

03 Hardware-assisted virtualization with near-memory acceleration

Integrating hardware acceleration features with near-memory computing capabilities improves virtualization performance. This involves specialized hardware extensions that support virtualized workloads while leveraging proximity to memory for faster operations. The approach combines virtualization support at the processor level with memory-centric computing paradigms to achieve better efficiency in cloud and data center environments.

04 Memory bandwidth optimization for virtualized systems

These techniques maximize memory bandwidth utilization in virtualized computing environments through intelligent scheduling and data placement strategies. They address the memory wall problem by optimizing how virtual machines access shared memory resources and by reducing contention. Solutions include memory access pattern prediction, prefetching mechanisms, and bandwidth allocation policies tailored for multi-tenant virtualized infrastructures.

05 Processing-in-memory for virtual machine workloads

Processing-in-memory technologies can be designed specifically to accelerate virtualized workload execution. This approach embeds computational capabilities directly within memory devices to handle common virtualization operations such as memory translation, data filtering, and security checks. The integration reduces the burden on host processors and improves overall system throughput for virtualized applications.

Major Players in NMC and Virtualization Markets

The near-memory computing versus virtualization efficiency landscape represents a rapidly evolving sector at the intersection of hardware acceleration and software abstraction technologies. The industry is transitioning from traditional virtualization-centric architectures toward hybrid approaches that leverage proximity computing capabilities. Market growth is driven by increasing data processing demands and latency-sensitive applications. Technology maturity varies significantly across players: established virtualization leaders like VMware and Microsoft Technology Licensing maintain strong positions in software-defined infrastructure, while hardware innovators including Intel, Huawei Technologies, and Taiwan Semiconductor Manufacturing are advancing near-memory processing solutions. Research institutions such as Shanghai Jiao Tong University and Institute of Computing Technology contribute foundational innovations. The competitive dynamics reflect a convergence where traditional boundaries between memory, storage, and compute are dissolving, creating opportunities for integrated solutions that optimize both virtualization efficiency and near-memory processing capabilities.

VMware LLC

Technical Solution: VMware's virtualization platform utilizes advanced memory management techniques including Transparent Page Sharing (TPS) and memory ballooning to optimize memory utilization across virtual machines. Their vSphere platform achieves memory consolidation ratios of up to 200% through intelligent memory deduplication and compression algorithms. The company's approach focuses on dynamic memory allocation and real-time workload balancing, enabling efficient resource utilization in virtualized environments while maintaining performance isolation between different virtual instances.

Strengths: Mature virtualization ecosystem, excellent memory consolidation capabilities, robust enterprise support. Weaknesses: Overhead from hypervisor layer, potential performance degradation under high memory contention scenarios.

International Business Machines Corp.

Technical Solution: IBM's near-memory computing strategy centers around their Power10 processor architecture with integrated Memory Inception technology, which provides up to 4TB of memory capacity per socket with enhanced bandwidth utilization. Their solution combines hardware-accelerated memory compression with AI-optimized memory controllers that can predict and prefetch data patterns, resulting in 40% improvement in memory-intensive workloads. IBM also develops cognitive computing frameworks that leverage near-memory processing for real-time analytics and decision-making applications.

Strengths: Advanced processor-memory integration, strong AI acceleration capabilities, enterprise-grade reliability. Weaknesses: Limited market adoption outside enterprise segment, higher total cost of ownership compared to commodity solutions.

Core Patents in Memory Computing Architecture

Near-memory computing systems and methods

Patent · Active · US11645005B2

Innovation

- A flexible NMC architecture is introduced, incorporating embedded FPGA/DSP logic, high-bandwidth SRAM, real-time processors, and a bus system within the SSD controller, enabling local data processing and supporting multiple applications through versatile processing units, inter-process communication hubs, and quality of service arbiters.

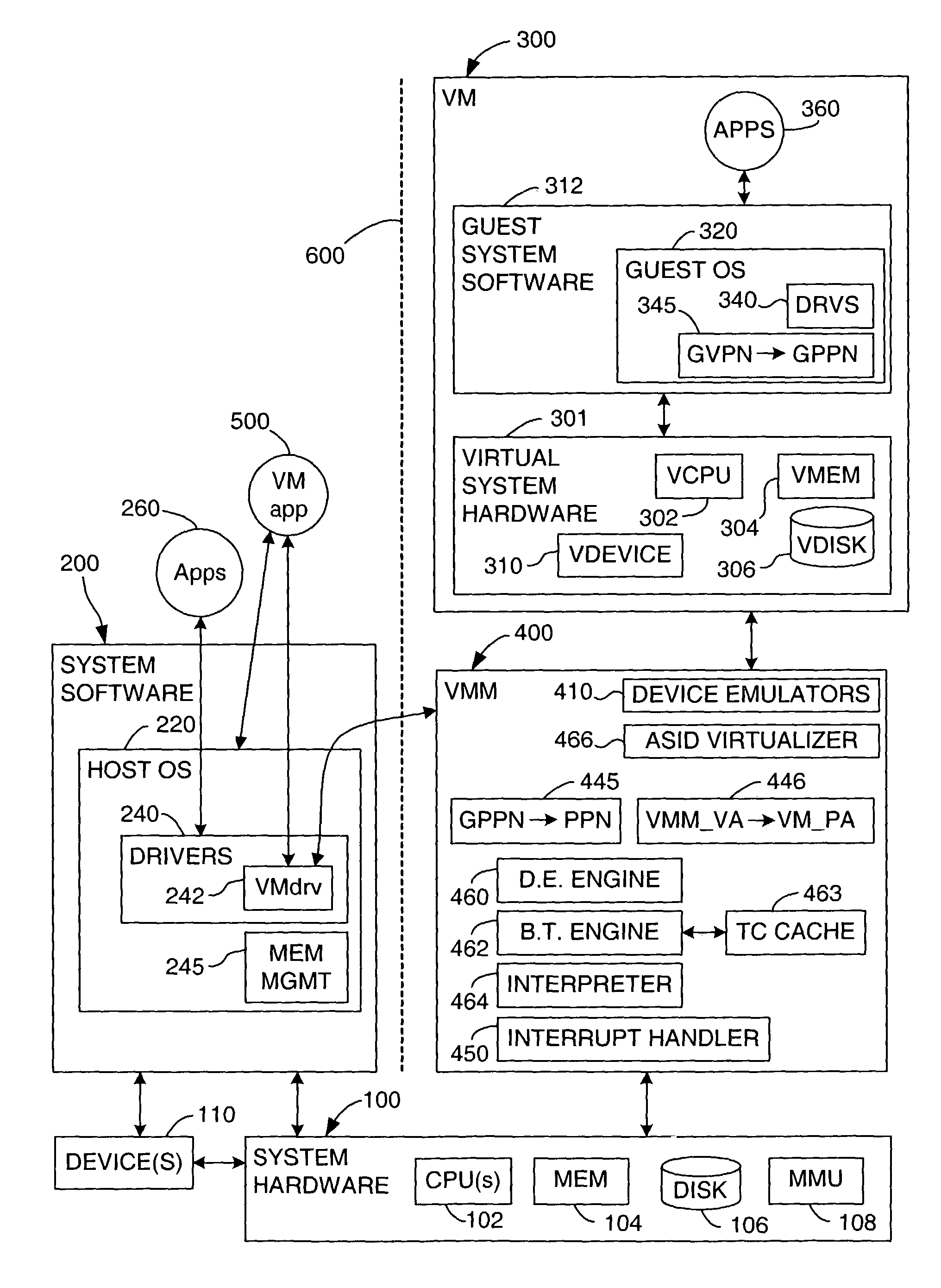

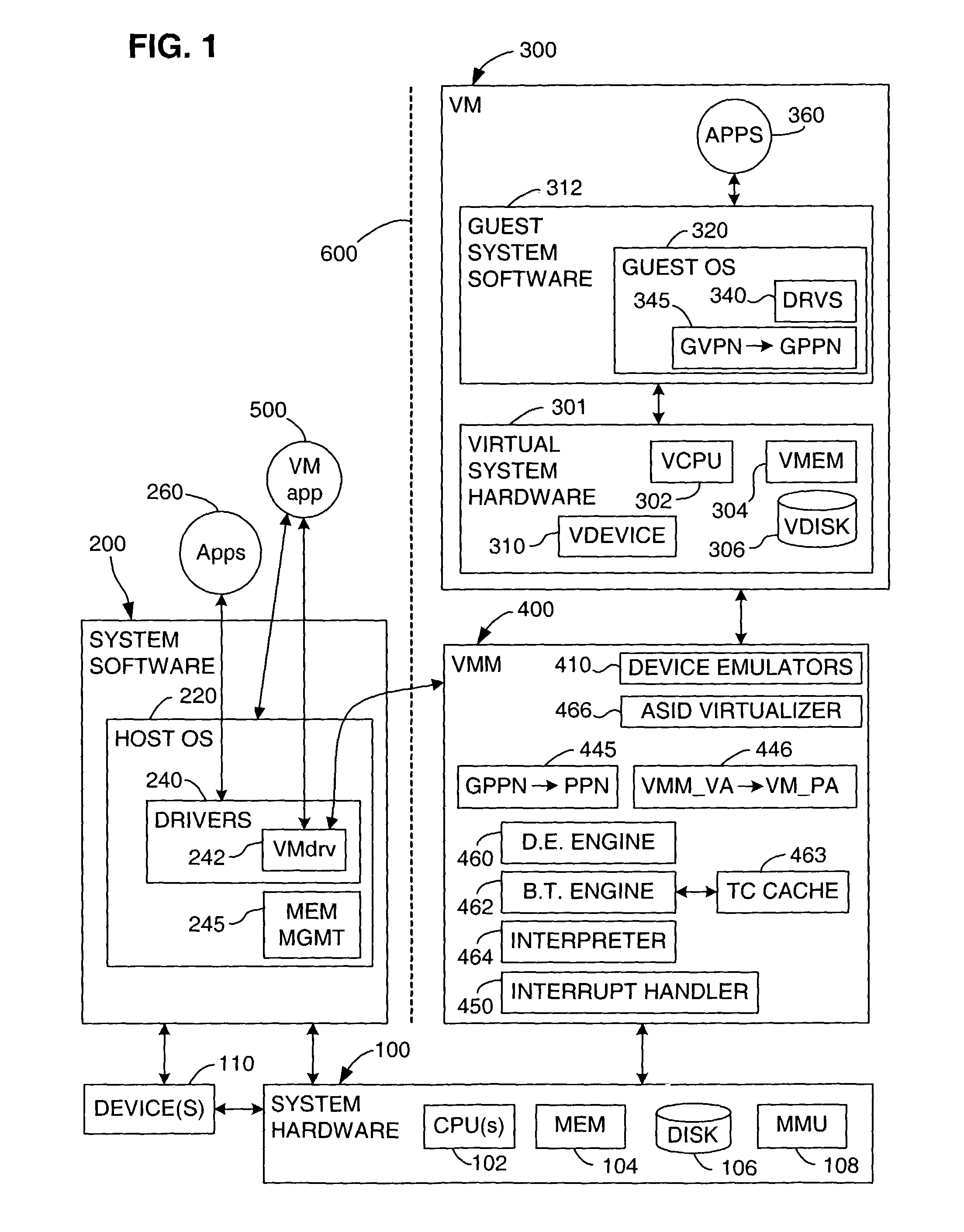

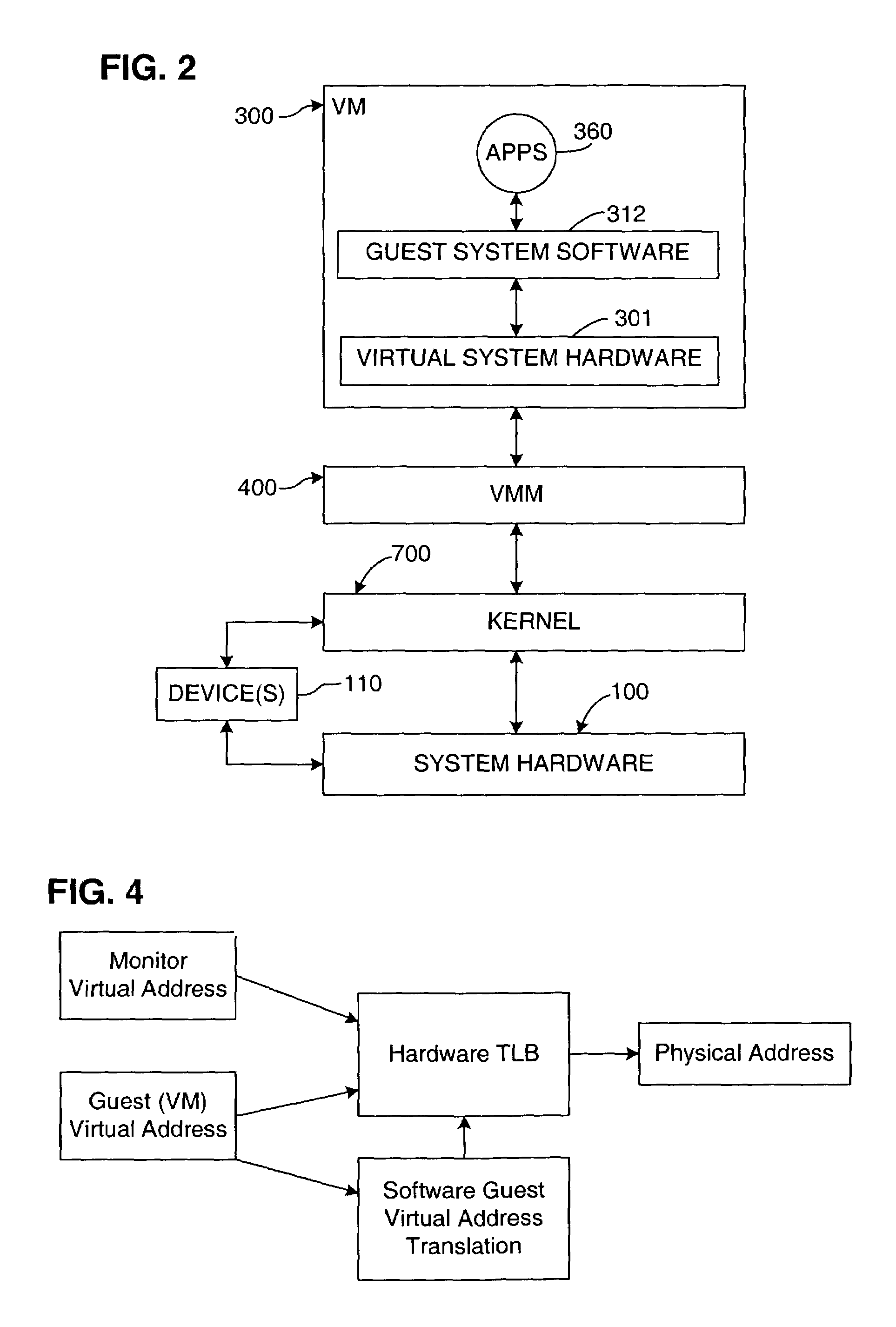

Virtualization system for computers that use address space indentifiers

Patent · Inactive · US7409487B1

Innovation

- A mechanism is introduced to manage address space identifiers (ASIDs) by allocating unique shadow ASIDs for each guest ASID, allowing address translations to occur independently within the virtual machine, thereby avoiding the need for TLB purges when switching between VMs.
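The idea summarized above can be illustrated with a small lookup structure that gives every (virtual machine, guest ASID) pair its own hardware-visible ASID, so that TLB entries belonging to different VMs never alias. This is a simplified sketch of the general concept, not the patent's claimed implementation; the class name, method name, and the pool size of 256 are assumptions.

```python
class ShadowAsidAllocator:
    """Maps each (vm_id, guest_asid) pair to a unique shadow ASID."""

    def __init__(self, hardware_asids: int) -> None:
        self._free = list(range(hardware_asids))          # unused hardware ASIDs
        self._shadow: dict[tuple[int, int], int] = {}     # (vm, guest ASID) -> shadow

    def shadow_for(self, vm_id: int, guest_asid: int) -> int:
        """Return the shadow ASID for this pair, allocating one on first use."""
        key = (vm_id, guest_asid)
        if key not in self._shadow:
            if not self._free:
                # Reclaiming an in-use ASID is out of scope for this sketch.
                raise RuntimeError("out of hardware ASIDs")
            self._shadow[key] = self._free.pop(0)
        return self._shadow[key]


alloc = ShadowAsidAllocator(hardware_asids=256)
vm1_asid = alloc.shadow_for(vm_id=1, guest_asid=7)
vm2_asid = alloc.shadow_for(vm_id=2, guest_asid=7)  # same guest ASID, different VM
print(vm1_asid, vm2_asid)
```

Because the two guests receive distinct shadow ASIDs even though both use guest ASID 7, their cached translations can coexist, which is what makes a purge on every VM switch unnecessary in this model.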

Energy Efficiency Standards for Computing Systems

Energy efficiency standards for computing systems have become increasingly critical as the industry grapples with the performance trade-offs between near-memory computing and virtualization technologies. Current regulatory frameworks primarily focus on traditional metrics such as Performance per Watt (PERF/W) and Power Usage Effectiveness (PUE), but these standards inadequately address the nuanced energy consumption patterns inherent in memory-centric architectures versus virtualized environments.
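For reference, the two metrics named above reduce to simple ratios: performance per watt divides delivered work by average power, and PUE divides total facility energy by the energy consumed by IT equipment. The sketch below computes both; the function names and the sample figures are illustrative assumptions, not data from any system.

```python
def performance_per_watt(operations_per_second: float, average_power_watts: float) -> float:
    """Delivered work per unit of power (operations per second per watt)."""
    return operations_per_second / average_power_watts


def power_usage_effectiveness(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """PUE: total facility energy divided by IT equipment energy (ideal value is 1.0)."""
    return total_facility_kwh / it_equipment_kwh


# Hypothetical sample values for illustration only.
perf_w = performance_per_watt(operations_per_second=2.0e12, average_power_watts=400.0)
pue = power_usage_effectiveness(total_facility_kwh=1500.0, it_equipment_kwh=1000.0)
print(f"performance per watt: {perf_w:.2e} ops/s/W, PUE: {pue:.2f}")
```

Both ratios treat the server as a single power consumer, which is consistent with the point above: neither one shows how energy is divided between computation and data movement inside the machine.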

The IEEE 1621 standard, which addresses user interface elements for power control in office and consumer electronic devices, offers only general power-management guidance and has no provisions for evaluating near-memory computing systems where processing occurs closer to data storage. Similarly, the ENERGY STAR program's server efficiency requirements, while comprehensive for conventional architectures, do not adequately differentiate between the energy profiles of virtualized workloads and those optimized for memory proximity.

Emerging standards development initiatives are beginning to address these gaps. The Green Grid's updated PUE 2.0 framework introduces memory subsystem energy accounting, recognizing that near-memory computing can significantly alter traditional CPU-centric power models. The proposed ISO/IEC 30134 series on data center resource efficiency includes provisions for memory bandwidth efficiency metrics, which directly impact the comparative analysis between near-memory and virtualized approaches.

Industry consortiums are driving the development of specialized benchmarking standards. The Storage Networking Industry Association's SNIA Emerald program has introduced memory-aware efficiency metrics that better capture the energy implications of data locality in near-memory systems. These standards recognize that virtualization overhead can negate energy savings when memory access patterns become fragmented across virtual machines.

Regulatory compliance frameworks are evolving to accommodate hybrid architectures that combine both technologies. The European Union's Ecodesign Directive for servers now includes provisions for memory subsystem efficiency reporting, while the US Department of Energy's server efficiency standards are incorporating workload-specific energy measurements that distinguish between virtualized and near-memory computing scenarios.

Future standards development must establish unified metrics that enable fair comparison between these architectural approaches while accounting for their distinct energy consumption characteristics and operational contexts.

Hardware-Software Co-design Optimization Strategies

The convergence of near-memory computing and virtualization technologies necessitates sophisticated hardware-software co-design optimization strategies to maximize system efficiency while maintaining operational flexibility. Traditional optimization approaches that treat hardware and software as separate entities prove inadequate when addressing the complex interdependencies between memory-centric computing architectures and virtualized environments.

Unified memory management represents a critical optimization strategy that bridges the gap between physical near-memory computing capabilities and virtualized resource allocation. This approach involves developing hybrid memory controllers that can simultaneously handle direct memory operations from near-memory processors while maintaining virtualization layer transparency. The strategy requires careful coordination between hypervisor memory management units and specialized near-memory computing instruction sets to minimize translation overhead and maximize data locality benefits.

Adaptive resource partitioning emerges as another essential strategy, enabling dynamic allocation of near-memory computing resources across multiple virtual machines based on workload characteristics. This involves implementing intelligent scheduling algorithms that consider both computational proximity to data and virtualization overhead costs. The optimization framework must account for memory access patterns, data movement costs, and virtual machine isolation requirements while maximizing overall system throughput.
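One simple way to picture such a scheduler is a greedy policy that weighs the data movement each virtual machine would avoid against the cost of mediating its access to near-memory units. The sketch below is a hypothetical illustration only: the `VmWorkload` fields, the cost model, and the sample workloads are assumptions, and a real partitioner would also have to enforce isolation between tenants.

```python
from dataclasses import dataclass


@dataclass
class VmWorkload:
    name: str
    gb_moved_per_s: float   # traffic that would stay near memory if offloaded
    virt_overhead: float    # estimated cost of mediating NMC access for this VM
    units_requested: int    # NMC units the VM could make use of


def partition_nmc_units(workloads: list[VmWorkload], total_units: int,
                        move_cost_per_gb: float = 1.0) -> dict[str, int]:
    """Greedy split: VMs with the largest net benefit are served first."""

    def net_benefit(w: VmWorkload) -> float:
        return w.gb_moved_per_s * move_cost_per_gb - w.virt_overhead

    allocation = {w.name: 0 for w in workloads}
    remaining = total_units
    for w in sorted(workloads, key=net_benefit, reverse=True):
        if net_benefit(w) <= 0 or remaining == 0:
            continue  # offloading would cost more than it saves, or nothing is left
        granted = min(w.units_requested, remaining)
        allocation[w.name] = granted
        remaining -= granted
    return allocation


plan = partition_nmc_units(
    [
        VmWorkload("graph-analytics", gb_moved_per_s=40.0, virt_overhead=5.0, units_requested=6),
        VmWorkload("web-frontend", gb_moved_per_s=1.0, virt_overhead=3.0, units_requested=2),
        VmWorkload("ml-inference", gb_moved_per_s=25.0, virt_overhead=4.0, units_requested=4),
    ],
    total_units=8,
)
print(plan)
```

The greedy ordering keeps the example short; it favors throughput over fairness, so a deployment that needs per-tenant guarantees would likely add minimum shares or a proportional policy on top.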

Cross-layer performance monitoring and feedback mechanisms form the foundation for effective co-design optimization. These systems continuously analyze performance metrics across hardware accelerators, memory subsystems, and virtualization layers to identify bottlenecks and optimization opportunities. Real-time profiling data enables dynamic reconfiguration of both hardware resource allocation and software execution strategies.

Compiler and runtime system integration represents a sophisticated optimization approach that leverages compile-time analysis to generate code optimized for specific near-memory computing and virtualization configurations. This strategy involves developing specialized compiler passes that understand both hardware memory topology and virtualization overhead characteristics, enabling generation of code that minimizes data movement while respecting virtual machine boundaries and security requirements.

Hardware abstraction layer optimization focuses on creating efficient interfaces between near-memory computing units and virtualization software stacks. This involves designing lightweight virtualization primitives specifically tailored for memory-centric computing workloads, reducing the traditional overhead associated with hardware abstraction while maintaining system security and isolation guarantees essential for multi-tenant environments.
